XCP-ng 8.0.0 Beta now available!

-

@s_mcleod The issue with the Next button is an upstream bug and will be fixed in XCP-ng Center 8.0.1.

-

@borzel good catch!

Thanks,

-

So VM.suspend is failing, right? I wonder if it's reproducible in CH 8.0 (Citrix Hypervisor 8.0); I think we saw the same thing here with XCP-ng.

I'll update our few hosts running XS 7.6 to CH 8.0 next week, so we'll be able to compare.

-

Thanks for your hard work!

Just did my first upgrade with the ISO from XCP-ng 7.6 (VirtualBox 6.0.8 VM). The upgrade went without a problem. My ext4 datastore was detached after the upgrade and could not be attached, because the "sm-additional-drivers" package is not installed by default (as mentioned in your WHAT'S NEW section).

After "yum install sm-additional-drivers" and a toolstack restart it was working again.
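For reference, the fix described above can be sketched as a small shell script. This is a minimal sketch assuming an XCP-ng 8.0 host with the default repositories configured; "xe-toolstack-restart" is the usual XCP-ng/XenServer command for restarting the toolstack, and the DRY_RUN switch is a hypothetical convenience added here so the commands are only printed unless you set DRY_RUN=0.

```shell
#!/bin/sh
# Re-enable an ext4 SR after upgrading to XCP-ng 8.0, following the steps
# reported above: install sm-additional-drivers, then restart the toolstack.
# DRY_RUN is a hypothetical switch for this sketch: by default the commands
# are only printed; run with DRY_RUN=0 to actually execute them.
set -e

DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"   # print the command instead of running it
    else
        "$@"
    fi
}

restore_ext_sr() {
    run yum install -y sm-additional-drivers   # extra SR drivers, not installed by default in 8.0
    run xe-toolstack-restart                   # restart the toolstack so the driver is picked up
}

restore_ext_sr
```

The "-y" flag only skips yum's confirmation prompt; once the toolstack is back, the SR should attach again as in the report above.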

Installing the container package with "yum install xscontainer" also went without problems. I imported a coreos.vhd with XCP-ng Center 8, converted the VM from HVM to PV with XCP-ng Center, and I could start it.

I can confirm the problem with suspending the VM; I get the same error (xenopsd End_of_file).

-

It's a little strange to see older but capable CPUs dropped from the list. The old Opteron 2356 I have didn't make the cut, even though it works just fine in 7.6. I'm still going to try out 8.0 anyway.

I understand that vendors don't want to "extend support forever", but it's silly when you have 10+ year old hardware that runs fine and the only limitation really comes down to "hardware feature XYZ is a requirement". So far, though, I haven't seen the actual minimum CPU requirement, which makes me a bit suspicious of the claim that "it won't run".

-

@apayne said in XCP-ng 8.0.0 Beta now available!:

Opteron 2356

That's a 12 year old CPU so I don't see any issue with dropping it.

-

@DustinB said in XCP-ng 8.0.0 Beta now available!:

That's a 12 year old CPU so I don't see any issue with dropping it.

As I said, 10+ year old hardware. I also said that I get why vendors want to draw lines in the sand so they don't end up supporting everything under the sun. It's good business sense to limit expenditures to equipment that is commonly used.

But my point (poorly articulated in my last post) remains: there isn't a known or published reason why the software forces me to drop the CPU. Citrix just waved their hands and said "these don't work anymore". Well, I suspect it really does work, and this is just a side effect of a vendor cost-cutting decision about support that has nothing to do with XCP-ng but unfortunately affects it anyway. So it's worth a try, and if it fails, so be it: at least there will be a known reason why, instead of the Citrix response of "nothing to see here, move along..."

XCP-ng is a killer deal, probably THE killer deal when viewed through the lens of a home lab.

That makes it hard to justify shelling out money for Windows Hyper-V or VMware when there is a family to feed and rent to pay. Maybe that explains why I am so keen to see whether Citrix really did make changes that prevent it from running. Fail or succeed, it'll be more information to contribute back to the community here, and something will be learned. That's a positive outcome all the way around.

-

Regarding Citrix's choice: I think they can't really communicate the reasons publicly. It might be related to security issues and some CPU vendors no longer updating microcode.

So, technically speaking, it should work, but Citrix doesn't want to be liable for any security breach due to an "old" CPU.

Regarding XCP-ng: as long as it just works and you don't pay for support, you should be fine for your home lab.

Please report any issues you have with 8.0 on your old hardware; we'll try to help as much as we can.

-

My experience with "XOA Quick Deploy"

It failed, because I need to use a proxy. I opened an issue here:

https://github.com/xcp-ng/xcp/issues/193

-

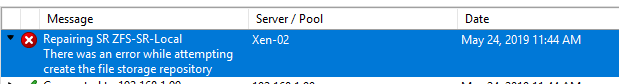

Installed fine on one of my Intel X56XX-based systems, and the system boots into XCP-ng 8.0, although the ZFS local storage wasn't mounted and a repair fails as well.

modprobe zfs fails with "module zfs not found"

Is there any updated ZFS documentation for 8.0 that would help?

-

ZFS is not part of the default installation, so you need to install it manually using yum from our repositories. The documentation on the wiki has not been updated for 8.0 yet: https://github.com/xcp-ng/xcp/wiki/ZFS-on-XCP-ng

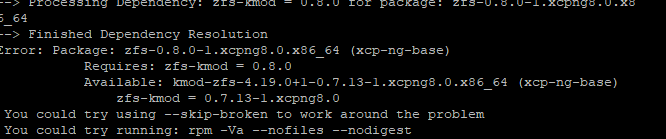

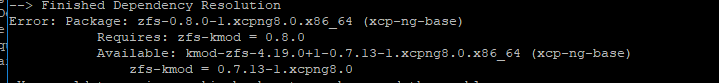

The zfs packages are now directly in our main repositories, so there's no need to add extra --enablerepo options. Just run yum install zfs.

I've just built an updated zfs package (new major version 0.8.0 instead of the 0.7.3 that was initially available in the repos for the beta) in the hope that it solves some of the issues we had with previous versions (such as VDI export, or the need to patch some packages to remove the use of O_DIRECT). I'm just waiting for the main mirror to sync so I can test that it installs fine.

-

@stormi Thanks for the quick reply. I tried yum install zfs and it errors with the following:

-

That's why I said I was waiting for the main mirror to sync.

And the build machine is having an "I'm feeling all slow" moment.

-

@stormi OK, silly me. I'll wait for you to give the A-OK.

-

@AllooTikeeChaat You can now try.

-

@stormi Still broken, same error.

-

@AllooTikeeChaat yum considers your cached metadata recent enough. Ask it to clean it:

yum clean all

-

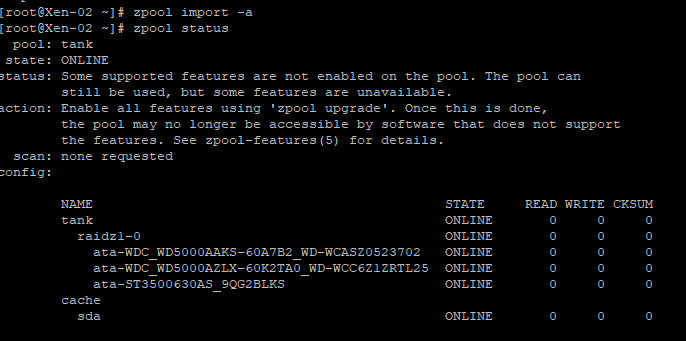

@stormi yum clean all worked. Installed A-OK, and modprobe zfs doesn't complain, so that's all good.

A repair of the ZFS SR still fails with the same error, "zpool status" reports "no pools available" and "zfs list" reports "no datasets available".

I'll open a new post if I can't get it working rather than replying to this one.

Update: fixed the issue.

-

Which was? (The issue, I mean.)

-

@olivierlambert

(1) Missing the ZFS packages from the base install.

(2) Needed a "yum clean all" to be able to install zfs packages.

(3) Needed to manually import the ZFS zpool.

I'm a noob with ZFS, so I had to work out how to import an existing zpool; once that's done, the SR can be repaired/mounted.
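The three steps above can be sketched as one shell script. This is a minimal sketch assuming the XCP-ng 8.0 main repositories are reachable; the pool name "tank" and the DRY_RUN/POOL switches are hypothetical, and by default the script only prints the commands. Running "zpool import" with no arguments lists the pools available for import, which is how you find the real pool name.

```shell
#!/bin/sh
# Bring an existing ZFS-backed SR back after upgrading to XCP-ng 8.0.
# Sketch only: prints the commands unless DRY_RUN=0 (a hypothetical switch).
set -e

DRY_RUN="${DRY_RUN:-1}"
POOL="${POOL:-tank}"   # hypothetical pool name; use the one "zpool import" lists

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"   # print the command instead of running it
    else
        "$@"
    fi
}

recover_zfs_sr() {
    run yum clean all          # drop stale metadata so yum sees the new zfs packages
    run yum install -y zfs     # zfs packages are in the main XCP-ng repositories
    run modprobe zfs           # load the kernel module
    run zpool import           # list pools that can be imported
    run zpool import "$POOL"   # import the existing pool
    run zfs list               # its datasets should now be visible
}

recover_zfs_sr
```

Once the datasets show up in "zfs list", the SR can be repaired as described above.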