Memory Ballooning (DMC) broken since XCP-ng 8.3 January 2026 patches

-

Hello XCP-ng community and Vates team,

I have noticed an issue with dynamic memory control (DMC), or more precisely with its memory ballooning feature.

I have DMC configured for quite a few VMs. Before January's round of patches everything worked fine, but since applying those patches, a VM with DMC enabled gets its RAM shrunk down to "dynamic min" during live migration and never gets expanded back to its normal value after the live migration has finished.

It stays with its dynamic min RAM until it gets rebooted.

After cleanly rebooting the VM via Xen Orchestra, its RAM is back at its normal value (dynamic max). I did not report this issue earlier because I was hoping it would get fixed by the March round of patches, but unfortunately the issue is still there.

I suspect the bug was introduced by this change mentioned in the January update release notes:

"Fix regression on dynamic memory management, in XAPI, during live migration, causing VMs not to balloon down before the migration."

My impression is that after this change, VMs get ballooned down more aggressively before the migration but, in my case, never get ballooned up again. I currently have multiple VMs with this issue. Below please find the dmesg output of one of the VMs in question:

[24931.647978] Freezing user space processes
[24931.693168] Freezing user space processes completed (elapsed 0.044 seconds)
[24931.693392] OOM killer disabled.
[24931.693395] Freezing remaining freezable tasks
[24931.704356] Freezing remaining freezable tasks completed (elapsed 0.010 seconds)
[24931.764470] suspending xenstore...
[24931.859456] xen:grant_table: Grant tables using version 1 layout
[24931.859792] xen: --> irq=9, pirq=16
[24931.859818] xen: --> irq=8, pirq=17
[24931.859843] xen: --> irq=12, pirq=18
[24931.859867] xen: --> irq=1, pirq=19
[24931.859891] xen: --> irq=6, pirq=20
[24931.859913] xen: --> irq=4, pirq=21
[24931.859937] xen: --> irq=7, pirq=22
[24931.859960] xen: --> irq=23, pirq=23
[24931.859984] xen: --> irq=28, pirq=24
[24931.889330] usb usb1: root hub lost power or was reset
[24932.179596] usb 1-2: reset full-speed USB device number 2 using uhci_hcd
[24932.436726] OOM killer enabled.
[24932.436732] Restarting tasks ... done.
[24932.492024] Setting capacity to 1048576000
[24932.493343] Setting capacity to 524288000
[24932.494439] Setting capacity to 4273496064

After live migration, before rebooting:

root@mldmzdocker:~# free -m
        total  used  free  shared  buff/cache  available
Mem:     2300  1135   352     109        1185       1164
Swap:     979    65   914

After rebooting:

root@mldmzdocker:~# free -m
        total  used  free  shared  buff/cache  available
Mem:     7932   754  6747       9         679       7178
Swap:     979     0   979

Also: this issue seems to occur only on Linux guests. I tested Debian 12/13 and Windows Server 2019 guests, and only the Debian ones were affected.

Please let me know if I can provide something else that could possibly help to track down the issue.

Thanks in advance and best regards.

-

@MajorP93 Hello, what does

xe vm-param-get uuid=... param-name=memory-target

say on your VM after migrating?

-

Hello @dinhngtu , thanks for your reply.

This time I had to use another VM that also has the issue, since the one used for illustration in my initial post has been rebooted and is back to normal. Below please find the relevant output:

dmesg:

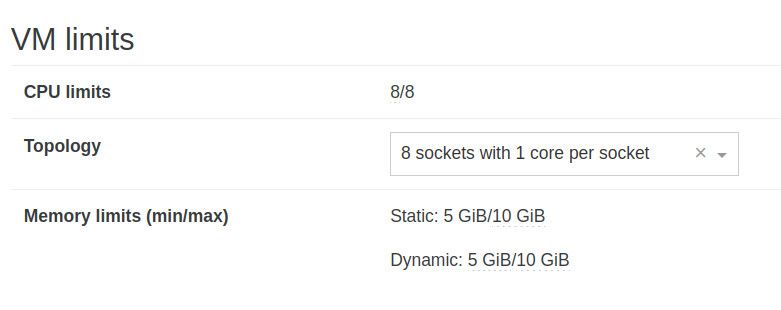

[ 7085.628802] Freezing user space processes
[ 7085.652324] Freezing user space processes completed (elapsed 0.023 seconds)
[ 7085.652367] OOM killer disabled.
[ 7085.652370] Freezing remaining freezable tasks
[ 7092.127309] Freezing remaining freezable tasks completed (elapsed 6.475 seconds)
[ 7092.178860] suspending xenstore...
[ 7092.197832] xen:grant_table: Grant tables using version 1 layout
[ 7092.198328] xen: --> irq=9, pirq=16
[ 7092.198349] xen: --> irq=8, pirq=17
[ 7092.198370] xen: --> irq=12, pirq=18
[ 7092.198390] xen: --> irq=1, pirq=19
[ 7092.198423] xen: --> irq=6, pirq=20
[ 7092.198479] xen: --> irq=4, pirq=21
[ 7092.198525] xen: --> irq=7, pirq=22
[ 7092.198571] xen: --> irq=23, pirq=23
[ 7092.198616] xen: --> irq=28, pirq=24
[ 7092.218627] usb usb1: root hub lost power or was reset
[ 7092.378841] ata2: found unknown device (class 0)
[ 7092.463544] usb 1-2: reset full-speed USB device number 2 using uhci_hcd
[ 7092.705603] OOM killer enabled.
[ 7092.705610] Restarting tasks: Starting
[ 7092.707866] Restarting tasks: Done
[ 7092.746034] Setting capacity to 125829120

root@docker-xo01:~# free -m
        total  used  free  shared  buff/cache  available
Mem:     4821  1850   683       3        2654       2970
Swap:    7123    31  7092

[12:40 xcpng01 ~]# xe vm-param-get uuid=b010699a-4c63-b44d-b5ac-c7b51e7c0d68 param-name=memory-target
5372903424

This is the memory configuration of this particular VM in Xen Orchestra:

@dinhngtu Anything else to check or provide? I could reboot the VM and get the output of "xe vm-param-get uuid=... param-name=memory-target" again.

Best regards

-

@MajorP93 Thanks for the info, I'll need to discuss this with the team first.

-

@MajorP93 Could you attach /var/log/xensource.log from the time of these issues on the destination host? We want to understand what squeezed is thinking and why it doesn't rebalance the memory.

-

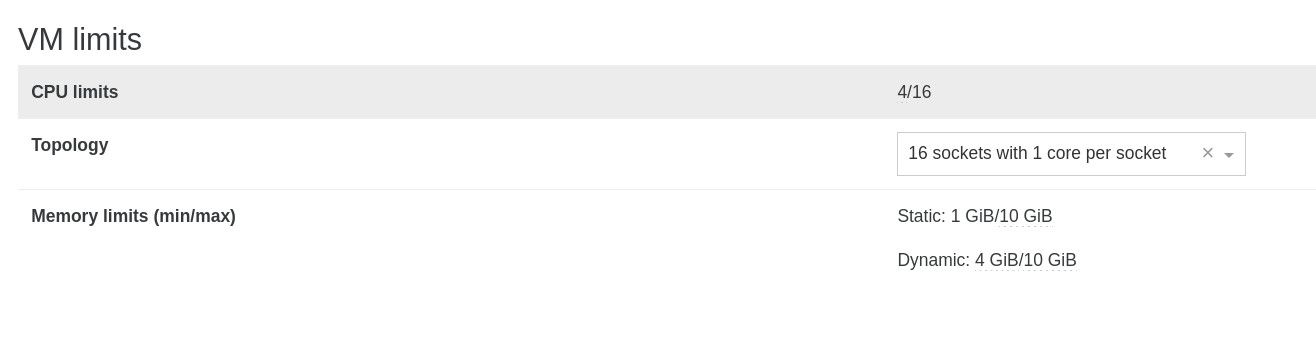

@andriy.sultanov Hello! I had to use another VM, as the one used previously is a production VM and had to be rebooted to get back to dynamic max.

This time I used a temporary test VM that I can use for these kinds of tests.

After live migrating this test VM, issue is there again.

It is really easy to reproduce.

root@tmptest02:~# free -m
        total  used  free  shared  buff/cache  available
Mem:     3794   344  3511       3         109       3449
Swap:

journalctl -k | cat
Mar 11 14:26:19 tmptest02 kernel: Freezing user space processes
Mar 11 14:26:23 tmptest02 kernel: Freezing user space processes completed (elapsed 0.018 seconds)
Mar 11 14:26:23 tmptest02 kernel: OOM killer disabled.
Mar 11 14:26:23 tmptest02 kernel: Freezing remaining freezable tasks
Mar 11 14:26:23 tmptest02 kernel: Freezing remaining freezable tasks completed (elapsed 0.003 seconds)
Mar 11 14:26:23 tmptest02 kernel: suspending xenstore...
Mar 11 14:26:23 tmptest02 kernel: xen:grant_table: Grant tables using version 1 layout
Mar 11 14:26:23 tmptest02 kernel: xen: --> irq=9, pirq=16
Mar 11 14:26:23 tmptest02 kernel: xen: --> irq=8, pirq=17
Mar 11 14:26:23 tmptest02 kernel: xen: --> irq=12, pirq=18
Mar 11 14:26:23 tmptest02 kernel: xen: --> irq=1, pirq=19
Mar 11 14:26:23 tmptest02 kernel: xen: --> irq=6, pirq=20
Mar 11 14:26:23 tmptest02 kernel: xen: --> irq=4, pirq=21
Mar 11 14:26:23 tmptest02 kernel: xen: --> irq=7, pirq=22
Mar 11 14:26:23 tmptest02 kernel: xen: --> irq=23, pirq=23
Mar 11 14:26:23 tmptest02 kernel: xen: --> irq=28, pirq=24
Mar 11 14:26:23 tmptest02 kernel: usb usb1: root hub lost power or was reset
Mar 11 14:26:23 tmptest02 kernel: ata2: found unknown device (class 0)
Mar 11 14:26:23 tmptest02 kernel: usb 1-2: reset full-speed USB device number 2 using uhci_hcd
Mar 11 14:26:23 tmptest02 kernel: OOM killer enabled.
Mar 11 14:26:23 tmptest02 kernel: Restarting tasks: Starting
Mar 11 14:26:23 tmptest02 kernel: Restarting tasks: Done
Mar 11 14:26:23 tmptest02 kernel: Setting capacity to 125829120

xensource.log excerpt from the target XCP-ng host that the VM was live migrated to:

[14:35 xcpng02 log]# cat xensource.log | grep "Mar 11 14:26" | grep squeeze
Mar 11 14:26:06 xcpng02 squeezed: [debug||253 ||squeeze] total_range = 20971520 gamma = 1.000000 gamma' = 18.007186
Mar 11 14:26:06 xcpng02 squeezed: [debug||253 ||squeeze] Total additional memory over dynamic_min = 377638052 KiB; will set gamma = 1.00 (leaving unallocated 356666532 KiB)
Mar 11 14:26:06 xcpng02 squeezed: [debug||253 ||squeeze] free_memory_range ideal target = 4296680
Mar 11 14:26:06 xcpng02 squeezed: [debug||253 ||squeeze] change_host_free_memory required_mem = 4305896 KiB target_mem = 9216 KiB free_mem = 371880116 KiB
Mar 11 14:26:06 xcpng02 squeezed: [debug||253 ||squeeze] change_host_free_memory all VM target meet true
Mar 11 14:26:06 xcpng02 squeezed: [debug||253 ||memory] reserved 4296680 kib for reservation 6f0f8c43-7ffa-ffbf-7723-a1be3c1a61d1
Mar 11 14:26:06 xcpng02 squeezed: [debug||254 ||squeeze_xen] Xenctrl.domain_setmaxmem domid=53 max=4297704 (was=0)
Mar 11 14:26:13 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /data/updated <- 1
Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] Adding watches for domid: 53
Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] Removing watches for domid: 52
Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /memory/initial-reservation <- 4296680
Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /memory/target <- 4196352
Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /control/feature-balloon <- None
Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /data/updated <- None
Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /memory/memory-offset <- None
Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /memory/uncooperative <- None
Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /memory/dynamic-min <- 4194304
Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /memory/dynamic-max <- 10485760
Mar 11 14:26:21 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /data/updated <- 1
Mar 11 14:26:22 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /data/updated <- 1
Mar 11 14:26:25 xcpng02 squeezed: [debug||3 ||squeeze_xen] domid 53 just started a guest agent (but has no balloon driver); calibrating memory-offset = 2024 KiB
Mar 11 14:26:25 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /memory/memory-offset <- 2024
Mar 11 14:26:25 xcpng02 squeezed: [debug||3 ||squeeze_xen] Xenctrl.domain_setmaxmem domid=53 max=4199400 (was=4297704)
Mar 11 14:26:28 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /data/updated <- 1

I hope the filtered xensource.log (filtered for "squeezed") is enough, or do you need other events as well, @andriy.sultanov?
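The squeezed lines above log each xenstore watch as `watch <path> <- <value>`, with memory values in KiB and `None` when the key is absent. A minimal Python sketch for reading such an excerpt, assuming the lines have already been narrowed to a single domain (the watch lines themselves carry no domid) and that the format matches the excerpt; `latest_watch_values` and `summarize` are hypothetical helpers, not part of XAPI or squeezed:

```python
import re

# Matches squeezed watch lines such as
# "... [debug||4 ||squeeze_xen] watch /memory/target <- 4196352"
WATCH_RE = re.compile(r"watch (?P<path>/\S+) <- (?P<value>\S+)")

# Keys that squeezed reports in KiB in the excerpt above.
MEMORY_KEYS = (
    "/memory/target",
    "/memory/dynamic-min",
    "/memory/dynamic-max",
    "/memory/initial-reservation",
)


def latest_watch_values(lines):
    """Return the most recent value logged for each watched xenstore path.

    A logged value of "None" means the key was absent, so it is stored as None.
    Assumes all lines belong to one domain (watch lines carry no domid).
    """
    values = {}
    for line in lines:
        match = WATCH_RE.search(line)
        if match:
            value = match.group("value")
            values[match.group("path")] = None if value == "None" else value
    return values


def summarize(values):
    """Convert the KiB memory keys to MiB and report whether ballooning is advertised."""
    summary = {}
    for key in MEMORY_KEYS:
        if values.get(key) is not None:
            summary[key] = int(values[key]) / 1024  # KiB -> MiB
    # Assumption: judging by the "has no balloon driver" log line, squeezed
    # appears to decide ballooning support from control/feature-balloon.
    summary["balloon_advertised"] = values.get("/control/feature-balloon") is not None
    return summary


if __name__ == "__main__":
    excerpt = [
        "Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /memory/target <- 4196352",
        "Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /control/feature-balloon <- None",
        "Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /memory/dynamic-min <- 4194304",
        "Mar 11 14:26:19 xcpng02 squeezed: [debug||4 ||squeeze_xen] watch /memory/dynamic-max <- 10485760",
    ]
    print(summarize(latest_watch_values(excerpt)))
```

For these four lines the sketch reports a target of 4098 MiB, just above dynamic-min (4096 MiB) and far below dynamic-max (10240 MiB), with `balloon_advertised` False, which lines up with squeezed's "just started a guest agent (but has no balloon driver)" message.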

-

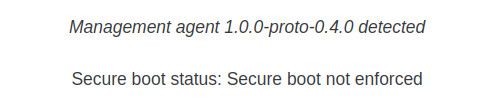

@MajorP93 Hi, are you using the Rust guest agent? We suspect that it's failing to rearm the ballooning feature after resuming from suspend.

-

@dinhngtu Yes, I am using the Rust-based guest agent (https://gitlab.com/xen-project/xen-guest-agent) on all of my Linux guests.

Good job tracking the issue down to that!

Is a suspend/resume performed automatically during live migration?

//EDIT: I switched from the Citrix/XenServer guest agent to the Vates Rust one sometime in January, so maybe my assumption that this issue is related to the January round of patches was wrong.

Or maybe I did not see this issue before because, according to the changelog, ballooning down to dynamic min was broken before that round of patches.

-

@dinhngtu Also, if it is related to the Rust-based guest agent: do you guys plan to fix the issue and release a new version soon, or is it advisable to revert to the XenServer guest agent for now?

-

@MajorP93 AFAIK @teddyastie is working on a 1.0 release for Linux guests with this bug fix.

-

@dinhngtu Looking forward to that! I'll stick with the Rust guest utilities for the time being and hope to see that new release soon!

-

@dinhngtu @teddyastie Do you have a timeline or rough estimated release date for the Rust-based xen-guest-agent version 1.0?

I am currently evaluating whether I need to switch my Linux guests back to the Citrix guest tools in the meantime. Looking forward to a reply.

Thank you in advance.

-

I can confirm that when using Citrix/Xenserver guest utilities version 8.4 (https://github.com/xenserver/xe-guest-utilities/releases/tag/v8.4.0) memory ballooning / DMC is working fine.

After live migration, the RAM of the Linux guest is expanded to dynamic_max again.

So this issue was in fact caused by the Rust-based xen-guest-agent.

For now I'll keep using the Citrix/XenServer guest utilities on my Linux guests until the feature is implemented in the Vates Rust-based guest agent.

Best regards
