AMD EPYC 7402P?

-

@nmym Which drives do you mean? The M.2 SSDs are relatively slow and only hold the OS. Our Storwize V5K (Gen1) is only connected via 8 Gb Fibre Channel and can handle at most 1400 MB/s and 25k IOPS. That's not fast anymore... I just didn't have anything else to test with.

Two weeks ago we had the system attached to an IBM FlashSystem 900, which delivers up to 1.2 million IOPS at low latency. Under VMware it was very fast. I couldn't test XCP-ng there because the storage was already in production.

-

@Silencer80 said in AMD EPYC 7402P?:

...to an IBM flash system 900. Th...

My NVMe disks can't break 1 GB/s on Xen; on VMware, however, they are 70% faster. Since they sit on a PCIe Gen3 x2 link, the theoretical limit is about 2 GB/s, AFAIK.
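As a rough sanity check on that 2 GB/s figure, here is the arithmetic as a small Python sketch. It assumes the standard PCIe 3.0 parameters (8 GT/s per lane, 128b/130b line encoding) and ignores packet and protocol overhead, so real-world throughput will be somewhat lower. The function name is only for illustration.

```python
# Theoretical PCIe 3.0 link bandwidth, before packet/protocol overhead.
# Assumed constants: 8 GT/s per lane and 128b/130b encoding (PCIe 3.0).
GEN3_TRANSFERS_PER_S = 8e9
GEN3_ENCODING = 128 / 130


def pcie3_bandwidth_mb_s(lanes):
    """Return the raw link bandwidth in MB/s (1 MB = 10**6 bytes)."""
    bits_per_s = GEN3_TRANSFERS_PER_S * GEN3_ENCODING * lanes
    return bits_per_s / 8 / 1e6


if __name__ == "__main__":
    # Prints "x2 link: 1969 MB/s", i.e. just under 2 GB/s.
    print(f"x2 link: {pcie3_bandwidth_mb_s(2):.0f} MB/s")
```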

They should also deliver (given the PCIe limit) around 200k IOPS. I get a maximum of 90k. Just curious.

-

@nmym I can't check that myself at the moment, because I don't have spare hardware like that.

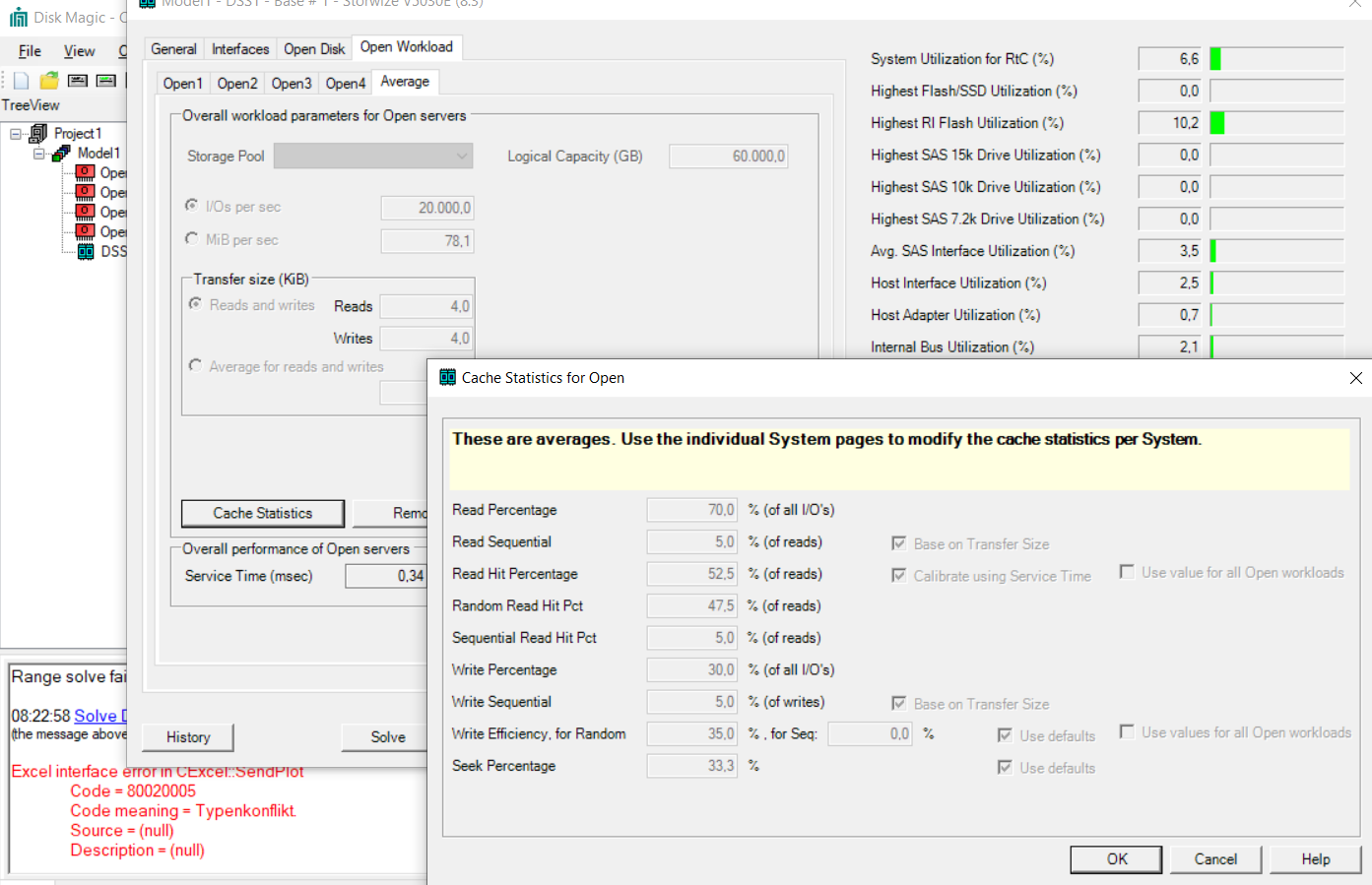

I am also designing our new SR655 pool with an all-flash Storwize V5030E on a Fibre Channel SAN. I only need 90 TB @ 90k IOPS, so this is not about the highest possible storage performance. In my opinion it is more important to have thin provisioning on the storage side and SCSI UNMAP support on the hypervisor.

But that's my opinion and Oliver sees it quite differently. That's legitimate, too.

We only use local storage in very few projects.

This test was only about the validation of the CPUs.

Is there any news from the Xen devs about Rome? I can't see any limitations, bugs or failures.

I am happy to do further tests. Write to me if you are interested in anything.

-

Interesting. Yes, we all have different requirements.

We only use local storage, to reduce complexity and cost.

The downside is that moving VMs between servers takes longer than it would with a SAN (as the whole disk needs to be moved as well).

This is the main reason we want performance.

-

@Silencer80

I've been experimenting a bit here because I had a hard time reproducing your CPU PassMark numbers.

It turned out to be related to C-states not being disabled in the BIOS.

After disabling them I got the same numbers as you inside a VM. Very nice.

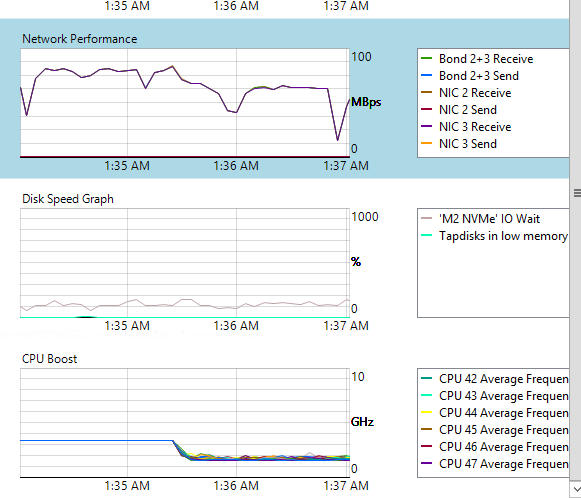

I had to run speed tests on network and disk, and one funny example is bandwidth, which depends heavily on this. I used `xenpm set-scaling-governor performance` to set the max CPU clock and `xenpm set-scaling-governor ondemand` to reset it to the default. (Both commands print tons of errors in the CLI, but somehow they work.)

When I set this to ondemand, my network speed drops. It's somewhat ironic: I have the demand for this speed, but the process itself does not demand it, resulting in slower network transfers.
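For what it's worth, the switch could be wrapped around a transfer with a small script. This is only a sketch, assuming Python is available in dom0 and `xenpm` is on the PATH. `scaling_governor` and its `run` parameter are names made up for this example, and the return code is deliberately ignored because the commands print errors even when they take effect.

```python
import subprocess
from contextlib import contextmanager


def governor_cmd(governor):
    """Build the same xenpm call that was typed by hand above."""
    return ["xenpm", "set-scaling-governor", governor]


@contextmanager
def scaling_governor(active="performance", restore="ondemand",
                     run=subprocess.call):
    """Use `active` inside the with-block, then switch back to `restore`.

    `run` is injectable so the logic can be dry-run without xenpm.
    subprocess.call does not raise on a non-zero exit code.
    """
    run(governor_cmd(active))
    try:
        yield
    finally:
        # Runs even if the workload inside the block fails.
        run(governor_cmd(restore))


if __name__ == "__main__":
    # Dry run: print the commands instead of executing them.
    with scaling_governor(run=print):
        print("... run the transfer or benchmark here ...")
```

Dropping the `run=print` argument would make it call `xenpm` for real, so the governor goes back to ondemand as soon as the block ends.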

Is there any way to automate this? (I will probably turn on performance mode when I need it, though that's not really the best way of achieving this.)

-

@nmym The Lenovo BIOS may be better tuned for optimal performance out of the box. After tuning the system by hand, I also asked Lenovo for the optimal BIOS settings and got the following answer, with good results:

"the current recommendation is to set the UEFI Operating Mode to "Maximum Performance".

Please check if the "EfficiencyModeEn" parameter is set to "Auto" and not to "Enabled".

There was a FW bug, which should be fixed by the end of September. (UEFI CFE105D for pre-GA HW, UEFI CFE106D for GA HW)"

Our test system is already running VMware again to add a few more tests. After that, we will send the system back to Lenovo next week.

However, we ordered 4 systems, so I can contribute more tests in the coming weeks, then also with the optimal RAM configuration (8x 64 GB @ 3200 MHz) and the new shared storage, a Storwize V5030E.

I have sized the storage as follows:

4x hosts with 2x 16 Gb HBAs each. Redundant FC SAN. Storage with 8x 16 Gb HBAs, distributed across both fabrics. Single-initiator zoning for each server HBA to both storage controllers.

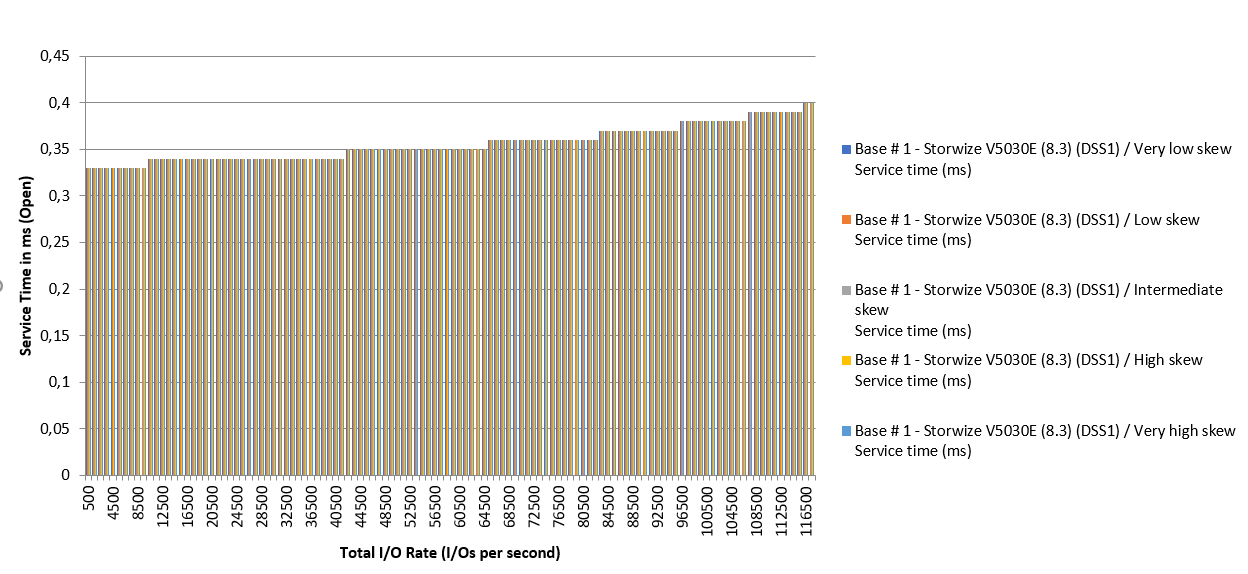

According to the sizing tools (Capacity Magic / Disk Magic), the storage system then reliably delivers 90 TB and approx. 120,000 IOPS at <0.4 ms. This meets our requirements.
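A quick back-of-the-envelope check of that design, as a Python sketch. It assumes the nominal 16GFC rate of roughly 1600 MB/s per port and direction, and that the storage ports are split evenly across the two fabrics. The I/O block sizes are hypothetical, since the workload profile used in the sizing tools isn't stated here.

```python
# Rough headroom check for the FC design described above.
# Assumption: a 16GFC port carries roughly 1600 MB/s per direction (nominal).
PORT_MB_S = 1600


def ports_bandwidth_mb_s(ports, port_mb_s=PORT_MB_S):
    """Aggregate nominal bandwidth of a group of FC ports, in MB/s."""
    return ports * port_mb_s


def iops_throughput_mb_s(iops, block_bytes):
    """Throughput implied by an IOPS figure at a given block size, in MB/s."""
    return iops * block_bytes / 1e6


if __name__ == "__main__":
    storage_ports = 8   # 8x 16 Gb ports on the storage, from the post
    host_ports = 4 * 2  # 4 hosts with 2 HBAs each, from the post
    print("storage side:", ports_bandwidth_mb_s(storage_ports), "MB/s")
    print("host side:", ports_bandwidth_mb_s(host_ports), "MB/s")
    # Assumes an even port split, so one fabric failing halves the ports.
    print("one fabric down:", ports_bandwidth_mb_s(storage_ports // 2), "MB/s")
    # Block sizes below are hypothetical examples, not from the sizing tools.
    for block in (4096, 16384, 65536):
        mb_s = iops_throughput_mb_s(120_000, block)
        print(f"120k IOPS at {block // 1024} KiB: {mb_s:.0f} MB/s")
```

Under these assumptions, 120k IOPS at 16 KiB works out to roughly 2 GB/s, well below the nominal 12.8 GB/s on the storage side, so the fabric itself should not be the bottleneck.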

-

Before installing Xen, the first thing I change is the BIOS power profile setting. In my experience, it works pretty well with `Performance` and `OS Control`. For production, my choice is the latter because it hands the core frequency scaling management (boost) to Xen and saves energy when demand is low after hours.

@nmym said in AMD EPYC 7402P?:

When I set this to ondemand, my network speed drops. It's somehow ironic as I have the demand for this speed, but the process does not demand, resulting in slower network transfer

For benchmark purposes, try reducing the number of dom0 vCPUs (4 is a good value for the tests, IMHO). Since it's a high-core-count processor, 8+ dom0 vCPUs are good for hosting 100+ VMs, but they might distort benchmark results because the packet processing will be spread across all dom0 cores. With the CPU governor set to `ondemand`, the more cores you have, the less need there is to boost. This behavior doesn't occur in `performance`, because there the cores' minimum frequency is the base clock.

-

Does your statement explicitly refer to AMD CPUs?

I still have to investigate whether this KB article also reflects the optimum for ROME CPUs.

-

No, my systems are Intel-based, but it might also work for AMD processors. The only caveat is whether AMD fixed the C-states/MWAIT bug that caused spontaneous reboots on the first-generation architecture. Since Rome is a new architecture, maybe it's not affected. The only way to be sure is to enable C-states and keep an eye on it.

That Citrix KB is my go-to reference about Xen power management. Very good article.

-

@tuxen I never saw such a "performance mode", only very basic CPU settings like C-state.

When I had C-state set to auto, I never had any issues with reboots (though I only ran it for 2 days straight before I disabled C-state).

I found that with C-state set to auto my processors never reached their maximum clock frequency.

However, I never tested changing the scaling governor to performance.

With C-state disabled, I did not need to change the governor to performance to get full clock speed. I just had to load the CPU.

-

We're running this puppy:

Model: AMD EPYC 7352 24-Core Processor

Speed: 2300 MHz

If any of you want me to run tests, I can try.

-

One socket, right?
