-

@geoffbland Without thin option, no LVM volume is created. It's expected. You must just have a VG

:

:# vgs VG #PV #LV #SN Attr VSize VFree VG_XenStorage-11daa412-d2b5-cb7c-e8ae-847821e367a6 1 1 0 wz--n- 144.23g 144.23g linstor_groupA LVM volume is only required for the thin driver of LINSTOR.

I updated the main post, it was not totally clear.

Regarding the force option, I fixed it here: https://gist.githubusercontent.com/Wescoeur/7bb568c0e09e796710b0ea966882fcac/raw/6aacde6b5c55f8e7b70ed585c59b9c2d54a2ea69/gistfile1.txt

It must be:

subprocess.call(['vgremove', '-f', LINSTOR_GROUP, '-y'])instead of:

subprocess.call(['vgremove', '-f', LINSTOR_GROUP, '-y'] + disks) -

@ronan-a said in XOSTOR hyperconvergence preview:

I updated the main post, it was not totally clear.

Thanks for that. I expected this was the case but wanted to be sure.

Looks like this is working so far then I will continue with my installation & testing. -

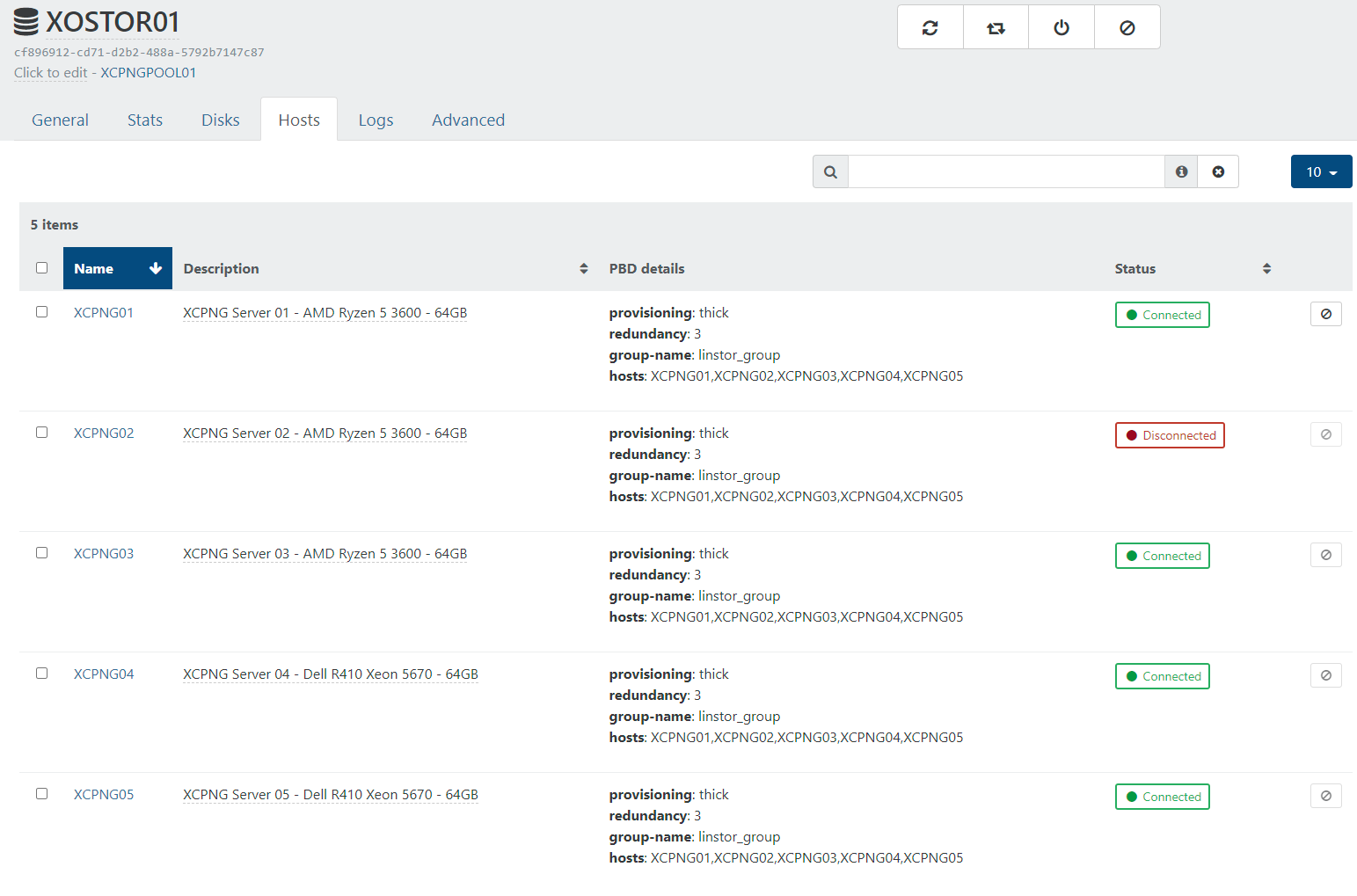

XOSTOR is now successfully installed on all 5 of my nodes in the cluster.

I ran this command (I'm assuming that this only needs running on one host?).

xe sr-create type=linstor name-label=XOSTOR01 host-uuid=28af0626-7788-4104-9449-xxxxxxxxxxxx device-config:hosts=XCPNG01,XCPNG02,XCPNG03,XCPNG04,XCPNG05 device-config:group-name=linstor_group device-config:redundancy=3 shared=true device-config:provisioning=thickAnd this returned the SR UUID

cf896912-cd71-d2b2-488a-xxxxxxxxxxxxthis looks like it worked as expected.Running

linstor node listshows the following:[12:01 XCPNG01 ~]# linstor node list ╭─────────────────────────────────────────────────────────╮ ┊ Node ┊ NodeType ┊ Addresses ┊ State ┊ ╞═════════════════════════════════════════════════════════╡ ┊ XCPNG01 ┊ COMBINED ┊ 192.168.1.41:3366 (PLAIN) ┊ Online ┊ ┊ XCPNG02 ┊ COMBINED ┊ 192.168.1.42:3366 (PLAIN) ┊ Online ┊ ┊ XCPNG03 ┊ COMBINED ┊ 192.168.1.43:3366 (PLAIN) ┊ Online ┊ ┊ XCPNG04 ┊ COMBINED ┊ 192.168.1.44:3366 (PLAIN) ┊ Online ┊ ┊ XCPNG05 ┊ COMBINED ┊ 192.168.1.45:3366 (PLAIN) ┊ Online ┊ ╰─────────────────────────────────────────────────────────╯ [12:05 XCPNG01 ~]# linstor volume list ╭────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮ ┊ Node ┊ Resource ┊ StoragePool ┊ VolNr ┊ MinorNr ┊ DeviceName ┊ Allocated ┊ InUse ┊ State ┊ ╞════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡ ┊ XCPNG01 ┊ xcp-persistent-database ┊ DfltDisklessStorPool ┊ 0 ┊ 1000 ┊ /dev/drbd1000 ┊ ┊ InUse ┊ Diskless ┊ ┊ XCPNG02 ┊ xcp-persistent-database ┊ DfltDisklessStorPool ┊ 0 ┊ 1000 ┊ /dev/drbd1000 ┊ ┊ Unused ┊ Diskless ┊ ┊ XCPNG03 ┊ xcp-persistent-database ┊ xcp-sr-linstor_group ┊ 0 ┊ 1000 ┊ /dev/drbd1000 ┊ 1.00 GiB ┊ Unused ┊ UpToDate ┊ ┊ XCPNG04 ┊ xcp-persistent-database ┊ xcp-sr-linstor_group ┊ 0 ┊ 1000 ┊ /dev/drbd1000 ┊ 1.00 GiB ┊ Unused ┊ UpToDate ┊ ┊ XCPNG05 ┊ xcp-persistent-database ┊ xcp-sr-linstor_group ┊ 0 ┊ 1000 ┊ /dev/drbd1000 ┊ 1.00 GiB ┊ Unused ┊ UpToDate ┊ ╰────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯Strangely running

linstor node listonly works on node XCPNO01, on the other 4 nodes I get the errorError: Unable to connect to linstor://localhost:3370: [Errno 99] Cannot assign requested address. Is this expected?However when I check on XO (from sources) it shows one of the hosts is disconnected.

When I try to connect it from XO I get this error, is this related to the problem with linstor commands only working on one host XCPNG01?

pbd.connect { "id": "8040176c-16da-674d-e9bd-708c3a66e68a" } { "code": "SR_BACKEND_FAILURE_47", "params": [ "", "The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor://XCPNG01']]", "" ], "task": { "uuid": "c92909b2-8a4e-e071-3c91-0c89a32b1e01", "name_label": "Async.PBD.plug", "name_description": "", "allowed_operations": [], "current_operations": {}, "created": "20220516T11:12:41Z", "finished": "20220516T11:13:11Z", "status": "failure", "resident_on": "OpaqueRef:a1e9a8f3-0a79-4824-b29f-d81b3246d190", "progress": 1, "type": "<none/>", "result": "", "error_info": [ "SR_BACKEND_FAILURE_47", "", "The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor://XCPNG01']]", "" ], "other_config": {}, "subtask_of": "OpaqueRef:NULL", "subtasks": [], "backtrace": "(((process xapi)(filename lib/backtrace.ml)(line 210))((process xapi)(filename ocaml/xapi/storage_access.ml)(line 32))((process xapi)(filename ocaml/xapi/xapi_pbd.ml)(line 182))((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 128))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/xapi/rbac.ml)(line 231))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 103)))" }, "message": "SR_BACKEND_FAILURE_47(, The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor://XCPNG01']], )", "name": "XapiError", "stack": "XapiError: SR_BACKEND_FAILURE_47(, The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor://XCPNG01']], ) at Function.wrap (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/_XapiError.js:16:12) at _default (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/_getTaskResult.js:11:29) at Xapi._addRecordToCache (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:949:24) at forEach (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:983:14) at Array.forEach (<anonymous>) at Xapi._processEvents (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:973:12) at Xapi._watchEvents (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:1139:14)" }XCPNG02 is the XCPNG pool master and also the host that I ran the

xe sr-createcommand on to create the SR.What do I need to look at now to resolve this?

-

@geoffbland Well it's a known error resolved in this commit: https://github.com/xcp-ng/sm/commit/df92fcf7193f7f87fd03423293589fb50faa246d

You can modify

/opt/xensource/sm/linstorvolumemanager.pywith this fix on each node, it should repair the PBD connection.

FYI, we planned to release a new beta with all our fixes before the end of this month.

-

@ronan-a Thanks that fixed it and all hosts now have the SR connected.

Running

linstor node listonly works on node XCPNO01, on the other 4 nodes I get the errorError: Unable to connect to linstor://localhost:3370: [Errno 99] Cannot assign requested addressstill. Is this expected? -

@geoffbland Yeah, you can only have one running controller in your pool, so you can use the command like this to use the controller remotely:

linstor --controllers=<node_ips> ...Where

node_ipsis a comma-separated list.

-

@ronan-a you can only have one running controller in your pool

OK, got it, that makes sense. I think it is time I started reading up on Linstor rather than bugging you with what are probably easy questions to answer if I just read up a bit more. Thanks.

-

This post is deleted! -

What is the URL for the GitHub repo for XOSTOR in case I find some issues and need to report them? I looked under the xcpng project - only sm (Stroage Manager) seemed relevant.

-

@geoffbland Yes, https://github.com/xcp-ng/sm is the right repository.

-

@ronan-a Thanks.

There's no Issues tab on this repo so no way to open issues on this repo. Are Issues turned on for this? -

@geoffbland The entry for issues is this repo: https://github.com/xcp-ng/xcp

The sm repo is used for the pull requests. -

First tests with XOSAN with newly created VMs have been good.

I'm now trying to migrate some existing VMs from NFS (TrueNAS) to XOSAN to test "active" VMs.

With the VM running - pressing the Migrate VDI button on the Disks tab, pauses the VM as expected but when the VM restarts the VDI is still on the original disk. The VDI has not been migrated to XOSAN.

If I first stop the VM and then press the Migrate VDI button on the Disks tab, I then do get an error.

vdi.migrate { "id": "8a3520ad-328f-4515-b547-2fb283edbd91", "sr_id": "cf896912-cd71-d2b2-488a-5792b7147c87" } { "code": "SR_BACKEND_FAILURE_46", "params": [ "", "The VDI is not available [opterr=Could not load f1ca0b16-ce23-408a-b80e-xxxxxxxxxxxx because: No such file or directory]", "" ], "task": { "uuid": "8b3b47ee-4135-fea7-5f30-xxxxxxxxxxxx", "name_label": "Async.VDI.pool_migrate", "name_description": "", "allowed_operations": [], "current_operations": {}, "created": "20220522T12:20:12Z", "finished": "20220522T12:20:54Z", "status": "failure", "resident_on": "OpaqueRef:a1e9a8f3-0a79-4824-b29f-d81b3246d190", "progress": 1, "type": "<none/>", "result": "", "error_info": [ "SR_BACKEND_FAILURE_46", "", "The VDI is not available [opterr=Could not load f1ca0b16-ce23-408a-b80e-xxxxxxxxxxxx because: No such file or directory]", "" ], "other_config": {}, "subtask_of": "OpaqueRef:NULL", "subtasks": [], "backtrace": "(((process xapi)(filename ocaml/xapi-client/client.ml)(line 7))((process xapi)(filename ocaml/xapi-client/client.ml)(line 19))((process xapi)(filename ocaml/xapi-client/client.ml)(line 12325))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 35))((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 131))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 35))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/xapi/rbac.ml)(line 231))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 103)))" }, "message": "SR_BACKEND_FAILURE_46(, The VDI is not available [opterr=Could not load f1ca0b16-ce23-408a-b80e-xxxxxxxxxxxx because: No such file or directory], )", "name": "XapiError", "stack": "XapiError: SR_BACKEND_FAILURE_46(, The VDI is not available [opterr=Could not load f1ca0b16-ce23-408a-b80e-xxxxxxxxxxxx because: No such file or directory], ) at Function.wrap (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/_XapiError.js:16:12) at _default (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/_getTaskResult.js:11:29) at Xapi._addRecordToCache (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:949:24) at forEach (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:983:14) at Array.forEach (<anonymous>) at Xapi._processEvents (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:973:12) at Xapi._watchEvents (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:1139:14)" }Exporting the VDI from NFS and (re)importing as a VM on XOSTOR does work.

I'm guessing this is not a problem with XOSTOR specifically but with XO or NFS, still I would like to work out what is causing migration and how to fix it?

Also I have noticed that the underlying volume on XOSTOR/linstor that was created and started to be populated does get cleaned up when the migrate fails.

This is using XO from the sources - updated fairly recently (commit 8ed84) and XO server 5.92.0.

-

It could be interesting to understand why the migration failed the first time. Is there absolutely no error during this first migration?

-

@olivierlambert Thanks for the prompt response.

I am pretty sure there was no error reported but as I cleared down the logs when I retried from on export/import I can't be 100% sure.

So I tested migration on another VM to try and replicate this and this time migration worked OK.

The only difference I can think of is that the failure occurred on a VM created quite a time ago - whilst the working VM had been created recently.

I will do a few more tests and see if I can replicate this again.

-

Okay great

If you can reproduce, that would be even better to try to do the migration with

If you can reproduce, that would be even better to try to do the migration with xeCLI, this way we remove more moving pieces in the middle

-

@olivierlambert Sorry, took some time to get around to this. But trying to migrate a VDI from an NFS store to XOSTOR is still failing most of the time. This is a VM that was created some time ago - it I do the same with the VDI of a a recently created VM the migration seems to work OK.

>xe vm-disk-list vm=lb01 Disk 0 VBD: uuid ( RO) : d9d06048-6f91-1913-714d-xxxxxxxxaece vm-name-label ( RO): lb01 userdevice ( RW): 0 Disk 0 VDI: uuid ( RO) : a38f27e8-c6a0-49d3-9fd3-xxxxxxxx10e3 name-label ( RW): lb01_tnc01_hdd sr-name-label ( RO): XCPNG_VMs_TrueNAS virtual-size ( RO): 10737418240 >xe sr-list name-label=XOSTOR01 uuid ( RO) : cf896912-cd71-d2b2-488a-xxxxxxxx7c87 name-label ( RW): XOSTOR01 name-description ( RW): host ( RO): <shared> type ( RO): linstor content-type ( RO): >xe vdi-pool-migrate uuid=a38f27e8-c6a0-49d3-9fd3-xxxxxxxx10e3 sr-uuid=cf896912-cd71-d2b2-488a-xxxxxxxx7c87 Error code: SR_BACKEND_FAILURE_46 Error parameters: , The VDI is not available [opterr=Could not load 735fc2d7-f1f0-4cc6-9d35-xxxxxxxxec6c because: ['XENAPI_PLUGIN_FAILURE', 'getVHDInfo', 'CommandException', 'No such file or directory']],Running this I see the VM pause as expected for a few minutes and then it just starts up again. VM is still running with no issues - it just did not move the VDI.

What is the resource with UUID

735fc2d7-f1f0-4cc6-9d35-xxxxxxxxec6cthat it is trying to find? That UUID does not match the VDI.The VDI must be OK as the VM is still up and running with no errors.

As this is probably not an XOSTOR issue - should I raise a new topic for this?

-

It's hard to tell. If you can migrate between non-XOSTOR SRs and see if you reproduce, then it's another issue. If it's only happening when using XOSTOR in the loop, then it's relevant here

-

@geoffbland I can't reproduce your problem, can you send me the SMlog of your hosts please?

-

@ronan-a said in XOSTOR hyperconvergence preview:

@geoffbland I can't reproduce your problem, can you send me the SMlog of your hosts please?

As requested,

May 24 09:13:22 XCPNG01 SM: [18127] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0'] May 24 09:13:23 XCPNG01 SM: [18127] FAILED in util.pread: (rc 2) stdout: '40960 May 24 09:13:23 XCPNG01 SM: [18127] 2048840192 May 24 09:13:23 XCPNG01 SM: [18127] query failed May 24 09:13:23 XCPNG01 SM: [18127] hidden: 0 May 24 09:13:23 XCPNG01 SM: [18127] ', stderr: '' May 24 09:13:23 XCPNG01 SM: [18127] linstor-manager:get_vhd_info error: No such file or directory May 24 09:13:26 XCPNG01 SM: [18158] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0'] May 24 09:13:26 XCPNG01 SM: [18158] FAILED in util.pread: (rc 2) stdout: '40960 May 24 09:13:26 XCPNG01 SM: [18158] 2048840192 May 24 09:13:26 XCPNG01 SM: [18158] query failed May 24 09:13:26 XCPNG01 SM: [18158] hidden: 0 May 24 09:13:26 XCPNG01 SM: [18158] ', stderr: '' May 24 09:13:26 XCPNG01 SM: [18158] linstor-manager:get_vhd_info error: No such file or directory May 24 09:13:29 XCPNG01 SM: [18200] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0'] May 24 09:13:29 XCPNG01 SM: [18200] FAILED in util.pread: (rc 2) stdout: '40960 May 24 09:13:29 XCPNG01 SM: [18200] 2048840192 May 24 09:13:29 XCPNG01 SM: [18200] query failed May 24 09:13:29 XCPNG01 SM: [18200] hidden: 0 May 24 09:13:29 XCPNG01 SM: [18200] ', stderr: '' May 24 09:13:29 XCPNG01 SM: [18200] linstor-manager:get_vhd_info error: No such file or directory May 24 09:13:32 XCPNG01 SM: [18212] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0'] May 24 09:13:32 XCPNG01 SM: [18212] FAILED in util.pread: (rc 2) stdout: '40960 May 24 09:13:32 XCPNG01 SM: [18212] 2048840192 May 24 09:13:32 XCPNG01 SM: [18212] query failed May 24 09:13:32 XCPNG01 SM: [18212] hidden: 0 May 24 09:13:32 XCPNG01 SM: [18212] ', stderr: '' May 24 09:13:32 XCPNG01 SM: [18212] linstor-manager:get_vhd_info error: No such file or directory May 24 09:13:35 XCPNG01 SM: [18247] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0'] May 24 09:13:35 XCPNG01 SM: [18247] FAILED in util.pread: (rc 2) stdout: '40960 May 24 09:13:35 XCPNG01 SM: [18247] 2048840192 May 24 09:13:35 XCPNG01 SM: [18247] query failed May 24 09:13:35 XCPNG01 SM: [18247] hidden: 0 May 24 09:13:35 XCPNG01 SM: [18247] ', stderr: '' May 24 09:13:35 XCPNG01 SM: [18247] linstor-manager:get_vhd_info error: No such file or directory May 24 09:13:36 XCPNG01 SM: [18259] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0'] May 24 09:13:36 XCPNG01 SM: [18259] FAILED in util.pread: (rc 2) stdout: '40960 May 24 09:13:36 XCPNG01 SM: [18259] 2048840192 May 24 09:13:36 XCPNG01 SM: [18259] query failed May 24 09:13:36 XCPNG01 SM: [18259] hidden: 0 May 24 09:13:36 XCPNG01 SM: [18259] ', stderr: '' May 24 09:13:36 XCPNG01 SM: [18259] linstor-manager:get_vhd_info error: No such file or directory

Hello! It looks like you're interested in this conversation, but you don't have an account yet.

Getting fed up of having to scroll through the same posts each visit? When you register for an account, you'll always come back to exactly where you were before, and choose to be notified of new replies (either via email, or push notification). You'll also be able to save bookmarks and upvote posts to show your appreciation to other community members.

With your input, this post could be even better 💗

Register Login