Epyc VM to VM networking slow

-

@john-c If this works, the 600€ Minisforum will have a new job! It's sitting idle in the cabinet now.

-

@manilx said in Epyc VM to VM networking slow:

@john-c If this works, the 600€ Minisforum will have a new job! It's sitting idle in the cabinet now.

Depending on the results of the test, I would recommend actual server hardware for hosting the XO/XOA VM, as server-grade hardware receives more QA than desktops, laptops and mini PCs. So you may need to get an additional server to host the XO/XOA VM.

Actual server hardware also has out-of-band management controllers (BMCs). Non-server-grade hardware often lacks this functionality, so managing and monitoring it remotely is much harder or even impossible.

Finally, server hardware is more likely to be on the XenServer HCL, and therefore more likely to be covered by paid support from Vates.

-

@olivierlambert The user manilx is going to test running XO/XOA on an Intel-based host, to see what the results are like when the EPYC pool(s) connect to it.

They're going to run the test tomorrow and report back with the results. I have posted my recommendation (opinion) above: possibly have an Intel-based, server-grade host run the XO/XOA VM for as long as the EPYC VM-to-VM networking bug persists.

What do you think?

-

@john-c Minisforum NPB7 all set up with XCP-ng 8.2 LTS.

Ready to connect to the network tomorrow. Will have results before 10:00 GMT.

-

@john-c Let's see what the test shows. If this fixes it, I will remove one host (HPE ProLiant DL360 Gen10) from the 3-host DR pool and dedicate it to this.

-

@john-c I did a quick test: installed XO on our DR pool and ran a new full backup. The NICs are 1G only, BUT the backup fully saturates the NIC, double the performance of XOA running on the EPYC hosts!!

Tomorrow, as said, I'll run it on the Minisforum with 2.5G NICs. It should saturate them too (it does @homelab).

-

@manilx said in Epyc VM to VM networking slow:

@john-c I did a quick test: installed XO on our DR pool and ran a new full backup. The NICs are 1G only, BUT the backup fully saturates the NIC, double the performance of XOA running on the EPYC hosts!!

Tomorrow, as said, I'll run it on the Minisforum with 2.5G NICs. It should saturate them too (it does @homelab).

If the dedicated HPE ProLiant DL360 Gen10 were fitted with a 10 Gb/s PCIe Ethernet NIC, that would increase it further, especially with a 4-port 10 Gb/s NIC whose ports are paired into 2 LACP bonds.

-

@john-c If the test goes well, this is what we'll do: buy a 10G NIC (4 ports).

-

@manilx said in Epyc VM to VM networking slow:

@john-c If the test goes well, this is what we'll do: buy a 10G NIC (2 ports).

I've bonded a 4-port NIC (in my case 1 Gb/s only, as that's all my home network can handle) into 2 LACP bonds. Done with a 4-port 10G NIC, it will really fly with 2-pair LACP (IEEE 802.3ad) bonds. By 2-pair LACP bonds I mean 2 bonds with 2 × 10G ports each, to really unleash its performance.

For example, HPE's 536FLR FlexFabric 10Gb 4-port adapter is a 4-port 10G NIC; any other suitable 4-port NIC would also do. A 2-port 10G NIC would work too (without LACP, since there would be only 1 port per network), but the speed increase would be smaller.
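As a rough illustration of the bond layouts above, here is a minimal Python sketch. It assumes the usual 802.3ad behaviour, where each flow is placed on a single member port by hashing its addresses and ports: a bond's aggregate capacity grows with its member count, but any single TCP stream still tops out at one port's speed. `pick_member` is a hypothetical stand-in hash, not the one XCP-ng or any switch actually uses, and the 10G port speed is an assumption.

```python
from __future__ import annotations

import hashlib
from collections import Counter

LINK_GBPS = 10  # assumed speed of each bond member port, in Gb/s


def bond_capacity(members: int, link_gbps: float = LINK_GBPS) -> tuple[float, float]:
    """Return (aggregate, single-flow) capacity of a bond in Gb/s.

    With 802.3ad each flow is pinned to one member link, so the aggregate
    grows with the member count while a single flow is capped at one link.
    """
    return members * link_gbps, link_gbps


def pick_member(src: str, dst: str, sport: int, dport: int, members: int) -> int:
    """Hypothetical layer3+4-style hash: map a flow's 4-tuple to a member index."""
    key = f"{src}|{dst}|{sport}|{dport}".encode()
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:4], "big") % members


if __name__ == "__main__":
    # "2-pair" layout: two bonds, each with two 10G ports.
    print("per bond:", bond_capacity(2))     # (20, 10)
    # 2-port NIC without LACP: one 10G port per network.
    print("per network:", bond_capacity(1))  # (10, 10)
    # Spread 1000 hypothetical flows across a 2-member bond.
    flows = [("10.0.0.1", "10.0.0.2", 40000 + i, 443) for i in range(1000)]
    print(Counter(pick_member(*f, members=2) for f in flows))
```

Under this assumption, one backup stream between XOA and a single NAS rides one member port, so the extra ports in a bond pay off mostly when several transfers run at once.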

https://www.hpe.com/psnow/doc/c04939487.html?ver=13

https://support.hpe.com/hpesc/public/docDisplay?docId=a00018804en_us&docLocale=en_US

-

@manilx P.S. Seems like the slow backup speed WAS related to the EPYC bug, as I suggested a while ago...

Should have tested this "workaround" a LONG time ago.

-

@john-c Tried to test a new backup on the NPB7.

I restored the latest XOA backup to that host (I didn't want to move the original one off the business pool).

When trying to run the backup, I get: Error: feature Unauthorized ???

In the meantime, I've spun up an XO instance on the Intel test host and imported the settings from XOA.

I ran the same backup on both hosts to the same QNAP.

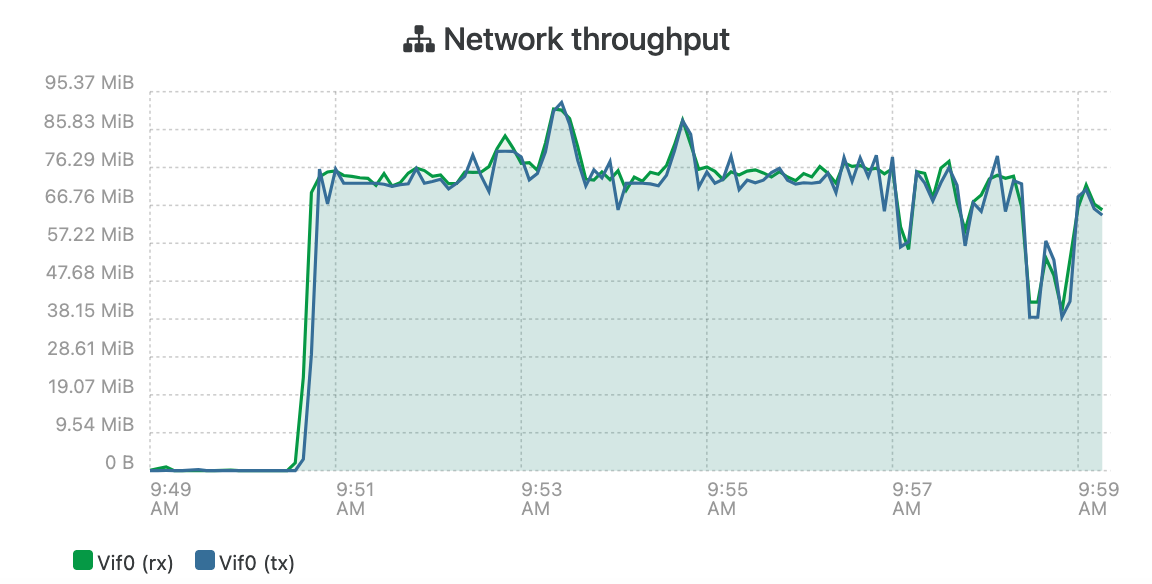

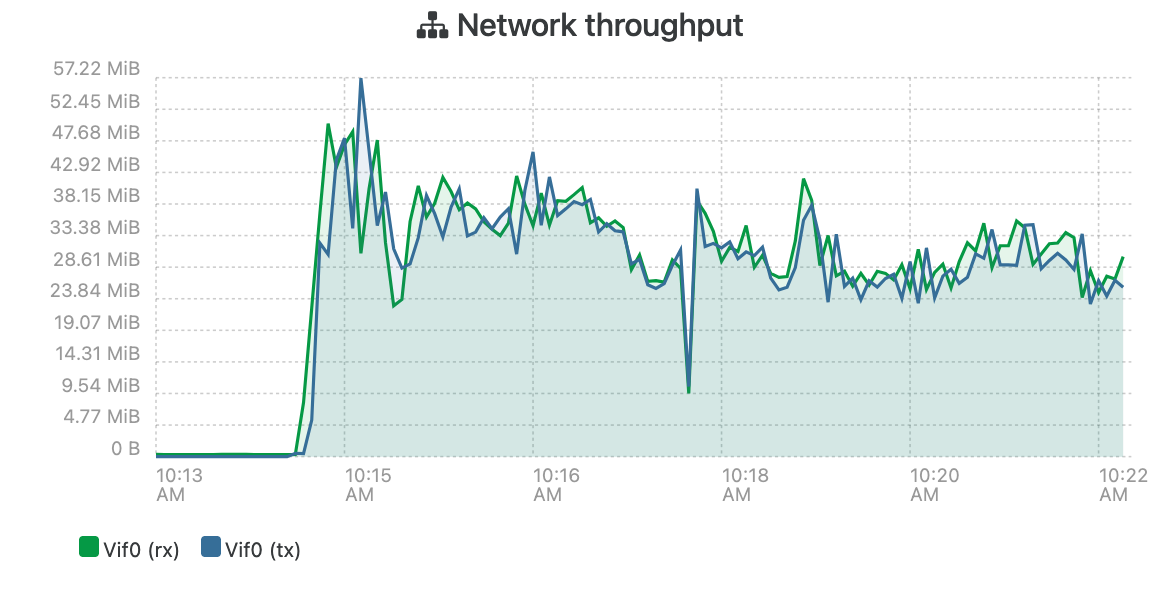

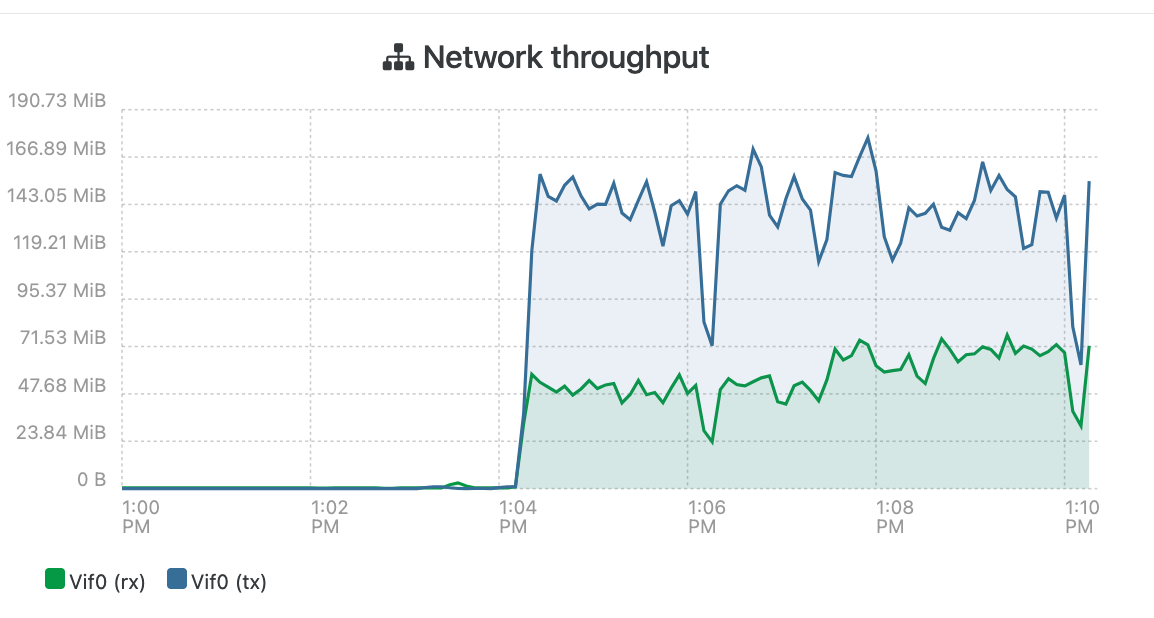

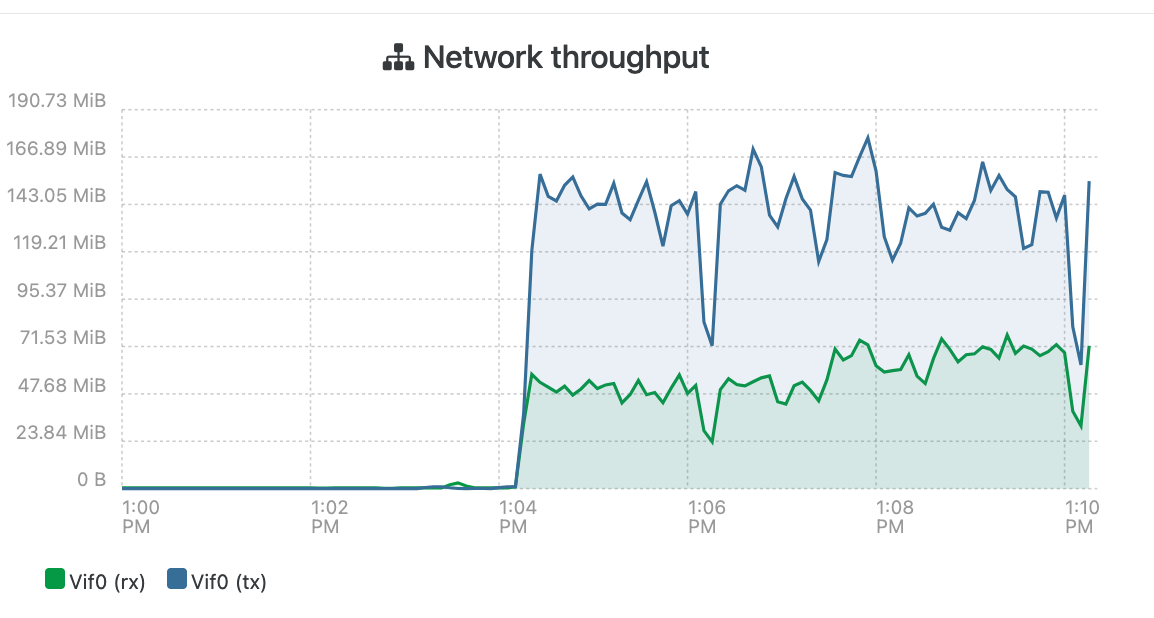

NPB7 Intel host connected via 2.5G:

HP EPYC host connected via 10G:

The difference is apparent!

I will now detach the older HP from the backup pool, set it up as an isolated pool and run XOA from there. I'll order 10G NICs.

Now, why does XOA fail with "Error: feature Unauthorized" when I try to run backups from there??

-

@manilx said in Epyc VM to VM networking slow:

@john-c Tried to test a new backup on the NPB7.

I restored the latest XOA backup to that host (I didn't want to move the original one off the business pool).

When trying to run the backup, I get: Error: feature Unauthorized ???

In the meantime, I've spun up an XO instance on the Intel test host and imported the settings from XOA.

I ran the same backup on both hosts to the same QNAP.

NPB7 Intel host connected via 2.5G:

HP EPYC host connected via 10G:

The difference is apparent!

I will now detach the older HP from the backup pool, set it up as an isolated pool and run XOA from there. I'll order 10G NICs.

Now, why does XOA fail with "Error: feature Unauthorized" when I try to run backups from there??

It's likely because the license is bound to the XO/XOA instance on the EPYC pool. A license can only be bound to one appliance at a time, so the instance on your Intel host runs as unlicensed (Free Edition) until the license is rebound from the EPYC pool's instance.

Also, overnight I realised that to keep XO/XOA available during host updates, the dedicated server would need a second host in its pool. This would allow a Rolling Pool Update (RPU) of the XO/XOA pool when updating XCP-ng.

https://xen-orchestra.com/docs/license_management.html#rebind-xo-license

-

@john-c License moved. All fine.

The backup Intel host is running on 1G NICs (for the time being), bonded with LACP.

Already faster than before.

I have an XO instance running on a Proxmox host so I can manage the pools when the main XOA is down (updates, etc.), so I'm covered there and don't need a second backup host (that would be crazy overkill).

-

@manilx said in Epyc VM to VM networking slow:

@john-c License moved. All fine.

The backup Intel host is running on 1G NICs (for the time being), bonded with LACP.

Already faster than before.

I have an XO instance running on a Proxmox host so I can manage the pools when the main XOA is down (updates, etc.), so I'm covered there and don't need a second backup host (that would be crazy overkill).

I mean reinstall the Proxmox host with XCP-ng and have it join the XO/XOA pool, preferably with the same hardware and components. That way, when the HPE ProLiant DL360 Gen10 is down for updates, the XO/XOA VM can live-migrate between them as required, so you can run an RPU on the dedicated Intel-based XO/XOA hosts.
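The reasoning behind a two-host pool can be sketched as a toy model of a rolling update: hosts are patched one at a time, and each host's VMs are live-migrated to another pool member first, so they keep running. This is a hypothetical Python simulation (the `Host` class, `rolling_update` function and host names are illustrative assumptions), not Xen Orchestra's actual RPU implementation, which does considerably more than this.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Host:
    name: str
    vms: list[str] = field(default_factory=list)
    version: str = "old"


def rolling_update(hosts: list[Host], new_version: str) -> list[str]:
    """Toy rolling pool update: patch hosts one at a time, evacuating each first.

    Needs at least two hosts; with one host there is nowhere to live-migrate
    the VMs, so they would go down while the host reboots.
    """
    if len(hosts) < 2:
        raise ValueError("need at least 2 hosts to keep VMs running during updates")
    log: list[str] = []
    for host in hosts:
        # Live-migrate every VM on this host to some other pool member.
        target = next(h for h in hosts if h is not host)
        for vm in host.vms:
            log.append(f"migrate {vm}: {host.name} -> {target.name}")
        target.vms.extend(host.vms)
        host.vms.clear()
        # The host is now empty, so it can be patched and rebooted.
        host.version = new_version
        log.append(f"update+reboot {host.name}")
    return log


if __name__ == "__main__":
    # Hypothetical pool: the DL360 plus a second XCP-ng host.
    pool = [Host("dl360", ["xoa"]), Host("second-host")]
    for line in rolling_update(pool, "patched"):
        print(line)
```

In this model the XOA VM moves to the second host while the DL360 reboots and moves back while the second host reboots; with a single-host pool the function refuses, because the VM would be down during the reboot.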

-

@john-c The Proxmox host is a Protectli. All good. XOA will be on the single-host Intel pool; no need for redundancy here.

XO on Proxmox for emergencies... Remember: this is ALL a WORKAROUND for the stupid AMD EPYC bug!!!!!!

This is by no means the final solution. The final solution is XOA running on our EPYC production pool, as it was.

-

@manilx said in Epyc VM to VM networking slow:

@john-c The Proxmox host is a Protectli. All good. XOA will be on the single-host Intel pool; no need for redundancy here.

XO on Proxmox for emergencies... Remember: this is ALL a WORKAROUND for the stupid AMD EPYC bug!!!!!!

This is by no means the final solution. The final solution is XOA running on our EPYC production pool, as it was.

Alright, in that case just the HPE ProLiant DL360 Gen10 as the dedicated XO/XOA host. But bear in mind that while XCP-ng on it is being updated, the host, and therefore that XO/XOA instance, will be unavailable until it has finished rebooting.

-

@john-c Yes, obviously. For that I have XO on a mini PC.

-

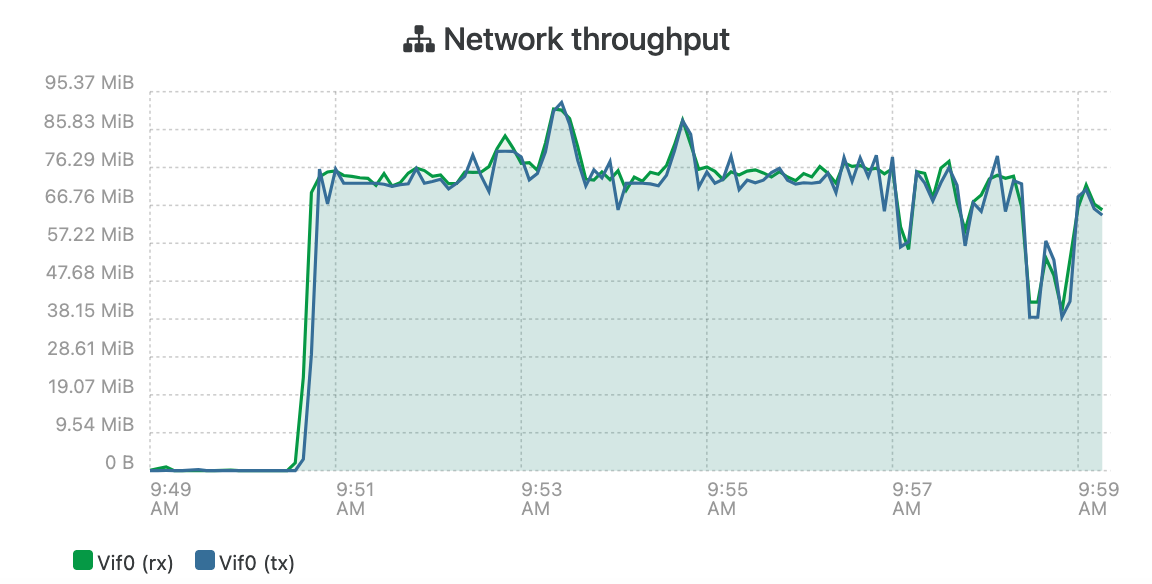

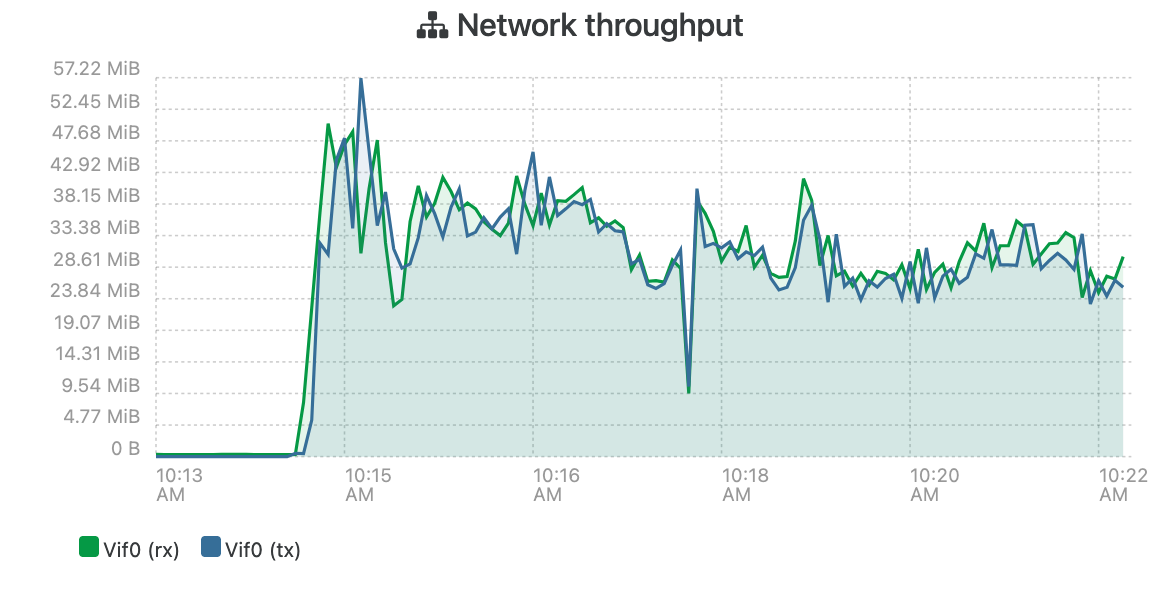

@john-c @olivierlambert

One of our standard backup jobs. This is a 100% increase!!! On a 1G LACP bond, instead of 10G on the EPYC host! 1.5 years battling with this, and in the end it's all due to the same issue, as we now see.

-

@manilx It's definitely interesting to see more proof that this bug may be wider than expected.

-

@manilx said in Epyc VM to VM networking slow:

@john-c @olivierlambert

One of our standard backup jobs. This is a 100% increase!!! On a 1G LACP bond, instead of 10G on the EPYC host! 1.5 years battling with this, and in the end it's all due to the same issue, as we now see.

Don't forget to post the comparison and screenshots as well once you have fitted the 4-port 10G NIC with the 2 LACP bonds to the Intel HPE!