Device Emulation in the Xen Hypervisor for HVM Guests

This article looks at how device emulation is implemented in the Xen hypervisor and in the device models that run in the control or hardware domain.

At Vates, we've been leveraging the device emulation hypercall interface of the Xen hypervisor in our implementation of UEFI Secure Boot for HVM guests, so we will look at the components in the hypervisor that make this possible.

Hardware-assisted Virtualization (HVM)

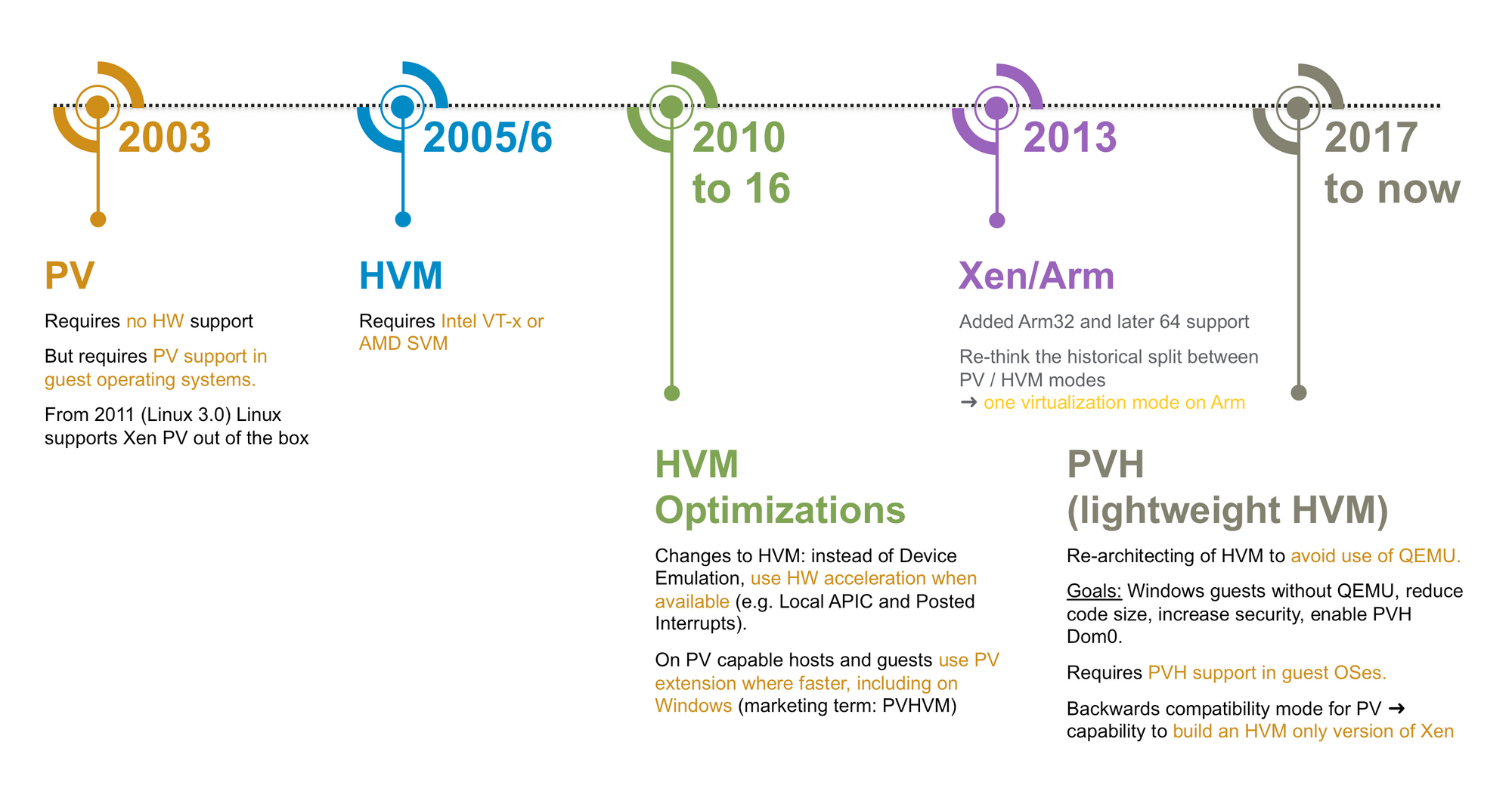

As previously mentioned, we are focused on device emulation for HVM guests. HVM guests use the full virtualization capabilities of the processor (e.g., Intel VT-x or AMD-V on x86, or ARMv8 with EL2 implemented). This includes hardware support for multi-stage memory virtualization (EPT on Intel or stage-2 tables on ARMv8), interrupts (IRQ injection and routing), and the CPU itself (swapping registers based on the current virtualization mode and trapping on privileged instructions). These processor features allow us to maximize the amount of processor time spent in guests rather than in the hypervisor, and they anchor many of the security requirements of virtualization in the hardware itself. Because these virtualization requirements move from software (in PV) to hardware (in HVM), your deployment will likely gain better performance, stronger security through hardware-backed isolation, and a potentially smaller software attack surface.

Although these hardware features virtualize key system components, much is still left to be done to offer guest operating systems the facade of exclusive and complete system access. You might be able to give a single guest operating system access to a piece of hardware via interrupt injection and nested page tables alone, but you will not be able to share that device across guests with just these features. You can convince yourself of this by imagining a shared device that signals data availability via an interrupt. Even if you could get away with forwarding this interrupt to two guests (in practice you cannot), how would the device itself respond to the duplicated accesses performed by the two guests? For many devices, the data would already have been consumed by the first guest's access and would be lost to subsequent accesses by other guests.
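The lost-data problem can be sketched in a few lines of C. This is a minimal illustration, not a model of any real device: `struct rx_device`, `device_receive`, and `device_read` are hypothetical names, and we simply assume a receive register that is cleared when it is read.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical device with a one-byte receive register that is
 * cleared by reading it. */
struct rx_device {
    uint8_t data;       /* last byte received */
    bool    data_ready; /* set when data arrives, cleared on read */
};

/* A byte arrives; a real device would raise its interrupt line here. */
static void device_receive(struct rx_device *dev, uint8_t byte)
{
    assert(dev != NULL);
    dev->data = byte;
    dev->data_ready = true;
}

/* Reading the data register consumes the byte. Returns true and stores
 * the byte in *out if data was pending, false otherwise. */
static bool device_read(struct rx_device *dev, uint8_t *out)
{
    assert(dev != NULL && out != NULL);
    if (!dev->data_ready)
        return false;
    *out = dev->data;
    dev->data_ready = false;
    return true;
}
```

If two guests both react to the same interrupt and each calls `device_read`, only the first call returns the byte; the second finds the register already empty.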

Device emulation solves this problem and offers solutions to other problems as well.

How does device emulation in the Xen hypervisor work and how does it solve this issue?

Device Emulation for HVMs

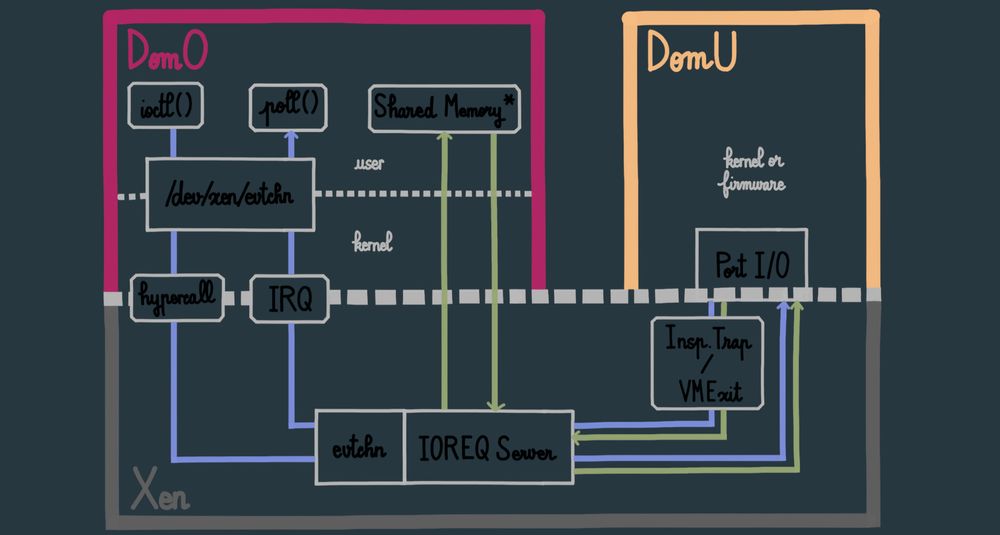

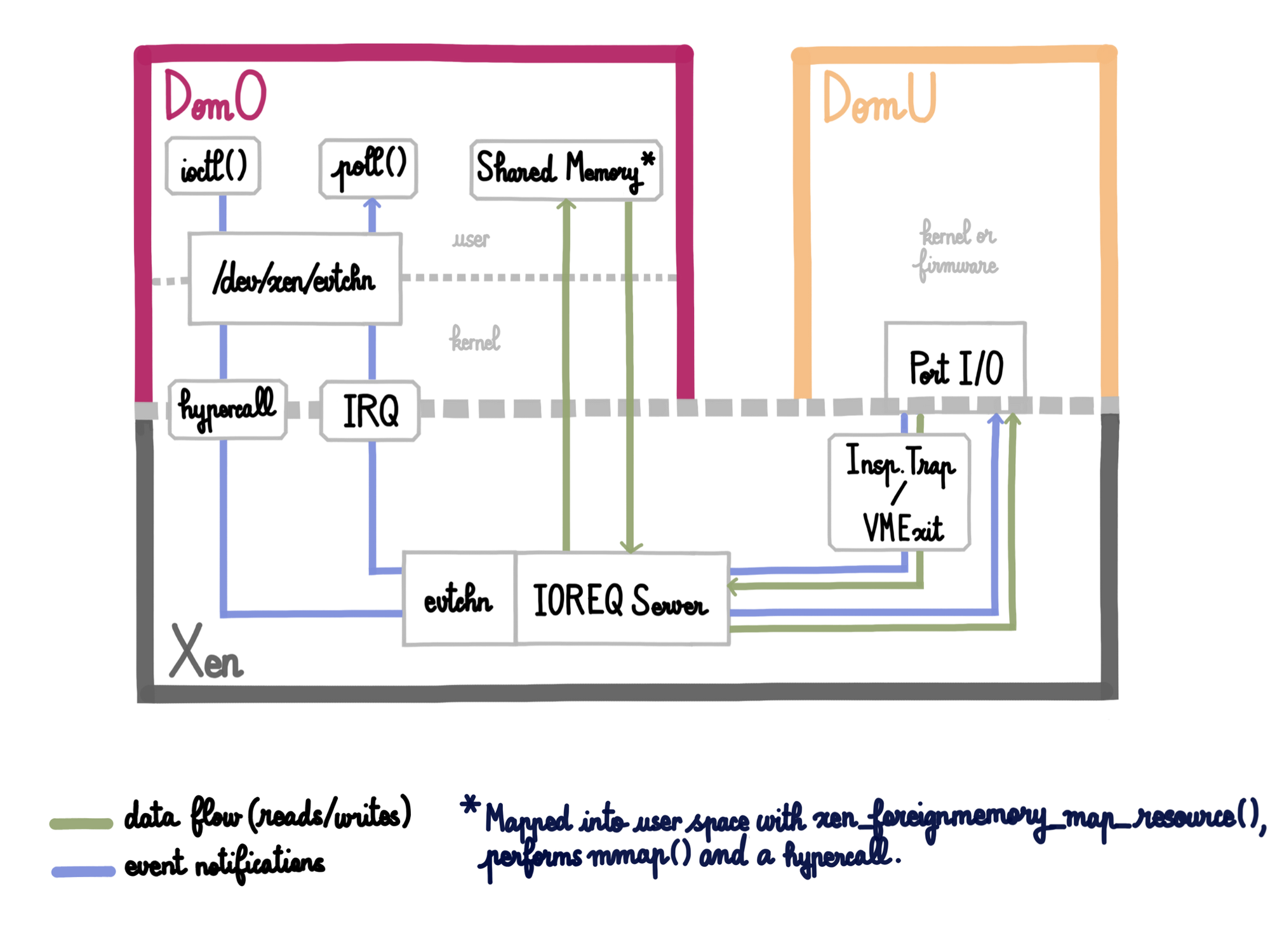

Xen implements infrastructure to support the emulation of devices for guests. This infrastructure is exposed to user space in a privileged domain via the /proc/xen/privcmd ioctl interface (also available as /dev/xen/privcmd on current Linux kernels), which is backed by a kernel driver that performs hypercalls, causing the system to trap into Xen's hypercall handler. Xen's user-space libraries (xendevicemodel, xenforeignmemory, xenevtchn, and friends) provide an abstracted interface for making well-formed hypercalls against /proc/xen/privcmd, and we will refer to them throughout this post. Remember that these APIs are what the device emulator uses to communicate with the Xen hypervisor, but that they are simply an abstraction of the hypercall data flow described above: from user space, through the kernel, and finally to the hypervisor.

Because the Secure Boot feature for guest VMs that we have been developing for XCP-ng relies on port I/O, we will focus on the emulation of port I/O devices.

To emulate a port I/O device, you implement a user-space program in a privileged domain (we recommend a hardware domain separate from your dom0, if possible). After initializing the library interfaces, this program asks Xen to create what is called an IOREQ server (I/O request server) on its behalf. An IOREQ server is an I/O communication management construct implemented by the hypervisor. It is registered to a specific domain, has an ID, has event channel ports allocated to it, and is backed by two pages of memory that the hypervisor allocates for I/O requests (one page for buffered I/O and one for non-buffered I/O). Once the hypervisor has initialized the IOREQ server, it returns the server's ID to user space via the kernel driver. The program must then make three further requests: that the server's pages be mapped into its own address space, that the IOREQ server's event channel ports be bound to the domain for which devices are being emulated, and that the hypervisor notify it via event channels whenever the guest accesses a specified range of I/O ports.
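To make the pieces of state described above more concrete, here is a deliberately simplified, self-contained C model of an IOREQ server and its registered port ranges. It does not call into Xen. The struct, the function names, and the example ports are all hypothetical, chosen only for illustration. In a real emulator, the corresponding steps go through libxendevicemodel (for example `xendevicemodel_create_ioreq_server` and `xendevicemodel_map_io_range_to_ioreq_server`); consult the library headers for the exact signatures.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define MAX_RANGES 4

/* Inclusive range of I/O ports claimed by an emulator. */
struct port_range {
    uint16_t start;
    uint16_t end;
};

/* Simplified stand-in for the per-server state described in the text.
 * The shared pages and event channel ports are left out of this model. */
struct ioreq_server_model {
    uint16_t id;     /* ID handed back to user space */
    uint16_t domid;  /* guest domain devices are emulated for */
    size_t   nr_ranges;
    struct port_range ranges[MAX_RANGES];
};

/* Register an inclusive port range. Returns 0 on success, -1 on error. */
static int model_map_port_range(struct ioreq_server_model *s,
                                uint16_t start, uint16_t end)
{
    assert(s != NULL);
    if (start > end || s->nr_ranges == MAX_RANGES)
        return -1;
    s->ranges[s->nr_ranges].start = start;
    s->ranges[s->nr_ranges].end = end;
    s->nr_ranges++;
    return 0;
}

/* Does an access of `size` bytes at `port` fall entirely inside one of
 * the ranges this server has claimed? */
static bool model_claims_port(const struct ioreq_server_model *s,
                              uint16_t port, uint8_t size)
{
    assert(s != NULL && size > 0);
    uint32_t last = (uint32_t)port + size - 1;
    for (size_t i = 0; i < s->nr_ranges; i++) {
        if (port >= s->ranges[i].start && last <= s->ranges[i].end)
            return true;
    }
    return false;
}
```

For example, after registering ports 0x100 through 0x103, a 4-byte access at 0x100 is claimed by the server, while a 4-byte access at 0x102 is not, because it runs past the end of the range.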

Once this circuitry is hooked up, a port I/O instruction executed by the guest traps into Xen, and Xen recognizes the accessed port as falling within the range registered for the IOREQ server (see xen/arch/x86/hvm/ioreq.c:hvm_select_ioreq_server). The hypervisor copies the I/O request data to the IOREQ server page and issues an event notification on the server's event channel port. This notification crosses the hypervisor/kernel boundary as an interrupt to the kernel, and reaches user space when the poll system call on the event channel file descriptor returns. The application can then use the shared memory (already mapped into its address space, as described above) to read the I/O request and emulate the expected behavior of the device. It returns data to the guest by writing back to the I/O request page and issuing an event channel notification.
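The emulator's side of this exchange can be sketched as follows. This is a self-contained approximation under stated assumptions: `struct io_request` is a simplified stand-in for a slot in the shared page (the real layout lives in Xen's public headers), and `latch_device`, `LATCH_PORT`, and `handle_request` are hypothetical names for a toy one-register device. A real emulator would also need memory barriers around its accesses to the shared page and an event channel notification after completing each request. Both are omitted here.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Simplified request states and direction (from the guest's viewpoint). */
enum req_state { REQ_NONE, REQ_READY, REQ_INPROCESS, RESP_READY };
enum req_dir   { DIR_WRITE, DIR_READ };

/* Simplified stand-in for one slot in the shared I/O request page. */
struct io_request {
    uint64_t addr;        /* I/O port accessed by the guest */
    uint64_t data;        /* value written, or slot for the value read */
    uint32_t size;        /* access width in bytes */
    enum req_dir dir;
    enum req_state state;
};

/* Hypothetical emulated device: a single one-byte latch register. */
#define LATCH_PORT 0x100u
struct latch_device { uint8_t value; };

/* Handle one pending request. Returns 1 if a request was completed (the
 * caller would then notify the guest via the event channel), or 0 if
 * nothing was pending. */
static int handle_request(struct latch_device *dev, struct io_request *req)
{
    assert(dev != NULL && req != NULL);
    if (req->state != REQ_READY)
        return 0;
    req->state = REQ_INPROCESS;

    if (req->addr == LATCH_PORT && req->size == 1) {
        if (req->dir == DIR_WRITE)
            dev->value = (uint8_t)req->data;  /* guest OUT: store byte */
        else
            req->data = dev->value;           /* guest IN: return byte */
    } else if (req->dir == DIR_READ) {
        /* Reads we do not emulate return all ones for the access width. */
        req->data = (req->size >= 8) ? ~0ull
                                     : ((1ull << (8 * req->size)) - 1);
    }

    req->state = RESP_READY;
    return 1;
}
```

In a real device model, a function like this would be called each time poll reports activity on the event channel file descriptor, once for every request slot that is in the ready state.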

Summary

The IOREQ server infrastructure in Xen makes it relatively simple to implement the necessary "hookups" between your device emulator and the guest. The method described above is used by QEMU and by DEMU, the Xen reference implementation, and it can be used for MMIO as well as PIO.

This approach also allows us to offload sensitive workloads to protected domains, using the hypervisor and hardware-enforced memory isolation between the domains as a high-privilege security boundary (i.e., a malicious guest program will lack access to the domain doing the emulation). This is the exact approach we have followed for protecting the sensitive information for "Secure Boot"-enabled guests on XCP-ng. That, however, will be for a future blog post.