XOA 6.2 parent VHD is missing followed by full Backup

-

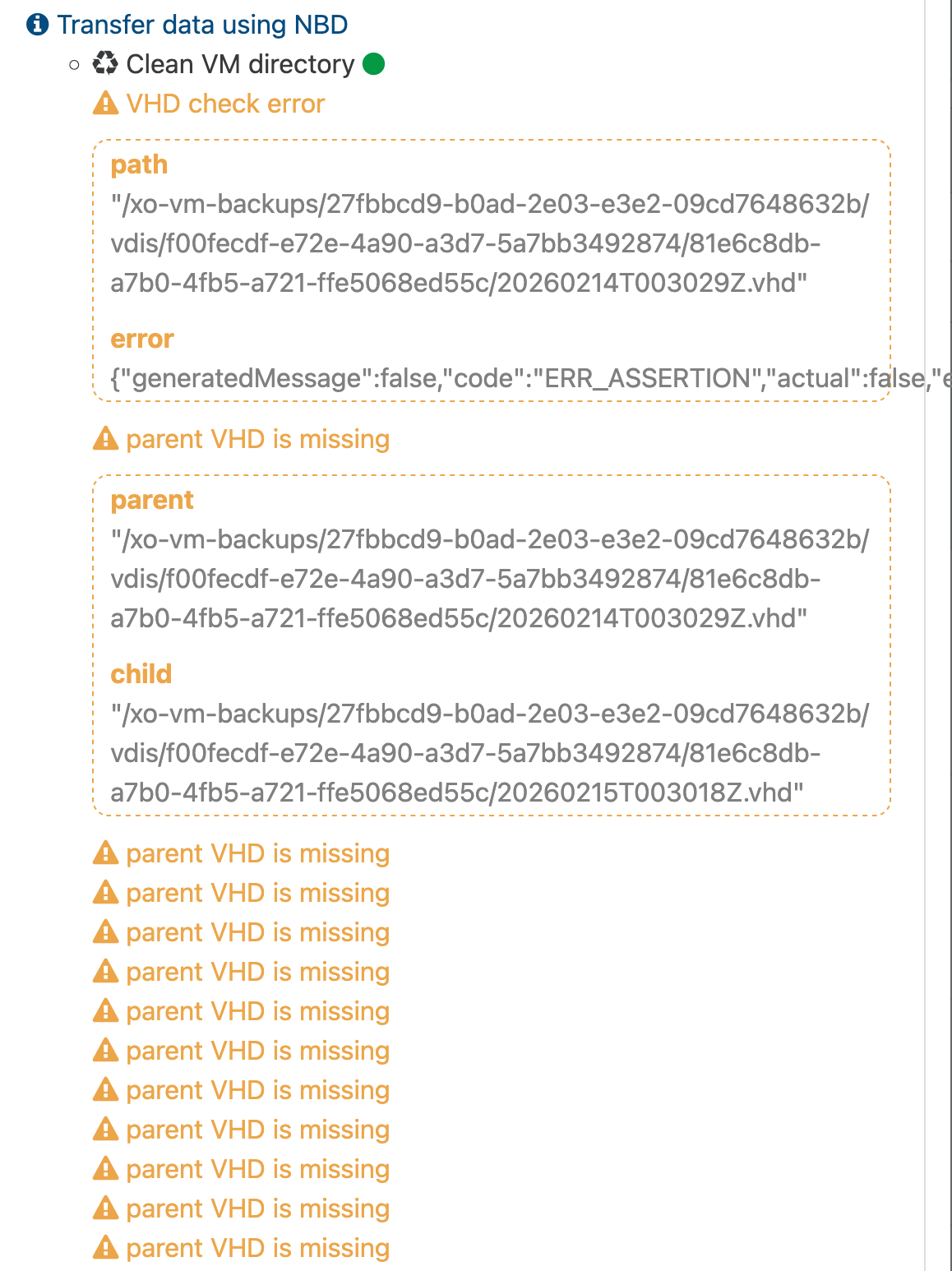

Since installing today's XOA appliance update, I am noticing strange behaviour on multiple XO instances while backups are running (2 XOA and my own XOCE in my home lab).

A number of VMs will display the error attached and then run a full backup. These jobs have been running flawlessly for quite some time now. This wipes out the whole backup chain and leaves you with a single full backup in the end.

Looking at `sudo journalctl -u xo-server -f -n 50` on XOA, I see the following happening during the merge of each VM:

    Feb 26 21:20:24 xoa xo-server[1731622]: 2026-02-27T03:20:24.207Z xo:backups:mergeWorker INFO merge in progress {
    Feb 26 21:20:24 xoa xo-server[1731622]: done: 2647,
    Feb 26 21:20:24 xoa xo-server[1731622]: parent: '/xo-vm-backups/d6befe36-fea7-04f3-25f1-feada684702b/vdis/8ca7c07e-4509-463d-8867-87e6b2026ad6/5afe2bcd-42d4-4b58-ba95-2c77e5107692/20260207T014453Z.vhd',
    Feb 26 21:20:24 xoa xo-server[1731622]: progress: 93,
    Feb 26 21:20:24 xoa xo-server[1731622]: total: 2845
    Feb 26 21:20:24 xoa xo-server[1731622]: }
    Feb 26 21:20:32 xoa xo-server[1731622]: 2026-02-27T03:20:32.199Z xo:fs:abstract WARN retrying method on fs {
    Feb 26 21:20:32 xoa xo-server[1731622]: method: '_write',
    Feb 26 21:20:32 xoa xo-server[1731622]: attemptNumber: 0,
    Feb 26 21:20:32 xoa xo-server[1731622]: delay: 100,
    Feb 26 21:20:32 xoa xo-server[1731622]: error: Error: EBADF: bad file descriptor, write
    Feb 26 21:20:32 xoa xo-server[1731622]: From:
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler.addSyncStackTrace (/usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/fs/dist/local.js:21:26)
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler._writeFd (/usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/fs/dist/local.js:209:35)
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler._write (/usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/fs/dist/abstract.js:680:25)
    Feb 26 21:20:32 xoa xo-server[1731622]: at loopResolver (/usr/local/lib/node_modules/xo-server/node_modules/promise-toolbox/retry.js:83:46)
    Feb 26 21:20:32 xoa xo-server[1731622]: at new Promise (<anonymous>)
    Feb 26 21:20:32 xoa xo-server[1731622]: at loop (/usr/local/lib/node_modules/xo-server/node_modules/promise-toolbox/retry.js:85:22)
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler.retry (/usr/local/lib/node_modules/xo-server/node_modules/promise-toolbox/retry.js:87:10)
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler._write (/usr/local/lib/node_modules/xo-server/node_modules/promise-toolbox/retry.js:103:18)
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler.write (/usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/fs/dist/abstract.js:482:16)
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler.write (/usr/local/lib/node_modules/xo-server/node_modules/limit-concurrency-decorator/index.js:97:24) {
    Feb 26 21:20:32 xoa xo-server[1731622]: errno: -9,
    Feb 26 21:20:32 xoa xo-server[1731622]: code: 'EBADF',
    Feb 26 21:20:32 xoa xo-server[1731622]: syscall: 'write'
    Feb 26 21:20:32 xoa xo-server[1731622]: },
    Feb 26 21:20:32 xoa xo-server[1731622]: file: {
    Feb 26 21:20:32 xoa xo-server[1731622]: fd: 17,
    Feb 26 21:20:32 xoa xo-server[1731622]: path: '/xo-vm-backups/d6befe36-fea7-04f3-25f1-feada684702b/vdis/8ca7c07e-4509-463d-8867-87e6b2026ad6/5afe2bcd-42d4-4b58-ba95-2c77e5107692/20260206T014545Z.vhd'
    Feb 26 21:20:32 xoa xo-server[1731622]: },
    Feb 26 21:20:32 xoa xo-server[1731622]: retryOpts: {}
    Feb 26 21:20:32 xoa xo-server[1731622]: }
    Feb 26 21:20:32 xoa xo-server[1731622]: 2026-02-27T03:20:32.200Z vhd-lib:VhdFile INFO double close {
    Feb 26 21:20:32 xoa xo-server[1731622]: path: {
    Feb 26 21:20:32 xoa xo-server[1731622]: fd: 18,
    Feb 26 21:20:32 xoa xo-server[1731622]: path: '/xo-vm-backups/d6befe36-fea7-04f3-25f1-feada684702b/vdis/8ca7c07e-4509-463d-8867-87e6b2026ad6/5afe2bcd-42d4-4b58-ba95-2c77e5107692/20260207T014453Z.vhd'
    Feb 26 21:20:32 xoa xo-server[1731622]: },
    Feb 26 21:20:32 xoa xo-server[1731622]: firstClosedBy: 'Error\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at VhdFile.dispose (/usr/local/lib/node_modules/xo-server/node_modules/vhd-lib/Vhd/VhdFile.js:134:22)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at RemoteVhdDisk.dispose (/usr/local/lib/node_modules/xo-server/node_modules/vhd-lib/Vhd/VhdFile.js:104:26)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at RemoteVhdDisk.close (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDisk.mjs:101:24)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at RemoteVhdDisk.unlink (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDisk.mjs:426:16)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at RemoteVhdDiskChain.unlink (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDiskChain.mjs:268:18)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at #step_cleanup (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/MergeRemoteDisk.mjs:279:23)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at async MergeRemoteDisk.merge (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/MergeRemoteDisk.mjs:159:11)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at async _mergeVhdChain (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_cleanVm.mjs:56:20)',
    Feb 26 21:20:32 xoa xo-server[1731622]: closedAgainBy: 'Error\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at VhdFile.dispose (/usr/local/lib/node_modules/xo-server/node_modules/vhd-lib/Vhd/VhdFile.js:129:24)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at RemoteVhdDisk.dispose (/usr/local/lib/node_modules/xo-server/node_modules/vhd-lib/Vhd/VhdFile.js:104:26)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at RemoteVhdDisk.close (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDisk.mjs:101:24)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDiskChain.mjs:85:52\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at Array.map (<anonymous>)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at RemoteVhdDiskChain.close (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDiskChain.mjs:85:35)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at #cleanup (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/MergeRemoteDisk.mjs:309:21)\n' +
    Feb 26 21:20:32 xoa xo-server[1731622]: ' at async _mergeVhdChain (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_cleanVm.mjs:56:20)'
    Feb 26 21:20:32 xoa xo-server[1731622]: }
    Feb 26 21:20:32 xoa xo-server[1731622]: 2026-02-27T03:20:32.274Z xo:backups:mergeWorker FATAL ENOENT: no such file or directory, rename '/run/xo-server/mounts/cd3f089c-7064-412e-86e3-b62c5647021a/xo-vm-backups/.queue/clean-vm/_20260227T020329Z-ukxolxktxyl' -> '/run/xo-server/mounts/cd3f089c-7064-412e-86e3-b62c5647021a/xo-vm-backups/.queue/clean-vm/__20260227T020329Z-ukxolxktxyl' {
    Feb 26 21:20:32 xoa xo-server[1731622]: error: Error: ENOENT: no such file or directory, rename '/run/xo-server/mounts/cd3f089c-7064-412e-86e3-b62c5647021a/xo-vm-backups/.queue/clean-vm/_20260227T020329Z-ukxolxktxyl' -> '/run/xo-server/mounts/cd3f089c-7064-412e-86e3-b62c5647021a/xo-vm-backups/.queue/clean-vm/__20260227T020329Z-ukxolxktxyl'
    Feb 26 21:20:32 xoa xo-server[1731622]: From:
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler.addSyncStackTrace (/usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/fs/dist/local.js:21:26)
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler._rename (/usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/fs/dist/local.js:191:35)
    Feb 26 21:20:32 xoa xo-server[1731622]: at /usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/fs/dist/utils.js:29:26
    Feb 26 21:20:32 xoa xo-server[1731622]: at new Promise (<anonymous>)
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler.<anonymous> (/usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/fs/dist/utils.js:24:12)
    Feb 26 21:20:32 xoa xo-server[1731622]: at loopResolver (/usr/local/lib/node_modules/xo-server/node_modules/promise-toolbox/retry.js:83:46)
    Feb 26 21:20:32 xoa xo-server[1731622]: at new Promise (<anonymous>)
    Feb 26 21:20:32 xoa xo-server[1731622]: at loop (/usr/local/lib/node_modules/xo-server/node_modules/promise-toolbox/retry.js:85:22)
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler.retry (/usr/local/lib/node_modules/xo-server/node_modules/promise-toolbox/retry.js:87:10)
    Feb 26 21:20:32 xoa xo-server[1731622]: at LocalHandler._rename (/usr/local/lib/node_modules/xo-server/node_modules/promise-toolbox/retry.js:103:18) {
    Feb 26 21:20:32 xoa xo-server[1731622]: errno: -2,
    Feb 26 21:20:32 xoa xo-server[1731622]: code: 'ENOENT',
    Feb 26 21:20:32 xoa xo-server[1731622]: syscall: 'rename',
    Feb 26 21:20:32 xoa xo-server[1731622]: path: '/run/xo-server/mounts/cd3f089c-7064-412e-86e3-b62c5647021a/xo-vm-backups/.queue/clean-vm/_20260227T020329Z-ukxolxktxyl',
    Feb 26 21:20:32 xoa xo-server[1731622]: dest: '/run/xo-server/mounts/cd3f089c-7064-412e-86e3-b62c5647021a/xo-vm-backups/.queue/clean-vm/__20260227T020329Z-ukxolxktxyl'
    Feb 26 21:20:32 xoa xo-server[1731622]: }
    Feb 26 21:20:32 xoa xo-server[1731622]: }

These files do exist before the job runs, but are erased during job execution.

Remotes are all NFS-based: my home lab and one location use TrueNAS, and another location uses NFS backed by Ubuntu 24.04 LTS. The NFS server type does not seem to make any difference.

I submitted a ticket for this since I have pro support, but wanted to put this out to the community to see if anyone else is running into this. I have reverted back to 6.1.2 and things are operating smoothly again.

-

Some additional information since my original post.

The first time a backup runs after upgrading, I see the log entries I posted above. The second time the backup runs, I see the messages in the screenshot I posted, and a full backup runs as a result. So it is easy to think everything is working fine after updating and running a job only once, unless you are watching xo-server via journalctl or run the job a second time. I have run into this across 2 XOA appliances as well as 2 XOCE installs, all on unrelated networks / locations.
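For anyone who would rather not tail the journal by hand, here is a minimal sketch that scans xo-server journal output for the three error signatures quoted in the log above. The function names `scan` and `read_journal`, the signature labels, and the `--since today` window are my own assumptions and not part of XO. The regular expressions only match the exact wording seen in this thread, so other builds may word these errors differently.

```python
import re
import subprocess

# Patterns copied from the log excerpt in this thread; the labels are made up here.
SIGNATURES = {
    "merge_fatal": re.compile(r"xo:backups:mergeWorker FATAL"),
    "bad_fd_write": re.compile(r"EBADF: bad file descriptor, write"),
    "queue_rename": re.compile(
        r"ENOENT: no such file or directory, rename .*/\.queue/clean-vm/"
    ),
}


def scan(lines):
    """Return (label, line) pairs for every journal line matching a signature."""
    hits = []
    for line in lines:
        for label, pattern in SIGNATURES.items():
            if pattern.search(line):
                hits.append((label, line.rstrip("\n")))
    return hits


def read_journal(since="today"):
    """Read xo-server journal lines (assumes the systemd unit is 'xo-server')."""
    result = subprocess.run(
        ["journalctl", "-u", "xo-server", "--since", since, "--no-pager"],
        capture_output=True,
        text=True,
        check=False,
    )
    return result.stdout.splitlines()
```

Running `for label, line in scan(read_journal()): print(label, line)` after the first post-upgrade backup should show whether the merge hit these errors, before a second run falls back to a full backup.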

-

@flakpyro we found a race condition in our code.

We will do a complete post-mortem afterwards, but for now:

- the fix is in master (https://github.com/vatesfr/xen-orchestra/commit/53f5c63fa242e398caab583223719741cd25bb1d)

- we are preparing a patch that will be released as soon as we are done with QA

-

The patch is released.

-

@florent thanks for the quick work on this. I have installed the patch and am running a test job. So far I do not see the rename error in the logs. The only error I see is:

    Feb 27 08:26:45 xoa xo-server[1792434]: 2026-02-27T14:26:45.711Z vhd-lib:VhdFile INFO double close {
    Feb 27 08:26:45 xoa xo-server[1792434]: path: {
    Feb 27 08:26:45 xoa xo-server[1792434]: fd: 18,
    Feb 27 08:26:45 xoa xo-server[1792434]: path: '/xo-vm-backups/2e8d1b6c-5fe8-0f41-22b9-777eb61f582a/vdis/08dce402-b472-4fd5-ab38-549b4c263c31/6b1191c3-8052-4e16-9cfa-ede59bf790ea/20260131T040121Z.vhd'
    Feb 27 08:26:45 xoa xo-server[1792434]: },
    Feb 27 08:26:45 xoa xo-server[1792434]: firstClosedBy: 'Error\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at VhdFile.dispose (/usr/local/lib/node_modules/xo-server/node_modules/vhd-lib/Vhd/VhdFile.js:134:22)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at RemoteVhdDisk.dispose (/usr/local/lib/node_modules/xo-server/node_modules/vhd-lib/Vhd/VhdFile.js:104:26)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at RemoteVhdDisk.close (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDisk.mjs:101:24)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at RemoteVhdDisk.unlink (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDisk.mjs:427:16)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at RemoteVhdDiskChain.unlink (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDiskChain.mjs:269:18)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at #step_cleanup (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/MergeRemoteDisk.mjs:279:23)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at async MergeRemoteDisk.merge (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/MergeRemoteDisk.mjs:159:11)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at async _mergeVhdChain (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_cleanVm.mjs:56:20)',
    Feb 27 08:26:45 xoa xo-server[1792434]: closedAgainBy: 'Error\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at VhdFile.dispose (/usr/local/lib/node_modules/xo-server/node_modules/vhd-lib/Vhd/VhdFile.js:129:24)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at RemoteVhdDisk.dispose (/usr/local/lib/node_modules/xo-server/node_modules/vhd-lib/Vhd/VhdFile.js:104:26)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at RemoteVhdDisk.close (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDisk.mjs:101:24)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDiskChain.mjs:85:52\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at Array.map (<anonymous>)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at RemoteVhdDiskChain.close (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/RemoteVhdDiskChain.mjs:85:35)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at #cleanup (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/disks/MergeRemoteDisk.mjs:309:21)\n' +
    Feb 27 08:26:45 xoa xo-server[1792434]: ' at async _mergeVhdChain (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_cleanVm.mjs:56:20)'
    Feb 27 08:26:45 xoa xo-server[1792434]: }

I'm guessing this is unrelated?

-

@flakpyro the double close will be improved, but this is only a log issue (we close the file twice to ensure it is closed both in the nominal case and in case of error).
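To illustrate the pattern described here for other readers, this is a rough sketch of a close-twice cleanup in which the second close is a harmless no-op that only produces a log line. It is not the actual Xen Orchestra code (which is JavaScript); `TrackedFile` and `merge_step` are hypothetical names invented for this example.

```python
import logging

log = logging.getLogger("double_close_demo")


class TrackedFile:
    """Hypothetical file wrapper whose close() is safe to call more than once."""

    def __init__(self, path, mode="rb"):
        self.path = path
        self._fh = open(path, mode)
        self.close_calls = 0

    @property
    def closed(self):
        return self._fh.closed

    def close(self):
        self.close_calls += 1
        if self._fh.closed:
            # Second call: nothing left to release, so only report it.
            log.info("double close %s", self.path)
            return
        self._fh.close()


def merge_step(tracked, fail=False):
    """Close in the nominal path, then again in cleanup to cover the error path."""
    try:
        if fail:
            raise RuntimeError("simulated merge failure")
        tracked.close()  # nominal case
    finally:
        tracked.close()  # cleanup runs on success and on error
```

On success the file is closed once and the cleanup call only logs; on failure the cleanup call is the one that actually closes the file. Either way the descriptor is released, which matches the description of the "double close" message as a logging matter rather than a functional problem.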

We made the minimal patch to ensure the root issue is addressed before the weekend.

-

@florent Awesome, I have patched a second location's XOA and am no longer experiencing the issue there either.
