-

Okay great

If you can reproduce, it would be even better to try the migration with the xe CLI; this way we remove more moving pieces in the middle.

-

@olivierlambert Sorry, it took some time to get around to this. But trying to migrate a VDI from an NFS store to XOSTOR is still failing most of the time. This is a VM that was created some time ago - if I do the same with the VDI of a recently created VM, the migration seems to work OK.

    >xe vm-disk-list vm=lb01
    Disk 0 VBD:
    uuid ( RO)             : d9d06048-6f91-1913-714d-xxxxxxxxaece
        vm-name-label ( RO): lb01
           userdevice ( RW): 0

    Disk 0 VDI:
    uuid ( RO)             : a38f27e8-c6a0-49d3-9fd3-xxxxxxxx10e3
           name-label ( RW): lb01_tnc01_hdd
        sr-name-label ( RO): XCPNG_VMs_TrueNAS
         virtual-size ( RO): 10737418240

    >xe sr-list name-label=XOSTOR01
    uuid ( RO)                : cf896912-cd71-d2b2-488a-xxxxxxxx7c87
              name-label ( RW): XOSTOR01
        name-description ( RW):
                    host ( RO): <shared>
                    type ( RO): linstor
            content-type ( RO):

    >xe vdi-pool-migrate uuid=a38f27e8-c6a0-49d3-9fd3-xxxxxxxx10e3 sr-uuid=cf896912-cd71-d2b2-488a-xxxxxxxx7c87
    Error code: SR_BACKEND_FAILURE_46
    Error parameters: , The VDI is not available [opterr=Could not load 735fc2d7-f1f0-4cc6-9d35-xxxxxxxxec6c because: ['XENAPI_PLUGIN_FAILURE', 'getVHDInfo', 'CommandException', 'No such file or directory']],

Running this I see the VM pause as expected for a few minutes and then it just starts up again. VM is still running with no issues - it just did not move the VDI.

What is the resource with UUID 735fc2d7-f1f0-4cc6-9d35-xxxxxxxxec6c that it is trying to find? That UUID does not match the VDI. The VDI must be OK as the VM is still up and running with no errors.

As this is probably not an XOSTOR issue - should I raise a new topic for this?
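The UUID comparison above can be sketched in a few lines of Python. This is only an illustration: the helper name `uuid_in_opterr` and its regular expression are my own assumptions, not part of xe or the SM driver, and the UUIDs are the redacted ones from the output above.

```python
import re


def uuid_in_opterr(message):
    """Return the identifier named after 'Could not load' in an SR backend
    error message, or None if the message does not have that shape."""
    match = re.search(r"Could not load (\S+) because", message)
    return match.group(1) if match else None


# Error text and VDI UUID copied from the xe output above (redacted as posted).
ERROR = (
    "The VDI is not available [opterr=Could not load "
    "735fc2d7-f1f0-4cc6-9d35-xxxxxxxxec6c because: ['XENAPI_PLUGIN_FAILURE', "
    "'getVHDInfo', 'CommandException', 'No such file or directory']]"
)
MIGRATED_VDI = "a38f27e8-c6a0-49d3-9fd3-xxxxxxxx10e3"

failing = uuid_in_opterr(ERROR)
if failing == MIGRATED_VDI:
    print("the error refers to the VDI being migrated")
else:
    print("the error refers to a different VDI:", failing)
```

If the two values differ, as they do here, the failure is being raised for some other VDI on the destination SR rather than for the disk being moved.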

-

It's hard to tell. If you can migrate between non-XOSTOR SRs and see if you reproduce, then it's another issue. If it's only happening when using XOSTOR in the loop, then it's relevant here

-

@geoffbland I can't reproduce your problem, can you send me the SMlog of your hosts please?

-

@ronan-a said in XOSTOR hyperconvergence preview:

@geoffbland I can't reproduce your problem, can you send me the SMlog of your hosts please?

As requested,

    May 24 09:13:22 XCPNG01 SM: [18127] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0']
    May 24 09:13:23 XCPNG01 SM: [18127] FAILED in util.pread: (rc 2) stdout: '40960
    May 24 09:13:23 XCPNG01 SM: [18127] 2048840192
    May 24 09:13:23 XCPNG01 SM: [18127] query failed
    May 24 09:13:23 XCPNG01 SM: [18127] hidden: 0
    May 24 09:13:23 XCPNG01 SM: [18127] ', stderr: ''
    May 24 09:13:23 XCPNG01 SM: [18127] linstor-manager:get_vhd_info error: No such file or directory
    May 24 09:13:26 XCPNG01 SM: [18158] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0']
    May 24 09:13:26 XCPNG01 SM: [18158] FAILED in util.pread: (rc 2) stdout: '40960
    May 24 09:13:26 XCPNG01 SM: [18158] 2048840192
    May 24 09:13:26 XCPNG01 SM: [18158] query failed
    May 24 09:13:26 XCPNG01 SM: [18158] hidden: 0
    May 24 09:13:26 XCPNG01 SM: [18158] ', stderr: ''
    May 24 09:13:26 XCPNG01 SM: [18158] linstor-manager:get_vhd_info error: No such file or directory
    May 24 09:13:29 XCPNG01 SM: [18200] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0']
    May 24 09:13:29 XCPNG01 SM: [18200] FAILED in util.pread: (rc 2) stdout: '40960
    May 24 09:13:29 XCPNG01 SM: [18200] 2048840192
    May 24 09:13:29 XCPNG01 SM: [18200] query failed
    May 24 09:13:29 XCPNG01 SM: [18200] hidden: 0
    May 24 09:13:29 XCPNG01 SM: [18200] ', stderr: ''
    May 24 09:13:29 XCPNG01 SM: [18200] linstor-manager:get_vhd_info error: No such file or directory
    May 24 09:13:32 XCPNG01 SM: [18212] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0']
    May 24 09:13:32 XCPNG01 SM: [18212] FAILED in util.pread: (rc 2) stdout: '40960
    May 24 09:13:32 XCPNG01 SM: [18212] 2048840192
    May 24 09:13:32 XCPNG01 SM: [18212] query failed
    May 24 09:13:32 XCPNG01 SM: [18212] hidden: 0
    May 24 09:13:32 XCPNG01 SM: [18212] ', stderr: ''
    May 24 09:13:32 XCPNG01 SM: [18212] linstor-manager:get_vhd_info error: No such file or directory
    May 24 09:13:35 XCPNG01 SM: [18247] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0']
    May 24 09:13:35 XCPNG01 SM: [18247] FAILED in util.pread: (rc 2) stdout: '40960
    May 24 09:13:35 XCPNG01 SM: [18247] 2048840192
    May 24 09:13:35 XCPNG01 SM: [18247] query failed
    May 24 09:13:35 XCPNG01 SM: [18247] hidden: 0
    May 24 09:13:35 XCPNG01 SM: [18247] ', stderr: ''
    May 24 09:13:35 XCPNG01 SM: [18247] linstor-manager:get_vhd_info error: No such file or directory
    May 24 09:13:36 XCPNG01 SM: [18259] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0']
    May 24 09:13:36 XCPNG01 SM: [18259] FAILED in util.pread: (rc 2) stdout: '40960
    May 24 09:13:36 XCPNG01 SM: [18259] 2048840192
    May 24 09:13:36 XCPNG01 SM: [18259] query failed
    May 24 09:13:36 XCPNG01 SM: [18259] hidden: 0
    May 24 09:13:36 XCPNG01 SM: [18259] ', stderr: ''
    May 24 09:13:36 XCPNG01 SM: [18259] linstor-manager:get_vhd_info error: No such file or directory

-

I found one of the VMs I had been using to test XOSTOR was locked up this morning. I restarted it but it will not start up and gives an error about the VDI being missing.

    >xe vm-list name-label=test04
    uuid ( RO)           : 8ec952b4-7229-7a30-81b6-1564a58f6343
         name-label ( RW): test04
        power-state ( RO): halted

    >xe vm-disk-list vm=test04
    Disk 0 VBD:
    uuid ( RO)             : e3c465b8-17a4-d147-6383-527bd9341a16
        vm-name-label ( RO): test04
           userdevice ( RW): 0

    Disk 0 VDI:
    uuid ( RO)             : 735fc2d7-f1f0-4cc6-9d35-42a049d8ec6c
           name-label ( RW): test04_xostor01_vdi
        sr-name-label ( RO): XOSTOR01
         virtual-size ( RO): 42949672960

    >xe vm-start vm=test04
    Error code: SR_BACKEND_FAILURE_46
    Error parameters: , The VDI is not available [opterr=Could not load 735fc2d7-f1f0-4cc6-9d35-42a049d8ec6c because: ['XENAPI_PLUGIN_FAILURE', 'getVHDInfo', 'CommandException', 'No such file or directory']],

The logs for this are attached as file xostor issue 1.txt
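To see which DRBD device the failing `get_vhd_info` calls map to, a filter over SMlog along these lines may help. It is a minimal sketch, not a tested tool: the line layout (`<timestamp> <host> SM: [<pid>] <message>`) is inferred from the SMlog excerpt posted earlier in this thread, and the function name `failed_vhd_queries` is hypothetical.

```python
import re
from collections import Counter

# Line layout inferred from the posted excerpt (an assumption):
#   "<Mon DD HH:MM:SS> <host> SM: [<pid>] <message>"
LINE_RE = re.compile(
    r"^\w{3} +\d+ [\d:]{8} (?P<host>\S+) SM: \[(?P<pid>\d+)\] (?P<msg>.*)$"
)
DEVICE_RE = re.compile(r"'(/dev/drbd/by-res/[^']+)'")


def failed_vhd_queries(lines):
    """Count get_vhd_info errors per DRBD device path.

    A failure is attributed to the device named in the most recent
    vhd-util command logged by the same PID.
    """
    last_device = {}  # pid -> device path of that pid's latest vhd-util call
    failures = Counter()
    for line in lines:
        m = LINE_RE.match(line)
        if not m:
            continue
        pid, msg = m.group("pid"), m.group("msg")
        device = DEVICE_RE.search(msg)
        if "vhd-util" in msg and device:
            last_device[pid] = device.group(1)
        elif "get_vhd_info error" in msg and pid in last_device:
            failures[last_device[pid]] += 1
    return failures


# Two of the attempts from the excerpt, shortened to the relevant lines.
SAMPLE = """\
May 24 09:13:22 XCPNG01 SM: [18127] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0']
May 24 09:13:23 XCPNG01 SM: [18127] query failed
May 24 09:13:23 XCPNG01 SM: [18127] linstor-manager:get_vhd_info error: No such file or directory
May 24 09:13:26 XCPNG01 SM: [18158] ['/usr/bin/vhd-util', 'query', '--debug', '-vsfp', '-n', '/dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-xxxxxxxx58c0/0']
May 24 09:13:26 XCPNG01 SM: [18158] linstor-manager:get_vhd_info error: No such file or directory
"""

for device, count in failed_vhd_queries(SAMPLE.splitlines()).items():
    print(count, device)
```

On a host, the same function could be fed the lines of `/var/log/SMlog` instead of the sample string.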

-

@geoffbland Can you execute this command on the other hosts please?

    ls -l /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0

Also I don't have all the info in your previous log, can you send me the previous SMlog files? (Using private message if you want.)

-

@ronan-a said in XOSTOR hyperconvergence preview:

Can you execute this command on the other hosts please?

As requested

XCPNG01 - Current linstor master

    [10:59 XCPNG01 ~]# ls -l /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0
    lrwxrwxrwx 1 root root 17 May 22 19:24 /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0 -> ../../../drbd1004

XCPNG02

    [11:00 XCPNG02 ~]# ls -l /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0
    lrwxrwxrwx 1 root root 17 May 22 19:25 /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0 -> ../../../drbd1004

XCPNG03

    [07:31 XCPNG03 ~]# ls -l /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0
    lrwxrwxrwx 1 root root 17 May 22 19:24 /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0 -> ../../../drbd1004

XCPNG04

    [07:35 XCPNG04 ~]# ls -l /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0
    lrwxrwxrwx 1 root root 17 May 22 19:24 /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0 -> ../../../drbd1004

XCPNG05

    [10:49 XCPNG05 ~]# ls -l /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0
    lrwxrwxrwx 1 root root 17 May 22 19:24 /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0 -> ../../../drbd1004

-

It seems like xe may be getting mixed up between where a host is running and where the XOSTOR storage is held.

Apologies if I have misunderstood and done something wrong here - but I think this migration should have worked.

I created a new VM on one of my hosts, XCPNG05, using XOSTOR as the SR for its VDI. I can see that the linstor volumes are on hosts XCPNG01, XCPNG03 and XCPNG05.

XCPNG05 is an Intel server, XCPNG01 and XCPNG03 are AMD. The VM is running on XCPNG05.

Now when I try to migrate the VM's VDI from XOSTOR onto a local SR on the same host the VM is currently running on, I get a warning about incompatible CPUs.

To replicate the issue:

Create new VM test05 on XOSTOR.

VM is created on host XCPNG05.

    >xe vm-list name-label=test05
    uuid ( RO)           : d3f8c52d-be3c-3712-0ccc-a526dcc241a5
         name-label ( RW): test05
        power-state ( RO): running

    >xe vm-disk-list vm=test05
    Disk 0 VBD:
    uuid ( RO)             : a337cd1f-04cc-ce46-fbfb-d5d8e290dc03
        vm-name-label ( RO): test05
           userdevice ( RW): 0

    Disk 0 VDI:
    uuid ( RO)             : f856680c-c00d-44af-ba3f-16d9952ccb2f
           name-label ( RW): test05_vdi
        sr-name-label ( RO): XOSTOR01
         virtual-size ( RO): 34359738368

    >xe sr-list name-label=XOSTOR01
    uuid ( RO)                : cf896912-cd71-d2b2-488a-xxxxxxxx7c87
              name-label ( RW): XOSTOR01
        name-description ( RW):
                    host ( RO): <shared>
                    type ( RO): linstor
            content-type ( RO):

Migrate to local disk (SSD1) on the same host (XCPNG05) - this fails, even though the target is the host the VM is currently running on, due to an incompatible CPU.

    >xe sr-list name-label=XCPNG05SSD1
    uuid ( RO)                : c0851501-3a1b-c661-70b9-54373e0d9847
              name-label ( RW): XCPNG05SSD1
        name-description ( RW):
                    host ( RO): XCPNG05
                    type ( RO): lvm
            content-type ( RO): user

    >xe vdi-pool-migrate uuid=f856680c-c00d-44af-ba3f-16d9952ccb2f sr-uuid=c0851501-3a1b-c661-70b9-54373e0d9847
    The VM is incompatible with the CPU features of this host.
    vm: d3f8c52d-be3c-3712-0ccc-a526dcc241a5 (test05)
    host: 7bd62a77-71d6-4b51-9a86-850dd4ff4b60 (XCPNG05)
    reason: VM last booted on a host which had a CPU from a different vendor.

-

@geoffbland Thank you. OK, so the VDI is still present on all hosts.

You can try to check the status of the VHD the same way the SMAPI does, using:

    /usr/bin/vhd-util query --debug -vsfp -n /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0

If you have this problem on many hosts, I suspect a problem with DRBD, so there may be useful info in daemon.log and/or kern.log.

-

@ronan-a said in XOSTOR hyperconvergence preview:

You can try to check the status of the VHD like the smapi using:

    /usr/bin/vhd-util query --debug -vsfp -n /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0

useful info in daemon.log and/or kern.log.

This gives the following:

    [10:59 XCPNG01 ~]# /usr/bin/vhd-util query --debug -vsfp -n /dev/drbd/by-res/xcp-volume-75c11231-0fb8-4b40-9e2e-a0665bb758c0/0
    40960
    2061447680
    query failed
    hidden: 0

I will send logs by direct mail.
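For anyone reading along, here is a rough Python sketch of how I am reading that output. It rests on assumptions I have not verified against the vhd-util source: that `-v` prints the virtual size in MiB on the first line, that `-s` prints the physical size in bytes on the second, that the third line is the answer to the parent (`-p`) query, and that the fourth is the `hidden` field. The function name `parse_vhd_query` is made up for this example.

```python
def parse_vhd_query(output):
    """Interpret the four lines printed by `vhd-util query -vsfp`.

    Assumed layout (not confirmed against vhd-util itself):
      line 1: virtual size in MiB
      line 2: physical size in bytes
      line 3: parent path, or an error such as "query failed"
      line 4: "hidden: <0|1>"
    """
    lines = [line.strip() for line in output.strip().splitlines()]
    return {
        "virtual_bytes": int(lines[0]) * 1024 * 1024,
        "physical_bytes": int(lines[1]),
        "parent_line": lines[2],
        "hidden": int(lines[3].split(":", 1)[1]),
    }


# Output from XCPNG01 as posted above, one value per line.
OUTPUT = """\
40960
2061447680
query failed
hidden: 0
"""

info = parse_vhd_query(OUTPUT)
print(info["virtual_bytes"])  # 40960 MiB expressed in bytes
print(info["parent_line"])
```

Under those assumptions the size fields are readable and only the third line reports a failure; 40960 MiB is 42949672960 bytes, the same value as the virtual-size xe reports for the test04 VDI.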

-

@geoffbland Okay, so it's probably not related to the driver itself. I will take a look at the logs once I receive them.

-

Failure trying to revert a VM to a snapshot with XOSTOR.

Created a VM with main VDI on XOSTOR (24GB) and with 6 disks each also on XOSTOR (2GB each).

All is running OK.

Now create a snapshot of the VM - this takes quite a while but does eventually succeed.

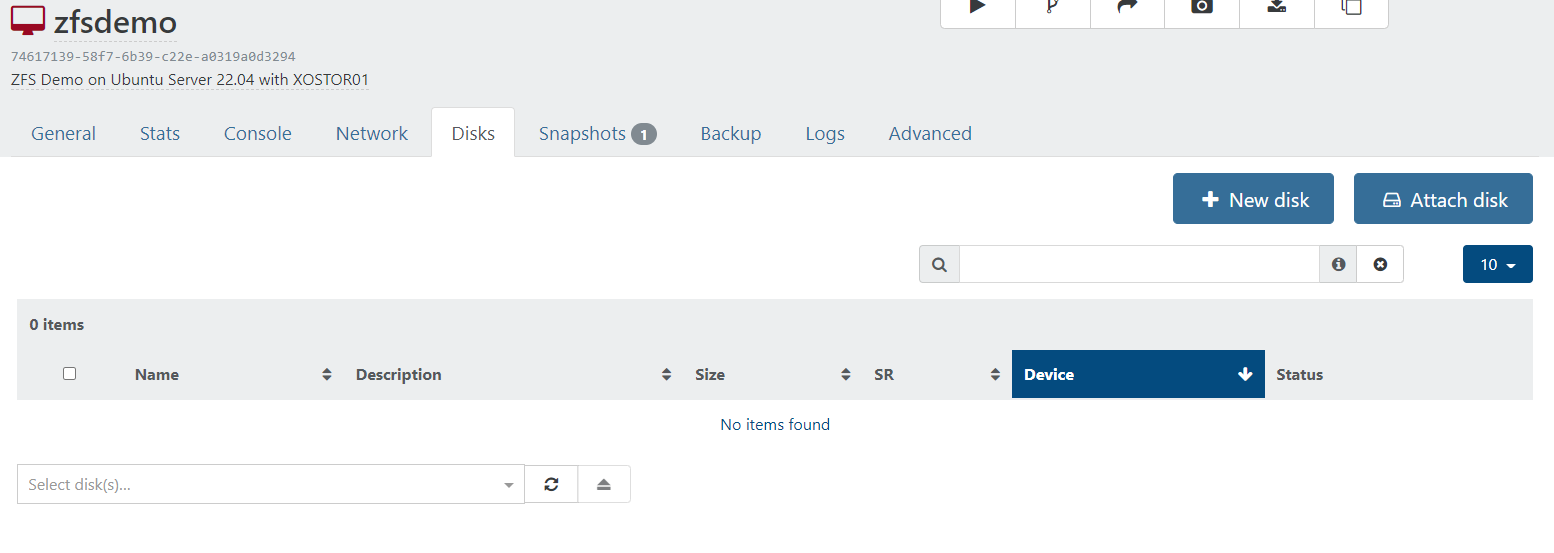

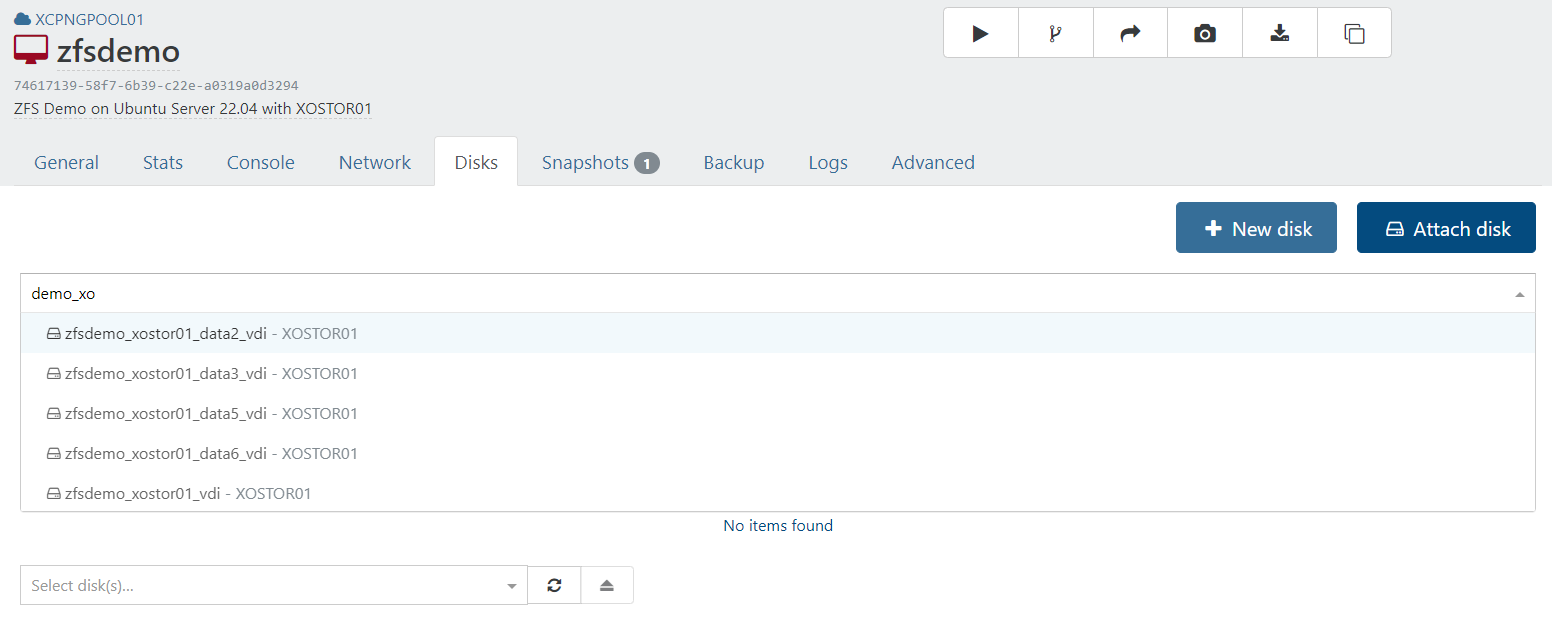

Now using XO (from sources) click the "Revert VM to this snapshot". This errors and the VM stops.

    vm.revert
    {
      "snapshot": "6032fc73-eb7f-cf64-2481-4346b7b57204"
    }
    {
      "code": "VM_REVERT_FAILED",
      "params": [
        "OpaqueRef:1439fd0f-4e66-44c9-99af-1f8536e59378",
        "OpaqueRef:5ad4c51e-473e-4ab0-877d-2d0dbdb90add"
      ],
      "task": {
        "uuid": "4804fefd-0037-d7dd-9a7c-769230728483",
        "name_label": "Async.VM.revert",
        "name_description": "",
        "allowed_operations": [],
        "current_operations": {},
        "created": "20220527T15:01:42Z",
        "finished": "20220527T15:01:46Z",
        "status": "failure",
        "resident_on": "OpaqueRef:a1e9a8f3-0a79-4824-b29f-d81b3246d190",
        "progress": 1,
        "type": "<none/>",
        "result": "",
        "error_info": [
          "VM_REVERT_FAILED",
          "OpaqueRef:1439fd0f-4e66-44c9-99af-1f8536e59378",
          "OpaqueRef:5ad4c51e-473e-4ab0-877d-2d0dbdb90add"
        ],
        "other_config": {},
        "subtask_of": "OpaqueRef:NULL",
        "subtasks": [],
        "backtrace": "(((process xapi)(filename ocaml/xapi/xapi_vm_snapshot.ml)(line 492))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 35))((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 131))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 35))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/xapi/rbac.ml)(line 231))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 103)))"
      },
      "message": "VM_REVERT_FAILED(OpaqueRef:1439fd0f-4e66-44c9-99af-1f8536e59378, OpaqueRef:5ad4c51e-473e-4ab0-877d-2d0dbdb90add)",
      "name": "XapiError",
      "stack": "XapiError: VM_REVERT_FAILED(OpaqueRef:1439fd0f-4e66-44c9-99af-1f8536e59378, OpaqueRef:5ad4c51e-473e-4ab0-877d-2d0dbdb90add)
        at Function.wrap (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/_XapiError.js:16:12)
        at _default (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/_getTaskResult.js:11:29)
        at Xapi._addRecordToCache (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:949:24)
        at forEach (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:983:14)
        at Array.forEach (<anonymous>)
        at Xapi._processEvents (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:973:12)
        at Xapi._watchEvents (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:1139:14)"
    }

Now viewing the VM with XO on the disks tab shows no attached disks - disk tab is blank.

But linstor appears to still have the disks and the snapshot disks too.

    ┊ XCPNG01 ┊ xcp-volume-142cb89f-2850-4ac8-a47c-10bb2cfc4692 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1010 ┊ /dev/drbd1010 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-142cb89f-2850-4ac8-a47c-10bb2cfc4692 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1010 ┊ /dev/drbd1010 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-142cb89f-2850-4ac8-a47c-10bb2cfc4692 ┊ DfltDisklessStorPool ┊ 0 ┊ 1010 ┊ /dev/drbd1010 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG04 ┊ xcp-volume-142cb89f-2850-4ac8-a47c-10bb2cfc4692 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1010 ┊ /dev/drbd1010 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-18fa145a-d36b-44bd-b1b5-af1e9424ea00 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1018 ┊ /dev/drbd1018 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-18fa145a-d36b-44bd-b1b5-af1e9424ea00 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1018 ┊ /dev/drbd1018 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-18fa145a-d36b-44bd-b1b5-af1e9424ea00 ┊ DfltDisklessStorPool ┊ 0 ┊ 1018 ┊ /dev/drbd1018 ┊           ┊ InUse  ┊ Diskless ┊
    ┊ XCPNG04 ┊ xcp-volume-18fa145a-d36b-44bd-b1b5-af1e9424ea00 ┊ DfltDisklessStorPool ┊ 0 ┊ 1018 ┊ /dev/drbd1018 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG05 ┊ xcp-volume-18fa145a-d36b-44bd-b1b5-af1e9424ea00 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1018 ┊ /dev/drbd1018 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-1a6c7272-f718-4c4d-a8b0-ca8419eab314 ┊ DfltDisklessStorPool ┊ 0 ┊ 1024 ┊ /dev/drbd1024 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG02 ┊ xcp-volume-1a6c7272-f718-4c4d-a8b0-ca8419eab314 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1024 ┊ /dev/drbd1024 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-1a6c7272-f718-4c4d-a8b0-ca8419eab314 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1024 ┊ /dev/drbd1024 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG04 ┊ xcp-volume-1a6c7272-f718-4c4d-a8b0-ca8419eab314 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1024 ┊ /dev/drbd1024 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG05 ┊ xcp-volume-1a6c7272-f718-4c4d-a8b0-ca8419eab314 ┊ DfltDisklessStorPool ┊ 0 ┊ 1024 ┊ /dev/drbd1024 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG01 ┊ xcp-volume-2cab6c2d-abf6-42c7-9094-d75351ed8ebb ┊ xcp-sr-linstor_group ┊ 0 ┊ 1016 ┊ /dev/drbd1016 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-2cab6c2d-abf6-42c7-9094-d75351ed8ebb ┊ xcp-sr-linstor_group ┊ 0 ┊ 1016 ┊ /dev/drbd1016 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-2cab6c2d-abf6-42c7-9094-d75351ed8ebb ┊ xcp-sr-linstor_group ┊ 0 ┊ 1016 ┊ /dev/drbd1016 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG04 ┊ xcp-volume-2cab6c2d-abf6-42c7-9094-d75351ed8ebb ┊ DfltDisklessStorPool ┊ 0 ┊ 1016 ┊ /dev/drbd1016 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG05 ┊ xcp-volume-2cab6c2d-abf6-42c7-9094-d75351ed8ebb ┊ DfltDisklessStorPool ┊ 0 ┊ 1016 ┊ /dev/drbd1016 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG01 ┊ xcp-volume-30bf014b-025d-4f3f-a068-f9a9bf34fab2 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1013 ┊ /dev/drbd1013 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-30bf014b-025d-4f3f-a068-f9a9bf34fab2 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1013 ┊ /dev/drbd1013 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-30bf014b-025d-4f3f-a068-f9a9bf34fab2 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1013 ┊ /dev/drbd1013 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-3bdb2b25-706c-4309-ab8f-df3190f57c43 ┊ DfltDisklessStorPool ┊ 0 ┊ 1021 ┊ /dev/drbd1021 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG02 ┊ xcp-volume-3bdb2b25-706c-4309-ab8f-df3190f57c43 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1021 ┊ /dev/drbd1021 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-3bdb2b25-706c-4309-ab8f-df3190f57c43 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1021 ┊ /dev/drbd1021 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG04 ┊ xcp-volume-3bdb2b25-706c-4309-ab8f-df3190f57c43 ┊ DfltDisklessStorPool ┊ 0 ┊ 1021 ┊ /dev/drbd1021 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG05 ┊ xcp-volume-3bdb2b25-706c-4309-ab8f-df3190f57c43 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1021 ┊ /dev/drbd1021 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-450f65f7-7fcc-4ffd-893e-761a2f6ac366 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1020 ┊ /dev/drbd1020 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-450f65f7-7fcc-4ffd-893e-761a2f6ac366 ┊ DfltDisklessStorPool ┊ 0 ┊ 1020 ┊ /dev/drbd1020 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG03 ┊ xcp-volume-450f65f7-7fcc-4ffd-893e-761a2f6ac366 ┊ DfltDisklessStorPool ┊ 0 ┊ 1020 ┊ /dev/drbd1020 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG04 ┊ xcp-volume-450f65f7-7fcc-4ffd-893e-761a2f6ac366 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1020 ┊ /dev/drbd1020 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG05 ┊ xcp-volume-450f65f7-7fcc-4ffd-893e-761a2f6ac366 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1020 ┊ /dev/drbd1020 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-466938db-11f1-4b59-8a90-ad08fa20e085 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1015 ┊ /dev/drbd1015 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-466938db-11f1-4b59-8a90-ad08fa20e085 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1015 ┊ /dev/drbd1015 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-466938db-11f1-4b59-8a90-ad08fa20e085 ┊ DfltDisklessStorPool ┊ 0 ┊ 1015 ┊ /dev/drbd1015 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG04 ┊ xcp-volume-466938db-11f1-4b59-8a90-ad08fa20e085 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1015 ┊ /dev/drbd1015 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG05 ┊ xcp-volume-466938db-11f1-4b59-8a90-ad08fa20e085 ┊ DfltDisklessStorPool ┊ 0 ┊ 1015 ┊ /dev/drbd1015 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG01 ┊ xcp-volume-470dcf6f-d916-403d-8258-e012c065b8ec ┊ xcp-sr-linstor_group ┊ 0 ┊ 1009 ┊ /dev/drbd1009 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-470dcf6f-d916-403d-8258-e012c065b8ec ┊ xcp-sr-linstor_group ┊ 0 ┊ 1009 ┊ /dev/drbd1009 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-470dcf6f-d916-403d-8258-e012c065b8ec ┊ DfltDisklessStorPool ┊ 0 ┊ 1009 ┊ /dev/drbd1009 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG04 ┊ xcp-volume-470dcf6f-d916-403d-8258-e012c065b8ec ┊ xcp-sr-linstor_group ┊ 0 ┊ 1009 ┊ /dev/drbd1009 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-551db5b5-7772-407a-9e8c-e549db3a0e5f ┊ xcp-sr-linstor_group ┊ 0 ┊ 1008 ┊ /dev/drbd1008 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-551db5b5-7772-407a-9e8c-e549db3a0e5f ┊ xcp-sr-linstor_group ┊ 0 ┊ 1008 ┊ /dev/drbd1008 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-551db5b5-7772-407a-9e8c-e549db3a0e5f ┊ DfltDisklessStorPool ┊ 0 ┊ 1008 ┊ /dev/drbd1008 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG04 ┊ xcp-volume-551db5b5-7772-407a-9e8c-e549db3a0e5f ┊ xcp-sr-linstor_group ┊ 0 ┊ 1008 ┊ /dev/drbd1008 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-699871db-2319-4ddd-9a44-0514d2e7aee3 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1025 ┊ /dev/drbd1025 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-699871db-2319-4ddd-9a44-0514d2e7aee3 ┊ DfltDisklessStorPool ┊ 0 ┊ 1025 ┊ /dev/drbd1025 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG03 ┊ xcp-volume-699871db-2319-4ddd-9a44-0514d2e7aee3 ┊ DfltDisklessStorPool ┊ 0 ┊ 1025 ┊ /dev/drbd1025 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG04 ┊ xcp-volume-699871db-2319-4ddd-9a44-0514d2e7aee3 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1025 ┊ /dev/drbd1025 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG05 ┊ xcp-volume-699871db-2319-4ddd-9a44-0514d2e7aee3 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1025 ┊ /dev/drbd1025 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-6c96822b-7ded-41dd-b4ff-690dc4795ee7 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1023 ┊ /dev/drbd1023 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-6c96822b-7ded-41dd-b4ff-690dc4795ee7 ┊ DfltDisklessStorPool ┊ 0 ┊ 1023 ┊ /dev/drbd1023 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG03 ┊ xcp-volume-6c96822b-7ded-41dd-b4ff-690dc4795ee7 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1023 ┊ /dev/drbd1023 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG04 ┊ xcp-volume-6c96822b-7ded-41dd-b4ff-690dc4795ee7 ┊ DfltDisklessStorPool ┊ 0 ┊ 1023 ┊ /dev/drbd1023 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG05 ┊ xcp-volume-6c96822b-7ded-41dd-b4ff-690dc4795ee7 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1023 ┊ /dev/drbd1023 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-70004559-a2c4-480f-b7bc-b26dcb95bfba ┊ xcp-sr-linstor_group ┊ 0 ┊ 1027 ┊ /dev/drbd1027 ┊ 24.06 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-70004559-a2c4-480f-b7bc-b26dcb95bfba ┊ xcp-sr-linstor_group ┊ 0 ┊ 1027 ┊ /dev/drbd1027 ┊ 24.06 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-70004559-a2c4-480f-b7bc-b26dcb95bfba ┊ DfltDisklessStorPool ┊ 0 ┊ 1027 ┊ /dev/drbd1027 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG04 ┊ xcp-volume-70004559-a2c4-480f-b7bc-b26dcb95bfba ┊ xcp-sr-linstor_group ┊ 0 ┊ 1027 ┊ /dev/drbd1027 ┊ 24.06 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG05 ┊ xcp-volume-70004559-a2c4-480f-b7bc-b26dcb95bfba ┊ DfltDisklessStorPool ┊ 0 ┊ 1027 ┊ /dev/drbd1027 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG01 ┊ xcp-volume-707a0158-ad31-4b4b-af2b-20d89e5717de ┊ DfltDisklessStorPool ┊ 0 ┊ 1026 ┊ /dev/drbd1026 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG02 ┊ xcp-volume-707a0158-ad31-4b4b-af2b-20d89e5717de ┊ xcp-sr-linstor_group ┊ 0 ┊ 1026 ┊ /dev/drbd1026 ┊ 24.06 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-707a0158-ad31-4b4b-af2b-20d89e5717de ┊ xcp-sr-linstor_group ┊ 0 ┊ 1026 ┊ /dev/drbd1026 ┊ 24.06 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG04 ┊ xcp-volume-707a0158-ad31-4b4b-af2b-20d89e5717de ┊ DfltDisklessStorPool ┊ 0 ┊ 1026 ┊ /dev/drbd1026 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG05 ┊ xcp-volume-707a0158-ad31-4b4b-af2b-20d89e5717de ┊ xcp-sr-linstor_group ┊ 0 ┊ 1026 ┊ /dev/drbd1026 ┊ 24.06 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-7aaa7a6e-98c4-4a57-a4f1-4fea0a36b17a ┊ xcp-sr-linstor_group ┊ 0 ┊ 1011 ┊ /dev/drbd1011 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-7aaa7a6e-98c4-4a57-a4f1-4fea0a36b17a ┊ xcp-sr-linstor_group ┊ 0 ┊ 1011 ┊ /dev/drbd1011 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG04 ┊ xcp-volume-7aaa7a6e-98c4-4a57-a4f1-4fea0a36b17a ┊ xcp-sr-linstor_group ┊ 0 ┊ 1011 ┊ /dev/drbd1011 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-9320c158-489e-49e7-92b8-85c93c9e3eeb ┊ xcp-sr-linstor_group ┊ 0 ┊ 1022 ┊ /dev/drbd1022 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-9320c158-489e-49e7-92b8-85c93c9e3eeb ┊ xcp-sr-linstor_group ┊ 0 ┊ 1022 ┊ /dev/drbd1022 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-9320c158-489e-49e7-92b8-85c93c9e3eeb ┊ DfltDisklessStorPool ┊ 0 ┊ 1022 ┊ /dev/drbd1022 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG04 ┊ xcp-volume-9320c158-489e-49e7-92b8-85c93c9e3eeb ┊ xcp-sr-linstor_group ┊ 0 ┊ 1022 ┊ /dev/drbd1022 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG05 ┊ xcp-volume-9320c158-489e-49e7-92b8-85c93c9e3eeb ┊ DfltDisklessStorPool ┊ 0 ┊ 1022 ┊ /dev/drbd1022 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG02 ┊ xcp-volume-b341848b-01d1-4019-a62f-85c6108a53e3 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1006 ┊ /dev/drbd1006 ┊ 24.06 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-b341848b-01d1-4019-a62f-85c6108a53e3 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1006 ┊ /dev/drbd1006 ┊ 24.06 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG05 ┊ xcp-volume-b341848b-01d1-4019-a62f-85c6108a53e3 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1006 ┊ /dev/drbd1006 ┊ 24.06 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-bccefe12-9ff5-4317-b05c-515cb44a5710 ┊ DfltDisklessStorPool ┊ 0 ┊ 1014 ┊ /dev/drbd1014 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG02 ┊ xcp-volume-bccefe12-9ff5-4317-b05c-515cb44a5710 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1014 ┊ /dev/drbd1014 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-bccefe12-9ff5-4317-b05c-515cb44a5710 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1014 ┊ /dev/drbd1014 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG04 ┊ xcp-volume-bccefe12-9ff5-4317-b05c-515cb44a5710 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1014 ┊ /dev/drbd1014 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG05 ┊ xcp-volume-bccefe12-9ff5-4317-b05c-515cb44a5710 ┊ DfltDisklessStorPool ┊ 0 ┊ 1014 ┊ /dev/drbd1014 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG01 ┊ xcp-volume-cdc051ae-bc39-4012-9ce0-6e4f855a5063 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1012 ┊ /dev/drbd1012 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG02 ┊ xcp-volume-cdc051ae-bc39-4012-9ce0-6e4f855a5063 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1012 ┊ /dev/drbd1012 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-cdc051ae-bc39-4012-9ce0-6e4f855a5063 ┊ DfltDisklessStorPool ┊ 0 ┊ 1012 ┊ /dev/drbd1012 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG04 ┊ xcp-volume-cdc051ae-bc39-4012-9ce0-6e4f855a5063 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1012 ┊ /dev/drbd1012 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG01 ┊ xcp-volume-d5a744ec-d1a1-4116-a576-38608b9dd790 ┊ DfltDisklessStorPool ┊ 0 ┊ 1019 ┊ /dev/drbd1019 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG02 ┊ xcp-volume-d5a744ec-d1a1-4116-a576-38608b9dd790 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1019 ┊ /dev/drbd1019 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-d5a744ec-d1a1-4116-a576-38608b9dd790 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1019 ┊ /dev/drbd1019 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG04 ┊ xcp-volume-d5a744ec-d1a1-4116-a576-38608b9dd790 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1019 ┊ /dev/drbd1019 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG05 ┊ xcp-volume-d5a744ec-d1a1-4116-a576-38608b9dd790 ┊ DfltDisklessStorPool ┊ 0 ┊ 1019 ┊ /dev/drbd1019 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG01 ┊ xcp-volume-f9cf9143-829d-4246-9051-9102f2c4709c ┊ DfltDisklessStorPool ┊ 0 ┊ 1017 ┊ /dev/drbd1017 ┊           ┊ Unused ┊ Diskless ┊
    ┊ XCPNG02 ┊ xcp-volume-f9cf9143-829d-4246-9051-9102f2c4709c ┊ xcp-sr-linstor_group ┊ 0 ┊ 1017 ┊ /dev/drbd1017 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG03 ┊ xcp-volume-f9cf9143-829d-4246-9051-9102f2c4709c ┊ xcp-sr-linstor_group ┊ 0 ┊ 1017 ┊ /dev/drbd1017 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG04 ┊ xcp-volume-f9cf9143-829d-4246-9051-9102f2c4709c ┊ xcp-sr-linstor_group ┊ 0 ┊ 1017 ┊ /dev/drbd1017 ┊  2.02 GiB ┊ Unused ┊ UpToDate ┊
    ┊ XCPNG05 ┊ xcp-volume-f9cf9143-829d-4246-9051-9102f2c4709c ┊ DfltDisklessStorPool ┊ 0 ┊ 1017 ┊ /dev/drbd1017 ┊           ┊ Unused ┊ Diskless ┊

From the VM disks tab if I try to Attach the disks, two of the disks created on XOSTOR are missing (data1 and data4).
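A listing this long is hard to check by eye, so here is a small Python sketch that groups the rows by resource and reports which nodes hold a real (diskful) copy and which node, if any, has the volume InUse. It assumes one `┊`-delimited row per line with the nine columns seen in the linstor listing above; the function names and the `SAMPLE` excerpt (two resources copied from that listing) are my own.

```python
from collections import defaultdict


def parse_rows(text):
    """Yield (node, resource, in_use, state) for each 9-column row."""
    for line in text.splitlines():
        cells = [c.strip() for c in line.strip().strip("┊").split("┊")]
        if len(cells) != 9:
            continue  # skip borders, blank lines and anything unexpected
        node, resource, _pool, _vol, _minor, _dev, _alloc, in_use, state = cells
        yield node, resource, in_use, state


def summarize(text):
    """Map each resource to its diskful nodes and the nodes using it."""
    summary = defaultdict(lambda: {"diskful": [], "in_use": []})
    for node, resource, in_use, state in parse_rows(text):
        if state != "Diskless":
            summary[resource]["diskful"].append(node)
        if in_use == "InUse":
            summary[resource]["in_use"].append(node)
    return dict(summary)


SAMPLE = """\
┊ XCPNG01 ┊ xcp-volume-18fa145a-d36b-44bd-b1b5-af1e9424ea00 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1018 ┊ /dev/drbd1018 ┊ 2.02 GiB ┊ Unused ┊ UpToDate ┊
┊ XCPNG02 ┊ xcp-volume-18fa145a-d36b-44bd-b1b5-af1e9424ea00 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1018 ┊ /dev/drbd1018 ┊ 2.02 GiB ┊ Unused ┊ UpToDate ┊
┊ XCPNG03 ┊ xcp-volume-18fa145a-d36b-44bd-b1b5-af1e9424ea00 ┊ DfltDisklessStorPool ┊ 0 ┊ 1018 ┊ /dev/drbd1018 ┊ ┊ InUse ┊ Diskless ┊
┊ XCPNG04 ┊ xcp-volume-18fa145a-d36b-44bd-b1b5-af1e9424ea00 ┊ DfltDisklessStorPool ┊ 0 ┊ 1018 ┊ /dev/drbd1018 ┊ ┊ Unused ┊ Diskless ┊
┊ XCPNG05 ┊ xcp-volume-18fa145a-d36b-44bd-b1b5-af1e9424ea00 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1018 ┊ /dev/drbd1018 ┊ 2.02 GiB ┊ Unused ┊ UpToDate ┊
┊ XCPNG01 ┊ xcp-volume-30bf014b-025d-4f3f-a068-f9a9bf34fab2 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1013 ┊ /dev/drbd1013 ┊ 2.02 GiB ┊ Unused ┊ UpToDate ┊
┊ XCPNG02 ┊ xcp-volume-30bf014b-025d-4f3f-a068-f9a9bf34fab2 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1013 ┊ /dev/drbd1013 ┊ 2.02 GiB ┊ Unused ┊ UpToDate ┊
┊ XCPNG03 ┊ xcp-volume-30bf014b-025d-4f3f-a068-f9a9bf34fab2 ┊ xcp-sr-linstor_group ┊ 0 ┊ 1013 ┊ /dev/drbd1013 ┊ 2.02 GiB ┊ Unused ┊ UpToDate ┊
"""

for resource, info in summarize(SAMPLE).items():
    print(resource, "diskful on", info["diskful"], "in use on", info["in_use"])
```

Run over the whole listing, this makes it quicker to spot a resource that still has a node marked InUse after the VM has stopped.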

Finally if I go to storage and bring up the XOSTOR storage and then press "Rescan all disks" I get this error:

    sr.scan
    {
      "id": "cf896912-cd71-d2b2-488a-5792b7147c87"
    }
    {
      "code": "SR_BACKEND_FAILURE_46",
      "params": [
        "",
        "The VDI is not available [opterr=Could not load 735fc2d7-f1f0-4cc6-9d35-42a049d8ec6c because: ['XENAPI_PLUGIN_FAILURE', 'getVHDInfo', 'CommandException', 'No such file or directory']]",
        ""
      ],
      "task": {
        "uuid": "4dcac885-dfaa-784a-eb2d-02335efde0fb",
        "name_label": "Async.SR.scan",
        "name_description": "",
        "allowed_operations": [],
        "current_operations": {},
        "created": "20220527T16:27:36Z",
        "finished": "20220527T16:27:50Z",
        "status": "failure",
        "resident_on": "OpaqueRef:a1e9a8f3-0a79-4824-b29f-d81b3246d190",
        "progress": 1,
        "type": "<none/>",
        "result": "",
        "error_info": [
          "SR_BACKEND_FAILURE_46",
          "",
          "The VDI is not available [opterr=Could not load 735fc2d7-f1f0-4cc6-9d35-42a049d8ec6c because: ['XENAPI_PLUGIN_FAILURE', 'getVHDInfo', 'CommandException', 'No such file or directory']]",
          ""
        ],
        "other_config": {},
        "subtask_of": "OpaqueRef:NULL",
        "subtasks": [],
        "backtrace": "(((process xapi)(filename lib/backtrace.ml)(line 210))((process xapi)(filename ocaml/xapi/storage_access.ml)(line 32))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 35))((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 128))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/xapi/rbac.ml)(line 231))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 103)))"
      },
      "message": "SR_BACKEND_FAILURE_46(, The VDI is not available [opterr=Could not load 735fc2d7-f1f0-4cc6-9d35-42a049d8ec6c because: ['XENAPI_PLUGIN_FAILURE', 'getVHDInfo', 'CommandException', 'No such file or directory']], )",
      "name": "XapiError",
      "stack": "XapiError: SR_BACKEND_FAILURE_46(, The VDI is not available [opterr=Could not load 735fc2d7-f1f0-4cc6-9d35-42a049d8ec6c because: ['XENAPI_PLUGIN_FAILURE', 'getVHDInfo', 'CommandException', 'No such file or directory']], )
        at Function.wrap (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/_XapiError.js:16:12)
        at _default (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/_getTaskResult.js:11:29)
        at Xapi._addRecordToCache (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:949:24)
        at forEach (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:983:14)
        at Array.forEach (<anonymous>)
        at Xapi._processEvents (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:973:12)
        at Xapi._watchEvents (/opt/xo/xo-builds/xen-orchestra-202204291839/packages/xen-api/src/index.js:1139:14)"
    }

-

@ronan-a said in XOSTOR hyperconvergence preview:

Okay, so it's probably not related to the driver itself. I will take a look at the logs once I receive them.

Did you get a chance to look at the logs I sent?

-

@geoffbland So, I didn't notice any useful info other than:

    FIXME drbd_a_xcp-volu[24302] op clear, bitmap locked for 'set_n_write sync_handshake' by drbd_r_xcp-volu[24231]
    ...
    FIXME drbd_a_xcp-volu[24328] op clear, bitmap locked for 'demote' by drbd_w_xcp-volu[24188]

Like I said in my e-mail, maybe there are more details in another log file. I hope.
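If it helps when going through the other log files, messages of this kind can be pulled out with a short Python filter. This is only a sketch: the regular expression is modelled on the two FIXME lines quoted in this post, and the field names (`waiter`, `holder`, `reason`) are labels I chose, not DRBD terminology.

```python
import re
from collections import Counter

# Pattern modelled on the two FIXME lines quoted above (an assumption about
# how other occurrences in kern.log would look).
FIXME_RE = re.compile(
    r"FIXME (?P<waiter>[^\[\s]+)\[(?P<waiter_pid>\d+)\] op (?P<op>\w+), "
    r"bitmap locked for '(?P<reason>[^']+)' "
    r"by (?P<holder>[^\[\s]+)\[(?P<holder_pid>\d+)\]"
)


def bitmap_lock_events(lines):
    """Return one dict per 'bitmap locked' FIXME message found in lines."""
    events = []
    for line in lines:
        m = FIXME_RE.search(line)
        if m:
            events.append(m.groupdict())
    return events


SAMPLE = [
    "FIXME drbd_a_xcp-volu[24302] op clear, bitmap locked for "
    "'set_n_write sync_handshake' by drbd_r_xcp-volu[24231]",
    "FIXME drbd_a_xcp-volu[24328] op clear, bitmap locked for "
    "'demote' by drbd_w_xcp-volu[24188]",
]

events = bitmap_lock_events(SAMPLE)
reasons = Counter(e["reason"] for e in events)
for reason, count in reasons.items():
    print(count, reason)
```

Counting by reason gives a quick view of whether the lock is mostly held during sync handshakes or during demotion.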

-

@ronan-a said in XOSTOR hyperconvergence preview:

Like I said in my e-mail, maybe there are more details in another log file. I hope.

In the end I realised I was trying to "use" XOSTOR whilst testing rather than properly test it. So I decided to rip it all down, start again and retest, this time properly recording each step so any issues can be replicated. I will let you know how this goes.

-

@ronan-a Could you please take a look at this issue I raised elsewhere on the forums?

I am currently unable to create new VMs, getting a `No such Tapdisk` error. Checking down the stack trace, it seems to be coming from a `get()` call in `/opt/xensource/sm/blktap2.py`, and this code seems to have been changed in an XOSTOR release from 24th May.

-

@geoffbland Hi, I was away last week, I will take a look.

-

This is a supercool and amazing thing. Coming from Proxmox with Ceph I really feel this was a missing piece. I want to migrate my whole homelab to this!

I've been playing around with it a fair bit, and it works well, but when it came to enabling HA I ran into trouble. Is XOSTOR not a valid shared storage target to enable HA on?

Having multiple shared storages (NFS/CIFS etc) in production is a given to put backups and whatnot on, but I thought it was weird that I couldn't use XOSTOR storage to enable HA.

-

@yrrips said in XOSTOR hyperconvergence preview:

when it came to enabling HA I ran into trouble. Is XOSTOR not a valid shared storage target to enable HA on?

I'm also really hoping that XOSTOR works well and also feel like this is something XCP-NG really needs. I've tried other distributed storage solutions, notably GlusterFS but never found anything that really works 100% when outages occur.

Note I plan to use XOSTOR just for VM disks and any data they use - not for the HA share and not for backups. My logic is that:

- backups should not be anywhere near XCP-NG and should be isolated in both software and hardware - and hopefully location-wise too.

- HA share needs to survive an issue where XCP-NG HA fails; if XCP-NG quorum is not working then XOSTOR (Linstor) quorum may be affected in a similar way - so I keep my HA share on a reliable NFS share. Note that in testing I found that if the HA share is unavailable for a while, XCP-NG stays running OK (just don't make any configuration changes until the HA share is back).
