VHD Check Error

-

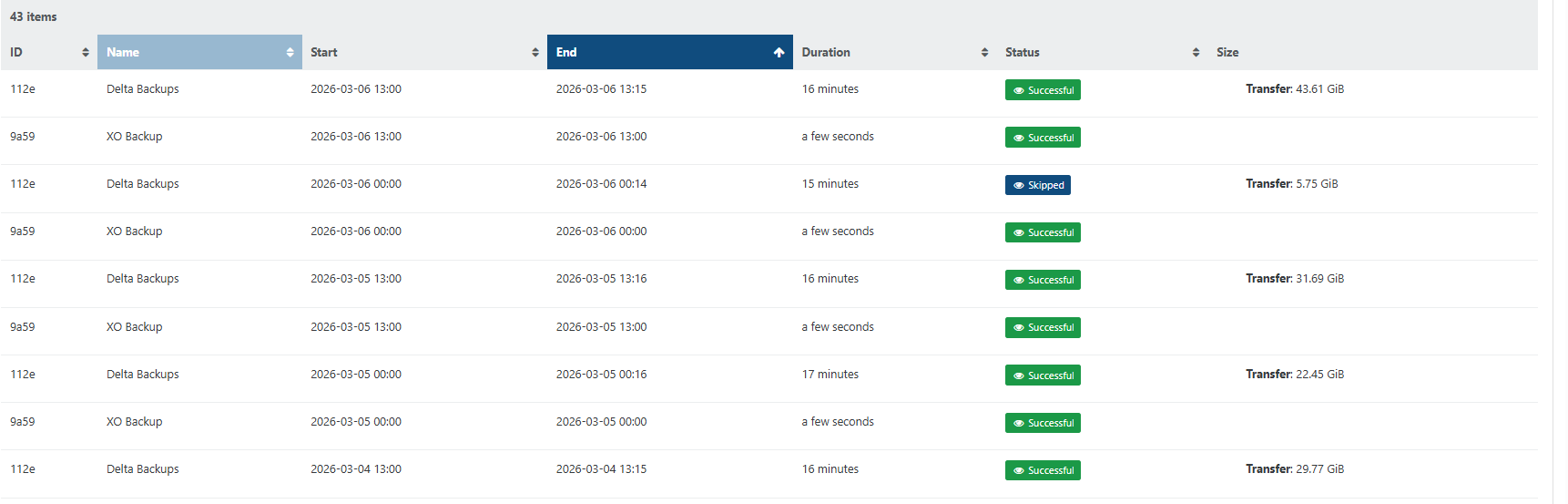

Below are the logs from the most recent backups in my home lab. The job before these completed with no errors.

"data": { "type": "VM", "id": "fb72a8d7-a039-849f-b547-24fc56f056ba", "name_label": "Work PC" }, "id": "1772820551246", "message": "backup VM", "start": 1772820551246, "status": "success", "tasks": [ { "id": "1772820551378", "message": "clean-vm", "start": 1772820551378, "status": "success", "warnings": [ { "data": { "path": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T180818Z.vhd", "error": { "parent": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260304T180919Z.vhd", "child1": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T050936Z.vhd", "child2": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T180818Z.vhd" } }, "message": "VHD check error" } ], "end": 1772820551595, "result": { "merge": false } }, { "id": "1772820552011", "message": "snapshot", "start": 1772820552011, "status": "success", "end": 1772820553464, "result": "1028f3b3-d45b-326c-0c0d-96a452d9ef1c" }, { "id": "1772820943456", "message": "health check", "start": 1772820943456, "status": "success", "infos": [ { "message": "This VM doesn't match the health check's tags for this schedule" } ], "end": 1772820943457 }, { "data": { "id": "a5e54e04-d7e4-48cb-bafc-b2f306d39679", "isFull": false, "type": "remote" }, "id": "1772820553464:0", "message": "export", "start": 1772820553464, "status": "success", "tasks": [ { "id": "1772820556813", "message": "transfer", "start": 1772820556813, "status": "success", "end": 1772820942395, "result": { "size": 30651973632 } }, { "id": "1772820943468", "message": "clean-vm", "start": 1772820943468, "status": "success", "warnings": [ { "data": { "path": 
"/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T050936Z.vhd", "error": { "parent": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260304T180919Z.vhd", "child1": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T180818Z.vhd", "child2": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T050936Z.vhd" } }, "message": "VHD check error" }, { "data": { "backup": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/20260305T050936Z.json", "missingVhds": [ "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T050936Z.vhd" ] }, "message": "some VHDs linked to the backup are missing" } ], "end": 1772820944285, "result": { "merge": true } } ], "end": 1772820944299 } ], "end": 1772820944299 },

Previous backup job, marked as Skipped:

{ "data": { "type": "VM", "id": "fb72a8d7-a039-849f-b547-24fc56f056ba", "name_label": "Work PC" }, "id": "1772773302800", "message": "backup VM", "start": 1772773302800, "status": "skipped", "tasks": [ { "id": "1772773302805", "message": "clean-vm", "start": 1772773302805, "status": "success", "warnings": [ { "data": { "path": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T180818Z.vhd", "error": { "parent": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260304T180919Z.vhd", "child1": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T050936Z.vhd", "child2": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T180818Z.vhd" } }, "message": "VHD check error" } ], "end": 1772773303762, "result": { "merge": false } }, { "id": "1772773305019", "message": "snapshot", "start": 1772773305019, "status": "skipped", "end": 1772773305121, "result": { "message": "unhealthy VDI chain", "name": "Error", "stack": "Error: unhealthy VDI chain\n at Xapi._assertHealthyVdiChain (file:///opt/xen-orchestra/@xen-orchestra/xapi/vm.mjs:150:15)\n at process.processTicksAndRejections (node:internal/process/task_queues:95:5)\n at async Xapi.assertHealthyVdiChains (file:///opt/xen-orchestra/@xen-orchestra/xapi/vm.mjs:214:7)\n at async file:///opt/xen-orchestra/@xen-orchestra/backups/_runners/_vmRunners/_AbstractXapi.mjs:184:11" } }, { "id": "1772773305238", "message": "clean-vm", "start": 1772773305238, "status": "success", "warnings": [ { "data": { "path": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T180818Z.vhd", "error": { "parent": 
"/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260304T180919Z.vhd", "child1": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T050936Z.vhd", "child2": "/xo-vm-backups/fb72a8d7-a039-849f-b547-24fc56f056ba/vdis/ec47112e-6518-4c2c-a59d-e6700d6f4923/468c876b-0054-41e2-840b-631d3c665f5d/20260305T180818Z.vhd" } }, "message": "VHD check error" } ], "end": 1772773307297, "result": { "merge": false } } ], "end": 1772773307301, "result": { "message": "unhealthy VDI chain", "name": "Error", "stack": "Error: unhealthy VDI chain\n at Xapi._assertHealthyVdiChain (file:///opt/xen-orchestra/@xen-orchestra/xapi/vm.mjs:150:15)\n at process.processTicksAndRejections (node:internal/process/task_queues:95:5)\n at async Xapi.assertHealthyVdiChains (file:///opt/xen-orchestra/@xen-orchestra/xapi/vm.mjs:214:7)\n at async file:///opt/xen-orchestra/@xen-orchestra/backups/_runners/_vmRunners/_AbstractXapi.mjs:184:11" } }
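Logs like the ones above are hard to read as a single line. The following is a minimal sketch, not an official Xen Orchestra tool, that walks the nested `tasks` of one log entry and lists every `VHD check error` warning with its `parent`, `child1` and `child2` fields. The helper names (`iter_tasks`, `vhd_check_errors`) are made up for illustration, the sample paths are shortened, and the reading that both children point at the same parent is an assumption based only on the field names.

```python
from typing import Any, Iterator


def iter_tasks(task: dict[str, Any]) -> Iterator[dict[str, Any]]:
    """Yield a task and all of its nested sub-tasks, depth first."""
    yield task
    for sub in task.get("tasks", []):
        yield from iter_tasks(sub)


def vhd_check_errors(log: dict[str, Any]) -> list[dict[str, str]]:
    """Collect every 'VHD check error' warning found anywhere in the log."""
    found = []
    for task in iter_tasks(log):
        for warning in task.get("warnings", []):
            if warning.get("message") != "VHD check error":
                continue
            data = warning.get("data", {})
            error = data.get("error", {})
            found.append({
                "task": task.get("message", ""),
                "path": data.get("path", ""),
                "parent": error.get("parent", ""),
                "child1": error.get("child1", ""),
                "child2": error.get("child2", ""),
            })
    return found


# Trimmed sample shaped like the skipped job above (paths shortened).
sample = {
    "message": "backup VM",
    "status": "skipped",
    "tasks": [
        {
            "message": "clean-vm",
            "status": "success",
            "warnings": [
                {
                    "data": {
                        "path": "vdis/20260305T180818Z.vhd",
                        "error": {
                            "parent": "vdis/20260304T180919Z.vhd",
                            "child1": "vdis/20260305T050936Z.vhd",
                            "child2": "vdis/20260305T180818Z.vhd",
                        },
                    },
                    "message": "VHD check error",
                }
            ],
        },
        {"message": "snapshot", "status": "skipped"},
    ],
}

errors = vhd_check_errors(sample)
for e in errors:
    # Assumed meaning: two VHDs in the chain name the same parent.
    print(f"{e['task']}: {e['child1']} and {e['child2']} -> {e['parent']}")
```

To run it against a real log, load the downloaded file with `json.load` and pass each "backup VM" entry to `vhd_check_errors`.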

-

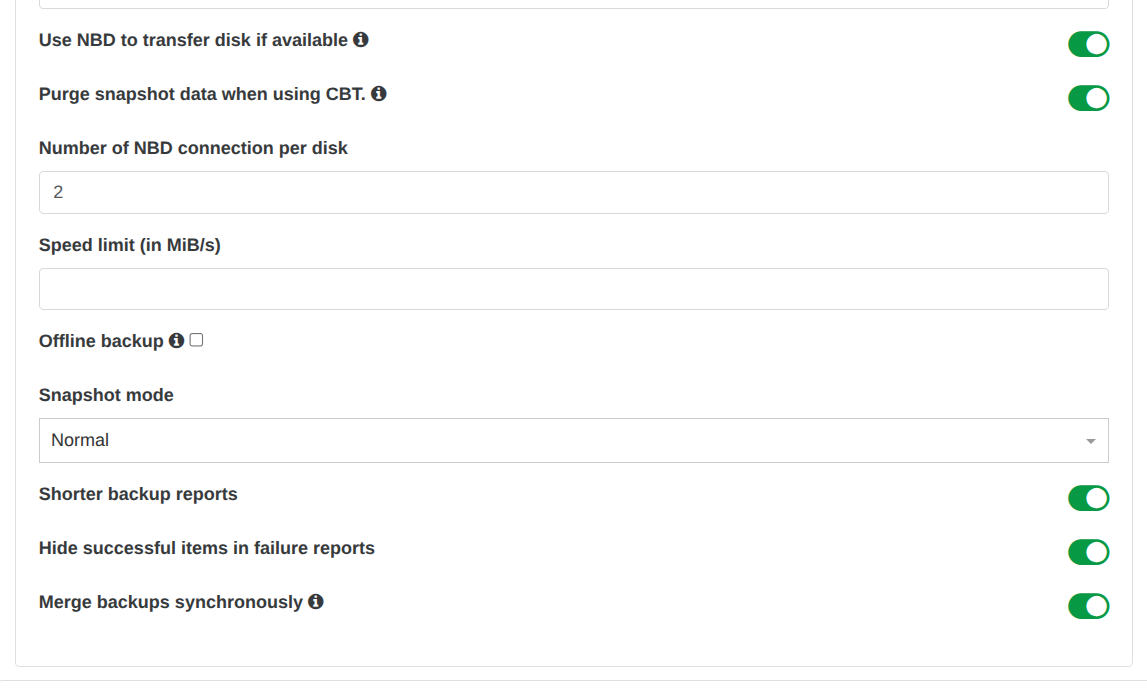

I looked into the backup job and found that I had forgotten to enable a few settings when I recreated it.

After re-enabling those settings I reran the backup job. The VM in question did a full backup, and everything passed.

{ "data": { "type": "VM", "id": "fb72a8d7-a039-849f-b547-24fc56f056ba", "name_label": "Work PC" }, "id": "1772833993844", "message": "backup VM", "start": 1772833993844, "status": "success", "tasks": [ { "id": "1772833993868", "message": "clean-vm", "start": 1772833993868, "status": "success", "end": 1772833994233, "result": { "merge": false } }, { "id": "1772833994652", "message": "snapshot", "start": 1772833994652, "status": "success", "end": 1772833997235, "result": "16f5fe19-207a-4d89-017c-3f9405d22231" }, { "id": "1772834490355:0", "message": "health check", "start": 1772834490355, "status": "success", "infos": [ { "message": "This VM doesn't match the health check's tags for this schedule" } ], "end": 1772834490356 }, { "data": { "id": "a5e54e04-d7e4-48cb-bafc-b2f306d39679", "isFull": true, "type": "remote" }, "id": "1772833997235:0", "message": "export", "start": 1772833997235, "status": "success", "tasks": [ { "id": "1772834004185", "message": "transfer", "start": 1772834004185, "status": "success", "end": 1772834489032, "result": { "size": 119502012416 } }, { "id": "1772834490368", "message": "clean-vm", "start": 1772834490368, "status": "success", "end": 1772834490475, "result": { "merge": false } } ], "end": 1772834490476 } ], "infos": [ { "message": "will delete snapshot data" }, { "data": { "vdiRef": "OpaqueRef:31692cb3-7c43-de83-2cc8-f2e39a0105c8" }, "message": "Snapshot data has been deleted" } ], "end": 1772834490476 }

-

Not sure if this is related, but now all my VMs are falling back to full backups.

Attached are the log of the first backup in which all VMs fell back to a full backup and the most recent backup log. All backups in between were full backups.

2026-03-07T05_00_00.117Z - backup NG.txt

2026-03-10T04_00_03.845Z - backup NG.txt

Backup log from before the VMs started falling back to full:

2026-03-06T21_42_15.233Z - backup NG.txt

-

I forced a full backup and then ran another delta backup, and the delta backups are still falling back to full backups.
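For anyone comparing several of these logs, the `isFull` flag on the `export` task shows whether a run was a delta or a full. Here is a rough sketch, assuming the log layout seen in the excerpts earlier in this thread, that reports the mode and transferred size per VM entry. The function names are hypothetical, and the two samples are trimmed copies of the "Work PC" entries posted above.

```python
from typing import Any, Iterator


def iter_tasks(task: dict[str, Any]) -> Iterator[dict[str, Any]]:
    """Yield a task and all of its nested sub-tasks, depth first."""
    yield task
    for sub in task.get("tasks", []):
        yield from iter_tasks(sub)


def export_summary(vm_task: dict[str, Any]) -> list[tuple[str, str, int]]:
    """Return (VM name, 'full' or 'delta', bytes transferred) per export task."""
    name = vm_task.get("data", {}).get("name_label", "?")
    rows = []
    for task in iter_tasks(vm_task):
        data = task.get("data", {})
        if task.get("message") != "export" or "isFull" not in data:
            continue
        # Sum the sizes of the 'transfer' sub-tasks of this export.
        size = sum(
            sub.get("result", {}).get("size", 0)
            for sub in task.get("tasks", [])
            if sub.get("message") == "transfer"
        )
        rows.append((name, "full" if data["isFull"] else "delta", size))
    return rows


# Trimmed copies of the two successful runs posted earlier in the thread.
delta_run = {
    "data": {"type": "VM", "name_label": "Work PC"},
    "message": "backup VM",
    "tasks": [
        {"message": "snapshot", "status": "success"},
        {
            "data": {"isFull": False, "type": "remote"},
            "message": "export",
            "tasks": [{"message": "transfer", "result": {"size": 30651973632}}],
        },
    ],
}
full_run = {
    "data": {"type": "VM", "name_label": "Work PC"},
    "message": "backup VM",
    "tasks": [
        {
            "data": {"isFull": True, "type": "remote"},
            "message": "export",
            "tasks": [{"message": "transfer", "result": {"size": 119502012416}}],
        },
    ],
}

rows = export_summary(delta_run) + export_summary(full_run)
for name, mode, size in rows:
    print(f"{name}: {mode}, {size / 2**30:.1f} GiB transferred")
```

Running this over each "backup VM" entry of the attached log files should make it easy to see on which run the VMs switched from delta to full.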

-

@acebmxer According to your screenshots it looks like you are using CBT for your backups.

I had the same issue (backup fell back to a full) back when I was using CBT.

After disabling CBT on all backup jobs and virtual disks, and therefore using the classic snapshot approach, everything works fine. No more fallbacks to full backups.

-

@MajorP93 I did see that too.

But in a THICK lvmoiscsi environment it is hard to keep full snapshots around... CBT is quite a savior, but you get these quirks (backup falling back to full) from time to time. It seems random. The only time you get it 100% of the time is when you have a DR job: the next delta of that VM WILL BE a full...

-

I am in the process of moving a VM to a new SR to see if that makes a difference. I can't recall 100%, but I am fairly sure I had CBT enabled before. I just double-checked the backups at work, and CBT is enabled there with no issues.

Once the migration is complete I will try again without CBT.

-

@Pilow Yeah, true.

Also, during my tests with CBT-enabled backups, live migrations triggered the fallback to a full from time to time as well (the VM residing on a different host during the backup run compared to the last backup run).

But I did those tests ~6 months ago, so maybe some fixes have been applied in that regard.

You are right: in a thick-provisioned SR / block-based scenario, taking a snapshot would allocate the same size as the base VHD again just for the snapshot... not really practical.

I really hope that CBT receives some more love as we plan to move our storage to a vSAN cluster and intend to use iSCSI instead of NFS by that time so using CBT would also be our best bet then...

CBT also reduces the load on the SR (as in I/O), as it removes the need to constantly coalesce disks when backup jobs create and delete snapshots.

@acebmxer Interesting. During my tests CBT was not really stable, as in backups falling back to full quite often. I recall reading somewhere in the documentation that CBT has some quirks, so it seems to be a known issue.

-

After shuffling a VM around between different SRs, I started another backup job, and all VMs fell back to full with CBT disabled in the backup job.

When the next scheduled backup task started, all VMs did a delta backup...

-

Now delta backups are working again. I tried re-enabling CBT and so far so good: it still did a delta backup, the snapshot was removed, and there were no errors.

Maybe someone with more knowledge could explain why it's working now.

-

@acebmxer When changing SR, a full pass is expected (it is documented), even with CBT enabled.

The CBT bitmap file needs to be reconstructed on the destination SR, so you get a full pass, and the next passes will be deltas as expected.

-

While that could be true, only one VM was moved to another SR, but all VMs are now being backed up with CBT enabled.

Well... I just checked the backups again, and after the last scheduled backup at 1am all VMs fell back to full backups again.
