Testing ZFS with XCP-ng

-

Hi all,

I spent some time trying to get ZFS to work.

The main issue is that ZFS doesn't support `O_DIRECT`, and that blktap only opens `.vhd` files with `O_DIRECT`.

I patched blktap to remove `O_DIRECT` and replace it with `O_DSYNC`, then tried running ZFS on a file SR. Here are the changes: https://gist.github.com/nraynaud/b32bd612f5856d1efc1418233d3aeb0f

The steps are the following:

- remove the current blktap from your host

- install the patched blktap

- install the ZFS support RPMs

- create and mount the ZFS volume

- create the file SR pointing to the mounted directory

Here is a ZFS test script:

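    XCP_HOST_UNDER_TEST=root@yourmachine
    # copy the ZFS RPMs and the patched blktap to the host
    rsync -r zfs blktap-3.5.0-1.12test.x86_64.rpm ${XCP_HOST_UNDER_TEST}:
    # swap the stock blktap for the patched one
    ssh ${XCP_HOST_UNDER_TEST} yum remove -y blktap
    ssh ${XCP_HOST_UNDER_TEST} yum install -y blktap-3.5.0-1.12test.x86_64.rpm
    # install and load ZFS
    ssh ${XCP_HOST_UNDER_TEST} yum install -y zfs/*.rpm
    ssh ${XCP_HOST_UNDER_TEST} depmod -a
    ssh ${XCP_HOST_UNDER_TEST} modprobe zfs
    # create the pool, mounted at /mnt/zfs, and set the options
    ssh ${XCP_HOST_UNDER_TEST} zpool create -f -m /mnt/zfs tank /dev/mydev
    ssh ${XCP_HOST_UNDER_TEST} zfs set sync=disabled tank
    ssh ${XCP_HOST_UNDER_TEST} zfs set compression=lz4 tank
    ssh ${XCP_HOST_UNDER_TEST} zfs list
    # XCP_HOST_UNDER_TEST_UUID was never set in the original script;
    # this lookup is an assumption that only holds for a single-host pool
    XCP_HOST_UNDER_TEST_UUID=$(ssh ${XCP_HOST_UNDER_TEST} xe host-list --minimal)
    # create the file SR on top of the mounted pool
    SR_ZFS_UUID=$(ssh ${XCP_HOST_UNDER_TEST} "xe sr-create host-uuid=${XCP_HOST_UNDER_TEST_UUID} name-label=test-zfs-sr type=file other-config:o_direct=false device-config:location=/mnt/zfs/test-zfs-sr")

In detail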

ZFS options

Obviously, you can create the ZFS volume as you need (mirror, RAID-Z, doesn't matter, it's entirely up to you!)
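As a rough illustration of the layouts and options discussed in this section, here is a minimal dry-run sketch. The pool name, mount point and `/dev/sdX` device names are placeholders, not values anyone in the thread used, and the `run` wrapper is only there so the commands are printed instead of executed unless you set `DRY_RUN=0`.

```shell
#!/bin/sh
# Dry-run by default: commands are printed, not executed.
# Set DRY_RUN=0 to really run them (this would destroy data on the disks!).
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Placeholders (assumptions, adjust to your host).
POOL="${POOL:-tank}"
MOUNTPOINT="${MOUNTPOINT:-/mnt/zfs}"

create_pool() {
    layout="$1"; shift   # "mirror", "raidz", or "" for a plain stripe
    # $layout is left unquoted on purpose so an empty layout expands to nothing
    run zpool create -f -m "$MOUNTPOINT" "$POOL" $layout "$@"
}

tune_pool() {
    run zfs set sync=disabled "$POOL"      # trades safety on power loss for speed
    run zfs set compression=lz4 "$POOL"
    run zfs get sync,compression "$POOL"   # show the resulting values
}

create_pool mirror /dev/sdb /dev/sdc
tune_pool
```

Swapping `mirror` for `raidz` (with three or more devices) gives a RAID-Z pool; the SR creation afterwards is the same either way.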

Regarding options: due to the `O_DIRECT` removal, it seems that the default sync mode behaves as if you had set `sync=always`. To get ZFS's real performance, it's better to use `sync=disabled`.

Compression (using `lz4`) also offers a nice boost. Finally, if you are a bit kamikaze, you can try dedup, but frankly, it's not worth the risk.

Note: please extend your `dom0` memory for better performance in general! 4GiB seems to be a minimum; remember that your host will now act as a cache for your storage.

SR creation

We added a specific flag to allow SR usage without `O_DIRECT`:

    xe sr-create host-uuid=<YOUR_HOST_UUID> name-label=ZFS-SR type=file other-config:o_direct=false device-config:location=/tank

Note that the `other-config:o_direct=false` flag is required.

Download

The files are here: https://cloud.vates.fr/index.php/s/zDSLcsJ4l5tYHwL?path=%2F

I would be interested in someone else reproducing my work, fiddling with ZFS, and telling us whether it's worth pursuing.

Thanks,

Nico.

-

I did a test

Environment: XCP-ng 7.4 with latest yum updates

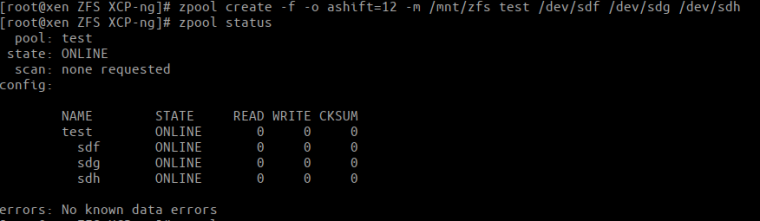

ZFS works as expected! (Note: `ashift=12`, because I have 4k disks)

Test 1 - File SR on top of a ZFS folder

- created a new folder with `zfs create test/sr`, which is located at `/mnt/zfs/sr`

- created a new file SR: `xe sr-create host-uuid=<host-uuid> type=file shared=false name-label=<sr-label> device-config:location=/mnt/zfs/sr`

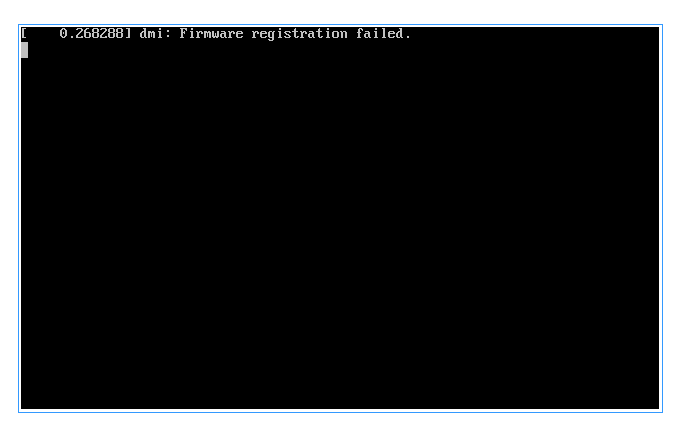

Result: could not boot my Ubuntu VM

Test 2 - EXT SR on top of a ZFS block device

- created a new block device with `zfs create -V 100G test/ext-sr`

- created a new EXT SR: `xe sr-create content-type=user host-uuid=<host-uuid> shared=false type=ext device-config:device=/dev/zvol/test/ext-sr name-label=<sr-label>`

Result: my Ubuntu VM does not boot here either

Test 3 - Boot the Ubuntu VM on the "old" LVM SR

Result: could not boot my Ubuntu VM either

Hints

- the local copy of the VM completed without errors

- in all three cases the VM got stuck on the following boot screen:

- turning off the stuck VM takes a long time

Test 4

(after carefully reading your script and using `other-config:o_direct=false`)

Result: the VM boots

-

Feel free to do some tests (with `fio`, for example: `bs=4k` to test IOPS and `bs=4M` to test max throughput).

-

I'm watching the soccer games in Russia right now, so just a little test

dd inside the VM

    root@ubuntu:~# dd if=/root/test.img of=/dev/null bs=4k
    812740+0 records in
    812740+0 records out
    3328983040 bytes (3.3 GB, 3.1 GiB) copied, 17.4961 s, 190 MB/s

dd on the host

    [root@xen sr3]# dd if=./83377032-f3d5-411f-b049-2e76048fd4a2.vhd of=/dev/null bs=4k
    2307479+1 records in
    2307479+1 records out
    9451434496 bytes (9.5 GB) copied, 38.5861 s, 245 MB/s

A side note: my disks are happy now with ZFS, I hope you can see it
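For a more repeatable comparison than `dd`, the two `fio` runs suggested earlier in the thread (`bs=4k` for IOPS, `bs=4M` for throughput) could look roughly like this. It is only a sketch: the test file path, size, queue depth and runtime are arbitrary assumptions, and the helper prints the command lines rather than running them.

```shell
#!/bin/sh
# Hypothetical test file inside the VM; fio creates it if it does not exist.
TEST_FILE="${TEST_FILE:-/root/fio-test.img}"

# Print one fio command line.
#   $1 = job name, $2 = access pattern (randread, read, ...), $3 = block size
fio_cmd() {
    # --direct=1 bypasses the guest page cache; drop it if the guest
    # filesystem itself rejects O_DIRECT.
    echo "fio --name=$1 --filename=$TEST_FILE --size=1G --rw=$2 --bs=$3" \
         "--ioengine=libaio --iodepth=16 --direct=1 --runtime=30 --time_based"
}

fio_cmd iops-4k randread 4k        # small random reads: look at the IOPS figure
fio_cmd throughput-4m read 4M      # large sequential reads: look at the bandwidth
```

To actually run a job, pipe it to a shell, for example `fio_cmd iops-4k randread 4k | sh`.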

-

@nraynaud Nice Work! Will report on weekend with my tests.

@olivierlambert I love fio. bonnie++ is also worth a shot.

@borzel you have a floppy drive!!!

-

thanks @r1

I started a PR here: https://github.com/xapi-project/blktap/pull/253

It's not exactly the same code as the RPM above. In the RPM, `O_DIRECT` has been removed altogether, so that people can compare ext3 without `O_DIRECT` against ZFS. In the PR, I only remove `O_DIRECT` when an `open()` has failed.
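The "try `O_DIRECT` first, fall back only if it fails" idea can be illustrated at shell level with GNU `dd`, whose `oflag=direct` and `oflag=dsync` map to `O_DIRECT` and `O_DSYNC`. This is only an analogy, not the blktap code: the function name is made up, and falling back to `dsync` is borrowed from the first post, since the thread does not say what the PR falls back to.

```shell
#!/bin/sh
# Write one 4k block to $1, preferring O_DIRECT and falling back to O_DSYNC
# when the filesystem refuses direct I/O. Prints which mode was used.
# Requires GNU dd (oflag= is not available in every dd implementation).
write_block() {
    target="$1"
    if dd if=/dev/zero of="$target" bs=4k count=1 oflag=direct 2>/dev/null; then
        echo "direct"
    elif dd if=/dev/zero of="$target" bs=4k count=1 oflag=dsync 2>/dev/null; then
        echo "dsync"
    else
        echo "failed"
        return 1
    fi
}

tmp="$(mktemp)"
mode="$(write_block "$tmp")"
echo "wrote $tmp using mode: $mode"
rm -f "$tmp"
```

Which mode gets reported depends on the filesystem backing the target file, which is exactly the difference between the RPM (never direct) and the PR (direct where possible).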

I'm not sure it will be accepted: I have no clue what their policy is and no idea what their QA process is. We are really at the draft stage.

Nico.

-

@nraynaud Maybe an extra config parameter at `sr-create` time would be the right way. Handling a failure may not be that appealing. My 2c.

-

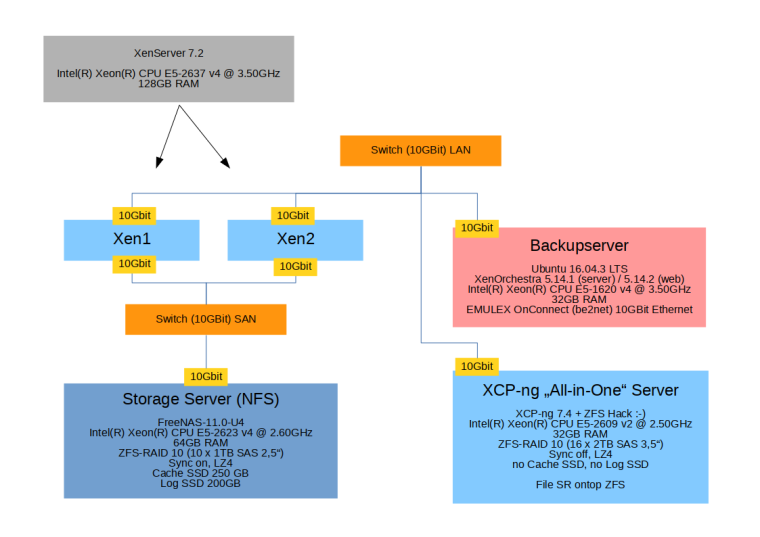

Status update:

I installed XCP-ng 7.4 on a "Storage Server" with 16 x 2TB HDDs, gave DOM0 8GB of RAM, and created a zpool (sync off, LZ4 compression) with a file SR on top.

In combination with a local XenOrchestra VM (and configured continuous replication jobs) we now have a self-contained "All-in-One Replication Server" without any dependency on hardware RAID controllers

In case of an emergency in my production storage/virtualisation environment I can boot every VM I need with just this one server. Or power up my DHCP VM if the other systems are down for service on Christmas/New Year's Eve

I'm more than happy with that

Side note: This is of course not ready for "production" and doesn't replace our real backup... just in case someone asks

-

How's the performance?

-

Currently hundreds of gigabytes are being transferred

I switched XenOrchestra from the VM to our hardware backup server, so we can use the 10Gbit cards. (iperf reached about 1.6 Gbit/s in the VM and around 6 Gbit/s on the hardware.)

The data flow is:

NFS storage (ZFS) -> XenServer -> XO -> XCP-ng (ZFS), all connected over 10Gbit Ethernet.

I did not take proper measurements; I was just impressed and happy that it runs

I will try to present my data and opinions in more detail later...

Setup

Settings

- All servers are connected via 10Gbit Ethernet cards (Emulex OneConnect)

- Xen1 and Xen2 are a pool, Xen1 is master

- XenOrchestra connects via http:// (no SSL to improve speed)

- DOM0 has 8GB RAM on XCP-ng AiO (so ZFS uses 4GB)

Numbers

- iperf between Backupserver and XCP-ng AiO: ~6 Gbit/s

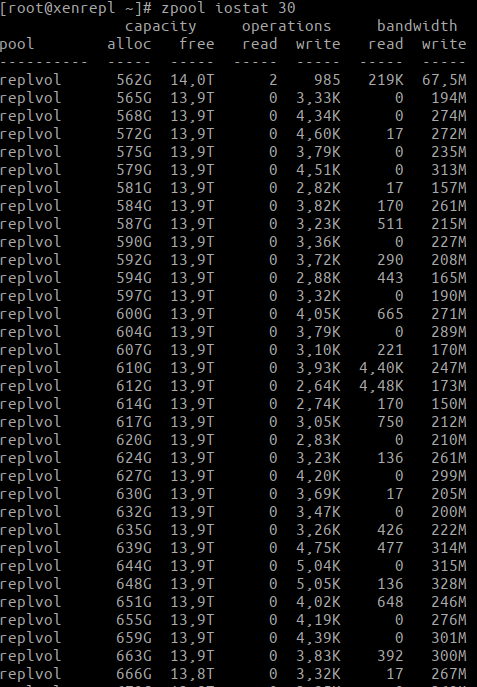

- Transfer speed of the Continuous Replication job from the Xen pool to XCP-ng AiO (output of `zpool iostat` with a 30-second sample interval on XCP-ng AiO)

The speed varies a bit because of lz4 compression; current compression ratio: 1.64x

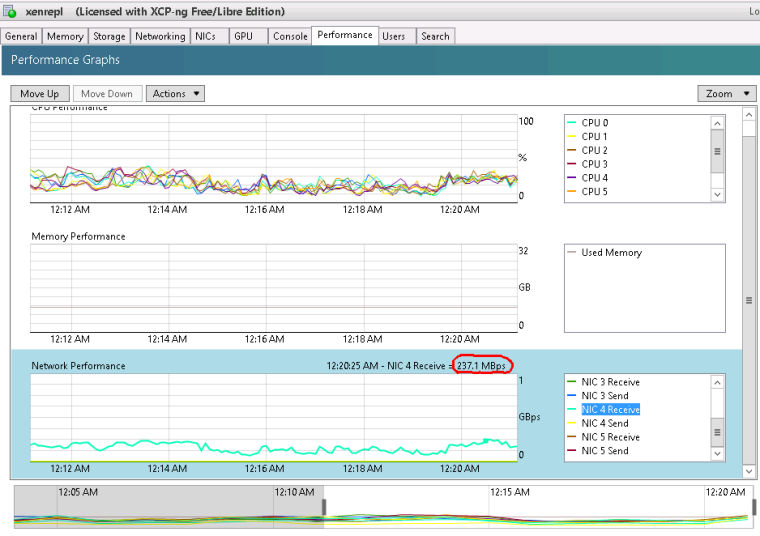
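For anyone wanting to collect the same kind of numbers, the sampling and the compression ratio mentioned above can be queried roughly as follows. The pool name `tank` is a placeholder (the pool name on the AiO server is not given in the thread), and the `run` wrapper only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Dry-run by default so the snippet is harmless on a machine without ZFS.
DRY_RUN="${DRY_RUN:-1}"
POOL="${POOL:-tank}"   # placeholder pool name (assumption)

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

monitor_pool() {
    # Ratio of logical to physical size achieved by compression (e.g. 1.64x).
    run zfs get compressratio "$POOL"
    # One line of read/write operations and bandwidth every 30 seconds,
    # until interrupted with Ctrl-C.
    run zpool iostat "$POOL" 30
}

monitor_pool
```

Note that the first line `zpool iostat` prints is an average since the pool was imported; only the following samples reflect the current transfer.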

Here a quick look at the graphs (on XCP-ng AiO)

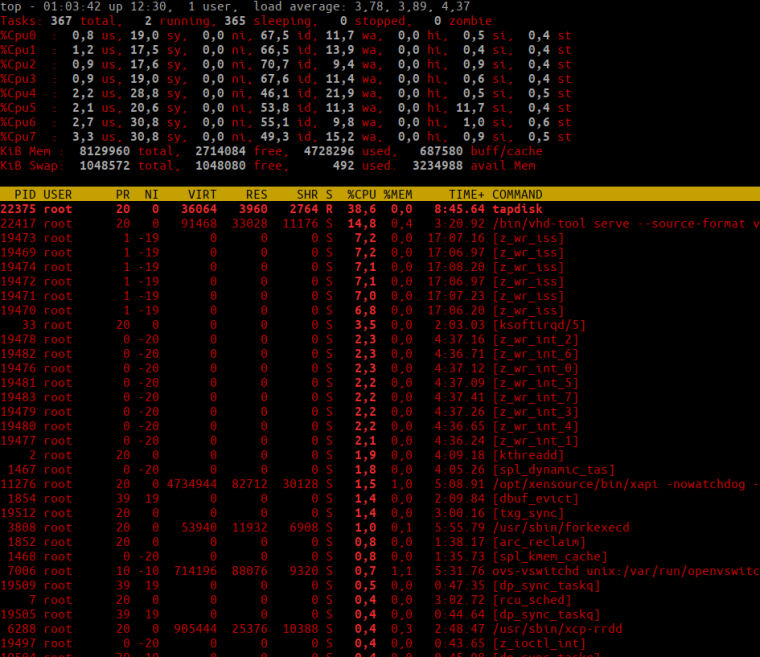

and a quick look at top (on XCP-ng AiO)

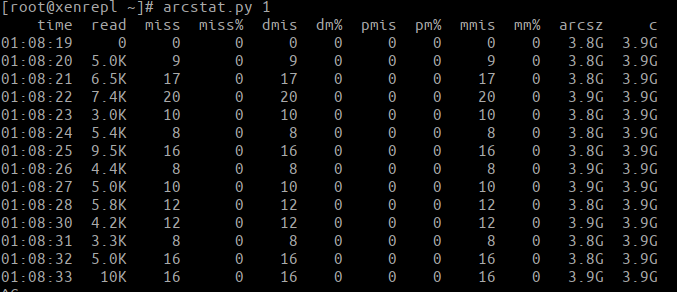

and arcstat.py (sample rate 1sec) (on XCP-ng AiO)

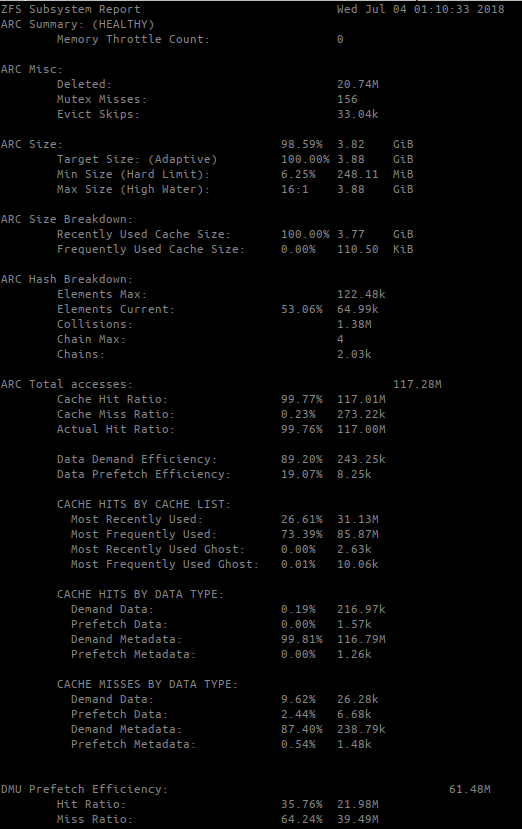

and arc_summary.py (on XCP-ng AiO)

@olivierlambert I hope you can see something with these numbers

-

To avoid the problem with XenOrchestra running as a VM (and not using the full 10Gbit of the host) I would install it directly inside DOM0. It could be preinstalled in XCP-ng as a web GUI

Maybe I'll try this later on my AiO server ...

-

Not a good idea to embed XO inside Dom0… (it will also cause issues if you have multiple XOs; it's meant to run in one place only).

That's why the virtual appliance is better

-

@olivierlambert My biggest question: why is this not a good idea?

How do big datacenters solve the performance issue? Just install on hardware? With a virtual appliance you are limited to Gbit speed.

I run more than one XO: one for backups and one for user access. I don't want users on my backup server.

Maybe this is something for a separate thread...

-

VM speed is not limited to GBit speed. XO proxies will deal with big infrastructures to avoid bottlenecks.

-

@olivierlambert ah, ok

-

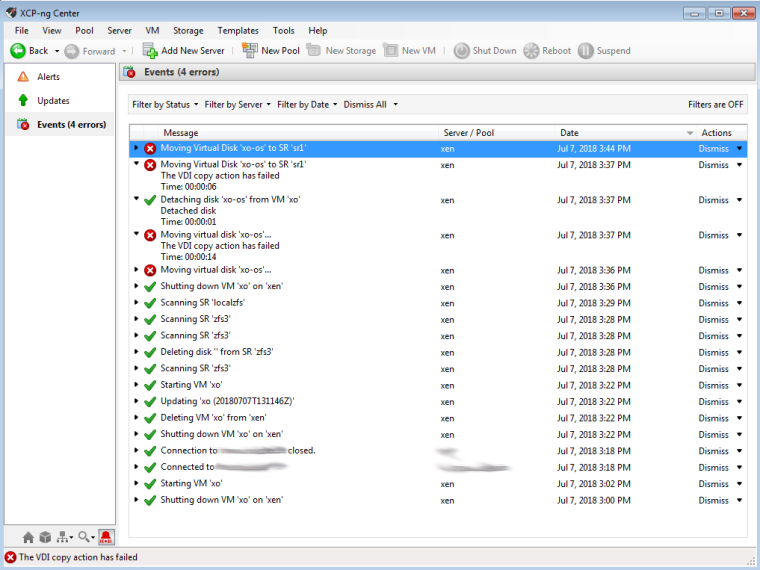

I did a little more testing with ZFS and tried to move a disk from `localpool` to `srpool`.

Setup

- XCP-ng on a single 500GB disk, ZFS pool created on a separate partition on that disk (~420GB) -> `localpool` -> SR named `localzfs`

- ZFS pool on 4 x 500GB disks (RAID 10) -> `srpool` -> SR named `sr1`

Error

Output of `/var/log/SMLog`:

    Jul 7 15:44:07 xen SM: [28282] ['uuidgen', '-r']
    Jul 7 15:44:07 xen SM: [28282] pread SUCCESS
    Jul 7 15:44:07 xen SM: [28282] lock: opening lock file /var/lock/sm/4c47f4b0-2504-fe56-085c-1ffe2269ddea/sr
    Jul 7 15:44:07 xen SM: [28282] lock: acquired /var/lock/sm/4c47f4b0-2504-fe56-085c-1ffe2269ddea/sr
    Jul 7 15:44:07 xen SM: [28282] vdi_create {'sr_uuid': '4c47f4b0-2504-fe56-085c-1ffe2269ddea', 'subtask_of': 'DummyRef:|62abb588-91e6-46d7-8c89-a1be48c843bd|VDI.create', 'vdi_type': 'system', 'args': ['10737418240', 'xo-os', '', '', 'false', '19700101T00:00:00Z', '', 'false'], 'o_direct': False, 'host_ref': 'OpaqueRef:ada301d3-f232-3421-527a-d511fd63f8c6', 'session_ref': 'OpaqueRef:02edf603-3cdf-444a-af75-1fb0a8d71216', 'device_config': {'SRmaster': 'true', 'location': '/mnt/srpool/sr1'}, 'command': 'vdi_create', 'sr_ref': 'OpaqueRef:0c8c4688-d402-4329-a99b-6273401246ec', 'vdi_sm_config': {}}
    Jul 7 15:44:07 xen SM: [28282] ['/usr/sbin/td-util', 'create', 'vhd', '10240', '/var/run/sr-mount/4c47f4b0-2504-fe56-085c-1ffe2269ddea/11a040ba-53fd-4119-80b3-7ea5a7e134c6.vhd']
    Jul 7 15:44:07 xen SM: [28282] pread SUCCESS
    Jul 7 15:44:07 xen SM: [28282] ['/usr/sbin/td-util', 'query', 'vhd', '-v', '/var/run/sr-mount/4c47f4b0-2504-fe56-085c-1ffe2269ddea/11a040ba-53fd-4119-80b3-7ea5a7e134c6.vhd']
    Jul 7 15:44:07 xen SM: [28282] pread SUCCESS
    Jul 7 15:44:07 xen SM: [28282] lock: released /var/lock/sm/4c47f4b0-2504-fe56-085c-1ffe2269ddea/sr
    Jul 7 15:44:07 xen SM: [28282] lock: closed /var/lock/sm/4c47f4b0-2504-fe56-085c-1ffe2269ddea/sr
    Jul 7 15:44:07 xen SM: [28311] lock: opening lock file /var/lock/sm/319aebee-24f0-8232-0d9e-ad42a75d8154/sr
    Jul 7 15:44:07 xen SM: [28311] lock: acquired /var/lock/sm/319aebee-24f0-8232-0d9e-ad42a75d8154/sr
    Jul 7 15:44:07 xen SM: [28311] ['/usr/sbin/td-util', 'query', 'vhd', '-vpfb', '/var/run/sr-mount/319aebee-24f0-8232-0d9e-ad42a75d8154/ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0.vhd']
    Jul 7 15:44:07 xen SM: [28311] pread SUCCESS
    Jul 7 15:44:07 xen SM: [28311] vdi_attach {'sr_uuid': '319aebee-24f0-8232-0d9e-ad42a75d8154', 'subtask_of': 'DummyRef:|b0cce7fa-75bb-4677-981a-4383e5fe1487|VDI.attach', 'vdi_ref': 'OpaqueRef:9daa47a5-1cae-40c2-b62e-88a767f38877', 'vdi_on_boot': 'persist', 'args': ['false'], 'o_direct': False, 'vdi_location': 'ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0', 'host_ref': 'OpaqueRef:ada301d3-f232-3421-527a-d511fd63f8c6', 'session_ref': 'OpaqueRef:a27b0e88-cebe-41a7-bc0c-ae0eb46dd011', 'device_config': {'SRmaster': 'true', 'location': '/localpool/sr'}, 'command': 'vdi_attach', 'vdi_allow_caching': 'false', 'sr_ref': 'OpaqueRef:e4d0225c-1737-4496-8701-86a7f7ac18c1', 'vdi_uuid': 'ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0'}
    Jul 7 15:44:07 xen SM: [28311] lock: opening lock file /var/lock/sm/ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0/vdi
    Jul 7 15:44:07 xen SM: [28311] result: {'o_direct_reason': 'SR_NOT_SUPPORTED', 'params': '/dev/sm/backend/319aebee-24f0-8232-0d9e-ad42a75d8154/ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0', 'o_direct': True, 'xenstore_data': {'scsi/0x12/0x80': 'AIAAEmNhODhmOGRjLTRhYjYtNGIgIA==', 'scsi/0x12/0x83': 'AIMAMQIBAC1YRU5TUkMgIGNhODhmOGRjLTRhYjYtNGJlNy05MDg0LTFiNDFjYmM4YzFjMCA=', 'vdi-uuid': 'ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0', 'mem-pool': '319aebee-24f0-8232-0d9e-ad42a75d8154'}}
    Jul 7 15:44:07 xen SM: [28311] lock: closed /var/lock/sm/ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0/vdi
    Jul 7 15:44:07 xen SM: [28311] lock: released /var/lock/sm/319aebee-24f0-8232-0d9e-ad42a75d8154/sr
    Jul 7 15:44:07 xen SM: [28311] lock: closed /var/lock/sm/319aebee-24f0-8232-0d9e-ad42a75d8154/sr
    Jul 7 15:44:08 xen SM: [28337] lock: opening lock file /var/lock/sm/319aebee-24f0-8232-0d9e-ad42a75d8154/sr
    Jul 7 15:44:08 xen SM: [28337] lock: acquired /var/lock/sm/319aebee-24f0-8232-0d9e-ad42a75d8154/sr
    Jul 7 15:44:08 xen SM: [28337] ['/usr/sbin/td-util', 'query', 'vhd', '-vpfb', '/var/run/sr-mount/319aebee-24f0-8232-0d9e-ad42a75d8154/ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0.vhd']
    Jul 7 15:44:08 xen SM: [28337] pread SUCCESS
    Jul 7 15:44:08 xen SM: [28337] lock: released /var/lock/sm/319aebee-24f0-8232-0d9e-ad42a75d8154/sr
    Jul 7 15:44:08 xen SM: [28337] vdi_activate {'sr_uuid': '319aebee-24f0-8232-0d9e-ad42a75d8154', 'subtask_of': 'DummyRef:|1f6f53c2-0d1e-462c-aeea-5d109e55d44f|VDI.activate', 'vdi_ref': 'OpaqueRef:9daa47a5-1cae-40c2-b62e-88a767f38877', 'vdi_on_boot': 'persist', 'args': ['false'], 'o_direct': False, 'vdi_location': 'ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0', 'host_ref': 'OpaqueRef:ada301d3-f232-3421-527a-d511fd63f8c6', 'session_ref': 'OpaqueRef:392bd2b6-2a84-48ca-96f1-fe0bdaa96f20', 'device_config': {'SRmaster': 'true', 'location': '/localpool/sr'}, 'command': 'vdi_activate', 'vdi_allow_caching': 'false', 'sr_ref': 'OpaqueRef:e4d0225c-1737-4496-8701-86a7f7ac18c1', 'vdi_uuid': 'ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0'}
    Jul 7 15:44:08 xen SM: [28337] lock: opening lock file /var/lock/sm/ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0/vdi
    Jul 7 15:44:08 xen SM: [28337] blktap2.activate
    Jul 7 15:44:08 xen SM: [28337] lock: acquired /var/lock/sm/ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0/vdi
    Jul 7 15:44:08 xen SM: [28337] Adding tag to: ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0
    Jul 7 15:44:08 xen SM: [28337] Activate lock succeeded
    Jul 7 15:44:08 xen SM: [28337] lock: opening lock file /var/lock/sm/319aebee-24f0-8232-0d9e-ad42a75d8154/sr
    Jul 7 15:44:08 xen SM: [28337] ['/usr/sbin/td-util', 'query', 'vhd', '-vpfb', '/var/run/sr-mount/319aebee-24f0-8232-0d9e-ad42a75d8154/ca88f8dc-4ab6-4be7-9084-1b41cbc8c1c0.vhd']
    Jul 7 15:44:08 xen SM: [28337] pread SUCCESS

-

@borzel said in Testing ZFS with XCP-ng:

Jul 7 15:44:07 xen SM: [28311] result: {'o_direct_reason': 'SR_NOT_SUPPORTED',

I found this after posting and looking into it... is this a known thing?
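Since these fields are easy to miss in such long lines, here is a small sketch for pulling every `o_direct` / `o_direct_reason` value out of an SMLog. The function name is made up for illustration, and the two sample lines are the `vdi_attach` and `result` entries from the log above, trimmed to the relevant fields.

```shell
#!/bin/sh
SMLOG="${SMLOG:-/var/log/SMLog}"   # default log location on the host

# Print each distinct o_direct / o_direct_reason key-value pair found in
# the file given as $1, together with how often it occurs.
o_direct_summary() {
    grep -o "'o_direct[a-z_]*': [^,}]*" "$1" | sort | uniq -c
}

# Demo on a trimmed excerpt, so this also runs away from the host.
sample="$(mktemp)"
cat > "$sample" <<'EOF'
Jul 7 15:44:07 xen SM: [28311] vdi_attach {'args': ['false'], 'o_direct': False, 'command': 'vdi_attach'}
Jul 7 15:44:07 xen SM: [28311] result: {'o_direct_reason': 'SR_NOT_SUPPORTED', 'o_direct': True}
EOF
o_direct_summary "$sample"
rm -f "$sample"
```

On the host itself, `o_direct_summary "$SMLOG"` gives a quick view of whether requests arrive with `'o_direct': False` while results still come back with `'o_direct': True`.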

-

that's weird: https://github.com/xapi-project/sm/blob/master/drivers/blktap2.py#L1002

I don't even know how my tests worked before.

-

Maybe because it wasn't triggered without a VDI live migration?

-

I did no live migration... just a local copy of a VM

... ah, I should read before I answer ...
