Alert: Control Domain Memory Usage

-

Ok, so now we know for sure that it's related to the kernel or one of the drivers.

Let me summarize all the tests that users affected by the issue can do to help find what causes it:

- Test with kmemleak, hoping that it may be able to detect something. No luck so far for @delaf, who tried.

- Test with the oldest kernel (`4.19.19-6.0.10.1.xcpng8.1`). I doubt it will yield results, but it would allow us to be sure. If it does, we'll be able to narrow the search down to a specific patch.

- With the current kernel, give priority to built-in drivers. If this gives good results, it will mean that the leak is in one of the drivers provided through driver RPMs. Two ways:

  - A bit riskier, but we'd still be interested in the results: disable them all so that only built-in kernel drivers are used. For this, edit `/etc/modprobe.d/dist.conf` and change `search override updates extra built-in weak-updates` into `search extra built-in weak-updates override updates`, then run `depmod -a` and reboot. Don't forget to restore the original contents after the tests.

  - Another way, which allows you to select specific drivers one by one:

    - Identify a few drivers that you want to check in the output of `lsmod`, for example `ixgbe`.
    - Find where the currently used driver is on the filesystem: `modinfo ixgbe | head -n 1`
    - If the path contains "/updates/", it's not a kernel built-in. Rename the file to `name_of_file.save`, run `depmod -a`, then reboot. The kernel will then use its built-in driver.
    - If nothing changes, restore the file and try another.
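Both approaches can be scripted. The helpers below are a minimal sketch with hypothetical names, assuming the `search` line in `/etc/modprobe.d/dist.conf` reads exactly as quoted above and that `modinfo -n` prints the same path as the `filename:` line of `modinfo ixgbe | head -n 1`. They only edit or rename files; running `depmod -a` and rebooting are left to you, as in the steps above.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for the two driver tests above.
# They only edit or rename files: run `depmod -a` and reboot afterwards.

ORIG_SEARCH='search override updates extra built-in weak-updates'
BUILTIN_FIRST='search extra built-in weak-updates override updates'

# Method 1: give built-in drivers priority over the driver RPMs.
# A copy of the file is kept as "<file>.orig" so it can be restored later.
prefer_builtin_drivers() {
  local conf="$1"
  cp -p -- "$conf" "$conf.orig" || return 1
  sed -i "s/^${ORIG_SEARCH}\$/${BUILTIN_FIRST}/" "$conf"
}

# Undo method 1 by putting the saved copy back.
restore_search_order() {
  local conf="$1"
  mv -- "$conf.orig" "$conf"
}

# Method 2: succeed if a module path comes from a driver RPM ("/updates/").
is_updates_driver() {
  case "$1" in
    */updates/*) return 0 ;;
    *) return 1 ;;
  esac
}

# Rename an "/updates/" module to "<file>.save" so the built-in one is used.
disable_updates_driver() {
  local path="$1"
  if ! is_updates_driver "$path"; then
    echo "not a driver RPM module: $path" >&2
    return 1
  fi
  mv -- "$path" "$path.save"
}

# Undo method 2 for one driver (pass the original path).
restore_updates_driver() {
  local path="$1"
  mv -- "$path.save" "$path"
}

# Example usage, as root:
#   prefer_builtin_drivers /etc/modprobe.d/dist.conf && depmod -a && reboot
#   disable_updates_driver "$(modinfo -n ixgbe)" && depmod -a && reboot
```

Keeping the original path around matters for the per-driver test: once the `/updates/` module is renamed, `modinfo -n ixgbe` points at the built-in driver instead.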

I also intend to build a new `ixgbe` driver, just in case we're lucky and it's the culprit, since every affected user uses it.

-

@r1 I can't manage to install the old kernel. Any idea?

    # yum downgrade "kernel == 4.19.19-6.0.10.1.xcpng8.1"
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-base: mirrors.xcp-ng.org
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-updates: mirrors.xcp-ng.org
    Resolving Dependencies
    --> Running transaction check
    ---> Package kernel.x86_64 0:4.19.19-6.0.10.1.xcpng8.1 will be a downgrade
    ---> Package kernel.x86_64 0:4.19.19-6.0.12.1.xcpng8.1 will be erased
    --> Finished Dependency Resolution
    Error: Trying to remove "kernel", which is protected

-

@delaf I ran into the same thing recently. See the solution below.

-

@stormi I have a server with only the `search extra built-in weak-updates override updates` change. We will see if it is better.

-

@stormi @r1

Four days later, I get:

- one server (266) with `alt-kernel`: still no problem
- one server (268) with `4.19.19-6.0.10.1.xcpng8.1`: no more problem!
- one server (272) with the kmemleak kernel: no memleak detected, but the problem is present
- one server (273) with `search extra built-in weak-updates override updates`: problem still present

-


@delaf said in Alert: Control Domain Memory Usage:

> one server (268) with 4.19.19-6.0.10.1.xcpng8.1: no more problem!

Yeah, we need to be sure that this kernel is stable; the memory leak seems to have been introduced somewhere after it.

-

I currently have:

    top - 13:35:31 up 59 days, 17:11,  1 user,  load average: 0.43, 0.36, 0.34
    Tasks: 646 total,   1 running, 436 sleeping,   0 stopped,   0 zombie
    %Cpu(s):  0.8 us,  1.1 sy,  0.0 ni, 97.5 id,  0.3 wa,  0.0 hi,  0.1 si,  0.2 st
    KiB Mem : 12205936 total,   149152 free, 10627080 used,  1429704 buff/cache
    KiB Swap:  1048572 total,  1048572 free,        0 used.  1153360 avail Mem

    top - 13:35:54 up 35 days, 17:29,  1 user,  load average: 0.54, 0.73, 0.77
    Tasks: 489 total,   1 running, 324 sleeping,   0 stopped,   0 zombie
    %Cpu(s):  3.5 us,  3.4 sy,  0.0 ni, 92.7 id,  0.0 wa,  0.0 hi,  0.0 si,  0.4 st
    KiB Mem : 12207996 total,   155084 free,  9388032 used,  2664880 buff/cache
    KiB Swap:  1048572 total,  1048572 free,        0 used.  2394220 avail Mem

both with:

    # uname -a
    Linux xs01 4.19.0+1 #1 SMP Thu Jun 11 16:18:33 CEST 2020 x86_64 x86_64 x86_64 GNU/Linux
    # yum list installed | grep kernel
    kernel.x86_64    4.19.19-6.0.11.1.xcpng8.1    @xcp-ng-updates

Shall I test something?
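To compare hosts, or to log dom0 memory over time, the `KiB Mem` and `avail Mem` figures can be turned into percentages. This is a minimal sketch, assuming the procps `top` layout shown above (newer `top` versions may print `MiB Mem` instead); `dom0_mem_summary` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: summarise dom0 memory from `top -b -n 1` output on stdin.
# Assumes the procps layout shown above ("KiB Mem :" line, "avail Mem" on the
# "KiB Swap:" line). Prints used and available memory as integer percentages.
dom0_mem_summary() {
  awk '
    /^KiB Mem/ {
      for (i = 1; i < NF; i++) {
        if ($(i + 1) ~ /^total,?$/) total = $i
        if ($(i + 1) ~ /^used,?$/) used = $i
      }
    }
    /^KiB Swap/ {
      for (i = 1; i < NF; i++)
        if ($(i + 1) == "avail") avail = $i
    }
    END {
      if (total > 0)
        printf "used=%d%% avail=%d%%\n", used * 100 / total, avail * 100 / total
    }'
}

# Example: top -b -n 1 | dom0_mem_summary
```

For the first host above, this prints `used=87% avail=9%`; for the second, `used=76% avail=19%`.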

-

I have a set of hosts on kernel-4.19.19-6.0.11.1.xcpng8.1 and I believe I'm hitting this as well. The OOM killer seems to kill openvswitch, which takes the host offline and, in most cases, the VMs as well.

-

So, the difference between 4.19.19-6.0.10.1.xcpng8.1 and 4.19.19-6.0.11.1.xcpng8.1 is two patches meant to reduce the performance overhead of the CROSSTalk vulnerability mitigations.

So, assuming from @delaf's test results that one of those patches introduced the memory leak, I have built two test kernels, each with one of those patches disabled.

Now here are the tests that you can do:

- Reproduce @delaf's findings by running `kernel-4.19.19-6.0.10.1.xcpng8.1`: no more memory leaks?

- Test this kernel I built with patch 53 disabled: https://nextcloud.vates.fr/index.php/s/YXWCSEwo8SWkfAZ

- Test this kernel I built with patch 62 disabled: https://nextcloud.vates.fr/index.php/s/arj5YfdrkjMKbBy

If one of the patches causes the memory leak, then one of the last two kernels should still leak and the other should not.
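The reasoning above, extended with the `4.19.19-6.0.10.1.xcpng8.1` baseline that has neither patch, can be written as a small decision table. This is a hypothetical helper, assuming each kernel runs long enough for a leak to show, that the two patches are the only difference between the kernels, and that `4.19.19-6.0.11.1.xcpng8.1` (both patches) leaks.

```shell
#!/usr/bin/env bash
# Hypothetical helper: interpret the three leak tests.
# Each argument is "yes" (leak observed) or "no":
#   $1  4.19.19-6.0.10.1.xcpng8.1     (neither patch 53 nor patch 62)
#   $2  ...patch53disabled.xcpng8.1   (only patch 62 applied)
#   $3  ...patch62disabled.xcpng8.1   (only patch 53 applied)
# Assumes the kernel with both patches (6.0.11.1) is known to leak.
interpret_leak_tests() {
  local base="$1" only62="$2" only53="$3"
  if [ "$base" = yes ]; then
    # Leak without either patch: the patches alone cannot explain it.
    echo "leak predates both patches"
  elif [ "$only62" = yes ] && [ "$only53" = no ]; then
    echo "patch 62 is the suspect"
  elif [ "$only62" = no ] && [ "$only53" = yes ]; then
    echo "patch 53 is the suspect"
  elif [ "$only62" = yes ] && [ "$only53" = yes ]; then
    echo "each patch leaks on its own"
  elif [ "$only62" = no ] && [ "$only53" = no ]; then
    echo "leak needs both patches together"
  else
    echo "invalid input" >&2
    return 1
  fi
}
```

A `yes` for the baseline short-circuits everything else, which is why a leak on `4.19.19-6.0.10.1.xcpng8.1` makes the per-patch results hard to interpret.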


-

@stormi I have installed the two kernels:

    272 ~]# yum list installed kernel | grep kernel
    kernel.x86_64 4.19.19-6.0.11.1.0.1.patch53disabled.xcpng8.1
    273 ~]# yum list installed kernel | grep kernel
    kernel.x86_64 4.19.19-6.0.11.1.0.1.patch62disabled.xcpng8.1

I have removed the modification in `/etc/modprobe.d/dist.conf` on server 273. We have to wait a little bit now.

-

FYI, the kernel with kmemleak support did detect something for a user who has a support ticket related to dom0 memory usage.
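For anyone running the kmemleak-enabled kernel, the kernel's kmemleak documentation describes a debugfs interface: writing `scan` triggers an immediate scan, writing `clear` forgets the leaks reported so far, and reading the file lists suspected leaks, each starting with an `unreferenced object` line. The sketch below wraps that; the function names are hypothetical, and it assumes debugfs is mounted at `/sys/kernel/debug`.

```shell
#!/usr/bin/env bash
# Hypothetical wrappers around the kmemleak debugfs file (needs root and a
# kernel built with kmemleak). If the file is missing, mount debugfs first:
#   mount -t debugfs nodev /sys/kernel/debug
KMEMLEAK_FILE=/sys/kernel/debug/kmemleak

# Forget the leaks reported so far, e.g. right before starting a test period.
kmemleak_clear() {
  echo clear > "${1:-$KMEMLEAK_FILE}"
}

# Trigger an immediate scan, then print the current report.
kmemleak_scan() {
  local f="${1:-$KMEMLEAK_FILE}"
  echo scan > "$f" && cat "$f"
}

# Count suspected leaks in a saved report (or the live file).
# Each one starts with an "unreferenced object 0x... (size N):" line.
kmemleak_count() {
  grep -c '^unreferenced object' "${1:-$KMEMLEAK_FILE}"
}

# Example: kmemleak_scan > /root/kmemleak.txt; kmemleak_count /root/kmemleak.txt
```

Saving each report to a file makes it easy to compare the count between days and to attach the backtraces to a bug report.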

-

@stormi For the `kernel-4.19.19-6.0.10.1.xcpng8.1` test, I'm not sure it solves the problem, because I see a small memory increase. We have to wait a bit more.

-


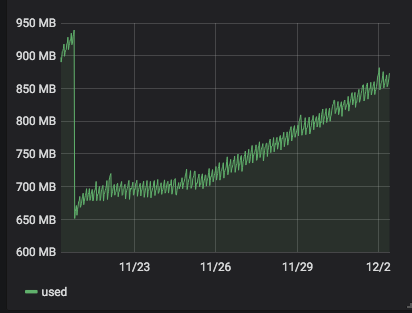

server 266 with `alt-kernel`: still no problem.

-

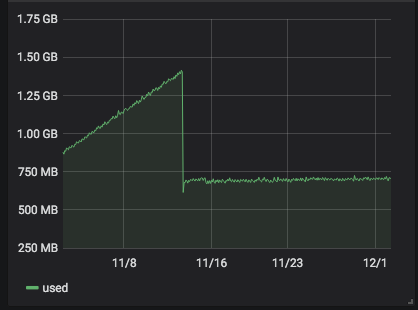

server 268 with `4.19.19-6.0.10.1.xcpng8.1`: the problem began a few days ago, after several stable days.

-

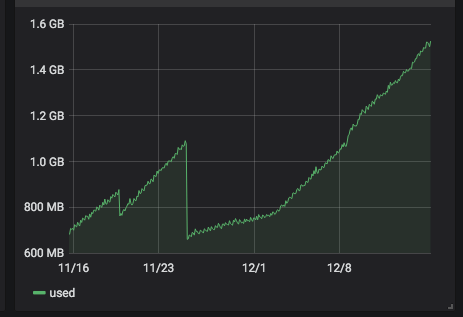

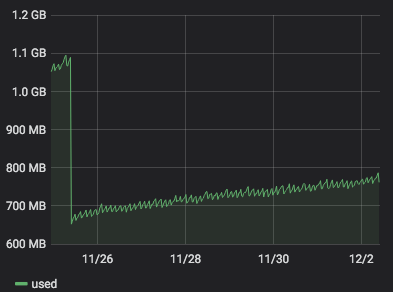

server 272 with `4.19.19-6.0.11.1.0.1.patch53disabled.xcpng8.1`:

-

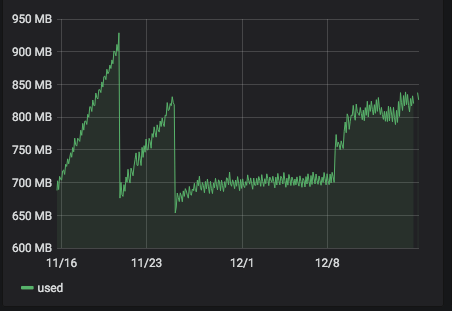

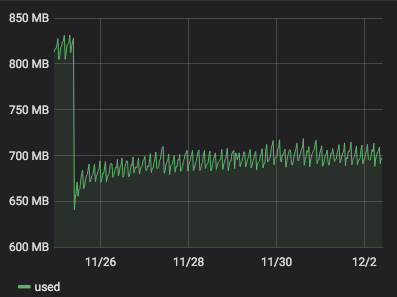

server 273 with `4.19.19-6.0.11.1.0.1.patch62disabled.xcpng8.1`:

It seems that `4.19.19-6.0.11.1.0.1.patch62disabled.xcpng8.1` is more stable than `4.19.19-6.0.11.1.0.1.patch53disabled.xcpng8.1`. But it is a bit early to be sure.

-


Thanks. It looks like I'm doomed to see seemingly contradictory results for every kernel-related issue (this one, and another one regarding network performance): you don't have any leaks without patch 62, but you had leaks with kernel `4.19.19-6.0.10.1.xcpng8.1`, which doesn't have that patch either. So it's hard to conclude anything.

-

Hey Guys,

we are facing the same issue with xcp 8.1.

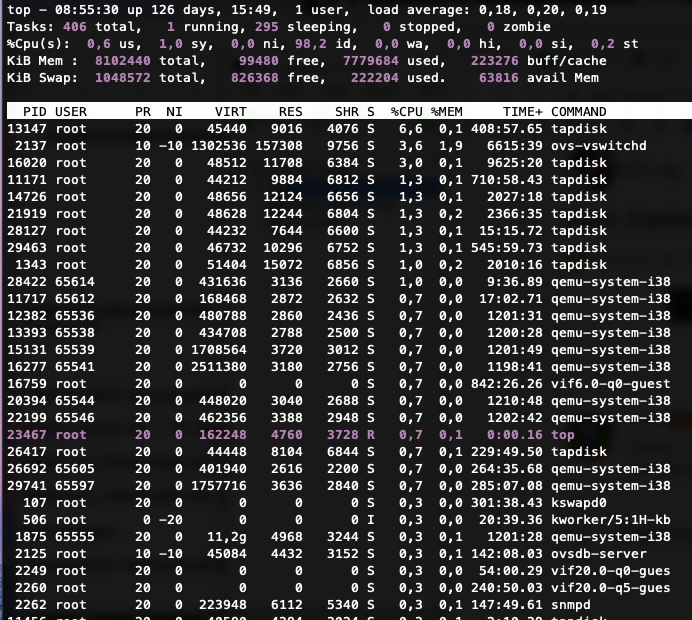

We can't figure out what uses all this memory (8GB) or how to reduce it. Restarting the Toolstack did nothing and we can't afford a downtime because everything runs in production. Similar systems with same configurations don't show such a behavior.I can provide you with some output from our system, maybe you can see something or help us finding a solution.

free -m

                  total        used        free      shared  buff/cache   available
    Mem:           7912        7595          82          33         234          62
    Swap:          1023         216         807

xl top

    NAME STATE CPU(sec) CPU(%) MEM(k) MEM(%) MAXMEM(k) MAXMEM(%) VCPUS NETS NETTX(k) NETRX(k) VBDS VBD_OO VBD_RD VBD_WR VBD_RSECT VBD_WSECT SSID
    Domain-0 -----r 7308446 52.1 8388608 3.1 8388608 3.1 16 0 0 0 0 0 0 0 0 0 0

xe vm-param-list uuid | grep memory

    memory-actual ( RO): 8589934592
    memory-target ( RO): <unknown>
    memory-overhead ( RO): 84934656
    memory-static-max ( RW): 8589934592
    memory-dynamic-max ( RW): 8589934592
    memory-dynamic-min ( RW): 8589934592
    memory-static-min ( RW): 8589934592
    memory (MRO): <not in database>

lsmod and grub.cfg

lsmod.txt

grub-cgf.txt

top output
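The readings above use three different units: `xl top` reports KiB, `xe` reports bytes and `free -m` reports MiB. A quick conversion, sketched below with hypothetical helper names and the figures copied from the output, shows that `xl` and `xe` agree on 8192 MiB for dom0, and that `free` sees 280 MiB less, presumably memory the dom0 kernel reserves at boot and does not count in its total. Of the 7912 MiB that `free` counts, only 62 MiB are reported available and 216 MiB of swap are in use.

```shell
#!/usr/bin/env bash
# Hypothetical unit helpers (integer division, so results are rounded down).
kib_to_mib()   { echo $(( $1 / 1024 )); }
bytes_to_mib() { echo $(( $1 / 1024 / 1024 )); }

# Figures copied from the output above.
xl_mem_kib=8388608          # xl top, MEM(k) for Domain-0
xe_actual_bytes=8589934592  # xe, memory-actual
xe_overhead_bytes=84934656  # xe, memory-overhead
free_total_mib=7912         # free -m, Mem total

echo "xl top:            $(kib_to_mib "$xl_mem_kib") MiB"
echo "xe memory-actual:  $(bytes_to_mib "$xe_actual_bytes") MiB"
echo "xe overhead:       $(bytes_to_mib "$xe_overhead_bytes") MiB"
echo "not in free total: $(( $(kib_to_mib "$xl_mem_kib") - free_total_mib )) MiB"
```

This prints 8192, 8192, 81 and 280 MiB respectively.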

Tell me if you need more information or if you have any idea. Thanks.

-

We need more details on the host.

- Hardware detail (NICs, server model)

- Whether all your hardware's BIOS/firmware is fully up to date

- The kind of storage used (iSCSI, FCoE, NFS?)

So far, we couldn't find anything truly in common between affected users, and that makes it hard to find the root cause.
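A minimal sketch to gather most of these details from dom0, assuming `dmidecode`, `ethtool` and `xe` are available there and that NICs are named `eth*`; the function names are hypothetical. `ethtool -i` reports the driver and firmware version of each NIC, which also shows whether `ixgbe` is in use.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reduce `ethtool -i <nic>` output (stdin) to "driver firmware".
nic_driver_firmware() {
  awk -F': ' '
    $1 == "driver"           { driver = $2 }
    $1 == "firmware-version" { firmware = $2 }
    END { printf "%s %s\n", driver, firmware }'
}

# Hypothetical collector for the details requested above (run as root in dom0).
collect_host_details() {
  echo "model: $(dmidecode -s system-product-name)"
  echo "bios:  $(dmidecode -s bios-version)"
  local nic name
  for nic in /sys/class/net/eth*; do
    [ -e "$nic" ] || continue          # no eth* interface at all
    name=${nic##*/}
    echo "$name: $(ethtool -i "$name" | nic_driver_firmware)"
  done
  # Storage repository types (nfs, lvmoiscsi, lvmohba, ...)
  xe sr-list params=name-label,type
}

# Example: collect_host_details > /root/host-details.txt
```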

-

It is a Dell PowerEdge R440, version 2.6.3, with an LACP bond, and we use NFS storage.