XCP-ng 7.5.0 final is here

-

@olivierlambert

We (@dsiminiuk and I) did a remote session on his VM:

- the PV tools from Citrix were in a somewhat strange state

- we tried to remove them and install the XCP-ng beta testsigned drivers, but without luck

-> I will build myself a test VM and play around to get a better understanding of what this Windows Server 2008 R2 is doing with our drivers

-

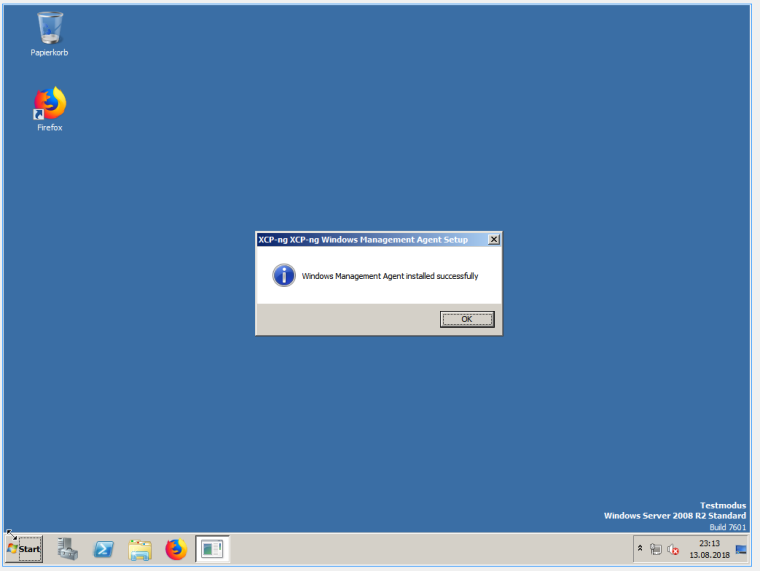

@dsiminiuk bad news, my fresh installed test VM (Server 2008 R2 with SP1) installed the (

betapre-alpha testsinged non-production do-not-use wear-gloves make-backups) drivers just fine:

-

I'm going to build a new Windows VM, anticipating the windows tools release. Thanks

-

@dsiminiuk

I had a similar problem with my setup: 2 hosts with FreeNAS iSCSI. Some VMs didn't migrate as they should and got stuck in a migrate/shutdown state.

I shut everything down and started them again. That solved a few problems. One VM didn't find its HDD, and when I tried to restore from a snapshot it didn't work 100%, although it booted. But it's just a "lab" and the VM was a Pi-hole, so I just re-installed it. After that I installed another one for redundancy.

If you can't re-install it, try shutting it down through the CLI.

-

@fraggan @borzel

I created a brand new Windows Server 2016 VM (named "sweet") on a 50GB VDI.

Edit: I then installed the guest tools extracted from the XenServer ISO.

Initiated live migration from server1 where I built it, to server2 (pool master)...

2018-08-14 18:20:29,259 ERROR XenAdmin.Actions.AsyncAction [50] - Internal error: Xenops_interface.Does_not_exist(_)

2018-08-14 18:20:29,259 ERROR XenAdmin.Actions.AsyncAction [50] - at XenAdmin.Network.TaskPoller.poll() at XenAdmin.Network.TaskPoller.PollToCompletion() at XenAdmin.Actions.VMActions.VMMigrateAction.Run() at XenAdmin.Actions.AsyncAction.RunWorkerThread(Object o)

2018-08-14 18:20:29,261 WARN Audit [50] - Operation failure: VMMigrateAction: pool1: VM 2a80d18b-b1d6-d2c0-6874-e664b9ebdb67 (SWEET): Pool 86708278-0d7d-2bb1-5024-b04c39690e15 (pool1): Host c3970498-bc7f-4a49-8fe2-23e1e6dcb6bb (xs2): Migrating

The new VM appears powered off attached to the pool.

Initiated a power on of the VM on server2. Status stays Yellow, console of VM remains blank.

I initiated a restart of the toolstack of server2 (pool master).

The XCP-ng UI crashes with two popups to check the log.

2018-08-14 18:55:54,296 ERROR XenAdmin.Program [Main program thread] - Exception in Invoke System.NullReferenceException: Object reference not set to an instance of an object. <snip>

2018-08-14 18:55:54,297 ERROR XenAdmin.Program [19] - Exception in Invoke (ControlType=XenAdmin.MainWindow, MethodName=<RefreshTreeView>b__63_0) System.NullReferenceException: Object reference not set to an instance of an object. at System.Windows.Forms.Control.MarshaledInvoke(Control caller, Delegate method, Object[] args, Boolean synchronous) <snip>

2018-08-14 18:55:54,304 FATAL XenAdmin.Program [19] - Uncaught exception

After closing and reopening the UI, the VM I tried to start appeared powered off still.

I initiated a startup of the new VM on server2 (pool master) and it started.

It seems to me that for whatever reason the live migration fails, it leaves the target machine (server2 in this case) in an invalid state and you cannot start a VM on it again until the toolstack is restarted.

-

@borzel Thank you for the hours of hands-on assistance, Alex.

I've decided to go back to 7.4. Even with XCP-ng Center 7.5 it behaves as expected with these same VMs.

I hope this information is useful for somebody in the future.

-

Maybe this is something someone could test with XS 7.5.

It looks to me as if the PV drivers from XS 7.4 or before (because we never delivered Windows drivers ourselves) are causing problems on the newer Xen version in XCP-ng 7.5, so maybe the same issue is happening in XS 7.5 (without upgrading the drivers). And not upgrading the drivers is not recommended, as I recall from the Citrix upgrade instructions.

...it's just a guess based on my gut feeling

By the way, I just found the smiley bar:

-

I exported all my VMs to XVA. Wiped disks, installed anew from 7.4, updated to latest with yum, imported VMs.

Everything works as it should; live migrations, everything.

-

Yeah but 7.4 won't be maintained, so it would be better to try to see if it's a XS issue or not.

-

I had a live migration issue with windows too.

Secondary host running XCP-ng 7.5, primary XS 7.1. I live-migrated the Win 2K12 VM from the primary host to the secondary fine; it took 14 mins. I updated XS 7.1 to XCP-ng 7.5 and installed a new Dell BIOS on the primary host. The migration back hung at 99%. I left it for about 45 mins (three times as long as the original migration took) and noted it was still sending data between the two. I wonder if it was stuck in some form of loop.

The logs on the source host had this a lot:

Aug 16 13:55:05 xen52 xenopsd-xc: [debug|xen52|37 |Async.VM.migrate_send R:c6ab32165e47|xenops_server] TASK.signal 1305 = ["Pending",0.99]

Aug 16 13:55:05 xen52 xapi: [debug|xen52|389 |org.xen.xapi.xenops.classic events D:333a26fc4942|xenops] Processing event: ["Task","1305"]

Aug 16 13:55:05 xen52 xapi: [debug|xen52|389 |org.xen.xapi.xenops.classic events D:333a26fc4942|xenops] xenops event on Task 1305

Aug 16 13:55:05 xen52 xenopsd-xc: [debug|xen52|37 |Async.VM.migrate_send R:c6ab32165e47|xenops] VM = 66ebe30b-2bd2-ae59-c225-54a625655d52; domid = 2; progress = 99 / 100

I read a bit on Citrix forums and decided to shutdown the VM. Doing so from XCP-ng center didn't work, so I did it from within the VM.

Upon shutdown it seemed to sort itself out, but the VM came up as 'paused' on the primary node. This couldn't be resumed. A force restart from XCP-ng Center just seemed to hang. I tried to cancel the hard_reboot task with xe task-cancel and it didn't work, so I did an xe-toolstack-restart, and this reset the VM state back to shutdown. It then booted normally...

Now, the secondary host was still running an old BIOS with earlier microcode, so I'm not sure if it was related to that in any way.
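For anyone trying to tell a slow migration from a stuck one, here is a minimal Python sketch that counts how many times in a row xenopsd-xc has reported the same progress value. It assumes the log format matches the `progress = 99 / 100` lines quoted above; the function names `parse_progress` and `repeated_progress` are made up for this example and are not part of any XCP-ng tooling.

```python
import re

# Pattern for the "VM = <uuid>; domid = N; progress = X / Y" tail that
# xenopsd-xc writes during a migration. The format is assumed from the
# log excerpt in this thread, not from any official specification.
PROGRESS_RE = re.compile(
    r"VM = (?P<vm>[0-9a-f-]{36}); domid = (?P<domid>\d+); "
    r"progress = (?P<done>\d+) / (?P<total>\d+)"
)


def parse_progress(line):
    """Return (vm_uuid, done, total) for a progress line, or None otherwise."""
    match = PROGRESS_RE.search(line)
    if match is None:
        return None
    return match.group("vm"), int(match.group("done")), int(match.group("total"))


def repeated_progress(lines):
    """Describe the trailing run of identical progress reports.

    Returns (vm_uuid, done, total, count), where count is how many of the
    most recent progress reports carried exactly the same values, or None
    if no progress report was found at all.
    """
    reports = [p for p in map(parse_progress, lines) if p is not None]
    if not reports:
        return None
    last = reports[-1]
    count = 0
    for report in reversed(reports):
        if report != last:
            break
        count += 1
    vm, done, total = last
    return vm, done, total, count
```

Feeding it the relevant lines from the host's log would show how long the task has been sitting at the same value. What count is "too many" is a judgment call, since the reports are not guaranteed to arrive at a fixed rate.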

john

-

@john205 If you have these problems with Windows, can you please test your case with our testsigned drivers? Only if it's not production:

https://xcp-ng.org/forum/topic/309/test-xcp-ng-7-5-0-windows-pv-drivers-and-management-agent

-

@borzel unfortunately this is a production client server, so I can't do that easily. The two Linux VMs moved over fine. Most of the VMs we have are Linux based too. I might be able to do it with an internal one on a different host, but I need to see if it can be moved easily as it has multiple network interfaces.

I think the one with the issue I mentioned before had XS7.1 drivers installed.

-

ok, I've got one running XS 6.2 drivers I can test although it's got 100GB of disk so will take some time to do. Both ends are XCP-ng 7.5. I'll live migrate it and back with this driver first as a test and then try some others, see how it goes.

Edit: this VM is Win 2008R2, whereas the previous one which failed was 2K12.

-

Ok migration of W2K8R2 with 6.2 drivers from XCP-ng 7.5 to XCP-ng 7.5 failed with msg:

Storage_Interface.Does_not_exist(_)

It also crashed the VM.

I think this could be related to https://bugs.xenserver.org/browse/XSO-785 as there is only 10GB free on the source storage repository so I don't think I can actually do the migration because of that (it's recommended to have 2x space available to migrate a VM, something that becomes increasingly harder with large VMs!).
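The rule of thumb mentioned here can be written down as a quick pre-flight check. This is only a sketch: the factor of 2 is the recommendation as recalled in this post, not a value taken from the XCP-ng or XenServer documentation, and `has_migration_headroom` is a made-up helper name.

```python
GIB = 1024 ** 3  # bytes per GiB


def has_migration_headroom(vm_disk_bytes, sr_free_bytes, factor=2.0):
    """Return True if the SR has at least `factor` times the VM disk size free.

    `factor` defaults to the 2x rule of thumb from this thread; adjust it
    if your storage type behaves differently.
    """
    return sr_free_bytes >= factor * vm_disk_bytes


def shortfall_bytes(vm_disk_bytes, sr_free_bytes, factor=2.0):
    """Return how many bytes are missing to satisfy the rule (0 if none)."""
    missing = factor * vm_disk_bytes - sr_free_bytes
    return max(0, int(missing))
```

With the numbers from this test (a 100GB disk and about 10GB free on the source SR), `has_migration_headroom(100 * GIB, 10 * GIB)` is false and the shortfall comes out at 190 GiB.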

-

Ah damn! I think you spotted the issue then!

-

@olivierlambert said in XCP-ng 7.5.0 final is here:

Ah damn! I think you spotted the issue then!

I think that is different to the original problem as there was enough disk space in that instance, but this non-production VM I was using to test doesn't have the space on the source host.

New problem I've come across since doing that failed migration, the message-switch process is now eating approx half of the memory on the server:

[root@host log]# free -m

total used free shared buff/cache available

Mem: 1923 1601 27 12 295 221

Swap: 511 26 485

[root@host log]# ps axuww | grep message

root 1920 0.2 52.4 1094032 1033144 ? Ssl Aug16 2:36 /usr/sbin/message-switch --config /etc/message-switch.conf

root 32669 0.0 0.1 112656 2232 pts/24 S+ 12:43 0:00 grep --color=auto message

Anyone know how to restart that?

-

Restart the toolstack?

-

Hm.. I did a toolstack restart and it still had the high memory usage after, but it has now since dropped..

root 1920 0.2 2.0 108860 39516 ? Ssl Aug16 2:39 /usr/sbin/message-switch --config /etc/message-switch.conf

Perhaps it takes a bit of time to clear it out.
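To compare readings like the two above without eyeballing the columns, here is a small Python sketch that pulls %MEM and RSS out of a single `ps aux` line. It assumes the usual procps column order (USER PID %CPU %MEM VSZ RSS TTY STAT START TIME COMMAND) and that none of the first ten columns contains a space; `PsEntry` and `parse_ps_aux_line` are names invented for this example.

```python
from typing import NamedTuple


class PsEntry(NamedTuple):
    user: str
    pid: int
    mem_percent: float  # the %MEM column
    rss_kib: int        # the RSS column, which ps reports in KiB
    command: str


def parse_ps_aux_line(line):
    """Parse one data line of `ps aux` output (not the header line)."""
    # Split into the ten fixed columns plus the command, which may
    # itself contain spaces and therefore stays in one piece.
    fields = line.split(None, 10)
    if len(fields) < 11:
        raise ValueError("not a ps aux data line: %r" % line)
    user, pid, _cpu, mem, _vsz, rss, _tty, _stat, _start, _time, command = fields
    return PsEntry(user, int(pid), float(mem), int(rss), command.strip())
```

Run against the two message-switch lines in this thread, it gives 52.4% with an RSS of 1033144 KiB before, and 2.0% with 39516 KiB afterwards.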

-

@olivierlambert said in XCP-ng 7.5.0 final is here:

Yeah but 7.4 won't be maintained, so it would be better to try to see if it's a XS issue or not.

My bleeding edge attempt has been dulled. I'll try again later after seeing if the root cause is identified.

-

Hi

Has there been any update on this, please?

I just tried updating our pool, and after updating the master a migration of a VM (Ubuntu) failed and I was then unable to get the VM to start (Missing VDI error). The only solution was to roll the master back to 7.4, and everything is working again.

Thanks
