-

@dumarjo Well, for the moment we don't have a script to do that. But you can manually modify the LINSTOR database:

- You must find the host where the controller is running (for example with the command: linstor).

- Then execute this command on the host to add:

      linstor --controllers=<CONTROLLER_IP> node create --node-type combined $HOSTNAME

  where CONTROLLER_IP is given by the first step.

- If you want to add a new disk, you must create an LVM volume group (VG) with the name used in the other storage pools:

      linstor --controllers=<CONTROLLER_IP> storage-pool list

- Then you can create the storage pool:

      linstor --controllers=<CONTROLLER_IP> storage-pool create {lvm or lvmthin} $HOSTNAME <STORAGE_POOL> <POOL_NAME>

  (Check that the last two parameters are the same as in the DB.)

- The PBDs of the current SR must be modified to use the new host, and a PBD must be created on the new host.
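The steps above can be sketched as a small dry-run helper that only builds the command lines, so they can be reviewed before anything touches the LINSTOR database. This is a minimal sketch, not an official tool: the function name `add_node_commands` and its parameters are made up for illustration, and the commands are the ones quoted in the steps above.

```python
import shlex


def add_node_commands(controller_ip, hostname, pool_kind=None,
                      storage_pool=None, pool_name=None):
    """Return the LINSTOR CLI commands from the steps above as strings.

    Nothing is executed here: the caller reviews the commands and runs
    them by hand on the host to add.
    """
    base = ["linstor", f"--controllers={controller_ip}"]
    commands = [base + ["node", "create", "--node-type", "combined", hostname]]
    if pool_kind is not None:
        if pool_kind not in ("lvm", "lvmthin"):
            raise ValueError("pool_kind must be 'lvm' or 'lvmthin'")
        # List the existing pools first so the names can be compared by eye.
        commands.append(base + ["storage-pool", "list"])
        commands.append(base + ["storage-pool", "create", pool_kind,
                                hostname, storage_pool, pool_name])
    return [shlex.join(command) for command in commands]


if __name__ == "__main__":
    # Example values only: the address and STORAGE_POOL are placeholders.
    for command in add_node_commands("192.168.2.222", "xcp-ng-04", "lvmthin",
                                     "STORAGE_POOL", "linstor_group/thin_device"):
        print(command)
```

The PBD step is left out on purpose, since it goes through xe rather than the linstor client.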

It's a little bit complex. So I think I will add a basic script to configure a new host or to remove an existing one. I do not recommend the usage of these commands, except in the case of tests.

-

@ronan-a said in XOSTOR hyperconvergence preview:

- The PBDs of the current SR must be modified to use the new host, and a PBD must be created on the new host.

I'm relatively new to XCP-ng, and I'm a bit lost on this part. Here is more info on my current setup.

For now I have 3 hosts that are connected and working. I can install new VMs and start them on this SR.

The new host is already added to the pool (xcp-ng-04).

It's a little bit complex. So I think I will add a basic script to configure a new host or to remove an existing one. I do not recommend the usage of these commands, except in the case of tests.

Agreed. That would help a bit.

-

@ronan-a

Another issue is that I cannot use the SR on hosts that are not part of the group (linstor_group/thin_device). For now, my new xcp-ng-04 host has joined the pool, and I would like to connect the SR to it.

The first error I got was that the driver is not installed. So I installed all the packages used by the install script (without the disk initialization section) and tried to connect the SR to xcp-ng-04. Now I get these errors in the log file:

    [12:33 xcp-ng-01 ~]# tail -f /var/log/xensource.log | grep linstor
    Mar 2 12:34:10 xcp-ng-01 xapi: [debug||2306 HTTPS 192.168.2.94->:::80|host.call_plugin R:98517b08066b|audit] Host.call_plugin host = '8ac2930f-f826-4a18-8330-06153e3e4054 (xcp-ng-02)'; plugin = 'linstor-manager'; fn = 'hasControllerRunning' args = [ 'hidden' ]
    Mar 2 12:34:10 xcp-ng-01 xapi: [debug||2033 HTTPS 192.168.2.94->:::80|host.call_plugin R:e39e097fe8cb|audit] Host.call_plugin host = '81b06c7f-df55-4628-b27f-4e1e7850f900 (xcp-ng-03)'; plugin = 'linstor-manager'; fn = 'hasControllerRunning' args = [ 'hidden' ]
    Mar 2 12:34:11 xcp-ng-01 xapi: [debug||2306 HTTPS 192.168.2.94->:::80|host.call_plugin R:16602b9fb9d1|audit] Host.call_plugin host = '8ac2930f-f826-4a18-8330-06153e3e4054 (xcp-ng-02)'; plugin = 'linstor-manager'; fn = 'hasControllerRunning' args = [ 'hidden' ]
    Mar 2 12:34:11 xcp-ng-01 xapi: [debug||2033 HTTPS 192.168.2.94->:::80|host.call_plugin R:20c5d5ce11dc|audit] Host.call_plugin host = '81b06c7f-df55-4628-b27f-4e1e7850f900 (xcp-ng-03)'; plugin = 'linstor-manager'; fn = 'hasControllerRunning' args = [ 'hidden' ]
    .....
    .....
    Mar 2 12:34:43 xcp-ng-01 xapi: [error||6940 ||backtrace] Async.PBD.plug R:4bbaefcdb2cb failed with exception Server_error(SR_BACKEND_FAILURE_47, [ ; The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-03']]; ])
    Mar 2 12:34:43 xcp-ng-01 xapi: [error||6940 ||backtrace] Raised Server_error(SR_BACKEND_FAILURE_47, [ ; The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-03']]; ])

It looks like a controller needs to be started on all the hosts to be able to "connect" the new host?
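For anyone hitting the same thing, a filtered xensource.log like this one can be boiled down to two facts: which hosts were asked `hasControllerRunning`, and which controller URI the failing plug tried to reach. The snippet below is only a rough sketch under the assumption that the lines look exactly like the ones pasted here; the function name `summarize_xapi_log` is hypothetical.

```python
import re

# Matches the linstor-manager plugin calls as they appear in the log above.
PLUGIN_CALL = re.compile(
    r"Host\.call_plugin host = '[0-9a-f-]+ \((?P<host>[^)]+)\)'; "
    r"plugin = 'linstor-manager'; fn = '(?P<fn>\w+)'"
)
CONTROLLER_URI = re.compile(r"linstor://[\w.-]+")


def summarize_xapi_log(lines):
    """Return (hosts probed per plugin function, controller URIs named in errors)."""
    probed = {}
    unreachable = set()
    for line in lines:
        call = PLUGIN_CALL.search(line)
        if call:
            probed.setdefault(call["fn"], set()).add(call["host"])
        if "SR_BACKEND_FAILURE_47" in line:
            unreachable.update(CONTROLLER_URI.findall(line))
    return probed, unreachable


if __name__ == "__main__":
    import sys
    # Usage: tail -n 500 /var/log/xensource.log | python3 this_script.py
    print(summarize_xapi_log(sys.stdin))
```

On the excerpt above this would report that xcp-ng-02 and xcp-ng-03 were probed and that the plug failed while trying `linstor://xcp-ng-03`.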

As I said, this is a new test to find out whether an XCP-ng host that is not part of the VG can use this SR.

I hope my intention is clear. I just clicked on the "disconnected" button in the UI.

Regards,

-

Hi,

Is VM HA supposed to work if the VM is hosted on an XOSTOR-based SR? I tried to get it to work, but the VM never restarts if I shut down the host where the VM is running.

Regards,

-

Pinging @ronan-a

-

@dumarjo Regarding your error during the attach call, could you send me the SMlog please?

-

@ronan-a

Here is some more info:

    Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/sr
    Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: acquired /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/sr
    Mar 10 17:05:22 xcp-ng-04 SM: [28002] sr_attach {'sr_uuid': 'bef191f3-e976-94ec-6bb7-d87529a72dbb', 'subtask_of': 'DummyRef:|79eb31a6-806c-4883-8e8d-de59cde66469|SR.at$
    Mar 10 17:05:22 xcp-ng-04 SMGC: [28002] === SR bef191f3-e976-94ec-6bb7-d87529a72dbb: abort ===
    Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
    Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active
    Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: tried lock /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active, acquired: True (exists: True)
    Mar 10 17:05:22 xcp-ng-04 SMGC: [28002] abort: releasing the process lock
    Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: released /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active
    Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: acquired /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
    Mar 10 17:05:22 xcp-ng-04 SM: [28002] RESET for SR bef191f3-e976-94ec-6bb7-d87529a72dbb (master: False)
    Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: released /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
    Mar 10 17:05:23 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 0
    Mar 10 17:05:27 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 1
    Mar 10 17:05:30 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 2
    Mar 10 17:05:33 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 3
    Mar 10 17:05:37 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 4
    Mar 10 17:05:40 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 5
    Mar 10 17:05:43 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 6
    Mar 10 17:05:47 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 7
    Mar 10 17:05:50 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 8
    Mar 10 17:05:53 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 9
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] Raising exception [47, The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor:/$
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] lock: released /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/sr
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] ***** generic exception: sr_attach: EXCEPTION <class 'SR.SROSError'>, The SR is not available [opterr=Error: Unable to connect to$
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 110, in run
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] return self._run_locked(sr)
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 159, in _run_locked
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] rv = self._run(sr, target)
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 352, in _run
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] return sr.attach(sr_uuid)
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/LinstorSR", line 489, in wrap
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] return load(self, *args, **kwargs)
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/LinstorSR", line 415, in load
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] raise xs_errors.XenError('SRUnavailable', opterr=str(e))
    Mar 10 17:05:54 xcp-ng-04 SM: [28002]
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] ***** LINSTOR resources on XCP-ng: EXCEPTION <class 'SR.SROSError'>, The SR is not available [opterr=Error: Unable to connect to $
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 378, in run
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] ret = cmd.run(sr)
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 110, in run
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] return self._run_locked(sr)
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 159, in _run_locked
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] rv = self._run(sr, target)
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 352, in _run
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] return sr.attach(sr_uuid)
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/LinstorSR", line 489, in wrap
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] return load(self, *args, **kwargs)
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/LinstorSR", line 415, in load
    Mar 10 17:05:54 xcp-ng-04 SM: [28002] raise xs_errors.XenError('SRUnavailable', opterr=str(e))
    Mar 10 17:05:54 xcp-ng-04 SM: [28002]

This is what I have in the log file. If you need more info, let me know.
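Since the interesting part of this SMlog is the retry loop, here is a small sketch that condenses the "Got exception ... Retry number" lines into a count, the controller URIs involved, and the time spent retrying. It assumes the line layout shown in this log and that all retries happen on the same day; `summarize_retries` is a name made up for this example.

```python
import re

# Matches lines such as:
# Mar 10 17:05:23 xcp-ng-04 SM: [28002] Got exception: ... ['linstor://xcp-ng-02']. Retry number: 0
RETRY = re.compile(
    r"(?P<time>\d{2}:\d{2}:\d{2}) \S+ SM: \[\d+\] Got exception: .*?"
    r"\[(?P<uris>[^\]]*)\]\. Retry number: (?P<attempt>\d+)"
)


def _to_seconds(hms):
    hours, minutes, seconds = (int(part) for part in hms.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def summarize_retries(lines):
    """Summarize the connection retries found in SMlog lines."""
    times = []
    controllers = set()
    for line in lines:
        found = RETRY.search(line)
        if not found:
            continue
        times.append(_to_seconds(found["time"]))
        controllers.update(uri.strip(" '\"") for uri in found["uris"].split(","))
    return {
        "attempts": len(times),
        "controllers": sorted(controllers),
        # Assumes no midnight rollover between the first and last retry.
        "elapsed_seconds": times[-1] - times[0] if times else 0,
    }
```

For the log above this gives ten attempts against `linstor://xcp-ng-02` spread over 30 seconds (17:05:23 to 17:05:53), after which the driver gives up with error 47.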

-

Hi.

I have a couple of questions I'm hoping you might be able to answer. I'm currently using Linstor with an encrypted and compressed ZFS zpool. This solves a lot of problems for me, including high availability, data redundancy and disk-wide encryption. Looking at the install script provided, it simply installs the software and sets up the LVM group.

Does this mean the sr-create command is responsible for setting up the Linstor controllers and their respective storage pools? If so, presumably at this point it only supports LVM, as the storage-pool creation command will specify LVM?

If it's not possible at this time to use ZFS backing devices with the SR, are there any future plans to do so? As the Linstor controllers are responsible for managing the storage pools, I would imagine it wouldn't be too difficult to achieve. Could it be as simple as changing the creation command to zfs, i.e.:

    linstor storage-pool create zfs node data srtank

Cheers.

-

@dumarjo Well, the new node (xcp-ng-04) is missing in the LINSTOR database. You must add it.

-

@Huxy Yes, for the moment we only support thin and thick LVM. We also want to support ZFS for several reasons: ZFS snapshots and lifting the restriction of the VHD format (maximum 2TB of data). Unfortunately, we don't have a schedule for this yet. It will probably be done in SMAPIv3, not in SMAPIv1.

-

@ronan-a no worries. Thanks for the quick response. I'll consider moving to LVM or staying with Proxmox for the time being.

-

Is my understanding correct? An XCP-ng host cannot use a shared SR (based on LINSTOR) if it's not one of the LINSTOR nodes?

How can this new host become one of the nodes if I don't want to add an HDD/SSD to it? Can it be done?

Again, I want to know the limitations before considering this promising new technology!

-

A XCP-NG host cannot use a shared SR (based on linstor) if it's not part of the linstor nodes ?

Yes, that's required in order to use diskless devices.

How this new host can be part of the nodes if I don't want to add HDD/SSD to this new host ? can it be done ?

Supporting a node without storage requires a few small changes, but it's feasible with a modification to the driver. It should be supported, because diskless volumes are a DRBD feature that lets a node access data over the local network.

-

@ronan-a

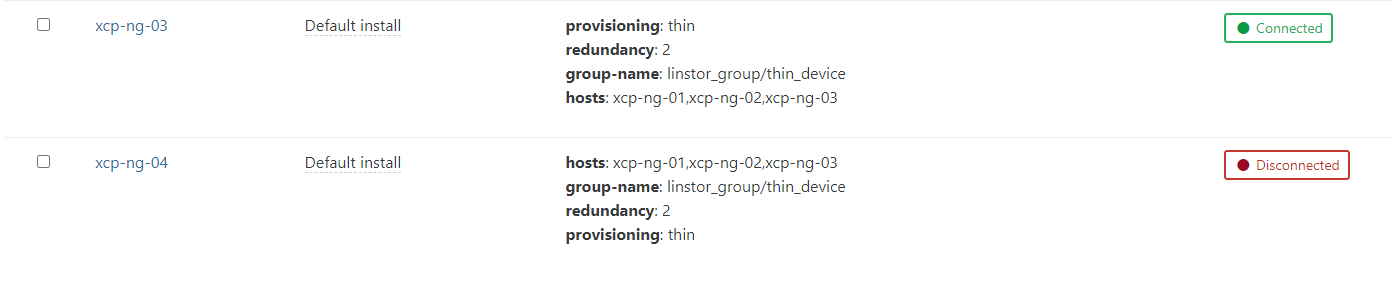

Hi,

I did some experiments and cannot find the missing piece of the puzzle to add my xcp-ng-04 host.

Since my last status update, my new xcp-ng-04 host is part of the pool and I have installed all the LINSTOR tools on it.

I checked the satellite and controller services on xcp-ng-04 and they are not running. I have no idea whether I need to start something manually or not.

Here is my SMlog on a freshly booted xcp-ng-04:

    Mar 22 11:54:14 xcp-ng-04 SM: [2685] sr_attach {'sr_uuid': '38c2baa3-bc8f-fbc5-ef5a-42461db92c51', 'subtask_of': 'DummyRef:|b4ea936f-80bb-4a6b-98b9-66a95f86b006|SR.attach', 'args': [],$
    Mar 22 11:54:14 xcp-ng-04 SMGC: [2685] === SR 38c2baa3-bc8f-fbc5-ef5a-42461db92c51: abort ===
    Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: opening lock file /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/running
    Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: opening lock file /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/gc_active
    Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: tried lock /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/gc_active, acquired: True (exists: True)
    Mar 22 11:54:14 xcp-ng-04 SMGC: [2685] abort: releasing the process lock
    Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: released /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/gc_active
    Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: opening lock file /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/sr
    Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: acquired /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/running
    Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: acquired /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/sr
    Mar 22 11:54:14 xcp-ng-04 SM: [2685] RESET for SR 38c2baa3-bc8f-fbc5-ef5a-42461db92c51 (master: True)
    Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: released /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/sr
    Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: released /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/running
    Mar 22 11:54:14 xcp-ng-04 SM: [2685] set_dirty 'OpaqueRef:ea31ae92-4207-4c8b-8db6-da901c6a00a8' succeeded
    Mar 22 11:54:14 xcp-ng-04 SM: [2709] sr_update {'sr_uuid': '38c2baa3-bc8f-fbc5-ef5a-42461db92c51', 'subtask_of': 'DummyRef:|ae4505d5-b9fe-4f39-92dc-67ab9d0b579b|SR.stat', 'args': [], '$
    Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/sr
    Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: acquired /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/sr
    Mar 22 11:54:15 xcp-ng-04 SM: [2725] sr_attach {'sr_uuid': 'bef191f3-e976-94ec-6bb7-d87529a72dbb', 'subtask_of': 'DummyRef:|ae624d96-e91a-4a12-afd6-35593be4ce51|SR.attach', 'args': [],$
    Mar 22 11:54:15 xcp-ng-04 SMGC: [2725] === SR bef191f3-e976-94ec-6bb7-d87529a72dbb: abort ===
    Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
    Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active
    Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: tried lock /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active, acquired: True (exists: True)
    Mar 22 11:54:15 xcp-ng-04 SMGC: [2725] abort: releasing the process lock
    Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: released /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active
    Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: acquired /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
    Mar 22 11:54:15 xcp-ng-04 SM: [2725] RESET for SR bef191f3-e976-94ec-6bb7-d87529a72dbb (master: False)
    Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: released /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
    Mar 22 11:54:16 xcp-ng-04 SM: [2725] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 0
    Mar 22 11:54:19 xcp-ng-04 SM: [2725] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 1

On xcp-ng-02 (linstor controller):

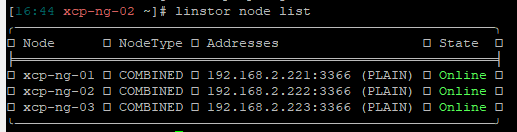

    [11:42 xcp-ng-02 ~]# linstor node list
    ╭────────────────────────────────────────────────────────────╮
    ┊ Node      ┊ NodeType ┊ Addresses                  ┊ State  ┊
    ╞════════════════════════════════════════════════════════════╡
    ┊ xcp-ng-01 ┊ COMBINED ┊ 192.168.2.221:3366 (PLAIN) ┊ Online ┊
    ┊ xcp-ng-02 ┊ COMBINED ┊ 192.168.2.222:3366 (PLAIN) ┊ Online ┊
    ┊ xcp-ng-03 ┊ COMBINED ┊ 192.168.2.223:3366 (PLAIN) ┊ Online ┊
    ╰────────────────────────────────────────────────────────────╯

I listed all the LINSTOR PBDs on the hosts:
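The node list is the quickest way to see that xcp-ng-04 is not registered in LINSTOR yet. As a rough sketch, the box-drawn table can be parsed and compared against the hosts expected in the pool; this assumes the table layout pasted above, and `parse_node_table` and `missing_nodes` are names invented for the example.

```python
def parse_node_table(text):
    """Parse the rows of a box-drawn `linstor node list` table into dicts."""
    rows = []
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("┊"):
            continue  # skip borders, shell prompts and blank lines
        rows.append([cell.strip() for cell in line.strip("┊").split("┊")])
    if not rows:
        return []
    header, *body = rows
    return [dict(zip(header, row)) for row in body]


def missing_nodes(text, expected):
    """Return the expected host names that do not appear in the Node column."""
    known = {row["Node"] for row in parse_node_table(text)}
    return sorted(set(expected) - known)
```

Called with the table above and the four pool members, `missing_nodes` returns only xcp-ng-04, which matches the earlier remark that the new node is missing from the LINSTOR database.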

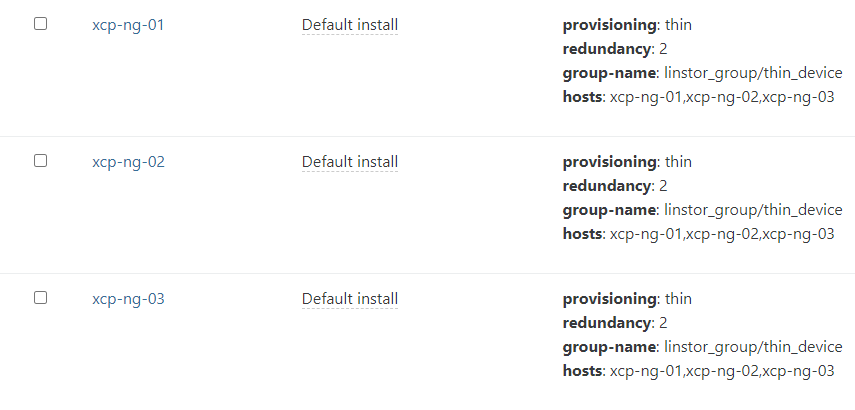

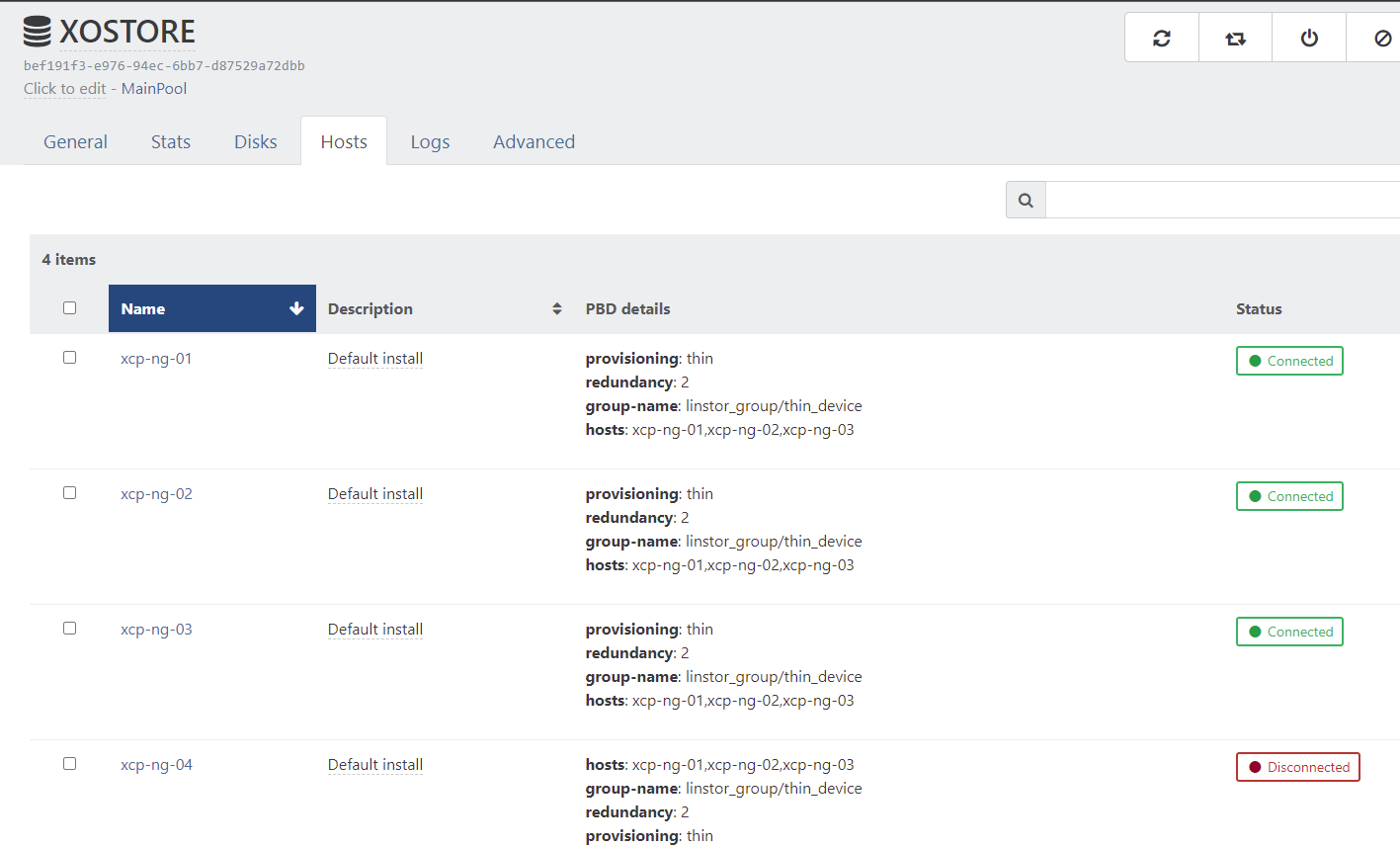

    [11:49 xcp-ng-01 ~]# xe pbd-list | grep linstor -3
    uuid ( RO)                  : e75fdc51-29a4-aa57-bc44-459f80a0d230
                 host-uuid ( RO): ad95c6ca-612d-42af-8909-d4e9dc7645bb
                   sr-uuid ( RO): bef191f3-e976-94ec-6bb7-d87529a72dbb
             device-config (MRO): provisioning: thin; redundancy: 2; group-name: linstor_group/thin_device; hosts: xcp-ng-01,xcp-ng-02,xcp-ng-03
        currently-attached ( RO): true
    --
    uuid ( RO)                  : 063db650-55b1-a3e0-9d9a-e94ce938988d
                 host-uuid ( RO): 5747f145-0dc2-4987-a6b9-b6c5a7ed0505
                   sr-uuid ( RO): bef191f3-e976-94ec-6bb7-d87529a72dbb
             device-config (MRO): hosts: xcp-ng-01,xcp-ng-02,xcp-ng-03; group-name: linstor_group/thin_device; redundancy: 2; provisioning: thin
        currently-attached ( RO): false
    --
    uuid ( RO)                  : a1f876b1-0568-71ac-9ffb-720e626cb4ab
                 host-uuid ( RO): e286a04a-69bf-4d59-a0c8-e7338e8c1831
                   sr-uuid ( RO): bef191f3-e976-94ec-6bb7-d87529a72dbb
             device-config (MRO): provisioning: thin; redundancy: 2; group-name: linstor_group/thin_device; hosts: xcp-ng-01,xcp-ng-02,xcp-ng-03
        currently-attached ( RO): true
    uuid ( RO)                  : 08564ab5-a518-f709-8527-f592c2592d14
                 host-uuid ( RO): eb48f91d-9916-4542-9cf4-4a718abdc451
                   sr-uuid ( RO): bef191f3-e976-94ec-6bb7-d87529a72dbb
             device-config (MRO): provisioning: thin; redundancy: 2; group-name: linstor_group/thin_device; hosts: xcp-ng-01,xcp-ng-02,xcp-ng-03
        currently-attached ( RO): true

After that, I removed the PBDs from all hosts so that I could recreate them on all hosts with the new xcp-ng-04 included:
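To spot the odd one out in a listing like this, the `xe pbd-list` text can be turned into records and filtered on `currently-attached`. This is a sketch that assumes the "key ( RO): value" layout shown above; `parse_pbd_list` and `detached_pbds` are hypothetical helper names.

```python
import re

# One "key ( RO): value" or "key (MRO): value" field per line.
FIELD = re.compile(r"^\s*(?P<key>[\w-]+) \(\s*\w+\)\s*: (?P<value>.*)$")


def parse_pbd_list(text):
    """Turn `xe pbd-list` output into a list of dicts, one per PBD."""
    records = []
    for line in text.splitlines():
        field = FIELD.match(line)
        if not field:
            continue  # prompts, blank lines and grep "--" separators
        key, value = field["key"], field["value"].strip()
        if key == "uuid":
            records.append({})  # a uuid line starts a new PBD record
        if not records:
            continue  # field seen before any uuid (truncated grep context)
        if key == "device-config":
            value = dict(item.split(": ", 1) for item in value.split("; ") if item)
        records[-1][key] = value
    return records


def detached_pbds(records):
    """Return the UUIDs of PBDs that are not currently attached."""
    return [r["uuid"] for r in records if r.get("currently-attached") == "false"]
```

On the output above, the only detached PBD is 063db650-55b1-a3e0-9d9a-e94ce938988d, and every `device-config` still lists only xcp-ng-01 to xcp-ng-03 under `hosts`.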

    xe pbd-create host-uuid=e286a04a-69bf-4d59-a0c8-e7338e8c1831 sr-uuid=bef191f3-e976-94ec-6bb7-d87529a72dbb device-config:provisioning=thin device-config:redundancy=2 device-config:group-name=linstor_group/thin_device device-config:hosts=xcp-ng-01,xcp-ng-02,xcp-ng-03,xcp-ng-04
    xe pbd-create host-uuid=ad95c6ca-612d-42af-8909-d4e9dc7645bb sr-uuid=bef191f3-e976-94ec-6bb7-d87529a72dbb device-config:provisioning=thin device-config:redundancy=2 device-config:group-name=linstor_group/thin_device device-config:hosts=xcp-ng-01,xcp-ng-02,xcp-ng-03,xcp-ng-04
    xe pbd-create host-uuid=eb48f91d-9916-4542-9cf4-4a718abdc451 sr-uuid=bef191f3-e976-94ec-6bb7-d87529a72dbb device-config:provisioning=thin device-config:redundancy=2 device-config:group-name=linstor_group/thin_device device-config:hosts=xcp-ng-01,xcp-ng-02,xcp-ng-03,xcp-ng-04
    xe pbd-create host-uuid=5747f145-0dc2-4987-a6b9-b6c5a7ed0505 sr-uuid=bef191f3-e976-94ec-6bb7-d87529a72dbb device-config:provisioning=thin device-config:redundancy=2 device-config:group-name=linstor_group/thin_device device-config:hosts=xcp-ng-01,xcp-ng-02,xcp-ng-03,xcp-ng-04

All succeeded, with no errors.
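Typing four nearly identical commands by hand is error-prone, so here is a sketch that generates them from one device-config. It only prints strings in the same form as the commands above and runs nothing; `pbd_create_commands` is a hypothetical helper, not part of xe.

```python
import shlex


def pbd_create_commands(sr_uuid, host_uuids, device_config):
    """Build one `xe pbd-create` command line per host, sharing one device-config."""
    config_args = [f"device-config:{key}={value}"
                   for key, value in device_config.items()]
    return [
        shlex.join(["xe", "pbd-create", f"host-uuid={host}",
                    f"sr-uuid={sr_uuid}", *config_args])
        for host in host_uuids
    ]


if __name__ == "__main__":
    # Values copied from the commands in this post.
    config = {
        "provisioning": "thin",
        "redundancy": "2",
        "group-name": "linstor_group/thin_device",
        "hosts": "xcp-ng-01,xcp-ng-02,xcp-ng-03,xcp-ng-04",
    }
    hosts = [
        "e286a04a-69bf-4d59-a0c8-e7338e8c1831",
        "ad95c6ca-612d-42af-8909-d4e9dc7645bb",
        "eb48f91d-9916-4542-9cf4-4a718abdc451",
        "5747f145-0dc2-4987-a6b9-b6c5a7ed0505",
    ]
    for command in pbd_create_commands("bef191f3-e976-94ec-6bb7-d87529a72dbb",
                                       hosts, config):
        print(command)
```

Keeping the device-config in a single dict guarantees that every PBD gets the same `hosts` list, which is the part that changes when a host is added.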

After that, I tried to connect all the PBDs to the SR:

    [11:13 xcp-ng-01 ~]# xe pbd-plug uuid=7d588c37-a152-9666-175e-91b2d48c150f
    [11:13 xcp-ng-01 ~]# xe pbd-plug uuid=99f76235-1b1a-e5fa-bb19-3883737fcc6d
    [11:13 xcp-ng-01 ~]# xe pbd-plug uuid=df727345-f475-b929-ecc1-b506f0053361
    [11:13 xcp-ng-01 ~]# xe pbd-plug uuid=8b4ddc6f-e25a-1942-a435-345ccc93551a
    Error code: SR_BACKEND_FAILURE_47
    Error parameters: , The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']],

From there, I'm a bit lost...

1- Do I need to add xcp-ng-04 as a LINSTOR satellite before creating all the PBDs?

2- Should I start any of the services on xcp-ng-04 before doing all this?

Regards

-

Any input appreciated

Regards

-

Question for @ronan-a

-

@dumarjo Could you open a ticket with a tunnel please? I can take a look. Also: I started a script this week to simplify the management of LINSTOR with add/remove commands.

-

Hi,

@ronan-a said in XOSTOR hyperconvergence preview:

@dumarjo Could you open a ticket with a tunnel please? I can take a look. Also: I started a script this week to simplify the management of LINSTOR with add/remove commands.

Ticket opened with Vates.

-

So after analysis:

- The iptables rules of the new host must be updated (when a host is added or removed) so that the corresponding new node can connect to the LINSTOR controller. Otherwise, we can get an "Auto-evict" error in the database.

- PBDs must/can be updated after the creation of the new node.
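A quick way to check the first point from the new host is a plain TCP connection test toward the controller. This is a minimal sketch using only the standard library; the port to test is an assumption to verify on your setup (the node list earlier in the thread shows satellites on 3366, and the controller port is not shown anywhere in this thread).

```python
import socket


def can_reach(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False


if __name__ == "__main__":
    import sys
    # Usage: python3 this_script.py <host> <port>
    target_host, target_port = sys.argv[1], int(sys.argv[2])
    print(can_reach(target_host, target_port))
```

A False result does not say whether the firewall or name resolution is at fault, so it is worth trying both the hostname and the IP address.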

Thank you for the feedback. It's helpful to see the potential issues when automating LINSTOR management.

-

To be able to recreate and reconnect all the PBDs, I modified the /etc/hosts file to manually add each host with its IP. I know that @ronan-a is working on fixing the hostname addressing in the driver, but at least I can continue to test the scalability.

Looks promising!
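For reference, the /etc/hosts workaround can be sketched as a function that appends only the entries that are missing, so running it twice changes nothing. It works on text rather than on the file itself; `add_host_entries` is a made-up name, and the name-to-IP mapping has to come from your own pool (the addresses in the test come from the node list earlier in the thread).

```python
def add_host_entries(hosts_text, nodes):
    """Return hosts-file text with an "IP name" line appended for each missing name.

    `nodes` maps host names to IP addresses. Names that already appear in
    the file (as a name or an alias) are left untouched.
    """
    known = set()
    for line in hosts_text.splitlines():
        fields = line.split("#", 1)[0].split()  # drop comments
        known.update(fields[1:])  # names and aliases follow the address
    missing = [f"{ip} {name}" for name, ip in nodes.items() if name not in known]
    if not missing:
        return hosts_text
    if hosts_text and not hosts_text.endswith("\n"):
        hosts_text += "\n"
    return hosts_text + "\n".join(missing) + "\n"
```

The same mapping has to be applied on every host of the pool, since each one resolves the others by name.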