nVidia Tesla P4 for vgpu and Plex encoding

-

Yes, there are some parts we can't redistribute publicly, but they are present in the Citrix Hypervisor (CH) ISO.

-

I've been digging around vGPU for days.

I found information that you don't need any additional binaries to make it work. All you need is Nvidia vGPU drivers.

So I tried installing different versions of Nvidia vGPU drivers for XenServer on xcp-ng.

There were no errors during installation.

After installing the drivers I was able to see all vGPU types in XenCenter, and nvidia-smi gives me the correct output.

I also checked xensource.log and this is what I found.

Dec 1 00:53:25 XEN60 xapi: [debug||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|xapi_gpumon] assert_vgpu_pgpu_are_compatible: vGPU/pGPU are compatible by default OpaqueRef:f8b54a1f-8f3c-4ba5-a475-980b4e2af511/OpaqueRef:7e239728-ac74-4323-b8d0-7c40fa318411
Dec 1 00:53:25 XEN60 xapi: [debug||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|xapi_gpumon] assert_vgpu_pgpu_are_compatible: vGPU/pGPU are compatible by default OpaqueRef:f8b54a1f-8f3c-4ba5-a475-980b4e2af511/OpaqueRef:6448a86a-ee72-492a-b700-83d1645f0c60
Dec 1 00:53:25 XEN60 xapi: [debug||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|xapi_gpumon] assert_vgpu_pgpu_are_compatible: vGPU/pGPU are compatible by default OpaqueRef:f8b54a1f-8f3c-4ba5-a475-980b4e2af511/OpaqueRef:7e239728-ac74-4323-b8d0-7c40fa318411
Dec 1 00:53:25 XEN60 xapi: [debug||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|xapi_gpumon] assert_vgpu_pgpu_are_compatible: vGPU/pGPU are compatible by default OpaqueRef:f8b54a1f-8f3c-4ba5-a475-980b4e2af511/OpaqueRef:6448a86a-ee72-492a-b700-83d1645f0c60
Dec 1 00:53:25 XEN60 xapi: [debug||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|vgpuops] vGPUs allocated to VM (OpaqueRef:7051bb05-712e-4dc3-bf6b-fef76c0980ee) are: OpaqueRef:f8b54a1f-8f3c-4ba5-a475-980b4e2af511
Dec 1 00:53:25 XEN60 xapi: [debug||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|vgpuops] Creating virtual VGPUs
Dec 1 00:53:25 XEN60 xapi: [debug||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|xapi_gpumon] assert_vgpu_pgpu_are_compatible: vGPU/pGPU are compatible by default OpaqueRef:f8b54a1f-8f3c-4ba5-a475-980b4e2af511/OpaqueRef:7e239728-ac74-4323-b8d0-7c40fa318411
Dec 1 00:53:25 XEN60 xapi: [debug||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|xapi_gpumon] assert_vgpu_pgpu_are_compatible: vGPU/pGPU are compatible by default OpaqueRef:f8b54a1f-8f3c-4ba5-a475-980b4e2af511/OpaqueRef:6448a86a-ee72-492a-b700-83d1645f0c60
Dec 1 00:53:25 XEN60 xapi: [ info||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|xenops] xenops: VM.import_metadata {"vusbs":[],"vgpus":[{"implementation":["Nvidia",{"extra_args":"","uuid":"a50159e3-a755-0b90-19ea-d5697b007834","type_id":"224","virtual_pci_address":{"fn":0,"dev":11,"bus":0,"domain":0}}],"physical_pci_address":{"fn":0,"dev":0,"bus":193,"domain":0},"position":0,"id":["f565e3ab-2fc9-2d00-a184-e7f28ee91915","0"]}],"pcis":[],"vifs":[],"vbds":[{"persistent":true,"extra_private_keys":{},"extra_backend_keys":{"polling-duration":"1000","polling-idle-threshold":"50"},"unpluggable":true,"ty":"CDROM","mode":"ReadOnly","position":["Ide",3,0],"id":["f565e3ab-2fc9-2d00-a184-e7f28ee91915","xvdd"]},{"persistent":true,"extra_private_keys":{},"extra_backend_keys":{"polling-duration":"1000","polling-idle-threshold":"50"},"unpluggable":true,"ty":"Disk","backend":["VDI","b2d22c67-abe8-d411-ba19-b5aa046407e9/b3fc6e5b-7d44-4117-b614-a9c086be0cf7"],"mode":"ReadWrite","position":["Ide",0,0],"id":["f565e3ab-2fc9-2d00-a184-e7f28ee91915","xvda"]}],"vm":{"generation_id":"4573398145280631014:2680438277400141975","has_vendor_device":true,"pci_power_mgmt":false,"pci_msitranslate":false,"on_reboot":["Start"],"on_shutdown":["Shutdown"],"on_crash":["Start"],"scheduler_params":{"affinity":[],"priority":[256,0]},"vcpus":2,"vcpu_max":2,"memory_dynamic_min":2147483648,"memory_dynamic_max":2147483648,"memory_static_max":2147483648,"suppress_spurious_page_faults":false,"ty":["HVM",{"firmware":["Uefi",{"backend":"xapidb","on_boot":"Persist"}],"qemu_stubdom":false,"qemu_disk_cmdline":false,"boot_order":"cd","pci_passthrough":false,"pci_emulations":[],"serial":"pty","acpi":true,"video":"Vgpu","video_mib":16,"timeoffset":"0","shadow_multiplier":1.0,"hap":true}],"bios_strings":{"bios-vendor":"Xen","bios-version":"","system-manufacturer":"Xen","system-product-name":"HVM domU","system-version":"","system-serial-number":"","baseboard-manufacturer":"","baseboard-product-name":"","baseboard-version":"","baseboard-serial-number":"","baseboard-asset-tag":"","baseboard-location-in-chassis":"","enclosure-asset-tag":"","hp-rombios":"","oem-1":"Xen","oem-2":"MS_VM_CERT/SHA1/bdbeb6e0a816d43fa6d3fe8aaef04c2bad9d3e3d"},"platformdata":{"featureset":"178bfbff-f6d83203-2e500800-040001f7-0000000f-219c01a9-00400004-00000000-010cd005-00000000-00000000-00000000-00000000-00000000-00000000-00000000-00000000-00000000","timeoffset":"0","usb":"true","usb_tablet":"true","device-model":"qemu-upstream-uefi","videoram":"8","hpet":"true","secureboot":"false","viridian_apic_assist":"true","apic":"true","device_id":"0002","cores-per-socket":"2","viridian_crash_ctl":"true","pae":"true","vga":"std","nx":"true","viridian_time_ref_count":"true","viridian_stimer":"true","viridian":"true","acpi":"1","viridian_reference_tsc":"true"},"xsdata":{"vm-data/mmio-hole-size":"268435456","vm-data":""},"ssidref":0,"name":"Windows Server 2022 (64-bit) (1)","id":"f565e3ab-2fc9-2d00-a184-e7f28ee91915"}}
Dec 1 00:53:25 XEN60 xenopsd-xc: [debug||140 |Async.VM.start R:021195d15a31|xenops_utils] TypedTable: Writing VM/f565e3ab-2fc9-2d00-a184-e7f28ee91915/vgpu.0
Dec 1 00:53:25 XEN60 xenopsd-xc: [debug||36 |Async.VM.start R:021195d15a31|xenguesthelper] connect: args = [ -mode hvm_build -image /usr/libexec/xen/boot/hvmloader -vgpu -domid 2 -store_port 3 -store_domid 0 -console_port 4 -console_domid 0 -mem_max_mib 2032 -mem_start_mib 2032 ]
Dec 1 00:53:26 XEN60 xenopsd-xc: [debug||36 ||xenops] Device.Dm.start domid=2 args: [-vgpu -videoram 16 -vnc unix:/var/run/xen/vnc-2,lock-key-sync=off -acpi -monitor null -pidfile /var/run/xen/qemu-dm-2.pid -xen-domid 2 -m size=2032 -boot order=cd -usb -device usb-tablet,port=2 -smp 2,maxcpus=2 -serial pty -display none -nodefaults -trace enable=xen_platform_log -sandbox on,obsolete=deny,elevateprivileges=allow,spawn=deny,resourcecontrol=deny -S -parallel null -qmp unix:/var/run/xen/qmp-libxl-2,server,nowait -qmp unix:/var/run/xen/qmp-event-2,server,nowait -device xen-platform,addr=3,device-id=0x0002 -drive file=,if=none,id=ide1-cd1,read-only=on -device ide-cd,drive=ide1-cd1,bus=ide.1,unit=1 -device nvme,serial=nvme0,id=nvme0,addr=7 -drive id=disk0,if=none,file=/dev/sm/backend/b2d22c67-abe8-d411-ba19-b5aa046407e9/b3fc6e5b-7d44-4117-b614-a9c086be0cf7,media=disk,auto-read-only=off,format=raw -device nvme-ns,drive=disk0,bus=nvme0,nsid=1 -device xen-pvdevice,device-id=0xc000,addr=6 -net none]
Dec 1 00:53:26 XEN60 xenopsd-xc: [debug||36 ||xenops] Starting daemon: /usr/bin/vgpu with args [--domain=2; --vcpus=2; --suspend=/var/lib/xen/demu-save.2; --device=0000:c1:00.0,224,0000:00:0b.0,a50159e3-a755-0b90-19ea-d5697b007834]
Dec 1 00:53:26 XEN60 xenopsd-xc: [debug||36 ||xenops] vgpu: should be running in the background (stdout -> syslog); (fd,pid) = (FEFork (35,8823))
Dec 1 00:53:26 XEN60 xenopsd-xc: [debug||36 ||xenops] Daemon started: vgpu-2
Dec 1 00:53:56 XEN60 xenopsd-xc: [error||36 ||xenops] vgpu: unexpected exit with code: 127
Dec 1 00:53:56 XEN60 xenopsd-xc: [ info||36 ||xenops_server] Caught Xenops_interface.Xenopsd_error([S(Failed_to_start_emulator);[S(f565e3ab-2fc9-2d00-a184-e7f28ee91915);S(vgpu);S(Daemon exited unexpectedly)]]) executing ["VM_start",["f565e3ab-2fc9-2d00-a184-e7f28ee91915",false]]: triggering cleanup actions
Dec 1 00:53:58 XEN60 xenopsd-xc: [error||36 ||task_server] Task 42 failed; Xenops_interface.Xenopsd_error([S(Failed_to_start_emulator);[S(f565e3ab-2fc9-2d00-a184-e7f28ee91915);S(vgpu);S(Daemon exited unexpectedly)]])
Dec 1 00:53:58 XEN60 xenopsd-xc: [debug||36 ||xenops_server] TASK.signal 42 = ["Failed",["Failed_to_start_emulator",["f565e3ab-2fc9-2d00-a184-e7f28ee91915","vgpu","Daemon exited unexpectedly"]]]
Dec 1 00:53:58 XEN60 xapi: [ info||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|xapi_network] Caught Xenops_interface.Xenopsd_error([S(Failed_to_start_emulator);[S(f565e3ab-2fc9-2d00-a184-e7f28ee91915);S(vgpu);S(Daemon exited unexpectedly)]]): detaching networks
Dec 1 00:53:58 XEN60 xapi: [error||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|xenops] Caught exception starting VM: Xenops_interface.Xenopsd_error([S(Failed_to_start_emulator);[S(f565e3ab-2fc9-2d00-a184-e7f28ee91915);S(vgpu);S(Daemon exited unexpectedly)]])
Dec 1 00:53:58 XEN60 xenopsd-xc: [debug||25 |org.xen.xapi.xenops.classic events D:22e2807de46a|xenops_utils] TypedTable: Removing VM/f565e3ab-2fc9-2d00-a184-e7f28ee91915/vgpu.0
Dec 1 00:53:58 XEN60 xenopsd-xc: [debug||25 |org.xen.xapi.xenops.classic events D:22e2807de46a|xenops_utils] TypedTable: Deleting VM/f565e3ab-2fc9-2d00-a184-e7f28ee91915/vgpu.0
Dec 1 00:53:58 XEN60 xenopsd-xc: [debug||25 |org.xen.xapi.xenops.classic events D:22e2807de46a|xenops_utils] DB.delete /var/run/nonpersistent/xenopsd/classic/VM/f565e3ab-2fc9-2d00-a184-e7f28ee91915/vgpu.0
Dec 1 00:53:58 XEN60 xapi: [error||952 HTTPS 192.168.8.103->|Async.VM.start R:021195d15a31|xenops] Re-raising as FAILED_TO_START_EMULATOR [ OpaqueRef:7051bb05-712e-4dc3-bf6b-fef76c0980ee; vgpu; Daemon exited unexpectedly ]
Dec 1 00:53:58 XEN60 xapi: [error||952 ||backtrace] Async.VM.start R:021195d15a31 failed with exception Server_error(FAILED_TO_START_EMULATOR, [ OpaqueRef:7051bb05-712e-4dc3-bf6b-fef76c0980ee; vgpu; Daemon exited unexpectedly ])
Dec 1 00:53:58 XEN60 xapi: [error||952 ||backtrace] Raised Server_error(FAILED_TO_START_EMULATOR, [ OpaqueRef:7051bb05-712e-4dc3-bf6b-fef76c0980ee; vgpu; Daemon exited unexpectedly ])

I think the main part is

Dec 1 00:53:26 XEN60 xenopsd-xc: [debug||36 ||xenops] vgpu: should be running in the background (stdout -> syslog); (fd,pid) = (FEFork (35,8823))
Dec 1 00:53:26 XEN60 xenopsd-xc: [debug||36 ||xenops] Daemon started: vgpu-2
Dec 1 00:53:56 XEN60 xenopsd-xc: [error||36 ||xenops] vgpu: unexpected exit with code: 127

So it said Daemon started: vgpu-2, but then failed with code: 127.

Are there any options to debug it? Any ideas why it failed to start?
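One possible starting point for debugging: in POSIX shells, exit status 127 conventionally means the command could not be found. Assuming the vgpu daemon launcher follows the same convention (not confirmed by the log), a first check is whether the binary named in the "Starting daemon" line exists and is executable. This is only a sketch; check_binary is a hypothetical helper name, and the path is the one taken from the log above.

```shell
# Hypothetical helper: report whether a binary exists and is executable.
# Return codes mirror the usual shell conventions (127 = not found,
# 126 = found but not executable); this is an assumption about how the
# daemon failure should be read, not something the log confirms.
check_binary() {
    path="$1"
    if [ ! -e "$path" ]; then
        echo "missing: $path"
        return 127
    elif [ ! -x "$path" ]; then
        echo "not executable: $path"
        return 126
    fi
    echo "ok: $path"
}

# Path taken from the "Starting daemon" log line; adjust if yours differs.
check_binary /usr/bin/vgpu || true
```

If the helper reports the file as missing, the 127 in xensource.log would be consistent with the daemon binary simply not being installed.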

-

That's because our version of emu-manager doesn't support vGPU. In short, Citrix decided in 2018 to introduce emu-manager and to make it closed source. Without it, you can't even boot a VM (read the story here, it's pretty "funny": https://bugs.xenserver.org/browse/XSO-878)

So we had to come up with our own version without any clue about how it works. We managed to get something working, but obviously it took a lot of effort and time, even without vGPU management.

In theory, you can use the emu-manager from Citrix ISO to replace our own, and that should do the trick.

-

I tried copying emu-manager from different versions of the Citrix ISO, but nothing changed.

Maybe I have to enable a trial license, but that would not be a production option. So I will switch to an AMD FirePro S7150 x2 GPU.

It would be great to expand the list of supported GPUs in the future.

NVIDIA drivers are available after registration, and there are some tricks to get around the NVIDIA vGPU license on open-source platforms. So we "just" need to make some changes in emu-manager... Do you have the source code of the emu-manager that XCP-ng is using?

-

We had someone who managed to get Nvidia vGPU working recently, so it should work, but I'm not comfortable giving all the details publicly since it's not legal to redistribute or use proprietary packages.

In my opinion, the future will be mediated devices, using VFIO or something. And good news: for our DPU work, we are working on an equivalent of VFIO for Xen. So the solution might come from there

-

@olivierlambert could you give me a contact, please? I will contact him in private conversation.

-

Finally after a week I found the solution!

There is no problem with emu-manager.

XCP-ng does not contain the necessary package, vgpu.

I copied vgpu from the Citrix ISO and now it is alive! :)

-

Ah great

But I think our emu-manager won't work. Can you confirm you are still using the Citrix one?

-

Steps I took to make NVIDIA vGPU work:

- Install XCP-ng 8.2.1

- Install all updates, then reboot

yum update

reboot

- Download the NVIDIA vGPU drivers for XenServer 8.2 from the NVIDIA site. Version: NVIDIA-GRID-CitrixHypervisor-8.2-510.108.03-513.91

- Unzip and install the rpm from Host-Drivers

- Reboot again

- Download the free CitrixHypervisor-8.2.0-install-cd.iso from the Citrix site

- Open CitrixHypervisor-8.2.0-install-cd.iso with 7-zip, then extract the vgpu binary file from Packages->vgpu....rpm->vgpu....cpio->.->usr->lib64->xen->bin

- Upload vgpu to the XCP-ng host at /usr/lib64/xen/bin and make it executable

chmod +x /usr/lib64/xen/bin/vgpu

- Deploy a VM with vGPU; it started without any problems
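The extract-and-install part of the steps above could also be done from a Linux shell instead of 7-zip. What follows is only a sketch under stated assumptions: extract_rpm and install_vgpu are hypothetical helper names, the rpm file name is not given in this thread and has to be looked up under Packages on the ISO, and the destination directory is the one used in the steps.

```shell
# Hypothetical helper: unpack an rpm into a scratch directory.
# rpm2cpio | cpio -idm is the usual idiom for unpacking an rpm without
# installing it. rpm_file must be an absolute path because of the cd.
extract_rpm() {
    rpm_file="$1"
    out_dir="$2"
    mkdir -p "$out_dir" || return 1
    ( cd "$out_dir" && rpm2cpio "$rpm_file" | cpio -idm )
}

# Hypothetical helper: copy the extracted vgpu binary into place with
# mode 0755 (equivalent to copying it and then running chmod +x).
install_vgpu() {
    src="$1"
    dest_dir="${2:-/usr/lib64/xen/bin}"
    if [ ! -f "$src" ]; then
        echo "missing source: $src" >&2
        return 1
    fi
    install -m 0755 "$src" "$dest_dir/vgpu"
}
```

With the rpm unpacked into a scratch directory such as /tmp/vgpu-extract, the call would look like install_vgpu /tmp/vgpu-extract/usr/lib64/xen/bin/vgpu, assuming the binary sits at the same path inside the package as in the 7-zip steps.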

So I did not make any modifications to emu-manager.

My test server is far away from me and it will take some time to download the windows ISO to this test location. Then I will check how it works in the guest OS and report back here.

-

@splastunov Will this be hampered by any licensing issues? To my understanding, NVIDIA vGPU requires a license per user per GPU to work properly. Unless this isn't the case on Xen?

-

@wyatt-made I need a few days to test it.

I will report back here later.

-

@wyatt-made Yeah, you need not only licenses for the hosts and any VMs running on them, but also have to run a custom NVIDIA license manager.

-

Mediated devices will be a game changer… Eager to show our results with DPU, that will be the start of it. Some reading on the potential: https://arccompute.com/blog/libvfio-commodity-gpu-multiplexing/

-

No luck yet...

I found 2 configs:

- /usr/share/nvidia/vgpu/vgpuConfig.xml

It seems that nvidia-vgpud.service uses this config on start to generate the vGPU types.

You can get the list of vGPU types with the command

nvidia-smi vgpu -s

My output is

GPU 00000000:81:00.0
    GRID T4-1B
    GRID T4-2B
    GRID T4-2B4
    GRID T4-1Q
    GRID T4-2Q
    GRID T4-4Q
    GRID T4-8Q
    GRID T4-16Q
    GRID T4-1A
    GRID T4-2A
    GRID T4-4A
    GRID T4-8A
    GRID T4-16A
    GRID T4-1B4
GPU 00000000:C1:00.0
    GRID T4-1B
    GRID T4-2B
    GRID T4-2B4
    GRID T4-1Q
    GRID T4-2Q
    GRID T4-4Q
    GRID T4-8Q
    GRID T4-16Q
    GRID T4-1A
    GRID T4-2A
    GRID T4-4A
    GRID T4-8A
    GRID T4-16A
    GRID T4-1B4

- A set of configs located in

/usr/share/nvidia/vgx

Here there is an individual config for each type

# ls -la | grep "grid_t4"
-r--r--r-- 1 root root 530 Oct 20 09:11 grid_t4-16a.conf
-r--r--r-- 1 root root 556 Oct 20 09:11 grid_t4-16q.conf
-r--r--r-- 1 root root 529 Oct 20 09:11 grid_t4-1a.conf
-r--r--r-- 1 root root 529 Oct 20 09:11 grid_t4-1b4.conf
-r--r--r-- 1 root root 529 Oct 20 09:11 grid_t4-1b.conf
-r--r--r-- 1 root root 555 Oct 20 09:11 grid_t4-1q.conf
-r--r--r-- 1 root root 528 Oct 20 09:11 grid_t4-2a.conf
-r--r--r-- 1 root root 528 Oct 20 09:11 grid_t4-2b4.conf
-r--r--r-- 1 root root 528 Oct 20 09:11 grid_t4-2b.conf
-r--r--r-- 1 root root 554 Dec 9 15:45 grid_t4-2q.conf
-r--r--r-- 1 root root 529 Oct 20 09:11 grid_t4-4a.conf
-r--r--r-- 1 root root 555 Oct 20 09:11 grid_t4-4q.conf
-r--r--r-- 1 root root 530 Oct 20 09:11 grid_t4-8a.conf
-r--r--r-- 1 root root 556 Oct 20 09:11 grid_t4-8q.conf

Now I'm trying to change the pci_id of the vGPU, to make the guest OS "think" that the vGPU is a Quadro RTX 5000 (based on the same TU104 chip).

I played around with the configs, but without success.

Any change in the configs causes the VM to stop starting, because XCP-ng cannot create the vGPU.

In the guest OS, I tried to install different drivers manually, but the device does not start.
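When experimenting with these configs, it can help to confirm quickly that the driver still generates the same set of vGPU types for every card. Here is a small sketch that counts the types listed under each GPU; count_vgpu_types is a hypothetical helper name, and it assumes the output has one "GPU <address>" header line per card followed by one type per line, which is how the listing in this post appears to be laid out.

```shell
# Hypothetical helper: count the vGPU types listed under each GPU.
# Reads "nvidia-smi vgpu -s" style text from stdin, so it can be fed
# saved output as well as the live command.
count_vgpu_types() {
    awk '
        /^GPU / { gpu = $2; next }          # header line: remember the PCI address
        NF && gpu != "" { count[gpu]++ }    # any other non-empty line is one type
        END { for (g in count) print g, count[g] }
    ' | sort
}
```

On the host it would be used as nvidia-smi vgpu -s | count_vgpu_types, printing one line per GPU with its PCI address and the number of types found.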

So I have 2 questions:

- Is there any option to edit some "raw" VM config (or something like that) to change the vGPU pci_id?

I tried exporting the metadata to XVA format, editing it and importing it again.

But after the VM starts, it changes all the IDs back...

- Is it possible to create custom vgpu_types?

xe vgpu-type-list shows that all types are RO (read-only).

It seems that they are generated when XCP-ng boots.

-

@splastunov My guess is that it's not going to be possible to do any customizing since the GPU configuration types are managed by the NVIDIA drivers and applications, which incorporate specific types associated with each GPU model. As newer releases appear, sometimes this will change (such as with the introduction of the "B" configurations some years ago).

About the only close equivalent to a "raw" designation for a VM would be to do a passthrough to that VM, but even then, you are still going to be restricted to defining some standard GPU type.

-

Haha, it finally works!!!

The problem was with the template I used to deploy the VM.

I first deployed a VM from the default Windows 2019 template and it was not possible to install the GPU drivers.

After that I tried deploying the VM from the "other installation media" template and now I can install any drivers.

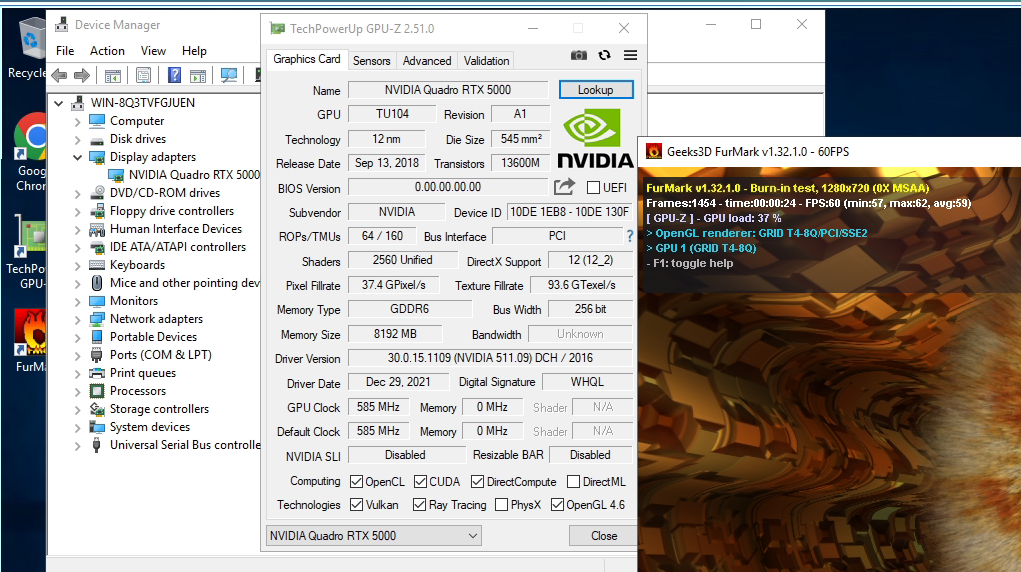

To make it work with different benchmarks, I installed the Quadro RTX 5000 driver (from the consumer site).

The result is on the screen. About 60 FPS on average.

I think it is limited by the driver on the host.

As you can see, FurMark correctly detected the GPU as T4-Q8.

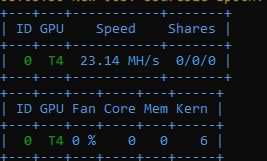

Result from an ETH Classic miner.

The miner detected the GPU as a T4 too.

No licenses are required : )

-

@splastunov Hmmm, not sure that will work for long for a P4 or T4 without an NVIDIA license. At some point, it will likely throttle down to a maximum of 3 FPS after the grace period expires.

Then again, you might get lucky.

-

Weird, something in the Windows template is making it fail? That's interesting information.

-

Hi everyone,

I'm interested too in the use of Nvidia GRID in XCP-ng because we have a cluster with 3 XCP-ng servers and now a new one with a GPU Nvidia A100. It would be great if I could use it in a new XCP-ng pool, because it's an excellent tool and we already have the knowledge.

Our plan is to virtualize the A100 80 GB GPU so we can use it in various virtual machines, with "slices" of 10/20 GB, for compute tasks (AI, Deep learning, etc.).

So I have two questions:

- Could the trick of copying this vgpu executable be dangerous when updating the XCP-ng server? It might be overwritten, deleted or something.

- Do you plan to support NVIDIA vGPU soon? We can still use QEMU on Ubuntu or another Linux with these drivers and everything works OK, but XCP-ng is more professional than QEMU IMHO.
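On the first question, nothing in this thread says whether an update would touch the copied file. One way to at least notice if it does is to record a checksum of the binary before updating and verify it afterwards. This is a minimal sketch; record_checksum and verify_checksum are hypothetical helper names, and the paths in the usage note are only examples.

```shell
# Hypothetical helper: record a checksum for a manually copied file.
# $1 = file to track (use an absolute path), $2 = where to store the checksum.
record_checksum() {
    sha256sum "$1" > "$2"
}

# Hypothetical helper: succeed only if the tracked file still exists
# and is byte-for-byte unchanged since the checksum was recorded.
verify_checksum() {
    sha256sum --check --status "$1"
}
```

Before an update you would run record_checksum /usr/lib64/xen/bin/vgpu /root/vgpu.sha256, and afterwards verify_checksum /root/vgpu.sha256; a non-zero exit status means the file was changed or removed and needs to be copied again.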

You are doing a great great job at Vates. Keep going!

Dani

-

@Dani I made a post yesterday about Nvidia MIG support. vGPU support on XCP-ng is tricky because the proprietary bit of code that makes vGPU work can't be freely distributed. On the other hand, MIG (which is supported by many Ampere cards like the A100) doesn't require licensing like vGPU and seemingly just creates PCI addresses for the card which could, in theory, be passed through to VMs.

CC: @olivierlambert since we briefly talked about this yesterday in my thread.
