Non-server CPU compatibility - Ryzen and Intel

-

In theory we now have the relevant patches. We'll try to provide a patched build in the coming days, either this week or the next.

-

Awesome! I appreciate it.

-

@stormi Good news! If you need any testing done, I have a 7900X available.

-

Hi everyone!

So, @andSmv applied a few patches to Xen for you and built it.

As it's still only a temporary test build, you will find it in a special user repository at: https://koji.xcp-ng.org/repos/user/8/8.3/asemenov1/x86_64/

You may either add the repository (by creating a .repo file in /etc/yum.repos.d/) and then run

`yum update --enablerepo={nameofrepo}`

or download the RPMs locally and then run `yum update ./xen*.rpm`.

Please let us know if it fixes the issues with your CPUs.
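For the first option, a .repo file only needs a section header and a `baseurl`. Below is a minimal sketch that writes one for the test repository above. It is not an official XCP-ng file: the repo id `xen-test`, the `name` text and the helper function `write_xen_test_repo` are hypothetical, `gpgcheck=0` assumes the test packages are unsigned, and `enabled=0` keeps the repo out of normal updates so it is only used when named in `--enablerepo`.

```shell
#!/bin/sh
# Hypothetical helper: writes a yum repo definition for the temporary
# Xen test build into the directory given as $1 (normally
# /etc/yum.repos.d, which requires root).
# Assumptions: repo id "xen-test", unsigned packages (gpgcheck=0).
write_xen_test_repo() {
    repo_dir="$1"
    # Quoted 'EOF' so the heredoc is written literally, with no expansion.
    cat > "$repo_dir/xen-test.repo" <<'EOF'
[xen-test]
name=Temporary Xen test build (user repository)
baseurl=https://koji.xcp-ng.org/repos/user/8/8.3/asemenov1/x86_64/
enabled=0
gpgcheck=0
EOF
}

# Example usage, as root on the XCP-ng host:
#   write_xen_test_repo /etc/yum.repos.d
#   yum update --enablerepo=xen-test
```

Because the repo is disabled by default, a plain `yum update` ignores it; once the patch is in the regular updates, the file can simply be deleted.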

-

-

Yay \o/

-

It's looking very good on my 7900X since applying the fixes:

- Rolled back all the "nopv" boot options on Linux VMs (Ubuntu, Mint, Rocky) and they boot quickly.

- Xen Orchestra has its IP address within a minute instead of around 10 minutes

- Xen tools status on the Linux VMs is now showing "installed" (vs "not installed" before the fixes)

- No "stuck CPU" messages observed anywhere

What a difference! Thanks!!

-

@stormi Everything seems to be running stably. Thanks for the updates and all the hard work. I'm running a Ryzen 7 7700X.

-

Thanks for your feedback!

-

The patch is now included in the latest updates to XCP-ng 8.3 (see https://xcp-ng.org/forum/post/62263) so you can forget about the test build and just update normally.

For those who installed the test build, it will be automatically replaced when you update.

-

@stormi Today I did a fresh install on an Asus motherboard:

7900X, 2x32GB DDR5-5600 CL40, X670-P-WiFi with BIOS 1616 from May 16, 2023. Without forcing X2APIC in the BIOS, the 8.3 installer kernel panicked.

I installed to an NVMe SSD and rebooted without issues. I added the repo and updated the system, installed XOA through https://xen-orchestra.com/#!/xoa and made a simple Windows 10 client VM with 2 cores. The install went smoothly.

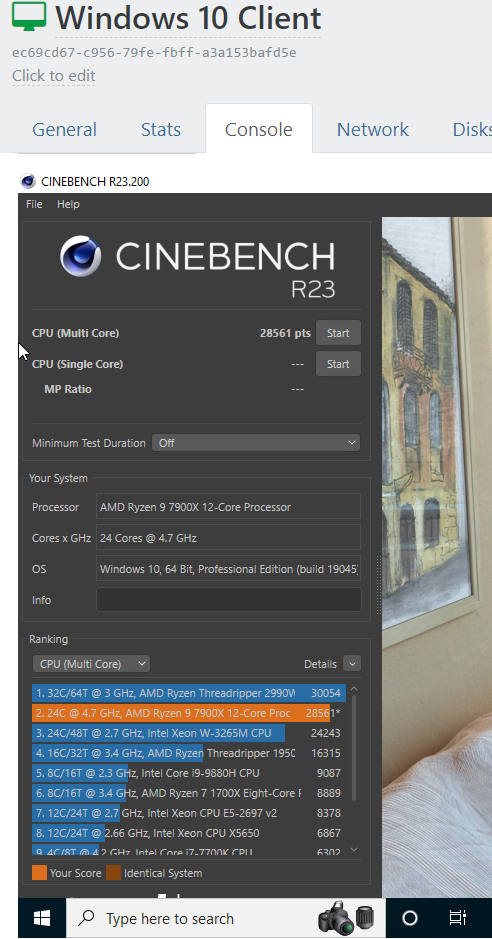

It went so well that I upgraded the VM to 24 cores just for testing, and got a very nice surprise:

Almost the same performance I get with Windows 11 on bare metal!

Note that when fully loaded, this CPU only reaches about 5.04 GHz on one CCD and 4.85 GHz on the other. It's not the best CPU I've had, and it runs at 95 °C (normal for this CPU).

htop only shows 16 threads when x2APIC is enabled.

I need to test the integrated 2.5Gb NIC and PCIe passthrough:

- NVMe PCIe 4.0 drive passed directly to a VM (W11)

- i350-T4 SR-IOV

- i350-T4 passed directly to a VM

- X710-DA2 / X520-DA2

-

Great news

-

I've been spending time reading through this thread and running a few tests of my own just to make sure. It appears that the issue is far more present on Asus motherboards than on others; when testing with both Asrock and Gigabyte boards, B650 and X670, the problem with the 7900X and 7700X goes away. HOWEVER, if you do this on the Asus Prime board, you run into a series of fairly strange issues. First, I ran into a combination of stability issues and random VM reboots, and beyond that it simply performed far slower than I expected.

Now, I have two general modes of operation: I store a few VMs locally, but I also keep VMs available via iSCSI and NFS on a TrueNAS over 2.5Gb and 10Gb (two different storage networks).

For local VMs, my performance was very close on all boards tested. But when using remote storage for hosting VMs, the X-series CPUs on Asus motherboards fell further behind the competition as the required throughput increased. In other words, by the time I was accessing a demanding Windows Server over the 10Gb network, the performance was significantly trailing the Asrock and Gigabyte products. And I mean SIGNIFICANTLY.

I'm not sure what Asus has done with their BIOS. I am running the current BIOS as available today (5/29), and the equivalent latest BIOS on the other boards.

In the end, the Asrock (consumer) board provided the best overall transfer, stability and installation performance, Gigabyte was a close second, and Asus trailed significantly. I'm not sure what is currently wrong with their BIOS, but it appears that with Asus you might be better off with a non-X variant. I also used the ROG Strix B650E-F, thanks to Micro Center's sale on it in a combo with the 7900X. The Asrock and Gigabyte B650 boards were ones I already had on hand from other builds.

NOTE: I've retested with the latest XCP-NG build. On the new build the 7900X performs MUCH better across the board, but the Asus board still lags behind the others by about 7-10%.

This is just something of interest from my testing, and YMMV.

-

@tmservo433 just to be sure, have you tried with latest 8.3 since the Xen patch was released?

-

@olivierlambert Correct. I've tried with all three boards, multiple processors, etc. The new patch is a HUGE improvement; in data transfer, boot and performance tests, the Asrock and Gigabyte are within 1.5-3% of each other. That's close. I used the same cooling setup throughout, so boost behavior should not differ because of cooling. The Asus, however, trails the Asrock by about 6%, even with the newest BIOS. I'll keep digging in the Asus BIOS, but something appears to be putting them at a disadvantage against competitors right now, at least on this board model.

I just want to add: I've spent almost 15 years virtualizing small-business workloads that had to keep servers separate (for application reasons), as well as funding and maintaining lots of servers. VMWare was a great solution, and even our pricing with them was good for years. I worked with VMWare from version 4 on, and their product, including their desktop client, was excellent. Their web client in 5.x and up -sucked-, and support seemed to fall off a cliff for us. Once we hit version 7, transport capability and performance, as well as the expected cost for a small business, were simply a disaster. In looking for replacements, I'm glad I found XCP-NG, partly thanks to the Lawrence Systems YouTube channel, but also from past experience with Citrix terminal server (oh, the days when I'd put up dumb terminals).

I'm really happy that I've found XCP. As I move forward with clients, it just isn't feasible for them to stay with VMWare any longer, and this is the ideal replacement.

-

Thanks for your feedback. Let's steer clear of Asus for now, then.

BTW, we are about to open an official partner program soon, stay tuned!

-

@tmservo433 I'm testing a 7900X with an Asus Prime X670-P with the latest patches on 8.3. When the patch was released, I kept a basic configuration, deploying only a W10 test machine and cloning snapshots for testing. It was great.

Today I was setting up the W10 machine and it became very slow and painful to use. I remembered your post, did a reboot, and it started working fast again.

I'll be installing and testing a 10G NIC on that board. The Realtek NIC was linking at 2.5Gb, connected to a 5GbE switch (I still need to run some iperf tests).

I have a few machines with this combo and multiple NICs that I use with QEMU on Ubuntu.

I'm not enjoying the Asus experience; I would love an AM5 board with four mechanical PCIe x4 slots, or with bifurcation support.

I also tried, unsuccessfully, to pass through the NVMe M.2 PCIe 4.0 drive with W11 installed. (I managed to boot it on another system with Proxmox, but with an older BIOS and many instabilities.)

-

@Sam @olivierlambert So... I spent some time in our little storefront here running some tests. Remember, I'm coming primarily from a VMWare background, so I also played with VMWare 8 over the weekend for the one team of mine that will not switch, pretty much regardless, due to their deal with Dell. That said, I got hold of some X670 boards, and here is what I found out; I'm also going to make some comparisons with other hypervisors here.

First, I want to say I would -never- recommend an X670 for a hypervisor. Normally a B650E is fine, as you are not (or definitely should not be) overclocking or using many of those features. That said, if you're going X670, it's hard not to say: get a 670E vs. a 670. The reason is that the "E" means you have 24 PCIe 5.0 lanes vs. 4 on the X670. This allows for better expansion later if you really want to use PCIe 5.0 NVMe or solid-state cards for intensive IOPS, and it also helps future-proof the build. For example, I have one client who does extensive engineering using XCP-NG, where both their SolidWorks and Mastercam model data is stored; the faster we go, the better. Their workstations are currently all Threadripper Pro 5995WX or Threadripper 3990X. The key is understanding the purpose for which you plan to virtualize and how you need to generate the IOPS and performance. If your end goal is just a home lab to run Plex or something, your data and performance needs are different from a setting where excellent server speed matters.

So, with that said, I ran some benchmarks on a few consumer-oriented X670E boards, just to give some feedback.

I tested these boards with the exact same processor (7900X, non-3D), since I had it on hand and didn't feel I needed to open a new one: as long as I established a baseline, it would be fine. I used 128GB of DDR5-6000 Corsair RAM. While I could have used the onboard video on these processors, I have mixed feelings about it, as across the board, in every hypervisor, it seems to give less than the performance I want.

So, before I get to XCP-NG, I want to comment on what I found with the other products on the market. I do use some of them at times, depending on the onsite staff's familiarity, as I work primarily as an outside consultant, either managing others or implementing policies/procedures/setups/etc.

Microsoft Hyper-V Server 2019: Microsoft's last standalone Hyper-V core server. Per their announcement, they will not release another, so there is no Hyper-V Server 2022 standalone product. This product worked and is a big improvement over 2016, especially once you hit 12 or more high-use VMs of mixed OS (with Windows as the backbone). It also handles mixing Windows versions very, very well, with fewer issues with Windows 2003 and earlier if you are trying to spin up and recover an old OS. I have had to do that more than once in the last few years, when clients discovered "lost" data on an old machine that was still hanging around. By imaging the old machine (I use Acronis) and restoring the image here, I can make that work for NT 4.0 and Server 2000, which doesn't always work with others. That said, Hyper-V's performance trails every other product considerably, and despite what you'd expect about its driver support, I ran into more driver issues on all of these motherboards than with most other OSes, even when just using Hyper-V inside a full install of Server 2022. I was eventually able to "cram" AMD drivers down its throat, but without them, many required features were broken or performed poorly, and the experience was not pleasant.

Proxmox: functional from the beginning; the install went smoothly, and it was easy to get going and connect to TrueNAS SCALE as a backup target. I don't generally care for the Proxmox interface, which reminds me of the VMWare 4.0/5.0 web interface if I remember right: very sparse. I have always appreciated their approach to ZFS, and performance was about equal to XCP-NG. I'll admit, I've always been pretty agnostic between the two products. There are things I really like about both, and things I wish would change about both. If you like one, stick with it; if you like the other, stick with it. I feel it's a bit like the people who got into fights over Ubuntu vs. Slackware vs. Red Hat. Shit, I grew up in a basement lab running SunOS on SPARC, so... yeah. Stick with what you like. Before I get to the performance differences between the boards, I want to note that BOTH XCP-NG and Proxmox had damn near identical performance on these boards.

VMWare 8.0... OK, let me say this now: I like the interface. They've really upgraded it, and I've used VMWare for years, since the beginning. They've learned a lot, and they continue to implement some functions that I feel are absolutely unmatched. Then again, they are a huge company working for giant profits, and that's what you'd expect. Their appliance level? Fantastic. If you have the $$$$$ (and I mean at this point it is big $$$), then it's nice. But they've priced most people out. Here is the thing, though: I've tried multiple X670 and B650 boards on VMWare 8.0, and stability is non-existent. Crash. Crash. I tried different RAM and different RAM timings, going down to 4800, and tried turning off all onboard devices. Tried different cards. Nope. Nope. I could not get a valid stress test run on VMWare 8 on this platform right now. I should note that while testing this, I also tried a 2950X Threadripper I had on hand and a 5950X Ryzen I have on the bench, and VMWare was fine on those, though in one case it did not like the onboard NIC (not unexpected). Still, VMWare's hardware restrictions remain significant.

So, here's the performance rundown.

I tried a few boards: the MSI X670E Tomahawk, Asrock X670E Steel Legend, Gigabyte X670E Aorus, and Asus Prime X670E-Pro.

I did not go for any of the super high-end motherboards, because for a hypervisor I do NOT need a stack of onboard features I'm not going to use, and I don't need to pay for a ton of overclocking. Expansion is the only thing that really matters here as far as I'm concerned, so I looked at M.2 options as well as SATA rust options. Brief rundown:

Asus Prime-Pro: Good news right off the bat: the Realtek 2.5Gb onboard network adapter worked. I'm used to replacing it with Intel 2.5Gb or 10GbE NICs I keep on hand for backup network purposes. My base setup: 128GB of the Crucial RAM, 2 Seagate IronWolf Pro 14TB drives, an older Corsair tower case and a 750W fully modular PSU (I'm not using a high-end GPU; I'm passing through a Quadro K2200, which doesn't even need a power connector). I tested the network adapter's performance: good in the end, and I'm really happy with how XCP-NG ran here. Performance is noticeably better (by about 15Mb/s) than MS Hyper-V, and again, VMWare was unstable. So good work there. Every test VM ran solidly: stability was very good, connectivity was good, and performance against local storage and backup runs was solid. I'm running a self-compiled XO, since I'm just using this as a test. I set up a few VMs as full "emergency recovery" VMs and restored them using Acronis Universal Restore images: BOOM. No issues coming from a Xeon setup, so the transfer works smoothly. Performance comparison below.

MSI Tomahawk: Again, the same Realtek 2.5GbE, with no problems right off the bat. That's good news. I personally preferred the design of the MSI, and its performance is almost identical to the Asus. There was no real difference on any test I ran beyond what I'd attribute to a fluke; generally within 1%. This is using the newest version. So, pick your favorite.

Asrock Steel Legend: This is where things get interesting. Dual NICs, 1GbE and 2.5GbE, and both were detected. With the 7900X, two things were worth noting. First, boot times of both the OS and the VMs were considerably faster: using the exact same setup, I got a good, noticeable uplift in both. For the VMs, the uplift was most significant with Windows Server 2019, where the boot time to get into an AD DC was reduced quite a bit. By quite a bit, I mean about 8-11% on each run. Second, I also saw a performance benefit when moving data to and from TrueNAS, but this is largely due to the board itself: because of the dual built-in NICs, I put management on the 1GbE and storage on the 2.5GbE. By splitting them, I wasn't running management traffic over the storage network, and I could put them on different ranges, something I generally recommend anyway. The benefits of this configuration are something anyone who has been through network training coursework knows well. Hell, going back to when Novell was busy teaching me Token Ring, we talked about this, and by the time Windows NT 4.0 was really rolling, the benefits were obvious. We've known this one forever, so it isn't surprising that a board with two supported NICs gives advantages if you want to virtualize. Also a benefit: it's cheaper than the Asus or the Tomahawk.

The Gigabyte option was by far the most expensive, as the only X670E version I could find was the Master. That said, not only is this board outrageously more expensive than the others, but you are going to find it is the WORST option for XCP-NG, Proxmox, or anything else. The reason? Drivers. It's the only one that uses Intel LAN, swapping the Realtek 2.5GbE for an Intel I225, and I had nothing but problems making it function worth a damn. I normally recommend against Realtek in favor of better options, but this adapter started out non-functional, requiring me to install a card just to get through the install. Then I had to work like hell to get it functional, and even after I got some functionality working, it was so miserably behind the others that I could never use it as a host for anything. Strong avoid, though it would probably be good for gaming.

Anyway, I had a weekend to waste, and it's always good to look. I will say, for those who are thinking about it: it isn't that hard right now to find companies that are replacing older Threadripper systems, and those machines are absolutely perfect hosts for XCP-NG. I managed to pick up a pair of X399 motherboard/2950X combos for less than $150 each, and in one case I even got a nice case with it. For most virtualization tasks, grabbing a prior-gen Xeon or Threadripper is going to be far, far better bang for the buck, including support, than spending on high-end hardware.

-

This is far from as detailed as everyone above, but just to contribute something to the 'non-server' topic, here are some experiences I've had.

Everything is already outdated, but it might be useful as a reference anyway.

I have a client running a pair of XCP-ng 8.1 hosts on Ryzen 7 1800X with Gigabyte AB350M-Gaming 3 motherboards and Kingston consumer-grade SSDs (almost all disk write activity goes to a NAS), doing HA with the HA-Lizard noSAN version (DRBD to synchronize storage), for years without a hitch. We have even moved the servers to another location, syncing them through an EoIP VPN with virtually no downtime.

And I have just assembled a Ryzen 5700G with an Asus B550M TUF Plus motherboard and XCP-ng 8.2.1 for a homelab. The new AM5 platform is still a bit expensive in Brazil for a homelab, and I didn't need much CPU power anyway, mostly memory and storage. I had an issue with the RTL8125B NIC (I just noticed the very first message in this thread reports the same), but adding an Intel NIC made everything perfectly fine.

-

Today I installed an i350-T4 for testing, so as not to depend on the Realtek 2.5G card.

As I suspected, the computer didn't boot after installing the card.

The RAM is DDR5-5600 CL40, the maximum supported on the QVL. I had to clear the CMOS and reconfigure the BIOS before the computer would POST again. I set up x2APIC again, plus SR-IOV and IOMMU. I got access to the server through SSH, but it can't start any VM and the API isn't working locally: neither XO Lite nor the VMs work.

`xe vm-list` only sometimes lists the VMs, even after `xe-toolstack-restart`. The error:

`Error : Connection Refused (calling connect)`

On the local display, the VM list shows:

`("'NoneType' object has no attribute 'xenapi' ", )`

There are also some errors in xensource.log.

I will dump the logs and copy some over here.
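Before posting excerpts like these, it can help to pull only the error lines out of the XAPI log. A minimal sketch, assuming the default dom0 log path `/var/log/xensource.log`; the function name `recent_xapi_errors` and the case-insensitive `error|exception` pattern are illustrative choices, not an XCP-ng tool, and the pattern will also match harmless lines that merely mention errors.

```shell
#!/bin/sh
# Hypothetical helper: print the last N lines of a XAPI log that mention
# "error" or "exception" (case-insensitive).
#   $1: log file (default: /var/log/xensource.log, assumed dom0 location)
#   $2: number of matching lines to keep (default: 50)
recent_xapi_errors() {
    log_file="${1:-/var/log/xensource.log}"
    count="${2:-50}"
    # -i: ignore case; -E: extended regex, so "|" means alternation
    grep -iE 'error|exception' "$log_file" | tail -n "$count"
}

# Example usage on the host, saving the excerpt to paste in the thread:
#   recent_xapi_errors /var/log/xensource.log 100 > xapi-errors.txt
```

Keeping only the most recent matches makes it easier to line the log entries up with the time the `Connection Refused` error appeared.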