Backup: ERR_OUT_OF_RANGE in RemoteVhdDisk.mergeBlock

-

Context: Home lab running XCP-ng since 2018. Current H/W is 2x MS-A2, not pooled together, with local storage. Backups go to a local Synology NAS.

XO: Manually built from sources (no 3rd-party script), current commit 561e2

Problem: One of 12 VMs in a nightly backup set is suddenly throwing this error (full log pasted at end):

"code": "ERR_OUT_OF_RANGE", "The value of \"offset\" is out of range. It must be >= 0 and <= 106492. Received 106496",

This has happened the last three nights (so with commit 561e2 and with a commit from a few days ago).

The one different thing about this VM is that it also has 4 hourly rolling snapshots retaining 7 of them.

I'm not sure where to look to debug this. XO has been restarted. I haven't tried restarting the toolstack or host, or deleting all the snapshots, just in case the issue vanishes and I lose the opportunity to debug.
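One way to read the numbers in the error message, as a minimal sketch: the stack trace shows `_setBatEntry` calling `Buffer.writeUInt32BE`, so the buffer is presumably the VHD block allocation table (BAT) with 4-byte entries. The 2 MiB block size below is an assumption (the usual default for dynamic VHDs), not something the log confirms.

```javascript
// Reproduces the RangeError from the log with a plain Node.js Buffer.
// Assumption: the buffer stands in for a VHD block allocation table (BAT)
// holding one 4-byte big-endian entry per block.
const BAT_ENTRY_SIZE = 4
const BLOCK_SIZE = 2 * 1024 * 1024 // assumed default VHD block size

const bat = Buffer.alloc(106496)
const entryCount = bat.length / BAT_ENTRY_SIZE // 26624 entries
const lastValidOffset = bat.length - BAT_ENTRY_SIZE // 106492
const coveredGiB = (entryCount * BLOCK_SIZE) / 2 ** 30 // 52 under the assumption above

let errorCode
try {
  // Write the entry for block 26624, the first one past the end of the table.
  bat.writeUInt32BE(0, entryCount * BAT_ENTRY_SIZE)
} catch (error) {
  errorCode = error.code
  console.log(error.code, error.message)
}
console.log({ entryCount, lastValidOffset, coveredGiB })
```

Under these assumptions, the write targets the first block beyond what the table was sized for, which would be consistent with a table created for a smaller disk.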

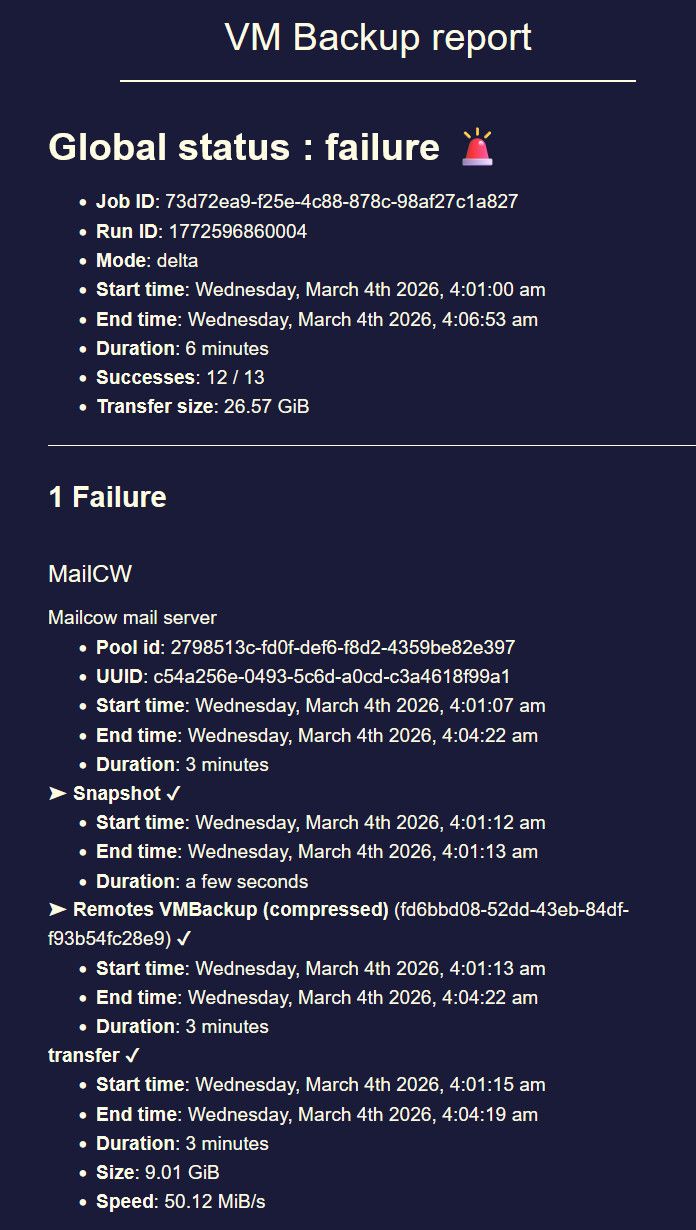

Another observation is that the email report says there is a failure, but actually claims that all phases of the backup succeeded.
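To see which phases actually failed, the task log can be walked recursively. The sketch below uses a trimmed-down stand-in for the log pasted at the end (same `message`, `status` and `tasks` field names, details omitted); `findFailures` is a hypothetical helper, not part of XO.

```javascript
// Recursively collect every task whose status is "failure", with its path.
function findFailures(task, path = []) {
  const here = [...path, task.message]
  const failures = task.status === 'failure' ? [here.join(' > ')] : []
  for (const child of task.tasks ?? []) {
    failures.push(...findFailures(child, here))
  }
  return failures
}

// Trimmed-down stand-in for the backup log (illustrative, not the full log).
const log = {
  message: 'backup VM',
  status: 'failure',
  tasks: [
    {
      message: 'clean-vm',
      status: 'failure',
      tasks: [{ message: 'merge', status: 'failure' }],
    },
    { message: 'snapshot', status: 'success' },
    {
      message: 'export',
      status: 'success',
      tasks: [
        { message: 'transfer', status: 'success' },
        { message: 'clean-vm', status: 'success' },
      ],
    },
  ],
}

const failures = findFailures(log)
console.log(failures)
```

On this stand-in, only the initial clean-vm step and its merge sub-task are reported as failed, while snapshot, export and transfer succeed.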

{ "data": { "type": "VM", "id": "c54a256e-0493-5c6d-a0cd-c3a4618f99a1", "name_label": "MailCW" }, "id": "1772596867673", "message": "backup VM", "start": 1772596867673, "status": "failure", "tasks": [ { "id": "1772596867683", "message": "clean-vm", "start": 1772596867683, "status": "failure", "tasks": [ { "id": "1772596868690", "message": "merge", "start": 1772596868690, "status": "failure", "end": 1772596871939, "result": { "code": "ERR_OUT_OF_RANGE", "message": "The value of \"offset\" is out of range. It must be >= 0 and <= 106492. Received 106496", "name": "RangeError", "stack": "RangeError [ERR_OUT_OF_RANGE]: The value of \"offset\" is out of range. It must be >= 0 and <= 106492. Received 106496\n at boundsError (node:internal/buffer:88:9)\n at checkBounds (node:internal/buffer:57:5)\n at checkInt (node:internal/buffer:76:3)\n at writeU_Int32BE (node:internal/buffer:804:3)\n at Buffer.writeUInt32BE (node:internal/buffer:817:10)\n at VhdFile._setBatEntry (/opt/xen-orchestra/packages/vhd-lib/Vhd/VhdFile.js:308:16)\n at VhdFile._createBlock (/opt/xen-orchestra/packages/vhd-lib/Vhd/VhdFile.js:321:16)\n at VhdFile.writeEntireBlock (/opt/xen-orchestra/packages/vhd-lib/Vhd/VhdFile.js:344:30)\n at RemoteVhdDisk.writeBlock (file:///opt/xen-orchestra/@xen-orchestra/backups/disks/RemoteVhdDisk.mjs:237:21)\n at RemoteVhdDisk.mergeBlock (file:///opt/xen-orchestra/@xen-orchestra/backups/disks/RemoteVhdDisk.mjs:298:19)" } } ], "end": 1772596871939, "result": { "code": "ERR_OUT_OF_RANGE", "message": "The value of \"offset\" is out of range. It must be >= 0 and <= 106492. Received 106496", "name": "RangeError", "stack": "RangeError [ERR_OUT_OF_RANGE]: The value of \"offset\" is out of range. It must be >= 0 and <= 106492. Received 106496\n at boundsError (node:internal/buffer:88:9)\n at checkBounds (node:internal/buffer:57:5)\n at checkInt (node:internal/buffer:76:3)\n at writeU_Int32BE (node:internal/buffer:804:3)\n at Buffer.writeUInt32BE (node:internal/buffer:817:10)\n at VhdFile._setBatEntry (/opt/xen-orchestra/packages/vhd-lib/Vhd/VhdFile.js:308:16)\n at VhdFile._createBlock (/opt/xen-orchestra/packages/vhd-lib/Vhd/VhdFile.js:321:16)\n at VhdFile.writeEntireBlock (/opt/xen-orchestra/packages/vhd-lib/Vhd/VhdFile.js:344:30)\n at RemoteVhdDisk.writeBlock (file:///opt/xen-orchestra/@xen-orchestra/backups/disks/RemoteVhdDisk.mjs:237:21)\n at RemoteVhdDisk.mergeBlock (file:///opt/xen-orchestra/@xen-orchestra/backups/disks/RemoteVhdDisk.mjs:298:19)" } }, { "id": "1772596872185", "message": "snapshot", "start": 1772596872185, "status": "success", "end": 1772596873726, "result": "04b94e3c-8443-84a0-5ac2-486d4e13ec93" }, { "data": { "id": "fd6bbd08-52dd-43eb-84df-f93b54fc28e9", "isFull": false, "type": "remote" }, "id": "1772596873726:0", "message": "export", "start": 1772596873726, "status": "success", "tasks": [ { "id": "1772596875363", "message": "transfer", "start": 1772596875363, "status": "success", "end": 1772597059519, "result": { "size": 9678356480 } }, { "id": "1772597061803", "message": "clean-vm", "start": 1772597061803, "status": "success", "end": 1772597062717, "result": { "merge": true } } ], "end": 1772597062918 } ], "end": 1772597062919 }

-

Question for @florent I assume

-

@olivierlambert I am summoning @simonp, who worked on the merge last month (in fact, he is already working on it).

-

Did you resize the VM's disks recently?

-

Hi @wralb, thank you for the report.

We have identified the source of the issue and implemented a potential fix. It's currently on the branch fix_merge_out_of_range.

Could you check it out, retry the backup and confirm that the issue is fixed?

Thanks.

-

@florent, yes, the VM has a single disk and I did enlarge it by a few GB in the last couple of weeks.

-

@simonp Thanks! Will have a go as soon as I can.

-

Hi @simonp, thanks again. Great service!!

I have checked out your fix branch and re-run the backup. It succeeded as seen here:

{ "data": { "mode": "delta", "reportWhen": "always" }, "id": "1772725052650", "jobId": "73d72ea9-f25e-4c88-878c-98af27c1a827", "jobName": "Daily backup of Unix VMs with changing data", "message": "backup", "scheduleId": "9cbc3238-382d-4fae-83c0-4e92348898e5", "start": 1772725052650, "status": "success", "infos": [ { "data": { "vms": [ "c54a256e-0493-5c6d-a0cd-c3a4618f99a1" ] }, "message": "vms" } ], "tasks": [ { "data": { "type": "VM", "id": "c54a256e-0493-5c6d-a0cd-c3a4618f99a1", "name_label": "MailCW" }, "id": "1772725054418", "message": "backup VM", "start": 1772725054418, "status": "success", "tasks": [ { "id": "1772725054426", "message": "clean-vm", "start": 1772725054426, "status": "success", "tasks": [ { "id": "1772725055044", "message": "merge", "start": 1772725055044, "status": "success", "end": 1772725196294 } ], "end": 1772725196304, "result": { "merge": true } }, { "id": "1772725197030", "message": "snapshot", "start": 1772725197030, "status": "success", "end": 1772725198708, "result": "e46c757e-9180-2502-81c5-36a2fb2928fc" }, { "data": { "id": "fd6bbd08-52dd-43eb-84df-f93b54fc28e9", "isFull": false, "type": "remote" }, "id": "1772725198708:0", "message": "export", "start": 1772725198708, "status": "success", "tasks": [ { "id": "1772725200147", "message": "transfer", "start": 1772725200147, "status": "success", "end": 1772725224785, "result": { "size": 2768240640 } }, { "id": "1772725225673", "message": "clean-vm", "start": 1772725225673, "status": "success", "end": 1772725225747, "result": { "merge": true } } ], "end": 1772725225757 } ], "end": 1772725225758 } ], "end": 1772725225759 }

-

@wralb Perfect, thanks for getting back to us.

So the issue only occurs when a delta backup tries to back up a disk that has grown in size. You can either stay on the branch or go back to master if you are not planning on enlarging any disks in the near future.
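As an illustration of why a grown disk can trip a table sized for the old one, here is a minimal sketch of a bounds-aware write. `setBatEntry` is a hypothetical helper written for this example; it is not the vhd-lib implementation and not the fix on the branch, and it ignores the on-disk layout changes a real VHD file would likely need when its table grows.

```javascript
// Hypothetical helper, not the vhd-lib code: write a 4-byte BAT entry,
// growing the in-memory table first when the block index is past its end.
const BAT_ENTRY_SIZE = 4

function setBatEntry(bat, blockId, sectorOffset) {
  const needed = (blockId + 1) * BAT_ENTRY_SIZE
  if (needed > bat.length) {
    // Fill with 0xff so new entries read as 0xffffffff, the value the VHD
    // format uses for unallocated blocks.
    const grown = Buffer.alloc(needed, 0xff)
    bat.copy(grown)
    bat = grown
  }
  bat.writeUInt32BE(sectorOffset, blockId * BAT_ENTRY_SIZE)
  return bat
}

// A table sized for the original disk, then a write for a block that only
// exists after the disk was enlarged.
let table = Buffer.alloc(106496, 0xff)
table = setBatEntry(table, 26624, 1234)
console.log(table.length, table.readUInt32BE(26624 * BAT_ENTRY_SIZE))
```

Without the size check, the same call reproduces the `ERR_OUT_OF_RANGE` error from the original report.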

Anyways, the fix should be available in the next patch or release.

Thanks.

-

@simonp Thanks for the extra information; very helpful. I will revert to master as I don't routinely enlarge disks.

-

@wralb This will affect every merge involving disks of different sizes, so if you revert to master you may still hit the issue.

This commit will probably be in master by Monday.

-

@florent Understood. Thanks.

-

@wralb It is in master. We are preparing a patch release for XOA on Monday morning with this fix.

-

@florent One last update. I reverted to the master branch (6699b) yesterday evening and the backup ran without issues overnight.
