Second (and final) Release Candidate for QCOW2 image format support

-

@pkgw Would it be possible to open a ticket and a support tunnel so that @Team-Storage can look at it?

-

@pkgw Our initial theory is that you might have applied updates at some point that replaced the `sm` package with one that didn't support qcow2. A later update would then have brought support back, but with the metadata lost.

-

I just published, in the `xcp-ng-testing` repository, what is hopefully the very last round of fixes before the feature goes live. You’ll have about three days to share your feedback if you’d like to be part of this final sprint.

Details at https://xcp-ng.org/forum/post/104961

-

@stormi That is quite possible. I'll open a ticket for further investigation.

-

This is it, it's now out!

https://xcp-ng.org/blog/2026/05/05/qcow2-is-now-ga-in-xcp-ng/

-

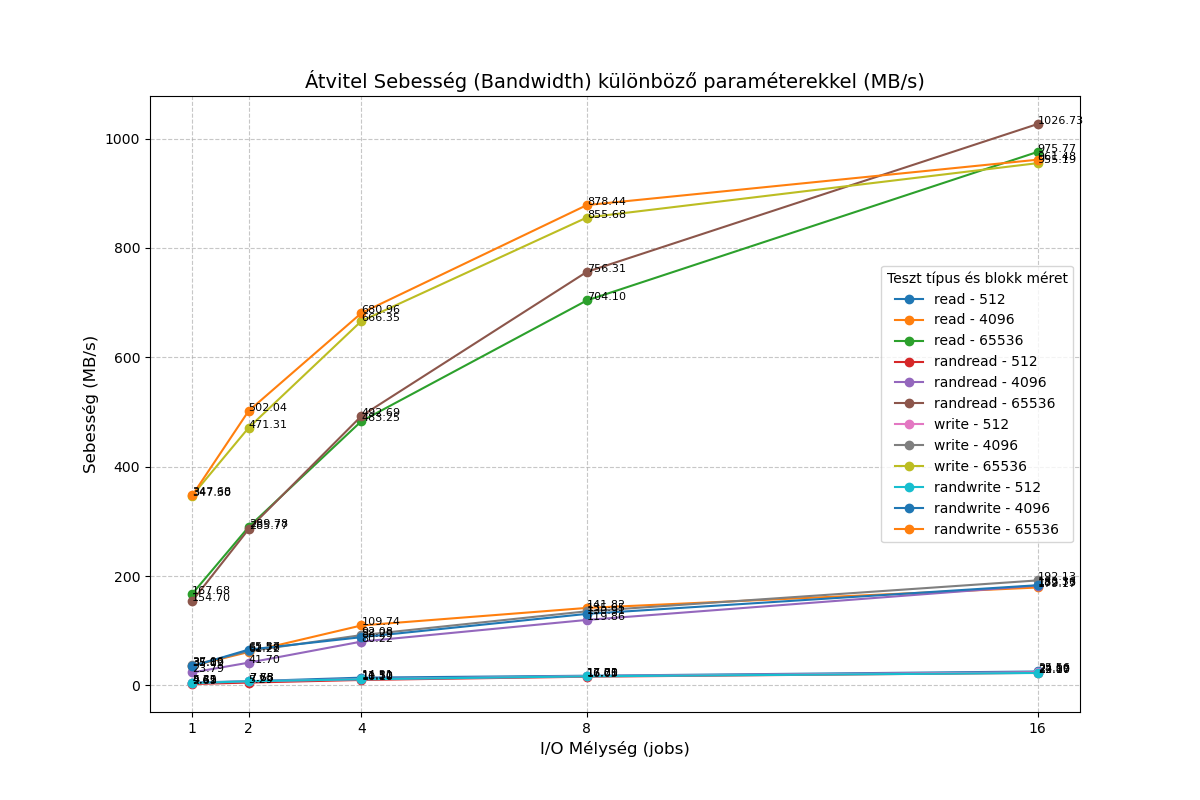

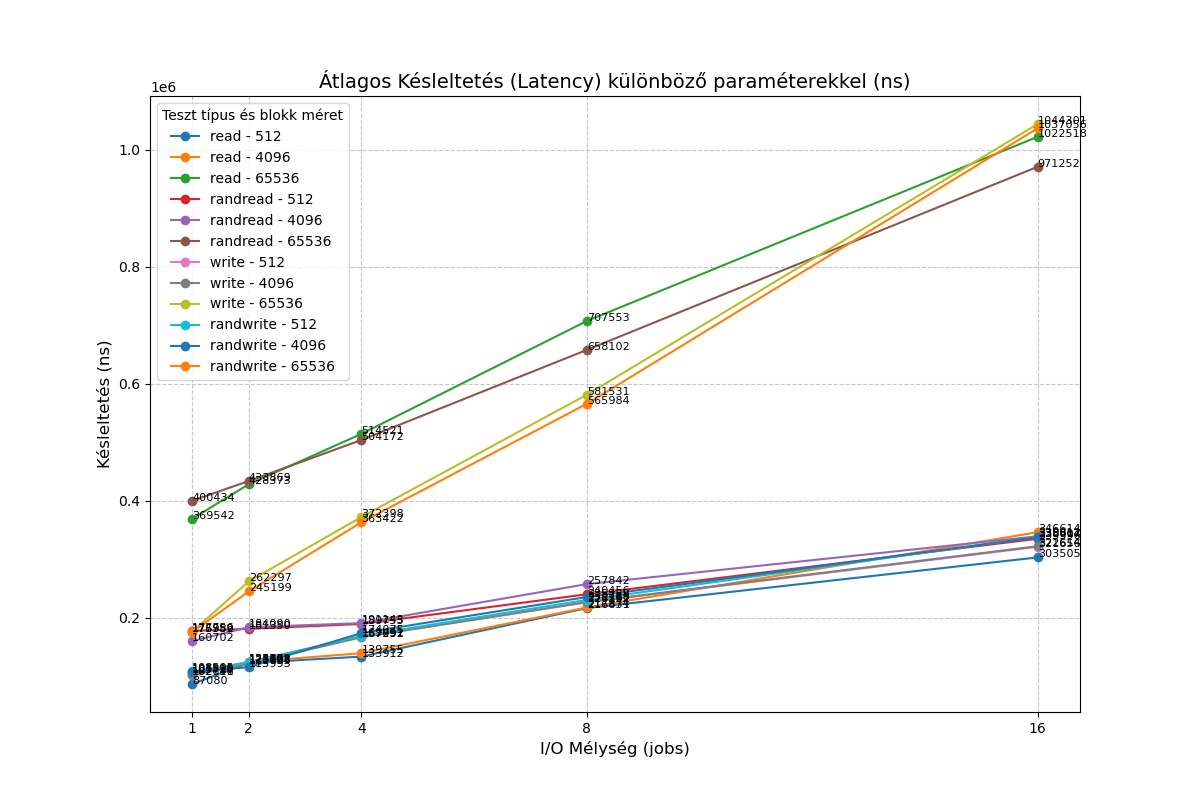

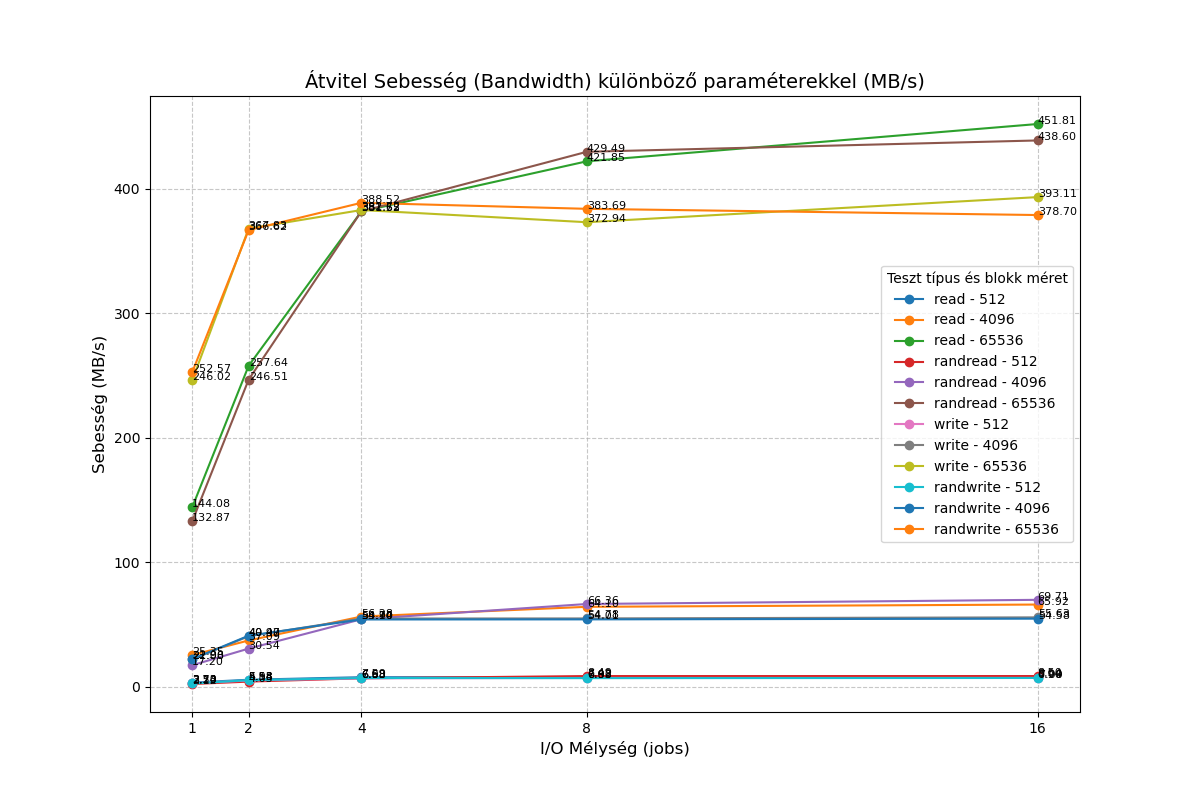

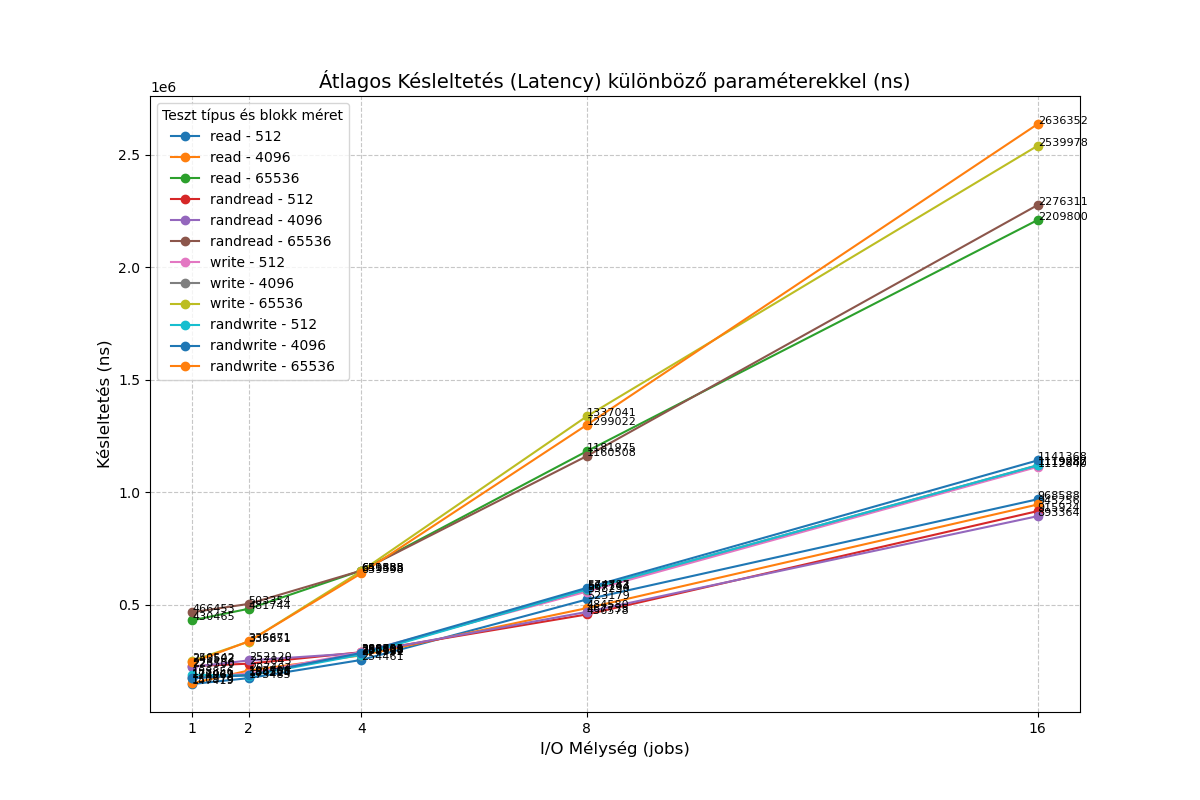

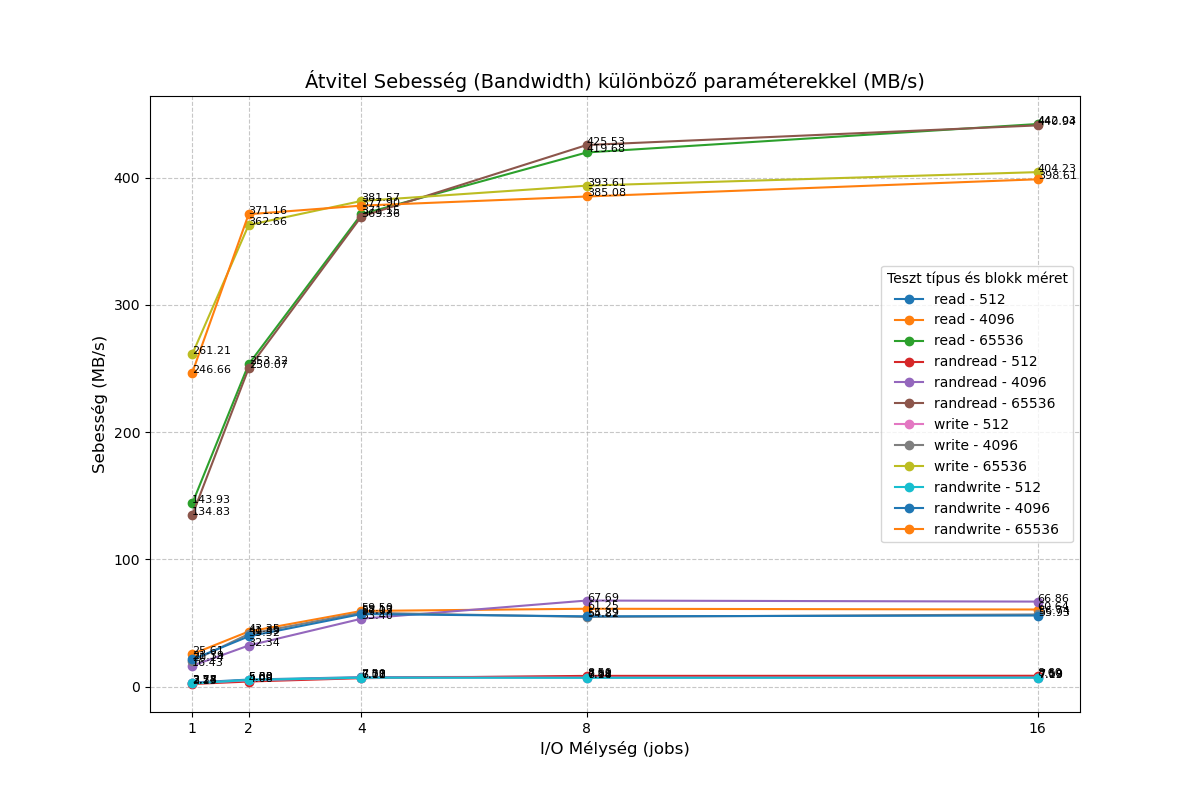

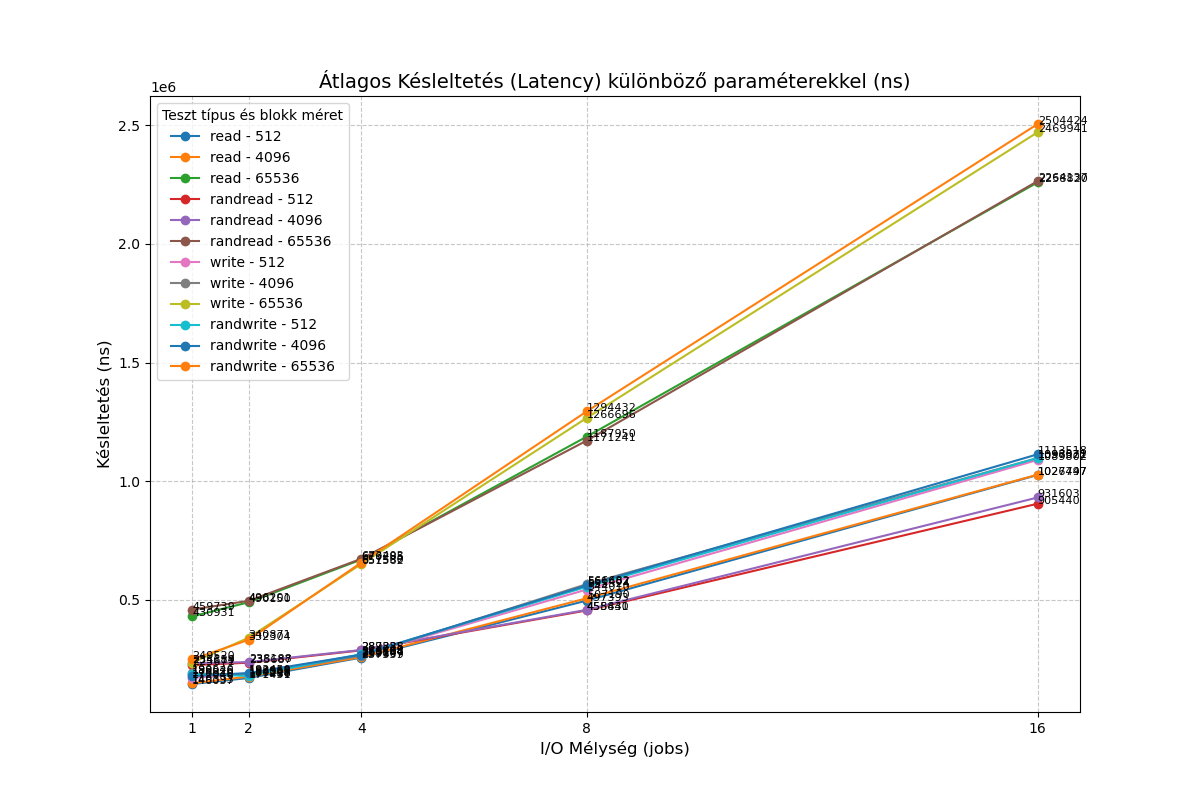

@stormi XCP-ng QCOW2 vs. VHD Performance Feedback on NVMe

First of all, I would like to thank the team for all the hard work in bringing QCOW2 support to a production-ready state. It is a very welcome feature.

I have performed some quick I/O benchmarks comparing the new QCOW2 format against the traditional VHD. In my tests, QCOW2 appears significantly slower than VHD on my hardware.

Test Environment

Hypervisor: Dell PowerEdge R420

CPU: Intel Xeon E5-2470 v2

Storage: Intel SSDPELKX010T8 NVMe

VM OS: Debian 13

VM Specs: 2 vCPUs, 1GB RAM

Setup: One 10GB VHD and one 10GB QCOW2 disk, both pre-filled from /dev/random.

Methodology

I used a custom test suite available here: https://vm01.unsoft.hu/~ventura/fio/fio_test_20250408.tar.gz

I also ran a simple fio loop with the following results:

VHD:

    root@Debian-13-CloudInit-20250810:/mnt/vhd# for mode in read write; do for jobs in 1 16; do for bs in 4 64; do for t in "" rand; do printf "%2i qd %2ik % 4s " $jobs $bs $t; fio --name=random-write --rw=$t$mode --bs=${bs}k --numjobs=1 --size=1g --iodepth=$jobs --runtime=10 --time_based --direct=1 --ioengine=libaio|grep -e BW -e runt ; done; done; done; done
    1 qd 4k read: IOPS=9625, BW=37.6MiB/s (39.4MB/s)(376MiB/10001msec)
    1 qd 4k rand read: IOPS=5414, BW=21.2MiB/s (22.2MB/s)(212MiB/10001msec)
    1 qd 64k read: IOPS=2657, BW=166MiB/s (174MB/s)(1661MiB/10001msec)
    1 qd 64k rand read: IOPS=2575, BW=161MiB/s (169MB/s)(1610MiB/10001msec)
    16 qd 4k read: IOPS=45.7k, BW=178MiB/s (187MB/s)(1785MiB/10001msec)
    16 qd 4k rand read: IOPS=45.9k, BW=179MiB/s (188MB/s)(1794MiB/10001msec)
    16 qd 64k read: IOPS=16.7k, BW=1041MiB/s (1092MB/s)(10.2GiB/10001msec)
    16 qd 64k rand read: IOPS=16.7k, BW=1042MiB/s (1093MB/s)(10.2GiB/10001msec)
    1 qd 4k write: IOPS=8842, BW=34.5MiB/s (36.2MB/s)(345MiB/10001msec); 0 zone resets
    1 qd 4k rand write: IOPS=8880, BW=34.7MiB/s (36.4MB/s)(347MiB/10001msec); 0 zone resets
    1 qd 64k write: IOPS=6095, BW=381MiB/s (399MB/s)(3810MiB/10001msec); 0 zone resets
    1 qd 64k rand write: IOPS=6006, BW=375MiB/s (394MB/s)(3755MiB/10001msec); 0 zone resets
    16 qd 4k write: IOPS=49.3k, BW=193MiB/s (202MB/s)(1928MiB/10001msec); 0 zone resets
    16 qd 4k rand write: IOPS=47.3k, BW=185MiB/s (194MB/s)(1848MiB/10001msec); 0 zone resets
    16 qd 64k write: IOPS=14.3k, BW=891MiB/s (934MB/s)(8910MiB/10001msec); 0 zone resets
    16 qd 64k rand write: IOPS=15.5k, BW=966MiB/s (1013MB/s)(9663MiB/10001msec); 0 zone resets

QCOW2:

    root@Debian-13-CloudInit-20250810:/mnt/qcow2# for mode in read write; do for jobs in 1 16; do for bs in 4 64; do for t in "" rand; do printf "%2i qd %2ik % 4s " $jobs $bs $t; fio --name=random-write --rw=$t$mode --bs=${bs}k --numjobs=1 --size=1g --iodepth=$jobs --runtime=10 --time_based --direct=1 --ioengine=libaio|grep -e BW -e runt ; done; done; done; done
    1 qd 4k read: IOPS=5866, BW=22.9MiB/s (24.0MB/s)(229MiB/10001msec)
    1 qd 4k rand read: IOPS=4000, BW=15.6MiB/s (16.4MB/s)(156MiB/10001msec)
    1 qd 64k read: IOPS=2229, BW=139MiB/s (146MB/s)(1394MiB/10001msec)
    1 qd 64k rand read: IOPS=2161, BW=135MiB/s (142MB/s)(1351MiB/10001msec)
    16 qd 4k read: IOPS=16.9k, BW=66.2MiB/s (69.4MB/s)(662MiB/10001msec)
    16 qd 4k rand read: IOPS=17.6k, BW=68.8MiB/s (72.1MB/s)(688MiB/10001msec)
    16 qd 64k read: IOPS=7244, BW=453MiB/s (475MB/s)(4529MiB/10002msec)
    16 qd 64k rand read: IOPS=6994, BW=437MiB/s (458MB/s)(4372MiB/10002msec)
    1 qd 4k write: IOPS=5551, BW=21.7MiB/s (22.7MB/s)(217MiB/10001msec); 0 zone resets
    1 qd 4k rand write: IOPS=5159, BW=20.2MiB/s (21.1MB/s)(202MiB/10001msec); 0 zone resets
    1 qd 64k write: IOPS=4024, BW=252MiB/s (264MB/s)(2515MiB/10001msec); 0 zone resets
    1 qd 64k rand write: IOPS=4027, BW=252MiB/s (264MB/s)(2517MiB/10001msec); 0 zone resets
    16 qd 4k write: IOPS=14.5k, BW=56.8MiB/s (59.6MB/s)(568MiB/10002msec); 0 zone resets
    16 qd 4k rand write: IOPS=14.0k, BW=54.7MiB/s (57.4MB/s)(547MiB/10001msec); 0 zone resets
    16 qd 64k write: IOPS=6360, BW=398MiB/s (417MB/s)(3976MiB/10002msec); 0 zone resets
    16 qd 64k rand write: IOPS=6090, BW=381MiB/s (399MB/s)(3807MiB/10002msec); 0 zone resets

I would be interested to know if I'm overlooking something, or if the qcow2 format simply provides lower performance compared to VHD for the time being?
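To put a rough number on the gap, here is a minimal sketch that expresses QCOW2 IOPS as a percentage of VHD IOPS for a few of the rows above. The helper names `iops_to_num` and `pct_of_vhd` are made up for illustration, and the figures are copied by hand from the fio output in this post; this is not part of the original test suite.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for comparing fio results; not part of the original test suite.

# Convert a fio IOPS figure such as "45.7k" or "9625" to a plain integer.
iops_to_num() {
    awk -v v="$1" 'BEGIN {
        mult = 1
        if (v ~ /k$/) { mult = 1000; sub(/k$/, "", v) }
        printf "%d\n", v * mult + 0.5
    }'
}

# Print QCOW2 IOPS as a rounded percentage of VHD IOPS.
# Arguments: <vhd_iops> <qcow2_iops>
pct_of_vhd() {
    local vhd qcow2
    vhd=$(iops_to_num "$1")
    qcow2=$(iops_to_num "$2")
    awk -v a="$vhd" -v b="$qcow2" 'BEGIN { printf "%.0f\n", 100 * b / a }'
}

# IOPS figures copied from the results above (VHD first, then QCOW2).
printf ' 1 qd  4k read:  %s%% of VHD\n' "$(pct_of_vhd 9625 5866)"
printf '16 qd  4k read:  %s%% of VHD\n' "$(pct_of_vhd 45.7k 16.9k)"
printf '16 qd 64k read:  %s%% of VHD\n' "$(pct_of_vhd 16.7k 7244)"
printf '16 qd  4k write: %s%% of VHD\n' "$(pct_of_vhd 49.3k 14.5k)"
printf '16 qd 64k write: %s%% of VHD\n' "$(pct_of_vhd 14.3k 6360)"
```

With these figures, the queue-depth-16 rows come out noticeably lower relative to VHD than the queue-depth-1 row, so the difference seems to grow with the number of I/Os in flight.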

-

Might be interesting to test a different cluster size and see the impact

https://docs.xcp-ng.org/storage/qcow2_faq/#can-we-change-the-qcow2-cluster-size

-

> Might be interesting to test a different cluster size and see the impact

I tested it with a cluster size of 2 megabytes, and nothing changed.
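When a cluster size change shows no effect, one sanity check is to confirm that the image really carries the new value. The sketch below assumes `qemu-img` is available wherever the image can be read and that the image can be inspected directly; neither is confirmed in this thread. `cluster_size_from_json` is a made-up helper name and `IMAGE_PATH` is a placeholder. It reads the `cluster-size` field (in bytes) from the JSON printed by `qemu-img info --output=json`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extract the "cluster-size" value (bytes) from the
# JSON text produced by `qemu-img info --output=json`, read on stdin.
cluster_size_from_json() {
    sed -n 's/.*"cluster-size"[[:space:]]*:[[:space:]]*\([0-9][0-9]*\).*/\1/p' | head -n 1
}

# Example invocation (assumption: IMAGE_PATH points at a readable qcow2 image):
# qemu-img info --output=json "$IMAGE_PATH" | cluster_size_from_json
```

A 2 MiB cluster size would show up as 2097152 here.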

-

@bogikornel I've also found that the I/O performance is somewhat lower in general.

Some of my profiling made it seem like the VHD backends were doing their low-level I/O with much bigger blocks than QCOW2 ... would that depend on the cluster size?

-

@pkgw I tested it with a cluster size of 2 megabytes. I got similar results to those with the default size.
