Alert: Control Domain Memory Usage

-

It depends how self-contained the modules are. For device drivers, it's usually feasible. For more core parts of the kernel, I think we should rather try to identify patches that look like they could fix the issue and rebuild the kernel with them.

We could also opt for a dichotomy approach between the main kernel and the alternate kernel, but since it takes days before one can be sure that there's no leak, it's not really doable, unless we find a way to reproduce the issue way faster (which is another thing in which users may help: try to provoke the memleak on purpose. High network load seems to be a lead.).

-

@olivierlambert said in Alert: Control Domain Memory Usage:

The latest report was on a NFS storage, however

lsmoddisplays various iSCSI modules loaded. So it doesn't mean it's not an iSCSI module issue:Isn't it SCSI rather than iSCSI here? However, maybe the leak is in SCSI layers indeed...

-

I am seeing similar behavior with Citrix Hypervisor 8.2LTSR after upgrading from 7.1CU2, which was not affected. We have a pool with 5 Poweredge R730 hosts and 2 R720 hosts. All have Intel 10G and 1G NICs (ixgbe and igb drivers) and we use iSCSI storage. I have had two hosts use up all their control domain memory, requiring an evacuate/reboot of the host. One host was the pool master, which runs only one VM (xen orchestra appliance) but is generally busy with various iSCSI tasks due to snapshot coalesce after daily backups. The other host has ~20 VMs that are pretty busy with network activity. No userspace processes that seem to be using an abnormal amount of memory.

-

Thanks, this is good to have confirmation of what we thought about CH 8.2 but couldn't prove!

-

@stormi I've just opened a Citrix case on the issue, but I wouldn't expect much help there, and definitely wouldn't expect anything quickly.

-

@fasterfourier Well, we've also tried to make the issue known to Citrix before so maybe your confirmation that it does not only happen in XCP-ng will be enough to trigger some movement about it. A memory leak that makes the host unusable is not a small issue.

-

@stormi The ID for the case I just opened is 80240347. If you have a bugtracker issue open, you may want to mention that ticket. I just now opened the ticket, though, so it will be a while before it makes its way out of tier 1, etc.

EDIT: Had the wrong case number at first. Updated case number.

-

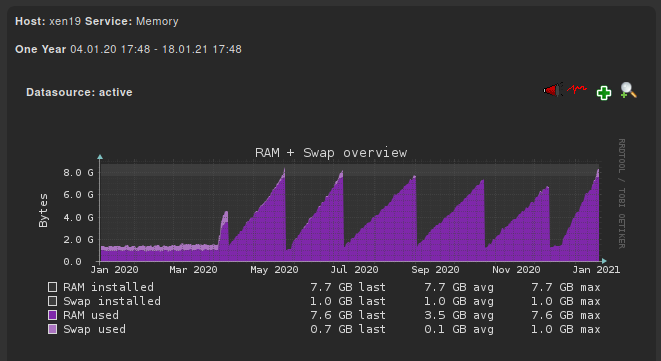

We also have an issue with growing control domain memory:

- XCP-ng 8.2

- NFS shared storage

- the poolmaster (xen19 is one of them) are more affected than pool members

Today I install the alternate kernel on one of our poolmaster to see if that resolves our issue.

-

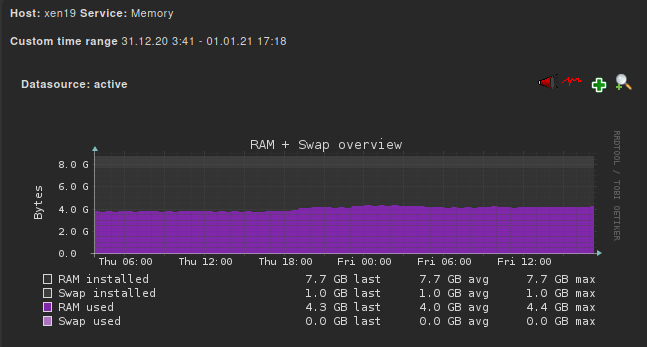

I noticed in my monitoring graphs, that since we have this issue, the SWAP is not used like before the issue:

-

looked in my yum.log on this server (xen19):

our problems startet exactly since "Apr 10 18:10:29 Installed: kernel-4.19.19-6.0.10.1.xcpng8.1.x86_64"

yum.log.4.gz:Oct 03 17:35:54 Installed: kernel-4.4.52-4.0.7.1.x86_64 yum.log.4.gz:Nov 20 18:29:29 Updated: kernel-4.4.52-4.0.12.x86_64 yum.log.2.gz:Oct 10 20:19:31 Updated: kernel-4.4.52-4.0.13.x86_64 yum.log.1:Apr 10 18:10:29 Installed: kernel-4.19.19-6.0.10.1.xcpng8.1.x86_64 yum.log.1:Jul 07 17:46:34 Updated: kernel-4.19.19-6.0.11.1.xcpng8.1.x86_64 yum.log.1:Dec 10 17:59:07 Updated: kernel-4.19.19-6.0.12.1.xcpng8.1.x86_64 yum.log.1:Dec 19 13:53:39 Updated: kernel-4.19.19-6.0.13.1.xcpng8.1.x86_64 yum.log.1:Dec 19 13:55:20 Updated: kernel-4.19.19-7.0.9.1.xcpng8.2.x86_64 yum.log:Jan 18 17:35:07 Installed: kernel-alt-4.19.142-1.xcpng8.2.x86_64 -

@borzel How frequently do you restart VMs? And what's the last dom-id?

# xl list -

@r1 in general we do not restart many of our VMs, its all very static, only manual operated

xen19 is now rebootet (we need it in production) with kernel-alt - highest id is currently 4

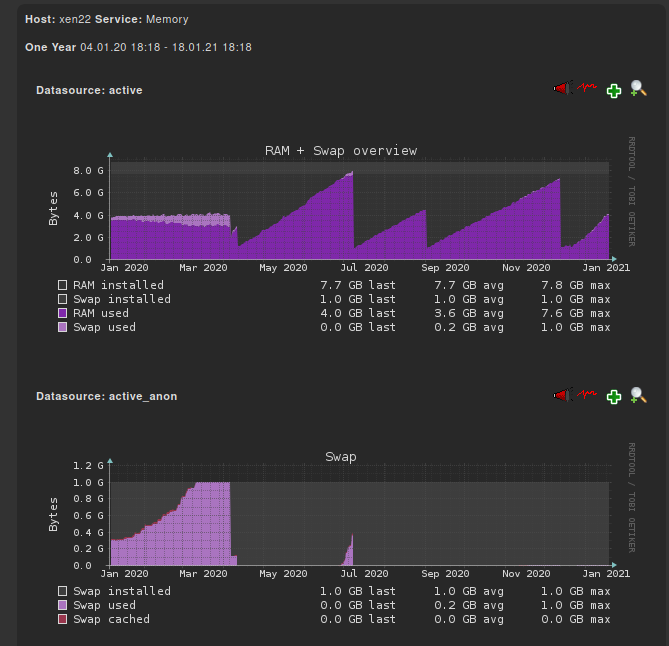

xen22 (pool master of another affected pool) - highest id is curently 30

memory graphs of xen22

yum.log of xen22 (Problem here also after installing kernel-4.19.19-6.0.10.1.xcpng8.1.x86_64)

yum.log.5.gz:Dec 19 00:52:47 Updated: kernel-4.4.52-4.0.12.x86_6 yum.log.3.gz:Nov 08 10:07:40 Updated: kernel-4.4.52-4.0.13.x86_64 yum.log.1:Apr 10 20:31:01 Installed: kernel-4.19.19-6.0.10.1.xcpng8.1.x86_64 yum.log.1:Aug 31 23:10:50 Updated: kernel-4.19.19-6.0.11.1.xcpng8.1.x86_64 yum.log.1:Dec 11 18:00:54 Updated: kernel-4.19.19-6.0.12.1.xcpng8.1.x86_64 yum.log.1:Dec 19 12:52:00 Updated: kernel-4.19.19-6.0.13.1.xcpng8.1.x86_64 yum.log.1:Dec 19 12:54:13 Updated: kernel-4.19.19-7.0.9.1.xcpng8.2.x86_64 -

@borzel Between 4.19.19-6.0.9 to 4.19.19-6.0.10, following two patches were added.

0001-block-cleanup-__blkdev_issue_discard.patch 0001-block-fix-32-bit-overflow-in-__blkdev_issue_discard.patchBoth are well vetted and seems stable without any further changes in them. Was there anything else updated along with kernel?

-

@r1 yes, ever line "installed" in yum.log is an Upgrade from XCP-ng.

Problems started with XCP-ng 8.x -

@borzel did you "yum upgrade" from 7.x from 8.x?

-

@stormi on server xen19 I think so, on server xen22 I'm not sure

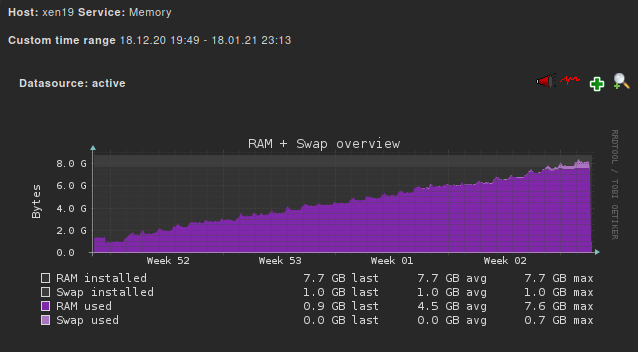

I looked more close on my memory graphs and saw, that the memory baseline increases every night:

"bump" every day:

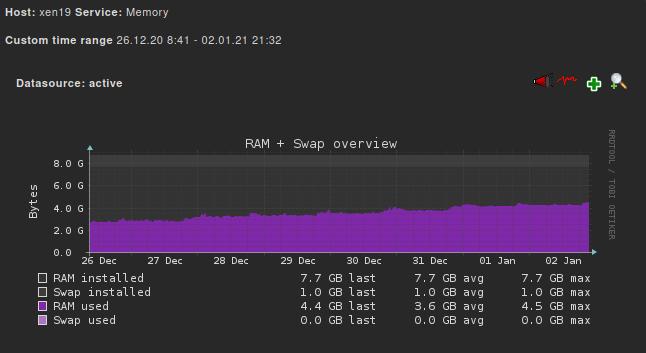

closer look in week 53:

Dez 31. - Jan 01.

Our Backups run from 18.00 till 3 or 4 in the morning (including coalesce).

--> maybe the heavy IO load leads to memory leaks "somewhere"?

-

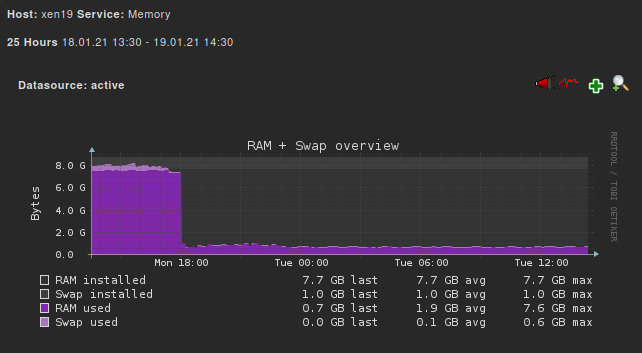

Good news from the kernel-alt (server xen19): No RAM leaks so far

-

At least that's consistent

Thanks for the feedback @borzel

Thanks for the feedback @borzel -

@borzel

yum upgradefrom 7.x to 8.x is not supported, so it's likely that your host isn't in a perfectly clean state.This is unrelated to the memory leak, but could cause other kinds of issues. Basically, scripts that should have run during the RPM upgrade to ensure the final state is consistent with what you'd have from an ISO upgrade either don't exist or haven't been tested.

-

@stormi I'm not complete sure if I did the iso upgrade or not...

But it's a good idea to reinstall the poolmaster from scratch...

Hello! It looks like you're interested in this conversation, but you don't have an account yet.

Getting fed up of having to scroll through the same posts each visit? When you register for an account, you'll always come back to exactly where you were before, and choose to be notified of new replies (either via email, or push notification). You'll also be able to save bookmarks and upvote posts to show your appreciation to other community members.

With your input, this post could be even better 💗

Register Login