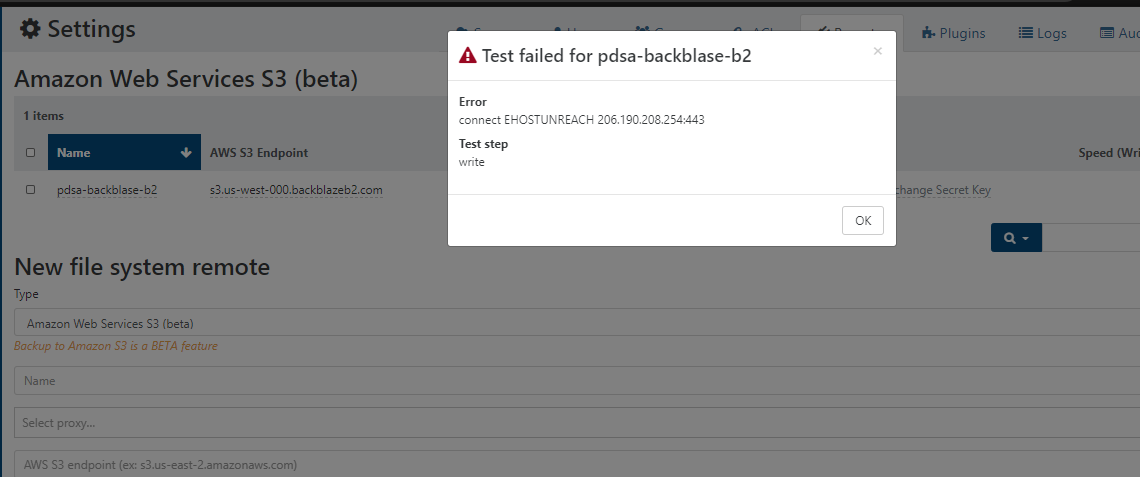

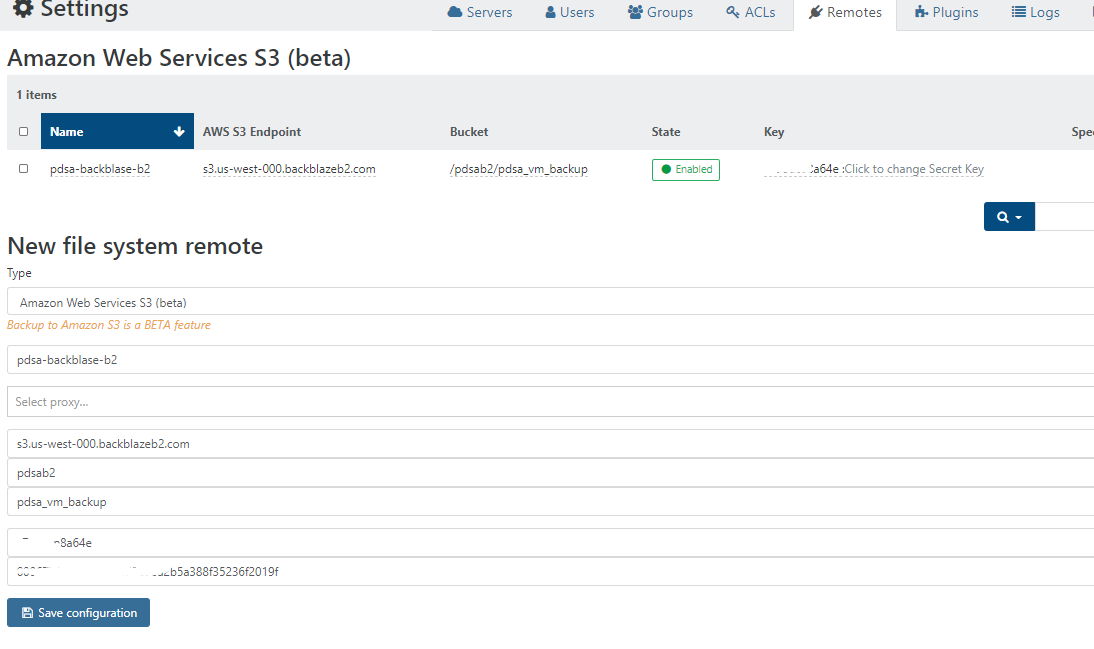

Backblaze B2 / Amazon S3 as remote in XOA

-

@shorian I forget whether you're building from source; would you mind trying this code with a small amount of memory? https://github.com/vatesfr/xen-orchestra/pull/5579

I removed the keepalive in there.
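For readers following along, here is a minimal sketch of the Node.js option involved in "removing the keepalive". It is not the code from PR 5579. It assumes the S3 client is given a custom `https.Agent`; the AWS SDK for JavaScript v2 accepts one through `httpOptions.agent`. One common cause of "socket hang up" errors is a pooled keep-alive socket that the remote end has already closed being reused for a new request. Disabling keep-alive sidesteps that case because every request then opens a fresh connection.

```javascript
// Sketch only: shows the Node.js agent option, not the actual XO change.
const https = require('node:https');

// keepAlive: false -> each request opens a fresh TCP/TLS connection, which is
// closed once the response has been read, so no pooled socket is ever reused.
const noKeepAliveAgent = new https.Agent({ keepAlive: false });

// keepAlive: true -> finished sockets return to a pool and are reused by later
// requests; if the server has silently closed one, the next request sent on it
// can fail with ECONNRESET / "socket hang up".
const keepAliveAgent = new https.Agent({ keepAlive: true, maxSockets: 4 });

// Hypothetical wiring for the AWS SDK for JavaScript v2 (kept as a plain
// object here so the sketch runs without the SDK installed).
const s3ClientOptions = { httpOptions: { agent: noKeepAliveAgent } };
```

The trade-off is extra connection setup (TCP plus TLS handshake) per request, in exchange for never sending a request on a socket the server may already have dropped.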

-

@nraynaud Superb! I can't get into this until tomorrow evening, but I'll be on it as soon as I can. Bear with me...

-

@nraynaud OK, so far so good; I haven't had a timeout yet. However, the backups are reported as being much smaller: the overall cumulative size has dropped from over 20 GB to under 5 GB, which would avoid the problem in any case.

There are no changes to the settings (zstd, normal snapshot without memory). All I can think of is that there were previously a lot of resends, which inflated the data size reported within XO. Unless I've picked up a much-improved algorithm by building that commit rather than the released branch, I'm a little confused.

I will take a look at the size of the actual backups as held on the remote (Backblaze B2) compared with the reported size in XO to see if I can substantiate the above paragraph.

Meanwhile, I'll keep running backups to soak test it but so far we're looking good!

-

@shorian Just a reminder: don't forget to test your restores as well.

Making a backup is only half of having working backups.

-

@dustinb Concur 100%. My current focus is on confirming that the error doesn't recur and understanding why what I'm seeing differs from previous backups. I 100% agree that restores should be tested before this goes into production, and I'll do so myself once I'm confident the symptom has been resolved.

-

@nraynaud Unfortunately, after a good start, I'm now seeing AWS.S3.upload "socket hang up" errors:

```
"message": "Error calling AWS.S3.upload: socket hang up", "name": "Error", "stack": "Error: Error calling AWS.S3.upload: socket hang up\n at rethrow (/opt/xo/xo-builds/xen-orchestra-202102171611/node_modules/@sullux/aws-sdk/webpack:/lib/proxy.js:114:1)\n at tryCatcher (/opt/xo/xo-builds/xen-orchestra-202102171611/node_modules/bluebird/js/release/util.js:16:23)\n at Promise._settlePromiseFromHandler (/opt/xo/xo-builds/xen-orchestra-202102171611/node_modules/bluebird/js/release/promise.js:547:31)\n at Promise._settlePromise (/opt/xo/xo-builds/xen-orchestra-202102171611/node_modules/bluebird/js/release/promise.js:604:18)\n at Promise._settlePromise0 (/opt/xo/xo-builds/xen-orchestra-202102171611/node_modules/bluebird/js/release/promise.js:649:10)\n at Promise._settlePromises (/opt/xo/xo-builds/xen-orchestra-202102171611/node_modules/bluebird/js/release/promise.js:725:18)\n at _drainQueueStep (/opt/xo/xo-builds/xen-orchestra-202102171611/node_modules/bluebird/js/release/async.js:93:12)\n at _drainQueue (/opt/xo/xo-builds/xen-orchestra-202102171611/node_modules/bluebird/js/release/async.js:86:9)\n at Async._drainQueues (/opt/xo/xo-builds/xen-orchestra-202102171611/node_modules/bluebird/js/release/async.js:102:5)\n at Immediate.Async.drainQueues [as _onImmediate] (/opt/xo/xo-builds/xen-orchestra-202102171611/node_modules/bluebird/js/release/async.js:15:14)\n at processImmediate (internal/timers.js:461:21)" }
```

This doesn't happen for all the VMs being uploaded, usually only for one of the three. The task then stays open and runs until the 3-hour timeout, even though this particular batch normally takes about 30 minutes.

After 3 successful runs, this has now occurred on each of the following 3 runs. I am going to clear out the target completely and see if that makes any difference. (Note that I am using Backblaze B2, not AWS.) Let me know if you want me to send you the full log, an extract of SMlog, or anything else.

To @DustinB's point above, I have tried one restore and it came up fine, but please don't consider this a full and comprehensive test.

-

Thank you all. I would never have guessed that uploading a file over HTTP would be this hard. I'll dig deeper.

-

@nraynaud Bizarre, isn't it? I'm very grateful for your efforts.

Some more news: one big challenge seems to be concurrency. Things improve dramatically if concurrency is set to 1; as soon as something else runs in parallel, we run into the socket failures. I'm trying your change on another box to see whether the outcomes differ, but in summary, here's what I'm seeing so far:

- Concurrency = 1: works fine the first time, then fails occasionally (roughly 20% of the time?) thereafter

- Concurrency > 1: almost impossible to get a run through; sometimes one or two VMs back up OK, but not predictably, and never the entire group

- Any failure: impossible to get a clean run again until the S3 target has been cleaned out entirely

So it appears there may be a lock somewhere when more than one stream is running, plus some kind of conflict when a run terminates prematurely and leaves the target in an unexpected state for the next run.
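The "concurrency = 1" workaround boils down to never having more than one upload in flight. As an illustration only, here is a small async limiter under the assumption that each VM upload is an async function; `runWithConcurrency` is a hypothetical helper, not taken from XO's code.

```javascript
// Hypothetical helper: run async task functions with at most `limit` in flight.
// With limit = 1 the tasks run strictly one after another.
async function runWithConcurrency(tasks, limit) {
  if (!Number.isInteger(limit) || limit < 1) {
    throw new RangeError('limit must be a positive integer');
  }
  const results = new Array(tasks.length);
  let next = 0; // index of the next task that has not been started yet

  // Each worker claims the next unstarted task until none remain. Claiming is
  // safe because JavaScript runs this code on a single thread between awaits.
  async function worker() {
    while (next < tasks.length) {
      const i = next++;
      results[i] = await tasks[i]();
    }
  }

  const workers = [];
  for (let w = 0; w < Math.min(limit, tasks.length); w++) {
    workers.push(worker());
  }
  await Promise.all(workers);
  return results; // results keep the original task order
}
```

Results are stored by index, so the output order matches the input order even when tasks finish out of order at higher limits.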

-

@shorian Thanks. I'm a bit lost; I'll read up on the Node.js Agent class.

-

@nraynaud Removing concurrency seems to be effective; it's certainly a substantial improvement over the original backup before your changes.

We have managed to get things to run pretty much every time now, by running with concurrency set to '1' and being careful on the timing to ensure no other backups accidentally run in parallel.

I've checked a couple of restores and they seem to be OK too.

The only thing I would highlight is that, now that I'm not getting the failures, I can't tell whether the problem of the remote recovering from a partial/failed backup is resolved. I guess I need to pull the network plug while a backup is running, but I would need to test that on a different machine in the lab rather than where we're running at the moment.

-

OK, I spent the weekend running backups continuously across a number of boxes.

Good news: the fix seems to have solved things, provided you only ever use concurrency 1 and there are no conflicting or overlapping backups.

Restores are working fine for me too.

In short, @nraynaud, it's a substantial improvement and makes this usable for me. A huge thank you.

-

Thanks for the feedback, @shorian!

-

@shorian I would like to abuse your patience again, by asking you to test this branch: https://github.com/vatesfr/xen-orchestra/tree/nr-s3-fix-big-backups2

The idea is that the backup is uploaded without any sort of smart upload system or queue.

Thank you, Nico

-

@nraynaud Building XO now; will run a few backups overnight.

Anything in particular you'd like me to look for or test?

-

@shorian Thanks. If you could first try with no concurrency, to prove that the code works, and then with your normal concurrency, to check whether the issue is fixed, that would be great.

This code is expected to be more vulnerable to losing the connection to S3, but I think the risk is low. It now uses a straight HTTP POST.
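To show why a single straight request is more exposed to connection loss than a chunked or multipart upload, here is a minimal sketch under heavy assumptions: plain `http` (no TLS), no S3/B2 request signing, and one request per object with no parts and no queue. `uploadSingleRequest` is a hypothetical helper, not the code on that branch. The post says POST; S3's single-object upload (PutObject) is an HTTP PUT, so the method is a parameter defaulting to PUT. A real PutObject also needs a known Content-Length, which this sketch does not set.

```javascript
const http = require('node:http');

// Hypothetical helper: stream `source` to `url` as one request body.
// If the connection drops mid-transfer the whole upload fails and must be
// restarted from byte 0; there are no individual parts to retry.
function uploadSingleRequest(url, source, { method = 'PUT', headers = {} } = {}) {
  return new Promise((resolve, reject) => {
    const req = http.request(url, { method, headers }, (res) => {
      const chunks = [];
      res.on('data', (chunk) => chunks.push(chunk));
      res.on('error', reject);
      res.on('end', () => {
        const body = Buffer.concat(chunks).toString('utf8');
        if (res.statusCode >= 200 && res.statusCode < 300) {
          resolve({ statusCode: res.statusCode, body });
        } else {
          reject(new Error(`upload failed with HTTP ${res.statusCode}: ${body}`));
        }
      });
    });
    // A dropped connection surfaces here, e.g. as "socket hang up".
    req.on('error', reject);
    // If reading the source fails, abort the request as well.
    source.on('error', (err) => {
      req.destroy(err);
      reject(err);
    });
    // pipe() writes the data and ends the request when the source ends;
    // without a Content-Length header Node sends the body chunked.
    source.pipe(req);
  });
}
```

The design choice is simplicity: with no queue or part bookkeeping there is less state to go wrong, but any network interruption costs the entire transfer.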

-

@nraynaud Bit of a hiccup, I'm afraid; it errors out with "Not implemented":

```
{ "data": { "mode": "full", "reportWhen": "failure" }, "id": "1615570162008", "jobId": "b947a31c-35f7-45d9-af88-628ec027c71e", "jobName": "B2", "message": "backup", "scheduleId": "8481e1b8-3d22-4502-abb7-9f0413aca0a3", "start": 1615570162008, "status": "pending", "infos": [ { "data": { "vms": [ "25a4e2a6-b116-7cee-b94b-f3553fe001f2", "24103ce1-e47b-fe12-4029-d643e0382f08", "60ac886d-8adf-9e21-4baa-b14e0fc1b2bb" ] }, "message": "vms" } ], "tasks": [ { "data": { "type": "VM", "id": "25a4e2a6-b116-7cee-b94b-f3553fe001f2" }, "id": "1615570162050", "message": "backup VM", "start": 1615570162050, "status": "pending", "tasks": [ { "id": "1615570162064", "message": "snapshot", "start": 1615570162064, "status": "success", "end": 1615570163265, "result": "294d65fc-ddc7-b8ad-68b8-d14e36b6838e" }, { "data": { "id": "df07dd6b-753e-40f2-89be-75404bac2c1e", "type": "remote", "isFull": true }, "id": "1615570163286", "message": "export", "start": 1615570163286, "status": "failure", "tasks": [ { "id": "1615570164234", "message": "transfer", "start": 1615570164234, "status": "failure", "end": 1615570164257, "result": { "message": "Not implemented", "name": "Error", "stack": "Error: Not implemented\n at S3Handler._createWriteStream (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/fs/src/abstract.js:428:11)\n at S3Handler._createOutputStream (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/fs/src/abstract.js:412:25)\n at S3Handler.createOutputStream (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/fs/src/abstract.js:129:12)\n at RemoteAdapter.outputStream (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/backups/RemoteAdapter.js:513:34)\n at /opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/backups/_FullBackupWriter.js:70:7\n at FullBackupWriter.run (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/backups/_FullBackupWriter.js:69:5)\n at Array.<anonymous> (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/backups/_VmBackup.js:209:9)" } } ], "end": 1615570164258, "result": { "message": "Not implemented", "name": "Error", "stack": "Error: Not implemented\n at S3Handler._createWriteStream (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/fs/src/abstract.js:428:11)\n at S3Handler._createOutputStream (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/fs/src/abstract.js:412:25)\n at S3Handler.createOutputStream (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/fs/src/abstract.js:129:12)\n at RemoteAdapter.outputStream (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/backups/RemoteAdapter.js:513:34)\n at /opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/backups/_FullBackupWriter.js:70:7\n at FullBackupWriter.run (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/backups/_FullBackupWriter.js:69:5)\n at Array.<anonymous> (/opt/xo/xo-builds/xen-orchestra-202103121606/@xen-orchestra/backups/_VmBackup.js:209:9)" } } ] } ] }
```

I'll try over the weekend with a fresh install. I'm backing up to Backblaze B2 rather than S3; is that likely to be the issue?

-

@shorian Ah sorry, I think S3 is completely broken at the moment.

-

@nraynaud I'm getting the "Not implemented" error on all branches at the moment. Is there a functioning, albeit imperfect, branch that we can run with as a temporary measure? Thanks, chap.

-

Is this still broken on all branches?

It's a shame; it was working relatively well when used carefully.

-

But we should soon have something on track.