Issue with SR and coalesce

-

It's OK, buddy, please don't feel bad about it. I'm following the discussion and trying to figure out what's happening too.

I added another SR and I'm monitoring the status. I'm still having performance issues (one SR is SAS), but the coalesce number finally seems to be decreasing.

I'm watching the backup jobs run and will keep you all posted.

-

Yeah, I am at a loss. We have restored the systems from full backups onto a new SR multiple times, and it just will not let us back them up while they are running. With them turned off, it works fine.

It's like XCP is failing to make a snapshot, but you can make snapshots manually and it's fine.

Maybe the new SR is broken? If that's the case, how does that happen when it was just created and added? Is there an SR repair tool?

I cannot find the error we are getting anywhere, and the fact that there are no logs of coalescing makes me think it's just not doing its job.

-

@lucasljorge How full is the storage, percentage-wise? If over around 90%, a coalesce operation sometimes will not work. You may have to shuffle some of your VM storage to a different SR.
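To make the percentage check concrete, here is a minimal Python sketch. It assumes you already have the SR's used and total sizes in bytes (for example from the SR's `physical-utilisation` and `physical-size` fields); the function names and the default 90% threshold are illustrative only, taken from the rough figure mentioned in this post rather than from any documented limit.

```python
def sr_usage_percent(physical_utilisation, physical_size):
    """Return how full an SR is, as a percentage of its physical size."""
    if physical_size <= 0:
        raise ValueError("physical_size must be positive")
    return 100.0 * physical_utilisation / physical_size


def coalesce_at_risk(physical_utilisation, physical_size, threshold_percent=90.0):
    """True when usage is above the threshold.

    The 90% default is an assumption based on the rule of thumb in this
    thread, not an official limit; adjust it for your environment.
    """
    return sr_usage_percent(physical_utilisation, physical_size) > threshold_percent
```

Both arguments only need to be in the same unit, so raw byte counts can be passed straight in.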

If your host is not responding, you may have to reboot it to clear out stuck tasks if the "xe task-cancel" command isn't working.

-

@Byte_Smarter Hard to tell what's happening. The Xen version here is 8.2.

I read somewhere in other XCP documentation that the SR has to be at least 30% free for backup and coalesce jobs to run properly.

I think that was the problem here: the SR filled up and we were unable to run any job. But I had to recreate the old SR to get a successful response from Orchestra.

Did you try to migrate the VDIs to another SR?

-

@tjkreidl it was around 85% full.

-

@lucasljorge It's a brand-new SR that we moved them to, with TBs of free space.

-

@Byte_Smarter said in Issue with SR and coalesce:

@lucasljorge It's a brand-new SR that we moved them to, with TBs of free space.

By "moved" I mean we used a backup restore instead of a migration.

-

@Byte_Smarter I would try updating XCP first; I'm just not sure of the impact.

I also don't know whether there are differences between migrating and restoring a backup to the master/Orchestra.

-

Yeah, we are thinking that too. It would involve downtime on all 10 of the systems in the pool to complete, so that would be a scheduled task.

-

@lucasljorge That may be the issue. That's pretty full for a coalesce to work!

-

@tjkreidl If a disk that is not full still has the issue, would that be a completely different issue?

-

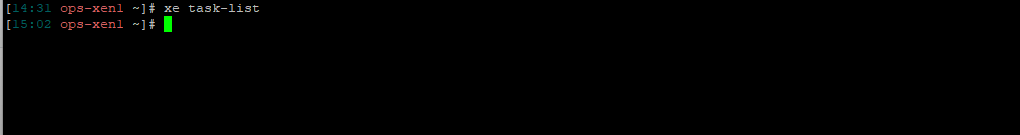

@Byte_Smarter Sure, that's of course possible. Does "xe task-list" show any currently running tasks? Anything else of possible value in the logs?
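If the task list is long, a small script can pull out the tasks that are still pending. The following Python sketch assumes the usual `xe` record layout (one `name ( RO): value` field per line, records separated by blank lines) and that the `status` field is included in the output; the function names are hypothetical helpers, not part of any XCP-ng tooling.

```python
import re

# One field line of xe record output, e.g. "   status ( RO): pending".
FIELD = re.compile(r"^\s*([\w-]+)\s*\(\s*\w+\s*\)\s*:\s?(.*)$")


def parse_xe_records(text):
    """Turn xe list-style output into a list of {field: value} dicts."""
    records = []
    current = {}
    for line in text.splitlines():
        if not line.strip():
            # A blank line ends the current record, if one was started.
            if current:
                records.append(current)
                current = {}
            continue
        match = FIELD.match(line)
        if match:
            current[match.group(1)] = match.group(2).strip()
    if current:
        records.append(current)
    return records


def pending_tasks(records):
    """Keep only the records whose status field is 'pending'."""
    return [r for r in records if r.get("status") == "pending"]
```

Feed it the captured text of "xe task-list" and print the `uuid` and `name-label` of whatever `pending_tasks` returns.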

-

-

@Byte_Smarter Hmmm, can you migrate any VMs' storage to other SRs to free up more space?

-

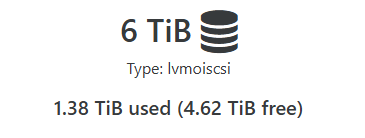

@tjkreidl I am not sure if you saw my earlier post, I have several TB of space

-

@Byte_Smarter said in Issue with SR and coalesce:

@tjkreidl I am not sure if you saw my earlier post, I have several TB of space

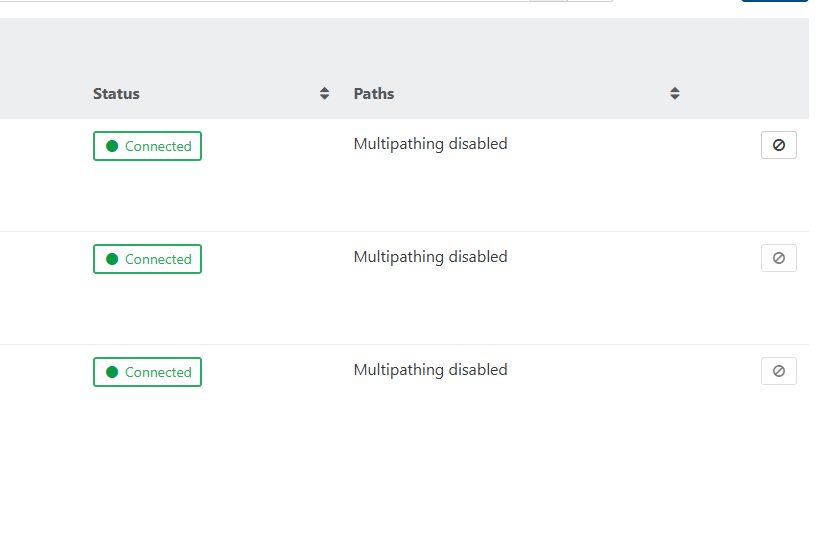

Are you sure multipathing is disabled on all hosts in the pool?

Also, could you share a larger portion of /var/log/SMlog?

-

Multipathing is not enabled; the three connections are to each host in the pool.

The SR ID the issue seems to be on is

6eb76845-35be-e755-4d7a-5419049aca87

Mar 15 04:06:41 ops-xen2 SM: [19269] lock: tried lock /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr, acquired: True (exists: True)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] Found 23 VDIs for deletion:
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *9573449e[VHD](250.000G//8.000M|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *707d5286[VHD](20.000G//6.070G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *44f96838[VHD](20.000G//2.789G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *a87215e0[VHD](20.000G//1.258G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *8a544363[VHD](20.000G//19.680G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *b27b0d95[VHD](250.000G//66.812G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *54ea774f[VHD](250.000G//19.277G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *7054e859[VHD](250.000G//22.547G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *b6af6f2f[VHD](250.000G//51.547G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *64ffcd3c[VHD](250.000G//40.863G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *db977fb7[VHD](250.000G//11.977G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *08b5e4b4[VHD](250.000G//207.605G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *a8248b10[VHD](3.879G//3.023G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *4bbdc102[VHD](40.000G//4.586G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *eee08599[VHD](40.000G//6.500G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *8580e5aa[VHD](40.000G//20.414G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *29ad53b8[VHD](20.000G//812.000M|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *000e5169[VHD](20.000G//4.344G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *1a591e58[VHD](20.000G//6.000G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *af10d4dd[VHD](20.000G//3.355G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *cd2634d6[VHD](20.000G//4.727G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *496fdfc3[VHD](20.000G//19.672G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] *b23fbb00[VHD](150.000G//150.297G|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] Deleting unlinked VDI *9573449e[VHD](250.000G//8.000M|n)
Mar 15 04:06:41 ops-xen2 SMGC: [19269] Checking with slave OpaqueRef:0745579d-7eca-4dae-9502-68b645fd9957 (path /dev/VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87/VHD-9573449e-fed0-4d9d-ad5a-8ff3c0dd69d8)
Mar 15 04:06:42 ops-xen2 SMGC: [19269] call-plugin returned: 'True'
Mar 15 04:06:42 ops-xen2 SMGC: [19269] Checking with slave OpaqueRef:ec4a67fd-9558-47cd-9c87-9be5aeb1601c (path /dev/VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87/VHD-9573449e-fed0-4d9d-ad5a-8ff3c0dd69d8)
Mar 15 04:07:03 ops-xen2 SM: [28516] Matched SCSIid, updating 36e843b63e2d6a93dd5e7d4263d804bdf
Mar 15 04:07:03 ops-xen2 SM: [28516] Matched SCSIid, updating 36001405928bd167d3b1ed4351d8a50d8
Mar 15 04:07:03 ops-xen2 SM: [28516] Matched SCSIid, updating 36001405991b910dd5e91d4aaed9a5edf
Mar 15 04:07:03 ops-xen2 SM: [28516] MPATH: Update done
Mar 15 04:07:03 ops-xen2 SM: [28682] Matched SCSIid, updating 36e843b63e2d6a93dd5e7d4263d804bdf
Mar 15 04:07:03 ops-xen2 SM: [28682] Matched SCSIid, updating 36001405928bd167d3b1ed4351d8a50d8
Mar 15 04:07:03 ops-xen2 SM: [28682] Matched SCSIid, updating 36001405991b910dd5e91d4aaed9a5edf
Mar 15 04:07:03 ops-xen2 SM: [28682] MPATH: Update done
Mar 15 04:07:04 ops-xen2 SM: [29001] Matched SCSIid, updating 36e843b63e2d6a93dd5e7d4263d804bdf
Mar 15 04:07:04 ops-xen2 SM: [29001] Matched SCSIid, updating 36001405928bd167d3b1ed4351d8a50d8
Mar 15 04:07:04 ops-xen2 SM: [29001] Matched SCSIid, updating 36001405991b910dd5e91d4aaed9a5edf
Mar 15 04:07:04 ops-xen2 SM: [29001] MPATH: Update done
Mar 15 04:07:04 ops-xen2 SM: [29280] Matched SCSIid, updating 36e843b63e2d6a93dd5e7d4263d804bdf
Mar 15 04:07:04 ops-xen2 SM: [29280] Matched SCSIid, updating 36001405928bd167d3b1ed4351d8a50d8
Mar 15 04:07:04 ops-xen2 SM: [29280] Matched SCSIid, updating 36001405991b910dd5e91d4aaed9a5edf
Mar 15 04:07:04 ops-xen2 SM: [29280] MPATH: Update done
Mar 15 04:07:04 ops-xen2 SM: [29546] Matched SCSIid, updating 36e843b63e2d6a93dd5e7d4263d804bdf
Mar 15 04:07:04 ops-xen2 SM: [29546] Matched SCSIid, updating 36001405928bd167d3b1ed4351d8a50d8
Mar 15 04:07:04 ops-xen2 SM: [29546] Matched SCSIid, updating 36001405991b910dd5e91d4aaed9a5edf
Mar 15 04:07:04 ops-xen2 SM: [29546] MPATH: Update done
Mar 15 04:07:06 ops-xen2 SM: [29642] Setting LVM_DEVICE to /dev/disk/by-scsid/36e843b63e2d6a93dd5e7d4263d804bdf
Mar 15 04:07:06 ops-xen2 SM: [29642] Setting LVM_DEVICE to /dev/disk/by-scsid/36e843b63e2d6a93dd5e7d4263d804bdf
Mar 15 04:07:06 ops-xen2 SM: [29642] lock: opening lock file /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr
Mar 15 04:07:06 ops-xen2 SM: [29642] LVMCache created for VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87
Mar 15 04:07:06 ops-xen2 SM: [29642] ['/sbin/vgs', '--readonly', 'VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87']
Mar 15 04:07:06 ops-xen2 SM: [29642] pread SUCCESS
Mar 15 04:07:06 ops-xen2 SM: [29642] Failed to lock /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr on first attempt, blocked by PID 19269
Mar 15 04:07:06 ops-xen2 SM: [29673] sr_update {'sr_uuid': '18858bd8-5341-0a01-84af-a841ec689894', 'subtask_of': 'DummyRef:|aab5875a-ae37-4d0c-934b-bf39e06fa7de|SR.stat', 'args': [], 'host_ref': 'OpaqueRef:d1ef8654-1582-4fbf-bff9-a0c18af279a5', 'session_ref': 'OpaqueRef:c79da288-ef14-4c7c-9c64-fbc0581c2eee', 'dev$
Mar 15 04:07:31 ops-xen2 SM: [19269] lock: released /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr
Mar 15 04:07:31 ops-xen2 SM: [29642] lock: acquired /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr
Mar 15 04:07:31 ops-xen2 SM: [19269] lock: released /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/running
Mar 15 04:07:31 ops-xen2 SMGC: [19269] GC process exiting, no work left
Mar 15 04:07:31 ops-xen2 SM: [19269] lock: released /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/gc_active
Mar 15 04:07:31 ops-xen2 SM: [29642] LVMCache: will initialize now
Mar 15 04:07:31 ops-xen2 SM: [29642] LVMCache: refreshing
Mar 15 04:07:31 ops-xen2 SM: [29642] ['/sbin/lvs', '--noheadings', '--units', 'b', '-o', '+lv_tags', '/dev/VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87']
Mar 15 04:07:31 ops-xen2 SMGC: [19269] SR 6eb7 ('HyperionOps') (41 VDIs in 12 VHD trees): no changes
Mar 15 04:07:31 ops-xen2 SMGC: [19269] *~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*
Mar 15 04:07:31 ops-xen2 SMGC: [19269] ***********************
Mar 15 04:07:31 ops-xen2 SMGC: [19269] * E X C E P T I O N *
Mar 15 04:07:31 ops-xen2 SMGC: [19269] gc: EXCEPTION <class 'XenAPI.Failure'>, ['XENAPI_PLUGIN_FAILURE', 'multi', 'CommandException', 'Input/output error']
Mar 15 04:07:31 ops-xen2 SMGC: [19269] File "/opt/xensource/sm/cleanup.py", line 2961, in gc
Mar 15 04:07:31 ops-xen2 SMGC: [19269] _gc(None, srUuid, dryRun)
Mar 15 04:07:31 ops-xen2 SMGC: [19269] File "/opt/xensource/sm/cleanup.py", line 2846, in _gc
Mar 15 04:07:31 ops-xen2 SMGC: [19269] _gcLoop(sr, dryRun)
Mar 15 04:07:31 ops-xen2 SMGC: [19269] File "/opt/xensource/sm/cleanup.py", line 2813, in _gcLoop
Mar 15 04:07:31 ops-xen2 SMGC: [19269] sr.garbageCollect(dryRun)
Mar 15 04:07:31 ops-xen2 SMGC: [19269] File "/opt/xensource/sm/cleanup.py", line 1651, in garbageCollect
Mar 15 04:07:31 ops-xen2 SMGC: [19269] self.deleteVDIs(vdiList)
Mar 15 04:07:31 ops-xen2 SMGC: [19269] File "/opt/xensource/sm/cleanup.py", line 1665, in deleteVDIs
Mar 15 04:07:31 ops-xen2 SMGC: [19269] self.deleteVDI(vdi)
Mar 15 04:07:31 ops-xen2 SMGC: [19269] File "/opt/xensource/sm/cleanup.py", line 2426, in deleteVDI
Mar 15 04:07:31 ops-xen2 SMGC: [19269] self._checkSlaves(vdi)
Mar 15 04:07:31 ops-xen2 SMGC: [19269] File "/opt/xensource/sm/cleanup.py", line 2650, in _checkSlaves
Mar 15 04:07:31 ops-xen2 SMGC: [19269] self.xapi.ensureInactive(hostRef, args)
Mar 15 04:07:31 ops-xen2 SMGC: [19269] File "/opt/xensource/sm/cleanup.py", line 332, in ensureInactive
Mar 15 04:07:31 ops-xen2 SMGC: [19269] hostRef, self.PLUGIN_ON_SLAVE, "multi", args)
Mar 15 04:07:31 ops-xen2 SMGC: [19269] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 264, in __call__
Mar 15 04:07:31 ops-xen2 SMGC: [19269] return self.__send(self.__name, args)
Mar 15 04:07:31 ops-xen2 SMGC: [19269] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 160, in xenapi_request
Mar 15 04:07:31 ops-xen2 SMGC: [19269] result = _parse_result(getattr(self, methodname)(*full_params))
Mar 15 04:07:31 ops-xen2 SMGC: [19269] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 238, in _parse_result
Mar 15 04:07:31 ops-xen2 SMGC: [19269] raise Failure(result['ErrorDescription'])
Mar 15 04:07:31 ops-xen2 SMGC: [19269]
Mar 15 04:07:31 ops-xen2 SMGC: [19269] *~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*
Mar 15 04:07:31 ops-xen2 SMGC: [19269] * * * * * SR 6eb76845-35be-e755-4d7a-5419049aca87: ERROR
Mar 15 04:07:31 ops-xen2 SMGC: [19269]
Mar 15 04:07:31 ops-xen2 SM: [29642] pread SUCCESS
Mar 15 04:07:31 ops-xen2 SM: [29642] lock: released /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr
Mar 15 04:07:31 ops-xen2 SM: [29642] Entering _checkMetadataVolume
Mar 15 04:07:31 ops-xen2 SM: [29642] lock: acquired /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr
Mar 15 04:07:31 ops-xen2 SM: [29642] sr_scan {'sr_uuid': '6eb76845-35be-e755-4d7a-5419049aca87', 'subtask_of': 'DummyRef:|5e97641e-9c29-4d5b-b42c-c9df3a794e69|SR.scan', 'args': [], 'host_ref': 'OpaqueRef:d1ef8654-1582-4fbf-bff9-a0c18af279a5', 'session_ref': 'OpaqueRef:2684e3de-0605-4442-9678-3d3c07da1b7d', 'devic$
Mar 15 04:07:31 ops-xen2 SM: [29642] LVHDSR.scan for 6eb76845-35be-e755-4d7a-5419049aca87
Mar 15 04:07:31 ops-xen2 SM: [29642] ['/sbin/vgs', '--noheadings', '--nosuffix', '--units', 'b', 'VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87']
Mar 15 04:07:31 ops-xen2 SM: [29642] pread SUCCESS
Mar 15 04:07:31 ops-xen2 SM: [29642] LVMCache: refreshing
Mar 15 04:07:31 ops-xen2 SM: [29642] ['/sbin/lvs', '--noheadings', '--units', 'b', '-o', '+lv_tags', '/dev/VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87']
Mar 15 04:07:31 ops-xen2 SM: [29642] pread SUCCESS
Mar 15 04:07:31 ops-xen2 SM: [29642] ['/usr/bin/vhd-util', 'scan', '-f', '-m', 'VHD-*', '-l', 'VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87']
Mar 15 04:07:31 ops-xen2 SM: [29642] pread SUCCESS
Mar 15 04:07:31 ops-xen2 SM: [29642] Scan found hidden leaf (4bbdc102-8a1d-4c8a-991f-8f1feee2740b), ignoring
Mar 15 04:07:31 ops-xen2 SM: [29642] Scan found hidden leaf (9573449e-fed0-4d9d-ad5a-8ff3c0dd69d8), ignoring
Mar 15 04:07:31 ops-xen2 SM: [29642] Scan found hidden leaf (b27b0d95-2911-4240-b510-f065212a6d22), ignoring
Mar 15 04:07:31 ops-xen2 SM: [29642] Scan found hidden leaf (29ad53b8-7e2d-4472-a8f1-410d362e84b8), ignoring
Mar 15 04:07:31 ops-xen2 SM: [29642] Scan found hidden leaf (a8248b10-9c14-4d88-bf63-3dcae5b81ce6), ignoring
Mar 15 04:07:31 ops-xen2 SM: [29642] Scan found hidden leaf (b23fbb00-ca47-4493-8058-50affdb2b348), ignoring
Mar 15 04:07:31 ops-xen2 SM: [29642] Scan found hidden leaf (707d5286-bc23-4e4a-8981-e6f772a193c3), ignoring
Mar 15 04:07:31 ops-xen2 SM: [29642] ['/sbin/vgs', '--noheadings', '--nosuffix', '--units', 'b', 'VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87']
Mar 15 04:07:32 ops-xen2 SM: [29642] pread SUCCESS
Mar 15 04:07:32 ops-xen2 SM: [29642] lock: opening lock file /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/running
Mar 15 04:07:32 ops-xen2 SM: [29642] lock: tried lock /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/running, acquired: True (exists: True)
Mar 15 04:07:32 ops-xen2 SM: [29642] lock: released /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/running
Mar 15 04:07:32 ops-xen2 SM: [29642] Kicking GC
Mar 15 04:07:32 ops-xen2 SMGC: [29642] === SR 6eb76845-35be-e755-4d7a-5419049aca87: gc ===
Mar 15 04:07:32 ops-xen2 SMGC: [29802] Will finish as PID [29803]
Mar 15 04:07:32 ops-xen2 SMGC: [29642] New PID [29802]
Mar 15 04:07:32 ops-xen2 SM: [29803] lock: opening lock file /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/running
Mar 15 04:07:32 ops-xen2 SM: [29803] lock: opening lock file /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/gc_active
Mar 15 04:07:32 ops-xen2 SM: [29642] lock: released /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr
Mar 15 04:07:32 ops-xen2 SM: [29803] lock: opening lock file /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr
Mar 15 04:07:32 ops-xen2 SM: [29803] LVMCache created for VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87
Mar 15 04:07:32 ops-xen2 SM: [29803] lock: tried lock /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/gc_active, acquired: True (exists: True)
Mar 15 04:07:32 ops-xen2 SM: [29803] lock: tried lock /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr, acquired: True (exists: True)
Mar 15 04:07:32 ops-xen2 SM: [29803] LVMCache: refreshing
Mar 15 04:07:32 ops-xen2 SM: [29803] ['/sbin/lvs', '--noheadings', '--units', 'b', '-o', '+lv_tags', '/dev/VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87']
Mar 15 04:07:32 ops-xen2 SM: [29803] pread SUCCESS
Mar 15 04:07:32 ops-xen2 SM: [29803] ['/usr/bin/vhd-util', 'scan', '-f', '-m', 'VHD-*', '-l', 'VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87']
Mar 15 04:07:32 ops-xen2 SM: [29820] Setting LVM_DEVICE to /dev/disk/by-scsid/36e843b63e2d6a93dd5e7d4263d804bdf
Mar 15 04:07:32 ops-xen2 SM: [29820] Setting LVM_DEVICE to /dev/disk/by-scsid/36e843b63e2d6a93dd5e7d4263d804bdf
Mar 15 04:07:32 ops-xen2 SM: [29820] lock: opening lock file /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr
Mar 15 04:07:32 ops-xen2 SM: [29820] LVMCache created for VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87
Mar 15 04:07:32 ops-xen2 SM: [29820] ['/sbin/vgs', '--readonly', 'VG_XenStorage-6eb76845-35be-e755-4d7a-5419049aca87']
Mar 15 04:07:32 ops-xen2 SM: [29820] pread SUCCESS
Mar 15 04:07:32 ops-xen2 SM: [29820] Failed to lock /var/lock/sm/6eb76845-35be-e755-4d7a-5419049aca87/sr on first attempt, blocked by PID 29803
Mar 15 04:07:32 ops-xen2 SM: [29803] pread SUCCESS
Mar 15 04:07:32 ops-xen2 SMGC: [29803] SR 6eb7 ('HyperionOps') (41 VDIs in 12 VHD trees):
Mar 15 04:07:32 ops-xen2 SMGC: [29803] *a8bd427a[VHD](10.000G//9.953G|n)
Mar 15 04:07:32 ops-xen2 SMGC: [29803] *35567cc8[VHD](10.000G//4.809G|n)
Mar 15 04:07:32 ops-xen2 SMGC: [29803] *a5c1e8d8[VHD](10.000G//3.547G|n)

-

I can share the log in its entirety if needed just need to make sure it does not contain any confidential details
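When an SMlog excerpt is pasted into a post, the entries can run together and the GC exception gets buried. As a rough aid, this Python sketch splits such text back into one entry per syslog timestamp and picks out the SMGC exception lines. It assumes every entry starts with a timestamp of the form "Mar 15 04:06:41"; the function names are hypothetical, and any text before the first timestamp is dropped.

```python
import re

# Syslog-style timestamp that starts each SMlog entry, e.g. "Mar 15 04:06:41 ".
TIMESTAMP = re.compile(r"[A-Z][a-z]{2} \d{1,2} \d{2}:\d{2}:\d{2} ")


def split_entries(blob):
    """Split fused log text into a list with one string per log entry."""
    # Collapse line breaks and repeated spaces so a timestamp that was
    # wrapped across two lines is matched as a single unit again.
    flat = " ".join(blob.split())
    starts = [m.start() for m in TIMESTAMP.finditer(flat)]
    bounds = starts + [len(flat)]
    return [flat[a:b].strip() for a, b in zip(bounds, bounds[1:])]


def gc_exceptions(entries):
    """Return the garbage-collector entries that report an exception."""
    return [e for e in entries if "SMGC:" in e and "EXCEPTION <" in e]
```

Running `gc_exceptions(split_entries(text))` on a pasted excerpt gives the failing call in one line, which is usually the first thing to quote when asking for help.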

-

I am on the Discord and can screen-share the logs if that helps at all.
