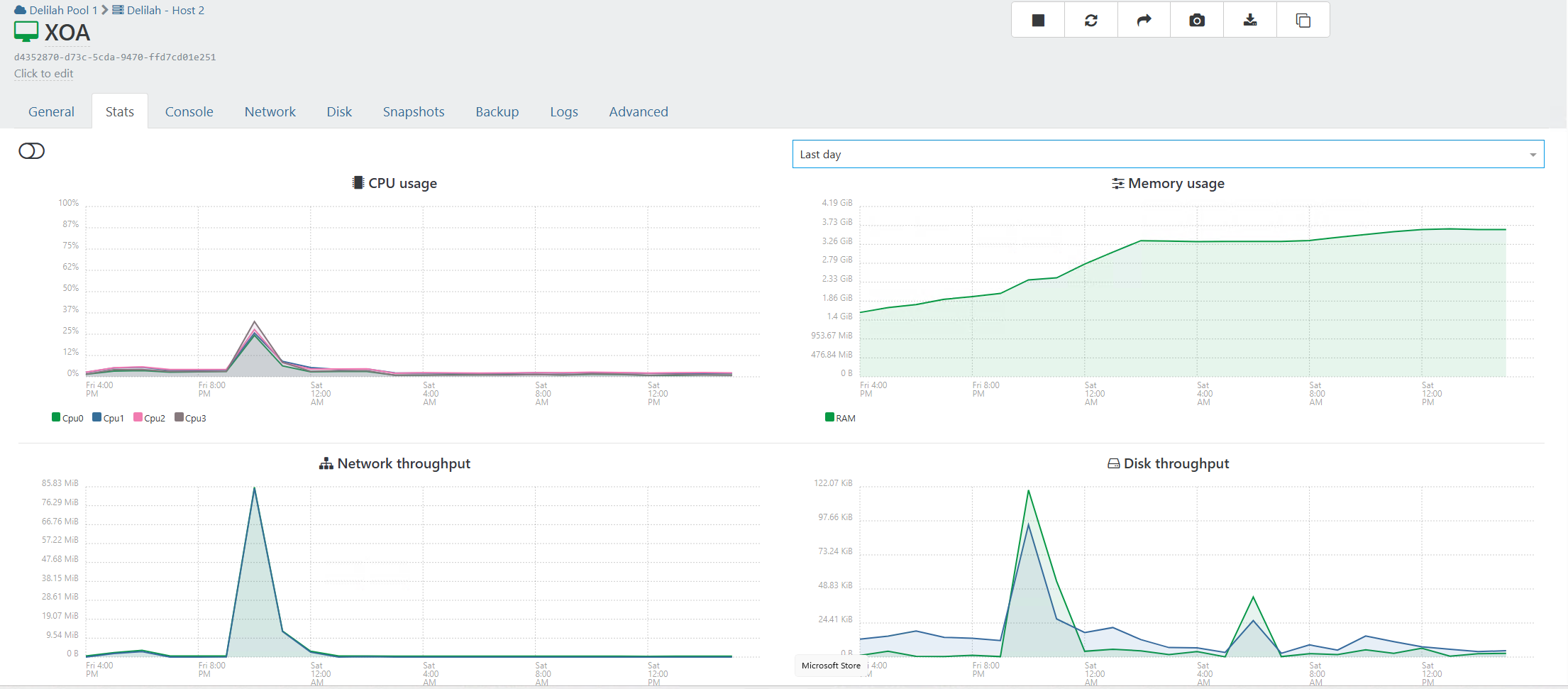

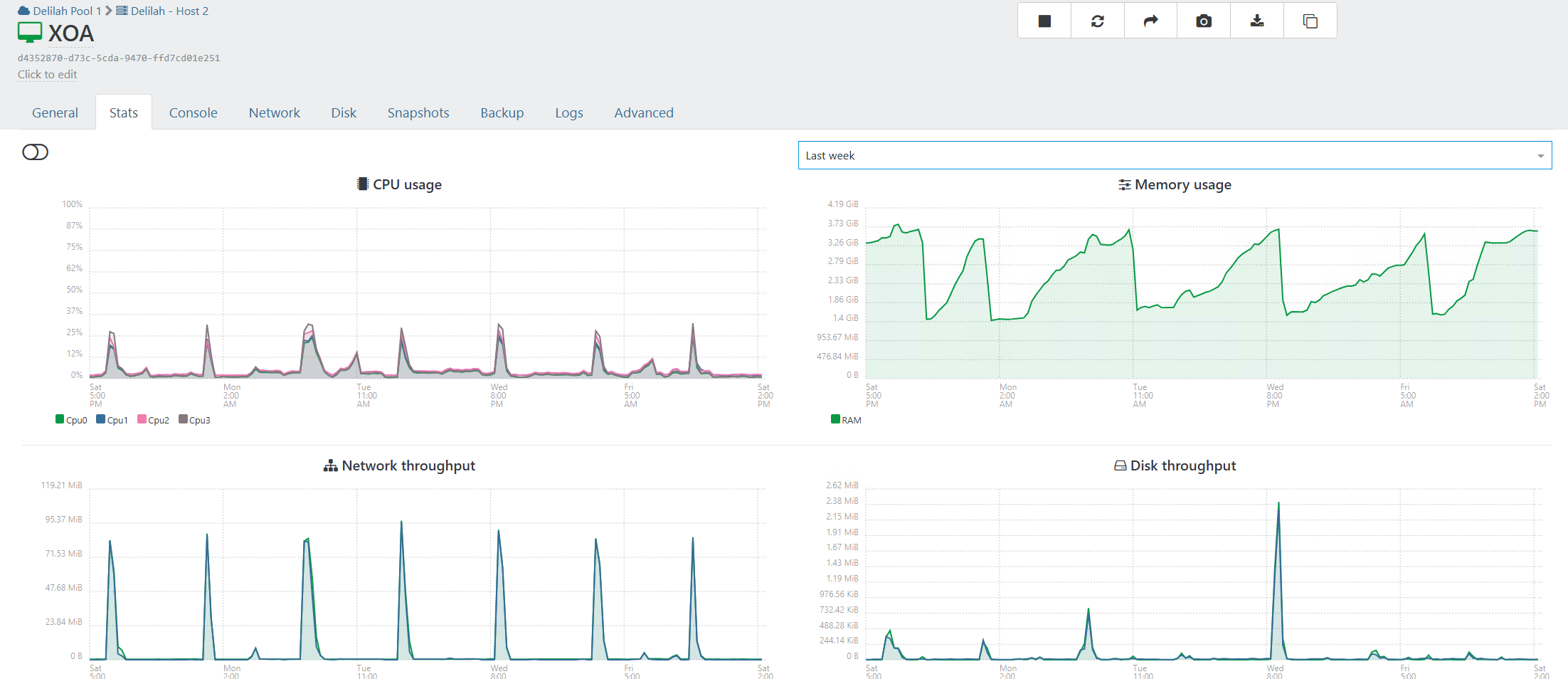

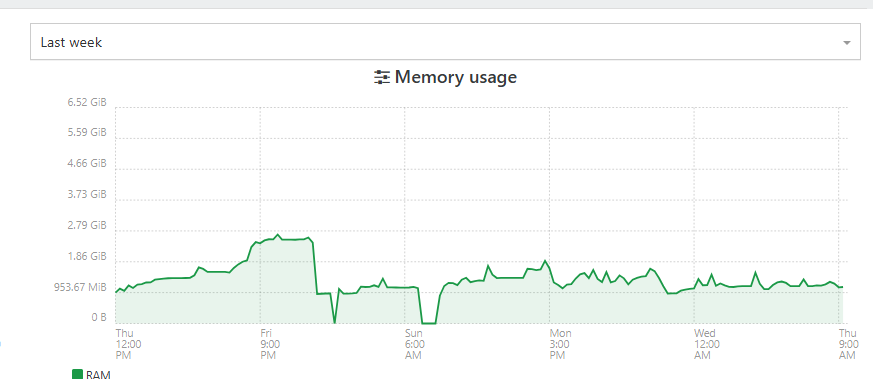

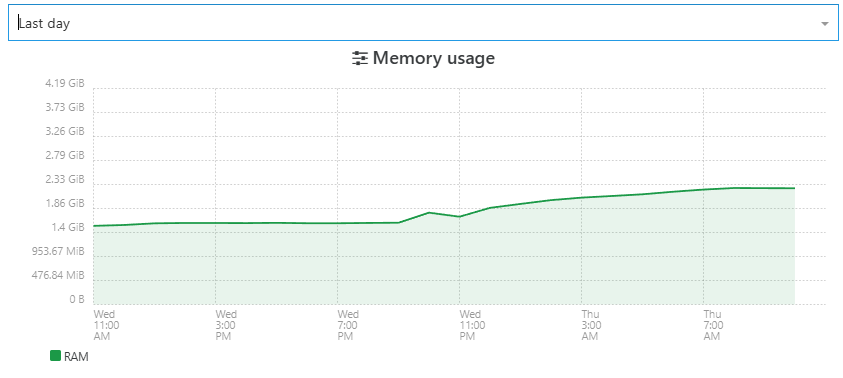

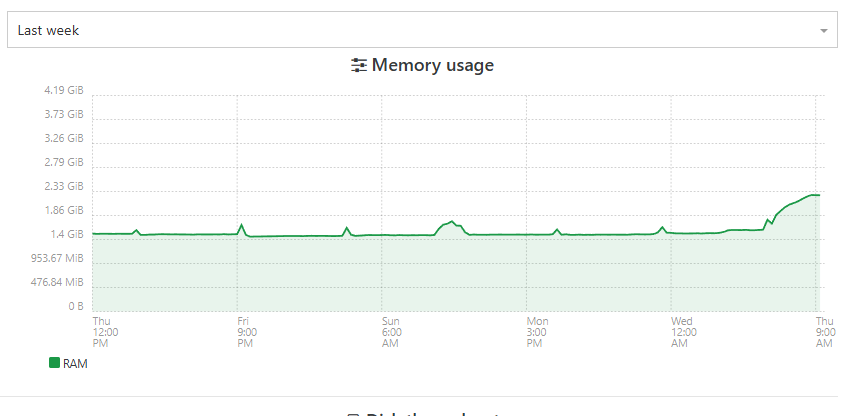

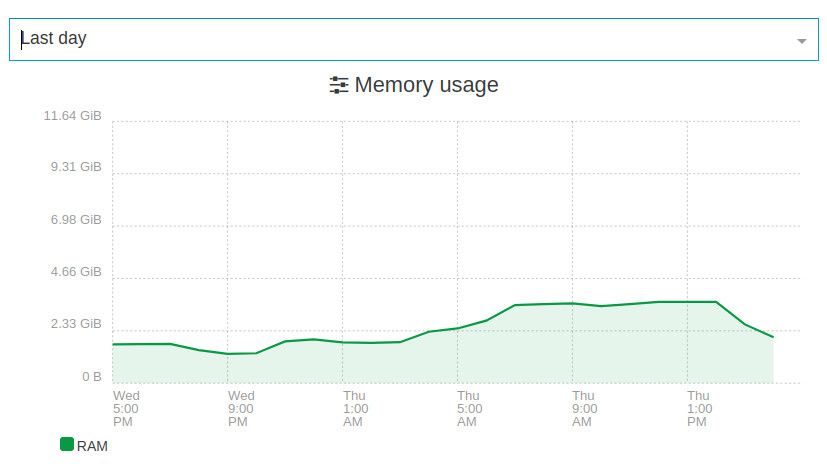

XOA - Memory Usage

-

@MajorP93 I think there are multiple memory issues. Pillow and acebmxer use a proxy, so backup memory is handled differently.

Are you using XO from source or an XOA? Would you be OK to export a memory heap?

-

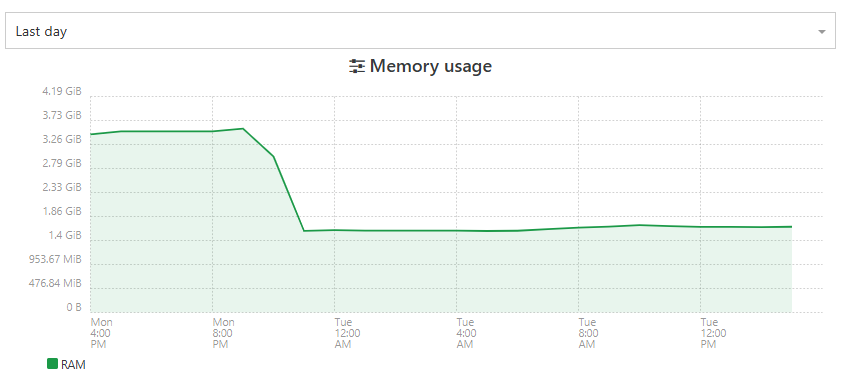

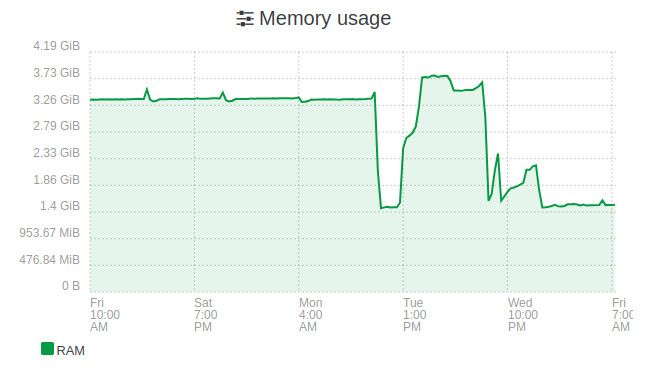

Maybe some good news?

Memory usage has been completely stable since yesterday's patch.

-

@florent Yes I am using XO from sources:

Currently on the branch that @bastien-nollet asked me to test.

Yeah sure I can export a memory heap. Can you give me instructions for that?

Also: currently I have one backup job running. Is it better to take the memory heap snapshot while the backup is running or afterwards?

Best regards

-

@MajorP93 I am not sure which branch that is.

Can you switch to https://github.com/vatesfr/xen-orchestra/pull/9725 then?

When running this branch:

1. Wait for the memory to fill.
2. Find the process running XO (`dist/cli.mjs`).
3. Send it the signal: `kill -SIGUSR2` followed by the process ID.
4. A file is created in `/tmp/xo-server-${process.pid}-${Date.now()}.heapsnapshot`.

Note that these exports can contain sensitive data. This will freeze your XO for anywhere from a few seconds to 2 minutes, and memory consumption will be higher afterwards, but it will be very useful for identifying exactly what is leaking.

The file will be a few hundred MB
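For anyone who prefers to script the steps above, here is a minimal Python sketch. It assumes a Linux host with `/proc` and that the xo-server command line contains `dist/cli.mjs`, as described in the post. The function names (`find_xo_pids`, `request_heap_snapshot`, `list_snapshots`) are made up for illustration and are not part of Xen Orchestra.

```python
import os
import signal
from pathlib import Path


def find_xo_pids(proc_root="/proc", marker="dist/cli.mjs"):
    """Return PIDs whose command line mentions the xo-server entry point."""
    pids = []
    for entry in Path(proc_root).iterdir():
        if not entry.name.isdigit():
            continue  # skip non-process entries such as /proc/self
        try:
            cmdline = (entry / "cmdline").read_bytes()
        except OSError:
            continue  # process already exited or is not readable
        # /proc/<pid>/cmdline separates arguments with NUL bytes
        args = cmdline.replace(b"\0", b" ").decode(errors="replace")
        if marker in args:
            pids.append(int(entry.name))
    return sorted(pids)


def request_heap_snapshot(pid):
    """Send SIGUSR2; on the branch discussed above this triggers the export."""
    os.kill(pid, signal.SIGUSR2)


def list_snapshots(pid, tmp_dir="/tmp"):
    """List files matching xo-server-<pid>-<timestamp>.heapsnapshot."""
    return sorted(Path(tmp_dir).glob(f"xo-server-{pid}-*.heapsnapshot"))
```

Typical use would be `pid = find_xo_pids()[0]`, then `request_heap_snapshot(pid)`, then checking `list_snapshots(pid)` once XO responds again. The snapshot may contain sensitive data, so treat the resulting file accordingly.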

-

We merged the code into master (including the code that allows a heap memory export).

-

@florent Thanks!

I did not have time (yet) to look into the heap export due to weekend being in between.

I will update to current master and provide you with the heap export in the following days.

Best regards

-

No problem. The sooner we have these exports, the sooner we will be able to identify what is leaking in your usage, since we have already plugged the leaks we reproduced in our labs.

-

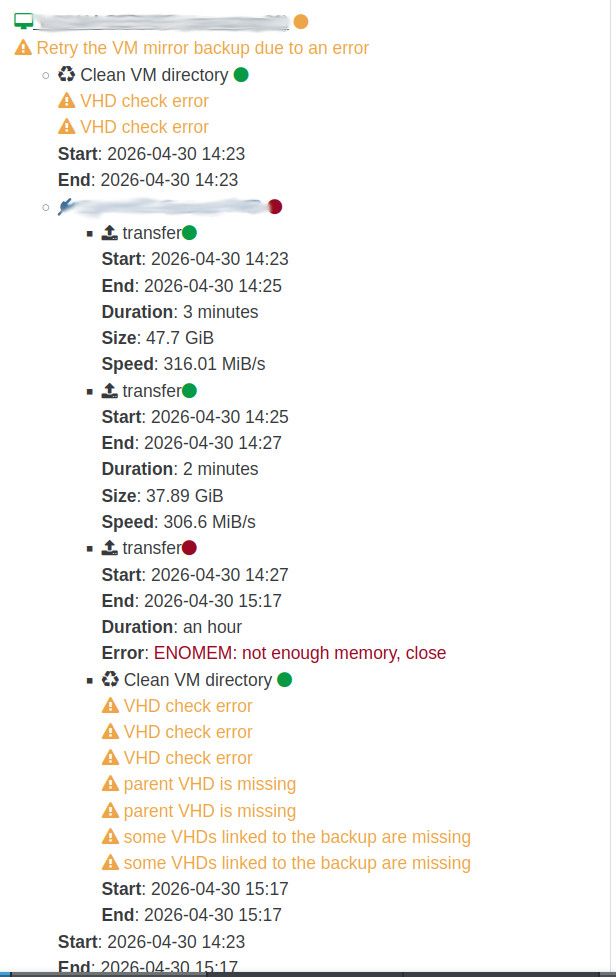

@florent Unfortunately Xen Orchestra RAM usage issue is back on my end.

During delta backups everything looked quite good but yesterday full backups were due according to our schedule.

The initial full backup succeeded, but during the mirror job that is started by the sequence after the delta job finished, the RAM issue got triggered.

We are using SMB backup remotes by the way.

The XO VM has 10GB RAM in total, plenty of which is free during backups. Still, the XO backup failed due to ENOMEM.

I can see in dmesg output on the Xen Orchestra VM:

    [71584.204045] kworker/u37:2: page allocation failure: order:4, mode:0x40d40(GFP_NOFS|__GFP_ZERO|__GFP_COMP), nodemask=(null),cpuset=/,mems_allowed=0
    [71584.204069] CPU: 4 UID: 0 PID: 75928 Comm: kworker/u37:2 Not tainted 6.18.25 #1 PREEMPT(lazy)
    [71584.204075] Hardware name: Xen HVM domU, BIOS 4.17 04/21/2026
    [71584.204077] Workqueue: writeback wb_workfn (flush-cifs-2)
    [71584.204087] Call Trace:
    [71584.204091] <TASK>
    [71584.204096] dump_stack_lvl+0x61/0x80
    [71584.204103] warn_alloc+0x151/0x1c0
    [71584.204109] ? psi_memstall_leave+0x83/0xa0
    [71584.204116] __alloc_pages_slowpath+0xdf2/0xe90
    [71584.204124] __alloc_frozen_pages_noprof+0x2bb/0x350
    [71584.204129] alloc_pages_mpol+0x107/0x190
    [71584.204135] ___kmalloc_large_node+0x46/0x100
    [71584.204140] __kmalloc_large_node_noprof+0x1d/0xd0
    [71584.204144] __kmalloc_noprof+0x358/0x5e0
    [71584.204150] ? crypt_message+0x50b/0x940 [cifs]
    [71584.204259] crypt_message+0x50b/0x940 [cifs]
    [71584.204365] smb3_init_transform_rq+0x278/0x310 [cifs]
    [71584.204458] smb_send_rqst+0x120/0x1c0 [cifs]
    [71584.204551] cifs_call_async+0x2b8/0x370 [cifs]
    [71584.204632] ? __pfx_smb2_writev_callback+0x10/0x10 [cifs]
    [71584.204714] smb2_async_writev+0x405/0x600 [cifs]
    [71584.204800] netfs_advance_write+0xcd/0xf0 [netfs]
    [71584.204827] netfs_write_folio+0x509/0x7c0 [netfs]
    [71584.204850] netfs_writepages+0x28f/0x440 [netfs]
    [71584.204872] do_writepages+0xdc/0x1f0
    [71584.204878] ? update_curr+0x2f/0x180
    [71584.204884] __writeback_single_inode+0x3e/0x320
    [71584.204889] writeback_sb_inodes+0x274/0x4a0
    [71584.204901] __writeback_inodes_wb+0x9a/0xf0
    [71584.204906] wb_writeback+0x15b/0x300
    [71584.204913] wb_workfn+0x3af/0x590
    [71584.204918] process_scheduled_works+0x1d7/0x420
    [71584.204923] worker_thread+0x21a/0x2f0
    [71584.204927] ? __pfx_worker_thread+0x10/0x10
    [71584.204930] kthread+0x201/0x260
    [71584.204937] ? __pfx_kthread+0x10/0x10
    [71584.204941] ret_from_fork+0x107/0x210
    [71584.204946] ? __pfx_kthread+0x10/0x10
    [71584.204950] ret_from_fork_asm+0x1a/0x30
    [71584.204956] </TASK>
    [71584.205026] Mem-Info:
    [71584.205031] active_anon:288751 inactive_anon:14365 isolated_anon:0 active_file:1227362 inactive_file:783958 isolated_file:0 unevictable:768 dirty:32364 writeback:1024 slab_reclaimable:113003 slab_unreclaimable:26821 mapped:47649 shmem:936 pagetables:6024 sec_pagetables:0 bounce:0 kernel_misc_reclaimable:0 free:40796 free_pcp:5103 free_cma:0
    [71584.205041] Node 0 active_anon:1155004kB inactive_anon:57460kB active_file:4909448kB inactive_file:3135832kB unevictable:3072kB isolated(anon):0kB isolated(file):0kB mapped:190596kB dirty:129456kB writeback:4096kB shmem:3744kB shmem_thp:0kB shmem_pmdmapped:0kB anon_thp:522240kB kernel_stack:7600kB pagetables:24096kB sec_pagetables:0kB all_unreclaimable? no Balloon:0kB
    [71584.205050] Node 0 DMA free:13312kB boost:0kB min:100kB low:124kB high:148kB reserved_highatomic:0KB free_highatomic:0KB active_anon:0kB inactive_anon:0kB active_file:0kB inactive_file:0kB unevictable:0kB writepending:0kB zspages:0kB present:15996kB managed:15360kB mlocked:0kB bounce:0kB free_pcp:0kB local_pcp:0kB free_cma:0kB
    [71584.205060] lowmem_reserve[]: 0 3720 9925 9925 9925
    [71584.205068] Node 0 DMA32 free:108944kB boost:0kB min:25084kB low:31352kB high:37620kB reserved_highatomic:0KB free_highatomic:0KB active_anon:527548kB inactive_anon:0kB active_file:73972kB inactive_file:2908844kB unevictable:0kB writepending:133552kB zspages:0kB present:3915188kB managed:3809364kB mlocked:0kB bounce:0kB free_pcp:16856kB local_pcp:3944kB free_cma:0kB
    [71584.205078] lowmem_reserve[]: 0 0 6205 6205 6205
    [71584.205085] Node 0 Normal free:43900kB boost:0kB min:42392kB low:52988kB high:63584kB reserved_highatomic:2048KB free_highatomic:2048KB active_anon:627456kB inactive_anon:57460kB active_file:4835476kB inactive_file:226988kB unevictable:3072kB writepending:0kB zspages:0kB present:6545408kB managed:6353952kB mlocked:0kB bounce:0kB free_pcp:664kB local_pcp:248kB free_cma:0kB
    [71584.205094] lowmem_reserve[]: 0 0 0 0 0
    [71584.205101] Node 0 DMA: 0*4kB 0*8kB 0*16kB 0*32kB 0*64kB 0*128kB 0*256kB 0*512kB 1*1024kB (U) 2*2048kB (UM) 2*4096kB (M) = 13312kB
    [71584.205120] Node 0 DMA32: 1553*4kB (UME) 1096*8kB (UME) 822*16kB (UME) 671*32kB (UM) 236*64kB (UM) 104*128kB (UM) 34*256kB (UM) 2*512kB (UM) 3*1024kB (M) 7*2048kB (M) 2*4096kB (M) = 113348kB
    [71584.205194] Node 0 Normal: 71*4kB (UME) 998*8kB (UM) 1524*16kB (UE) 280*32kB (UE) 11*64kB (UE) 0*128kB 0*256kB 0*512kB 0*1024kB 1*2048kB (H) 0*4096kB = 44364kB
    [71584.205216] Node 0 hugepages_total=0 hugepages_free=0 hugepages_surp=0 hugepages_size=1048576kB
    [71584.205219] Node 0 hugepages_total=0 hugepages_free=0 hugepages_surp=0 hugepages_size=2048kB
    [71584.205221] 2012251 total pagecache pages
    [71584.205223] 2 pages in swap cache
    [71584.205225] Free swap = 7294960kB
    [71584.205226] Total swap = 7294968kB
    [71584.205228] 2619148 pages RAM
    [71584.205229] 0 pages HighMem/MovableOnly
    [71584.205231] 74479 pages reserved
    [71584.205232] 0 pages hwpoisoned

My XO from sources instance is running 6.4.0 release commit.

Best regards

//EDIT: maybe the issue that I am experiencing from time to time is related to the SMB remote?

Maybe backups to an SMB remote use a different code path than e.g. NFS?
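As a reading aid for the dmesg output in this post, the numbers can be checked with a few lines of Python. This is only a rough sketch, not a diagnosis. It assumes 4 kB pages, so an `order:4` request is one contiguous 64 kB block, and it copies the `Node 0 Normal` free-list line and the page counts verbatim from the log. The helper name `parse_buddy_line` is made up for illustration.

```python
import re

PAGE_KB = 4  # assumption: 4 kB pages


def parse_buddy_line(line):
    """Map block size in kB -> number of free blocks for one free-list line."""
    return {int(size): int(count)
            for count, size in re.findall(r"(\d+)\*(\d+)kB", line)}


# Copied from the dmesg output above.
normal_zone = (
    "Node 0 Normal: 71*4kB (UME) 998*8kB (UM) 1524*16kB (UE) 280*32kB (UE) "
    "11*64kB (UE) 0*128kB 0*256kB 0*512kB 0*1024kB 1*2048kB (H) 0*4096kB = 44364kB"
)

blocks = parse_buddy_line(normal_zone)
needed_kb = PAGE_KB * 2 ** 4  # order:4 -> 16 contiguous pages

total_free_kb = sum(size * count for size, count in blocks.items())
large_free_kb = sum(size * count for size, count in blocks.items()
                    if size >= needed_kb)

ram_kb = 2619148 * PAGE_KB        # "pages RAM"
pagecache_kb = 2012251 * PAGE_KB  # "total pagecache pages"

print(f"order-4 request size: {needed_kb} kB")
print(f"Normal zone free: {total_free_kb} kB, "
      f"of which in blocks >= {needed_kb} kB: {large_free_kb} kB")
print(f"page cache share of RAM: {pagecache_kb / ram_kb:.0%}")
```

Under these assumptions, most of the RAM is page cache, and only a small part of the Normal zone's free memory sits in blocks large enough for a 64 kB contiguous allocation. That would be consistent with an allocation failure in the kernel's CIFS write path even though the VM looks like it has plenty of memory available, but it does not by itself show where the pressure comes from.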

-

SMB could be the culprit, not in XO directly, but it could trigger different things. It would be interesting to see whether you can reproduce the issue on NFS.

-

@acebmxer

If you are OK with it, you can do a heap memory export (note that you will probably have to restart the XOA afterwards to free the memory). If you can do it, we will collect the memory file on Monday (or when you are ready) and see whether this is a new cause or the return of an old one.

-

I didn't set up SSH on the work XOA; I just set the password, but it needs a reboot for that to work. The tunnel is still open if you don't mind doing it; otherwise I will need to reboot the XOA to get in myself.

-

@acebmxer Not yet.

(We are a little underwater with the release patch, but we will do it.)
