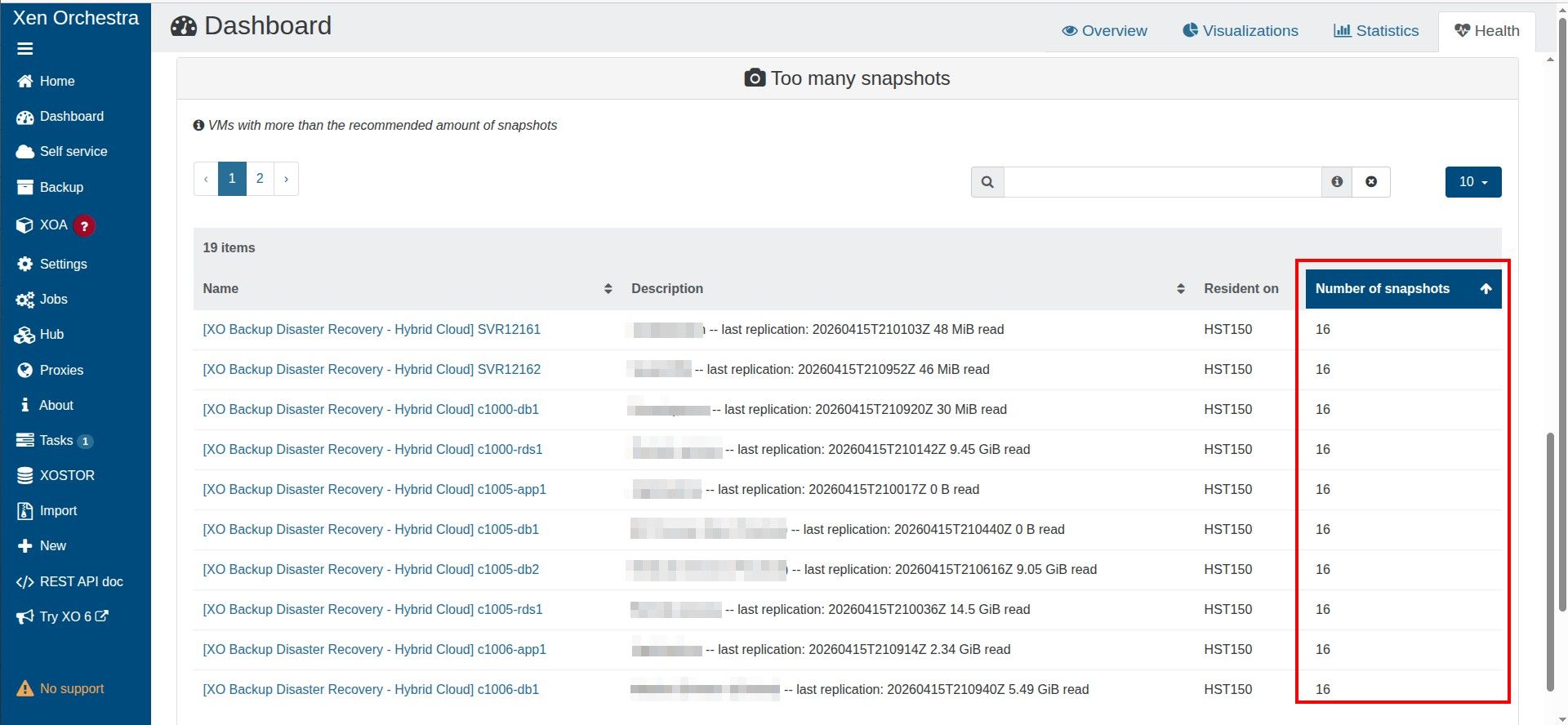

Too many snapshots

-

@tjkreidl in DASHBOARD/HEALTH/UNHEALTHY VDIs

there you can see GC doing its magic, with VDI Chain Length progressivly going down to zero when deleting a snapshot.my 2 cents, he has multiple VMs in the same CR job, and GC is sequential. in the one hour timeframe, next CR is launched and stumble upon VMs that are not yet sanitized

downing the number of VM per job could do the trick, and chain/sequence 2 CR jobs with a dispatch of the VMs

-

-

@Pilow Ah, right. You'd have to check the time stamp if you worked on automating this.

So maybe @McHenry could write a script to do the backups and that way, ensure there was no on-going task in progress before kicking off the next backup instance.

It could be run periodically from a cron job and if there's still on-going activity, just exit and try again the next time. -

@tjkreidl yes would be a good way to deal with the original problem

hope backup Devs @florent and/or @bastien-nollet can implement this, would profit to everyone

-

@Pilow Right, just skip the currently planned backup if a coalesce is still in progress and check again the next scheduled backup. This could potentially be implemented in the existing backup code.

-

@tjkreidl either skip or wait until possible

I'm used to veeam backup & recovery that is very resilient to these corner cases, on vmware if it understands that a Datastore has too many snapshots, or some backup ressouce is not ready yet (you can throttle number of active workers on a repository or per proxy), veeam will just wait for availability and keep going.problem with this way of doing is it can shift in time the schedule where you expect CR or backup to be happening.

but can be a problem to skip altogether, if @mchenry need compliancy of a certain number of replicas happening

waiting vs skipping, in a perfect world the devs give us a switch to choose our destiny

ps : I know XO Backup is not to be 100% mapped on Veeam functionnalities, but some of these functionnalities would really augment the XO Backup experience. just have to take into account Xen environment (no GC in vmware infrastructure)

-

@Pilow The other thing to to consider is being cognizant of how long your backups typically take (or even, planning a worst-case condition) and defining the backup intervals accordingly.

In other words, if you know you cannot consistently do your incremental backups in less than an hour, perform them 90 minutes or two hours between backups. It's better IMO to have a solid backup less frequently than have them fail on a regular basis. -

-

@tjkreidl

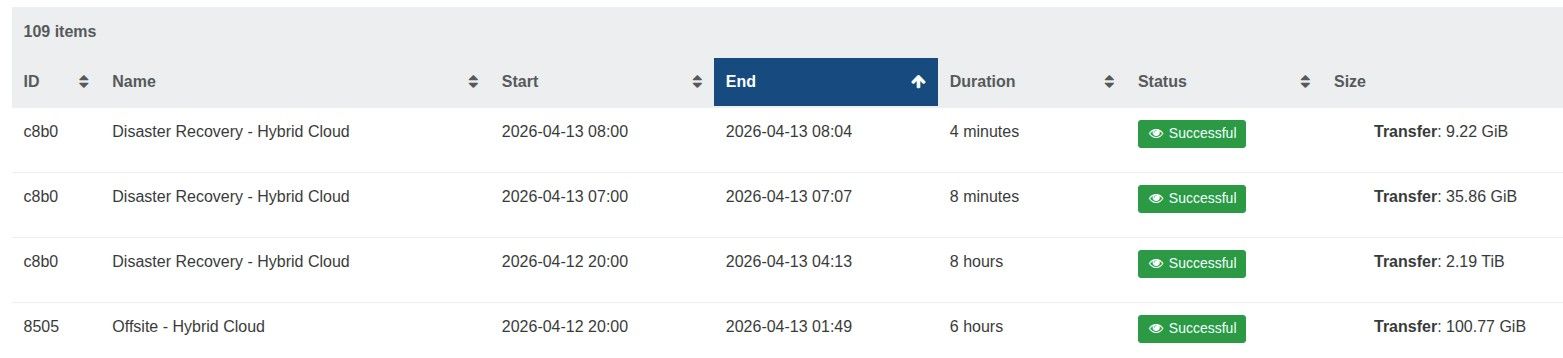

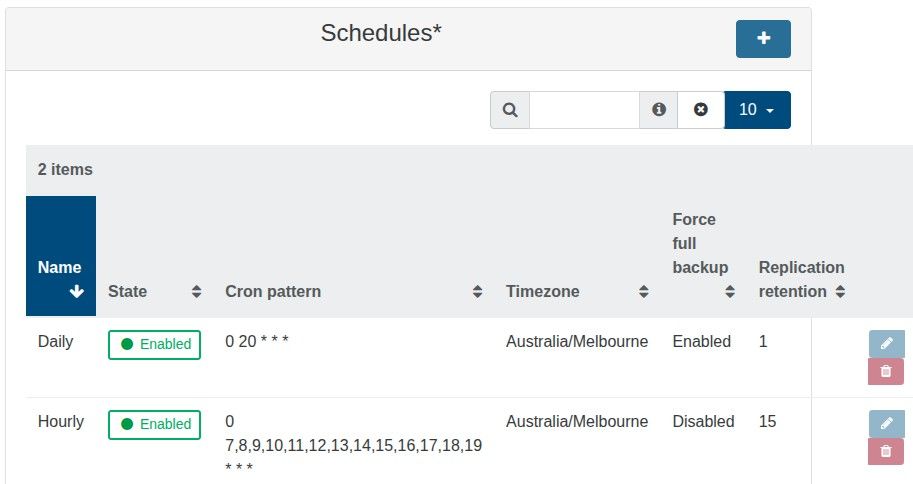

The offsite backup runs at 8 pm and takes 6/7 hours, whereas the hourly runs from 7 am to 7 pm and only take a few minutes.

The backup job has 19 VMs, suely this is not too many.

-

@McHenry 19 VMs is 19 Chains of 16 VDIs

at each hourly run, a new snapshot is created (some minutes) and the oldest one is merged/garbage collected in the first snap (time undetermined)I guess 19 merge + chain garbage collected seems to not be able to be done in the one hour timeframe before next CR is done

you possibly have a chain growing

can you check in DASHBOARD/HEALTH the unhealthy VDI section at 11 am ?

-

@Pilow Agree.... have to be sure that garbage collection is completed or it'll never catch up if backups continue to be run without the coalesce completing.

-

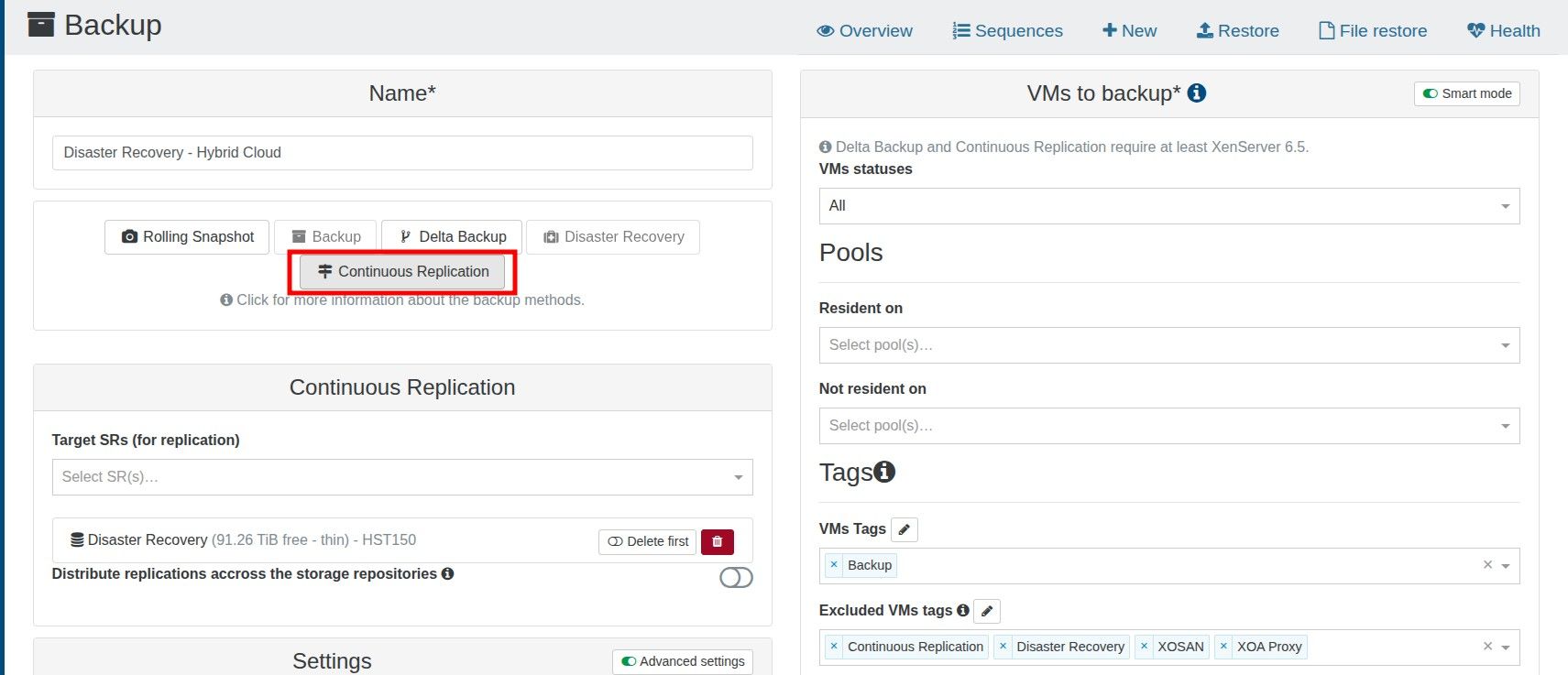

If each CR backup is now created as a snapshot, instead of a new VM, and the alert triggers after a VM has more than three snapshots, this logically means that the alert will trigger if the CR has a retention value greater than 3.

Have I misunderstood how the CR backup process works?

-

@McHenry I dont think more than 3 snapshots triggers an error, just tested on one VM

it is not recommended for "in production" VMs, but for a CR destination, it's OK (as you would need to start a copy anyway)

your problem, failing CR jobs is probably due to garbage collection not finishing in the one hour timeframe when chain is long.

-

CR jobs are not failing just XO reports too many snapshots under:

Dashboard >> HealthAll good if I can just ignore this warning but thought best to check in case it was an issue.

I got the value of 3 from here.

https://docs.xen-orchestra.com/manage_infrastructure#too-many-snapshots -

@McHenry could you screen the health page ?

where we could see the chain length -

Hello,

I see also this behavior which is "new" since few weeks.

Previously, when a backup start:

- it stake a snapshot ( if there another one before, it delete it ).

- it upload the snapshot as a backup

- it coalesce the backup on the remote.

- end of the game.

Now, the old snapshots are not deleted anymore which can lead easily to some disk full.

Even with a retention of 1, the problem is present.

I observe this only in Backup job, not DR/CR job.

I just updated my XO to latest version, i will see if the issue is fixed.

-

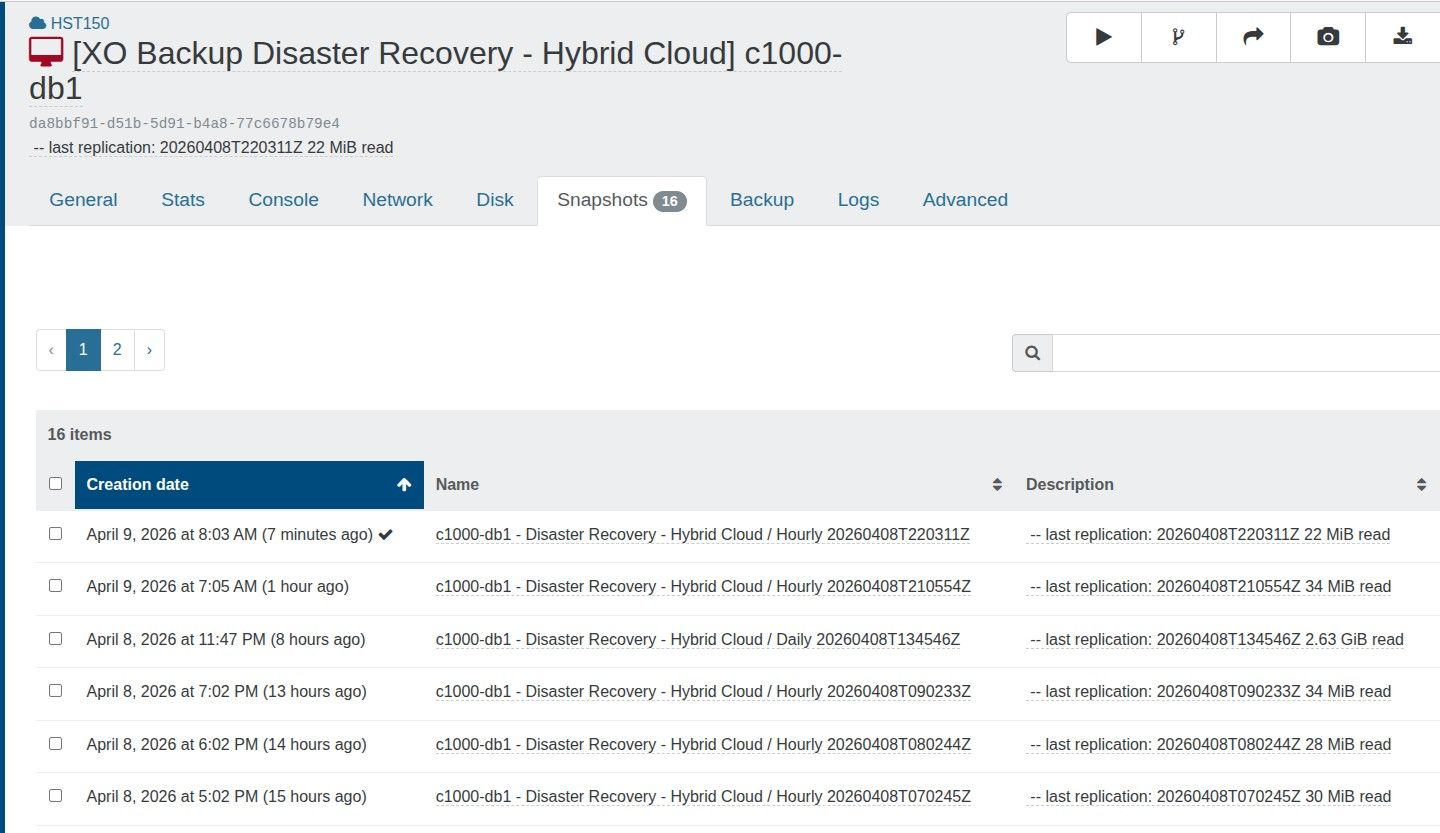

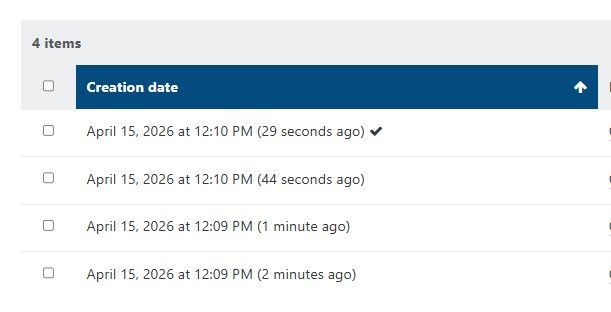

The number of snapshots shows 16, which makes sense as I have two backup schedules, one with a retention of 15 and one with a retention of 1. The daily backup with a retention of 1 resets the chain, as it is a full backup.

-

Hello @McHenry .

Yes but no, once the snapshot is exported, the previous one must be cleaned on local.

Best regards,

-

Thanks.

The old snapshots are being removed as the total never increases beyond 16, so when a new snapshot is added, the old one is removed. -

Thanks.

The old snapshots are being removed as the total never increases beyond 16, so when a new snapshot is added, the old one is removed.immediatly removed, yes, but then Garbage collection takes place.

and perhaps with 19x16 GC to process it can't be done in one hour, and then next CR is launched, etc etc...

Hello! It looks like you're interested in this conversation, but you don't have an account yet.

Getting fed up of having to scroll through the same posts each visit? When you register for an account, you'll always come back to exactly where you were before, and choose to be notified of new replies (either via email, or push notification). You'll also be able to save bookmarks and upvote posts to show your appreciation to other community members.

With your input, this post could be even better 💗

Register Login