Backups with qcow2 enabled

-

@acebmxer I have a new case where I managed to force the "fell back to full" error...

I'll create a new topic for this. In the meantime, if you can, do a toolstack restart on your pool when no tasks are running.

Your backups with NBD could be better (spoiler alert: iptables rules...)

-

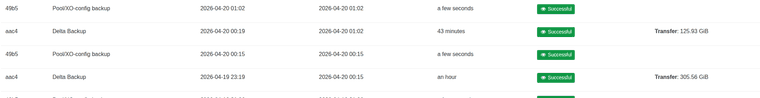

Both full and delta passed...

Full - 2026-04-17T11_32_42.906Z - backup NG.txt

Delta - 2026-04-17T13_26_54.973Z - backup NG.txt

I just restarted the toolstack on both the pool master and the second host, re-edited the backup job to remove the purge snapshot option with CBT, and re-ran a delta backup, which still fell back to a full backup.

-

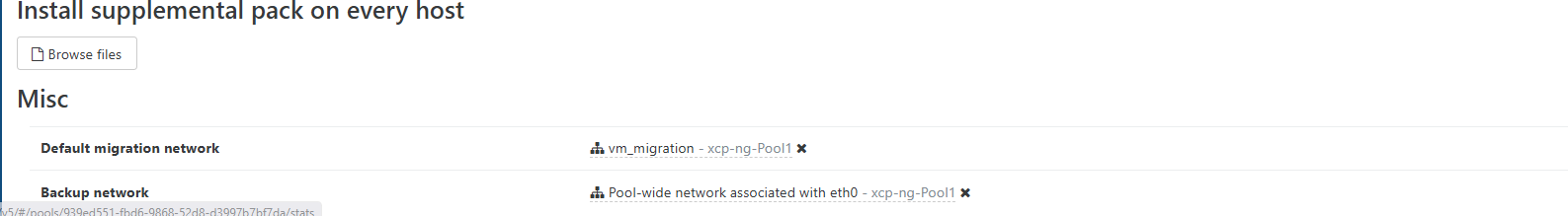

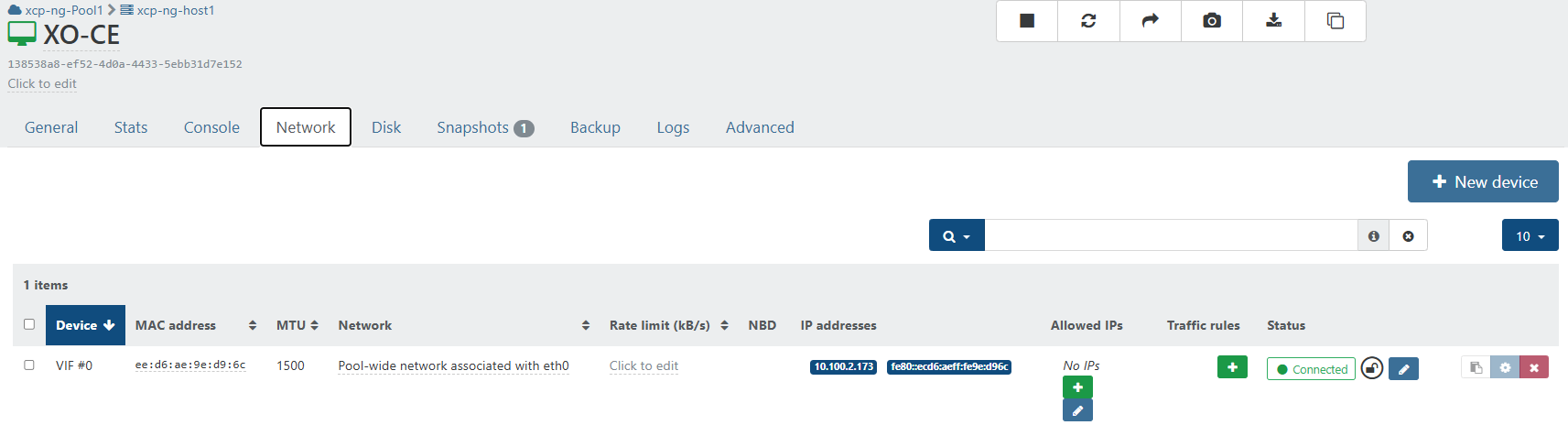

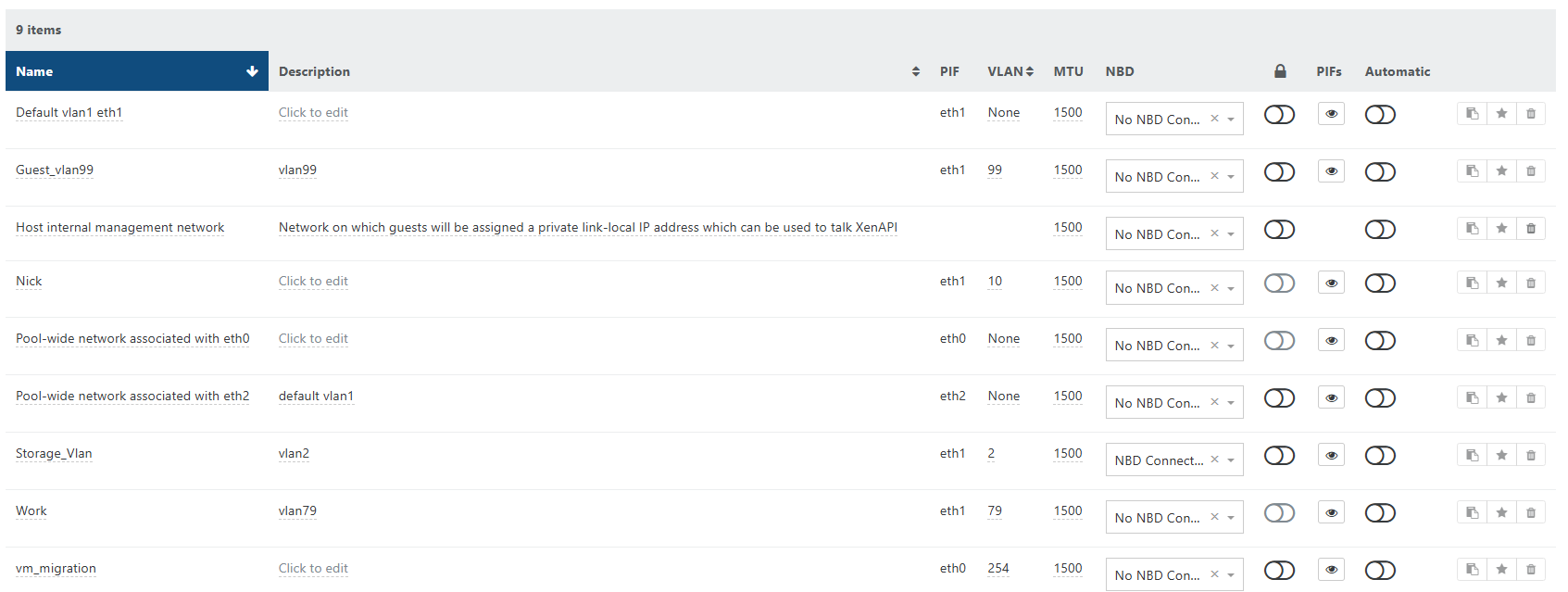

@acebmxer at the bottom of the POOL Advanced tab, is BACKUP NETWORK set to the NBD-enabled network, and is that network accessible by both hosts and XOA?

-

Was already configured...

Think this is the issue....

Network tab under pool.

Will run another delta once the current one finishes.

Edit - update

Can a warning be shown if NBD is not enabled at the pool level? Or could the error be made clearer?

I enabled NBD at the pool level and ran another delta - 2026-04-17T14_40_39.535Z - backup NG.txt

I then re-enabled the purge snapshot option in the backup job and ran another delta - 2026-04-17T15_00_40.358Z - backup NG.txt
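A check of the kind requested above could look roughly like the sketch below. It assumes network records shaped like XAPI network objects, where `purpose` is a list containing `nbd` (or `insecure_nbd`) when NBD is enabled on that network. The helper names `findNbdNetworks` and `nbdWarning` and the sample records are hypothetical and are not taken from the XO code base.

```javascript
// Hypothetical helper: list the networks that have NBD enabled.
// Assumption: each record carries a `purpose` array, as XAPI network objects do.
function findNbdNetworks(networks) {
  return networks.filter(
    (net) =>
      Array.isArray(net.purpose) &&
      net.purpose.some((p) => p === "nbd" || p === "insecure_nbd")
  );
}

// Hypothetical helper: return a warning string, or undefined when NBD is available.
function nbdWarning(poolName, networks) {
  if (findNbdNetworks(networks).length > 0) {
    return undefined;
  }
  return `No network in pool "${poolName}" has NBD enabled; NBD-based backups cannot be used.`;
}

// Illustrative records only, not real pool data.
const sampleNetworks = [
  { name_label: "Management", purpose: [] },
  { name_label: "Backup", purpose: [] },
];

console.log(nbdWarning("xcp-ng-Pool1", sampleNetworks));
```

Running such a check before a backup job starts would surface the missing setting up front, instead of leaving it to be inferred from a fallback message in the backup log.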

-


@acebmxer so, NBD it was...

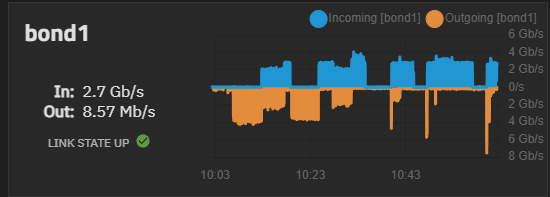

Holy moly, you have some good network performance!

What kind of SR at the source? And what remote at the destination?

What about the PIFs?

-

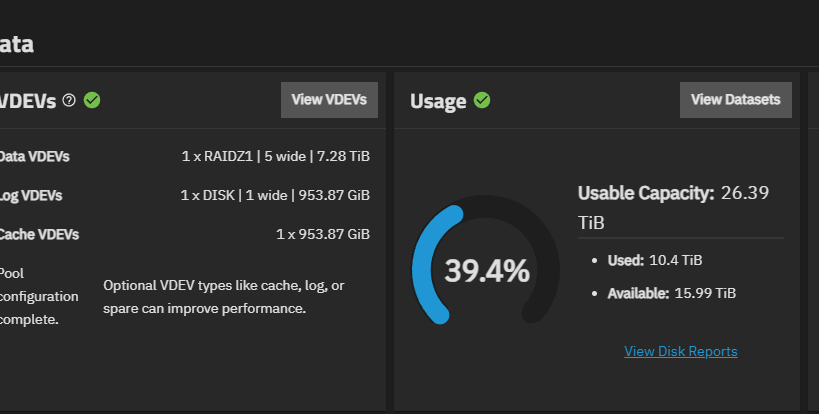

Just a little old TrueNAS running on an AMD 5900X with bonded 10 Gb NICs, and a UniFi aggregation switch with 10 Gb links to the hosts.

Storage is five 8 TB Toshiba drives and two 1 TB NVMe drives, one for cache and one for the log vdev.

The backup device is a DS1819+ with four 12 TB Seagate Exos drives and a 10 Gb link.

-

@acebmxer NFS remotes on the DS1819+?

We have an iSCSI SR (25 Gb Mellanox, 6 PIFs on the hosts, to a 25 Gb MSA2062 dual-controller SAN).

Our remotes are iSCSI OS-mounted volumes on MSA SANs, presented as S3 (MinIO VMs).

Using XO proxies to offload backups from XOA, we max out at 150/200Mb/s during backups.

But we are on VHD VDIs, so I'm asking myself whether the added backup performance you show could be due to the QCOW2 format on the source SR.

I will have to try VDIs on such an SR to see the difference.

-

@Pilow Yes, NFS on VM storage and on backup storage.

All VMs are now on qcow2. The exception was the Windows VM, which was on VHD, but I just migrated it over to qcow2. The NICs in all systems are Intel 10 Gb, either X520 or X540.

Edit - Sorry, I missed your question about VHD versus qcow2 performance. I would say it's about equal. I didn't run any benchmarks for comparison (probably should have), but I haven't seen any major slowness other than the GC issues. (See latest post)

-

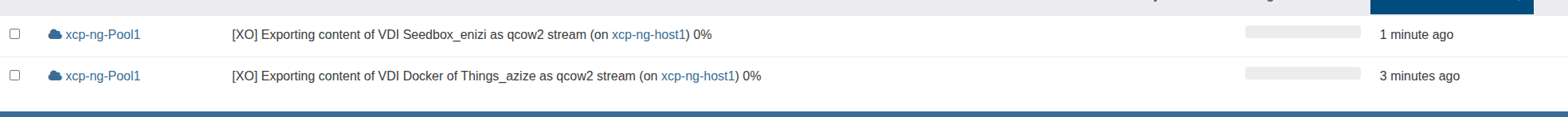

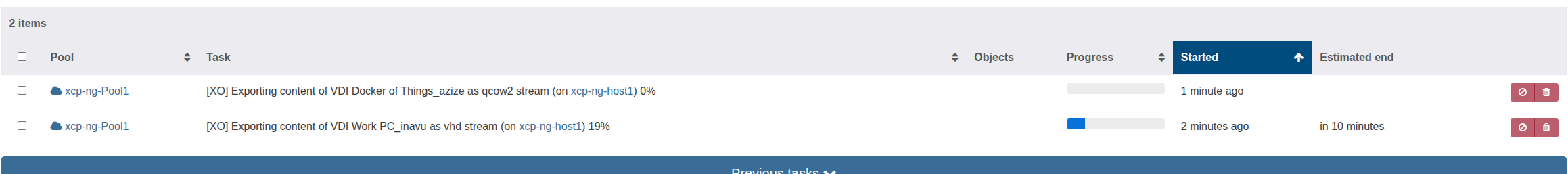

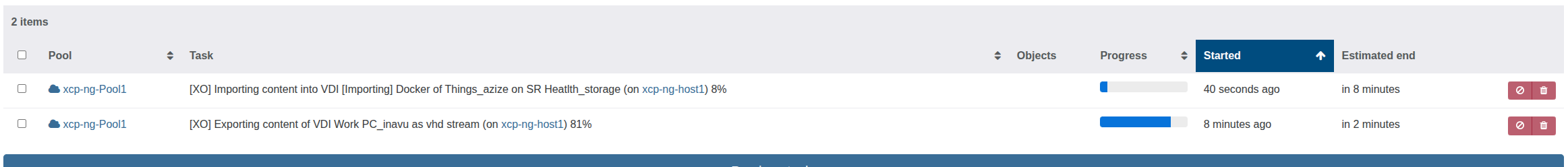

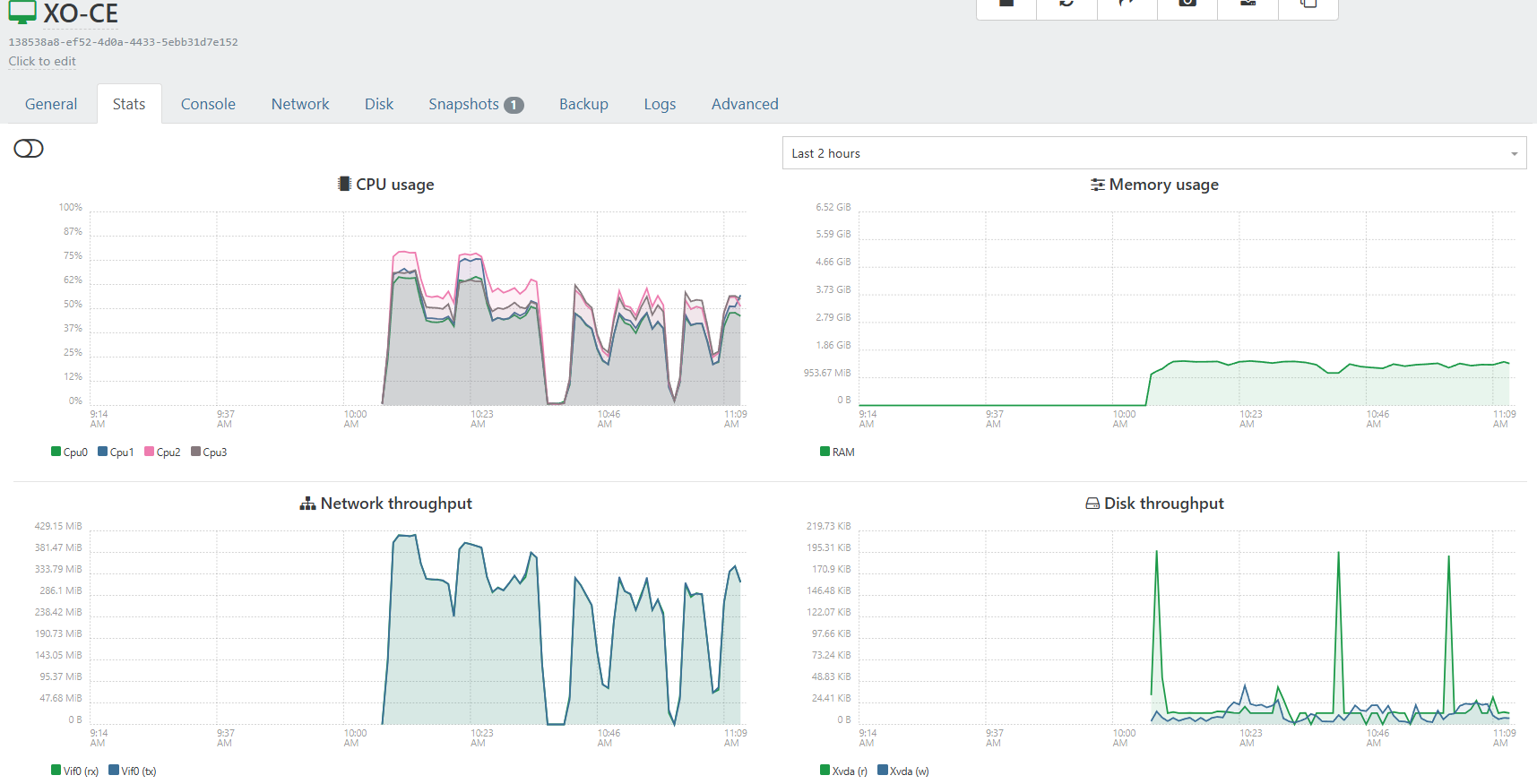

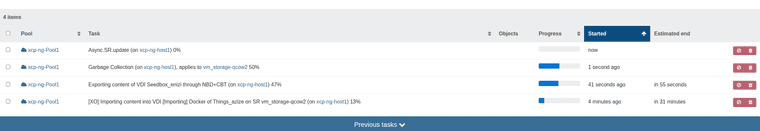

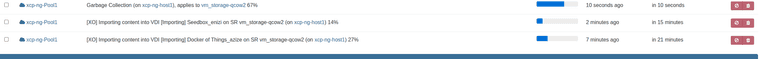

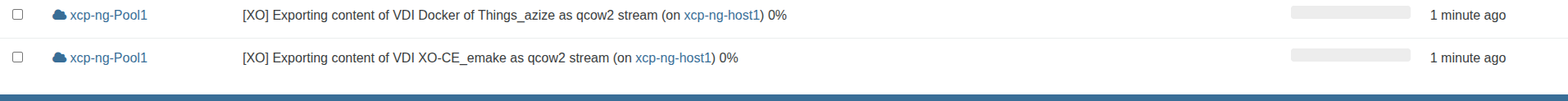

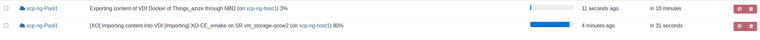

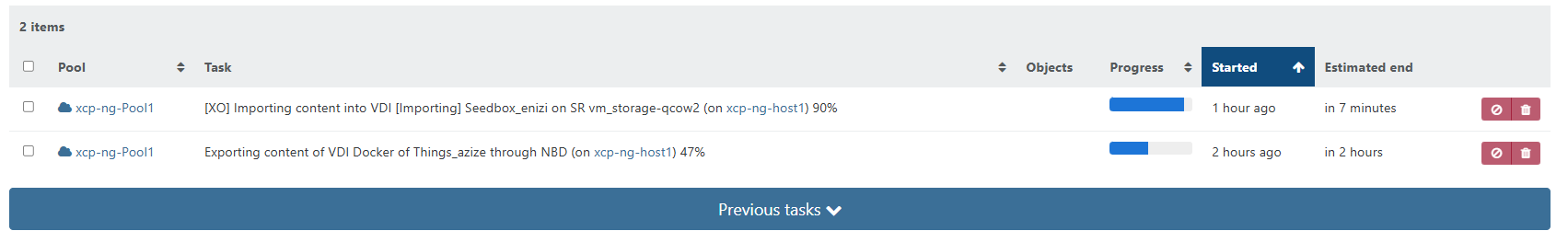

So the progress bar seems to be working now when exporting. But I am also noticing that garbage collection seems to run quite often, and I feel it is slowing down the import for the health check.

-

The next thing I am noticing is that garbage collection is not able to coalesce the VDIs of VMs that are running. Garbage collection keeps trying to run every 30-45 seconds, for about 30 seconds of run time. The number of VDIs to coalesce keeps increasing unless a VM is powered off and given enough time for garbage collection to actually run.

Because garbage collection runs so often, importing a VDI for the health check takes longer.

-

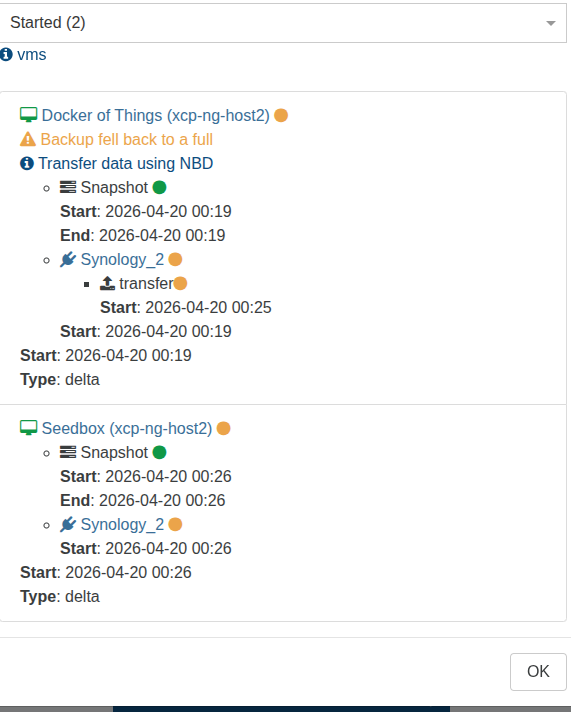

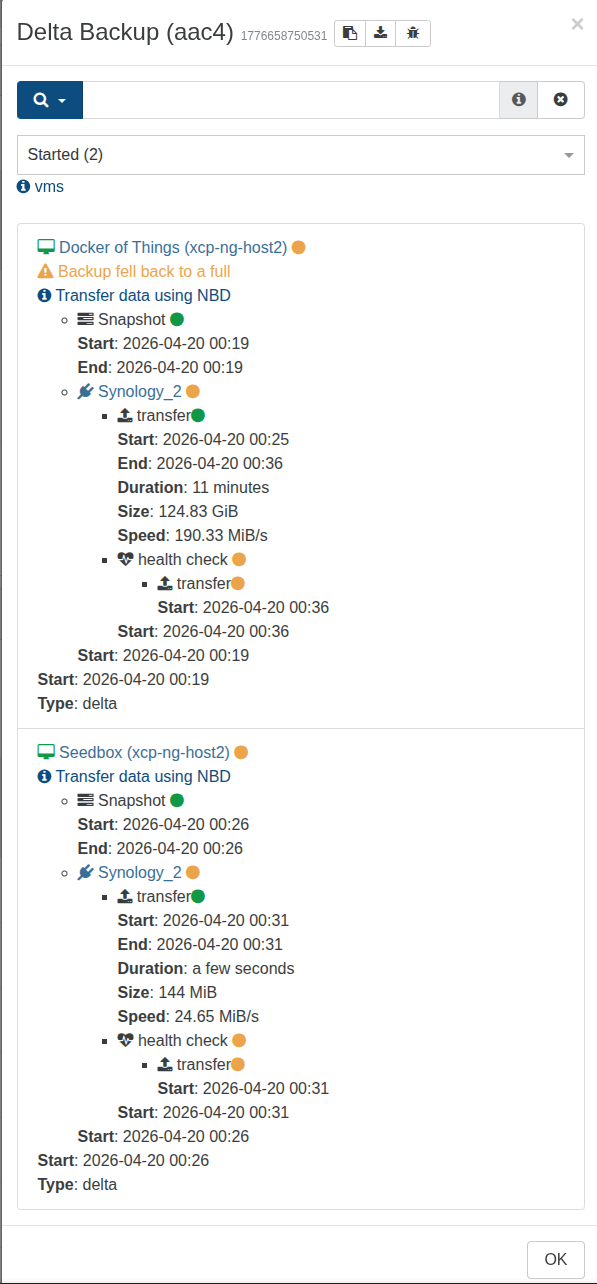

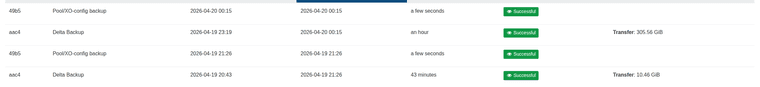

I disabled CBT on the VMs and re-ran a delta backup. I noticed the following.

While watching the backup task, when it shows [XO] Exporting it does not show the percentage and completion. A VM first shows as not using NBD but using XO, and then fails. The backup log then shows that the VM fell back to a full backup, and the task then shows that it is using NBD.

Also, without CBT, GC is not constantly spamming once a VM has finished exporting and started its health check. It appears to actually run and coalesce, as the VDIs are no longer showing in the dashboard / health...

delta job with CBT enabled - 2026-04-20T00_43_48.487Z - backup NG.txt

CBT disabled delta job. two vms fell back to full - 2026-04-20T03_19_02.894Z - backup NG.txt

Next delta job with CBT disabled 1 vm fell back to full - 2026-04-20T04_19_10.531Z - backup NG.txt

-

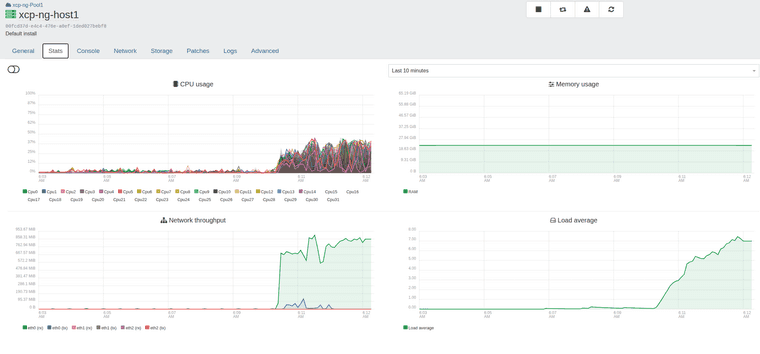

OK, something is happening. I made no additional changes since last night. Now the backup job scheduled for 1 pm is still running 2 hours later.

I think I might revert back to VHD, as this has caused so many issues.

-

@acebmxer do you have something in the XO logs (journalctl, probably)?

-

from host

sudo journalctl -u xo-server -n 50

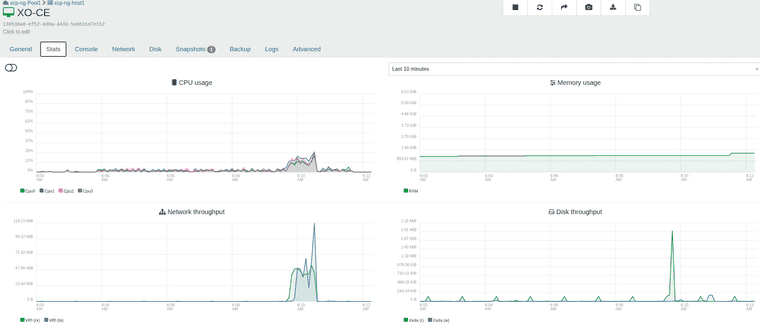

-- No entries --

From XO - Currently on fbba0 commit (4 behind)

Apr 20 15:21:51 xo-ce xo-server[849]: }
Apr 20 15:21:51 xo-ce xo-server[849]: }
Apr 20 15:22:35 xo-ce xo-server[849]: 2026-04-20T19:22:35.554Z xo:main INFO + Console proxy ( - 127.0.0.1)
Apr 20 15:22:41 xo-ce xo-server[849]: 2026-04-20T19:22:41.365Z xo:main INFO - Console proxy ( - 127.0.0.1)
Apr 20 15:22:55 xo-ce xo-server[849]: 2026-04-20T19:22:55.223Z xo:main INFO + Console proxy ( - 127.0.0.1)
Apr 20 15:23:04 xo-ce xo-server[849]: 2026-04-20T19:23:04.562Z xo:main INFO - Console proxy ( - 127.0.0.1)
Apr 20 16:11:49 xo-ce xo-server[849]: (node:849) MaxListenersExceededWarning: Possible EventEmitter memory leak detected. 11 update list>
Apr 20 16:11:49 xo-ce xo-server[849]: 2026-04-20T20:11:49.190Z xo:xo-server WARN Node warning {
Apr 20 16:11:49 xo-ce xo-server[849]: error: MaxListenersExceededWarning: Possible EventEmitter memory leak detected. 11 update listen>
Apr 20 16:11:49 xo-ce xo-server[849]: at genericNodeError (node:internal/errors:985:15)
Apr 20 16:11:49 xo-ce xo-server[849]: at wrappedFn (node:internal/errors:539:14)
Apr 20 16:11:49 xo-ce xo-server[849]: at _addListener (node:events:590:17)
Apr 20 16:11:49 xo-ce xo-server[849]: at Tasks.addListener (node:events:608:10)
Apr 20 16:11:49 xo-ce xo-server[849]: at TaskController.getTasks (file:///opt/xen-orchestra/@xen-orchestra/rest-api/dist/tasks/tas>
Apr 20 16:11:49 xo-ce xo-server[849]: at ExpressTemplateService.buildPromise (/opt/xen-orchestra/node_modules/@tsoa/runtime/src/ro>
Apr 20 16:11:49 xo-ce xo-server[849]: at ExpressTemplateService.apiHandler (/opt/xen-orchestra/node_modules/@tsoa/runtime/src/rout>
Apr 20 16:11:49 xo-ce xo-server[849]: at TaskController_getTasks (file:///opt/xen-orchestra/@xen-orchestra/rest-api/dist/open-api/>
Apr 20 16:11:49 xo-ce xo-server[849]: emitter: Tasks {
Apr 20 16:11:49 xo-ce xo-server[849]: _events: [Object: null prototype],
Apr 20 16:11:49 xo-ce xo-server[849]: _eventsCount: 2,
Apr 20 16:11:49 xo-ce xo-server[849]: _maxListeners: undefined,
Apr 20 16:11:49 xo-ce xo-server[849]: Symbol(shapeMode): false,
Apr 20 16:11:49 xo-ce xo-server[849]: Symbol(kCapture): false
Apr 20 16:11:49 xo-ce xo-server[849]: },
Apr 20 16:11:49 xo-ce xo-server[849]: type: 'update',
Apr 20 16:11:49 xo-ce xo-server[849]: count: 11
Apr 20 16:11:49 xo-ce xo-server[849]: }
Apr 20 16:11:49 xo-ce xo-server[849]: }
Apr 20 16:11:49 xo-ce xo-server[849]: (node:849) MaxListenersExceededWarning: Possible EventEmitter memory leak detected. 11 remove list>
Apr 20 16:11:49 xo-ce xo-server[849]: 2026-04-20T20:11:49.190Z xo:xo-server WARN Node warning {
Apr 20 16:11:49 xo-ce xo-server[849]: error: MaxListenersExceededWarning: Possible EventEmitter memory leak detected. 11 remove listen>
Apr 20 16:11:49 xo-ce xo-server[849]: at genericNodeError (node:internal/errors:985:15)
Apr 20 16:11:49 xo-ce xo-server[849]: at wrappedFn (node:internal/errors:539:14)
Apr 20 16:11:49 xo-ce xo-server[849]: at _addListener (node:events:590:17)
Apr 20 16:11:49 xo-ce xo-server[849]: at Tasks.addListener (node:events:608:10)
Apr 20 16:11:49 xo-ce xo-server[849]: at TaskController.getTasks (file:///opt/xen-orchestra/@xen-orchestra/rest-api/dist/tasks/tas>
Apr 20 16:11:49 xo-ce xo-server[849]: at ExpressTemplateService.buildPromise (/opt/xen-orchestra/node_modules/@tsoa/runtime/src/ro>
Apr 20 16:11:49 xo-ce xo-server[849]: at ExpressTemplateService.apiHandler (/opt/xen-orchestra/node_modules/@tsoa/runtime/src/rout>
Apr 20 16:11:49 xo-ce xo-server[849]: at TaskController_getTasks (file:///opt/xen-orchestra/@xen-orchestra/rest-api/dist/open-api/>
Apr 20 16:11:49 xo-ce xo-server[849]: emitter: Tasks {
Apr 20 16:11:49 xo-ce xo-server[849]: _events: [Object: null prototype],
Apr 20 16:11:49 xo-ce xo-server[849]: _eventsCount: 2,
Apr 20 16:11:49 xo-ce xo-server[849]: _maxListeners: undefined,
Apr 20 16:11:49 xo-ce xo-server[849]: Symbol(shapeMode): false,
Apr 20 16:11:49 xo-ce xo-server[849]: Symbol(kCapture): false
Apr 20 16:11:49 xo-ce xo-server[849]: },
Apr 20 16:11:49 xo-ce xo-server[849]: type: 'remove',
Apr 20 16:11:49 xo-ce xo-server[849]: count: 11
Apr 20 16:11:49 xo-ce xo-server[849]: }
Apr 20 16:11:49 xo-ce xo-server[849]: }

-
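For context on the MaxListenersExceededWarning entries in the log above: Node prints this warning when more listeners than the default limit of 10 are registered for a single event on one emitter. The sketch below reproduces the condition in isolation. The `tasks` emitter and the `update` event name only mirror the log; this is not XO's code, and it says nothing about whether the warning is related to the backup failures.

```javascript
const { EventEmitter } = require("node:events");

// Stand-in emitter; the name only mirrors the `Tasks` emitter seen in the log.
const tasks = new EventEmitter();

// Registering an 11th listener for the same event exceeds the default limit
// and makes Node print a MaxListenersExceededWarning on stderr.
for (let i = 0; i < 11; i++) {
  tasks.on("update", () => {});
}

console.log("default limit:", EventEmitter.defaultMaxListeners);
console.log("registered listeners:", tasks.listenerCount("update"));
```

The warning is advisory: the listeners still work, and the limit can be raised with `setMaxListeners()`, though a growing listener count usually means listeners are being added without being removed.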

Killed the current tasks, updated XO to the latest commit, and started backups again. Here are the current errors from XO...

sudo journalctl -u xo-server -n 50

Apr 20 17:29:54 xo-ce xo-server[3147]: Symbol(undici.error.UND_ERR): true,
Apr 20 17:29:54 xo-ce xo-server[3147]: Symbol(undici.error.UND_ERR_SOCKET): true
Apr 20 17:29:54 xo-ce xo-server[3147]: }
Apr 20 17:30:29 xo-ce xo-server[3262]: 2026-04-20T21:30:29.390Z xo:xapi:vdi INFO OpaqueRef:4efd6d02-6c4d-26f8-7ed5-1b9b34daa89d has been disconnected from dom0>
Apr 20 17:30:29 xo-ce xo-server[3262]: vdiRef: 'OpaqueRef:ce354818-4cf0-2ac3-8156-3d7118548ecd',
Apr 20 17:30:29 xo-ce xo-server[3262]: vbdRef: 'OpaqueRef:4efd6d02-6c4d-26f8-7ed5-1b9b34daa89d'
Apr 20 17:30:29 xo-ce xo-server[3262]: }
Apr 20 17:30:29 xo-ce xo-server[3262]: 2026-04-20T21:30:29.392Z xo:xapi:xapi-disks WARN Either transmit the source to the constructor or implement openSource an>
Apr 20 17:30:29 xo-ce xo-server[3262]: error: Error: Either transmit the source to the constructor or implement openSource and call init
Apr 20 17:30:29 xo-ce xo-server[3262]: at get source (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskPassthrough.mjs:53:19)
Apr 20 17:30:29 xo-ce xo-server[3262]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskPassthrough.mjs:86>
Apr 20 17:30:29 xo-ce xo-server[3262]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/xapi/disks/XapiQcow2StreamSource.mjs:61:18)
Apr 20 17:30:29 xo-ce xo-server[3262]: at #openExportStream (file:///opt/xen-orchestra/@xen-orchestra/xapi/disks/Xapi.mjs:189:21)
Apr 20 17:30:29 xo-ce xo-server[3262]: at process.processTicksAndRejections (node:internal/process/task_queues:104:5)
Apr 20 17:30:29 xo-ce xo-server[3262]: at async #openNbdStream (file:///opt/xen-orchestra/@xen-orchestra/xapi/disks/Xapi.mjs:97:22)
Apr 20 17:30:29 xo-ce xo-server[3262]: at async XapiDiskSource.openSource (file:///opt/xen-orchestra/@xen-orchestra/xapi/disks/Xapi.mjs:258:18)
Apr 20 17:30:29 xo-ce xo-server[3262]: at async XapiDiskSource.init (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskPassthrough.mjs:28:4>
Apr 20 17:30:29 xo-ce xo-server[3262]: at async file:///opt/xen-orchestra/@xen-orchestra/backups/_incrementalVm.mjs:66:5
Apr 20 17:30:29 xo-ce xo-server[3262]: at async Promise.all (index 0)
Apr 20 17:30:29 xo-ce xo-server[3262]: }
Apr 20 17:30:29 xo-ce xo-server[3262]: 2026-04-20T21:30:29.392Z xo:xapi:xapi-disks WARN can't compute delta OpaqueRef:ce354818-4cf0-2ac3-8156-3d7118548ecd from >
Apr 20 17:30:29 xo-ce xo-server[3262]: error: BodyTimeoutError: Body Timeout Error
Apr 20 17:30:29 xo-ce xo-server[3262]: at FastTimer.onParserTimeout [as _onTimeout] (/opt/xen-orchestra/node_modules/undici/lib/dispatcher/client-h1.js:64>
Apr 20 17:30:29 xo-ce xo-server[3262]: at Timeout.onTick [as _onTimeout] (/opt/xen-orchestra/node_modules/undici/lib/util/timers.js:162:13)
Apr 20 17:30:29 xo-ce xo-server[3262]: at listOnTimeout (node:internal/timers:605:17)
Apr 20 17:30:29 xo-ce xo-server[3262]: at process.processTimers (node:internal/timers:541:7) {
Apr 20 17:30:29 xo-ce xo-server[3262]: code: 'UND_ERR_BODY_TIMEOUT',
Apr 20 17:30:29 xo-ce xo-server[3262]: Symbol(undici.error.UND_ERR): true,
Apr 20 17:30:29 xo-ce xo-server[3262]: Symbol(undici.error.UND_ERR_BODY_TIMEOUT): true
Apr 20 17:30:29 xo-ce xo-server[3262]: }
Apr 20 17:30:29 xo-ce xo-server[3262]: }
Apr 20 17:30:29 xo-ce xo-server[3262]: 2026-04-20T21:30:29.395Z xo:xapi:xapi-disks INFO export through qcow2
Apr 20 17:30:36 xo-ce xo-server[3262]: 2026-04-20T21:30:36.324Z xo:backups:worker ERROR unhandled error event {
Apr 20 17:30:36 xo-ce xo-server[3262]: error: RequestAbortedError [AbortError]: Request aborted
Apr 20 17:30:36 xo-ce xo-server[3262]: at BodyReadable.destroy (/opt/xen-orchestra/node_modules/undici/lib/api/readable.js:51:13)
Apr 20 17:30:36 xo-ce xo-server[3262]: at QcowStream.close (file:///opt/xen-orchestra/@xen-orchestra/qcow2/dist/disk/QcowStream.mjs:40:22)
Apr 20 17:30:36 xo-ce xo-server[3262]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskPassthrough.mjs:86>
Apr 20 17:30:36 xo-ce xo-server[3262]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/xapi/disks/XapiQcow2StreamSource.mjs:61:18)
Apr 20 17:30:36 xo-ce xo-server[3262]: at DiskLargerBlock.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskLargerBlock.mjs:87:28)
Apr 20 17:30:36 xo-ce xo-server[3262]: at TimeoutDisk.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskPassthrough.mjs:34:29)
Apr 20 17:30:36 xo-ce xo-server[3262]: at XapiStreamNbdSource.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskPassthrough.mjs:34:2>
Apr 20 17:30:36 xo-ce xo-server[3262]: at XapiStreamNbdSource.init (file:///opt/xen-orchestra/@xen-orchestra/xapi/disks/XapiStreamNbd.mjs:66:17)
Apr 20 17:30:36 xo-ce xo-server[3262]: at process.processTicksAndRejections (node:internal/process/task_queues:104:5)
Apr 20 17:30:36 xo-ce xo-server[3262]: at async #openNbdStream (file:///opt/xen-orchestra/@xen-orchestra/xapi/disks/Xapi.mjs:108:7) {
Apr 20 17:30:36 xo-ce xo-server[3262]: code: 'UND_ERR_ABORTED',
Apr 20 17:30:36 xo-ce xo-server[3262]: Symbol(undici.error.UND_ERR): true,
Apr 20 17:30:36 xo-ce xo-server[3262]: Symbol(undici.error.UND_ERR_ABORT): true,
Apr 20 17:30:36 xo-ce xo-server[3262]: Symbol(undici.error.UND_ERR_ABORTED): true
Apr 20 17:30:36 xo-ce xo-server[3262]: }
Apr 20 17:30:36 xo-ce xo-server[3262]: }

-
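When journal output gets this long, it can help to reduce it to the error codes it contains. The sketch below is one way to do that, assuming the journal text has been saved to a string. The function name `countErrorCodes` is hypothetical, and the two sample lines are copied from the log above.

```javascript
// Hypothetical helper: count occurrences of `code: '...'` in journal text.
function countErrorCodes(journalText) {
  const counts = new Map();
  for (const match of journalText.matchAll(/\bcode: '([A-Z_]+)'/g)) {
    const code = match[1];
    counts.set(code, (counts.get(code) ?? 0) + 1);
  }
  return counts;
}

// Two lines taken from the journal output in this post.
const sample = [
  "Apr 20 17:30:29 xo-ce xo-server[3262]: code: 'UND_ERR_BODY_TIMEOUT',",
  "Apr 20 17:30:36 xo-ce xo-server[3262]: code: 'UND_ERR_ABORTED',",
].join("\n");

console.log(Object.fromEntries(countErrorCodes(sample)));
```

In this excerpt the body timeout is logged before the aborted request, which is the kind of ordering that is easy to miss when reading the raw stack traces.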

After some digging this is what I have come up with. Please double check everything...

I can PM you the whole chat session if you like.

Bug Report: XO Backup Intermittent Failure, RequestAbortedError During NBD Stream Init

Environment:

XCP-ng: 8.3.0 (build 20260408, xapi 26.1.3)

xapi-nbd: 26.1.3-1.6.xcpng8.3

xo-server: community edition (xen-orchestra from source)

Pool: 2-node pool (host1 10.100.2.10, host2 10.100.2.11)

Backup NFS target: 10.100.2.23:/volume1/backup

Symptom:

Scheduled backup jobs intermittently fail with RequestAbortedError: Request aborted during NBD stream initialization. The failure is transient: the same VMs back up successfully on subsequent runs.

xo:backups:worker ERROR unhandled error event

error: RequestAbortedError [AbortError]: Request aborted

at BodyReadable.destroy (undici/lib/api/readable.js:51:13)

at QcowStream.close (@xen-orchestra/qcow2/dist/disk/QcowStream.mjs:40:22)

at XapiQcow2StreamSource.close (@xen-orchestra/disk-transform/dist/DiskPassthrough.mjs:86:28)

at XapiQcow2StreamSource.close (@xen-orchestra/xapi/disks/XapiQcow2StreamSource.mjs:61:18)

at DiskLargerBlock.close (@xen-orchestra/disk-transform/dist/DiskLargerBlock.mjs:87:28)

at TimeoutDisk.close (@xen-orchestra/disk-transform/dist/DiskPassthrough.mjs:34:29)

at XapiStreamNbdSource.close (@xen-orchestra/disk-transform/dist/DiskPassthrough.mjs:34:29)

at XapiStreamNbdSource.init (@xen-orchestra/xapi/disks/XapiStreamNbd.mjs:66:17)

at async #openNbdStream (@xen-orchestra/xapi/disks/Xapi.mjs:108:7)

Root Cause Analysis:

The error chain is misleading: QcowStream.close and BodyReadable.destroy are cleanup, not the cause. The actual failure is inside connectNbdClientIfPossible() called at XapiStreamNbd.mjs:66.

The sequence in #openNbdStream (Xapi.mjs) is:

1. #openExportStream(): opens a qcow2/VHD HTTP stream from XAPI (succeeds)
2. new XapiStreamNbdSource(streamSource, ...): wraps it
3. await source.init(): calls super.init() then connectNbdClientIfPossible()
4. If connectNbdClientIfPossible() throws for any reason other than NO_NBD_AVAILABLE, execution goes to the catch block in #openNbdStream, which calls source?.close(). This closes the already-open qcow2 HTTP stream, producing the BodyReadable.destroy → AbortError cascade
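The sequence described above can be simulated with stand-in objects to show why the cleanup step is what ends up in the stack trace. This is only a sketch of the control flow as the report describes it: `FakeNbdSource`, `openExportStream`, `openNbdStream`, and the `SOME_OTHER_ERROR` code are hypothetical stand-ins, not the real XO classes or error codes.

```javascript
// Records the order of events so the cascade is visible.
const events = [];

// Stand-in for the HTTP export stream opened in step 1.
function openExportStream() {
  events.push("export stream opened");
  return {
    async close() {
      // In the report, closing the HTTP body is where the abort error surfaces.
      events.push("export stream closed (abort surfaces here)");
    },
  };
}

// Stand-in for the NBD wrapper created in step 2.
class FakeNbdSource {
  constructor(streamSource, connect) {
    this.streamSource = streamSource;
    this.connect = connect;
  }
  async init() {
    events.push("init: connecting NBD client");
    await this.connect(); // stands in for connectNbdClientIfPossible()
  }
  async close() {
    await this.streamSource.close();
  }
}

// Mirrors the described flow: only NO_NBD_AVAILABLE falls back to the stream.
async function openNbdStream(connect) {
  let source;
  const streamSource = openExportStream();
  try {
    source = new FakeNbdSource(streamSource, connect);
    await source.init();
    return source;
  } catch (err) {
    if (err.code === "NO_NBD_AVAILABLE") {
      events.push("fallback to stream export");
      return streamSource;
    }
    await source?.close();
    throw err;
  }
}

// A connection failure with any other code takes the cleanup path.
const failingConnect = async () => {
  throw Object.assign(new Error("handshake failed"), { code: "SOME_OTHER_ERROR" });
};

const demo = openNbdStream(failingConnect).catch((err) => {
  events.push(`backup fails: ${err.code}`);
  console.log(events.join("\n"));
  return events;
});
```

The recorded order puts the stream close after the failed connect and before the final error, which matches the claim that the close calls in the trace are a consequence rather than the cause.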

The underlying NBD connection failure: MultiNbdClient.connect() opens nbdConcurrency (default 2) sequential connections. Each NbdClient.connect() failure causes the candidate host to be removed and retried with another candidate. With only 2 hosts in the pool and nbdConcurrency=2, a single transient TLS or TCP failure on one host during the NBD option negotiation can exhaust all candidates, causing MultiNbdClient to throw NO_NBD_AVAILABLE, but this error IS caught and falls back to stream export. So the failure here is something else: a connection that partially succeeds then aborts, throwing a non-NO_NBD_AVAILABLE error that propagates uncaught to #openNbdStream's catch block.

Specific issue: When nbdClient.connect() throws with UND_ERR_ABORTED (an undici abort), the error code is not NO_NBD_AVAILABLE, so #openNbdStream re-throws it instead of falling back to stream export. The backup then fails entirely rather than gracefully degrading.

Proposed Fix:

In Xapi.mjs, the catch block in #openNbdStream should treat an aborted NBD connection (UND_ERR_ABORTED) as fallback-eligible, not just NO_NBD_AVAILABLE:

} catch (err) {
  // NBD is optional: for these codes, fall back to the HTTP stream export
  if (err.code === 'NO_NBD_AVAILABLE' || err.code === 'UND_ERR_ABORTED') {
    warn("can't connect through NBD, fall back to stream export", { err })
    if (streamSource === undefined) {
      throw new Error("Can't open stream source")
    }
    return streamSource
  }
  await source?.close().catch(warn)
  throw err
}

Or more robustly, treat any NBD connection error as fallback-eligible rather than hardcoding error codes:

} catch (err) {
  // Any failure to set up NBD degrades to the HTTP stream export
  warn("can't connect through NBD, fall back to stream export", { err })
  if (streamSource === undefined) {
    throw new Error("Can't open stream source")
  }
  return streamSource
}

This matches the intent of the existing NO_NBD_AVAILABLE fallback: NBD is opportunistic, and any failure to establish it should degrade gracefully to HTTP stream export rather than failing the entire backup job.

Observed Timeline:

02:22:11 — xo-server opens VHD + qcow2 export streams

02:22:12–15 — NBD connections attempted, fail mid-handshake

02:22:15 — backup fails with UND_ERR_ABORTED, no fallback

02:33:51 — retry attempt also fails in 5 seconds

23:03 — same VMs back up successfully (transient condition resolved)

Impact: Backup jobs fail entirely on transient NBD connectivity issues instead of falling back to HTTP stream export, which is already implemented and working.

You can file this at the XO GitHub issues or the XCP-ng forum. The fix is straightforward and low-risk: the fallback path already exists and works, it's just not being reached for UND_ERR_ABORTED errors.
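The catch-all variant of the proposed fix can be exercised in isolation with a small sketch like the one below. It assumes the base stream is still usable after a failed NBD attempt, which the report does not verify. `openWithFallback` and the stand-in callbacks are hypothetical and are not part of the XO code base.

```javascript
// Hypothetical helper: try an optional upgrade, keep the base source on failure.
async function openWithFallback(openBase, upgrade, warn = console.warn) {
  const base = await openBase();
  try {
    return { source: await upgrade(base), viaNbd: true };
  } catch (err) {
    // Any upgrade failure is treated as "NBD not available this time".
    warn("can't connect through NBD, fall back to stream export", err.code ?? err.message);
    return { source: base, viaNbd: false };
  }
}

// Simulated run: the NBD upgrade aborts, the stream source is returned instead.
const warnings = [];
const result = openWithFallback(
  async () => ({ kind: "stream" }),
  async () => {
    throw Object.assign(new Error("Request aborted"), { code: "UND_ERR_ABORTED" });
  },
  (msg, code) => warnings.push(`${msg} (${code})`)
);

result.then((r) => console.log(r.viaNbd, r.source.kind, warnings));
```

One caveat: the later journal output in this thread shows "fall back to stream" warnings followed by further errors on qcow2 disks, so reaching the fallback path does not by itself guarantee a successful backup.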

-

-

connectNbdClientIfPossible

Yes, connectNbdClientIfPossible is the nearest error message to the real cause.

Now, why does it fail? NBD should be enabled on the network used for backups. Do you have a default backup network defined?

XO must have at least one VIF on this network.

See post - https://xcp-ng.org/forum/post/104549

I do have a backup network defined. I believe that before the last round I had NBD enabled at the pool level on the PIFs and the backup network defined. During troubleshooting, NBD was mentioned as a possible issue, so I disabled NBD but left the pool backup network defined. I sent a PM with the chat that led to this conclusion. I also re-added a VHD-first SR and migrated the VM "Docker of Things" to it, since that was one of the VMs I was having constant issues with.

The last backup logs...

2026-04-20T04_19_10.531Z - backup NG.txt

2026-04-20T17_04_48.232Z - backup NG.txt

2026-04-20T21_24_14.738Z - backup NG.txt

2026-04-21T02_22_08.642Z - backup NG.txt

2026-04-21T04_16_26.094Z - backup NG.txt

First successful run with NBD disabled - 2026-04-21T04_24_12.036Z - backup NG.txt

Just ran again this morning. I expected it to pass, but 2 VMs failed - 2026-04-21T10_10_14.175Z - backup NG.txt

With NBD disabled, it looks like the host does the backup, not XO itself...

-

@acebmxer the connectnbdclient error won't be in the task log, but in the system log

qcow2 backups need NBD

-

{10:42}~ ➭ journalctl -u xo-server --since "7:00"

Apr 21 07:02:34 xo-ce xo-server[12334]: 2026-04-21T11:02:34.586Z xo:xapi:xapi-disks WARN can't connect through NBD, fall back to stream >
Apr 21 07:02:34 xo-ce xo-server[12334]: 2026-04-21T11:02:34.663Z xo:backups:worker ERROR unhandled error event {
Apr 21 07:02:34 xo-ce xo-server[12334]: error: Error: Method not implemented.
Apr 21 07:02:34 xo-ce xo-server[12334]: at QcowStream.instantiateParent (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/d>
Apr 21 07:02:34 xo-ce xo-server[12334]: at XapiQcow2StreamSource.instantiateParent (file:///opt/xen-orchestra/@xen-orchestra/disk->
Apr 21 07:02:34 xo-ce xo-server[12334]: at XapiQcow2StreamSource.openParent (file:///opt/xen-orchestra/@xen-orchestra/disk-transfo>
Apr 21 07:02:34 xo-ce xo-server[12334]: at DiskLargerBlock.readBlock (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist>
Apr 21 07:02:34 xo-ce xo-server[12334]: at DiskLargerBlock.buildDiskBlockGenerator (file:///opt/xen-orchestra/@xen-orchestra/disk->
Apr 21 07:02:34 xo-ce xo-server[12334]: at buildDiskBlockGenerator.next (<anonymous>)
Apr 21 07:02:34 xo-ce xo-server[12334]: at Timeout.next (file:///opt/xen-orchestra/@vates/generator-toolbox/dist/timeout.mjs:13:41)
Apr 21 07:02:34 xo-ce xo-server[12334]: at generatorWithLength (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/Throt>
Apr 21 07:02:34 xo-ce xo-server[12334]: at generatorWithLength.next (<anonymous>)
Apr 21 07:02:34 xo-ce xo-server[12334]: at Throttle.createThrottledGenerator (file:///opt/xen-orchestra/@vates/generator-toolbox/d>
Apr 21 07:02:34 xo-ce xo-server[12334]: }
Apr 21 07:02:34 xo-ce xo-server[12334]: 2026-04-21T11:02:34.663Z xo:backups:worker ERROR unhandled error event {
Apr 21 07:02:34 xo-ce xo-server[12334]: error: RequestAbortedError [AbortError]: Request aborted
Apr 21 07:02:34 xo-ce xo-server[12334]: at BodyReadable.destroy (/opt/xen-orchestra/node_modules/undici/lib/api/readable.js:51:13)
Apr 21 07:02:34 xo-ce xo-server[12334]: at QcowStream.close (file:///opt/xen-orchestra/@xen-orchestra/qcow2/dist/disk/QcowStream.m>
Apr 21 07:02:34 xo-ce xo-server[12334]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/di>
Apr 21 07:02:34 xo-ce xo-server[12334]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/xapi/disks/XapiQc>
Apr 21 07:02:34 xo-ce xo-server[12334]: at DiskLargerBlock.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/Dis>
Apr 21 07:02:34 xo-ce xo-server[12334]: at TimeoutDisk.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskPas>
Apr 21 07:02:34 xo-ce xo-server[12334]: at XapiDiskSource.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/Disk>
Apr 21 07:02:34 xo-ce xo-server[12334]: at ThrottledDisk.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskP>
Apr 21 07:02:34 xo-ce xo-server[12334]: at ThrottledDisk.diskBlocks (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/>
Apr 21 07:02:34 xo-ce xo-server[12334]: at process.processTicksAndRejections (node:internal/process/task_queues:104:5) {
Apr 21 07:02:34 xo-ce xo-server[12334]: code: 'UND_ERR_ABORTED',
Apr 21 07:02:34 xo-ce xo-server[12334]: Symbol(undici.error.UND_ERR): true,
Apr 21 07:02:34 xo-ce xo-server[12334]: Symbol(undici.error.UND_ERR_ABORT): true,
Apr 21 07:02:34 xo-ce xo-server[12334]: Symbol(undici.error.UND_ERR_ABORTED): true
Apr 21 07:02:34 xo-ce xo-server[12334]: }
Apr 21 07:02:34 xo-ce xo-server[12334]: }
Apr 21 07:02:34 xo-ce xo-server[12334]: {
Apr 21 07:02:34 xo-ce xo-server[12334]: err: Error: Method not implemented.
Apr 21 07:02:34 xo-ce xo-server[12334]: at QcowStream.instantiateParent (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/d>
Apr 21 07:02:34 xo-ce xo-server[12334]: at XapiQcow2StreamSource.instantiateParent (file:///opt/xen-orchestra/@xen-orchestra/disk->
Apr 21 07:02:34 xo-ce xo-server[12334]: at XapiQcow2StreamSource.openParent (file:///opt/xen-orchestra/@xen-orchestra/disk-transfo>
Apr 21 07:02:34 xo-ce xo-server[12334]: at DiskLargerBlock.readBlock (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist>
Apr 21 07:02:34 xo-ce xo-server[12334]: at DiskLargerBlock.buildDiskBlockGenerator (file:///opt/xen-orchestra/@xen-orchestra/disk->
Apr 21 07:02:34 xo-ce xo-server[12334]: at buildDiskBlockGenerator.next (<anonymous>)
Apr 21 07:02:34 xo-ce xo-server[12334]: at Timeout.next (file:///opt/xen-orchestra/@vates/generator-toolbox/dist/timeout.mjs:13:41)
Apr 21 07:02:34 xo-ce xo-server[12334]: at generatorWithLength (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/Throt>
Apr 21 07:02:34 xo-ce xo-server[12334]: at generatorWithLength.next (<anonymous>)
Apr 21 07:02:34 xo-ce xo-server[12334]: at Throttle.createThrottledGenerator (file:///opt/xen-orchestra/@vates/generator-toolbox/d>
Apr 21 07:02:34 xo-ce xo-server[12334]: }
Apr 21 07:02:34 xo-ce xo-server[12334]: 2026-04-21T11:02:34.666Z xo:backups:AbstractVmRunner WARN writer step failed {
Apr 21 07:02:34 xo-ce xo-server[12334]: error: Error: ENOENT: no such file or directory, open '/run/xo-server/mounts/3ba9eed2-55f1-476>
Apr 21 07:02:34 xo-ce xo-server[12334]: From:
Apr 21 07:02:34 xo-ce xo-server[12334]: at NfsHandler.addSyncStackTrace (/opt/xen-orchestra/@xen-orchestra/fs/dist/local.js:21:26)
Apr 21 07:02:34 xo-ce xo-server[12334]: at NfsHandler._openFile (/opt/xen-orchestra/@xen-orchestra/fs/dist/local.js:154:35)
Apr 21 07:02:34 xo-ce xo-server[12334]: at /opt/xen-orchestra/@xen-orchestra/fs/dist/utils.js:29:26
Apr 21 07:02:34 xo-ce xo-server[12334]: at new Promise (<anonymous>)
Apr 21 07:02:34 xo-ce xo-server[12334]: at NfsHandler.<anonymous> (/opt/xen-orchestra/@xen-orchestra/fs/dist/utils.js:24:12)
Apr 21 07:02:34 xo-ce xo-server[12334]: at loopResolver (/opt/xen-orchestra/node_modules/promise-toolbox/retry.js:83:46)
Apr 21 07:02:34 xo-ce xo-server[12334]: at new Promise (<anonymous>)
Apr 21 07:02:34 xo-ce xo-server[12334]: at loop (/opt/xen-orchestra/node_modules/promise-toolbox/retry.js:85:22)
Apr 21 07:02:34 xo-ce xo-server[12334]: at NfsHandler.retry (/opt/xen-orchestra/node_modules/promise-toolbox/retry.js:87:10)
Apr 21 07:02:34 xo-ce xo-server[12334]: at NfsHandler._openFile (/opt/xen-orchestra/node_modules/promise-toolbox/retry.js:103:18) {
Apr 21 07:02:34 xo-ce xo-server[12334]: errno: -2,
Apr 21 07:02:34 xo-ce xo-server[12334]: code: 'ENOENT',
Apr 21 07:02:34 xo-ce xo-server[12334]: syscall: 'open',
Apr 21 07:02:34 xo-ce xo-server[12334]: path: '/run/xo-server/mounts/3ba9eed2-55f1-4760-ac08-655c252aa316/xo-vm-backups/fb72a8d7-a03>
Apr 21 07:02:34 xo-ce xo-server[12334]: },
Apr 21 07:02:34 xo-ce xo-server[12334]: step: 'writer.updateUuidAndChain()',
Apr 21 07:02:34 xo-ce xo-server[12334]: writer: 'IncrementalRemoteWriter'
Apr 21 07:02:34 xo-ce xo-server[12334]: }
Apr 21 07:02:35 xo-ce xo-server[12334]: 2026-04-21T11:02:35.100Z xo:xapi WARN retry {
Apr 21 07:02:35 xo-ce xo-server[12334]: attemptNumber: 0,
Apr 21 07:02:35 xo-ce xo-server[12334]: delay: 5000,
Apr 21 07:02:35 xo-ce xo-server[12334]: error: XapiError: VDI_IN_USE(OpaqueRef:e1cfcc8f-1060-150d-8514-2d625fe79aa7, destroy)
Apr 21 07:02:35 xo-ce xo-server[12334]: at XapiError.wrap (file:///opt/xen-orchestra/packages/xen-api/_XapiError.mjs:16:12)
Apr 21 07:02:35 xo-ce xo-server[12334]: at default (file:///opt/xen-orchestra/packages/xen-api/_getTaskResult.mjs:13:29)
Apr 21 07:02:35 xo-ce xo-server[12334]: at Xapi._addRecordToCache (file:///opt/xen-orchestra/packages/xen-api/index.mjs:1078:24)
Apr 21 07:02:35 xo-ce xo-server[12334]: at file:///opt/xen-orchestra/packages/xen-api/index.mjs:1112:14
Apr 21 07:02:35 xo-ce xo-server[12334]: at Array.forEach (<anonymous>)
Apr 21 07:02:35 xo-ce xo-server[12334]: at Xapi._processEvents (file:///opt/xen-orchestra/packages/xen-api/index.mjs:1102:12)
Apr 21 07:02:35 xo-ce xo-server[12334]: at Xapi._watchEvents (file:///opt/xen-orchestra/packages/xen-api/index.mjs:1275:14)
Apr 21 07:02:35 xo-ce xo-server[12334]: at process.processTicksAndRejections (node:internal/process/task_queues:104:5) {
Apr 21 07:02:35 xo-ce xo-server[12334]: code: 'VDI_IN_USE',
Apr 21 07:02:35 xo-ce xo-server[12334]: params: [ 'OpaqueRef:e1cfcc8f-1060-150d-8514-2d625fe79aa7', 'destroy' ],
Apr 21 07:02:35 xo-ce xo-server[12334]: call: undefined,
Apr 21 07:02:35 xo-ce xo-server[12334]: url: undefined,
Apr 21 07:02:35 xo-ce xo-server[12334]: task: task {
Apr 21 07:02:35 xo-ce xo-server[12334]: uuid: '71d29445-16f6-2ce9-5380-d67b08c2d4ec',
Apr 21 07:02:35 xo-ce xo-server[12334]: name_label: 'Async.VDI.destroy',
Apr 21 07:02:35 xo-ce xo-server[12334]: name_description: '',
Apr 21 07:02:35 xo-ce xo-server[12334]: allowed_operations: [],
Apr 21 07:02:35 xo-ce xo-server[12334]: current_operations: {},
Apr 21 07:02:35 xo-ce xo-server[12334]: created: '20260421T11:02:34Z',
Apr 21 07:02:35 xo-ce xo-server[12334]: finished: '20260421T11:02:34Z',
Apr 21 07:02:35 xo-ce xo-server[12334]: status: 'failure',
Apr 21 07:02:35 xo-ce xo-server[12334]: resident_on: 'OpaqueRef:2b1bb3f1-ceee-ba8f-62d9-74362fee8b3d',
Apr 21 07:02:35 xo-ce xo-server[12334]: progress: 1,
Apr 21 07:02:35 xo-ce xo-server[12334]: type: '<none/>',
Apr 21 07:02:35 xo-ce xo-server[12334]: result: '',
Apr 21 07:02:35 xo-ce xo-server[12334]: error_info: [Array],
Apr 21 07:02:35 xo-ce xo-server[12334]: other_config: {},
Apr 21 07:02:35 xo-ce xo-server[12334]: subtask_of: 'OpaqueRef:NULL',
Apr 21 07:02:35 xo-ce xo-server[12334]: subtasks: [],
Apr 21 07:02:35 xo-ce xo-server[12334]: backtrace: '(((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 5212))((proce>
Apr 21 07:02:35 xo-ce xo-server[12334]: }
Apr 21 07:02:35 xo-ce xo-server[12334]: },
Apr 21 07:02:35 xo-ce xo-server[12334]: fn: 'destroy',
Apr 21 07:02:35 xo-ce xo-server[12334]: arguments: [ 'OpaqueRef:e1cfcc8f-1060-150d-8514-2d625fe79aa7' ],
Apr 21 07:02:35 xo-ce xo-server[12334]: pool: {
Apr 21 07:02:35 xo-ce xo-server[12334]: uuid: '939ed551-fbd6-9868-52d8-d3997b7bf7da',
Apr 21 07:02:35 xo-ce xo-server[12334]: name_label: 'xcp-ng-Pool1'
Apr 21 07:02:35 xo-ce xo-server[12334]: }
Apr 21 07:02:35 xo-ce xo-server[12334]: }
Apr 21 07:02:35 xo-ce xo-server[12334]: 2026-04-21T11:02:35.701Z xo:xapi:vdi INFO OpaqueRef:bb28f5cf-3b2e-2bb2-4f91-bcf86594007f was al>
Apr 21 07:02:35 xo-ce xo-server[12334]: vdiRef: 'OpaqueRef:e1cfcc8f-1060-150d-8514-2d625fe79aa7',
Apr 21 07:02:35 xo-ce xo-server[12334]: vbdRef: 'OpaqueRef:bb28f5cf-3b2e-2bb2-4f91-bcf86594007f'
Apr 21 07:02:35 xo-ce xo-server[12334]: }
Apr 21 07:02:41 xo-ce xo-server[12334]: 2026-04-21T11:02:41.221Z xo:backups:worker INFO backup has ended
Apr 21 07:02:41 xo-ce xo-server[11902]: 2026-04-21T11:02:41.222Z xo:backups:backupWorker WARN incorrect value passed to logger { level: >
Apr 21 07:02:41 xo-ce sudo[12436]: xo-service : PWD=/opt/xen-orchestra/packages/xo-server ; USER=root ; COMMAND=/usr/bin/umount /run/xo->
Apr 21 07:02:41 xo-ce sudo[12436]: pam_unix(sudo:session): session opened for user root(uid=0) by (uid=996)
Apr 21 07:02:41 xo-ce sudo[12436]: pam_unix(sudo:session): session closed for user root
Apr 21 07:03:11 xo-ce xo-server[12334]: 2026-04-21T11:03:11.265Z xo:backups:worker WARN worker process did not exit automatically, forci>
Apr 21 07:03:11 xo-ce xo-server[12334]: 2026-04-21T11:03:11.266Z xo:backups:worker INFO process will exit {
Apr 21 07:03:11 xo-ce xo-server[12334]: duration: 406553270,
Apr 21 07:03:11 xo-ce xo-server[12334]: exitCode: 0,
Apr 21 07:03:11 xo-ce xo-server[12334]: resourceUsage: {
Apr 21 07:03:11 xo-ce xo-server[12334]: userCPUTime: 8354715,
Apr 21 07:03:11 xo-ce xo-server[12334]: systemCPUTime: 2569287,
Apr 21 07:03:11 xo-ce xo-server[12334]: maxRSS: 75452,
Apr 21 07:03:11 xo-ce xo-server[12334]: sharedMemorySize: 0,
Apr 21 07:03:11 xo-ce xo-server[12334]: unsharedDataSize: 0,
Apr 21 07:03:11 xo-ce xo-server[12334]: unsharedStackSize: 0,
Apr 21 07:03:11 xo-ce xo-server[12334]: minorPageFault: 141970,
Apr 21 07:03:11 xo-ce xo-server[12334]: majorPageFault: 0,
Apr 21 07:03:11 xo-ce xo-server[12334]: swappedOut: 0,
Apr 21 07:03:11 xo-ce xo-server[12334]: fsRead: 14032,
Apr 21 07:03:11 xo-ce xo-server[12334]: fsWrite: 2022432,
Apr 21 07:03:11 xo-ce xo-server[12334]: ipcSent: 0,
Apr 21 07:03:11 xo-ce xo-server[12334]: ipcReceived: 0,
Apr 21 07:03:11 xo-ce xo-server[12334]: signalsCount: 0,
Apr 21 07:03:11 xo-ce xo-server[12334]: voluntaryContextSwitches: 20646,
Apr 21 07:03:11 xo-ce xo-server[12334]: involuntaryContextSwitches: 1082
Apr 21 07:03:11 xo-ce xo-server[12334]: },
Apr 21 07:03:11 xo-ce xo-server[12334]: summary: { duration: '7m', cpuUsage: '3%', memoryUsage: '73.68 MiB' }
Apr 21 07:03:11 xo-ce xo-server[12334]: }
Apr 21 07:16:13 xo-ce xo-server[12608]: 2026-04-21T11:16:13.882Z xo:backups:worker INFO starting backup
Apr 21 07:16:13 xo-ce sudo[12621]: xo-service : PWD=/opt/xen-orchestra/packages/xo-server ; USER=root ; COMMAND=/usr/bin/mount -o -t nf>
Apr 21 07:16:13 xo-ce sudo[12621]: pam_unix(sudo:session): session opened for user root(uid=0) by (uid=996)
Apr 21 07:16:13 xo-ce sudo[12621]: pam_unix(sudo:session): session closed for user root
Apr 21 07:16:17 xo-ce xo-server[12608]: 2026-04-21T11:16:17.213Z xo:xapi:xapi-disks INFO export through qcow2
Apr 21 07:16:18 xo-ce xo-server[12608]: 2026-04-21T11:16:18.902Z xo:xapi:xapi-disks INFO export through qcow2
Apr 21 07:16:20 xo-ce xo-server[12608]: 2026-04-21T11:16:20.784Z xo:xapi:xapi-disks WARN can't connect through NBD, fall back to stream >
Apr 21 07:16:23 xo-ce xo-server[12608]: 2026-04-21T11:16:23.412Z xo:xapi:xapi-disks WARN can't connect through NBD, fall back to stream >
Apr 21 07:19:01 xo-ce xo-server[12608]: 2026-04-21T11:19:01.857Z xo:backups:worker ERROR unhandled error event {
Apr 21 07:19:01 xo-ce xo-server[12608]: error: RequestAbortedError [AbortError]: Request aborted
Apr 21 07:19:01 xo-ce xo-server[12608]: at BodyReadable.destroy (/opt/xen-orchestra/node_modules/undici/lib/api/readable.js:51:13)
Apr 21 07:19:01 xo-ce xo-server[12608]: at QcowStream.close (file:///opt/xen-orchestra/@xen-orchestra/qcow2/dist/disk/QcowStream.m>
Apr 21 07:19:01 xo-ce xo-server[12608]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/di>
Apr 21 07:19:01 xo-ce xo-server[12608]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/xapi/disks/XapiQc>
Apr 21 07:19:01 xo-ce xo-server[12608]: at DiskLargerBlock.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/Dis>
Apr 21 07:19:01 xo-ce xo-server[12608]: at TimeoutDisk.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskPas>
Apr 21 07:19:01 xo-ce xo-server[12608]: at XapiDiskSource.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/Disk>
Apr 21 07:19:01 xo-ce xo-server[12608]: at ThrottledDisk.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskP>
Apr 21 07:19:01 xo-ce xo-server[12608]: at ThrottledDisk.diskBlocks (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/>
Apr 21 07:19:01 xo-ce xo-server[12608]: at process.processTicksAndRejections (node:internal/process/task_queues:104:5) {
Apr 21 07:19:01 xo-ce xo-server[12608]: code: 'UND_ERR_ABORTED',
Apr 21 07:19:01 xo-ce xo-server[12608]: Symbol(undici.error.UND_ERR): true,
Apr 21 07:19:01 xo-ce xo-server[12608]: Symbol(undici.error.UND_ERR_ABORT): true,
Apr 21 07:19:01 xo-ce xo-server[12608]: Symbol(undici.error.UND_ERR_ABORTED): true
Apr 21 07:19:01 xo-ce xo-server[12608]: }
Apr 21 07:19:01 xo-ce xo-server[12608]: }
Apr 21 07:19:08
xo-ce xo-server[12608]: 2026-04-21T11:19:08.560Z xo:xapi:xapi-disks INFO export through qcow2 Apr 21 07:19:15 xo-ce xo-server[12608]: 2026-04-21T11:19:15.707Z xo:xapi:xapi-disks WARN can't connect through NBD, fall back to stream > Apr 21 07:23:10 xo-ce xo-server[12608]: 2026-04-21T11:23:10.978Z xo:backups:worker ERROR unhandled error event { Apr 21 07:23:10 xo-ce xo-server[12608]: error: RequestAbortedError [AbortError]: Request aborted Apr 21 07:23:10 xo-ce xo-server[12608]: at BodyReadable.destroy (/opt/xen-orchestra/node_modules/undici/lib/api/readable.js:51:13) Apr 21 07:23:10 xo-ce xo-server[12608]: at QcowStream.close (file:///opt/xen-orchestra/@xen-orchestra/qcow2/dist/disk/QcowStream.m> Apr 21 07:23:10 xo-ce xo-server[12608]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/di> Apr 21 07:23:10 xo-ce xo-server[12608]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/xapi/disks/XapiQc> Apr 21 07:23:10 xo-ce xo-server[12608]: at DiskLargerBlock.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/Dis> Apr 21 07:23:10 xo-ce xo-server[12608]: at TimeoutDisk.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskPas> Apr 21 07:23:10 xo-ce xo-server[12608]: at XapiDiskSource.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/Disk> Apr 21 07:23:10 xo-ce xo-server[12608]: at ThrottledDisk.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskP> Apr 21 07:23:10 xo-ce xo-server[12608]: at ThrottledDisk.diskBlocks (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/> Apr 21 07:23:10 xo-ce xo-server[12608]: at process.processTicksAndRejections (node:internal/process/task_queues:104:5) { Apr 21 07:23:10 xo-ce xo-server[12608]: code: 'UND_ERR_ABORTED', Apr 21 07:23:10 xo-ce xo-server[12608]: Symbol(undici.error.UND_ERR): true, Apr 21 07:23:10 xo-ce xo-server[12608]: Symbol(undici.error.UND_ERR_ABORT): true, Apr 21 07:23:10 xo-ce 
xo-server[12608]: Symbol(undici.error.UND_ERR_ABORTED): true Apr 21 07:23:10 xo-ce xo-server[12608]: } Apr 21 07:23:10 xo-ce xo-server[12608]: } Apr 21 07:26:43 xo-ce xo-server[12608]: 2026-04-21T11:26:43.361Z xo:backups:worker ERROR unhandled error event { Apr 21 07:26:43 xo-ce xo-server[12608]: error: RequestAbortedError [AbortError]: Request aborted Apr 21 07:26:43 xo-ce xo-server[12608]: at BodyReadable.destroy (/opt/xen-orchestra/node_modules/undici/lib/api/readable.js:51:13) Apr 21 07:26:43 xo-ce xo-server[12608]: at QcowStream.close (file:///opt/xen-orchestra/@xen-orchestra/qcow2/dist/disk/QcowStream.m> Apr 21 07:26:43 xo-ce xo-server[12608]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/di> Apr 21 07:26:43 xo-ce xo-server[12608]: at XapiQcow2StreamSource.close (file:///opt/xen-orchestra/@xen-orchestra/xapi/disks/XapiQc> Apr 21 07:26:43 xo-ce xo-server[12608]: at DiskLargerBlock.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/Dis> Apr 21 07:26:43 xo-ce xo-server[12608]: at TimeoutDisk.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskPas> Apr 21 07:26:43 xo-ce xo-server[12608]: at XapiDiskSource.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/Disk> Apr 21 07:26:43 xo-ce xo-server[12608]: at ThrottledDisk.close (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/DiskP> Apr 21 07:26:43 xo-ce xo-server[12608]: at ThrottledDisk.diskBlocks (file:///opt/xen-orchestra/@xen-orchestra/disk-transform/dist/> Apr 21 07:26:43 xo-ce xo-server[12608]: at process.processTicksAndRejections (node:internal/process/task_queues:104:5) { Apr 21 07:26:43 xo-ce xo-server[12608]: code: 'UND_ERR_ABORTED', Apr 21 07:26:43 xo-ce xo-server[12608]: Symbol(undici.error.UND_ERR): true, Apr 21 07:26:43 xo-ce xo-server[12608]: Symbol(undici.error.UND_ERR_ABORT): true, Apr 21 07:26:43 xo-ce xo-server[12608]: Symbol(undici.error.UND_ERR_ABORTED): true Apr 21 07:26:43 xo-ce 
xo-server[12608]: } Apr 21 07:26:43 xo-ce xo-server[12608]: } Apr 21 07:26:47 xo-ce xo-server[12608]: 2026-04-21T11:26:47.173Z xo:backups:worker INFO backup has ended Apr 21 07:26:47 xo-ce sudo[12778]: xo-service : PWD=/opt/xen-orchestra/packages/xo-server ; USER=root ; COMMAND=/usr/bin/umount /run/xo-> Apr 21 07:26:47 xo-ce sudo[12778]: pam_unix(sudo:session): session opened for user root(uid=0) by (uid=996) Apr 21 07:26:47 xo-ce sudo[12778]: pam_unix(sudo:session): session closed for user root Apr 21 07:26:47 xo-ce xo-server[12608]: 2026-04-21T11:26:47.222Z xo:backups:worker INFO process will exit { Apr 21 07:26:47 xo-ce xo-server[12608]: duration: 633339340, Apr 21 07:26:47 xo-ce xo-server[12608]: exitCode: 0, Apr 21 07:26:47 xo-ce xo-server[12608]: resourceUsage: { Apr 21 07:26:47 xo-ce xo-server[12608]: userCPUTime: 1260533493, Apr 21 07:26:47 xo-ce xo-server[12608]: systemCPUTime: 332811098, Apr 21 07:26:47 xo-ce xo-server[12608]: maxRSS: 419224, Apr 21 07:26:47 xo-ce xo-server[12608]: sharedMemorySize: 0, Apr 21 07:26:47 xo-ce xo-server[12608]: unsharedDataSize: 0, Apr 21 07:26:47 xo-ce xo-server[12608]: unsharedStackSize: 0, Apr 21 07:26:47 xo-ce xo-server[12608]: minorPageFault: 3113937, Apr 21 07:26:47 xo-ce xo-server[12608]: majorPageFault: 0, Apr 21 07:26:47 xo-ce xo-server[12608]: swappedOut: 0, Apr 21 07:26:47 xo-ce xo-server[12608]: fsRead: 14920, Apr 21 07:26:47 xo-ce xo-server[12608]: fsWrite: 440514224, Apr 21 07:26:47 xo-ce xo-server[12608]: ipcSent: 0, Apr 21 07:26:47 xo-ce xo-server[12608]: ipcReceived: 0, Apr 21 07:26:47 xo-ce xo-server[12608]: signalsCount: 0, Apr 21 07:26:47 xo-ce xo-server[12608]: voluntaryContextSwitches: 4431961, Apr 21 07:26:47 xo-ce xo-server[12608]: involuntaryContextSwitches: 1742254 Apr 21 07:26:47 xo-ce xo-server[12608]: }, Apr 21 07:26:47 xo-ce xo-server[12608]: summary: { duration: '11m', cpuUsage: '252%', memoryUsage: '409.4 MiB' } Apr 21 07:26:47 xo-ce xo-server[12608]: }
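For anyone who wants to tally these without scrolling, here is a rough sketch in Python that counts the `can't connect through NBD, fall back to stream` warnings and the `UND_ERR_ABORTED` errors per xo-server worker PID. It assumes the journal text looks like the paste above (syslog-style `Apr 21 07:16:20 xo-ce xo-server[12608]: ` prefixes, possibly flattened onto a few long lines). The function name `summarize` and the two labels are made up for illustration and are not part of Xen Orchestra.

```python
import re
from collections import Counter

# Zero-width split point in front of every syslog-style prefix, e.g.
# "Apr 21 07:16:20 xo-ce xo-server[12608]: " (format assumed from the paste above).
PREFIX = re.compile(r"(?=[A-Z][a-z]{2} +\d{1,2} \d{2}:\d{2}:\d{2} \S+ [\w-]+\[\d+\]: )")

# One journal entry: timestamp, host, unit[pid], then the message.
ENTRY = re.compile(
    r"^[A-Z][a-z]{2} +\d{1,2} \d{2}:\d{2}:\d{2} \S+ ([\w-]+)\[(\d+)\]: (.*)$",
    re.S,
)


def summarize(text):
    """Count NBD fallbacks and aborted requests per xo-server PID.

    Hypothetical helper: the labels 'nbd_fallback' and 'request_aborted'
    are illustrative names, not anything XO itself reports.
    """
    counts = Counter()
    for chunk in PREFIX.split(text):
        match = ENTRY.match(chunk.strip())
        if match is None:
            continue  # leading fragment or an entry with an empty message
        unit, pid, message = match.groups()
        if unit != "xo-server":
            continue  # skip sudo / pam_unix entries
        if "can't connect through NBD" in message:
            counts[(pid, "nbd_fallback")] += 1
        elif "code: 'UND_ERR_ABORTED'" in message:
            counts[(pid, "request_aborted")] += 1
    return counts


if __name__ == "__main__":
    import sys

    # Usage (assumed): journalctl -u xo-server | python3 summarize_log.py
    for (pid, kind), n in sorted(summarize(sys.stdin.read()).items()):
        print(f"pid {pid}: {kind} x{n}")
```

It only matches the two strings visible in the log above, so if the wording of the messages changes between XO versions the patterns would need adjusting.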
