Alert: Control Domain Memory Usage

-

@borzel How frequently do you restart VMs? And what's the last dom-id?

# xl list

-

@r1 In general we do not restart many of our VMs; it's all very static and only manually operated.

xen19 has now been rebooted (we need it in production) with kernel-alt - highest id is currently 4

xen22 (pool master of another affected pool) - highest id is currently 30
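For anyone who wants to report the same figure, here is a minimal sketch of reading the highest dom-id out of `xl list` output. It assumes the usual six-column layout (Name, ID, Mem, VCPUs, State, Time(s)); the helper name `highest_domid` and the sample text are hypothetical and not taken from any host in this thread.

```python
def highest_domid(xl_list_output):
    """Return the largest domain ID found in `xl list` output, or None."""
    ids = []
    for line in xl_list_output.strip().splitlines():
        # Split from the right so domain names containing spaces stay intact:
        # [name, id, mem, vcpus, state, time]
        fields = line.rsplit(None, 5)
        # The header row is skipped automatically because "ID" is not a number.
        if len(fields) == 6 and fields[1].isdigit():
            ids.append(int(fields[1]))
    return max(ids) if ids else None


# Hypothetical sample output, for illustration only.
sample = """\
Name                                        ID   Mem VCPUs      State   Time(s)
Domain-0                                     0  8192     8     r-----   12345.6
my test vm                                  30  4096     2     -b----     678.9
"""

print(highest_domid(sample))
```

On a host, the text could come from something like `subprocess.run(["xl", "list"], capture_output=True, text=True).stdout`.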

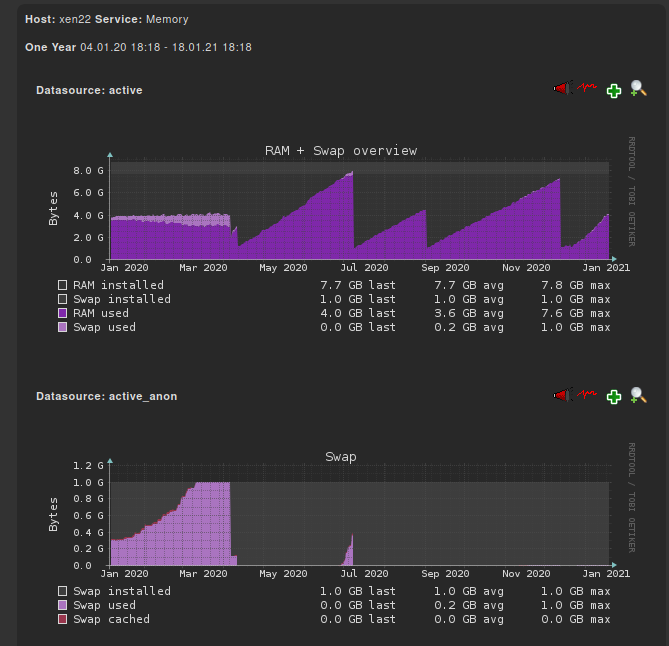

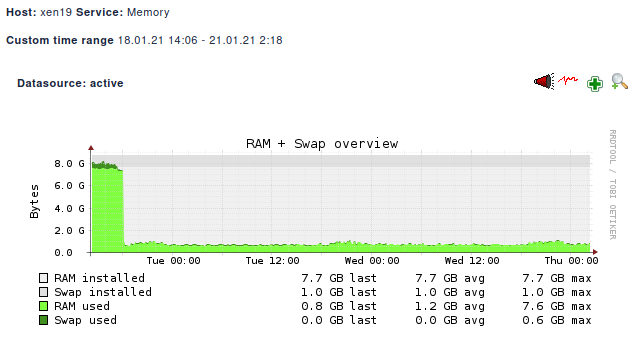

memory graphs of xen22

yum.log of xen22 (the problem also appeared here after installing kernel-4.19.19-6.0.10.1.xcpng8.1.x86_64):

    yum.log.5.gz:Dec 19 00:52:47 Updated: kernel-4.4.52-4.0.12.x86_6
    yum.log.3.gz:Nov 08 10:07:40 Updated: kernel-4.4.52-4.0.13.x86_64
    yum.log.1:Apr 10 20:31:01 Installed: kernel-4.19.19-6.0.10.1.xcpng8.1.x86_64
    yum.log.1:Aug 31 23:10:50 Updated: kernel-4.19.19-6.0.11.1.xcpng8.1.x86_64
    yum.log.1:Dec 11 18:00:54 Updated: kernel-4.19.19-6.0.12.1.xcpng8.1.x86_64
    yum.log.1:Dec 19 12:52:00 Updated: kernel-4.19.19-6.0.13.1.xcpng8.1.x86_64
    yum.log.1:Dec 19 12:54:13 Updated: kernel-4.19.19-7.0.9.1.xcpng8.2.x86_64

-

@borzel Between 4.19.19-6.0.9 and 4.19.19-6.0.10, the following two patches were added:

0001-block-cleanup-__blkdev_issue_discard.patch

0001-block-fix-32-bit-overflow-in-__blkdev_issue_discard.patch

Both are well vetted and seem stable, with no further changes made to them since. Was anything else updated along with the kernel?

-

@r1 yes, every line marked "installed" in yum.log is an upgrade of XCP-ng.

Problems started with XCP-ng 8.x

-

@borzel did you "yum upgrade" from 7.x to 8.x?

-

@stormi on server xen19 I think so, on server xen22 I'm not sure

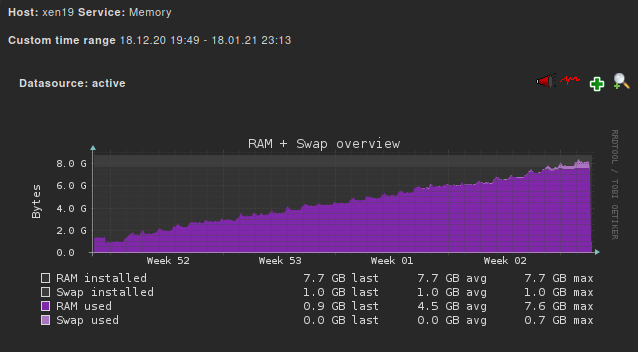

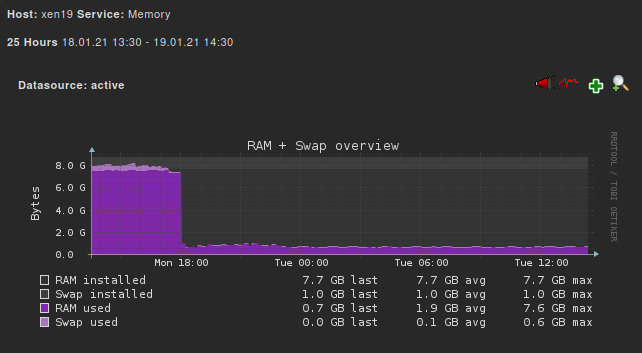

I looked more closely at my memory graphs and saw that the memory baseline increases every night:

"bump" every day:

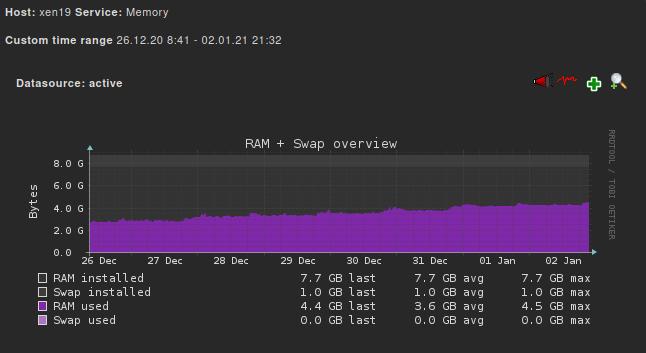

closer look in week 53:

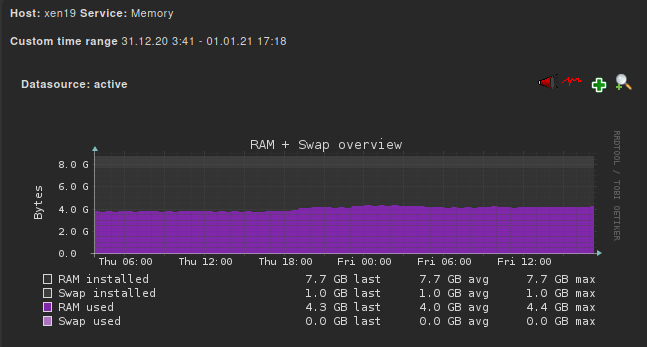

Dec 31 - Jan 01

Our backups run from 18:00 until 3 or 4 in the morning (including coalesce).

--> maybe the heavy IO load leads to memory leaks "somewhere"?
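To put numbers on the nightly "bump", one rough approach is to take the lowest used-memory reading of each day as that day's baseline and look at how much it rises from one day to the next. The sketch below assumes you already have timestamped readings of dom0 used memory; the function names and the sample values are made up for illustration and are not read off the graphs above.

```python
from datetime import datetime


def daily_baseline(samples):
    """Map each calendar day to the lowest used-memory reading seen that day."""
    lows = {}
    for timestamp, used_mb in samples:
        day = timestamp.date()
        lows[day] = min(used_mb, lows.get(day, used_mb))
    return dict(sorted(lows.items()))


def baseline_steps(baseline):
    """Return (day, increase in MB over the previous day's baseline) pairs."""
    days = list(baseline)
    return [(cur, baseline[cur] - baseline[prev]) for prev, cur in zip(days, days[1:])]


# Hypothetical readings (MB used): a midday low and a peak during the backup window.
samples = [
    (datetime(2020, 12, 30, 12, 0), 2100),
    (datetime(2020, 12, 30, 23, 0), 2600),
    (datetime(2020, 12, 31, 12, 0), 2180),
    (datetime(2020, 12, 31, 23, 0), 2700),
    (datetime(2021, 1, 1, 12, 0), 2260),
]

for day, step in baseline_steps(daily_baseline(samples)):
    print(day, f"{step:+d} MB")
```

A baseline that steps up by a similar amount after every backup night, and stays flat on nights without backups, would support the IO-load suspicion.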

-

Good news from the kernel-alt (server xen19): No RAM leaks so far

-

At least that's consistent

Thanks for the feedback @borzel

-

@borzel

yum upgrade from 7.x to 8.x is not supported, so it's likely that your host isn't in a perfectly clean state. This is unrelated to the memory leak, but could cause other kinds of issues. Basically, scripts that should have run during the RPM upgrade to ensure the final state is consistent with what you'd have from an ISO upgrade either don't exist or haven't been tested.

-

@stormi I'm not completely sure whether I did the ISO upgrade or not...

But it's a good idea to reinstall the poolmaster from scratch...

-

@borzel I wish comparing kernel-alt and the base kernel made this easy to catch... I'm sure that the tapdisk IO code is the same in kernel and kernel-alt.

The 2 patches mentioned earlier are also present in the base kernel of xcp-ng 8.2 as well as in kernel-alt 4.19.142. They are present in the xcp-ng 8.1 base kernel too, but not in the xcp-ng 8.1 kernel-alt.

Can you confirm your kernel-alt version?

-

@borzel Also, I need to be able to reproduce the issue in the lab. If you can give more details about how you do your backups, maybe I can simulate something.

-

@r1 If it helps at all, I have seen this more often on the pool master than in other pool hosts. We are using XO delta backup on 125 VMs in this pool daily. So, the master is busy doing a lot of snapshot coalesce operations (lots of iSCSI storage IO) compared to other hosts. The other host that has hit 95% control domain memory use is also IO heavy (it has some database server VMs).

-

@r1 our complete setup:

[FreeNAS NFS] <----shared-storage----> [Pool of 2 servers (xen22 + xen23)] ----XAPI---> [XO from sources] -----remote----> [FreeNAS NFS]

All [servers] are real hardware servers, no VMs involved.

Same chain of servers for xen19, except there are more pool members (and VMs).

-

    [02:27 xen19 ~]# uname -a
    Linux xen19 4.19.142 #1 SMP Tue Nov 3 11:27:36 CET 2020 x86_64 x86_64 x86_64 GNU/Linux
    [02:30 xen19 ~]# yum list installed | grep kernel
    kernel.x86_64       4.19.19-7.0.9.1.xcpng8.2    @xcp-ng-updates
    kernel-alt.x86_64   4.19.142-1.xcpng8.2         @xcp-ng-base

memory graph so far:

looking very good!!!

-

@borzel Thanks. That rules out my suspicion about those patches. We are still trying to reproduce this issue, without success so far. We would really appreciate it if you or someone from the community could arrange a test host that shows this problem. A test host is needed because we will have to replace the kernel multiple times to observe changes.

Another test users can do is to take iSCSI out of the equation: run some workloads on local disks (with backups) and check control domain memory usage.
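When checking control domain memory during such a test, it can help to compare two snapshots of `/proc/meminfo` and see which counters grew the most, since growth in `Slab` or `SUnreclaim` points at kernel-side allocations rather than userspace processes. This is only a sketch: the helper names and the two sample snapshots are invented for illustration, and nothing here has been confirmed as the cause of the leak discussed in this thread.

```python
def parse_meminfo(text):
    """Parse /proc/meminfo text into {field: value}.

    Most values are in kB; a few fields (e.g. HugePages_Total) are plain counts.
    """
    values = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        parts = rest.split()
        if sep and parts and parts[0].isdigit():
            values[name.strip()] = int(parts[0])
    return values


def largest_growth(before, after, top=5):
    """Return the `top` fields with the biggest increase between two snapshots."""
    deltas = {key: after[key] - before[key] for key in before.keys() & after.keys()}
    return sorted(deltas.items(), key=lambda item: item[1], reverse=True)[:top]


# Hypothetical snapshots taken some days apart (values in kB).
before = parse_meminfo("MemFree: 6000000 kB\nSlab: 100000 kB\nSUnreclaim: 50000 kB\n")
after = parse_meminfo("MemFree: 5700000 kB\nSlab: 350000 kB\nSUnreclaim: 290000 kB\n")

for field, delta in largest_growth(before, after, top=2):
    print(f"{field}: +{delta} kB")
```

In dom0, a real snapshot could be taken with `parse_meminfo(open("/proc/meminfo").read())` before and after a backup run.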

-

Good morning.

Long time lurker, first time commenter.

Just wanted to add my 2c worth to this conversation, in case it helps.

We are running 37 XCP-ng servers, most on Xen 8.0, a mixture of Dell R630/640 and some odd Supermicro servers, and we have been experiencing this issue on some of them, where DOM0 runs out of memory (free -m shows very little RAM left, like in the first post).

We see a performance impact on DOM0 (but the VMs still run): just trying to SSH to DOM0 or use xsconsole is slow, and eventually DOM0's network fails and nothing we try restarts the networking. When DOM0's network fails, all the VMs also lose network connectivity. The only resolution is to manually stop each VM via the command line and then reboot the Xen host.

With one exception, all Xen servers that have experienced this issue had an uptime of at least 200 days, but the thing I find interesting is that the servers with issues also have a kubernetes data node on them.

I assume something that kubernetes does is causing the issue. The boxes that do not have kubernetes on them (with 1 exception) have never had this issue.

I have a spare Dell R640 that I'm currently doing some testing on, to see if I can create lots of VMs and put a heap of CPU/memory/IO load on it to replicate the issue, and if I can, to try the alternative kernel to see if that makes any difference.

I'll provide feedback on what I find.

Gary

-

Thanks! Feedback is vital to help us.

-

FWIW, no kubernetes in our environment with this issue.

-

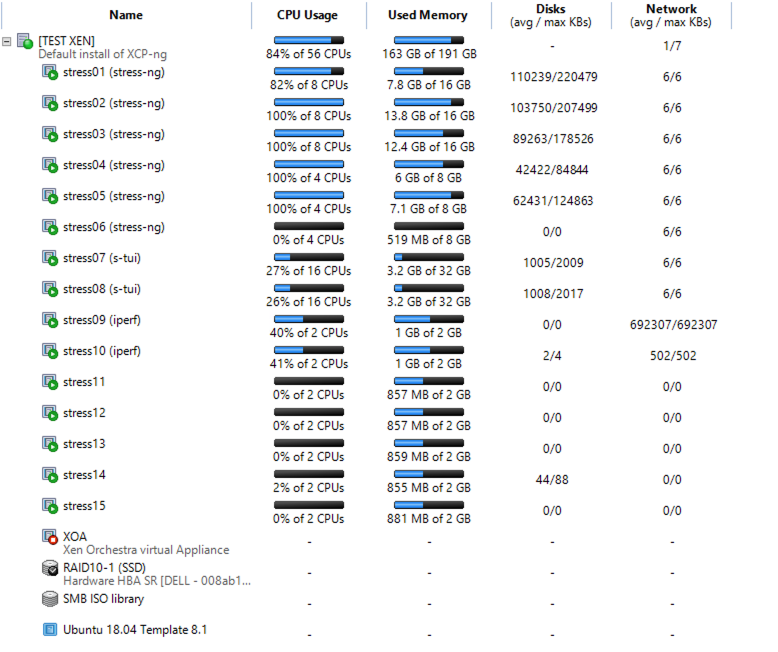

Some feedback on some testing that I have done.

I have spun up some VMs and done some stress testing on them with a combination of stress-ng, s-tui and iperf and, though slow, I can see a drop over time of DOM0 free memory:

- 26.1.2021 -- 662 MB used -- 6307 MB free (initial)

- 27.1.2021 -- 726 MB used -- 6270 MB free

- 28.1.2021 11:36 AM -- 840 MB used -- 6123 MB free

- 28.1.2021 12:23 AM -- 866 MB used -- 6191 MB free

- 28.1.2021 3:59 PM -- 877 MB used -- 6056 MB free

- 28.1.2021 4:35 PM -- 883 MB used -- 6048 MB free

- 29.1.2021 7:32 AM -- 897 MB used -- 6004 MB free
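As a rough worked example of the trend in these readings, the sketch below takes the first and last entries and computes the average growth of used memory per day. The first readings have no time of day, so it treats 26.1 and 29.1 as three whole days apart, which is an approximation. The linear extrapolation to "days until free memory is gone" is purely an assumption for illustration; real usage may not grow linearly, and the function names are hypothetical.

```python
from datetime import date

# (date, MB used, MB free) taken from the first, second and last readings above.
readings = [
    (date(2021, 1, 26), 662, 6307),
    (date(2021, 1, 27), 726, 6270),
    (date(2021, 1, 29), 897, 6004),
]


def growth_per_day(readings):
    """Average increase in used memory (MB/day) between first and last reading."""
    first_day, first_used, _ = readings[0]
    last_day, last_used, _ = readings[-1]
    return (last_used - first_used) / (last_day - first_day).days


def days_until_exhausted(free_mb, rate_mb_per_day):
    """Days until free memory reaches zero, assuming constant linear growth."""
    return free_mb / rate_mb_per_day


rate = growth_per_day(readings)
print(f"used memory grows by about {rate:.1f} MB/day")
print(f"about {days_until_exhausted(readings[-1][2], rate):.0f} days of headroom at that rate")
```

Under the stress load used in this test that works out to a bit under 80 MB per day; a lightly loaded production host would presumably grow more slowly.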

This is my setup - XCP-ng 8.0 standard kernel

Below are some notes on what I did to do this test.

DOM0 Memory issue.txt

I'll now try different kernels and upgrading to XCP-ng 8.2 (both standard and alternative kernels) and see if I can continue to replicate the issue.

Re: kubernetes - I'm not 100% sure that it is causing this; it just seems to be a common factor. Based on the stress testing, though, lots of CPU/memory/IO seems to cause DOM0 memory usage to increase.
