10GB backup with XOA (XCP to windows NFS)

-

@olivierlambert Will connecting with http://<IP> send the login/password in plain text over the network?

-

Yes, it's just for testing whether it's related to the XAPI bottleneck (stunnel).

-

After the test with http://ipserver, the speed shown in the backup report is 33.8 MiB/s.

Could I use iperf on XCP?

-

So it's a bit better indeed (25% better performance!).

You can use iperf indeed, but I already know the result: it won't be a bandwidth issue. It all comes down to the export speed of the host itself.

-

What do you mean by export speed of the host?

Do a 10Gb NIC and a 1Gb NIC behave the same for XCP-ng or XOA? As far as I can see, whether I use 1Gb or 10Gb nothing changes, and the performance is bad in both cases.

-

When you do a backup, this will happen:

- dom0 will access the VM disk (snapshot disk but whatever)

- it will create an HTTP handler where the disk data can be fetched

- it will give the URL of this HTTP handler to XO

- then XO will fetch from this HTTP(S) handler

- XO will stream the content of this handler to the NFS share

We know that a major bottleneck is the speed at which the HTTP handler can be fetched.
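
To make the last two steps concrete, here is a minimal Python sketch of "fetch from a stream, write to a target" with a throughput measurement. It is only an illustration, not how XO is implemented: `stream_copy` and `mib_per_second` are hypothetical names, and in-memory buffers stand in for the HTTP handler and the NFS share.

```python
import io
import time


def stream_copy(source, sink, chunk_size=1024 * 1024):
    """Copy a readable stream to a writable one, chunk by chunk.

    Returns (bytes_copied, elapsed_seconds).
    """
    copied = 0
    start = time.perf_counter()
    while True:
        chunk = source.read(chunk_size)
        if not chunk:  # empty read means the source is exhausted
            break
        sink.write(chunk)
        copied += len(chunk)
    return copied, time.perf_counter() - start


def mib_per_second(byte_count, seconds):
    """Convert a byte count and a duration into MiB/s."""
    if seconds <= 0:
        return float("inf")
    return byte_count / (1024 * 1024) / seconds


if __name__ == "__main__":
    # Stand-ins: in a real backup the source would be the host's HTTP
    # handler and the sink a file on the NFS share.
    source = io.BytesIO(b"\0" * (8 * 1024 * 1024))
    sink = io.BytesIO()
    copied, elapsed = stream_copy(source, sink)
    print(f"copied {copied} bytes at {mib_per_second(copied, elapsed):.1f} MiB/s")
```

Whatever the sink can do, the loop can never go faster than `source.read()` returns data, which is the point about the handler above.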

-

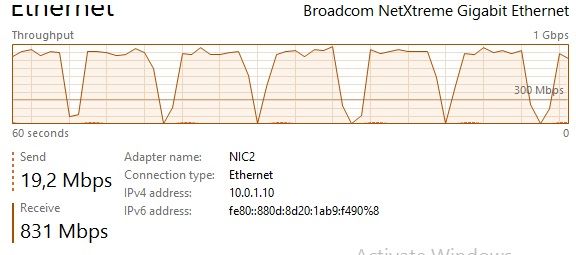

We checked the network interface status and found that the network behaves as shown in the attached picture.

Is that normal? It goes up, then drops to 0, then goes up again, and so on.

(This is a new test using a 1Gb NIC, to see whether XOA uses the 1Gb NIC to its full capacity.) We will try the 10Gb NIC soon.

-

Please be more explicit. What generated this output?

iperf?

-

XOA backup.

We started a backup of a powered-off Windows Server 2016.

When we copy from a NAS to the same NFS share, we don't have the same issue.

-

With the 10Gb NIC the issue is worse. Now the backup shows 4-5 packets of around 700MB, then 10-15 seconds with only a few KB, and then again 4-5 packets of around 700MB.

Before, we didn't have such long periods without high transfer speed. I think that's because the 10Gb NIC can send more with fewer packets.

-

Again, you need to think globally. The transfer speed depends on the slowest element. If the HTTP handler can't export faster than, let's say, 30 MiB/s, then it won't magically be transferred faster than that, despite having a 100G network.

You have export speed, SR speed, stream speed, write speed, protocol speed, read seek speed etc.
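
As a rough illustration of the "slowest element" reasoning, here is a small Python sketch. The 30 MiB/s export figure is the example value from above; the function names and the two-stage pipeline are assumptions made for the illustration, and protocol overhead is ignored.

```python
def gbit_to_mib_per_s(gbit):
    """Raw line rate of a link in MiB/s (no protocol overhead)."""
    return gbit * 1_000_000_000 / 8 / (1024 * 1024)


def slowest_stage(stage_speeds):
    """Return (name, speed) of the stage that caps the whole pipeline."""
    name = min(stage_speeds, key=stage_speeds.get)
    return name, stage_speeds[name]


def pipeline(nic_gbit, export_mib_per_s=30.0):
    """Hypothetical two-stage pipeline: host export, then network."""
    return {
        "host export (HTTP handler)": export_mib_per_s,
        "network": gbit_to_mib_per_s(nic_gbit),
    }


if __name__ == "__main__":
    for nic in (1, 10, 100):
        name, speed = slowest_stage(pipeline(nic))
        print(f"{nic:>3} Gbit/s NIC: capped at {speed:.1f} MiB/s by {name}")
```

With these assumed numbers the cap is the export stage for every NIC size, so swapping a 1Gb NIC for a 10Gb one changes nothing.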

-

What do you mean by HTTP? Can you be more specific about the HTTP limitations?

-

I explained that here: https://xcp-ng.org/forum/post/28832

-

Do you know where I can find some data about the HTTP handler on XCP/XenServer? How many GB can be fetched through the handler?

-

Hi,

I opened this ticket because our datacenter has 67 VMs and right now a full backup takes 20 hours.

We need to speed it up a lot.

The differential backup needs 5 hours to back up 350GB.

-

I think that's likely the bottleneck, and apart from disabling HTTPS, there's no magical rule. You can play with concurrency in XO to see if it's better.
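
For scale, the differential figure quoted above (350GB in 5 hours) can be turned into an average rate. The Python sketch below is just that arithmetic; the function name is made up for the example, and since it isn't stated whether "GB" is decimal or binary, both are computed. Either way the average lands at roughly 18 to 20 MiB/s over the whole job, far below what even a 1Gb link can carry.

```python
def average_rate_mib_per_s(size_bytes, hours):
    """Average throughput in MiB/s for a job of a given size and duration."""
    return size_bytes / (hours * 3600) / (1024 * 1024)


if __name__ == "__main__":
    decimal_gb = average_rate_mib_per_s(350 * 10**9, 5)  # GB = 10^9 bytes
    binary_gb = average_rate_mib_per_s(350 * 2**30, 5)   # GB = 2^30 bytes
    print(f"350 GB (decimal) in 5 h: {decimal_gb:.1f} MiB/s")
    print(f"350 GB (binary)  in 5 h: {binary_gb:.1f} MiB/s")
```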

Also, it's not a "ticket" you opened, but a thread on a community forum, where people answer in their free time.

-

Sorry, I know this isn't a ticket; I meant the question here on the forum.

We wrote this thread because we cannot find a solution anywhere to our question of how to speed up the backup.

We are running a lot of tests right now, but nothing is improving, and we have found a lot of limits in XOA/XCP-ng and in XenServer 7.1 CU2 too. We are a bit worried about this backup issue.

-

Note that you can scale up with concurrency (see https://xen-orchestra.com/docs/backups.html#backup-concurrency).

Also, if you have different pools, you could use XOA Proxies (alternatively, multiple XO instances from sources, but it's less elegant) so you can add up the speed of all pools to get a decent global speed.

-

We see about the same speed, just below 1Gbit/s no matter how many concurrent backups and what not. The bursting is probably due to buffering in the node-process.

I think this is a limitation in XAPI just like @olivierlambert wrote earlier and nothing much you can do in XO to speed it up.

We've been trying to speed things up for a long time, and we ended up using Delta Backup and Continuous Replication, which are both incremental. This allows us to back up all our VMs overnight to our NFS machines.

-

We'll get rid of potential stream buffer limitation in a relatively short time frame, but again, I'm pretty sure the export speed on the host is the real bottleneck.

As we are also working on XCP-ng storage performance in parallel, I'm confident we'll be able to improve that.