RunX: tech preview

-

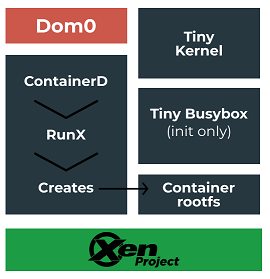

What is RunX?

See https://xcp-ng.org/blog/2021/09/14/runx-next-generation-secured-containers/ for more details.

Do I need it?

If you want to test a high level of security for your containers, yes. Or if you like to play with shiny new tech and report bugs.

How to test it

THIS IS A TECH PREVIEW

It's not meant to run in production. Play with it in your lab only. You have been warned.

Follow the next section.

Installation

1. Create the repo, install packages + restart toolstack.

Create the file /etc/yum.repos.d/xcp-ng-runx.repo with:

    [xcp-ng-runx]
    name=XCP-ng runx Repository
    baseurl=http://mirrors.xcp-ng.org/8/8.2/runx/x86_64/ http://updates.xcp-ng.org/8/8.2/runx/x86_64/
    enabled=1
    gpgcheck=1
    repo_gpgcheck=1
    gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-xcpng

Then, install all the packages:

    yum install --enablerepo=epel -y qemu-dp xenopsd xenopsd-cli xenopsd-xc xcp-ng-xapi-storage runx
    yum install --enablerepo="base,extras" docker podman
    xe-toolstack-restart

2. Create a 9p SR

    mkdir runx-sr
    mkdir -p /root/runx-sr
    xe sr-create type=fsp name-label=runx-sr device-config:file-uri=/root/runx-sr

3. Create a VM template

You must create a VM template with the desired amount of RAM and number of cores. Make sure the VM type is PV.

It's not necessary to add a disk to this VM.

4. Modify the runx config

Open /etc/runx.conf and modify these variables: SR_UUID and TEMPLATE_UUID.

5. Start a VM.

With Podman

Using podman:

    podman pull archlinux
    podman container create --name archlinux archlinux
    podman start archlinux

With Docker

With docker:

- Use the previous commands and replace podman with docker.
- You must also start the docker daemon with an empty bridge, using docker -b none.
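Step 4 can also be scripted rather than edited by hand. The sketch below is not part of RunX: it assumes /etc/runx.conf holds plain KEY=value lines (the file itself is not shown in this thread), and set_conf_value is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch only. Assumption: the config file uses one KEY=value assignment per line.

# set_conf_value FILE KEY VALUE
# Rewrites an existing "KEY=..." line, or appends "KEY=VALUE" if KEY is absent.
set_conf_value() {
    file="$1"; key="$2"; value="$3"
    if grep -q "^${key}=" "$file"; then
        tmp=$(mktemp) || return 1
        # UUIDs contain only hex digits and dashes, so "|" is a safe sed delimiter.
        sed "s|^${key}=.*|${key}=${value}|" "$file" > "$tmp" &&
            cat "$tmp" > "$file"   # overwrite in place, keeping owner and mode
        rm -f "$tmp"
    else
        printf '%s=%s\n' "$key" "$value" >> "$file"
    fi
}

# Example, run as root on the host (the template name is a placeholder):
#   SR=$(xe sr-list name-label=runx-sr --minimal)
#   TPL=$(xe template-list name-label="my-runx-template" --minimal)
#   set_conf_value /etc/runx.conf SR_UUID "$SR"
#   set_conf_value /etc/runx.conf TEMPLATE_UUID "$TPL"
```

Keeping the lookup by name-label in the example avoids copying UUIDs by hand, which is the easiest place to make a mistake in this step.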

Feedback

Please test and report any problems.

-

@olivierlambert Just as a heads up, there's a dependency on jq which is in EPEL. Not an issue, but worth noting.

-

Ah good to know, let me fix the command line then

edit: fixed!

-

Well. It would appear I ballsed something up. Followed the instructions above through step 4, replaced step five with the below command.

How big is the VHD that it tries to create? If it's any larger than ~12 GB, that's probably why it's failing.

edit: oh, I also didn't install docker

    [12:20 lenovo150 ~]# podman run --rm -it -v podtest:/data matrixdotorg/mjolnir:latest
    umount: /var/lib/containers/storage/overlay/1348371eb61cde6fde7d79c285122d12a423309749b9f482fe542899b3b7196a/merged/run/.containerenv: not mounted
    umount: /var/lib/containers/storage/overlay/1348371eb61cde6fde7d79c285122d12a423309749b9f482fe542899b3b7196a/merged/run/.containerenv: not mounted
    umount: /var/lib/containers/storage/overlay/1348371eb61cde6fde7d79c285122d12a423309749b9f482fe542899b3b7196a/merged/run/.containerenv: not mounted
    umount: /var/lib/containers/storage/overlay/1348371eb61cde6fde7d79c285122d12a423309749b9f482fe542899b3b7196a/merged/run/.containerenv: not mounted
    umount: /var/lib/containers/storage/overlay/1348371eb61cde6fde7d79c285122d12a423309749b9f482fe542899b3b7196a/merged/run/.containerenv: not mounted
    umount: /var/lib/containers/storage/overlay/1348371eb61cde6fde7d79c285122d12a423309749b9f482fe542899b3b7196a/merged/etc/resolv.conf: not mounted
    umount: /var/lib/containers/storage/overlay/1348371eb61cde6fde7d79c285122d12a423309749b9f482fe542899b3b7196a/merged/etc/resolv.conf: not mounted
    umount: /var/lib/containers/storage/overlay/1348371eb61cde6fde7d79c285122d12a423309749b9f482fe542899b3b7196a/merged/etc/resolv.conf: not mounted
    umount: /var/lib/containers/storage/overlay/1348371eb61cde6fde7d79c285122d12a423309749b9f482fe542899b3b7196a/merged/etc/resolv.conf: not mounted
    umount: /var/lib/containers/storage/overlay/1348371eb61cde6fde7d79c285122d12a423309749b9f482fe542899b3b7196a/merged/etc/resolv.conf: not mounted
    Error: container create failed (no logs from conmon): EOF

-

Does it work after Docker is installed?

-

@olivierlambert Same error.

-

Can you detail the podman arguments you are using? Also, I suppose you did the same on a "regular" VM or host and it worked?

-

@olivierlambert Well, for the sake of debugging I tried using the archlinux example in the above post. Debug readout below.

    [15:32 lenovo150 ~]# podman --log-level debug start archlinux
    DEBU[0000] Reading configuration file "/etc/containers/libpod.conf"
    DEBU[0000] Merged system config "/etc/containers/libpod.conf": &{{false false false false false true} 0 { [] [] []} docker:// runx map[crun:[/usr/bin/crun /usr/local/bin/crun] runc:[/usr/bin/runc /usr/sbin/runc /usr/local/bin/runc /usr/local/sbin/runc /sbin/runc /bin/runc /usr/lib/cri-o-runc/sbin/runc /run/current-system/sw/bin/runc] runx:[/usr/bin/runx]] [runx] [runx] [] [/usr/libexec/podman/conmon /usr/local/libexec/podman/conmon /usr/local/lib/podman/conmon /usr/bin/conmon /usr/sbin/conmon /usr/local/bin/conmon /usr/local/sbin/conmon /run/current-system/sw/bin/conmon] [PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin] systemd /var/run/libpod -1 false /etc/cni/net.d/ [/usr/libexec/cni /usr/lib/cni /usr/local/lib/cni /opt/cni/bin] runx [] k8s.gcr.io/pause:3.1 /pause false false 2048 shm false}
    DEBU[0000] Reading configuration file "/usr/share/containers/libpod.conf"
    DEBU[0000] Merged system config "/usr/share/containers/libpod.conf": &{{false false false false false true} 0 { [] [] []} docker:// runx map[crun:[/usr/bin/crun /usr/local/bin/crun] runc:[/usr/bin/runc /usr/sbin/runc /usr/local/bin/runc /usr/local/sbin/runc /sbin/runc /bin/runc /usr/lib/cri-o-runc/sbin/runc /run/current-system/sw/bin/runc] runx:[/usr/bin/runx]] [runx] [runx] [] [/usr/libexec/podman/conmon /usr/local/libexec/podman/conmon /usr/local/lib/podman/conmon /usr/bin/conmon /usr/sbin/conmon /usr/local/bin/conmon /usr/local/sbin/conmon /run/current-system/sw/bin/conmon] [PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin] systemd /var/run/libpod -1 false /etc/cni/net.d/ [/usr/libexec/cni /usr/lib/cni /usr/local/lib/cni /opt/cni/bin] runx [] k8s.gcr.io/pause:3.1 /pause false false 2048 shm false}
    DEBU[0000] Using conmon: "/usr/bin/conmon"
    DEBU[0000] Initializing boltdb state at /var/lib/containers/storage/libpod/bolt_state.db
    DEBU[0000] Using graph driver overlay
    DEBU[0000] Using graph root /var/lib/containers/storage
    DEBU[0000] Using run root /var/run/containers/storage
    DEBU[0000] Using static dir /var/lib/containers/storage/libpod
    DEBU[0000] Using tmp dir /var/run/libpod
    DEBU[0000] Using volume path /var/lib/containers/storage/volumes
    DEBU[0000] Set libpod namespace to ""
    DEBU[0000] [graphdriver] trying provided driver "overlay"
    DEBU[0000] cached value indicated that overlay is supported
    DEBU[0000] cached value indicated that metacopy is not being used
    DEBU[0000] cached value indicated that native-diff is usable
    DEBU[0000] backingFs=extfs, projectQuotaSupported=false, useNativeDiff=true, usingMetacopy=false
    DEBU[0000] Initializing event backend journald
    DEBU[0000] using runtime "/usr/bin/runc"
    WARN[0000] Error initializing configured OCI runtime crun: no valid executable found for OCI runtime crun: invalid argument
    DEBU[0000] using runtime "/usr/bin/runx"
    INFO[0000] Found CNI network podman (type=bridge) at /etc/cni/net.d/87-podman-bridge.conflist
    INFO[0000] Found CNI network runx (type=loopback) at /etc/cni/net.d/99-podman-runx.conflist
    DEBU[0000] Made network namespace at /var/run/netns/cni-467a5369-669f-2580-6ba3-cb9b1ca2626e for container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4
    INFO[0000] Got pod network &{Name:archlinux Namespace:archlinux ID:abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 NetNS:/var/run/netns/cni-467a5369-669f-2580-6ba3-cb9b1ca2626e Networks:[] RuntimeConfig:map[runx:{IP: PortMappings:[] Bandwidth:<nil> IpRanges:[]}]}
    INFO[0000] About to add CNI network cni-loopback (type=loopback)
    DEBU[0000] overlay: mount_data=lowerdir=/var/lib/containers/storage/overlay/l/57IKSKIB7PWKFFISHSGCHN7OJL:/var/lib/containers/storage/overlay/l/UGY43VPEWOHWBCZOQR4WMP5A6F,upperdir=/var/lib/containers/storage/overlay/742626ef59426855d765f2cee7b24cac06ecacc60c5ae37668d1f95ff649cd22/diff,workdir=/var/lib/containers/storage/overlay/742626ef59426855d765f2cee7b24cac06ecacc60c5ae37668d1f95ff649cd22/work
    DEBU[0000] mounted container "abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4" at "/var/lib/containers/storage/overlay/742626ef59426855d765f2cee7b24cac06ecacc60c5ae37668d1f95ff649cd22/merged"
    DEBU[0000] Created root filesystem for container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 at /var/lib/containers/storage/overlay/742626ef59426855d765f2cee7b24cac06ecacc60c5ae37668d1f95ff649cd22/merged
    INFO[0000] Got pod network &{Name:archlinux Namespace:archlinux ID:abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 NetNS:/var/run/netns/cni-467a5369-669f-2580-6ba3-cb9b1ca2626e Networks:[] RuntimeConfig:map[runx:{IP: PortMappings:[] Bandwidth:<nil> IpRanges:[]}]}
    INFO[0000] About to add CNI network runx (type=loopback)
    DEBU[0000] [0] CNI result: Interfaces:[{Name:lo Mac:00:00:00:00:00:00 Sandbox:/var/run/netns/cni-467a5369-669f-2580-6ba3-cb9b1ca2626e}], IP:[{Version:4 Interface:0xc0004d0bf0 Address:{IP:127.0.0.1 Mask:ff000000} Gateway:<nil>} {Version:6 Interface:0xc0004d0c28 Address:{IP:::1 Mask:ffffffffffffffffffffffffffffffff} Gateway:<nil>}], DNS:{Nameservers:[] Domain: Search:[] Options:[]}
    DEBU[0000] /etc/system-fips does not exist on host, not mounting FIPS mode secret
    DEBU[0000] Setting CGroups for container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 to machine.slice:libpod:abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4
    DEBU[0000] reading hooks from /usr/share/containers/oci/hooks.d
    DEBU[0000] added hook /usr/share/containers/oci/hooks.d/oci-register-machine.json
    DEBU[0000] added hook /usr/share/containers/oci/hooks.d/oci-systemd-hook.json
    DEBU[0000] added hook /usr/share/containers/oci/hooks.d/oci-umount.json
    DEBU[0000] hook oci-register-machine.json did not match
    DEBU[0000] hook oci-systemd-hook.json did not match
    DEBU[0000] hook oci-umount.json did not match
    DEBU[0000] reading hooks from /etc/containers/oci/hooks.d
    DEBU[0000] Created OCI spec for container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 at /var/lib/containers/storage/overlay-containers/abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4/userdata/config.json
    DEBU[0000] /usr/bin/conmon messages will be logged to syslog
    DEBU[0000] running conmon: /usr/bin/conmon args="[--api-version 1 -s -c abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 -u abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 -r /usr/bin/runx -b /var/lib/containers/storage/overlay-containers/abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4/userdata -p /var/run/containers/storage/overlay-containers/abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4/userdata/pidfile -l k8s-file:/var/lib/containers/storage/overlay-containers/abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4/userdata/ctr.log --exit-dir /var/run/libpod/exits --socket-dir-path /var/run/libpod/socket --log-level debug --syslog --conmon-pidfile /var/run/containers/storage/overlay-containers/abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4/userdata/conmon.pid --exit-command /usr/bin/podman --exit-command-arg --root --exit-command-arg /var/lib/containers/storage --exit-command-arg --runroot --exit-command-arg /var/run/containers/storage --exit-command-arg --log-level --exit-command-arg error --exit-command-arg --cgroup-manager --exit-command-arg systemd --exit-command-arg --tmpdir --exit-command-arg /var/run/libpod --exit-command-arg --runtime --exit-command-arg runx --exit-command-arg --storage-driver --exit-command-arg overlay --exit-command-arg --events-backend --exit-command-arg journald --exit-command-arg container --exit-command-arg cleanup --exit-command-arg abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4]"
    INFO[0000] Running conmon under slice machine.slice and unitName libpod-conmon-abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4.scope
    DEBU[0000] Cleaning up container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4
    DEBU[0000] Tearing down network namespace at /var/run/netns/cni-467a5369-669f-2580-6ba3-cb9b1ca2626e for container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4
    INFO[0000] Got pod network &{Name:archlinux Namespace:archlinux ID:abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 NetNS:/var/run/netns/cni-467a5369-669f-2580-6ba3-cb9b1ca2626e Networks:[] RuntimeConfig:map[runx:{IP: PortMappings:[] Bandwidth:<nil> IpRanges:[]}]}
    INFO[0000] About to del CNI network runx (type=loopback)
    DEBU[0000] unmounted container "abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4"
    ERRO[0000] unable to start container "archlinux": container create failed (no logs from conmon): EOF

-

Worth noting that I have a network adapter on the template. If I run it without a network adapter it still fails, but slightly differently.

    DEBU[0000] Reading configuration file "/etc/containers/libpod.conf"
    DEBU[0000] Merged system config "/etc/containers/libpod.conf": &{{false false false false false true} 0 { [] [] []} docker:// runx map[crun:[/usr/bin/crun /usr/local/bin/crun] runc:[/usr/bin/runc /usr/sbin/runc /usr/local/bin/runc /usr/local/sbin/runc /sbin/runc /bin/runc /usr/lib/cri-o-runc/sbin/runc /run/current-system/sw/bin/runc] runx:[/usr/bin/runx]] [runx] [runx] [] [/usr/libexec/podman/conmon /usr/local/libexec/podman/conmon /usr/local/lib/podman/conmon /usr/bin/conmon /usr/sbin/conmon /usr/local/bin/conmon /usr/local/sbin/conmon /run/current-system/sw/bin/conmon] [PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin] systemd /var/run/libpod -1 false /etc/cni/net.d/ [/usr/libexec/cni /usr/lib/cni /usr/local/lib/cni /opt/cni/bin] runx [] k8s.gcr.io/pause:3.1 /pause false false 2048 shm false}
    DEBU[0000] Reading configuration file "/usr/share/containers/libpod.conf"
    DEBU[0000] Merged system config "/usr/share/containers/libpod.conf": &{{false false false false false true} 0 { [] [] []} docker:// runx map[crun:[/usr/bin/crun /usr/local/bin/crun] runc:[/usr/bin/runc /usr/sbin/runc /usr/local/bin/runc /usr/local/sbin/runc /sbin/runc /bin/runc /usr/lib/cri-o-runc/sbin/runc /run/current-system/sw/bin/runc] runx:[/usr/bin/runx]] [runx] [runx] [] [/usr/libexec/podman/conmon /usr/local/libexec/podman/conmon /usr/local/lib/podman/conmon /usr/bin/conmon /usr/sbin/conmon /usr/local/bin/conmon /usr/local/sbin/conmon /run/current-system/sw/bin/conmon] [PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin] systemd /var/run/libpod -1 false /etc/cni/net.d/ [/usr/libexec/cni /usr/lib/cni /usr/local/lib/cni /opt/cni/bin] runx [] k8s.gcr.io/pause:3.1 /pause false false 2048 shm false}
    DEBU[0000] Using conmon: "/usr/bin/conmon"
    DEBU[0000] Initializing boltdb state at /var/lib/containers/storage/libpod/bolt_state.db
    DEBU[0000] Using graph driver overlay
    DEBU[0000] Using graph root /var/lib/containers/storage
    DEBU[0000] Using run root /var/run/containers/storage
    DEBU[0000] Using static dir /var/lib/containers/storage/libpod
    DEBU[0000] Using tmp dir /var/run/libpod
    DEBU[0000] Using volume path /var/lib/containers/storage/volumes
    DEBU[0000] Set libpod namespace to ""
    DEBU[0000] [graphdriver] trying provided driver "overlay"
    DEBU[0000] cached value indicated that overlay is supported
    DEBU[0000] cached value indicated that metacopy is not being used
    DEBU[0000] cached value indicated that native-diff is usable
    DEBU[0000] backingFs=extfs, projectQuotaSupported=false, useNativeDiff=true, usingMetacopy=false
    DEBU[0000] Initializing event backend journald
    WARN[0000] Error initializing configured OCI runtime crun: no valid executable found for OCI runtime crun: invalid argument
    DEBU[0000] using runtime "/usr/bin/runx"
    DEBU[0000] using runtime "/usr/bin/runc"
    INFO[0000] Found CNI network podman (type=bridge) at /etc/cni/net.d/87-podman-bridge.conflist
    INFO[0000] Found CNI network runx (type=loopback) at /etc/cni/net.d/99-podman-runx.conflist
    DEBU[0000] Made network namespace at /var/run/netns/cni-ad3f7be8-7341-a2d3-fd7d-9e417dd8c888 for container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4
    INFO[0000] Got pod network &{Name:archlinux Namespace:archlinux ID:abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 NetNS:/var/run/netns/cni-ad3f7be8-7341-a2d3-fd7d-9e417dd8c888 Networks:[] RuntimeConfig:map[runx:{IP: PortMappings:[] Bandwidth:<nil> IpRanges:[]}]}
    INFO[0000] About to add CNI network cni-loopback (type=loopback)
    DEBU[0000] overlay: mount_data=lowerdir=/var/lib/containers/storage/overlay/l/57IKSKIB7PWKFFISHSGCHN7OJL:/var/lib/containers/storage/overlay/l/UGY43VPEWOHWBCZOQR4WMP5A6F,upperdir=/var/lib/containers/storage/overlay/742626ef59426855d765f2cee7b24cac06ecacc60c5ae37668d1f95ff649cd22/diff,workdir=/var/lib/containers/storage/overlay/742626ef59426855d765f2cee7b24cac06ecacc60c5ae37668d1f95ff649cd22/work
    DEBU[0000] mounted container "abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4" at "/var/lib/containers/storage/overlay/742626ef59426855d765f2cee7b24cac06ecacc60c5ae37668d1f95ff649cd22/merged"
    DEBU[0000] Created root filesystem for container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 at /var/lib/containers/storage/overlay/742626ef59426855d765f2cee7b24cac06ecacc60c5ae37668d1f95ff649cd22/merged
    INFO[0000] Got pod network &{Name:archlinux Namespace:archlinux ID:abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 NetNS:/var/run/netns/cni-ad3f7be8-7341-a2d3-fd7d-9e417dd8c888 Networks:[] RuntimeConfig:map[runx:{IP: PortMappings:[] Bandwidth:<nil> IpRanges:[]}]}
    INFO[0000] About to add CNI network runx (type=loopback)
    DEBU[0000] [0] CNI result: Interfaces:[{Name:lo Mac:00:00:00:00:00:00 Sandbox:/var/run/netns/cni-ad3f7be8-7341-a2d3-fd7d-9e417dd8c888}], IP:[{Version:4 Interface:0xc0001d8bf0 Address:{IP:127.0.0.1 Mask:ff000000} Gateway:<nil>} {Version:6 Interface:0xc0001d8c28 Address:{IP:::1 Mask:ffffffffffffffffffffffffffffffff} Gateway:<nil>}], DNS:{Nameservers:[] Domain: Search:[] Options:[]}
    DEBU[0000] /etc/system-fips does not exist on host, not mounting FIPS mode secret
    DEBU[0000] Setting CGroups for container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 to machine.slice:libpod:abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4
    DEBU[0000] reading hooks from /usr/share/containers/oci/hooks.d
    DEBU[0000] added hook /usr/share/containers/oci/hooks.d/oci-register-machine.json
    DEBU[0000] added hook /usr/share/containers/oci/hooks.d/oci-systemd-hook.json
    DEBU[0000] added hook /usr/share/containers/oci/hooks.d/oci-umount.json
    DEBU[0000] hook oci-register-machine.json did not match
    DEBU[0000] hook oci-systemd-hook.json did not match
    DEBU[0000] hook oci-umount.json did not match
    DEBU[0000] reading hooks from /etc/containers/oci/hooks.d
    DEBU[0000] Created OCI spec for container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 at /var/lib/containers/storage/overlay-containers/abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4/userdata/config.json
    DEBU[0000] /usr/bin/conmon messages will be logged to syslog
    DEBU[0000] running conmon: /usr/bin/conmon args="[--api-version 1 -s -c abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 -u abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 -r /usr/bin/runx -b /var/lib/containers/storage/overlay-containers/abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4/userdata -p /var/run/containers/storage/overlay-containers/abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4/userdata/pidfile -l k8s-file:/var/lib/containers/storage/overlay-containers/abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4/userdata/ctr.log --exit-dir /var/run/libpod/exits --socket-dir-path /var/run/libpod/socket --log-level debug --syslog --conmon-pidfile /var/run/containers/storage/overlay-containers/abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4/userdata/conmon.pid --exit-command /usr/bin/podman --exit-command-arg --root --exit-command-arg /var/lib/containers/storage --exit-command-arg --runroot --exit-command-arg /var/run/containers/storage --exit-command-arg --log-level --exit-command-arg error --exit-command-arg --cgroup-manager --exit-command-arg systemd --exit-command-arg --tmpdir --exit-command-arg /var/run/libpod --exit-command-arg --runtime --exit-command-arg runx --exit-command-arg --storage-driver --exit-command-arg overlay --exit-command-arg --events-backend --exit-command-arg journald --exit-command-arg container --exit-command-arg cleanup --exit-command-arg abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4]"
    INFO[0000] Running conmon under slice machine.slice and unitName libpod-conmon-abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4.scope
    DEBU[0000] Cleaning up container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4
    DEBU[0000] Tearing down network namespace at /var/run/netns/cni-ad3f7be8-7341-a2d3-fd7d-9e417dd8c888 for container abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4
    INFO[0000] Got pod network &{Name:archlinux Namespace:archlinux ID:abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4 NetNS:/var/run/netns/cni-ad3f7be8-7341-a2d3-fd7d-9e417dd8c888 Networks:[] RuntimeConfig:map[runx:{IP: PortMappings:[] Bandwidth:<nil> IpRanges:[]}]}
    INFO[0000] About to del CNI network runx (type=loopback)
    DEBU[0000] unmounted container "abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4"
    ERRO[0000] unable to start container "archlinux": container create failed (no logs from conmon): EOF

-

Thanks. @ronan-a or @BenjiReis will take a look tomorrow.

-

@theaeon Could you verify the UUIDs of your config in /etc/runx.conf?

In a shell:

    xe sr-list uuid=<SR_UUID>
    xe template-list uuid=<TEMPLATE_UUID>

You must have a valid output for each command.

Also ensure the template is a PV template.
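A minimal sketch of this check as a script, under two assumptions that the thread does not confirm: that /etc/runx.conf uses KEY=value lines, and that the xe list commands print nothing with --minimal when no object matches. The function names are hypothetical and not part of runx.

```shell
#!/bin/sh
# Sketch only: is_uuid, conf_value and check_runx_conf are illustrative helpers.

# is_uuid STRING: succeeds if STRING has the 8-4-4-4-12 hexadecimal UUID shape.
is_uuid() {
    printf '%s\n' "$1" |
        grep -Eq '^[0-9a-fA-F]{8}(-[0-9a-fA-F]{4}){3}-[0-9a-fA-F]{12}$'
}

# conf_value FILE KEY: prints the value of the first KEY=value line,
# with any surrounding quotes removed (assumed file format).
conf_value() {
    sed -n "s/^$2=//p" "$1" | head -n 1 | tr -d "\"'"
}

# check_runx_conf [FILE]: validates both UUIDs, then asks xe whether they exist.
check_runx_conf() {
    conf="${1:-/etc/runx.conf}"
    sr=$(conf_value "$conf" SR_UUID)
    tpl=$(conf_value "$conf" TEMPLATE_UUID)
    is_uuid "$sr"  || { echo "SR_UUID is missing or malformed" >&2; return 1; }
    is_uuid "$tpl" || { echo "TEMPLATE_UUID is missing or malformed" >&2; return 1; }
    # Assumption: empty --minimal output means the UUID matched nothing.
    [ -n "$(xe sr-list uuid="$sr" --minimal)" ] ||
        { echo "no SR with UUID $sr" >&2; return 1; }
    [ -n "$(xe template-list uuid="$tpl" --minimal)" ] ||
        { echo "no template with UUID $tpl" >&2; return 1; }
    echo "runx.conf UUIDs look valid"
}
```

Calling check_runx_conf with no argument reads /etc/runx.conf. It does not verify that the template is PV, so that still needs a manual look.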

Edit: You can also try this basic command:

    podman start archlinux

without other arguments. The parsing of command line arguments must be improved.

-

@ronan-a Confirmed all three points. I did set GUEST_TOOLS in runx.conf; I do wonder if that could be the problem here.

For what it's worth, log-level debug shouldn't ever make it over to runx, so those earlier pastes should effectively be podman start archlinux.

edit: a quick test says that GUEST_TOOLS isn't it.

edit: more logs for ya:

    [04:01 lenovo150 ~]# podman logs archlinux
    connect to container console with 'xl console abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4'
    mount: mount(2) failed: Not a directory
    mount: mount(2) failed: Not a directory
    mkdir: cannot create directory '/var/lib/containers/storage/overlay/742626ef59426855d765f2cee7b24cac06ecacc60c5ae37668d1f95ff649cd22/merged/etc/hosts': File exists
    mount: mount(2) failed: Not a directory
    rm: cannot remove '/var/lib/containers/storage/overlay/742626ef59426855d765f2cee7b24cac06ecacc60c5ae37668d1f95ff649cd22/merged//etc/hosts': Device or resource busy
    cp: '/var/run/containers/storage/overlay-containers/abb22f4d68083424252ac7116427dfd9c3644291e858039750f135cae499f8b4/userdata/hosts' and '/var/lib/containers/storage/overlay/742626ef59426855d765f2cee7b24cac06ecacc60c5ae37668d1f95ff649cd22/merged//etc/hosts' are the same file

-

@theaeon Hi!

I've just set up a runx host following @olivierlambert's instructions.

I've reproduced your error when --log-level debug is put in the podman command. Do you still have an error without it?

podman start archlinux should create a VM named container:<something> that starts, stops and then is removed, like a container would be.

-

@theaeon Like I said, we must change how arguments are parsed in the runx script, so avoid additional params like --log-level.
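Until the parsing is improved, one way to avoid tripping over this is a small guard around podman. This is only a sketch: safe_podman and has_leading_option are hypothetical names, not part of runx or podman, and the guard assumes the problematic options are the ones placed before the subcommand, as in podman --log-level debug start archlinux.

```shell
#!/bin/sh
# Sketch only: a hypothetical wrapper, not shipped with runx.

# has_leading_option ARGS...: succeeds if the first argument starts with "-",
# i.e. a global option was given before the podman subcommand.
has_leading_option() {
    case "${1-}" in
        -*) return 0 ;;
        *)  return 1 ;;
    esac
}

# safe_podman ARGS...: runs podman only when no global option precedes
# the subcommand; otherwise prints a hint and returns 2.
safe_podman() {
    if has_leading_option "$@"; then
        echo "refusing to run: drop '$1' (extra options are not handled by runx yet)" >&2
        return 2
    fi
    podman "$@"
}

# Example:
#   safe_podman start archlinux                     # passed through to podman
#   safe_podman --log-level debug start archlinux   # rejected with a hint
```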

-

For what it's worth, the podman logs archlinux command from above is w/o debug. I didn't quite realize it vanishing immediately was intended behavior though, tells you how versed I am in containers.

I'll try setting up the matrixdotorg/mjolnir thing again now that I have a command I know is working on runc.

-

Oh now that's interesting. Turns out the containers (both archlinux and the one i just created) are exiting w/ error 143. They're getting sigterm'ed from somewhere.

-

@theaeon said in RunX: tech preview:

For what it's worth, the podman logs archlinux command from above is w/o debug. I didn't quite realize it vanishing immediately was intended behavior though, tells you how versed I am in containers.

I'll try setting up the matrixdotorg/mjolnir thing again now that I have a command I know is working on runc.

Yeah, by default the archlinux image executes the bash command: when the container starts, bash is launched and dies right after. Then the VM is stopped. This behavior is the same on docker with this image. In interactive mode this is not the case, but we still have to implement that for a future runx version.
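The same effect can be reproduced on any Linux host, without containers: a shell started with an empty standard input reads end-of-file and exits at once. The sketch below uses sh instead of bash and a hypothetical function name. It only illustrates the general shell behavior, not the runx internals.

```shell
#!/bin/sh
# Sketch only: shows why a non-interactive shell ends immediately.

# shell_without_input: starts a shell whose stdin is /dev/null (instant EOF)
# and reports the exit status of that shell.
shell_without_input() {
    sh </dev/null
    echo "exit status: $?"
}

shell_without_input
```

The inner shell exits as soon as it sees end-of-file, which matches what was described above: the image's default command finishes, so the container (and here the VM) stops.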

-

@theaeon said in RunX: tech preview:

Oh now that's interesting. Turns out the containers (both archlinux and the one i just created) are exiting w/ error 143. They're getting sigterm'ed from somewhere.

It's related to how we terminate the VM process: it's a wrapper and not the real process that manages the VM. We shouldn't show this code to users, since it's not the real exit code. I will create an issue on our side, thanks for the feedback.
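For context on the number itself: by shell convention an exit status above 128 means the process was killed by a signal, and the signal number is the status minus 128, so 143 is 128 + 15, which is SIGTERM. A small sketch to decode such a status (signal_from_status is a hypothetical helper name):

```shell
#!/bin/sh
# Sketch only: decodes a "128 + signal number" exit status.

# signal_from_status STATUS: prints the signal name when STATUS is above 128,
# and prints nothing otherwise.
signal_from_status() {
    status="$1"
    if [ "$status" -gt 128 ]; then
        kill -l "$((status - 128))"
    fi
}

signal_from_status 143   # prints TERM
```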

-

@ronan-a oop, good to know. Now I guess I need to figure out why the new image I created is exiting instead of, well, working.

Unless there's something in this command that I shouldn't be invoking.

    podman create --health-cmd="wget --no-verbose --tries=1 --spider http://127.0.0.1:8080/ || exit 1" --volume=/root/mjolnir:/data:Z matrixdotorg/mjolnir

(I start it separately, later)

-

Hi,

I have started to play around with this feature. I think it's a great idea.

At the moment I'm running into an issue on container start:

    message: xenopsd internal error: Could not find BlockDevice, File, or Nbd implementation: {"implementations":[["XenDisk",{"backend_type":"9pfs","extra":{},"params":"vdi:80a85063-9b59-4fda-82c9-017be0fe967a share_dir none ///srv/runx-sr/1"}]]}

I have created the SR as described above:

    uuid ( RO) : 968d0b84-213e-a269-3a7a-355cd54f1a1c
    name-label ( RW): runx-sr
    name-description ( RW):
    host ( RO): fraxcp04
    allowed-operations (SRO): VDI.introduce; unplug; plug; PBD.create; update; PBD.destroy; VDI.resize; VDI.clone; scan; VDI.snapshot; VDI.create; VDI.destroy; VDI.set_on_boot
    current-operations (SRO):
    VDIs (SRO): 80a85063-9b59-4fda-82c9-017be0fe967a
    PBDs (SRO): 0d4ca926-5906-b137-a192-8b55c5b2acb6
    virtual-allocation ( RO): 0
    physical-utilisation ( RO): -1
    physical-size ( RO): -1
    type ( RO): fsp
    content-type ( RO):
    shared ( RW): false
    introduced-by ( RO): <not in database>
    is-tools-sr ( RO): false
    other-config (MRW):
    sm-config (MRO):
    blobs ( RO):
    local-cache-enabled ( RO): false
    tags (SRW):
    clustered ( RO): false

    # xe pbd-param-list uuid=0d4ca926-5906-b137-a192-8b55c5b2acb6
    uuid ( RO) : 0d4ca926-5906-b137-a192-8b55c5b2acb6
    host ( RO) [DEPRECATED]: a6ec002d-b7c3-47d1-a9f2-18614565dd6c
    host-uuid ( RO): a6ec002d-b7c3-47d1-a9f2-18614565dd6c
    host-name-label ( RO): fraxcp04
    sr-uuid ( RO): 968d0b84-213e-a269-3a7a-355cd54f1a1c
    sr-name-label ( RO): runx-sr
    device-config (MRO): file-uri: /srv/runx-sr
    currently-attached ( RO): true
    other-config (MRW): storage_driver_domain: OpaqueRef:a194af9f-fd9e-4cb1-a99f-3ee8ad54b624

I also see that in /srv/runx-sr a symlink named 1 is created, pointing to an overlay image.

The VM is in the paused state after the error above.

The template I used was an old Debian PV template, where I removed the PV-bootloader and install-* attributes from other-config. What template would you recommend?

Any idea what could cause the error above?

Thanks,

Florian
