VMware migration tool: we need your feedback!

-

Without `thin=true`, the destination VHD will contain ALL blocks, and will be the size of the virtual disk. So yes, in your example, 500GiB will be transferred and used on your SR. It will then be "forever" thick, until maybe you do an SR migration or copy, because (I think, but I'm not 100% sure) the SR migration or VDI copy does a sparse copy (without empty blocks).

So I would suggest, in any case, to use `thin=true`. Also, a disk isn't thin or thick per se; only a sparse copy will be able to tell you if there are a lot of empty blocks.
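To make the size difference concrete, here is a minimal Python sketch of how a block-based image grows with and without `thin=true`. It is a simplified model, not the migration tool's code: the function names and the data extents are hypothetical, the 2 MiB block size is the usual VHD default, and the VHD headers and block allocation table are ignored.

```python
BLOCK_SIZE = 2 * 1024**2  # 2 MiB, the usual VHD block size
GIB = 1024**3


def blocks_touched(extents):
    """Return the set of block indexes overlapped by (offset, length) extents in bytes."""
    touched = set()
    for offset, length in extents:
        if length <= 0:
            continue
        first = offset // BLOCK_SIZE
        last = (offset + length - 1) // BLOCK_SIZE
        touched.update(range(first, last + 1))
    return touched


def image_data_size(virtual_size, extents, thin):
    """Approximate data size of the image in bytes (metadata ignored)."""
    if thin:
        # Only blocks that hold at least one byte of data are created.
        return len(blocks_touched(extents)) * BLOCK_SIZE
    # Every block of the virtual disk is written, empty or not.
    total_blocks = -(-virtual_size // BLOCK_SIZE)  # ceiling division
    return total_blocks * BLOCK_SIZE


# Hypothetical 500 GiB disk: 10 GiB of data at the start, plus 1 byte in the middle.
extents = [(0, 10 * GIB), (250 * GIB, 1)]
print(image_data_size(500 * GIB, extents, thin=True) / GIB)
print(image_data_size(500 * GIB, extents, thin=False) / GIB)
```

Note that a single stray byte still costs a whole 2 MiB block, so the thin image tracks the "content" size only at block granularity.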

-

@olivierlambert OK this is all great to know, thanks so much, really appreciate it!

-

I think, in the end, reading twice is worth it. Especially once warm mode is enabled, the penalty of reading twice will be small compared to the space saved.

-

@olivierlambert Yes, I totally agree with this.

One more question, I just did some digging and it seems like the snapshots aren't using the full amount of disk space even though they are thick, is this normal?

As an example, I have a 100GB base copy which shows as 101GB with ls -lh in the CLI, but the actual disk attached to the VM shows 9GB (I have 1 snapshot).

I'll also test migrating the VDI to another SR and then back to see if it shrinks it like you think it will, just for test purposes. Will definitely use thin=true when I do the bigger VMs though.

-

So when you do a snapshot, it converts the current active disk into a read-only base copy (so 100GiB, entirely full), and then writes the new blocks to the new active disk, which started empty and is now probably 9GiB because you have written about 9GiB worth of 2MiB blocks since the snapshot.

Indeed, moving the disk uses a sparse copy, which should clean out those empty blocks.
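As a rough illustration of what "sparse copy" means here, the sketch below keeps only the blocks that contain at least one non-zero byte. This is an assumption-laden toy, not the actual XCP-ng copy code: `sparse_copy` is a hypothetical helper, and the block size is tiny so the example runs instantly (real VHD blocks are 2MiB).

```python
def sparse_copy(blocks):
    """Return only the blocks that contain data, keyed by block index.

    `blocks` is a list of bytes objects, one per fixed-size block.
    A block made only of zero bytes is treated as empty and skipped.
    """
    kept = {}
    for index, block in enumerate(blocks):
        if any(block):  # True as soon as one byte is non-zero
            kept[index] = block
    return kept


# Toy disk: 4 blocks of 8 bytes, only blocks 0 and 2 hold data.
toy_block = 8
disk = [b"\x01" * toy_block, bytes(toy_block), b"\x02" * toy_block, bytes(toy_block)]
copied = sparse_copy(disk)
print(sorted(copied))  # [0, 2]
print(len(copied) * toy_block, "of", len(disk) * toy_block, "bytes copied")
```

Whether a given SR migration actually shrinks a disk this way depends on the storage type and copy path, so it is worth checking on a test VDI first.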

We'll continue to look for solutions (if there are any) to improve it, but right now we are focused on getting the diff working from VMware to XCP-ng, which will greatly shorten the "shutdown" time during the migration process.

-

@olivierlambert Got it, I guess I misunderstood, thought that snapshots of thick provisioned disks used WAY more space but seems like it ends up similar?

I mean the thin provisioned base copy will only use the space that the actual VM is using, so it still saves some there I suppose, but with thick you are using the space for empty blocks either way.

Thanks again for all the help, this is such a great tool and is just in time for my org to be leaving VMware!

-

Are you talking about thin or thick SR? In a thick SR, any active disk will use the total disk space (eg 500GiB even if 10GiB used inside). In a thin SR, the active disk will only use the "real" diff from the base copy (10GiB from our previous example).
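A tiny sketch of that rule, using the 500GiB / 10GiB figures from the example. The function name is hypothetical, and this is only a model of the behaviour described here, not something read from the storage stack.

```python
def active_disk_usage_gib(virtual_gib, diff_gib, sr_is_thin):
    """Approximate space taken by the active (writable) disk of a snapshot chain."""
    if sr_is_thin:
        # Thin SR: only the blocks written since the base copy take space.
        return diff_gib
    # Thick SR: the full virtual size is reserved, whatever is used inside.
    return virtual_gib


print(active_disk_usage_gib(500, 10, sr_is_thin=False))  # 500
print(active_disk_usage_gib(500, 10, sr_is_thin=True))   # 10
```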

I think it's more appropriate to use the term "dynamic image" for VHD files in general. Without the `thin=true` parameter, the image will be inflated to the total size of the disk with empty blocks, regardless of the SR type (thin or thick). With `thin=true`, we'll only create VHD blocks where there's actual data, so the VHD size will be based on the "content" size.

-

@olivierlambert OK I think I got it all now, my brain was totally confusing thin provisioned SRs and disk images, not enough coffee today! lol

This all makes a ton of sense now, thank you!

-

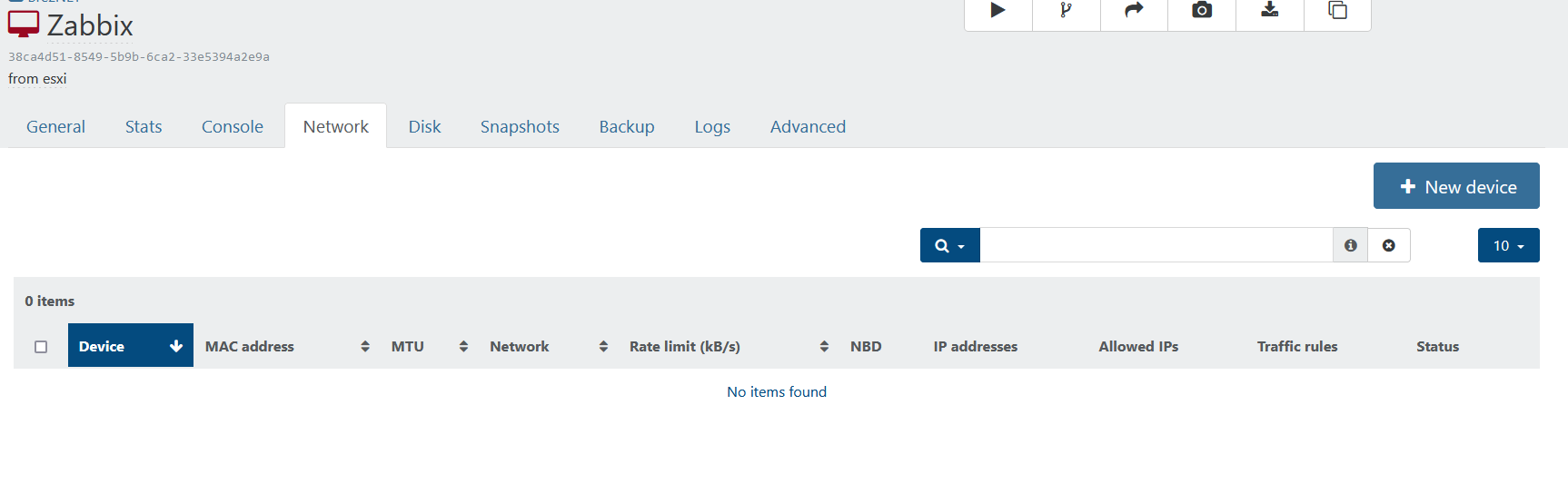

I've done 2 imports and the VMs are up and running. I had to add the NIC manually, as XO did not create it.

-

@olivierlambert Just as a quick update, I migrated a 32GB VDI to a different SR and then back. The size is still 32GB used, so it doesn't appear to have "deflated" it or anything like that.

Definitely think using thin=true is the way to go for anyone using this!

-

@planedrop This would only apply to block storage, I believe; NFS or ext is thin by default. When I imported a VM that was on an NFS share from ESXi to XCP-ng without thin=true set, the VM was imported with the disk being thin.

-

@brezlord Yes, looks like the disks themselves are thin but whatever was used thick wise is what the disk is inflated to.

i.e. without the thin=true, a 100GB thick disk on ESXi with only 20GB used would end up taking up 100GB on the SR, but if you grow the disk beyond the 100GB it's all thin at that point, so you could change it to a 200GB and it's still using only 100GB of actual space.

BUT with thin=true, the 100GB thick disk on ESXi that only has 20GB used would ONLY use up 20GB of space on the SR in XCP-ng (so it'd only be inflated to 20GB while still showing the OS it's a 100GB disk). Saves a lot of space if you have VMs with massive disks and low usage (like one of the hosts I am migrating which has 13TB assigned thick disks but only about 4TB is used).
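The arithmetic behind those two examples can be sketched as follows. This assumes a thin SR, decimal units (1TB = 1000GB), and that only used space is kept with thin=true; `thin_savings` is a hypothetical helper name.

```python
def thin_savings(assigned_gb, used_gb):
    """Return (space saved in GB, saved fraction) when only used blocks are kept."""
    saved = assigned_gb - used_gb
    return saved, saved / assigned_gb


# Figures from the examples above: a 100GB disk with 20GB used,
# and a host with 13TB assigned but about 4TB used.
for label, assigned, used in [("100GB disk", 100, 20), ("13TB host", 13_000, 4_000)]:
    saved, fraction = thin_savings(assigned, used)
    print(f"{label}: saves about {saved} GB ({fraction:.0%})")
```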

@olivierlambert did I get this right?

-

@planedrop great explanation planedrop, I may use it later

-

@brezlord The imported VM should have its network interfaces, all set to the network ID given on the command line. I will look into this.

-

@brezlord: I pushed a fix for the missing network.

-

@planedrop If your SR is thick (local LVM, iSCSI), it doesn't matter: the disk will always be the total size of the disk, regardless of `thin=true` in the VMware migration tool. That option is only relevant for thin SRs (local ext, NFS, etc.).

-

@florent I get the following error now.

root@xoa:~# xo-cli vm.importFromEsxi host=192.168.40.203 user='root' password='obfuscated ' sslVerify=false vm=30 sr=accb1cf1-92b7-5d47-e2c4-e7d8a282c448 network=83594c5b-8b5b-b45f-d3a7-7e5301468dc8 thin=true
✖ HANDLE_INVALID(network, OpaqueRef:478f9e9d-7592-40a2-ab07-10a0a6982e45)
JsonRpcError: HANDLE_INVALID(network, OpaqueRef:478f9e9d-7592-40a2-ab07-10a0a6982e45)
    at Peer._callee$ (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/json-rpc-peer/dist/index.js:139:44)
    at tryCatch (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/@babel/runtime/helpers/regeneratorRuntime.js:44:17)
    at Generator.<anonymous> (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/@babel/runtime/helpers/regeneratorRuntime.js:125:22)
    at Generator.next (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/@babel/runtime/helpers/regeneratorRuntime.js:69:21)
    at asyncGeneratorStep (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/@babel/runtime/helpers/asyncToGenerator.js:3:24)
    at _next (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/@babel/runtime/helpers/asyncToGenerator.js:22:9)
    at /opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/@babel/runtime/helpers/asyncToGenerator.js:27:7
    at new Promise (<anonymous>)
    at Peer.<anonymous> (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/@babel/runtime/helpers/asyncToGenerator.js:19:12)
    at Peer.exec (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/json-rpc-peer/dist/index.js:182:20)
vm.importFromEsxi
{
  "host": "192.168.40.203",
  "user": "root",
  "password": "* obfuscated *",
  "sslVerify": false,
  "vm": "30",
  "sr": "accb1cf1-92b7-5d47-e2c4-e7d8a282c448",
  "network": "83594c5b-8b5b-b45f-d3a7-7e5301468dc8",
  "thin": true
}
{
  "code": "HANDLE_INVALID",
  "params": [
    "network",
    "OpaqueRef:478f9e9d-7592-40a2-ab07-10a0a6982e45"
  ],
  "call": {
    "method": "network.get_MTU",
    "params": [
      "OpaqueRef:478f9e9d-7592-40a2-ab07-10a0a6982e45"
    ]
  },
  "message": "HANDLE_INVALID(network, OpaqueRef:478f9e9d-7592-40a2-ab07-10a0a6982e45)",
  "name": "XapiError",
  "stack": "XapiError: HANDLE_INVALID(network, OpaqueRef:478f9e9d-7592-40a2-ab07-10a0a6982e45) at Function.wrap (/opt/xo/xo-builds/xen-orchestra-202301251747/packages/xen-api/src/_XapiError.js:16:12) at /opt/xo/xo-builds/xen-orchestra-202301251747/packages/xen-api/src/transports/json-rpc.js:37:27 at AsyncResource.runInAsyncScope (node:async_hooks:204:9) at cb (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/bluebird/js/release/util.js:355:42) at tryCatcher (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/bluebird/js/release/util.js:16:23) at Promise._settlePromiseFromHandler (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/bluebird/js/release/promise.js:547:31) at Promise._settlePromise (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/bluebird/js/release/promise.js:604:18) at Promise._settlePromise0 (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/bluebird/js/release/promise.js:649:10) at Promise._settlePromises (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/bluebird/js/release/promise.js:729:18) at _drainQueueStep (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/bluebird/js/release/async.js:93:12) at _drainQueue (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/bluebird/js/release/async.js:86:9) at Async._drainQueues (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/bluebird/js/release/async.js:102:5) at Immediate.Async.drainQueues [as _onImmediate] (/opt/xo/xo-builds/xen-orchestra-202301251747/node_modules/bluebird/js/release/async.js:15:14) at processImmediate (node:internal/timers:471:21) at process.callbackTrampoline (node:internal/async_hooks:130:17)"
}

-

@brezlord That looks like an invalid network ID. Are you sure it's OK?

(This was not visible before, since the code was skipping VIF creation.)

-

@florent It's copied directly from the XO web UI.

-

Are you sure it's not a PIF? Can you run `xe network-param-list uuid=<UUID>`? If it doesn't work, then try it for a PIF: `xe pif-param-list uuid=<UUID>`
