Please review - XCP-ng Reference Architecture

-

Hi everyone, I've been a huge XCP-ng fan for years and am actively promoting it to my employer. I even got approval to run a PoC and, if it's successful, replace VMware as our hypervisor platform!

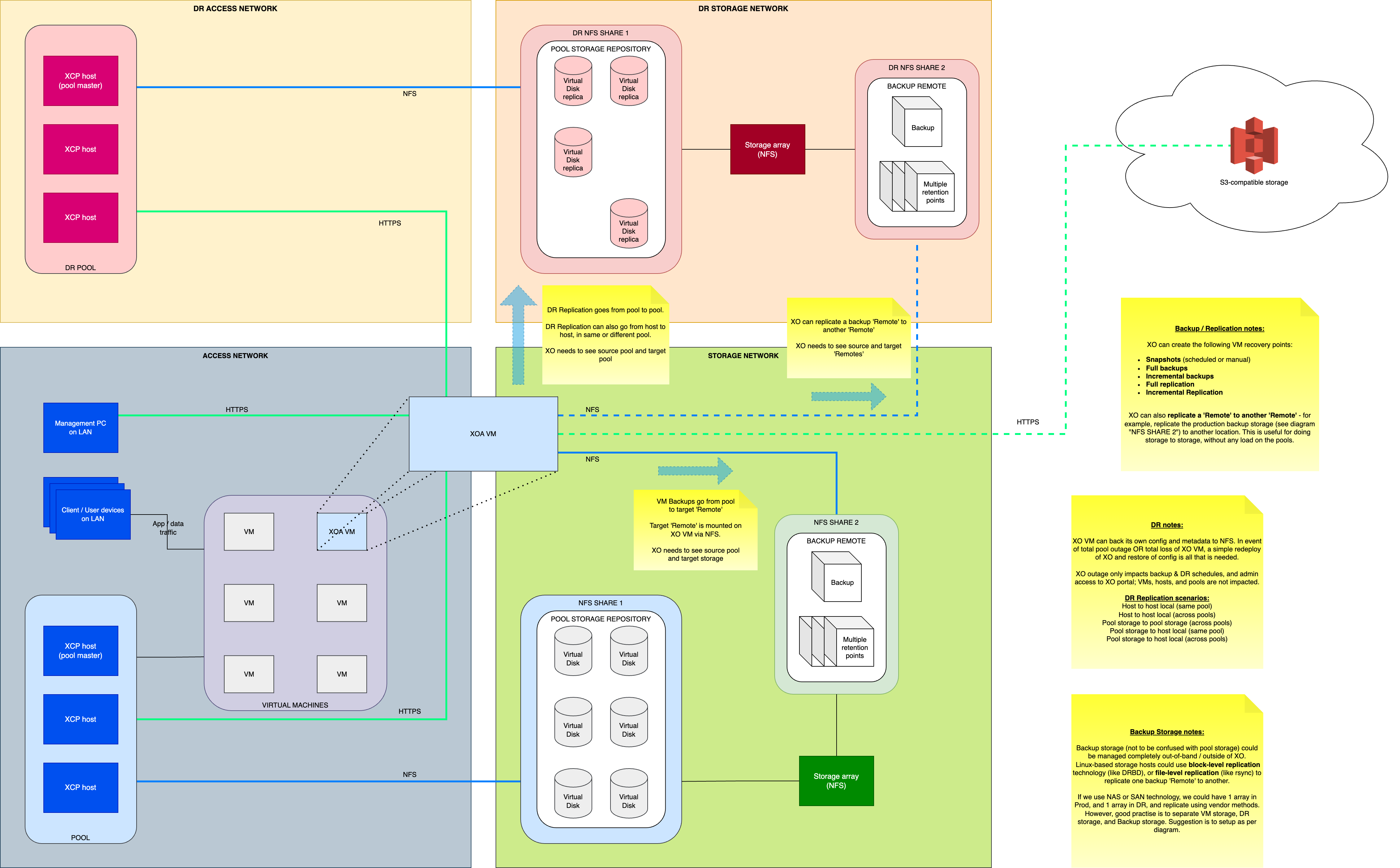

I've designed a logical architecture for a very simple deployment: 3 hosts in a 'production' pool and 3 hosts in a 'DR' pool. Each location has its own storage array (technology TBC, but most likely TrueNAS); the two sites are a few miles apart but thankfully connected with 10Gbps.

I'll post my first attempt at a design below; please could the Vates heroes and community review it and correct any mistakes?

EDIT: for some additional context, I'm not in an operations role, so I don't touch the systems myself. My last operational role was back in 2012; since then I've worked in solution consultant, pre-sales consultant, and solutions architecture roles.

If this is helpful at all, and is considered accurate, please feel free to include this diagram in any XCP-ng documentation. I want to contribute this to the community and the wider XCP-ng documentation.

All feedback and critiques are welcome!

-

@TS79 In your backup/replication notes you say that remote-to-remote backup replication doesn't put any load on the pools, except that it does. The XO VM is doing the heavy lifting and will use CPU and network resources, provided by the pool, to accomplish the replication. There is no "agent"-like service running ON the remote that can offload the transfer.

If you haven't locked in your production storage, and have <25ms latency between your sites, consider PureStorage Flash Array //X. We use these for production storage (and backups on //E arrays)... Long story short, if you deploy a PureStorage Flash Array into both sites, you can set them up in ActiveCluster, like we have done. You can then split your production cluster between the two sites. Hosts in both sites have paths to storage on each side, but use the array closest to them - the arrays then replicate the writes between themselves. It's truly active-active synchronous replication. This allows a design where the failover between sites is automated. If one of the sites goes down, the workloads will automatically restart in the other site. There's a lot of network design that goes into this, but it's definitely possible as we're doing it.

-

@billcouper this is a good setup if you have a lot of money; we're using something similar but with Dell PowerStore.

If you don't have a lot of money, you can use a TrueNAS array per site and have XOA do the backups. I think @TS79 has described it very well in his design.

The only thing I'd like to add is that we include our XOA in the replication job, so that we have our XOA appliance at our DR site ready to just start up if we need to perform a DR.

-

@billcouper Excellent spot - thanks for your thoughts and inputs! Based on the load during backup / replication jobs, I've been considering where best to put XOA. The default deployment method puts it on the pool, but I've considered deploying a standalone instance on a desktop (with 10GbE of course) - need to see how that would work in terms of seeing shared storage and pool local storage.

Honestly, TrueNAS is the most likely. We're winding down the on-prem footprint quite aggressively, so investment in beautiful things like Pure AFAs is unlikely. But I will look into those anyway, if only to be informed on the options - thanks again.

Really appreciate your input

-

@nikade Thanks for your comments and thoughts. We're repurposing existing HP DL380 servers for the hosts, and I was going to try to repurpose our Nimble AF40 arrays, but they only do iSCSI, which means thick provisioning (XCP-ng's iSCSI SRs are LVM-based). That creates a capacity challenge for us: some of our VMs have been provisioned with 2-4TB virtual disks but only use 100-300GB, so recreating smaller disks and cloning the data would be tedious but necessary.
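For a rough sense of the capacity gap, here is a minimal Python sketch comparing what a thick-provisioned SR must reserve against what a thin-provisioned SR would consume for the same disks. The disk names and sizes are hypothetical, picked from the 2-4TB provisioned / 100-300GB used ranges above, and the sketch ignores snapshot and metadata overhead.

```python
from dataclasses import dataclass


@dataclass
class VirtualDisk:
    name: str
    provisioned_gb: int  # size of the virtual disk as presented to the VM
    used_gb: int         # data actually written inside the guest


# Hypothetical disks, based on the 2-4TB provisioned / 100-300GB used ranges
DISKS = [
    VirtualDisk("app01", 2048, 100),
    VirtualDisk("db01", 4096, 300),
    VirtualDisk("file01", 3072, 200),
]


def thick_capacity_gb(disks):
    """Thick provisioning reserves the full virtual disk size up front."""
    return sum(d.provisioned_gb for d in disks)


def thin_capacity_gb(disks):
    """Thin provisioning only consumes what has been written (no snapshot/metadata overhead modelled)."""
    return sum(d.used_gb for d in disks)


if __name__ == "__main__":
    thick = thick_capacity_gb(DISKS)
    thin = thin_capacity_gb(DISKS)
    print(f"thick: {thick} GB, thin: {thin} GB, ratio: {thick / thin:.1f}x")
```

With these made-up numbers the thick SR needs 9216GB against 600GB of actual data, roughly 15x, which is why either shrinking the disks or moving to thin-provisioned storage matters.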

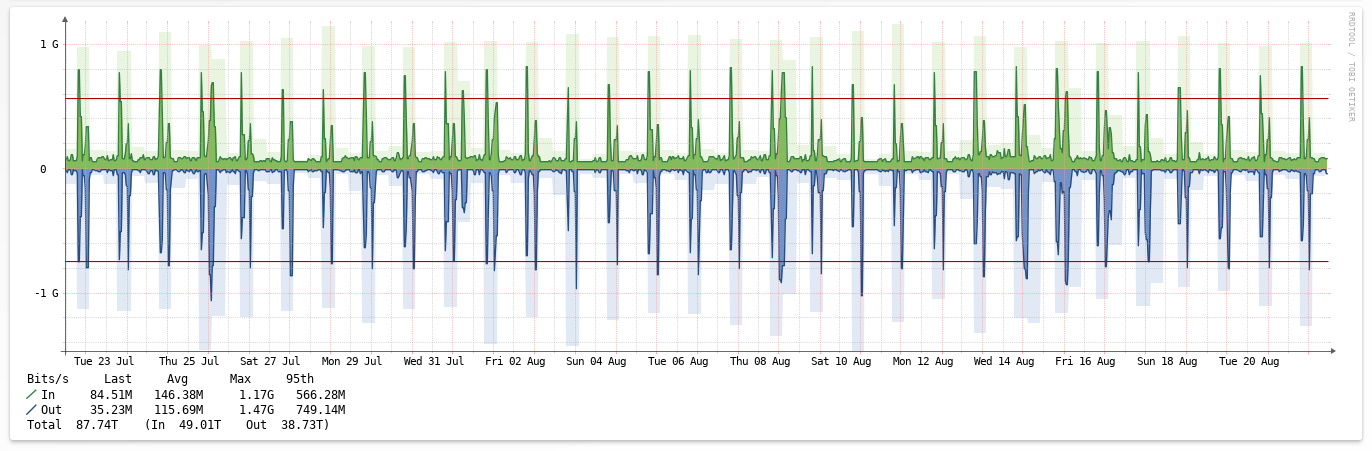

TrueNAS is my 'gold prize', assuming it provides enough uptime and performance. Our IOPS and throughput requirements aren't huge; we only exceed 500MB/sec and a few thousand IOPS during backup jobs.

Replicating XOA is definitely a 'default'. But from my lab tests, redeploying XOA and restoring its config is also quick, so I'm not too fussed about 'losing' XOA. I'd back up the config to on-premises 'remotes' and to cloud-based object storage.

Much appreciate your time and feedback, thank you!

-

We've used FreeNAS/TrueNAS for a long time and it works great. Make sure you plan the sizing, because that will really matter once you start putting some load on it (backups are really resource-intensive).

If you can, use SSDs for the arrays instead of spinning rust; it will greatly improve performance. In our case we've used Dell R730xd servers with dual Xeon hexacores (8 cores + HT each) and 128-256GB RAM for ARC, then 2x 200GB SSDs for SLOG and 1x 200GB SSD for L2ARC, and the performance is OK.

We mostly use NFS shares (thin provisioning is important) but also some iSCSI LUNs mapped to VMs that need bigger disks; I really do not recommend attaching VDIs larger than 400-500GB directly from your SR.

Our TrueNAS boxes are connected with 2x10Gb links, but we really never see them do more than 1.4Gbit/s because we use RAIDZ2 and 10K disks (it is slow).

-

@olivierlambert Brief question please - would it make sense to install XOA on a dedicated computer (either 'from source' on Debian/Ubuntu, or as an only VM on a standalone XCP-ng host) so that it's managing pools but isn't adding load to the pool's compute / storage / network resources? Is there any recommendation here from Vates?

-

@TS79 I don't think it really matters; we run ours in one of our pools and we've been doing that since 2016 without any issues.

-

It's doable to dedicate an XCP-ng host to XOA. But XO doesn't use that many resources, so rather than calling it a "performance best practice", I would argue it's a good thing for people with sensitive infrastructure who want to separate their management environment from their production environment. However, due to the level of isolation with Xen, it doesn't matter in 90% of use cases.

-

@olivierlambert Thank you - all makes sense

-

@nikade Thanks again for your input, much appreciated.

-

@TS79 said in Please review - XCP-ng Reference Architecture:

@nikade Thanks again for your input, much appreciated.

If you're running TrueNAS Scale or TrueNAS Enterprise 24.04.2 as part of your deployment, with XCP-ng replacing VMware, make sure you install the TrueSecure app; otherwise you'll be missing important security features on your TrueNAS.

-

@john-c said in Please review - XCP-ng Reference Architecture:

TrueSecure

What's that? Never heard of TrueSecure on TrueNAS.

-

@john-c @nikade - I had to Google TrueSecure, as I hadn't heard of it before.

It seems good in that it's a first-party solution, and security is typically always a good idea, but it's not really something for my use case as a homelabber.

It mentions storage encryption, which to me immediately complicates things like deduplication, compression, and delta backups / replication.

TrueSecure seems to be positioned as a tool to achieve security compliance for strict standards like NIST / FIPS / government security regulations.

Still, good to know it exists, and I'll be reading more about it for potential future advice!

-

@TS79 said in Please review - XCP-ng Reference Architecture:

@john-c @nikade - I had to Google TrueSecure, as I hadn't heard of it before.

It seems good in that it's a first-party solution, and security is typically always a good idea, but it's not really something for my use case as a homelabber.

It mentions storage encryption, which to me immediately complicates things like deduplication, compression, and delta backups / replication.

TrueSecure seems to be positioned as a tool to achieve security compliance for strict standards like NIST / FIPS / government security regulations.

Still, good to know it exists, and I'll be reading more about it for potential future advice!

It's also where you can configure settings like the minimum SMB protocol version, SMB connection encryption and SMB connection signing.

-

@nikade said in Please review - XCP-ng Reference Architecture:

@john-c said in Please review - XCP-ng Reference Architecture:

TrueSecure

What's that? Never heard of TrueSecure on TrueNAS.

It's an application or feature for TrueNAS Scale, TrueNAS Core and/or TrueNAS Enterprise that lets you enable and configure the security features of TrueNAS instances (software and/or hardware).

-

If you run backups outside of business hours, any impact on the pool hosts' CPU/memory performance is likely irrelevant (and limited by how many resources the XO VM is provisioned with anyway). The bigger potential impact is likely on your production storage, which again could be irrelevant outside of business hours.

However, if you want to perform backups more frequently and/or during business hours, in my experience storage performance is the more likely to suffer a noticeable impact. Unless your hosts are very highly utilized, the additional CPU/memory load from a single VM shouldn't tip the scales. And at 500MB/sec your network shouldn't struggle either (I am assuming 10+Gbps links to get that speed).
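As a quick sanity check on the network side, a minimal Python sketch converting a storage throughput figure in MB/s to Gbit/s and to a share of a link's nominal rate. It assumes decimal units (1 MB = 8 Mbit) and ignores protocol overhead, so real-world headroom will be somewhat smaller.

```python
def mb_per_s_to_gbit_per_s(mb_per_s: float) -> float:
    """Convert megabytes/second to gigabits/second (decimal units: 1 MB = 8 Mbit)."""
    return mb_per_s * 8 / 1000


def link_utilization(mb_per_s: float, link_gbit: float) -> float:
    """Fraction of a link's nominal line rate consumed by a given transfer rate."""
    if link_gbit <= 0:
        raise ValueError("link speed must be positive")
    return mb_per_s_to_gbit_per_s(mb_per_s) / link_gbit


if __name__ == "__main__":
    rate = 500  # MB/s, the peak backup throughput mentioned in the thread
    print(f"{rate} MB/s = {mb_per_s_to_gbit_per_s(rate):.1f} Gbit/s")
    print(f"share of a single 10Gbps link: {link_utilization(rate, 10):.0%}")
```

At 500MB/sec that works out to 4Gbit/s, or about 40% of a single 10Gbps link. Running the conversion the other way, the 1.4Gbit/s ceiling mentioned earlier for RAIDZ2 on 10K disks is roughly 175MB/sec.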

And regardless of whether you back up during or outside business hours, or how long your backup window is, always consider restore times! Performance of backup storage is always low priority until something needs to be restored.

If your backup takes 8 hours, your restore will take 8 hours. Or longer. Don't cut any corners on backup storage; it is very important!
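To make the restore-time point concrete, a back-of-the-envelope floor is the data size divided by the sustained throughput of the slowest component in the restore path (often the backup storage). A minimal Python sketch; the 10TB dataset and the throughput figures are hypothetical, and real restores will usually take longer than this floor.

```python
def transfer_hours(data_gb: float, throughput_mb_per_s: float) -> float:
    """Hours needed to move data_gb at a sustained throughput (decimal units, 1 GB = 1000 MB)."""
    if throughput_mb_per_s <= 0:
        raise ValueError("throughput must be positive")
    seconds = data_gb * 1000 / throughput_mb_per_s
    return seconds / 3600


if __name__ == "__main__":
    # Hypothetical: 10 TB of VM data restored from backup storage at different sustained rates
    data_gb = 10_000
    for rate in (175, 500, 1000):
        print(f"{rate:>5} MB/s -> {transfer_hours(data_gb, rate):.1f} h")
```

At 175MB/sec the hypothetical 10TB takes close to 16 hours, versus under 3 hours at 1000MB/sec, so the speed of the backup storage directly sets how long you are down.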

-

@john-c said in Please review - XCP-ng Reference Architecture:

@nikade said in Please review - XCP-ng Reference Architecture:

@john-c said in Please review - XCP-ng Reference Architecture:

TrueSecure

What's that? Never heard of TrueSecure on TrueNAS.

It's an application or feature for TrueNAS Scale, TrueNAS Core and/or TrueNAS Enterprise that lets you enable and configure the security features of TrueNAS instances (software and/or hardware).

Alright - I didn't know that, thanks for the info.