XCP-ng 8.3 updates announcements and testing

-

Installed the updates; will report back.

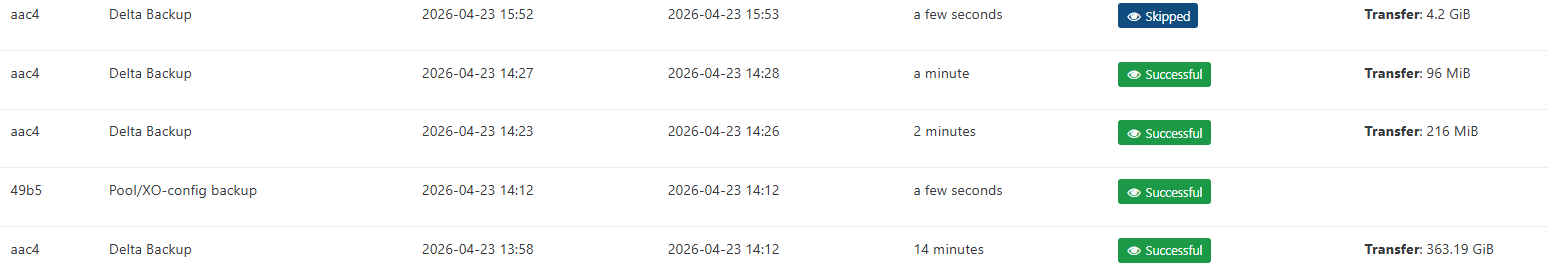

Update: I had migrated VMs back over to VHD prior to the update release. I have now migrated 2 VMs back over to QCOW2 and the initial backup ran successfully. I ran a second delta backup and that was also successful, without issues. Backups happen very quickly now, but it appears the % and progress bar are working.

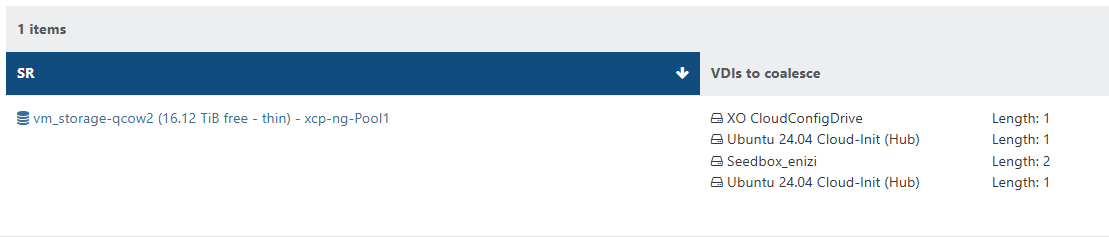

When CBT is enabled on a VM's VDIs, they show up as needing to be coalesced. For VMs without CBT enabled, the VDIs are coalesced.

Will continue to monitor.

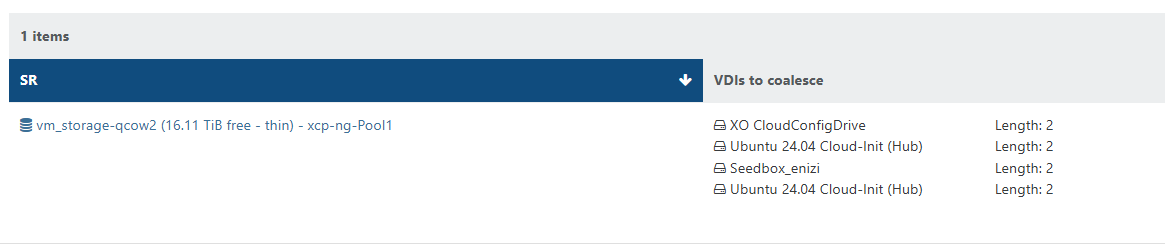

Once the coalescence count hits 2 for the VM, the VM is skipped from future backups until it is cleared. (Shutting down the VM will allow the coalescence to happen.)

2026-04-23T19_52_34.694Z - backup NG.txt

-

@rzr XCP 8.3 pools updated and running.

CR delta backup snapshot problem corrected and working now.

SSH from old system to XCP displays the warning (per documentation).

-

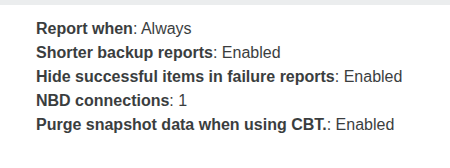

I thought I had pressed save after editing the backup job to enable the purge snapshot option when using CBT.

After re-enabling it and clicking save, all is good now. No errors and no stuck coalescence after backups.

-


I updated my test environment and performed a few tests:

- migrating VMs back and forth between VHD based NFS SR and QCOW2 based iSCSI SR --> VMs got converted between VHD and QCOW2 just fine, live migration worked

- creation of rather big QCOW2 based VMs (2.5+ TB)

- NBD-enabled delta backups of a mixed set of VMs (small, big, QCOW2, VHD)

All tests worked fine so far. The only thing I noticed: when converting VHD-based VMs to QCOW2 format, I was not able to storage migrate more than 2 VMs at a time. XO said something about "not enough memory". That might be related to the dom0 in my test environment only having 4GB of RAM, and may not be related to the VHD to QCOW2 migration path at all. I never saw this error in my live environment, where every node's dom0 has 8GB of RAM.
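For anyone wanting to compare their own hosts against the 4GB and 8GB figures above, here is a minimal sketch that reads the total memory visible to dom0. It assumes it is run inside dom0 on a Linux system with `/proc/meminfo`; `mem_total_mib` is just an illustrative helper name, and the value reported by the kernel is usually a little lower than the amount assigned to dom0.

```shell
# mem_total_mib [FILE]: print MemTotal in MiB from a meminfo-style file.
# Defaults to /proc/meminfo, where MemTotal is reported in kB.
mem_total_mib() {
    awk '/^MemTotal:/ { print int($2 / 1024) }' "${1:-/proc/meminfo}"
}

# Only print when the file is actually available on this system.
if [ -r /proc/meminfo ]; then
    echo "Memory visible to this system: $(mem_total_mib) MiB"
fi
```

Whether low dom0 memory is really what caused the "not enough memory" message is still only a guess at this point.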

Update candidate looks good so far from my point of view.

-

New update candidates for you to test!

We are continuing to refine the next batch of updates with planned fixes. This release batch contains fixes for the major storage feature previously announced; read the RC2 announcement for QCOW2 image format support for 2TiB+ images.

What changed

Storage

QCOW2 image format support is the major feature of this release batch; check the related announcement in the forum.

Some fixes have been applied to fix issues found during the testing phase.

- sm: 3.2.12-17.6
  - Limit the QCOW2 VDI max size to 16TiB with metadata, to allow compatibility with EXTSR (EXTSR is limited to a 16TiB single-file size).
    - If a full QCOW2 VDI is allocated, XCP-ng would not be able to migrate it to an EXTSR with this limitation.
    - In the future, while EXTSR will remain limited to this maximum size, other SR types will evolve towards higher limits. For this, we'll have to rework the existing assumption that all SRs which support the QCOW2 image format share the same maximum size limit for VDIs, and catch migration attempts towards SRs which cannot receive disks bigger than their maximum limit.
- blktap: 3.55.5-6.6
  - Update the package's license.
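To make the 16TiB figure concrete, here is a minimal sketch that compares a file size in bytes against that ceiling. It is not how sm enforces the limit: the announcement says the limit applies "with metadata", so the usable virtual size is presumably somewhat lower, and treating exactly 16TiB as acceptable is an assumption. `fits_ext_sr` and `MAX_FILE_BYTES` are hypothetical names used only for illustration.

```shell
# 16 TiB expressed in bytes: 16 * 1024^4 = 17592186044416.
MAX_FILE_BYTES=$(( 16 * 1024 * 1024 * 1024 * 1024 ))

# fits_ext_sr SIZE_BYTES: succeeds if the size is at or below 16 TiB.
# Assumption: the boundary is inclusive; the real check in sm may differ.
fits_ext_sr() {
    [ "$1" -le "$MAX_FILE_BYTES" ]
}

# Example sizes: 2.5 TiB and 17 TiB, both in bytes.
for size in $(( 5 * 1024 * 1024 * 1024 * 1024 / 2 )) $(( 17 * 1024 * 1024 * 1024 * 1024 )); do
    if fits_ext_sr "$size"; then
        echo "$size bytes: within the 16 TiB file size limit"
    else
        echo "$size bytes: larger than the 16 TiB file size limit"
    fi
done
```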

Versions:

- blktap: 3.55.5-6.5.xcpng8.3 -> 3.55.5-6.6.xcpng8.3
- sm: 3.2.12-17.5.xcpng8.3 -> 3.2.12-17.6.xcpng8.3

Test on XCP-ng 8.3

If you are using XOSTOR, please refer to our documentation for the update method.

    yum clean metadata --enablerepo=xcp-ng-testing,xcp-ng-candidates
    yum update --enablerepo=xcp-ng-testing,xcp-ng-candidates
    reboot

The usual update rules apply: pool coordinator first, etc.
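After the reboot, one way to confirm that a host picked up the candidates is to compare the installed package versions with the ones listed under "Versions". The sketch below is only a suggestion, not part of the official procedure; `expected_version` and `check_pkg` are hypothetical helper names, and the `rpm` query is skipped on systems where `rpm` is not available.

```shell
# Expected versions, copied from the "Versions" list in the announcement.
expected_version() {
    case "$1" in
        sm)     echo "3.2.12-17.6.xcpng8.3" ;;
        blktap) echo "3.55.5-6.6.xcpng8.3" ;;
        *)      return 1 ;;
    esac
}

# check_pkg NAME INSTALLED: compare an installed version string
# with the expected one and report the result.
check_pkg() {
    if [ "$2" = "$(expected_version "$1")" ]; then
        echo "$1: OK ($2)"
    else
        echo "$1: expected $(expected_version "$1"), found $2"
        return 1
    fi
}

# On a host, feed it what rpm reports for each package.
if command -v rpm >/dev/null 2>&1; then
    for pkg in sm blktap; do
        check_pkg "$pkg" "$(rpm -q --queryformat '%{VERSION}-%{RELEASE}' "$pkg")" || true
    done
fi
```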

What to test

The most important change is related to storage: adding QCOW2 support also affects the codebase managing VHD disks. What matters here is, above all, to detect any regression on VHD support (we tested it deeply, but on this matter there's no such thing as too much testing). Of course, you are also welcome to test the QCOW2 image format support.

See the dedicated thread for more information.

And, as usual, normal use and anything else you want to test.

Test window before official release of the updates

~3 days

We would like to thank users who reported feedback since our last call for testing, in less than 24h: @acebmxer, @Andrew, @MajorP93.

-


Installed on my usual test hosts without issues. However, I am not using the QCOW2 disk format anywhere yet.

-

@rzr Installed the latest update and no issues to report. I don't have any 2TB+ drives in my VMs. Converting from VHD to QCOW2 and backups are all working.

-

@rzr Updates running. VHD use only.

-

Installed on my usual lab setup. Works fine so far, although I get the "Fell back to full backup" message for QCOW2 VMs.

-

@bufanda Make sure you have enabled the purge snapshot option when using CBT, if you are using CBT. After the previous update, that fixed the "Fell back to full backup" issue for me.

Maybe also try running a manual full backup to reset the VDI chain.

-

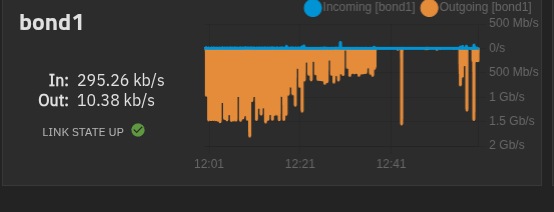

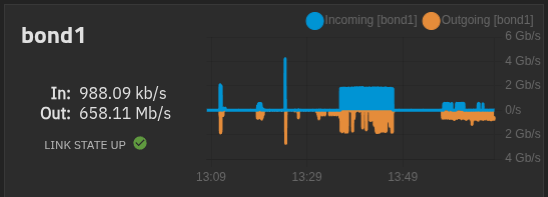

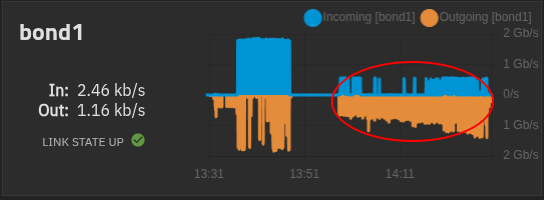

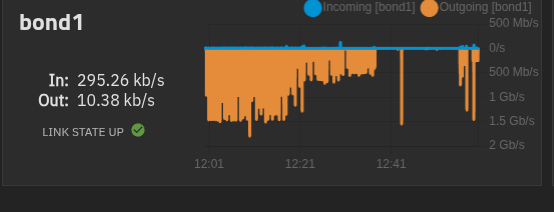

One thing I am noticing after this update is that I am seeing a lot more traffic on my TrueNAS. The gaps are me shutting down VMs and starting each one to find the problem VMs, but it seems to be any VM. I didn't notice any performance issues; I just noticed the graph in TrueNAS, which is usually a flat line with the occasional spike here and there, not the big mess on the left in the first screenshot. 5 VMs running.

My XOA is on local storage on the master host and was used for these tests.

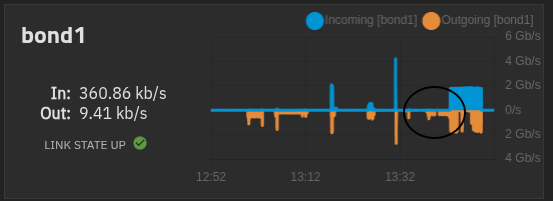

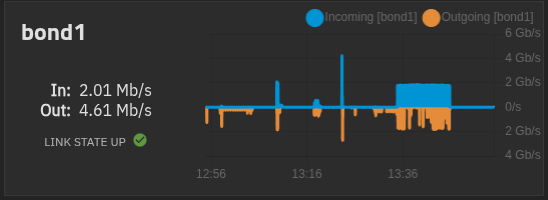

All VMs are powered off except XOA and XO.

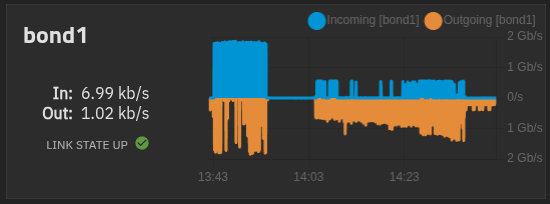

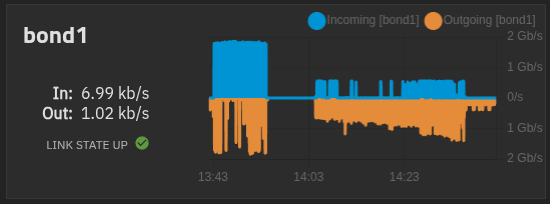

Here I booted the XO VM and left it idle. The spike after is me live migrating it back to the VHD SR, after which it was left idle.

The gap in the middle is XO idle on the VHD-only SR.

Live migrated XO back to the QCOW2-only SR.

Migration back to QCOW2 completed.

Left XO idle after migration to the QCOW2 SR.

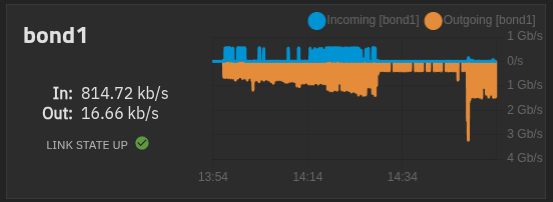

Again, all VMs booted and idle.

From master host.

-

@acebmxer purge snapshots is active since I created the backup job over a year ago. I always enable purge snapshots on backup jobs.

-

@bufanda I'm being told it's expected to have it the first time after the update, but in theory the next ones should not be fulls. Can you try?

-

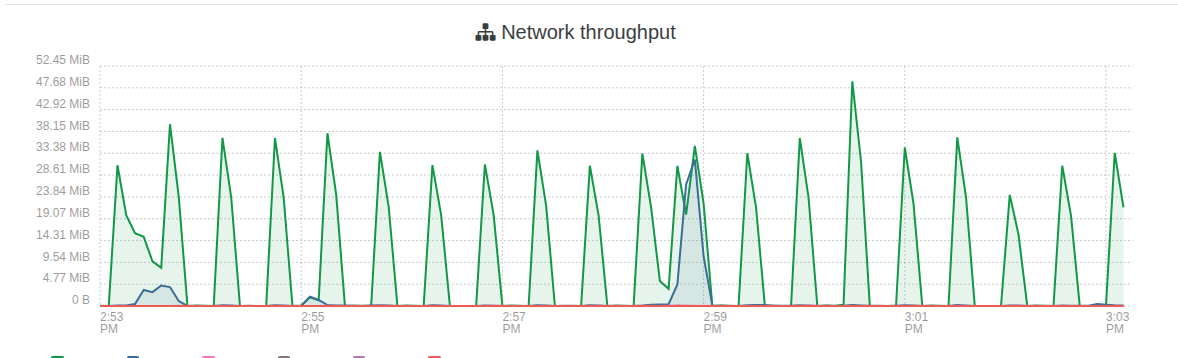

@acebmxer with qcow2, the way we scan the SR regularly uses more I/O, so this may explain it.

-


> @bufanda I'm being told it's expected to have it the first time after the update, but in theory the next ones should not be fulls. Can you try?

Just checked: the VM I was testing with was part of two backup jobs, and it seems that when one runs and the second starts, it falls back to a full backup. I have now removed the VM from one of the jobs, and with it being a member of only one backup job it looks good. Will keep an eye on it.

-

@acebmxer Can you evaluate the amount of data transferred at each spike? So that we can evaluate if it's more than expected. What's the total size of the VM disks?

-

The left side of the chart is all VMs running, at about 1.5gb/s. Each VM's VDI ranges from 128gb to 256gb allocated (actual disk space used, not sure).

The 200mb/s - 300mb/s on the far right is just XO-CE running idle.

So if each VM is consuming 300mb/s-ish, times 4-5 VMs, that would get close to the 1.5gb/s.
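As a quick sanity check of that estimate, the arithmetic can be sketched in shell. The 300 per-VM figure and the 4 to 5 VM count come from the post above; the unit is kept exactly as read off the TrueNAS graph, and `aggregate_rate` is only an illustrative helper name.

```shell
# Rough aggregate-traffic estimate: per-VM idle rate times number of VMs.
# aggregate_rate PER_VM_RATE VM_COUNT
aggregate_rate() {
    echo $(( $1 * $2 ))
}

for n in 4 5; do
    echo "$n VMs x 300 = $(aggregate_rate 300 "$n")"
done
```

That gives 1200 to 1500 in the graph's mb/s unit, which lines up with the roughly 1.5gb/s peak if 1gb is taken as 1000mb.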

-

Thanks. Ping @Team-Storage
