-

I read on the blog that XOSTOR has been officially released and wanted to test it. I have installed v8.2.1 of XCP-ng on the server nodes. On a separate computer in the management network I have XO built from sources. I have updated the hosts to the latest packages.

Then I started following the instructions from the first post in the thread. I am getting an error at the sr-create step.

    [15:11 xcp-ng-vh1 ~]# xe sr-create type=linstor name-label=XOSTOR host-uuid=382d49a5-7435-425e-8588-f56e7a7711f8 device-config:group-name=linstor_group/thin_device device-config:redundancy=2 shared=true device-config:provisioning=thin
    Error code: SR_BACKEND_FAILURE_202
    Error parameters: , General backend error [opterr=['XENAPI_PLUGIN_FAILURE', 'non-zero exit', '', 'Traceback (most recent call last):\n File "/etc/xapi.d/plugins/linstor-manager", line 24, in <module>\n from linstorjournaler import LinstorJournaler\n File "/opt/xensource/sm/linstorjournaler.py", line 19, in <module>\n from linstorvolumemanager import LinstorVolumeManager\n File "/opt/xensource/sm/linstorvolumemanager.py", line 20, in <module>\n import linstor\nImportError: No module named linstor\n']],

I searched the forums for possible causes. It was mentioned that the linstor packages are not yet mature for the 8.3 release, and that the Python version difference between XCP-ng 8.2 and 8.3 can cause issues. I am on the 8.2 branch though, so I am not sure what I am missing here:

    [15:12 xcp-ng-vh1 ~]# cat /etc/os-release
    NAME="XCP-ng"
    VERSION="8.2.1"
    ID="xenenterprise"
    ID_LIKE="centos rhel fedora"
    VERSION_ID="8.2.1"
    PRETTY_NAME="XCP-ng 8.2.1"
    ANSI_COLOR="0;31"
    HOME_URL="http://xcp-ng.org/"
    BUG_REPORT_URL="https://github.com/xcp-ng/xcp"

Packages related to linstor on the system:

    [20:11 xcp-ng-vh1 ~]# yum list | grep linstor
    drbd.x86_64                    9.27.0-1.el7                      @xcp-ng-linstor
    drbd-bash-completion.x86_64    9.27.0-1.el7                      @xcp-ng-linstor
    drbd-pacemaker.x86_64          9.27.0-1.el7                      @xcp-ng-linstor
    drbd-reactor.x86_64            1.4.0-1                           @xcp-ng-linstor
    drbd-udev.x86_64               9.27.0-1.el7                      @xcp-ng-linstor
    drbd-utils.x86_64              9.27.0-1.el7                      @xcp-ng-linstor
    drbd-xen.x86_64                9.27.0-1.el7                      @xcp-ng-linstor
    kmod-drbd.x86_64               9.2.8_4.19.0+1-1                  @xcp-ng-linstor
    linstor-client.noarch          1.21.1-1.xcpng8.2                 @xcp-ng-linstor
    linstor-common.noarch          1.26.1-1.el7                      @xcp-ng-linstor
    linstor-controller.noarch      1.26.1-1.el7                      @xcp-ng-linstor
    linstor-satellite.noarch       1.26.1-1.el7                      @xcp-ng-linstor
    python-linstor.noarch          1.21.1-1.xcpng8.2                 @xcp-ng-linstor
    sm.x86_64                      2.30.8-10.1.0.linstor.2.xcpng8.2  @xcp-ng-linstor
    sm-rawhba.x86_64               2.30.8-10.1.0.linstor.2.xcpng8.2  @xcp-ng-linstor
    tzdata-java.noarch             2023c-1.el7                       @xcp-ng-linstor
    xcp-ng-linstor.noarch          1.1-3.xcpng8.2                    @xcp-ng-updates
    xcp-ng-release-linstor.noarch  1.3-1.xcpng8.2                    @xcp-ng-updates
    drbd-debuginfo.x86_64          9.27.0-1.el7                      xcp-ng-linstor
    drbd-heartbeat.x86_64          9.27.0-1.el7                      xcp-ng-linstor
    sm-debuginfo.x86_64            2.30.8-10.1.0.linstor.2.xcpng8.2  xcp-ng-linstor
    sm-test-plugins.x86_64         2.30.8-10.1.0.linstor.2.xcpng8.2  xcp-ng-linstor
    sm-testresults.x86_64          2.30.8-10.1.0.linstor.2.xcpng8.2  xcp-ng-linstor

Any help appreciated.

Thanks.
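For anyone hitting the same traceback, here is a small sketch (not from the original post) that pulls the missing module name out of the error text, so it can be checked directly on the host. The helper name `missing_module` is made up for illustration, and the pattern assumes the Python 2 style message shown above.

```shell
# Hypothetical helper: read error text on stdin and print the module name
# from a "ImportError: No module named X" message, if one is present.
missing_module() {
    sed -n 's/.*ImportError: No module named \([A-Za-z0-9_.]*\).*/\1/p'
}

# Example use on a host (commented out; needs the real environment):
#   xe sr-create ... 2>&1 | missing_module
#   python -c 'import linstor'      # does the import work for the host Python?
#   rpm -ql python-linstor          # where did the package install its files?
```

If the import works from a shell but the plugin still fails, the package files and the interpreter used by the plugin are the next things to compare.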

-


OK, I have 3 hosts in the pool. I was getting the above error on 2 of them. To repeat the process from the first post cleanly, I tried the steps on the 3rd host, and SR creation was successful.

Initially, on the 2 hosts I had used the 'thick' version of the command to prepare the disks. I then deleted the LVM volumes, ran wipefs on the disks, and redid the steps using the 'thin' version of the command. My guess is that the disks were not wiped completely, which led to the error during SR creation.

I am going to use gparted to wipe the disks properly and then redo the steps. If that doesn't work, I will nuke the XCP-ng install, reinstall, and check again. Will update the post accordingly.

Cheers.
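A rough sketch of what a command-line cleanup between the 'thick' and 'thin' attempts could look like. This is an assumption about a reasonable order of operations, not the procedure from the first post: the function `wipe_plan` is hypothetical and only prints the commands so they can be reviewed before anything destructive is run. The volume group name `linstor_group` comes from the sr-create command above; the disk path is a placeholder.

```shell
# Hypothetical dry-run helper: print the cleanup commands for one volume
# group and its disks. Nothing is executed; review the output first.
wipe_plan() {
    vg="$1"; shift
    echo "vgremove -f $vg"             # remove the VG and its logical volumes
    for disk in "$@"; do
        echo "pvremove -ff -y $disk"   # drop the LVM physical volume label
        echo "wipefs -a $disk"         # erase remaining signatures on the disk
    done
}

# Example: wipe_plan linstor_group /dev/sdb
```

All three commands destroy data on the named devices, so double-check the device paths on each host.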

-

@ha_tu_su

After using gparted to wipe all the disks, the sr-create command works as expected and creates the XOSTOR SR.

-

@ronan-a and @Maelstrom96 I didn't get this hostname issue.

Does XOSTOR need a fully functional DNS setup to work? Or was the failure local, caused by the local change of the hostname?

I didn't understand if the communication is done by IP addresses directly or if DNS name resolution is needed.

I'm particularly interested in this because with XOSTOR I'm considering virtualizing my pfSense firewalls directly and getting rid of the physical servers. In that scenario, if the entire pool reboots, I must guarantee that the two pfSense VMs come up and run, with the auto-start option after reboot, so that I can access the entire infrastructure; otherwise I'll be locked out.

-

@ferrao said in XOSTOR hyperconvergence preview:

Does XOSTOR need a fully functional DNS setup to work? Or was the failure local, caused by the local change of the hostname?

No. But your LINSTOR node name must match the hostname. We use IPs to communicate between nodes and in our driver.
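A minimal sketch of how that requirement could be checked on a host. The helper `check_node_name` is hypothetical; it only compares strings, and the node names are assumed to be copied by hand from the output of `linstor node list`. The host names in the example are placeholders.

```shell
# Hypothetical helper: print "match" if the first argument (the hostname)
# equals one of the remaining arguments (LINSTOR node names), otherwise
# print "mismatch".
check_node_name() {
    host="$1"; shift
    for node in "$@"; do
        if [ "$node" = "$host" ]; then
            echo "match"
            return 0
        fi
    done
    echo "mismatch"
}

# Example on a host, with node names taken from `linstor node list`:
#   check_node_name "$(hostname)" xcp-ng-vh1 xcp-ng-vh2 xcp-ng-vh3
```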

-

@ronan-a thanks. I've already deployed it with the script from the first post. It seems to be working. I've opted to use redundancy=3 in a 3-host setup. It's a lot of 'wasted' resources, but it seems to be the best option for performance and reliability.
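To put a number on the 'wasted' space, here is a back-of-the-envelope sketch. It assumes every host contributes the same raw capacity and that each volume is stored `redundancy` times; it ignores thin provisioning and metadata overhead, so treat the result as a rough estimate only. The function name and the 1000 GiB figure are made up for illustration.

```shell
# Rough estimate: usable capacity = raw capacity per host * hosts / redundancy.
# Integer arithmetic in GiB; all three inputs are illustrative values.
usable_gib() {
    per_host_gib="$1"; hosts="$2"; redundancy="$3"
    echo $(( per_host_gib * hosts / redundancy ))
}

# Three hosts with 1000 GiB each:
#   usable_gib 1000 3 3   # redundancy=3
#   usable_gib 1000 3 2   # redundancy=2
```

Under these assumptions, redundancy=3 on 3 hosts leaves roughly the capacity of a single host, while redundancy=2 leaves about one and a half times that.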

May I now ask about licensing: if we upgrade to Vates VM, is the deployment method from the first post considered supported, or will everything need to be redone from XOA?

Thanks.

-

@ferrao said in XOSTOR hyperconvergence preview:

May I now ask about licensing: if we upgrade to Vates VM, is the deployment method from the first post considered supported, or will everything need to be redone from XOA?

Regarding XOSTOR Support Licenses: In general, we prefer our users to use a trial license through XOA. And if they are interested, they can subscribe to a commercial license.

To be more precise: the manual steps in this thread are still valid for configuring a LINSTOR SR; there is no difference from the XOA commands. However, if you wish to subscribe to a support license for a pool without XOA or a trial license, we are quite strict about the infrastructure being in a stable state.

-

Anyone else getting a 301 error?

    http://mirrors.xcp-ng.org/8/8.2/base/x86_64/repodata/repomd.xml: [Errno 14] HTTPS Error 301 - Moved Permanently
    Trying other mirror.

-

@lover said in XOSTOR hyperconvergence preview:

Anyone else getting a 301 error?

    http://mirrors.xcp-ng.org/8/8.2/base/x86_64/repodata/repomd.xml: [Errno 14] HTTPS Error 301 - Moved Permanently
    Trying other mirror.

A 301 is not an error (as in a failure); it's a redirect. Here it redirects correctly to a nearby mirror. In my case: https://mirror.uepg.br/xcp-ng/8/8.2/base/x86_64/repodata/repomd.xml
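To make the distinction concrete, a tiny sketch that sorts HTTP status codes into broad classes. The function `classify_status` is hypothetical and deliberately coarse; the commented `curl` line shows one way to obtain the code for the mirror URL without following the redirect.

```shell
# Hypothetical helper: map an HTTP status code to a coarse class.
classify_status() {
    case "$1" in
        2??) echo "success" ;;
        301|302|303|307|308) echo "redirect" ;;
        4??|5??) echo "error" ;;
        *) echo "other" ;;
    esac
}

# Example (commented out; needs network access):
#   code=$(curl -s -o /dev/null -w '%{http_code}' \
#       http://mirrors.xcp-ng.org/8/8.2/base/x86_64/repodata/repomd.xml)
#   classify_status "$code"
```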

-


See /etc/yum.repos.d/xcp-ng.repo and update all references from http:// to https://
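One way to make that change, sketched with `sed`. The helper name `to_https` is made up; it rewrites text on stdin so the result can be previewed first. The in-place variant in the comment keeps a `.bak` copy of the original file.

```shell
# Rewrite every http:// reference to https:// in the text read from stdin.
# Running it twice is harmless: "https://" does not contain "http://".
to_https() {
    sed 's|http://|https://|g'
}

# Preview the result:
#   to_https < /etc/yum.repos.d/xcp-ng.repo
# Apply in place, keeping a backup copy:
#   sed -i.bak 's|http://|https://|g' /etc/yum.repos.d/xcp-ng.repo
```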

-

Hello all!

I have an issue with backing up to S3. I am hoping someone can point out the mistake I am making.

Our xcp-ng hosts are all up to date.

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 remote l
    ╭─────────────────────────────────────────────────────────────────────────╮
    ┊ Name                 ┊ Type ┊ Info                                      ┊
    ╞═════════════════════════════════════════════════════════════════════════╡
    ┊ linbit-velero-backup ┊ S3   ┊ us-east-1.s3.wasabisys.com/velero-preprod ┊
    ╰─────────────────────────────────────────────────────────────────────────╯
    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 backup create linbit-velero-backup pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d
    SUCCESS: Suspended IO of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-vtest-k8s01-worker02' for snapshot
    SUCCESS: Suspended IO of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-pprod-xen02' for snapshot
    SUCCESS: Suspended IO of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-pprod-xen03' for snapshot
    SUCCESS: Suspended IO of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-pprod-xen01' for snapshot
    SUCCESS: Took snapshot of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-vtest-k8s01-worker02'
    SUCCESS: Took snapshot of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-pprod-xen02'
    SUCCESS: Took snapshot of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-pprod-xen03'
    SUCCESS: Took snapshot of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-pprod-xen01'
    SUCCESS: Resumed IO of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-vtest-k8s01-worker02' after snapshot
    SUCCESS: Resumed IO of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-pprod-xen01' after snapshot
    SUCCESS: Resumed IO of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-pprod-xen02' after snapshot
    SUCCESS: Resumed IO of '[pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d]' on 'ovbh-pprod-xen03' after snapshot
    INFO: Generated snapshot name for backup of resource pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d to remote linbit-velero-backup
    INFO: Shipping of resource pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d to remote linbit-velero-backup in progress.
    SUCCESS: Started shipping of resource 'pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d'
    SUCCESS: Started shipping of resource 'pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d'
    SUCCESS: Started shipping of resource 'pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d'
    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 snapshot l
    ╭─────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
    ┊ ResourceName                             ┊ SnapshotName         ┊ NodeNames                                            ┊ Volumes   ┊ CreatedOn           ┊ State    ┊
    ╞═════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡
    ┊ pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d ┊ back_20241002_191139 ┊ ovbh-pprod-xen01, ovbh-pprod-xen02, ovbh-pprod-xen03 ┊ 0: 50 GiB ┊ 2024-10-02 16:11:40 ┊ Shipping ┊
    ╰─────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯
    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 backup list linbit-velero-backup
    ╭───────────────────────────────────────────────────────╮
    ┊ Resource ┊ Snapshot ┊ Finished at ┊ Based On ┊ Status ┊
    ╞═══════════════════════════════════════════════════════╡
    ╰───────────────────────────────────────────────────────╯
    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 snapshot l
    ╭───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
    ┊ ResourceName                             ┊ SnapshotName         ┊ NodeNames                                            ┊ Volumes   ┊ CreatedOn           ┊ State      ┊
    ╞═══════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡
    ┊ pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d ┊ back_20241002_191139 ┊ ovbh-pprod-xen01, ovbh-pprod-xen02, ovbh-pprod-xen03 ┊ 0: 50 GiB ┊ 2024-10-02 16:11:40 ┊ Successful ┊
    ╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

Nothing shows up on S3.

After enabling logs by modifying /usr/share/linstor-server/lib/conf/logback.xml, I see the following:

    [19:15 ovbh-pprod-xen01 ~]# tail /var/log/linstor-satellite/linstor-Satellite.log -n 20
    2024_10_02 19:11:41.511 [shipping_pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d_00000_back_20241002_191139] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 40960 bytes remaining
    2024_10_02 19:11:41.512 [shipping_pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d_00000_back_20241002_191139] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 40960 bytes remaining
    2024_10_02 19:11:41.513 [shipping_pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d_00000_back_20241002_191139] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 40960 bytes remaining
    2024_10_02 19:11:41.516 [shipping_pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d_00000_back_20241002_191139] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 82432 bytes remaining
    2024_10_02 19:11:41.543 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - End DeviceManager cycle 42
    2024_10_02 19:11:41.543 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Begin DeviceManager cycle 43
    2024_10_02 19:11:41.552 [MainWorkerPool-5] INFO LINSTOR/Satellite - SYSTEM - Snapshot 'back_20241002_191139' of resource 'pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d' registered.
    2024_10_02 19:11:41.553 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Aligning /dev/linstor_group/pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d_00000 size from 52440040 KiB to 52441088 KiB to be a multiple of extent size 4096 KiB (from Storage Pool)
    2024_10_02 19:11:41.615 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Resource 'pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d' [DRBD] adjusted.
    2024_10_02 19:11:41.781 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - End DeviceManager cycle 43
    2024_10_02 19:11:41.781 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Begin DeviceManager cycle 44
    2024_10_02 19:11:47.220 [shipping_pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d_00000_back_20241002_191139] WARN LINSTOR/Satellite - SYSTEM - stdErr: Incomplete copy_data, 4194304 bytes missing.
    2024_10_02 19:11:47.295 [shipping_pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d_00000_back_20241002_191139] WARN LINSTOR/Satellite - SYSTEM - Exception occurred while checking for support of requester-pays on remote linbit-velero-backup. Defaulting to false
    2024_10_02 19:11:47.307 [MainWorkerPool-7] INFO LINSTOR/Satellite - SYSTEM - Snapshot 'back_20241002_191139' of resource 'pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d' registered.
    2024_10_02 19:11:47.309 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Aligning /dev/linstor_group/pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d_00000 size from 52440040 KiB to 52441088 KiB to be a multiple of extent size 4096 KiB (from Storage Pool)
    2024_10_02 19:11:47.312 [shipping_pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d_00000_back_20241002_191139] ERROR LINSTOR/Satellite - SYSTEM - [Report number 66FDD1AE-3AE91-000000]
    2024_10_02 19:11:47.398 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Resource 'pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d' [DRBD] adjusted.
    2024_10_02 19:11:47.561 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - End DeviceManager cycle 44
    2024_10_02 19:11:47.561 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Begin DeviceManager cycle 45

The error:

    [19:12 ovbh-pprod-xen01 ~]# cat /var/log/linstor-satellite/ErrorReport-66FDD1AE-3AE91-000000.log
    ERROR REPORT 66FDD1AE-3AE91-000000

    ============================================================

    Application: LINBIT® LINSTOR
    Module: Satellite
    Version: 1.26.1
    Build ID: 12746ac9c6e7882807972c3df56e9a89eccad4e5
    Build time: 2024-02-22T05:27:50+00:00
    Error time: 2024-10-02 19:11:47
    Node: ovbh-pprod-xen01
    Thread: shipping_pvc-7746af6f-d37e-4c5d-9f44-9616f2f9b33d_00000_back_20241002_191139

    ============================================================

    Reported error:
    ===============

    Category: RuntimeException
    Class name: AbortedException
    Class canonical name: com.amazonaws.AbortedException
    Generated at: Method 'handleInterruptedException', Source file 'AmazonHttpClient.java', Line #880

    Error message:

    Call backtrace:

    Method Native Class:Line number
    handleInterruptedException N com.amazonaws.http.AmazonHttpClient$RequestExecutor:880
    execute N com.amazonaws.http.AmazonHttpClient$RequestExecutor:757
    access$500 N com.amazonaws.http.AmazonHttpClient$RequestExecutor:715
    execute N com.amazonaws.http.AmazonHttpClient$RequestExecutionBuilderImpl:697
    execute N com.amazonaws.http.AmazonHttpClient:561
    execute N com.amazonaws.http.AmazonHttpClient:541
    invoke N com.amazonaws.services.s3.AmazonS3Client:5516
    invoke N com.amazonaws.services.s3.AmazonS3Client:5463
    abortMultipartUpload N com.amazonaws.services.s3.AmazonS3Client:3620
    abortMultipart N com.linbit.linstor.api.BackupToS3:199
    threadFinished N com.linbit.linstor.backupshipping.BackupShippingS3Daemon:320
    run N com.linbit.linstor.backupshipping.BackupShippingS3Daemon:298
    run N java.lang.Thread:829

    Caused by:
    ==========

    Category: Exception
    Class name: SdkInterruptedException
    Class canonical name: com.amazonaws.http.timers.client.SdkInterruptedException
    Generated at: Method 'checkInterrupted', Source file 'AmazonHttpClient.java', Line #935

    Call backtrace:

    Method Native Class:Line number
    checkInterrupted N com.amazonaws.http.AmazonHttpClient$RequestExecutor:935
    checkInterrupted N com.amazonaws.http.AmazonHttpClient$RequestExecutor:921
    executeHelper N com.amazonaws.http.AmazonHttpClient$RequestExecutor:1115
    doExecute N com.amazonaws.http.AmazonHttpClient$RequestExecutor:814
    executeWithTimer N com.amazonaws.http.AmazonHttpClient$RequestExecutor:781
    execute N com.amazonaws.http.AmazonHttpClient$RequestExecutor:755
    access$500 N com.amazonaws.http.AmazonHttpClient$RequestExecutor:715
    execute N com.amazonaws.http.AmazonHttpClient$RequestExecutionBuilderImpl:697
    execute N com.amazonaws.http.AmazonHttpClient:561
    execute N com.amazonaws.http.AmazonHttpClient:541
    invoke N com.amazonaws.services.s3.AmazonS3Client:5516
    invoke N com.amazonaws.services.s3.AmazonS3Client:5463
    abortMultipartUpload N com.amazonaws.services.s3.AmazonS3Client:3620
    abortMultipart N com.linbit.linstor.api.BackupToS3:199
    threadFinished N com.linbit.linstor.backupshipping.BackupShippingS3Daemon:320
    run N com.linbit.linstor.backupshipping.BackupShippingS3Daemon:298
    run N java.lang.Thread:829

    END OF ERROR REPORT.

-

OK, so it turns out this is because of the thin-send-recv package I built from https://github.com/LINBIT/thin-send-recv/tree/master. I just swapped out the version I built for the last one I was able to get online to test, and it works.

The last version I was able to get from any repository before they went 403 was thin-send-recv-1.0.1-1.x86_64.rpm.txt, which I got from https://piraeus.daocloud.io/linbit/rpms/7/x86_64/thin-send-recv-1.0.1-1.x86_64.rpm. FYI, https://packages.linbit.com/yum/sles12-sp2/drbd-9.0/x86_64/Packages/ returns 403s too, so there is no point in looking for it there, even if they have it hosted.

I built thin-send-recv-1.1.2-1.xcpng8.2.x86_64.rpm.txt using this doc I put together: thin-send-recv.txt. However, the package I built results in the error posted previously.

So I am a bit at a loss. I want to be able to use Velero to back up PVs that are not managed by an operator with backup capabilities, but I do not want to be stuck with an old version I cannot update.

Any advice would be greatly appreciated!

-

OK great, I manually built 1.0.1, and it works just like the package I got online. That means that what I am doing works and the build process is correct.

The bad news is that there is a breaking change in v1.1.2, and I think I am potentially SOL.

I am going to build and test v1.1.0 and v1.1.1 to see which ones work. NVM, v1.1.0 is also broken. So the change that breaks it is in here: https://github.com/LINBIT/thin-send-recv/compare/6b7c9002cd7716ff6ef93f5a5e8908032b81f853...e44f566ea0c975e2baa475868ebc176065a5b22d

v1.0.1 might just be the version that works with this version of linstor, and whenever that gets updated it might call for a newer version of thin-send-recv.

-

@ronan-a might take a look if he finds a free minute (which is complicated right now).

-

@olivierlambert @Jonathon Unfortunately we don't maintain this package, so it's not available in our repositories. The simplest thing is to raise this problem directly with LINBIT. Maybe there is a regression or something else?

-


I spoke with one of our developers. He said:

"When I search for 'Device read short 40960 bytes remaining' I only get results for XCP-ng/Citrix with LVM. So I think this is an issue with LVM on xcp-ng. One thing we changed between thin-send-recv 1.0.X and 1.1.X is the error handling, so now errors are properly propagated. So I would guess it was always broken but newer versions actually make the error visible."

Not sure if that helps, but if there is anything to relay I'm more than happy to pass it along.
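If the developer's guess is right, the warning should also show up in the satellite log when the older thin-send-recv build is in use. A small sketch for checking that; the helper name `count_short_reads` is made up, and the log path in the comment is the one used earlier in this thread.

```shell
# Count log lines that contain the "Device read short" warning.
# Reads the log on stdin; prints 0 when there are no matches.
count_short_reads() {
    grep -c 'Device read short' || true
}

# Example on a satellite node:
#   count_short_reads < /var/log/linstor-satellite/linstor-Satellite.log
```

A non-zero count with 1.0.1 installed would be consistent with the error having been there all along and only becoming visible in 1.1.x.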

-

@BHellman Appreciate the weigh in and the time from your dev.

OK yeah, I thought I was having a hallucination lol. v1.0.1 was 100% working when I installed it at the time of my post, and it was failing today. After restarting all the satellites it works again, though I assume it will break again.

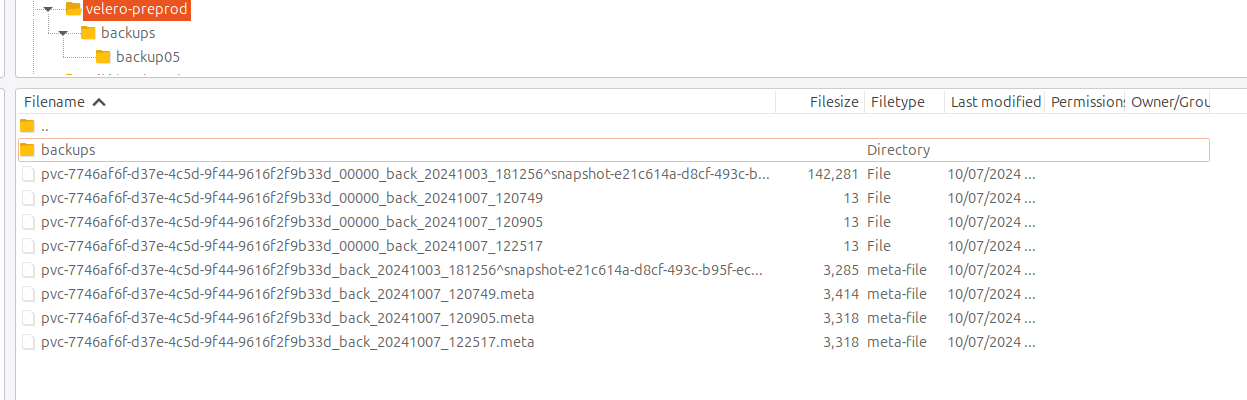

When it actually works, I can see the pvc in s3 remote

Here is a scatter of commands and outputs. I restarted the satellites partway through, so it may be difficult to read, but I thought it would be better than nothing.

commands-and-outputs.txt

xen01-linstor-Satellite.txt

xen02-linstor-Satellite.txt

xen03-linstor-Satellite.txt

-

And now for something completely different lol. It's the same thing

We have a new xcp-ng cluster that we would like to migrate everything to. We are not migrating the k8s clusters; we are creating new ones on a new RKE2 Rancher. So to migrate the applications, it would simplify things if I could move the PVCs over.

Command that fails (same if I add --target-storage-pool xcp-sr-linstor_group_thin_device):

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 backup ship newCluster pvc-086a5817-d813-41fe-86d8-3fac2ae2028f pvc-086a5817-d813-41fe-86d8-3fac2ae2028f
    ERROR:
    Description:
        Remote 'newCluster': Could not find suitable storage pool to receive backup
    Cause:
        ErrorReport id on target cluster: 66FF0E92-00000-000011

Setup remotes

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 controller list-properties
    ╭────────────────────────────────────────────────────────╮
    ┊ Key             ┊ Value                                ┊
    ╞════════════════════════════════════════════════════════╡
    ┊ Cluster/LocalID ┊ 941fc610-acb9-484a-9837-d2c0df8a86aa ┊
    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 controller list-properties
    ╭────────────────────────────────────────────────────────╮
    ┊ Key             ┊ Value                                ┊
    ╞════════════════════════════════════════════════════════╡
    ┊ Cluster/LocalID ┊ 717be8f7-1aec-4830-9aab-cc0afba0dd3a ┊

    linstor --controller 10.2.0.19 remote create linstor newCluster 10.2.0.10 --cluster-id 717be8f7-1aec-4830-9aab-cc0afba0dd3a
    linstor --controller 10.2.0.10 remote create linstor sourceCluster 10.2.0.19 --cluster-id 941fc610-acb9-484a-9837-d2c0df8a86aa

Nothing interesting in any satellite logs.
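Copying the cluster IDs by hand between the two controllers is easy to get backwards, so here is a small sketch that extracts the ID from the `controller list-properties` output. The helper name `cluster_id` is made up, and it assumes the `Cluster/LocalID` row contains a lowercase UUID, as in the output above.

```shell
# Read `linstor controller list-properties` output on stdin and print the
# UUID found on the Cluster/LocalID row.
cluster_id() {
    grep 'Cluster/LocalID' | grep -oE '[0-9a-f]{8}(-[0-9a-f]{4}){3}-[0-9a-f]{12}'
}

# Example (commented out; needs a reachable controller):
#   target_id=$(linstor --controller 10.2.0.10 controller list-properties | cluster_id)
#   linstor --controller 10.2.0.19 remote create linstor newCluster 10.2.0.10 --cluster-id "$target_id"
```

The remote created on one controller takes the ID reported by the other controller, which matches the two `remote create` commands above.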

Error on new cluster

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 err show 66FF0E92-00000-000011
    ERROR REPORT 66FF0E92-00000-000011

    ============================================================

    Application: LINBIT® LINSTOR
    Module: Controller
    Version: 1.26.1
    Build ID: 12746ac9c6e7882807972c3df56e9a89eccad4e5
    Build time: 2024-02-22T05:27:50+00:00
    Error time: 2024-10-07 14:51:35
    Node: ovbh-pprod-xen01
    Thread: MainWorkerPool-3

    ============================================================

    Reported error:
    ===============

    Category: RuntimeException
    Class name: ApiRcException
    Class canonical name: com.linbit.linstor.core.apicallhandler.response.ApiRcException
    Generated at: Method 'restoreBackupL2LInTransaction', Source file 'CtrlBackupRestoreApiCallHandler.java', Line #1123

    Error message: Could not find suitable storage pool to receive backup

    Asynchronous stage backtrace:

    Error has been observed at the following site(s):
    *__checkpoint ⇢ restore backup
    *__checkpoint ⇢ Backupshipping L2L start receive
    Original Stack Trace:

    Call backtrace:

    Method Native Class:Line number
    restoreBackupL2LInTransaction N com.linbit.linstor.core.apicallhandler.controller.backup.CtrlBackupRestoreApiCallHandler:1123

    Suppressed exception 1 of 1:
    ===============

    Category: RuntimeException
    Class name: OnAssemblyException
    Class canonical name: reactor.core.publisher.FluxOnAssembly.OnAssemblyException
    Generated at: Method 'restoreBackupL2LInTransaction', Source file 'CtrlBackupRestoreApiCallHandler.java', Line #1123

    Error message:
    Error has been observed at the following site(s):
    *__checkpoint ⇢ restore backup
    *__checkpoint ⇢ Backupshipping L2L start receive
    Original Stack Trace:

    Call backtrace:

    Method Native Class:Line number
    restoreBackupL2LInTransaction N com.linbit.linstor.core.apicallhandler.controller.backup.CtrlBackupRestoreApiCallHandler:1123
    lambda$startReceivingInTransaction$4 N com.linbit.linstor.core.apicallhandler.controller.backup.CtrlBackupL2LDstApiCallHandler:526
    doInScope N com.linbit.linstor.core.apicallhandler.ScopeRunner:149
    lambda$fluxInScope$0 N com.linbit.linstor.core.apicallhandler.ScopeRunner:76
    call N reactor.core.publisher.MonoCallable:72
    trySubscribeScalarMap N reactor.core.publisher.FluxFlatMap:127
    subscribeOrReturn N reactor.core.publisher.MonoFlatMapMany:49
    subscribe N reactor.core.publisher.Flux:8759
    onNext N reactor.core.publisher.MonoFlatMapMany$FlatMapManyMain:195
    request N reactor.core.publisher.Operators$ScalarSubscription:2545
    onSubscribe N reactor.core.publisher.MonoFlatMapMany$FlatMapManyMain:141
    subscribe N reactor.core.publisher.MonoJust:55
    subscribe N reactor.core.publisher.MonoDeferContextual:55
    subscribe N reactor.core.publisher.Flux:8773
    onNext N reactor.core.publisher.MonoFlatMapMany$FlatMapManyMain:195
    onNext N reactor.core.publisher.FluxMapFuseable$MapFuseableSubscriber:129
    completePossiblyEmpty N reactor.core.publisher.Operators$BaseFluxToMonoOperator:2071
    onComplete N reactor.core.publisher.MonoCollect$CollectSubscriber:145
    onComplete N reactor.core.publisher.MonoFlatMapMany$FlatMapManyInner:260
    checkTerminated N reactor.core.publisher.FluxFlatMap$FlatMapMain:847
    drainLoop N reactor.core.publisher.FluxFlatMap$FlatMapMain:609
    drain N reactor.core.publisher.FluxFlatMap$FlatMapMain:589
    onComplete N reactor.core.publisher.FluxFlatMap$FlatMapMain:466
    checkTerminated N reactor.core.publisher.FluxFlatMap$FlatMapMain:847
    drainLoop N reactor.core.publisher.FluxFlatMap$FlatMapMain:609
    innerComplete N reactor.core.publisher.FluxFlatMap$FlatMapMain:895
    onComplete N reactor.core.publisher.FluxFlatMap$FlatMapInner:998
    onComplete N reactor.core.publisher.FluxMap$MapSubscriber:144
    onComplete N reactor.core.publisher.Operators$MultiSubscriptionSubscriber:2205
    onComplete N reactor.core.publisher.FluxSwitchIfEmpty$SwitchIfEmptySubscriber:85
    complete N reactor.core.publisher.FluxCreate$BaseSink:460
    drain N reactor.core.publisher.FluxCreate$BufferAsyncSink:805
    complete N reactor.core.publisher.FluxCreate$BufferAsyncSink:753
    drainLoop N reactor.core.publisher.FluxCreate$SerializedFluxSink:247
    drain N reactor.core.publisher.FluxCreate$SerializedFluxSink:213
    complete N reactor.core.publisher.FluxCreate$SerializedFluxSink:204
    apiCallComplete N com.linbit.linstor.netcom.TcpConnectorPeer:506
    handleComplete N com.linbit.linstor.proto.CommonMessageProcessor:372
    handleDataMessage N com.linbit.linstor.proto.CommonMessageProcessor:296
    doProcessInOrderMessage N com.linbit.linstor.proto.CommonMessageProcessor:244
    lambda$doProcessMessage$4 N com.linbit.linstor.proto.CommonMessageProcessor:229
    subscribe N reactor.core.publisher.FluxDefer:46
    subscribe N reactor.core.publisher.Flux:8773
    onNext N reactor.core.publisher.FluxFlatMap$FlatMapMain:427
    drainAsync N reactor.core.publisher.FluxFlattenIterable$FlattenIterableSubscriber:453
    drain N reactor.core.publisher.FluxFlattenIterable$FlattenIterableSubscriber:724
    onNext N reactor.core.publisher.FluxFlattenIterable$FlattenIterableSubscriber:256
    drainFused N reactor.core.publisher.SinkManyUnicast:319
    drain N reactor.core.publisher.SinkManyUnicast:362
    tryEmitNext N reactor.core.publisher.SinkManyUnicast:237
    tryEmitNext N reactor.core.publisher.SinkManySerialized:100
    processInOrder N com.linbit.linstor.netcom.TcpConnectorPeer:419
    doProcessMessage N com.linbit.linstor.proto.CommonMessageProcessor:227
    lambda$processMessage$2 N com.linbit.linstor.proto.CommonMessageProcessor:164
    onNext N reactor.core.publisher.FluxPeek$PeekSubscriber:185
    runAsync N reactor.core.publisher.FluxPublishOn$PublishOnSubscriber:440
    run N reactor.core.publisher.FluxPublishOn$PublishOnSubscriber:527
    call N reactor.core.scheduler.WorkerTask:84
    call N reactor.core.scheduler.WorkerTask:37
    run N java.util.concurrent.FutureTask:264
    run N java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask:304
    runWorker N java.util.concurrent.ThreadPoolExecutor:1128
    run N java.util.concurrent.ThreadPoolExecutor$Worker:628
    run N java.lang.Thread:829

    END OF ERROR REPORT.

Info on new cluster

jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 r l | grep -e "pvc-086a5817-d813-41fe-86d8-3fac2ae2028f"
| pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | ovbh-pprod-xen10 | 7117 | Unused | Ok | UpToDate | 2023-05-31 14:42:09 |
| pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | ovbh-pprod-xen12 | 7117 | Unused | Ok | UpToDate | 2023-05-31 14:42:09 |
| pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | ovbh-pprod-xen13 | 7117 | Unused | Ok | UpToDate | 2023-05-31 14:42:07 |
| pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | ovbh-vtest-k8s02-worker01 | 7117 | InUse | Ok | Diskless | 2024-08-09 11:31:25 |
| pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | ovbh-vtest-k8s02-worker03 | 7117 | Unused | Ok | Diskless | 2024-06-13 14:15:57 |

jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 rd l | grep -e "pvc-086a5817-d813-41fe-86d8-3fac2ae2028f"
| pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | 7117 | sc-74e1434b-b435-587e-9dea-fa067deec898 | ok |

jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 rg l
╭───────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
┊ ResourceGroup                           ┊ SelectFilter                                     ┊ VlmNrs ┊ Description ┊
╞═══════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡
┊ DfltRscGrp                              ┊ PlaceCount: 2                                    ┊        ┊             ┊
╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
┊ sc-74e1434b-b435-587e-9dea-fa067deec898 ┊ PlaceCount: 3                                    ┊ 0      ┊             ┊
┊                                         ┊ DisklessOnRemaining: True                        ┊        ┊             ┊
┊                                         ┊ LayerStack: ['DRBD', 'STORAGE']                  ┊        ┊             ┊
╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
┊ sc-b066e430-6206-5588-a490-cc91ecef53d6 ┊ PlaceCount: 1                                    ┊ 0      ┊             ┊
┊                                         ┊ DisklessOnRemaining: True                        ┊        ┊             ┊
┊                                         ┊ LayerStack: ['DRBD', 'STORAGE']                  ┊        ┊             ┊
╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
┊ xcp-sr-linstor_group_thin_device        ┊ PlaceCount: 3                                    ┊ 0      ┊             ┊
┊                                         ┊ StoragePool(s): xcp-sr-linstor_group_thin_device ┊        ┊             ┊
┊                                         ┊ DisklessOnRemaining: True                        ┊        ┊             ┊
┊                                         ┊ LayerStack: ['DRBD', 'STORAGE']                  ┊        ┊             ┊
╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 sp l
╭───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
┊ StoragePool                      ┊ Node                      ┊ Driver   ┊ PoolName                  ┊ FreeCapacity ┊ TotalCapacity ┊ CanSnapshots ┊ State ┊ SharedName                                        ┊
╞═══════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡
┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen10          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen10;DfltDisklessStorPool             ┊
┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen11          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen11;DfltDisklessStorPool             ┊
┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen12          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen12;DfltDisklessStorPool             ┊
┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen13          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen13;DfltDisklessStorPool             ┊
┊ DfltDisklessStorPool             ┊ ovbh-vprod-k8s04-worker01 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-k8s04-worker01;DfltDisklessStorPool    ┊
┊ DfltDisklessStorPool             ┊ ovbh-vprod-k8s04-worker02 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-k8s04-worker02;DfltDisklessStorPool    ┊
┊ DfltDisklessStorPool             ┊ ovbh-vprod-k8s04-worker03 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-k8s04-worker03;DfltDisklessStorPool    ┊
┊ DfltDisklessStorPool             ┊ ovbh-vprod-k8s04-worker07 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-k8s04-worker07;DfltDisklessStorPool    ┊
┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s02-worker01 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s02-worker01;DfltDisklessStorPool    ┊
┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s02-worker02 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s02-worker02;DfltDisklessStorPool    ┊
┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s02-worker03 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s02-worker03;DfltDisklessStorPool    ┊
┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s02-worker04 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s02-worker04;DfltDisklessStorPool    ┊
┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen10          ┊ LVM_THIN ┊ linstor_group/thin_device ┊ 2.48 TiB     ┊ 3.49 TiB      ┊ True         ┊ Ok    ┊ ovbh-pprod-xen10;xcp-sr-linstor_group_thin_device ┊
┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen11          ┊ LVM_THIN ┊ linstor_group/thin_device ┊ 2.42 TiB     ┊ 3.49 TiB      ┊ True         ┊ Ok    ┊ ovbh-pprod-xen11;xcp-sr-linstor_group_thin_device ┊
┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen12          ┊ LVM_THIN ┊ linstor_group/thin_device ┊ 2.83 TiB     ┊ 3.49 TiB      ┊ True         ┊ Ok    ┊ ovbh-pprod-xen12;xcp-sr-linstor_group_thin_device ┊
┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen13          ┊ LVM_THIN ┊ linstor_group/thin_device ┊ 4.12 TiB     ┊ 4.99 TiB      ┊ True         ┊ Ok    ┊ ovbh-pprod-xen13;xcp-sr-linstor_group_thin_device ┊
╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

On the new cluster

jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 rg l
╭───────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
┊ ResourceGroup                           ┊ SelectFilter                                     ┊ VlmNrs ┊ Description ┊
╞═══════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡
┊ DfltRscGrp                              ┊ PlaceCount: 2                                    ┊        ┊             ┊
╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
┊ sc-74e1434b-b435-587e-9dea-fa067deec898 ┊ PlaceCount: 3                                    ┊ 0      ┊             ┊
┊                                         ┊ DisklessOnRemaining: True                        ┊        ┊             ┊
┊                                         ┊ LayerStack: ['DRBD', 'STORAGE']                  ┊        ┊             ┊
╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
┊ xcp-ha-linstor_group_thin_device        ┊ PlaceCount: 3                                    ┊ 0      ┊             ┊
┊                                         ┊ StoragePool(s): xcp-sr-linstor_group_thin_device ┊        ┊             ┊
┊                                         ┊ DisklessOnRemaining: False                       ┊        ┊             ┊
╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
┊ xcp-sr-linstor_group_thin_device        ┊ PlaceCount: 3                                    ┊ 0      ┊             ┊
┊                                         ┊ StoragePool(s): xcp-sr-linstor_group_thin_device ┊        ┊             ┊
┊                                         ┊ DisklessOnRemaining: False                       ┊        ┊             ┊
╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 sp l
╭───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
┊ StoragePool                      ┊ Node                      ┊ Driver   ┊ PoolName                  ┊ FreeCapacity ┊ TotalCapacity ┊ CanSnapshots ┊ State ┊ SharedName                                        ┊
╞═══════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡
┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen01          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen01;DfltDisklessStorPool             ┊
┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen02          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen02;DfltDisklessStorPool             ┊
┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen03          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen03;DfltDisklessStorPool             ┊
┊ DfltDisklessStorPool             ┊ ovbh-vprod-rancher01      ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-rancher01;DfltDisklessStorPool         ┊
┊ DfltDisklessStorPool             ┊ ovbh-vprod-rancher02      ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-rancher02;DfltDisklessStorPool         ┊
┊ DfltDisklessStorPool             ┊ ovbh-vprod-rancher03      ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-rancher03;DfltDisklessStorPool         ┊
┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s01-worker01 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s01-worker01;DfltDisklessStorPool    ┊
┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s01-worker02 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s01-worker02;DfltDisklessStorPool    ┊
┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s01-worker03 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s01-worker03;DfltDisklessStorPool    ┊
┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen01          ┊ LVM_THIN ┊ linstor_group/thin_device ┊ 13.75 TiB    ┊ 13.97 TiB     ┊ True         ┊ Ok    ┊ ovbh-pprod-xen01;xcp-sr-linstor_group_thin_device ┊
┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen02          ┊ LVM_THIN ┊ linstor_group/thin_device ┊ 13.75 TiB    ┊ 13.97 TiB     ┊ True         ┊ Ok    ┊ ovbh-pprod-xen02;xcp-sr-linstor_group_thin_device ┊
┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen03          ┊ LVM_THIN ┊ linstor_group/thin_device ┊ 13.75 TiB    ┊ 13.97 TiB     ┊ True         ┊ Ok    ┊ ovbh-pprod-xen03;xcp-sr-linstor_group_thin_device ┊
╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯
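
For anyone who wants to compare the storage pools of the two controllers without reading the tables by eye, here is a minimal sketch that parses `linstor sp l` output saved as text. `parse_linstor_table` and `thin_pool_usage` are hypothetical helpers written for this post, not part of the linstor client, and the sample rows are copied from the new cluster's listing. It assumes one table row per line and capacities reported in TiB.

```python
# Hypothetical helpers (not part of the linstor client): parse a box-drawn
# table such as `linstor sp l` output into a list of dicts keyed by header.

SAMPLE = """\
┊ StoragePool ┊ Node ┊ Driver ┊ PoolName ┊ FreeCapacity ┊ TotalCapacity ┊ CanSnapshots ┊ State ┊ SharedName ┊
┊ DfltDisklessStorPool ┊ ovbh-pprod-xen01 ┊ DISKLESS ┊ ┊ ┊ ┊ False ┊ Ok ┊ ovbh-pprod-xen01;DfltDisklessStorPool ┊
┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen01 ┊ LVM_THIN ┊ linstor_group/thin_device ┊ 13.75 TiB ┊ 13.97 TiB ┊ True ┊ Ok ┊ ovbh-pprod-xen01;xcp-sr-linstor_group_thin_device ┊
"""


def parse_linstor_table(text):
    """Return one dict per data row; border and separator lines are skipped."""
    rows, header = [], None
    for line in text.splitlines():
        line = line.strip()
        # Data rows start and end with the column delimiter.
        if not (line.startswith("┊") and line.endswith("┊")):
            continue
        cells = [cell.strip() for cell in line.split("┊")[1:-1]]
        if header is None:
            header = cells  # the first delimited row is the header
        elif len(cells) == len(header):
            rows.append(dict(zip(header, cells)))
    return rows


def thin_pool_usage(rows):
    """Return (node, free TiB, total TiB) for pools that report TiB capacity."""
    usage = []
    for row in rows:
        free, total = row["FreeCapacity"], row["TotalCapacity"]
        if free.endswith("TiB") and total.endswith("TiB"):
            usage.append((row["Node"], float(free.split()[0]), float(total.split()[0])))
    return usage


if __name__ == "__main__":
    for node, free, total in thin_pool_usage(parse_linstor_table(SAMPLE)):
        print(f"{node}: {free} TiB free of {total} TiB")
```

Diskless pools have empty capacity cells, so they are skipped. Continuation rows like those in the `rg l` output would come back as separate entries, so this is only meant for the `sp l` listing.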
