CBT: the thread to centralize your feedback

-

I followed the advice above, restarted all my hosts one by one, I still have errors

{ "data": { "mode": "delta", "reportWhen": "failure" }, "id": "1725368860234", "jobId": "9170c82b-ede4-491d-b792-f421b3e4b525", "jobName": "BACKUP_AND_REPLICATE_DEFAULT_BACKUP_JOB", "message": "backup", "scheduleId": "3e87844e-b48a-453f-87b4-e2a2cc3fa2c8", "start": 1725368860234, "status": "failure", "infos": [ { "data": { "vms": [ "cc59f885-c138-675d-fc81-054713586bc1", "96cfde06-61c0-0f3e-cf6d-f637d41cc8c6", "e1da68e8-ef42-44a9-386b-aceb7a920463", "565c724d-020f-baa8-8e7d-cf54d8b57a28" ] }, "message": "vms" } ], "tasks": [ { "data": { "type": "VM", "id": "cc59f885-c138-675d-fc81-054713586bc1", "name_label": "REC-APP-BCTI" }, "id": "1725368863035", "message": "backup VM", "start": 1725368863035, "status": "failure", "tasks": [ { "id": "1725368863044:0", "message": "clean-vm", "start": 1725368863044, "status": "success", "end": 1725368863493, "result": { "merge": false } }, { "id": "1725368863818", "message": "snapshot", "start": 1725368863818, "status": "success", "end": 1725368945306, "result": "7f7f7d83-4ac6-0cbd-0bd4-34f9fb94c4a5" }, { "data": { "id": "122ddf1f-090d-4c23-8c5e-fe095321f8b9", "isFull": false, "type": "remote" }, "id": "1725368945306:0", "message": "export", "start": 1725368945306, "status": "success", "tasks": [ { "id": "1725369159363", "message": "clean-vm", "start": 1725369159363, "status": "success", "end": 1725369159662, "result": { "merge": false } } ], "end": 1725369159662 }, { "data": { "id": "beee944b-e502-61d7-e03b-e1408f01db8c", "isFull": false, "name_label": "iSCSI-STORE-CES-01_HDD-01", "type": "SR" }, "id": "1725368945306:1", "message": "export", "start": 1725368945306, "status": "interrupted" } ], "end": 1725369159662, "result": { "code": "VDI_IN_USE", "params": [ "OpaqueRef:d1b7eaca-1bb8-41da-9a96-11103411b868", "destroy" ], "task": { "uuid": "e2318576-41e0-7c97-bef3-b66fb17dc12f", "name_label": "Async.VDI.destroy", "name_description": "", "allowed_operations": [], "current_operations": {}, "created": "20240903T13:12:39Z", "finished": "20240903T13:12:39Z", "status": "failure", "resident_on": "OpaqueRef:c329c60f-e5d8-4797-9019-2dcb1083227c", "progress": 1, "type": "<none/>", "result": "", "error_info": [ "VDI_IN_USE", "OpaqueRef:d1b7eaca-1bb8-41da-9a96-11103411b868", "destroy" ], "other_config": {}, "subtask_of": "OpaqueRef:NULL", "subtasks": [], "backtrace": "(((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 4711))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/xapi/rbac.ml)(line 205))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 95)))" }, "message": "VDI_IN_USE(OpaqueRef:d1b7eaca-1bb8-41da-9a96-11103411b868, destroy)", "name": "XapiError", "stack": "XapiError: VDI_IN_USE(OpaqueRef:d1b7eaca-1bb8-41da-9a96-11103411b868, destroy)\n at XapiError.wrap (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/_XapiError.mjs:16:12)\n at default (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/_getTaskResult.mjs:13:29)\n at Xapi._addRecordToCache (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1076:24)\n at file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1110:14\n at Array.forEach (<anonymous>)\n at Xapi._processEvents (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1100:12)\n at Xapi._watchEvents (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1273:14)\n at process.processTicksAndRejections (node:internal/process/task_queues:95:5)" } }, { "data": { "type": "VM", "id": "e1da68e8-ef42-44a9-386b-aceb7a920463", "name_label": "PROD-BDD-LIC01" }, "id": "1725368863036", "message": "backup VM", "start": 1725368863036, "status": "failure", "tasks": [ { "id": "1725368863043", "message": "clean-vm", "start": 1725368863043, "status": "success", "end": 1725368863472, "result": { "merge": false } }, { "id": "1725368863842", "message": "snapshot", "start": 1725368863842, "status": "success", "end": 1725368876837, "result": "882ebd40-e16a-e286-f0ac-580728a3ec19" }, { "data": { "id": "122ddf1f-090d-4c23-8c5e-fe095321f8b9", "isFull": false, "type": "remote" }, "id": "1725368876838", "message": "export", "start": 1725368876838, "status": "success", "tasks": [ { "id": "1725368983568", "message": "clean-vm", "start": 1725368983568, "status": "success", "end": 1725368983673, "result": { "merge": false } } ], "end": 1725368983675 }, { "data": { "id": "beee944b-e502-61d7-e03b-e1408f01db8c", "isFull": false, "name_label": "iSCSI-STORE-CES-01_HDD-01", "type": "SR" }, "id": "1725368876839", "message": "export", "start": 1725368876839, "status": "interrupted" } ], "infos": [ { "message": "will delete snapshot data" }, { "data": { "vdiRef": "OpaqueRef:f10a5b53-170b-4c2d-9975-d419197cbf2a" }, "message": "Snapshot data has been deleted" } ], "end": 1725368983676, "result": { "message": "can't create a stream from a metadata VDI, fall back to a base ", "name": "Error", "stack": "Error: can't create a stream from a metadata VDI, fall back to a base \n at Xapi.exportContent (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/xapi/vdi.mjs:202:15)\n at process.processTicksAndRejections (node:internal/process/task_queues:95:5)\n at async file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_incrementalVm.mjs:57:32\n at async Promise.all (index 0)\n at async cancelableMap (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_cancelableMap.mjs:11:12)\n at async exportIncrementalVm (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_incrementalVm.mjs:26:3)\n at async IncrementalXapiVmBackupRunner._copy (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_runners/_vmRunners/IncrementalXapi.mjs:44:25)\n at async IncrementalXapiVmBackupRunner.run (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_runners/_vmRunners/_AbstractXapi.mjs:379:9)\n at async file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_runners/VmsXapi.mjs:166:38" } }, { "data": { "type": "VM", "id": "565c724d-020f-baa8-8e7d-cf54d8b57a28", "name_label": "ADMIN-SEC-ADM01" }, "id": "1725368863036:0", "message": "backup VM", "start": 1725368863036, "status": "failure", "tasks": [ { "id": "1725368863044", "message": "clean-vm", "start": 1725368863044, "status": "success", "end": 1725368863755, "result": { "merge": false } }, { "id": "1725368864026", "message": "snapshot", "start": 1725368864026, "status": "success", "end": 1725369030903, "result": "3c37e2e9-9e08-6fa7-a1e4-c16d51b4106a" }, { "data": { "id": "122ddf1f-090d-4c23-8c5e-fe095321f8b9", "isFull": false, "type": "remote" }, "id": "1725369030903:0", "message": "export", "start": 1725369030903, "status": "success", "tasks": [ { "id": "1725369054746", "message": "clean-vm", "start": 1725369054746, "status": "success", "end": 1725369055027, "result": { "merge": false } } ], "end": 1725369055031 }, { "data": { "id": "beee944b-e502-61d7-e03b-e1408f01db8c", "isFull": false, "name_label": "iSCSI-STORE-CES-01_HDD-01", "type": "SR" }, "id": "1725369030903:1", "message": "export", "start": 1725369030903, "status": "interrupted" } ], "infos": [ { "message": "will delete snapshot data" }, { "data": { "vdiRef": "OpaqueRef:7a307227-d0bc-4810-92a8-6be38d0eecbb" }, "message": "Snapshot data has been deleted" }, { "data": { "vdiRef": "OpaqueRef:0048f302-86c2-4d69-81bc-feae8ef3cc15" }, "message": "Snapshot data has been deleted" } ], "end": 1725369055031, "result": { "message": "can't create a stream from a metadata VDI, fall back to a base ", "name": "Error", "stack": "Error: can't create a stream from a metadata VDI, fall back to a base \n at Xapi.exportContent (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/xapi/vdi.mjs:202:15)\n at process.processTicksAndRejections (node:internal/process/task_queues:95:5)\n at async file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_incrementalVm.mjs:57:32\n at async Promise.all (index 0)\n at async cancelableMap (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_cancelableMap.mjs:11:12)\n at async exportIncrementalVm (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_incrementalVm.mjs:26:3)\n at async IncrementalXapiVmBackupRunner._copy (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_runners/_vmRunners/IncrementalXapi.mjs:44:25)\n at async IncrementalXapiVmBackupRunner.run (file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_runners/_vmRunners/_AbstractXapi.mjs:379:9)\n at async file:///usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/backups/_runners/VmsXapi.mjs:166:38" } }, { "data": { "type": "VM", "id": "96cfde06-61c0-0f3e-cf6d-f637d41cc8c6", "name_label": "SRV-SQL" }, "id": "1725368863038", "message": "backup VM", "start": 1725368863038, "status": "failure", "tasks": [ { "id": "1725368863045", "message": "clean-vm", "start": 1725368863045, "status": "success", "end": 1725368863747, "result": { "merge": false } }, { "id": "1725368864026:0", "message": "snapshot", "start": 1725368864026, "status": "success", "end": 1725368911729, "result": "4db8f7fb-d7d0-cd20-24d1-9223180f0c09" }, { "data": { "id": "122ddf1f-090d-4c23-8c5e-fe095321f8b9", "isFull": false, "type": "remote" }, "id": "1725368911729:0", "message": "export", "start": 1725368911729, "status": "success", "tasks": [ { "id": "1725369104743", "message": "clean-vm", "start": 1725369104743, "status": "success", "end": 1725369105118, "result": { "merge": false } } ], "end": 1725369105118 }, { "data": { "id": "beee944b-e502-61d7-e03b-e1408f01db8c", "isFull": false, "name_label": "iSCSI-STORE-CES-01_HDD-01", "type": "SR" }, "id": "1725368911729:1", "message": "export", "start": 1725368911729, "status": "interrupted" } ], "end": 1725369105118, "result": { "code": "VDI_IN_USE", "params": [ "OpaqueRef:7b2ab98c-bfa5-4403-8890-73b88ce4b1dd", "destroy" ], "task": { "uuid": "d3978e94-c447-987c-c15d-21adcb7a8805", "name_label": "Async.VDI.destroy", "name_description": "", "allowed_operations": [], "current_operations": {}, "created": "20240903T13:11:44Z", "finished": "20240903T13:11:44Z", "status": "failure", "resident_on": "OpaqueRef:c329c60f-e5d8-4797-9019-2dcb1083227c", "progress": 1, "type": "<none/>", "result": "", "error_info": [ "VDI_IN_USE", "OpaqueRef:7b2ab98c-bfa5-4403-8890-73b88ce4b1dd", "destroy" ], "other_config": {}, "subtask_of": "OpaqueRef:NULL", "subtasks": [], "backtrace": "(((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 4711))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/xapi/rbac.ml)(line 205))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 95)))" }, "message": "VDI_IN_USE(OpaqueRef:7b2ab98c-bfa5-4403-8890-73b88ce4b1dd, destroy)", "name": "XapiError", "stack": "XapiError: VDI_IN_USE(OpaqueRef:7b2ab98c-bfa5-4403-8890-73b88ce4b1dd, destroy)\n at XapiError.wrap (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/_XapiError.mjs:16:12)\n at default (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/_getTaskResult.mjs:13:29)\n at Xapi._addRecordToCache (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1076:24)\n at file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1110:14\n at Array.forEach (<anonymous>)\n at Xapi._processEvents (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1100:12)\n at Xapi._watchEvents (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1273:14)\n at process.processTicksAndRejections (node:internal/process/task_queues:95:5)" } } ], "end": 1725369159662 }What I have to do ?

-

@still_at_work this vdi_in_use error is something we have seen as well, when i do a retry the jobs are processing well at our end. So it seems more like a timing issue, vates is looking into this. Not shure if this is the same issue as u are seeing.

-

in my case, even if I restart the jobs in error, the error vdi_in_use persists

-

@still_at_work let’s see what vates does discover on the logging, i bet it is some sort of timing issue.

-

See https://github.com/vatesfr/xen-orchestra/pull/7960

Might be helpful

-

Thanks @olivierlambert

I'm available to install patch on my installation if you want, and if it's possible.

-

If you have a ticket number we could take a look tomorrow

-

-

@olivierlambert would this maybe also affect the other vdi_in_use error?

-

@olivierlambert the vdi_in_use error we saw in regarding to destroy has not been occuring again. The vdi_in_use error regarding data_destroy is still occuring. Not shure if the fix did anything or that we were lucky not to see issues last night on it. I believe @florent is right and this is caused by a timing issue but maybe we need more time then 4 seconds.

-

@rtjdamen I do run from sources and I was on the latest build when I posted. One of my first steps in regards to any issue is to update XO. I do not have any speed limits configured for the job.

@olivierlambert It seems to be happening fairly regularly for me. At least every couple of days. Let me know what I can do to help troubleshoot.

It appears that the same thing has happened again. One VM got attached to the Control Domain yesterday and therefore failed to backup. What is odd is that now I have another VM listed twice as being attached to the Control Domain. Oddly, they show the same attached UUID but different VBD UUIDs.

I see that there are patches available for the pool, so I will apply those as well as update XO and remove the Control attached VMs.

-

today i got issues with one of our hosts, i needed to do a hard reset of it. Now i get the error "can't create a stream from a metadata VDI, fall back to a base " for these vms hosted on this machine. I believe this error message is caused because the CBT got invalid after the hard reset. I remember we had this kind of error messages on vmware as well when a host crashed. Maybe if this is the case the error message should be update to something like "CBT is invalid fall back to base" it makes more sense that way.

-

@CJ strange indeed, are the backups succesfull or do they fail as well?

-

@rtjdamen The one backup failed last night, but the next one hasn't run yet. However, I expect both VMs to fail. Due to issues applying the patches I haven't cleared the control attached domain yet.

-

@CJ strange issue, not something we see on our end. Maybe vates has an idea?

-

@rtjdamen Agreed. I'm waiting for them to respond regarding my patching problems so I can test the latest updates. If that still has problems then hopefully they can let me know what they need to investigate the issue.

-

I'm now updated to the latest patches and XO version. I've cleaned out the control attached VMs and the orphans. We'll see how the backup goes tonight.

-

@CJ fingers crossed!

-

Hi all, i can confirm the vdi_in_use error is resolved by https://github.com/vatesfr/xen-orchestra/pull/7960 we no longer see issues there.

Only remaining issue we see is the “ can’t create a stream from a metadata vdi fall back to base”

-

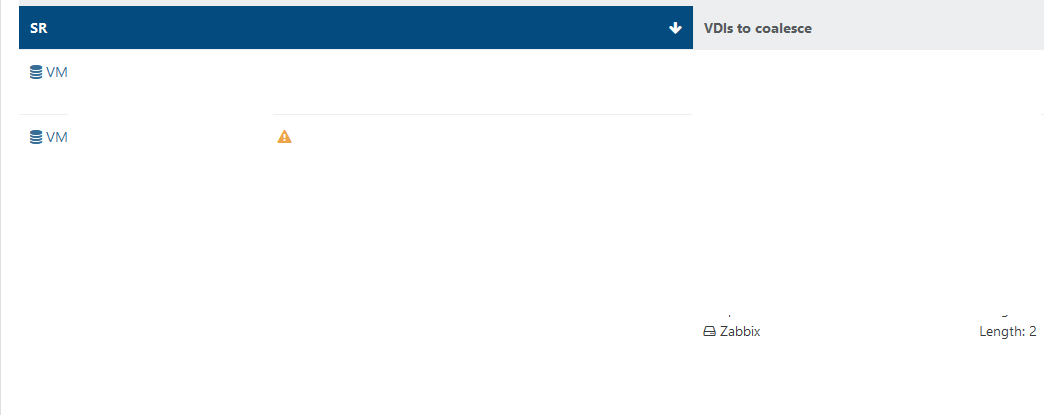

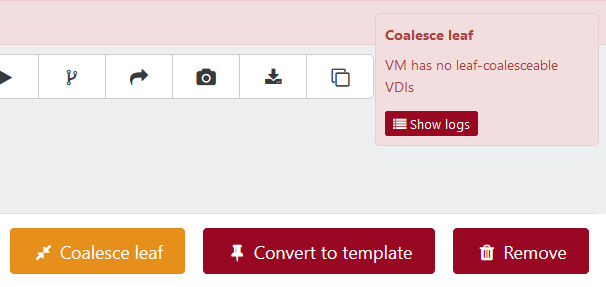

not sure is it CBT related, but 1 pool got a huge coalesce queue after i tried migrate VDI to another storage.

for example, this VM halted for a months with disabled backups. How it possible to get coalesce this way?

Hello! It looks like you're interested in this conversation, but you don't have an account yet.

Getting fed up of having to scroll through the same posts each visit? When you register for an account, you'll always come back to exactly where you were before, and choose to be notified of new replies (either via email, or push notification). You'll also be able to save bookmarks and upvote posts to show your appreciation to other community members.

With your input, this post could be even better 💗

Register Login