DevOps Megathread: what you need and how we can help!

-

Hello

We published a new blog post about our Kubernetes recipe.

You'll find there:

- A step-by-step guide to create a production-ready Kubernetes cluster, on top of your servers, in minutes!

- Some architecture insights

https://xen-orchestra.com/blog/virtops-6-create-a-kubernetes-cluster-in-minutes/

Thanks to @Cyrille

-

Xen Orchestra Cloud Controller Manager in development

Hello everyone

We have published a development version of a Xen Orchestra Cloud Controller Manager!

It supports the cloud-node and cloud-node-lifecycle controllers and adds labels to your Kubernetes nodes hosted on Xen Orchestra VMs.

    apiVersion: v1
    kind: Node
    metadata:
      labels:
        # Type generated based on CPU and RAM
        node.kubernetes.io/instance-type: 2VCPU-1GB
        # Xen Orchestra Pool ID of the node VM Host
        topology.kubernetes.io/region: 3679fe1a-d058-4055-b800-d30e1bd2af48
        # Xen Orchestra ID of the node VM Host
        topology.kubernetes.io/zone: 3d6764fe-dc88-42bf-9147-c87d54a73f21
        # Additional labels based on Xen Orchestra data (beta)
        topology.k8s.xenorchestra/host_id: 3d6764fe-dc88-42bf-9147-c87d54a73f21
        topology.k8s.xenorchestra/pool_id: 3679fe1a-d058-4055-b800-d30e1bd2af48
        vm.k8s.xenorchestra/name_label: cgn-microk8s-recipe---Control-Plane
        ...
      name: worker-1
    spec:
      ...
      # providerID - magic string:
      #   xeorchestra://{Pool ID}/{VM ID}
      providerID: xeorchestra://3679fe1a-d058-4055-b800-d30e1bd2af48/8f0d32f8-3ce5-487f-9793-431bab66c115

For now, we have only tested the provider with Microk8s.
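
As a small illustration of the providerID format shown in the manifest above, here is a minimal sketch that splits such a string back into its parts. The function name `parse_provider_id` is hypothetical and not part of the CCM; only the `{scheme}://{Pool ID}/{VM ID}` layout comes from the post.

```python
def parse_provider_id(provider_id):
    """Split '<scheme>://<pool id>/<vm id>' into its three parts.

    Hypothetical helper for illustration; the layout is taken from the
    manifest comment, everything else is an assumption.
    """
    scheme, sep, rest = provider_id.partition("://")
    pool_id, slash, vm_id = rest.partition("/")
    if not (sep and slash and pool_id and vm_id):
        raise ValueError(f"unexpected providerID format: {provider_id!r}")
    return scheme, pool_id, vm_id


scheme, pool_id, vm_id = parse_provider_id(
    "xeorchestra://3679fe1a-d058-4055-b800-d30e1bd2af48/"
    "8f0d32f8-3ce5-487f-9793-431bab66c115"
)
```

With the example node above, `pool_id` matches the value of the topology.kubernetes.io/region label.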

What's next?

We will test the CCM with other types of Kubernetes clusters and work on fixing known issues.

Also a modification of the XOA Hub recipe will come to include the CCM.

More labels will be added (Pool Name, VM Name, etc.).

Feedback is welcome!

You can install and test the XO CCM, and provide feedback to help improve it and speed up the release of the first stable version. This is greatly appreciated.

-

Pulumi Xen Orchestra Provider - Release v2.1.0

This new version brings in the improvements to the VM disk lifecycle that were made in the Terraform provider.

https://github.com/vatesfr/pulumi-xenorchestra/releases/tag/v2.1.0

-

Hi, I'm currently testing deployments with pulumi using packer templates.

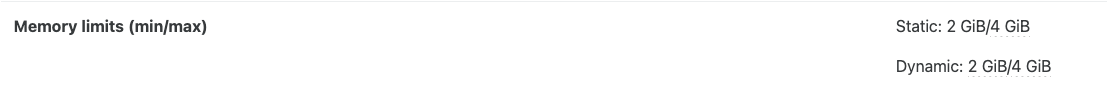

So far the basics work as expected, but I'm stuck on a settings issue that seems to affect both the pulumi and terraform providers. As far as I know, there is no way to set the memory as static or to change memory_min when creating a VM from a template.

The template was created with 1 CPU and 2 GB of RAM.

The VM created from this template using pulumi was assigned 2 CPUs and 4 GB of RAM; however, this only sets memory_max.

I found the following post that talks about this: https://xcp-ng.org/forum/topic/5628/xenorchestra-with-terraform

and also the following GitHub issue: https://github.com/vatesfr/terraform-provider-xenorchestra/issues/211

Manually setting the memory limits after VM creation defeats the purpose of automation. I suppose that implementing those settings in the relevant providers is a core feature. In most cases, VMs need static memory limits.

In the meantime, is there any workaround that I should investigate, or anything that I missed?

EDIT: Using the JSON-RPC API of XenOrchestra, I'm able to set the memory limits after the creation of the VM. This is great but unfortunately it is a bit too "imperative" in a declarative world.

I'll publish the code when I can clean up the hellish python I wrote, but a few pointers for those interested:

- See Mickaël Baron's blog (in French, sorry!) for an example of working with the XO JSON-RPC API: https://mickael-baron.fr/blog/2021/05/28/xo-server-websocket-jsonrcp

- The system.getMethodsInfo() RPC function will give you all available calls you can make to the server. For instance, you can sign in with session.signInWithToken(token="XO_TOKEN") and use vm.setAndRestart to change VM settings and restart it immediately after.

- You can use Pulumi's hooks: https://www.pulumi.com/docs/iac/concepts/options/hooks/

- In python, Pulumi is already running in an asyncio loop, so bear that in mind: https://www.pulumi.com/docs/iac/languages-sdks/python/python-blocking-async/
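
To make the pointers above a little more concrete, here is a minimal sketch of what the JSON-RPC 2.0 messages for those calls could look like. It only builds the request bodies with the standard library; the transport, authentication flow and response handling are left out, and the helper name `jsonrpc_request` is an assumption, not something from the XO code base.

```python
import itertools
import json

# Each request needs its own id so responses can be matched to it.
_request_ids = itertools.count(1)


def jsonrpc_request(method, **params):
    """Serialize one JSON-RPC 2.0 request with named parameters."""
    return json.dumps(
        {
            "jsonrpc": "2.0",
            "id": next(_request_ids),
            "method": method,
            "params": params,
        }
    )


# Method names come from the post; "XO_TOKEN" is a placeholder.
sign_in = jsonrpc_request("session.signInWithToken", token="XO_TOKEN")
list_methods = jsonrpc_request("system.getMethodsInfo")
```

Sending `sign_in` first and `list_methods` afterwards over an open connection mirrors the order described above.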

-

Hi @afk!

We are working on a new version of the Xen Orchestra Terraform provider to improve VM memory control.

In this new version, the memory_max setting will now set the maximum limits for both the dynamic and static memory. There is also an optional new setting called memory_min, which can be used to set the minimum limit for dynamic VM memory.

This version will also resolve the issue with template memory limits used during VM creation.

Can you test this pre-release version and provide us with some feedback? Or maybe just tell us if this new behaviour is more likely to meet your needs?

https://github.com/vatesfr/terraform-provider-xenorchestra/releases/tag/v0.33.0-alpha.1

I will try to publish a pre-release version of the Pulumi provider as soon as possible.

-

Hi @Cyrille, thank you for working on this, it will help a lot.

This is indeed implementing the needed behavior when creating VMs from templates, avoiding the use of additional ad-hoc code. For reference, I needed to call the vm.set XO JSON-RPC function after the creation with the following arguments:

    rpc_args = {
        "id": vm_id,
        "memory": vm_memory,
        "memoryMin": vm_memory,
        "memoryMax": vm_memory,
        "memoryStaticMax": vm_memory,
    }
    await rpcserver.vm.set(**rpc_args)

I'll try to test the provider patch in the coming days.
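
For readers who want to reuse this workaround, the argument dictionary can be produced by a small helper. This is only a sketch: the name `static_memory_args` is hypothetical, and it assumes the memory values are integer byte counts, which should be checked against the output of system.getMethodsInfo().

```python
GIB = 1024 ** 3  # bytes per gibibyte


def static_memory_args(vm_id, size_gib):
    """Build vm.set arguments that pin every memory limit to one value."""
    size = size_gib * GIB  # assumption: the API expects bytes
    return {
        "id": vm_id,
        "memory": size,
        "memoryMin": size,
        "memoryMax": size,
        "memoryStaticMax": size,
    }


# "VM_UUID" is a placeholder for the id of the freshly created VM.
args = static_memory_args("VM_UUID", 4)
```

The resulting dictionary has the same keys as the rpc_args shown in the post.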

-

I'd like the terraform provider to have a xenorchestra_backup resource.

For me, part of the process of spinning up a new set of VMs is to create backup jobs for those new VMs.

Today I can manually make a backup job which applies to VMs with a certain tag, then later, via TF, make VMs with that tag. However, I'd prefer being able to make a xenorchestra_backup resource, with settings specific to that VM (or set of VMs).

Furthermore, if the idea with backup schedules is that they can be used across backup jobs, then that would mean a new xenorchestra_backup_schedule resource type too, which would be referenced in the xenorchestra_backup. Also, this might require creating a xenorchestra_remote data-source.

Having said that, I am not a paying customer, so I understand this is a low priority request, and I do have a workaround.

-

@sid I made that request a while ago, and tried to look into it myself too, but the current API of XenOrchestra just doesn't support it; there are many pieces missing from what I could see. I hope with XenOrchestra 6 the API will support it though.

-

@bufanda said in DevOps Megathread: what you need and how we can help!:

@sid I made that request a while ago, and tried to look into it myself too, but the current API of XenOrchestra just doesn't support it; there are many pieces missing from what I could see. I hope with XenOrchestra 6 the API will support it though.

That's the point. We started working on it, but it wasn't possible to implement the required functionality in the TF provider using the current JSON-RPC API. We are working with the XO team to provide feedback to make it happen with the REST API. I hope the backup resource will be available with XO6.

NB: If you want to take a look, there are branches on the GitHub repository. These are for both the provider and the Golang client.

-

Pulumi Xen Orchestra Provider - Pre-Release v2.2.0-alpha.1

This is the pre-release version, which includes the changes to the memory_max field and adds the new memory_min field.

https://github.com/vatesfr/pulumi-xenorchestra/releases/tag/v2.2.0-alpha.1

-

@Cyrille Aah, I didn't know about the branches. I had started my own attempt to implement the feature, good to know I can abandon that work. Oh boy, discovering the settings map uses an empty key was a moment.

OK, I will wait. Thanks to your team for the work on the terraform provider.

-

New releases for Terraform and Pulumi providers!

This new version introduces a new field, memory_min, for the VM resource and makes a slight change to the memory_max field, which now sets both the dynamic and static maximum memory limits, providing better control of VM memory.

- Pulumi Provider v2.2.0

- Terraform Provider v0.33.0

- Xen Orchestra Go SDK v1.4.0

-

@Cyrille Thanks for the release !

I just tested the happy path of settings (6GB min / 8GB max and 8/8) and it seems to work as expected.

Now, I can get rid of the workaround, that's awesome
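
The behaviour discussed in this exchange can be summarised with a small model. This is a sketch of the semantics as described in the thread, not the provider's implementation: the function name, the dictionary keys and the rule that memory_min may not exceed memory_max are all assumptions.

```python
from typing import Optional

GIB = 1024 ** 3  # bytes per gibibyte


def memory_limits(memory_max: int, memory_min: Optional[int] = None) -> dict:
    """Model how the two settings map onto VM memory limits.

    memory_max drives both maximums; memory_min, when given, sets the
    dynamic minimum. Key names are hypothetical.
    """
    if memory_min is not None and memory_min > memory_max:
        # Assumed sanity rule, not taken from the provider.
        raise ValueError("memory_min must not exceed memory_max")
    return {
        "static_max": memory_max,
        "dynamic_max": memory_max,
        "dynamic_min": memory_min,  # None means: left to the provider
    }


# The two cases tested above: 6 GB min / 8 GB max, and 8/8.
ballooning = memory_limits(8 * GIB, 6 * GIB)
fixed = memory_limits(8 * GIB, 8 * GIB)
```

In the 8/8 case all three limits are equal, which is the static memory setup the earlier workaround was emulating.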

-

@olivierlambert Another useful item to aid development processes and IaC operations would be an MCP Server that interfaces with the Vates VMS stack when using GitHub Copilot, so the agent can get context related to requests (queries). That way its responses can be properly grounded in the context of the stack, as well as the configuration and setup of the Vates VMS installation and its available resources.

Can the IaC team work on this, though it may need help from other teams?

-

@john.c Why not? Can you share which tools should be supported first, and your use cases? I assume that if you are working in VSCode you might be using some infrastructure as code, like Terraform, Pulumi or Ansible, aren't you? In that case, do you also have some related MCP servers enabled?

-

@nathanael-h said in DevOps Megathread: what you need and how we can help!:

@john.c Why not? Can you share which tools should be supported first, and your use cases? I assume that if you are working in VSCode you might be using some infrastructure as code, like Terraform, Pulumi or Ansible, aren't you? In that case, do you also have some related MCP servers enabled?

@nathanael-h Pulumi for the infrastructure as code, with the code held on a private GitHub repository.

The use cases are to aid in writing the IaC code and provisioning VMs etc., as well as during development of full stack website projects.

The appropriate servers are already enabled and configured, for GitHub Copilot use.

Visual Studio Code with GitHub Copilot.

-

@manilx I have proposed an MCP Server for Vates VMS to the Vates IaC team. It could be used by GitHub Copilot or similar tools when doing IaC etc.

-

Terraform Provider - Release 0.35.1

The new version fixes bugs when creating a VM from a template #361:

- All existing disks in the template are used if they are declared in the TF plan.

- All unused disks in the template are deleted to avoid inconsistency between the TF plan and the actual state.

- It is no longer possible to resize existing template disks to a smaller size (fixes potential source of data loss).

The release: https://github.com/vatesfr/terraform-provider-xenorchestra/releases/tag/v0.35.1

-

The release v0.35.0 improves the logging of both the Xen Orchestra golang SDK and the Terraform Provider.

Now it should be easier to read the log using TF_LOG_PROVIDER=DEBUG (see the provider documentation).

-

Terraform Provider v0.36.0 and Pulumi Provider v2.3.0

- Read and expose boot_firmware on template data-source by @sakaru in #381

- Fixes VM creation from multi-disk templates:

  - All existing disks in the template are used if they are declared in the plan.

  - All unused disks in the template are deleted to avoid inconsistency between the plan and the actual state.

  - It is no longer possible to resize existing template disks to a smaller size (fixes potential source of data loss).

  - The order of existing disks matches the declaration order in the plan.
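
The disk rules listed above can be illustrated with a short model. This is only a sketch of the described behaviour, not the provider's code: the function name `reconcile_disks` is hypothetical, disks are reduced to their sizes, and matching template disks to plan disks by position is an assumption drawn from the "declaration order" item.

```python
def reconcile_disks(template_sizes, plan_sizes):
    """Return the actions needed to turn template disks into planned disks.

    Disks are paired by position (assumption); sizes use any common unit.
    """
    actions = []
    for index, planned in enumerate(plan_sizes):
        if index < len(template_sizes):
            existing = template_sizes[index]
            if planned < existing:
                # Shrinking an existing template disk is refused.
                raise ValueError(
                    f"disk {index}: cannot shrink from {existing} to {planned}"
                )
            actions.append(("reuse" if planned == existing else "grow", index))
        else:
            # Assumption: disks beyond the template's are created.
            actions.append(("create", index))
    # Template disks that the plan does not declare are deleted.
    for index in range(len(plan_sizes), len(template_sizes)):
        actions.append(("delete", index))
    return actions


# Template with three disks, plan declaring two (the second one larger).
actions = reconcile_disks([10, 20, 30], [10, 25])
```

Here the first disk is reused, the second is grown and the third, undeclared one is deleted.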

Terraform provider release: https://github.com/vatesfr/terraform-provider-xenorchestra/releases/tag/v0.36.0

Pulumi provider release: https://github.com/vatesfr/pulumi-xenorchestra/releases/tag/v2.3.0
