Backup jobs run twice after upgrade to 5.76.2

-

In addition to the errors above, we noticed a lot of 'VDI_IO_ERROR' errors while the jobs were running.

I remember there was a similar problem with CR which got fixed using the 'guessVhdSizeOnImport: true' option. Did anything change since then?

I will try with XOA trial when I have some spare time.

EDIT:

Can we import the config from our XO from sources to XOA or should we set up everything from scratch?

-

Some additional info that might be relevant to this issue:

When stopping and starting xo-server after a hanging job, the summary sent as the report is interesting: it states that the SR and the VM aren't available / not found, which is obviously wrong.

## Global status: interrupted

- **Job ID**: fd08162c-228a-4c49-908e-de3085f75e46
- **Run ID**: 1582618375292
- **mode**: delta
- **Start time**: Tuesday, February 25th 2020, 9:12:55 am
- **Successes**: 13 / 32

---

## 19 Interrupted

### VM not found

- **UUID**: d627892d-661c-9b7c-f733-8f52da7325e2
- **Start time**: Tuesday, February 25th 2020, 9:12:55 am
- **Snapshot** ✔
  - **Start time**: Tuesday, February 25th 2020, 9:12:55 am
  - **End time**: Tuesday, February 25th 2020, 9:12:56 am
  - **Duration**: a few seconds
- **SRs**
  - **SR Not found** (ea4f9bd7-ccae-7a1f-c981-217565c8e08e) ⚠️
    - **Start time**: Tuesday, February 25th 2020, 9:14:26 am
    - **transfer** ⚠️
      - **Start time**: Tuesday, February 25th 2020, 9:14:26 am

[snip]

-

@tts said in Backup jobs run twice after upgrade to 5.76.2:

Can we import the config from our XO from sources to XOA or should we setup everything from scratch?

Today I got the XOA trial ready and imported the config from XO from sources. Manually started CR jobs are working. I will test it with scheduled CR over the next couple of days.

-

Right now one of my CR jobs is stuck; the symptoms are the same as with XO from sources.

XOA 5.43.2 (xo-server and xo-web 5.56.2)

Hosts where VM is running and where it is replicated to, both XenServer 7.0

Backup log:

{ "data": { "mode": "delta", "reportWhen": "failure" }, "id": "1582750800014", "jobId": "b5c85bb9-6ba7-4665-af60-f86a8a21503d", "jobName": "web1", "message": "backup", "scheduleId": "6204a834-1145-4278-9170-63a9fc9d5b2b", "start": 1582750800014, "status": "pending", "tasks": [ { "data": { "type": "VM", "id": "04430f5e-f0db-72b6-2b73-cc49433a2e14" }, "id": "1582750800016", "message": "Starting backup of web1. (b5c85bb9-6ba7-4665-af60-f86a8a21503d)", "start": 1582750800016, "status": "pending", "tasks": [ { "id": "1582750800018", "message": "snapshot", "start": 1582750800018, "status": "success", "end": 1582750808307, "result": "6e6c19e2-104c-1dcf-a355-563348d5a11f" }, { "id": "1582750808314", "message": "add metadata to snapshot", "start": 1582750808314, "status": "success", "end": 1582750808325 }, { "id": "1582750808517", "message": "waiting for uptodate snapshot record", "start": 1582750808517, "status": "success", "end": 1582750808729 }, { "id": "1582750808735", "message": "start snapshot export", "start": 1582750808735, "status": "success", "end": 1582750808735 }, { "data": { "id": "4a7d1f0f-4bb6-ff77-58df-67dde7fa98a0", "isFull": false, "type": "SR" }, "id": "1582750808736", "message": "export", "start": 1582750808736, "status": "pending", "tasks": [ { "id": "1582750808751", "message": "transfer", "start": 1582750808751, "status": "pending" } ] } ] } ] }

Perhaps there is something wrong with my environment, but then why does it work with older XO versions?
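For anyone comparing logs like the one above, here is a minimal Python sketch that walks a backup log and lists every task still marked "pending", together with its start time. It assumes only the fields visible in the log above (`message`, `start` in epoch milliseconds, `status`, nested `tasks`); the function name `pending_tasks` and the trimmed sample are illustrative, not part of XO.

```python
import json
from datetime import datetime, timezone


def pending_tasks(task, path=()):
    """Yield (path, start time) for every task in the tree whose status is 'pending'."""
    here = path + (task.get("message", "?"),)
    if task.get("status") == "pending":
        # 'start' is assumed to be epoch milliseconds, as in the log above.
        start = datetime.fromtimestamp(task["start"] / 1000, tz=timezone.utc)
        yield " > ".join(here), start
    for child in task.get("tasks", []):
        yield from pending_tasks(child, here)


# Trimmed copy of the log above: only the fields the walk needs.
log = json.loads("""
{ "message": "backup", "start": 1582750800014, "status": "pending", "tasks": [
  { "message": "Starting backup of web1. (b5c85bb9-6ba7-4665-af60-f86a8a21503d)",
    "start": 1582750800016, "status": "pending", "tasks": [
    { "message": "snapshot", "start": 1582750800018, "status": "success" },
    { "message": "export", "start": 1582750808736, "status": "pending", "tasks": [
      { "message": "transfer", "start": 1582750808751, "status": "pending" } ] } ] } ] }
""")

for name, start in pending_tasks(log):
    print(f"{start:%Y-%m-%d %H:%M:%S} UTC  {name}")
```

The deepest pending entry is the one to look at: in this log everything up to the snapshot succeeded and the run is waiting on the `transfer` step of the export.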

-

Ping @julien-f

-

There is probably a problem in XO here, because we have received multiple similar reports. We are investigating.

-

@karlisi I've been unable to reproduce the issue so far, can you tell me which version of XO has the issue and which one does not?

Also, if you could provide me with your job configuration it would be nice

-

@julien-f said in Backup jobs run twice after upgrade to 5.76.2:

@karlisi I've been unable to reproduce the issue so far, can you tell me which version of XO has the issue and which one does not?

Also, if you could provide me with your job configuration it would be nice

Can I send it to you in PM?

-

In this case, it's better that you open a ticket on xen-orchestra.com

-

@olivierlambert Done

-

Thanks a lot for this great ticket with all the context, this is really helpful!

-

Hi,

I can give a little update on our issues, but our 'workaround' is really time consuming.

The randomly stuck CR jobs can mostly be worked around: stop and start xo-server (sometimes the XO VM needs a restart as well), then restart the interrupted jobs again and again until all VMs are successfully backed up.

Besides that, without us changing anything, the jobs are again being started twice most of the time, but not always.

I cannot find any correlation for this.

At one point it looked as if this could be fixed by disabling and re-enabling the backup jobs, but as of now that doesn't make any difference.

-Toni

-

@tts Are you on the latest XOA version?

-

It wasn't XOA, we used xo-server from sources.

I planned to test with XOA, but Olivier wrote yesterday that it's probably fixed upstream.

So I updated our installation to xo-server 5.57.3 / xo-web 5.57.1 and will see if it's fixed.

fingers crossed

-

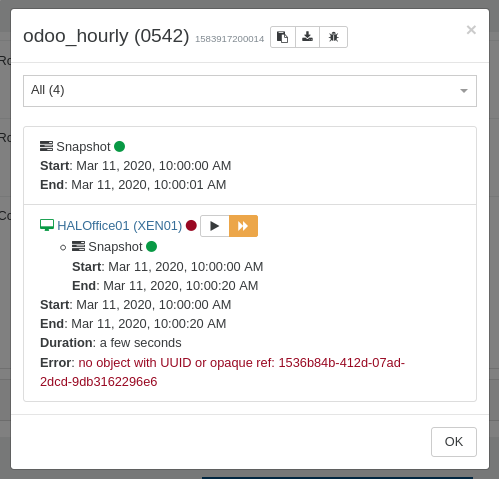

Maybe there are additional problems. I just noticed that one of our rolling-snapshot tasks started twice again.

This time it's even weirder: both jobs are listed as successful.

The first job displays just nothing in the detail window:

json:

{ "data": { "mode": "full", "reportWhen": "failure" }, "id": "1583917200011", "jobId": "22050542-f6ec-4437-a031-0600260197d7", "jobName": "odoo_hourly", "message": "backup", "scheduleId": "ab9468b4-9b8a-44a0-83a8-fc6b84fe8a95", "start": 1583917200011, "status": "success", "end": 1583917207066 }

The second job shows 4(?) VMs, although the job consists of only one VM. That VM failed, yet the job is displayed as successful:

json:

{ "data": { "mode": "full", "reportWhen": "failure" }, "id": "1583917200014", "jobId": "22050542-f6ec-4437-a031-0600260197d7", "jobName": "odoo_hourly", "message": "backup", "scheduleId": "ab9468b4-9b8a-44a0-83a8-fc6b84fe8a95", "start": 1583917200014, "status": "success", "tasks": [ { "id": "1583917200019", "message": "snapshot", "start": 1583917200019, "status": "success", "end": 1583917201773, "result": "32ba0201-26bd-6f29-c507-0416129058b4" }, { "id": "1583917201780", "message": "add metadata to snapshot", "start": 1583917201780, "status": "success", "end": 1583917201791 }, { "id": "1583917206856", "message": "waiting for uptodate snapshot record", "start": 1583917206856, "status": "success", "end": 1583917207064 }, { "data": { "type": "VM", "id": "b6b63fab-bd5f-8640-305d-3565361b213f" }, "id": "1583917200022", "message": "Starting backup of HALOffice01. (22050542-f6ec-4437-a031-0600260197d7)", "start": 1583917200022, "status": "failure", "tasks": [ { "id": "1583917200028", "message": "snapshot", "start": 1583917200028, "status": "success", "end": 1583917220144, "result": "62e06abc-6fca-9564-aad7-7933dec90ba3" }, { "id": "1583917220148", "message": "add metadata to snapshot", "start": 1583917220148, "status": "success", "end": 1583917220167 } ], "end": 1583917220405, "result": { "message": "no object with UUID or opaque ref: 1536b84b-412d-07ad-2dcd-9db3162296e6", "name": "Error", "stack": "Error: no object with UUID or opaque ref: 1536b84b-412d-07ad-2dcd-9db3162296e6\n at Xapi.apply (/opt/xen-orchestra/packages/xen-api/src/index.js:573:11)\n at Xapi.getObject (/opt/xen-orchestra/packages/xo-server/src/xapi/index.js:128:24)\n at Xapi.deleteVm (/opt/xen-orchestra/packages/xo-server/src/xapi/index.js:692:12)\n at iteratee (/opt/xen-orchestra/packages/xo-server/src/xo-mixins/backups-ng/index.js:1268:23)\n at /opt/xen-orchestra/@xen-orchestra/async-map/src/index.js:32:17\n at Promise._execute (/opt/xen-orchestra/node_modules/bluebird/js/release/debuggability.js:384:9)\n at Promise._resolveFromExecutor (/opt/xen-orchestra/node_modules/bluebird/js/release/promise.js:518:18)\n at new Promise (/opt/xen-orchestra/node_modules/bluebird/js/release/promise.js:103:10)\n at /opt/xen-orchestra/@xen-orchestra/async-map/src/index.js:31:7\n at arrayMap (/opt/xen-orchestra/node_modules/lodash/_arrayMap.js:16:21)\n at map (/opt/xen-orchestra/node_modules/lodash/map.js:50:10)\n at asyncMap (/opt/xen-orchestra/@xen-orchestra/async-map/src/index.js:30:5)\n at BackupNg._backupVm (/opt/xen-orchestra/packages/xo-server/src/xo-mixins/backups-ng/index.js:1265:13)\n at runMicrotasks (<anonymous>)\n at processTicksAndRejections (internal/process/task_queues.js:97:5)\n at handleVm (/opt/xen-orchestra/packages/xo-server/src/xo-mixins/backups-ng/index.js:734:13)" } } ], "end": 1583917207065 }

So the results are clearly wrong: the job was run twice, this time at the same point in time.
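The two run records above share the same `jobId` and `scheduleId` and started 3 ms apart. A minimal Python sketch for spotting such double starts in a list of run records follows. It assumes the records have already been copied out of the log view as dictionaries with the fields seen above; the helper name `find_duplicate_runs` and the `window_ms` threshold are hypothetical choices for illustration, not anything XO provides.

```python
from collections import defaultdict


def find_duplicate_runs(runs, window_ms=1000):
    """Return (earlier id, later id) pairs for runs of the same job and
    schedule that started within window_ms milliseconds of each other."""
    by_schedule = defaultdict(list)
    for run in runs:
        by_schedule[(run["jobId"], run["scheduleId"])].append(run)

    duplicates = []
    for group in by_schedule.values():
        group.sort(key=lambda r: r["start"])
        # Compare each run with the next one started for the same schedule.
        for earlier, later in zip(group, group[1:]):
            if later["start"] - earlier["start"] <= window_ms:
                duplicates.append((earlier["id"], later["id"]))
    return duplicates


# Top-level fields of the two run records above.
runs = [
    {"id": "1583917200011", "jobId": "22050542-f6ec-4437-a031-0600260197d7",
     "scheduleId": "ab9468b4-9b8a-44a0-83a8-fc6b84fe8a95", "start": 1583917200011},
    {"id": "1583917200014", "jobId": "22050542-f6ec-4437-a031-0600260197d7",
     "scheduleId": "ab9468b4-9b8a-44a0-83a8-fc6b84fe8a95", "start": 1583917200014},
]

print(find_duplicate_runs(runs))
```

Grouping by schedule rather than by job alone avoids flagging a job that legitimately has two schedules firing close together.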

Would it help to delete and recreate all of the backup jobs?

What extra information could I offer to get this issue fixed? I'm already thinking about setting up a new xo-server from scratch.

Please help and advise.

Thanks, Toni

-

Please try with XOA and report if you have the same problem.

-

I'm currently on it; we set up the XOA appliance recently. It just needs the trial activated, which I think I can do today in a few hours.

Can we import the settings safely from our current 'from sources' version or do we need to set up everything from scratch?

-

Yes, you can import settings into XOA.

-

Ok thanks, will do that and report back later.

-

It looks like XOA on the stable channel only updates to v5.43.2.

I really don't see why 'rolling back' to a much older version would help solve the backup problems in the more recent 'from sources' version 5.56+.

Is this a completely different codebase?

Quite lost now!