-

Ok, so it turns out this is caused by the `thin-send-recv` package I built from https://github.com/LINBIT/thin-send-recv/tree/master. I just swapped out the version I built for the last one I was able to get online to test, and it works.

The last version I was able to get from any repository before they went 403 was thin-send-recv-1.0.1-1.x86_64.rpm.txt, which I got from https://piraeus.daocloud.io/linbit/rpms/7/x86_64/thin-send-recv-1.0.1-1.x86_64.rpm. FYI, https://packages.linbit.com/yum/sles12-sp2/drbd-9.0/x86_64/Packages/ returns 403s too, so there is no point in looking for it there, even if they do host it.

I built thin-send-recv-1.1.2-1.xcpng8.2.x86_64.rpm.txt using this doc I put together: thin-send-recv.txt. But the package I built results in the error posted previously.

So I am a bit at a loss. I want to be able to use Velero for backing up PVs that are not managed by an operator with backup capabilities, but I do not want to be stuck with this old version that I cannot update.

Any advice would be greatly appreciated!

-

Ok great, I manually built 1.0.1, and it works just like the package I got online. That means what I am doing is working and the build process is correct.

The bad news is there is a breaking change with v1.1.2, and I think I am potentially SOL.

I am going to build and test v1.1.0 and v1.1.1 to see which ones work. NVM, v1.1.0 is also broken. So the change that breaks it is in here: https://github.com/LINBIT/thin-send-recv/compare/6b7c9002cd7716ff6ef93f5a5e8908032b81f853...e44f566ea0c975e2baa475868ebc176065a5b22d

v1.0.1 might just be the version that works with this version of LINSTOR; whenever that gets updated, it might call for a newer version of thin-send-recv.

-

@ronan-a might take a look if he finds a free minute (which is complicated right now).

-

@olivierlambert @Jonathon Unfortunately we don't maintain this package, so it's not available in our repositories. The simplest thing is to raise this problem directly with LINBIT. Maybe there is a regression or something else?

-


I spoke with one of our developers. He said:

"When I search for `Device read short 40960 bytes remaining` I only get results for XCP-ng/Citrix with LVM. So I think this is an issue with LVM on xcp-ng. One thing we changed between thin-send-recv 1.0.X and 1.1.X is the error handling, so now errors are properly propagated. So I would guess it was always broken but newer versions actually make the error visible."

Not sure if that helps, but if there is anything to relay I'm more than happy to pass it along.
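To illustrate the kind of change the developer describes (an error that was always there but only shows up once it is propagated), here is a minimal bash sketch using a plain pipeline. This is not thin-send-recv's actual code, just the general idea; `failing_reader`, `run_swallowed` and `run_propagated` are hypothetical names made up for the example.

```bash
#!/usr/bin/env bash

# Hypothetical stand-in for a sender that hits a short read:
# it emits partial data, reports the problem on stderr, and exits non-zero.
failing_reader() {
    printf 'partial data\n'
    echo 'Device read short 40960 bytes remaining' >&2
    return 1
}

# Errors swallowed: a pipeline's status is that of its last command (cat),
# so the caller sees success even though the reader failed.
run_swallowed() {
    failing_reader 2>/dev/null | cat >/dev/null
}

# Errors propagated: with pipefail, a failure anywhere in the pipeline
# becomes the pipeline's exit status. The subshell keeps the option local.
run_propagated() (
    set -o pipefail
    failing_reader 2>/dev/null | cat >/dev/null
)

run_swallowed  && echo "swallowed: looks like success"
run_propagated || echo "propagated: failure is visible (exit status $?)"
```

Both functions run exactly the same failing command; only the second one lets the caller notice.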

-

@BHellman Appreciate the weigh in and the time from your dev.

Ok yeah, I thought I was having a hallucination lol. v1.0.1 was 100% working when I installed it at the time of my posting, and it was failing today. After restarting all the satellites it works again, but I assume it will break again.

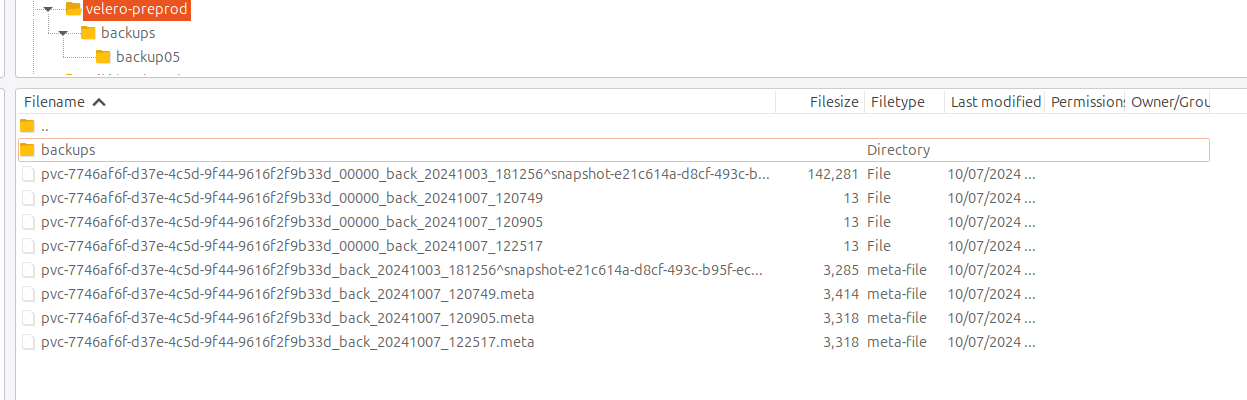

When it actually works, I can see the PVC in the S3 remote.

Here is a scatter of commands and outputs. I restarted the satellites partway through, so it may be difficult to read, but I thought it would be better than nothing.

commands-and-outputs.txt

xen01-linstor-Satellite.txt

xen02-linstor-Satellite.txt

xen03-linstor-Satellite.txt

-

And now for something completely different lol. It's the same thing.

We have a new xcp-ng cluster that we would like to migrate everything to. We are not migrating the k8s clusters, but creating new ones on a new RKE2 Rancher. So to migrate the applications, it would simplify things if I could move the PVCs over.

Command that fails, same if I add `--target-storage-pool xcp-sr-linstor_group_thin_device`

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 backup ship newCluster pvc-086a5817-d813-41fe-86d8-3fac2ae2028f pvc-086a5817-d813-41fe-86d8-3fac2ae2028f
    ERROR:
    Description:
        Remote 'newCluster': Could not find suitable storage pool to receive backup
    Cause:
        ErrorReport id on target cluster: 66FF0E92-00000-000011

Setup remotes

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 controller list-properties
    ╭────────────────────────────────────────────────────────╮
    ┊ Key             ┊ Value                                ┊
    ╞════════════════════════════════════════════════════════╡
    ┊ Cluster/LocalID ┊ 941fc610-acb9-484a-9837-d2c0df8a86aa

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 controller list-properties
    ╭────────────────────────────────────────────────────────╮
    ┊ Key             ┊ Value                                ┊
    ╞════════════════════════════════════════════════════════╡
    ┊ Cluster/LocalID ┊ 717be8f7-1aec-4830-9aab-cc0afba0dd3a

    linstor --controller 10.2.0.19 remote create linstor newCluster 10.2.0.10 --cluster-id 717be8f7-1aec-4830-9aab-cc0afba0dd3a
    linstor --controller 10.2.0.10 remote create linstor sourceCluster 10.2.0.19 --cluster-id 941fc610-acb9-484a-9837-d2c0df8a86aa

Nothing interesting in any satellite logs.
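The cluster IDs passed to `remote create` above were copied by hand from the `controller list-properties` output. If anyone wants to script that step, here is a rough sketch. `extract_cluster_id` is a hypothetical helper name, and it simply assumes the ID shows up as a UUID on the `Cluster/LocalID` row, as it does in the output above.

```bash
#!/usr/bin/env bash

# Print the UUID found on the Cluster/LocalID row of
# `linstor controller list-properties` output read from stdin.
extract_cluster_id() {
    grep -F 'Cluster/LocalID' \
        | grep -Eo '[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}' \
        | head -n 1
}

# Sample row copied from the output above. In practice the input would be
# piped from: linstor --controller 10.2.0.10 controller list-properties
sample='┊ Cluster/LocalID ┊ 717be8f7-1aec-4830-9aab-cc0afba0dd3a'
printf '%s\n' "$sample" | extract_cluster_id
```

The printed value is what goes after `--cluster-id` when creating the remote on the other cluster.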

Error on new cluster

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 err show 66FF0E92-00000-000011
    ERROR REPORT 66FF0E92-00000-000011

    ============================================================

    Application:                        LINBIT® LINSTOR
    Module:                             Controller
    Version:                            1.26.1
    Build ID:                           12746ac9c6e7882807972c3df56e9a89eccad4e5
    Build time:                         2024-02-22T05:27:50+00:00
    Error time:                         2024-10-07 14:51:35
    Node:                               ovbh-pprod-xen01
    Thread:                             MainWorkerPool-3

    ============================================================

    Reported error:
    ===============

    Category:                           RuntimeException
    Class name:                         ApiRcException
    Class canonical name:               com.linbit.linstor.core.apicallhandler.response.ApiRcException
    Generated at:                       Method 'restoreBackupL2LInTransaction', Source file 'CtrlBackupRestoreApiCallHandler.java', Line #1123

    Error message:                      Could not find suitable storage pool to receive backup

    Asynchronous stage backtrace:

        Error has been observed at the following site(s):
            *__checkpoint ⇢ restore backup
            *__checkpoint ⇢ Backupshipping L2L start receive
        Original Stack Trace:

    Call backtrace:

        Method                                   Native Class:Line number
        restoreBackupL2LInTransaction            N      com.linbit.linstor.core.apicallhandler.controller.backup.CtrlBackupRestoreApiCallHandler:1123

    Suppressed exception 1 of 1:
    ===============
    Category:                           RuntimeException
    Class name:                         OnAssemblyException
    Class canonical name:               reactor.core.publisher.FluxOnAssembly.OnAssemblyException
    Generated at:                       Method 'restoreBackupL2LInTransaction', Source file 'CtrlBackupRestoreApiCallHandler.java', Line #1123

    Error message:
    Error has been observed at the following site(s):
        *__checkpoint ⇢ restore backup
        *__checkpoint ⇢ Backupshipping L2L start receive
    Original Stack Trace:

    Call backtrace:

        Method                                   Native Class:Line number
        restoreBackupL2LInTransaction            N      com.linbit.linstor.core.apicallhandler.controller.backup.CtrlBackupRestoreApiCallHandler:1123
        lambda$startReceivingInTransaction$4     N      com.linbit.linstor.core.apicallhandler.controller.backup.CtrlBackupL2LDstApiCallHandler:526
        doInScope                                N      com.linbit.linstor.core.apicallhandler.ScopeRunner:149
        lambda$fluxInScope$0                     N      com.linbit.linstor.core.apicallhandler.ScopeRunner:76
        call                                     N      reactor.core.publisher.MonoCallable:72
        trySubscribeScalarMap                    N      reactor.core.publisher.FluxFlatMap:127
        subscribeOrReturn                        N      reactor.core.publisher.MonoFlatMapMany:49
        subscribe                                N      reactor.core.publisher.Flux:8759
        onNext                                   N      reactor.core.publisher.MonoFlatMapMany$FlatMapManyMain:195
        request                                  N      reactor.core.publisher.Operators$ScalarSubscription:2545
        onSubscribe                              N      reactor.core.publisher.MonoFlatMapMany$FlatMapManyMain:141
        subscribe                                N      reactor.core.publisher.MonoJust:55
        subscribe                                N      reactor.core.publisher.MonoDeferContextual:55
        subscribe                                N      reactor.core.publisher.Flux:8773
        onNext                                   N      reactor.core.publisher.MonoFlatMapMany$FlatMapManyMain:195
        onNext                                   N      reactor.core.publisher.FluxMapFuseable$MapFuseableSubscriber:129
        completePossiblyEmpty                    N      reactor.core.publisher.Operators$BaseFluxToMonoOperator:2071
        onComplete                               N      reactor.core.publisher.MonoCollect$CollectSubscriber:145
        onComplete                               N      reactor.core.publisher.MonoFlatMapMany$FlatMapManyInner:260
        checkTerminated                          N      reactor.core.publisher.FluxFlatMap$FlatMapMain:847
        drainLoop                                N      reactor.core.publisher.FluxFlatMap$FlatMapMain:609
        drain                                    N      reactor.core.publisher.FluxFlatMap$FlatMapMain:589
        onComplete                               N      reactor.core.publisher.FluxFlatMap$FlatMapMain:466
        checkTerminated                          N      reactor.core.publisher.FluxFlatMap$FlatMapMain:847
        drainLoop                                N      reactor.core.publisher.FluxFlatMap$FlatMapMain:609
        innerComplete                            N      reactor.core.publisher.FluxFlatMap$FlatMapMain:895
        onComplete                               N      reactor.core.publisher.FluxFlatMap$FlatMapInner:998
        onComplete                               N      reactor.core.publisher.FluxMap$MapSubscriber:144
        onComplete                               N      reactor.core.publisher.Operators$MultiSubscriptionSubscriber:2205
        onComplete                               N      reactor.core.publisher.FluxSwitchIfEmpty$SwitchIfEmptySubscriber:85
        complete                                 N      reactor.core.publisher.FluxCreate$BaseSink:460
        drain                                    N      reactor.core.publisher.FluxCreate$BufferAsyncSink:805
        complete                                 N      reactor.core.publisher.FluxCreate$BufferAsyncSink:753
        drainLoop                                N      reactor.core.publisher.FluxCreate$SerializedFluxSink:247
        drain                                    N      reactor.core.publisher.FluxCreate$SerializedFluxSink:213
        complete                                 N      reactor.core.publisher.FluxCreate$SerializedFluxSink:204
        apiCallComplete                          N      com.linbit.linstor.netcom.TcpConnectorPeer:506
        handleComplete                           N      com.linbit.linstor.proto.CommonMessageProcessor:372
        handleDataMessage                        N      com.linbit.linstor.proto.CommonMessageProcessor:296
        doProcessInOrderMessage                  N      com.linbit.linstor.proto.CommonMessageProcessor:244
        lambda$doProcessMessage$4                N      com.linbit.linstor.proto.CommonMessageProcessor:229
        subscribe                                N      reactor.core.publisher.FluxDefer:46
        subscribe                                N      reactor.core.publisher.Flux:8773
        onNext                                   N      reactor.core.publisher.FluxFlatMap$FlatMapMain:427
        drainAsync                               N      reactor.core.publisher.FluxFlattenIterable$FlattenIterableSubscriber:453
        drain                                    N      reactor.core.publisher.FluxFlattenIterable$FlattenIterableSubscriber:724
        onNext                                   N      reactor.core.publisher.FluxFlattenIterable$FlattenIterableSubscriber:256
        drainFused                               N      reactor.core.publisher.SinkManyUnicast:319
        drain                                    N      reactor.core.publisher.SinkManyUnicast:362
        tryEmitNext                              N      reactor.core.publisher.SinkManyUnicast:237
        tryEmitNext                              N      reactor.core.publisher.SinkManySerialized:100
        processInOrder                           N      com.linbit.linstor.netcom.TcpConnectorPeer:419
        doProcessMessage                         N      com.linbit.linstor.proto.CommonMessageProcessor:227
        lambda$processMessage$2                  N      com.linbit.linstor.proto.CommonMessageProcessor:164
        onNext                                   N      reactor.core.publisher.FluxPeek$PeekSubscriber:185
        runAsync                                 N      reactor.core.publisher.FluxPublishOn$PublishOnSubscriber:440
        run                                      N      reactor.core.publisher.FluxPublishOn$PublishOnSubscriber:527
        call                                     N      reactor.core.scheduler.WorkerTask:84
        call                                     N      reactor.core.scheduler.WorkerTask:37
        run                                      N      java.util.concurrent.FutureTask:264
        run                                      N      java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask:304
        runWorker                                N      java.util.concurrent.ThreadPoolExecutor:1128
        run                                      N      java.util.concurrent.ThreadPoolExecutor$Worker:628
        run                                      N      java.lang.Thread:829

    END OF ERROR REPORT.

Info on the source cluster

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 r l | grep -e "pvc-086a5817-d813-41fe-86d8-3fac2ae2028f"
    | pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | ovbh-pprod-xen10          | 7117 | Unused | Ok | UpToDate | 2023-05-31 14:42:09 |
    | pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | ovbh-pprod-xen12          | 7117 | Unused | Ok | UpToDate | 2023-05-31 14:42:09 |
    | pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | ovbh-pprod-xen13          | 7117 | Unused | Ok | UpToDate | 2023-05-31 14:42:07 |
    | pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | ovbh-vtest-k8s02-worker01 | 7117 | InUse  | Ok | Diskless | 2024-08-09 11:31:25 |
    | pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | ovbh-vtest-k8s02-worker03 | 7117 | Unused | Ok | Diskless | 2024-06-13 14:15:57 |

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 rd l | grep -e "pvc-086a5817-d813-41fe-86d8-3fac2ae2028f"
    | pvc-086a5817-d813-41fe-86d8-3fac2ae2028f | 7117 | sc-74e1434b-b435-587e-9dea-fa067deec898 | ok |

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 rg l
    ╭───────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
    ┊ ResourceGroup                           ┊ SelectFilter                                     ┊ VlmNrs ┊ Description ┊
    ╞═══════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡
    ┊ DfltRscGrp                              ┊ PlaceCount: 2                                    ┊        ┊             ┊
    ╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
    ┊ sc-74e1434b-b435-587e-9dea-fa067deec898 ┊ PlaceCount: 3                                    ┊ 0      ┊             ┊
    ┊                                         ┊ DisklessOnRemaining: True                        ┊        ┊             ┊
    ┊                                         ┊ LayerStack: ['DRBD', 'STORAGE']                  ┊        ┊             ┊
    ╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
    ┊ sc-b066e430-6206-5588-a490-cc91ecef53d6 ┊ PlaceCount: 1                                    ┊ 0      ┊             ┊
    ┊                                         ┊ DisklessOnRemaining: True                        ┊        ┊             ┊
    ┊                                         ┊ LayerStack: ['DRBD', 'STORAGE']                  ┊        ┊             ┊
    ╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
    ┊ xcp-sr-linstor_group_thin_device        ┊ PlaceCount: 3                                    ┊ 0      ┊             ┊
    ┊                                         ┊ StoragePool(s): xcp-sr-linstor_group_thin_device ┊        ┊             ┊
    ┊                                         ┊ DisklessOnRemaining: True                        ┊        ┊             ┊
    ┊                                         ┊ LayerStack: ['DRBD', 'STORAGE']                  ┊        ┊             ┊
    ╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 sp l
    ╭───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
    ┊ StoragePool                      ┊ Node                      ┊ Driver   ┊ PoolName                  ┊ FreeCapacity ┊ TotalCapacity ┊ CanSnapshots ┊ State ┊ SharedName                                        ┊
    ╞═══════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡
    ┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen10          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen10;DfltDisklessStorPool             ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen11          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen11;DfltDisklessStorPool             ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen12          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen12;DfltDisklessStorPool             ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen13          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen13;DfltDisklessStorPool             ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vprod-k8s04-worker01 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-k8s04-worker01;DfltDisklessStorPool    ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vprod-k8s04-worker02 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-k8s04-worker02;DfltDisklessStorPool    ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vprod-k8s04-worker03 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-k8s04-worker03;DfltDisklessStorPool    ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vprod-k8s04-worker07 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-k8s04-worker07;DfltDisklessStorPool    ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s02-worker01 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s02-worker01;DfltDisklessStorPool    ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s02-worker02 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s02-worker02;DfltDisklessStorPool    ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s02-worker03 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s02-worker03;DfltDisklessStorPool    ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s02-worker04 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s02-worker04;DfltDisklessStorPool    ┊
    ┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen10          ┊ LVM_THIN ┊ linstor_group/thin_device ┊     2.48 TiB ┊      3.49 TiB ┊ True         ┊ Ok    ┊ ovbh-pprod-xen10;xcp-sr-linstor_group_thin_device ┊
    ┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen11          ┊ LVM_THIN ┊ linstor_group/thin_device ┊     2.42 TiB ┊      3.49 TiB ┊ True         ┊ Ok    ┊ ovbh-pprod-xen11;xcp-sr-linstor_group_thin_device ┊
    ┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen12          ┊ LVM_THIN ┊ linstor_group/thin_device ┊     2.83 TiB ┊      3.49 TiB ┊ True         ┊ Ok    ┊ ovbh-pprod-xen12;xcp-sr-linstor_group_thin_device ┊
    ┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen13          ┊ LVM_THIN ┊ linstor_group/thin_device ┊     4.12 TiB ┊      4.99 TiB ┊ True         ┊ Ok    ┊ ovbh-pprod-xen13;xcp-sr-linstor_group_thin_device ┊
    ╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

On the new cluster

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 rg l
    ╭───────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
    ┊ ResourceGroup                           ┊ SelectFilter                                     ┊ VlmNrs ┊ Description ┊
    ╞═══════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡
    ┊ DfltRscGrp                              ┊ PlaceCount: 2                                    ┊        ┊             ┊
    ╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
    ┊ sc-74e1434b-b435-587e-9dea-fa067deec898 ┊ PlaceCount: 3                                    ┊ 0      ┊             ┊
    ┊                                         ┊ DisklessOnRemaining: True                        ┊        ┊             ┊
    ┊                                         ┊ LayerStack: ['DRBD', 'STORAGE']                  ┊        ┊             ┊
    ╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
    ┊ xcp-ha-linstor_group_thin_device        ┊ PlaceCount: 3                                    ┊ 0      ┊             ┊
    ┊                                         ┊ StoragePool(s): xcp-sr-linstor_group_thin_device ┊        ┊             ┊
    ┊                                         ┊ DisklessOnRemaining: False                       ┊        ┊             ┊
    ╞┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄┄╡
    ┊ xcp-sr-linstor_group_thin_device        ┊ PlaceCount: 3                                    ┊ 0      ┊             ┊
    ┊                                         ┊ StoragePool(s): xcp-sr-linstor_group_thin_device ┊        ┊             ┊
    ┊                                         ┊ DisklessOnRemaining: False                       ┊        ┊             ┊
    ╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 sp l
    ╭───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
    ┊ StoragePool                      ┊ Node                      ┊ Driver   ┊ PoolName                  ┊ FreeCapacity ┊ TotalCapacity ┊ CanSnapshots ┊ State ┊ SharedName                                        ┊
    ╞═══════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡
    ┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen01          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen01;DfltDisklessStorPool             ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen02          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen02;DfltDisklessStorPool             ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-pprod-xen03          ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-pprod-xen03;DfltDisklessStorPool             ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vprod-rancher01      ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-rancher01;DfltDisklessStorPool         ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vprod-rancher02      ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-rancher02;DfltDisklessStorPool         ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vprod-rancher03      ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vprod-rancher03;DfltDisklessStorPool         ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s01-worker01 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s01-worker01;DfltDisklessStorPool    ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s01-worker02 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s01-worker02;DfltDisklessStorPool    ┊
    ┊ DfltDisklessStorPool             ┊ ovbh-vtest-k8s01-worker03 ┊ DISKLESS ┊                           ┊              ┊               ┊ False        ┊ Ok    ┊ ovbh-vtest-k8s01-worker03;DfltDisklessStorPool    ┊
    ┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen01          ┊ LVM_THIN ┊ linstor_group/thin_device ┊    13.75 TiB ┊     13.97 TiB ┊ True         ┊ Ok    ┊ ovbh-pprod-xen01;xcp-sr-linstor_group_thin_device ┊
    ┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen02          ┊ LVM_THIN ┊ linstor_group/thin_device ┊    13.75 TiB ┊     13.97 TiB ┊ True         ┊ Ok    ┊ ovbh-pprod-xen02;xcp-sr-linstor_group_thin_device ┊
    ┊ xcp-sr-linstor_group_thin_device ┊ ovbh-pprod-xen03          ┊ LVM_THIN ┊ linstor_group/thin_device ┊    13.75 TiB ┊     13.97 TiB ┊ True         ┊ Ok    ┊ ovbh-pprod-xen03;xcp-sr-linstor_group_thin_device ┊
    ╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

-

Turns out I did not have `SOCAT` on the new linstor cluster, and that was why I was getting that error message.
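Since a missing tool was the cause here, a quick check that can be run on each satellite node before trying to ship anything might look like the sketch below. `missing_tools` is a hypothetical helper name; the tool names (`socat`, `thin_send`, `thin_recv`) are the ones that come up in this thread, and the check only looks at `PATH`, which may not be exactly how LINSTOR itself detects them.

```bash
#!/usr/bin/env bash

# Print each of the given tool names that cannot be found in PATH.
# Returns 0 if all tools are present, 1 if at least one is missing.
missing_tools() {
    local tool rc=0
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            printf '%s\n' "$tool"
            rc=1
        fi
    done
    return "$rc"
}

# Tool names taken from this thread; run this on every satellite node.
if missing="$(missing_tools socat thin_send thin_recv)"; then
    echo "all shipping tools found"
else
    echo "missing:" $missing
fi
```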

I am able to run the command

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 backup create linbit-velero-preprod-backup pvc-086a5817-d813-41fe-86d8-3fac2ae2028f
    SUCCESS: Suspended IO of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-vtest-k8s02-worker01' for snapshot
    SUCCESS: Suspended IO of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-vtest-k8s02-worker03' for snapshot
    SUCCESS: Suspended IO of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-pprod-xen13' for snapshot
    SUCCESS: Suspended IO of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-pprod-xen12' for snapshot
    SUCCESS: Suspended IO of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-pprod-xen10' for snapshot
    SUCCESS: Took snapshot of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-vtest-k8s02-worker01'
    SUCCESS: Took snapshot of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-vtest-k8s02-worker03'
    SUCCESS: Took snapshot of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-pprod-xen13'
    SUCCESS: Took snapshot of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-pprod-xen12'
    SUCCESS: Took snapshot of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-pprod-xen10'
    SUCCESS: Resumed IO of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-vtest-k8s02-worker01' after snapshot
    SUCCESS: Resumed IO of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-vtest-k8s02-worker03' after snapshot
    SUCCESS: Resumed IO of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-pprod-xen13' after snapshot
    SUCCESS: Resumed IO of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-pprod-xen12' after snapshot
    SUCCESS: Resumed IO of '[pvc-086a5817-d813-41fe-86d8-3fac2ae2028f]' on 'ovbh-pprod-xen10' after snapshot
    INFO: Generated snapshot name for backup of resourcepvc-086a5817-d813-41fe-86d8-3fac2ae2028f to remote linbit-velero-preprod-backup
    INFO: Shipping of resource pvc-086a5817-d813-41fe-86d8-3fac2ae2028f to remote linbit-velero-preprod-backup in progress.
    SUCCESS: Started shipping of resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f'
    SUCCESS: Started shipping of resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f'
    SUCCESS: Started shipping of resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f'

But over an hour later it has still not finished.

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 s l
    ╭──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
    ┊ ResourceName                             ┊ SnapshotName                  ┊ NodeNames        ┊ Volumes  ┊ CreatedOn           ┊ State     ┊
    ╞══════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡
    ┊ pvc-086a5817-d813-41fe-86d8-3fac2ae2028f ┊ back_20241009_161658_5ttp634a ┊ ovbh-pprod-xen01 ┊ 0: 8 GiB ┊ 2024-10-09 13:17:02 ┊ Restoring ┊
    ╰──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.10 rd l
    ╭─────────────────────────────────────────────────────────────────────────╮
    ┊ ResourceName                             ┊ Port ┊ ResourceGroup ┊ State ┊
    ╞═════════════════════════════════════════════════════════════════════════╡
    ┊ pvc-086a5817-d813-41fe-86d8-3fac2ae2028f ┊      ┊ DfltRscGrp    ┊ ok    ┊
    ╰─────────────────────────────────────────────────────────────────────────╯

Seems like it might be the same issue as S3.

    2024_10_09 16:17:00.885 [MainWorkerPool-11] INFO LINSTOR/Satellite - SYSTEM - Snapshot 'back_20241009_161658' of resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f' registered.
    2024_10_09 16:17:00.886 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Aligning /dev/linstor_group/pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000 size from 8390440 KiB to 8392704 KiB to be a multiple of extent size 4096 KiB (from Storage Pool)
    2024_10_09 16:17:01.034 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f' [DRBD] adjusted.
    2024_10_09 16:17:01.262 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - End DeviceManager cycle 96
    2024_10_09 16:17:01.262 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Begin DeviceManager cycle 97
    2024_10_09 16:17:01.301 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - End DeviceManager cycle 97
    2024_10_09 16:17:01.301 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Begin DeviceManager cycle 98
    2024_10_09 16:17:02.765 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - End DeviceManager cycle 98
    2024_10_09 16:17:02.766 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Begin DeviceManager cycle 99
    2024_10_09 16:17:02.774 [MainWorkerPool-1] INFO LINSTOR/Satellite - SYSTEM - Snapshot 'back_20241009_161658' of resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f' registered.
    2024_10_09 16:17:02.774 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Aligning /dev/linstor_group/pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000 size from 8390440 KiB to 8392704 KiB to be a multiple of extent size 4096 KiB (from Storage Pool)
    2024_10_09 16:17:03.012 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f' [DRBD] adjusted.
    2024_10_09 16:17:03.037 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: 2024/10/09 16:17:03 socat[23463] E connect(5, AF=2 10.2.0.10:12012, 16): No route to host
    2024_10_09 16:17:03.092 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 40960 bytes remaining
    2024_10_09 16:17:03.094 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 40960 bytes remaining
    2024_10_09 16:17:03.095 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 94208 bytes remaining
    2024_10_09 16:17:03.095 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 57856 bytes remaining
    2024_10_09 16:17:03.099 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 94208 bytes remaining
    2024_10_09 16:17:03.100 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 57856 bytes remaining
    2024_10_09 16:17:03.109 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 82432 bytes remaining
    2024_10_09 16:17:03.248 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 40960 bytes remaining
    2024_10_09 16:17:03.249 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 40960 bytes remaining
    2024_10_09 16:17:03.250 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 94208 bytes remaining
    2024_10_09 16:17:03.251 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 57856 bytes remaining
    2024_10_09 16:17:03.254 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 94208 bytes remaining
    2024_10_09 16:17:03.256 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 57856 bytes remaining
    2024_10_09 16:17:03.266 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: Device read short 82432 bytes remaining
    2024_10_09 16:17:03.282 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - End DeviceManager cycle 99
    2024_10_09 16:17:03.282 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Begin DeviceManager cycle 100
    2024_10_09 16:17:03.288 [MainWorkerPool-3] INFO LINSTOR/Satellite - SYSTEM - Snapshot 'back_20241009_161658' of resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f' registered.
    2024_10_09 16:17:03.289 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Aligning /dev/linstor_group/pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000 size from 8390440 KiB to 8392704 KiB to be a multiple of extent size 4096 KiB (from Storage Pool)
    2024_10_09 16:17:03.421 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f' [DRBD] adjusted.
    2024_10_09 16:17:03.644 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - End DeviceManager cycle 100
    2024_10_09 16:17:03.644 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Begin DeviceManager cycle 101
    2024_10_09 16:17:03.674 [MainWorkerPool-5] INFO LINSTOR/Satellite - SYSTEM - Snapshot 'back_20241009_161658' of resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f' registered.
    2024_10_09 16:17:03.674 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Aligning /dev/linstor_group/pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000 size from 8390440 KiB to 8392704 KiB to be a multiple of extent size 4096 KiB (from Storage Pool)
    2024_10_09 16:17:03.807 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f' [DRBD] adjusted.
    2024_10_09 16:17:04.031 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - End DeviceManager cycle 101
    2024_10_09 16:17:04.031 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Begin DeviceManager cycle 102
    2024_10_09 16:47:03.682 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - End DeviceManager cycle 102
    2024_10_09 16:47:03.682 [DeviceManager] INFO LINSTOR/Satellite - SYSTEM - Begin DeviceManager cycle 103

Full log: linstor-satellite.txt

No Error Report on either cluster
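For picking the relevant lines out of a long satellite log like the one above, here is a small sketch. The function names are hypothetical, and the patterns only assume the log wording shown in this post (`socat[...] ... No route to host` and `Device read short`).

```bash
#!/usr/bin/env bash

# Print the unique IP:port targets that socat reported as unreachable
# ("No route to host") in a satellite log read from stdin.
socat_unreachable_targets() {
    grep -F 'socat[' \
        | grep -F 'No route to host' \
        | grep -Eo '[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+:[0-9]+' \
        | sort -u
}

# Count the "Device read short" warnings in a satellite log read from stdin.
count_short_reads() {
    grep -c 'Device read short'
}

# One line copied from the log above, used here as sample input.
sample="2024_10_09 16:17:03.037 [pvc-086a5817-d813-41fe-86d8-3fac2ae2028f_00000_back_20241009_161658] WARN LINSTOR/Satellite - SYSTEM - stdErr: 2024/10/09 16:17:03 socat[23463] E connect(5, AF=2 10.2.0.10:12012, 16): No route to host"
printf '%s\n' "$sample" | socat_unreachable_targets
```

On a real node the input would be the satellite log file instead of the sample line. For the log above, the only target reported is `10.2.0.10:12012`.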

-

I am curious if anyone else can replicate this, as it is just attempting to move a resource between two xostor clusters? If it is just me I can continue troubleshooting, otherwise it would be nice to know it is an exercise in futility.

But I am well aware that the release a few days ago has everyone swamped and this can wait, would just be awesome to know as it would change migration plans.

https://linbit.com/drbd-user-guide/linstor-guide-1_0-en/#s-linstor-snapshots-shipping

thin-send-recv is needed to ship data when using LVM thin-provisioned volumes

Yeah this seems to be for any type of shipping, s3 or otherwise.

-

So, did more testing. Looks like thin_send_recv is not the problem, but maybe socat.

I am able to manually migrate resources between XOSTOR (LINSTOR) clusters using thin_send_recv. I have included all steps below so that it can be replicated.

And we know socat is used, because it complains if it is not there.

    jonathon@jonathon-framework:~$ linstor --controller 10.2.0.19 backup ship newCluster pvc-086a5817-d813-41fe-86d8-3fac2ae2028f pvc-086a5817-d813-41fe-86d8-3fac2ae2028f
    INFO: Cannot use node 'ovbh-pprod-xen10' as it does not support the tool(s): SOCAT
    INFO: Cannot use node 'ovbh-pprod-xen12' as it does not support the tool(s): SOCAT
    INFO: Cannot use node 'ovbh-pprod-xen13' as it does not support the tool(s): SOCAT
    ERROR: Backup shipping of resource 'pvc-086a5817-d813-41fe-86d8-3fac2ae2028f' cannot be started since there is no node available that supports backup shipping.

Using 1.0.1 thin_send_recv.

    [16:16 ovbh-pprod-xen11 ~]# thin_send --version
    1.0.1
    [16:16 ovbh-pprod-xen01 ~]# thin_recv --version
    1.0.1

Versions of socat match.

    [16:16 ovbh-pprod-xen11 ~]# socat -V
    socat by Gerhard Rieger and contributors - see www.dest-unreach.org
    socat version 1.7.3.2 on Aug 4 2017 04:57:10
    running on Linux version #1 SMP Tue Jan 23 14:12:55 CET 2024, release 4.19.0+1, machine x86_64
    features:
    #define WITH_STDIO 1
    #define WITH_FDNUM 1
    #define WITH_FILE 1
    #define WITH_CREAT 1
    #define WITH_GOPEN 1
    #define WITH_TERMIOS 1
    #define WITH_PIPE 1
    #define WITH_UNIX 1
    #define WITH_ABSTRACT_UNIXSOCKET 1
    #define WITH_IP4 1
    #define WITH_IP6 1
    #define WITH_RAWIP 1
    #define WITH_GENERICSOCKET 1
    #define WITH_INTERFACE 1
    #define WITH_TCP 1
    #define WITH_UDP 1
    #define WITH_SCTP 1
    #define WITH_LISTEN 1
    #define WITH_SOCKS4 1
    #define WITH_SOCKS4A 1
    #define WITH_PROXY 1
    #define WITH_SYSTEM 1
    #define WITH_EXEC 1
    #define WITH_READLINE 1
    #define WITH_TUN 1
    #define WITH_PTY 1
    #define WITH_OPENSSL 1
    #undef WITH_FIPS
    #define WITH_LIBWRAP 1
    #define WITH_SYCLS 1
    #define WITH_FILAN 1
    #define WITH_RETRY 1
    #define WITH_MSGLEVEL 0 /*debug*/
    ...
    [16:17 ovbh-pprod-xen01 ~]# socat -V
    socat by Gerhard Rieger and contributors - see www.dest-unreach.org
    socat version 1.7.3.2 on Aug 4 2017 04:57:10
    running on Linux version #1 SMP Tue Jan 23 14:12:55 CET 2024, release 4.19.0+1, machine x86_64
    features:
    #define WITH_STDIO 1
    #define WITH_FDNUM 1
    #define WITH_FILE 1
    #define WITH_CREAT 1
    #define WITH_GOPEN 1
    #define WITH_TERMIOS 1
    #define WITH_PIPE 1
    #define WITH_UNIX 1
    #define WITH_ABSTRACT_UNIXSOCKET 1
    #define WITH_IP4 1
    #define WITH_IP6 1
    #define WITH_RAWIP 1
    #define WITH_GENERICSOCKET 1
    #define WITH_INTERFACE 1
    #define WITH_TCP 1
    #define WITH_UDP 1
    #define WITH_SCTP 1
    #define WITH_LISTEN 1
    #define WITH_SOCKS4 1
    #define WITH_SOCKS4A 1
    #define WITH_PROXY 1
    #define WITH_SYSTEM 1
    #define WITH_EXEC 1
    #define WITH_READLINE 1
    #define WITH_TUN 1
    #define WITH_PTY 1
    #define WITH_OPENSSL 1
    #undef WITH_FIPS
    #define WITH_LIBWRAP 1
    #define WITH_SYCLS 1
    #define WITH_FILAN 1
    #define WITH_RETRY 1
    #define WITH_MSGLEVEL 0 /*debug*/

Migrating using only thin_send_recv works.

-

The reason it may be socat is that the commands fail when I try using it as instructed by https://github.com/LINBIT/thin-send-recv

    [13:03 ovbh-pprod-xen11 ~]# thin_send linstor_group/pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000_s_test 2>/dev/null | zstd | socat STDIN TCP:10.2.0.10:4321
    2024/10/28 13:04:59 socat[25701] E write(5, 0x55da36101da0, 8192): Broken pipe
    ...
    [13:03 ovbh-pprod-xen01 ~]# socat TCP-LISTEN:4321 STDOUT | zstd -d | thin_recv linstor_group/pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000 2>/dev/null
    2024/10/28 13:04:59 socat[27039] E read(1, 0x560ef6ff4350, 8192): Bad file descriptor

And the same thing happens if I exclude zstd from both commands.

-

Hi!

I'm looking for a new storage cluster for XCP-ng, because Ceph RBD performance is very poor.

The main question now: is it possible to build an XOSTOR (LINSTOR) cluster separately from XCP-ng and connect it over Ethernet?

There is no information about such a scenario in this article.

So I would like to have a "compute" cluster of XCP-ng nodes with fast local NVMe disks, plus a dedicated storage cluster with a large number of HDDs connected via Ethernet.

And the second question is about scaling.

How could this storage cluster be scaled? Is it possible to add storage nodes online without interrupting clients (VMs)?

Thank you

-

LINSTOR can be reached over NFS or iSCSI, if I remember correctly. Your question is more for LINSTOR than for XCP-ng.

-

@olivierlambert NFS and iSCSI have a single point of failure. Yes, it is possible to deploy multipath iSCSI, but it is too complicated. I like Ceph RBD because it does not have a single point of failure.

So I'm looking for something similar.

From my point of view XOSTOR is a good idea, but in some cases there is no need to use all nodes as XCP-ng hosts. For example, you do not need a large amount of RAM and a fast modern CPU for storage cluster nodes.

I think the best solution in my case would be to deploy the XOSTOR controller in the XCP-ng cluster, connected to a separate storage cluster.

At first glance, I assume that it should be possible to connect the storage cluster to XCP-ng with this command:

    linstor resource create node1 test1 --diskless

So the basic idea is to use XCP-ng nodes for linstor-controller/linstor-satellite and "storage" nodes as linstor-satellite only.
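
To make the idea a bit more concrete, here is a dry-run sketch that only prints the command above once per compute node, without executing anything. The node and resource names are placeholders, `print_diskless_cmds` is a hypothetical helper, and whether XOSTOR supports this layout at all is exactly the open question.

```shell
#!/bin/sh
# Dry run: print (do not execute) one diskless "resource create" per compute node.
# Usage: print_diskless_cmds RESOURCE NODE [NODE...]
print_diskless_cmds() {
    resource=$1
    shift
    for node in "$@"; do
        echo "linstor resource create $node $resource --diskless"
    done
}

# Placeholder names, following the example command above.
print_diskless_cmds test1 node1 node2
```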

-

@splastunov / all.

Just on multipath iSCSI. I had this running for years with separate switches running the 'A side' and 'B side' multipath networks between the iSCSI DotHill's resilient and redundant controllers.

Once you have it configured, it is incredibly solid for what is a very budget solution. It just takes a bit of careful planning up front to make sure you wire up in a way that is properly resilient. Plus reliable monitoring to make sure that you notice when one of the resilient elements fails.

We had a number of switch power supply failures over the 10 year period that were completely transparent to the services running on the connected XCP-NG and XenServer hosts.

Similarly we were able to do a full DotHill controller replacement without any downtime after one of the two controllers failed.

We also replaced the hypervisor hardware a number of times over the lifetime of the platform.

-

@Mark-C Thank you!

Could you please tell me how you scale your storage with iSCSI? What hardware/software are you using for storage? Is it possible to add storage nodes on the fly, or do you have to deploy a new storage cluster every time you grow?

-

@splastunov The DotHill certainly wasn't great for scaling out, because of the age of the firmware and the old-school treatment of the disk arrays.

We did deploy several disk expansion trays that were part populated at purchase as some level of expansion, and operated most of the array as a single underlying pool, which was then split up into iSCSI presented volumes.

So the limitations of the DotHill controllers and firmware we were using didn't address the storage pool scaling issue that Ceph / GPFS / et al. are designed to solve.

However, some of the newer filer heads that treat their entire available disk array as a consolidated pool and support iSCSI would have that scalability, whilst keeping the hypervisor side relatively straight-forward.

Ceph et al. are also in a much more mature place than they were back in the early 2010s, so if I were doing it again, maybe we would go for a solution architecture based around that. Or XOSTOR, of course!

My intent was to present an iSCSI setup instance as something we found relatively bomb-proof, and post-initial configuration, relatively easy to scale. We provisioned 24 port A and B side network switches with jumbo frame support to give us the scalability to add further pools of hypervisors or storage, as required.

-

The typical hypervisor node was provisioned with at least 4 network ports, but most had 8 to allow trunking/resilience for every connection:

Management + live migration (Isolated)

World (Trunk)

iSCSI-A

iSCSI-B

The DotHills were 4 ports per controller, and the two controllers were configured as:

Management

iSCSI-A

iSCSI-B

Spare

The iSCSI-A and iSCSI-B switches were simple web-managed ProCurves with 24 ports each.

We had this scaled to 12 hypervisor nodes in 3 pools, all running off the same underlying DotHill (dual redundant controller) hardware. We had provisioned some local SSD storage in each hypervisor for any nodes that were struggling for IOPS, but ultimately never needed that.

-

OK we have debugged and improved this process, so including it here if it helps anyone else.

How to migrate resources between XOSTOR (linstor) clusters. This also works with piraeus-operator, which we use for k8s.

Manually moving LINSTOR resources with thin_send_recv

Migration of data

Commands

    # PV: pvc-6408a214-6def-44c4-8d9a-bebb67be5510
    # snapshot: pgdata-snapshot
    # size: 10741612544B

    #get size
    lvs --noheadings --units B -o lv_size linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000

    #prep
    lvcreate -V 10741612544B --thinpool linstor_group/thin_device -n pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000 linstor_group

    #create snapshot
    linstor --controller original-xostor-server s create pvc-6408a214-6def-44c4-8d9a-bebb67be5510 pgdata-snapshot

    #send
    thin_send linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000_pgdata-snapshot 2>/dev/null | ssh root@new-xostor-server-01 thin_recv linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000 2>/dev/null

Walk-through

Prep migration

    [13:29 original-xostor-server ~]# lvs --noheadings --units B -o lv_size linstor_group/pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000
      26851934208B
    [13:53 new-xostor-server-01 ~]# lvcreate -V 26851934208B --thinpool linstor_group/thin_device -n pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000 linstor_group
      Logical volume "pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000" created.

Create snapshot

    15:35:03] jonathon@jonathon-framework:~$ linstor --controller original-xostor-server s create pvc-12aca72c-d94a-4c09-8102-0a6646906f8d s_test
    SUCCESS:
    Description: New snapshot 's_test' of resource 'pvc-12aca72c-d94a-4c09-8102-0a6646906f8d' registered.
    Details: Snapshot 's_test' of resource 'pvc-12aca72c-d94a-4c09-8102-0a6646906f8d' UUID is: 3a07d2fd-6dc3-4994-b13f-8c3a2bb206b8
    SUCCESS: Suspended IO of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'ovbh-vprod-k8s04-worker02' for snapshot
    SUCCESS: Suspended IO of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'original-xostor-server' for snapshot
    SUCCESS: Took snapshot of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'ovbh-vprod-k8s04-worker02'
    SUCCESS: Took snapshot of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'original-xostor-server'
    SUCCESS: Resumed IO of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'ovbh-vprod-k8s04-worker02' after snapshot
    SUCCESS: Resumed IO of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'original-xostor-server' after snapshot

Migration

    [13:53 original-xostor-server ~]# thin_send /dev/linstor_group/pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000_s_test 2>/dev/null | ssh root@new-xostor-server-01 thin_recv linstor_group/pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000 2>/dev/null

Errors (stderr) need to be discarded with 2>/dev/null on both ends of the command, or it will fail.

This is the same setup process for replica-1 or replica-3. For replica-3 you can target new-xostor-server-01 each time; for replica-1, be sure to spread the volumes out across the nodes properly.
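
The steps above can be collected into a small dry-run sketch that derives the LV names from the PV and snapshot names and prints the commands instead of running them. The function names are made up for illustration; the `_00000` volume suffix, the `linstor_group` volume group and the `thin_device` thin pool are taken from the commands in this post and may differ on other setups.

```shell
#!/bin/sh
VG=linstor_group     # volume group used in this post
POOL=thin_device     # thin pool used in this post

# LV backing volume 0 of a LINSTOR resource, e.g. pvc-..._00000
lv_name() { echo "${1}_00000"; }

# LV backing a snapshot of that volume, e.g. pvc-..._00000_s_test
snap_lv_name() { echo "$(lv_name "$1")_$2"; }

# print_migration PV SNAPSHOT SIZE_BYTES SRC_CONTROLLER DEST_HOST
# Prints the commands only; nothing is executed.
print_migration() {
    pv=$1 snap=$2 size=$3 src=$4 dest=$5
    # Run on the destination node: create an empty thin LV of the same size.
    echo "lvcreate -V ${size}B --thinpool $VG/$POOL -n $(lv_name "$pv") $VG"
    # Run from a LINSTOR client: snapshot the resource on the source cluster.
    echo "linstor --controller $src s create $pv $snap"
    # Run on the source node: stream the snapshot, discarding stderr on both ends.
    echo "thin_send $VG/$(snap_lv_name "$pv" "$snap") 2>/dev/null | ssh root@$dest thin_recv $VG/$(lv_name "$pv") 2>/dev/null"
}

print_migration pvc-12aca72c-d94a-4c09-8102-0a6646906f8d s_test 26851934208 \
    original-xostor-server new-xostor-server-01
```

The size argument is the byte count reported by `lvs --units B`, without the trailing `B`.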

Replica-3 Setup

Explanation

After the thin_send to new-xostor-server-01, you will need to run commands to force a sync of the data to the replicas.

Commands

    # PV: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    # snapshot: snipeit-snapshot
    # size: 21483225088B

    #get size
    lvs --noheadings --units B -o lv_size linstor_group/pvc-96cbebbe-f827-4a47-ae95-38b078e0d584_00000

    #prep
    lvcreate -V 21483225088B --thinpool linstor_group/thin_device -n pvc-96cbebbe-f827-4a47-ae95-38b078e0d584_00000 linstor_group

    #create snapshot
    linstor --controller original-xostor-server s create pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 snipeit-snapshot
    linstor --controller original-xostor-server s l | grep -e 'snipeit-snapshot'

    #send
    thin_send linstor_group/pvc-96cbebbe-f827-4a47-ae95-38b078e0d584_00000_snipeit-snapshot 2>/dev/null | ssh root@new-xostor-server-01 thin_recv linstor_group/pvc-96cbebbe-f827-4a47-ae95-38b078e0d584_00000 2>/dev/null

    #linstor setup
    linstor --controller new-xostor-server-01 resource-definition create pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 --resource-group sc-74e1434b-b435-587e-9dea-fa067deec898
    linstor --controller new-xostor-server-01 volume-definition create pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 21483225088B --storage-pool xcp-sr-linstor_group_thin_device
    linstor --controller new-xostor-server-01 resource create --storage-pool xcp-sr-linstor_group_thin_device --providers LVM_THIN new-xostor-server-01 pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    linstor --controller new-xostor-server-01 resource create --auto-place +1 pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

    #Run the following on the node with the data. This is the preferred command
    drbdadm invalidate-remote pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

    #Run the following on the node without the data. This is just for reference
    drbdadm invalidate pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

    linstor --controller new-xostor-server-01 r l | grep -e 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584'

    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
      annotations:
        pv.kubernetes.io/provisioned-by: linstor.csi.linbit.com
      finalizers:
        - external-provisioner.volume.kubernetes.io/finalizer
        - kubernetes.io/pv-protection
        - external-attacher/linstor-csi-linbit-com
    spec:
      accessModes:
        - ReadWriteOnce
      capacity:
        storage: 20Gi # Ensure this matches the actual size of the LINSTOR volume
      persistentVolumeReclaimPolicy: Retain
      storageClassName: linstor-replica-three # Adjust to the storage class you want to use
      volumeMode: Filesystem
      csi:
        driver: linstor.csi.linbit.com
        fsType: ext4
        volumeHandle: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
        volumeAttributes:
          linstor.csi.linbit.com/mount-options: ''
          linstor.csi.linbit.com/post-mount-xfs-opts: ''
          linstor.csi.linbit.com/uses-volume-context: 'true'
          linstor.csi.linbit.com/remote-access-policy: 'true'
    ---
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      annotations:
        pv.kubernetes.io/bind-completed: 'yes'
        pv.kubernetes.io/bound-by-controller: 'yes'
        volume.beta.kubernetes.io/storage-provisioner: linstor.csi.linbit.com
        volume.kubernetes.io/storage-provisioner: linstor.csi.linbit.com
      finalizers:
        - kubernetes.io/pvc-protection
      name: pp-snipeit-pvc
      namespace: snipe-it
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 20Gi
      storageClassName: linstor-replica-three
      volumeMode: Filesystem
      volumeName: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

Walk-through

    jonathon@jonathon-framework:~$ linstor --controller new-xostor-server-01 resource-definition create pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 --resource-group sc-74e1434b-b435-587e-9dea-fa067deec898
    SUCCESS:
    Description: New resource definition 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' created.
    Details: Resource definition 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' UUID is: 772692e2-3fca-4069-92e9-2bef22c68a6f

    jonathon@jonathon-framework:~$ linstor --controller new-xostor-server-01 volume-definition create pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 21483225088B --storage-pool xcp-sr-linstor_group_thin_device
    SUCCESS: Successfully set property key(s): StorPoolName
    SUCCESS: New volume definition with number '0' of resource definition 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' created.

    jonathon@jonathon-framework:~$ linstor --controller new-xostor-server-01 resource create --storage-pool xcp-sr-linstor_group_thin_device --providers LVM_THIN new-xostor-server-01 pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    SUCCESS: Successfully set property key(s): StorPoolName
    INFO: Updated pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 DRBD auto verify algorithm to 'crct10dif-pclmul'
    SUCCESS:
    Description: New resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on node 'new-xostor-server-01' registered.
    Details: Resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on node 'new-xostor-server-01' UUID is: 3072aaae-4a34-453e-bdc6-facb47809b3d
    SUCCESS:
    Description: Volume with number '0' on resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on node 'new-xostor-server-01' successfully registered
    Details: Volume UUID is: 52b11ef6-ec50-42fb-8710-1d3f8c15c657
    SUCCESS: Created resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-01'

    jonathon@jonathon-framework:~$ linstor --controller new-xostor-server-01 resource create --auto-place +1 pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    SUCCESS: Successfully set property key(s): StorPoolName
    SUCCESS: Successfully set property key(s): StorPoolName
    SUCCESS:
    Description: Resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' successfully autoplaced on 2 nodes
    Details: Used nodes (storage pool name): 'new-xostor-server-02 (xcp-sr-linstor_group_thin_device)', 'new-xostor-server-03 (xcp-sr-linstor_group_thin_device)'
    INFO: Resource-definition property 'DrbdOptions/Resource/quorum' updated from 'off' to 'majority' by auto-quorum
    INFO: Resource-definition property 'DrbdOptions/Resource/on-no-quorum' updated from 'off' to 'suspend-io' by auto-quorum
    SUCCESS: Added peer(s) 'new-xostor-server-02', 'new-xostor-server-03' to resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-01'
    SUCCESS: Created resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-02'
    SUCCESS: Created resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-03'
    SUCCESS:
    Description: Resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-03' ready
    Details: Auto-placing resource: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    SUCCESS:
    Description: Resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-02' ready
    Details: Auto-placing resource: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

At this point

    jonathon@jonathon-framework:~$ linstor --controller new-xostor-server-01 v l | grep -e 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584'
    | new-xostor-server-01 | pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 | xcp-sr-linstor_group_thin_device | 0 | 1032 | /dev/drbd1032 | 9.20 GiB | Unused | UpToDate |
    | new-xostor-server-02 | pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 | xcp-sr-linstor_group_thin_device | 0 | 1032 | /dev/drbd1032 | 112.73 MiB | Unused | UpToDate |
    | new-xostor-server-03 | pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 | xcp-sr-linstor_group_thin_device | 0 | 1032 | /dev/drbd1032 | 112.73 MiB | Unused | UpToDate |

To force the sync, run the following command on the node with the data

    drbdadm invalidate-remote pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

This will kick it to get the data re-synced.

    [14:51 new-xostor-server-01 ~]# drbdadm invalidate-remote pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    [14:51 new-xostor-server-01 ~]# drbdadm status pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 role:Secondary
      disk:UpToDate
      new-xostor-server-02 role:Secondary
        replication:SyncSource peer-disk:Inconsistent done:1.14
      new-xostor-server-03 role:Secondary
        replication:SyncSource peer-disk:Inconsistent done:1.18

    [14:51 new-xostor-server-01 ~]# drbdadm status pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 role:Secondary
      disk:UpToDate
      new-xostor-server-02 role:Secondary
        peer-disk:UpToDate
      new-xostor-server-03 role:Secondary
        peer-disk:UpToDate

See: https://github.com/LINBIT/linstor-server/issues/389
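
To tell when the resync has finished without eyeballing the output, one option is to check the `peer-disk:` fields of `drbdadm status`. This is a minimal sketch, assuming the output format shown above; `peers_uptodate` is a hypothetical helper that reads the status text on stdin.

```shell
#!/bin/sh
# peers_uptodate: read `drbdadm status <resource>` output on stdin.
# Succeeds only if at least one peer-disk field is present and all of them
# report UpToDate; fails while any peer is still Inconsistent (or similar).
peers_uptodate() {
    fields=$(grep -o 'peer-disk:[A-Za-z]*')
    [ -n "$fields" ] || return 1
    ! printf '%s\n' "$fields" | grep -qv '^peer-disk:UpToDate$'
}

# Usage on the node holding the data (resource name from this post):
#   drbdadm status pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 | peers_uptodate && echo synced
```

It only looks at the peers, so the local `disk:` state still needs to be checked separately.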

Replica-1 Setup

    # PV: pvc-6408a214-6def-44c4-8d9a-bebb67be5510
    # snapshot: pgdata-snapshot
    # size: 10741612544B

    #get size
    lvs --noheadings --units B -o lv_size linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000

    #prep
    lvcreate -V 10741612544B --thinpool linstor_group/thin_device -n pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000 linstor_group

    #create snapshot
    linstor --controller original-xostor-server s create pvc-6408a214-6def-44c4-8d9a-bebb67be5510 pgdata-snapshot

    #send
    thin_send linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000_pgdata-snapshot 2>/dev/null | ssh root@new-xostor-server-01 thin_recv linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000 2>/dev/null

    # 1
    linstor --controller new-xostor-server-01 resource-definition create pvc-6408a214-6def-44c4-8d9a-bebb67be5510 --resource-group sc-b066e430-6206-5588-a490-cc91ecef53d6
    linstor --controller new-xostor-server-01 volume-definition create pvc-6408a214-6def-44c4-8d9a-bebb67be5510 10741612544B --storage-pool xcp-sr-linstor_group_thin_device
    linstor --controller new-xostor-server-01 resource create new-xostor-server-01 pvc-6408a214-6def-44c4-8d9a-bebb67be5510

    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pvc-6408a214-6def-44c4-8d9a-bebb67be5510
      annotations:
        pv.kubernetes.io/provisioned-by: linstor.csi.linbit.com
      finalizers:
        - external-provisioner.volume.kubernetes.io/finalizer
        - kubernetes.io/pv-protection
        - external-attacher/linstor-csi-linbit-com
    spec:
      accessModes:
        - ReadWriteOnce
      capacity:
        storage: 10Gi # Ensure this matches the actual size of the LINSTOR volume
      persistentVolumeReclaimPolicy: Retain
      storageClassName: linstor-replica-one-local # Adjust to the storage class you want to use
      volumeMode: Filesystem
      csi:
        driver: linstor.csi.linbit.com
        fsType: ext4
        volumeHandle: pvc-6408a214-6def-44c4-8d9a-bebb67be5510
        volumeAttributes:
          linstor.csi.linbit.com/mount-options: ''
          linstor.csi.linbit.com/post-mount-xfs-opts: ''
          linstor.csi.linbit.com/uses-volume-context: 'true'
          linstor.csi.linbit.com/remote-access-policy: |
            - fromSame:
              - xcp-ng/node
      nodeAffinity:
        required:
          nodeSelectorTerms:
            - matchExpressions:
                - key: xcp-ng/node
                  operator: In
                  values:
                    - new-xostor-server-01
    ---
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      annotations:
        pv.kubernetes.io/bind-completed: 'yes'
        pv.kubernetes.io/bound-by-controller: 'yes'
        volume.beta.kubernetes.io/storage-provisioner: linstor.csi.linbit.com
        volume.kubernetes.io/selected-node: ovbh-vtest-k8s01-worker01
        volume.kubernetes.io/storage-provisioner: linstor.csi.linbit.com
      finalizers:
        - kubernetes.io/pvc-protection
      name: acid-merch-2
      namespace: default
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 10Gi
      storageClassName: linstor-replica-one-local
      volumeMode: Filesystem
      volumeName: pvc-6408a214-6def-44c4-8d9a-bebb67be5510
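
On the `storage:` value in the PV manifests: in both examples here the LV reported by `lvs` is a few MiB larger than a whole number of GiB (21483225088 B for the 20Gi volume, 10741612544 B for the 10Gi one). One way to get the number for the manifest is to round the byte size down to whole GiB. This is a minimal sketch; `bytes_to_gib` is a made-up helper, and rounding down is an assumption that happens to match these two volumes, so check the result against the original PV.

```shell
#!/bin/sh
# bytes_to_gib BYTES: whole GiB that fit into BYTES (integer division, rounds down).
# Hypothetical helper for filling in capacity.storage in the PV manifest.
bytes_to_gib() {
    echo $(( $1 / 1073741824 ))
}

# Sizes reported by lvs in this post (without the trailing "B"):
echo "$(bytes_to_gib 21483225088)Gi"
echo "$(bytes_to_gib 10741612544)Gi"
```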
