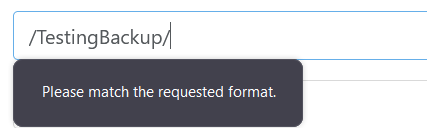

S3 Backup "Please Match The Requested Format"

-

@vincentp I am the one doing most of the work on S3. The remote and backup UX need a huge overhaul for XO 6, but we'll fix bugs until then.

The pattern used for validation seems strange; maybe it is too tightly coupled to the S3 bucket format. We'll try to relax it.

Edit: it's because we took the S3 naming convention from https://docs.aws.amazon.com/AmazonS3/latest/userguide/bucketnamingrules.html

Bucket names can consist only of lowercase letters, numbers, dots (.), and hyphens (-).
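To show how strict an AWS-derived check is, here is a minimal Python sketch of a validator for a subset of the AWS bucket naming rules linked above (3 to 63 characters, only lowercase letters, digits, dots and hyphens, starting and ending with a letter or digit, no adjacent dots, not formatted as an IPv4 address). The function name `is_strict_s3_bucket_name` is hypothetical; this is not the pattern XO actually uses, and it omits some AWS rules (reserved prefixes and suffixes).

```python
import ipaddress
import re

# Subset of the AWS general purpose bucket naming rules:
# 3-63 characters; lowercase letters, digits, dots and hyphens only;
# first and last characters must be a letter or digit.
_STRICT = re.compile(r"[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]")


def is_strict_s3_bucket_name(name: str) -> bool:
    """Return True if `name` passes this partial, AWS-style bucket name check."""
    if not _STRICT.fullmatch(name):
        return False
    if ".." in name:
        # Two adjacent dots are not allowed.
        return False
    try:
        ipaddress.IPv4Address(name)
    except ValueError:
        # Not an IPv4 address, so the name is acceptable under these rules.
        return True
    # Names formatted like an IPv4 address (e.g. 192.168.5.4) are rejected.
    return False


for candidate in ("my-backups", "My_Backups", "ab", "192.168.5.4", "my..bucket"):
    print(candidate, is_strict_s3_bucket_name(candidate))
```

Other S3-compatible providers document their own naming rules, so a check like this only reflects the AWS ones.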

That's great news. Feel free to share your results; we always try to improve backup speed. In the xo-server config file, there is a `writeBlockConcurrency` parameter: it's the number of blocks written to the remote in parallel.

Btw, we are merging another code path to access backups using NBD, which should speed up delta backup and continuous replication when they are constrained by the speed of the XAPI (the Xen API used to read data from the host): https://github.com/vatesfr/xen-orchestra/pull/6716
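The idea behind a parameter like `writeBlockConcurrency` (capping how many block writes are in flight at once) can be sketched in Python with an `asyncio.Semaphore`. This is an illustration of bounded-concurrency writing, not xo-server's code (which is JavaScript); `write_blocks`, `demo` and the fake write with a fixed 10 ms delay are hypothetical stand-ins for real uploads.

```python
import asyncio


async def write_blocks(blocks, write_block, concurrency):
    """Write every block, keeping at most `concurrency` writes in flight at once."""
    semaphore = asyncio.Semaphore(concurrency)

    async def guarded(index, block):
        async with semaphore:
            await write_block(index, block)

    await asyncio.gather(*(guarded(i, b) for i, b in enumerate(blocks)))


async def demo(concurrency, n_blocks=32, latency=0.01):
    """Return (peak writes in flight, elapsed seconds) for a simulated upload."""
    in_flight = 0
    peak = 0

    async def fake_write(index, block):
        # Stand-in for an upload: each write just waits for one "round trip".
        nonlocal in_flight, peak
        in_flight += 1
        peak = max(peak, in_flight)
        await asyncio.sleep(latency)
        in_flight -= 1

    loop = asyncio.get_running_loop()
    start = loop.time()
    await write_blocks([b""] * n_blocks, fake_write, concurrency)
    return peak, loop.time() - start


for c in (1, 8):
    peak, elapsed = asyncio.run(demo(c))
    print(f"concurrency={c}: peak in flight={peak}, elapsed={elapsed:.2f}s")
```

When each write mostly waits on the network, raising the cap shortens the total time roughly in proportion, until bandwidth or the remote becomes the limit.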

Did you check out continuous replication? It could be an easy addition to your backup strategy if you have enough storage on your hosts. This way you can start a replicated VM immediately if there is an incident on the main host. It can even be in the same job as the backup to Backblaze (but in this case it will be constrained by the Backblaze backup speed).

-

@florent The PR for the bucket name check is here: https://github.com/vatesfr/xen-orchestra/pull/6757. It should be merged today and go live with the release at the end of the week.

-

@florent Sorry for my slow reply on this; I ended up falling ill shortly after posting, but I'm doing much better now.

This explains why I was getting the format error. Glad it's getting "fixed", thanks a ton!

As for the `writeBlockConcurrency` parameter, is this something that will eventually be tunable in the XOA GUI? I'll experiment with it anyway and see what speed improvements I can get.

The NBD stuff looks very interesting to me, excited to test it.

Right now we aren't using continuous replication, but we will in the future. We currently have only a single XCP-ng host in our stack, but within a few months we should have 3 or 4 (including one at our remote backup site, which is where we will replicate to).

-

@planedrop said in S3 Backup "Please Match The Requested Format":

I am also curious if multiple backups at the same time results in faster overall speeds? Not quite sure how parallel a single VM backup is, but maybe each can run at that 15 or so MiB/s so multiple backups can use more bandwidth, I'll have to test this to find out.

So I did get to test this scenario last night (I finally got my production VMs migrated over, but that's another story) and ran 2 full backups at the same time.

First backup:

- Duration: 9 hours

- Size: 512.03 GiB

- Speed: 16.98 MiB/s

Second backup:

- Duration: 7 hours

- Size: 511.97 GiB

- Speed: 20.25 MiB/s

I monitored it for a short while (it was 2am) with netdata and observed the outbound connection running between 600 Mbps and 900 Mbps, so I guess that's about as good as it's going to get on a 1 Gbps connection. In the long term I really need to get a TrueNAS server at this site and run the backups to Backblaze from there.

-

@vincentp Interesting findings. It's odd that netdata showed those speeds, though, considering 20 MiB/s is only about 160 Mbps. Maybe the workload is bursty rather than constant?
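A quick Python check of the unit conversion: multiplying MiB/s by 8 gives a rough figure, while the exact conversion from binary MiB to decimal Mbit (how links are rated) gives about 168 Mbit/s for 20 MiB/s. Summing the two reported averages is only an upper-bound estimate of the combined rate while both jobs were running, since the runs had different durations. That estimate, roughly 312 Mbit/s, is well below the 600 to 900 Mbps seen in netdata, which is consistent with the bursty-workload guess but does not prove it. The helper name is hypothetical.

```python
MIB = 1024 * 1024  # bytes in a mebibyte


def mib_per_s_to_mbit_per_s(mib_per_s: float) -> float:
    """Convert MiB/s (binary units) to Mbit/s (decimal units, as links are rated)."""
    return mib_per_s * MIB * 8 / 1_000_000


# Average speeds reported for the two overnight full backups.
speeds = [16.98, 20.25]
for s in speeds:
    print(f"{s} MiB/s ~ {mib_per_s_to_mbit_per_s(s):.0f} Mbit/s")

# Rough combined average, assuming both runs were active at the same time.
print(f"combined ~ {mib_per_s_to_mbit_per_s(sum(speeds)):.0f} Mbit/s")
```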

As for TrueNAS, I'm actually trying to move away from that, since it results in a double-restore scenario. Say something catastrophic does happen (ransomware, etc.): you then have to source and build a TrueNAS server, restore to it, then source and build an XCP-ng server and restore from the TrueNAS server. A much faster method is to just install XCP-ng and XOA, then restore the config and the VMs directly from Backblaze.

-

@planedrop I'm about to give up on using Backblaze. The performance is really variable, but I am seeing full backups take more than 24 hours at the moment. I'm not sure where the bottleneck is: the servers are co-located in LA, the Backblaze region is set to us.west, and we have a 1 Gbps connection, but I just cannot get the backups done in a reasonable time. I'm having similar issues with continuous replication between the 2 servers (which have a 10G direct connection): the incremental backups are fine, but the full backups just take too long.

For now I'm just going to focus on backing up the data inside the VMs (databases, websites, etc.), as that will be quicker.

-

@vincentp Did you try activating the NBD option?

Can you back up one VM to an NFS remote in a separate job, to check whether it's really the read speed that is slow or whether it's the S3 and CR writers?

-

@florent said in S3 Backup "Please Match The Requested Format":

@vincentp Did you try activating the NBD option ?

Can you back one VM on a NFS on a separate job to check if it's really reading speed that is slow, or if it's the S3 and CR writer ?

No, I don't have any shared storage at that site, so I cannot test NFS.

I do have NBD enabled on the direct 10G connection between the two hosts.

-

@vincentp Did you also enable it in the xapiOptions of xo-server (or of the relevant proxy)?

-

@vincentp It seems to me that the full backups taking a long time isn't a huge issue, considering you should mostly just have deltas from then on, apart from an occasional periodic full.

I'm going to do more testing myself here soon to see if I can get things to speed up more, will test NBD.

Also @florent, I am not seeing the `writeBlockConcurrency` option in the config files. Is it one that I should add, or should it already be there?

-

@planedrop You should add it in the `[backups]` section.

-

@florent I'll give this a shot and see how performance is. Do you have a recommended number to set on this? 8?

-

@planedrop Not really, for now.

-

@vincentp Wanted to get some more info from you regarding your backups that were taking a long time.

Was the 24+ hour figure for the entire backup process or just the data transfer?

If you were using delta backups with the schedule's backup retention set to 1, that retention is still honored (even if the schedule itself is disabled), so what might have been happening is that the delta chain was being merged on every single backup, which takes a long time.

I did some more testing (nothing with huge VMs yet, but with four VMs of roughly 100 GB each), and setting the backup retention to 7 (so it only starts merging deltas into the full after 7 days) made the deltas much faster to complete. Otherwise the backup process would get "stuck" while merging the deltas into the full, which is the part that takes the longest in my testing.

Of course, once you hit that retention of 7, every backup from then on has to merge 1 delta into the full, so I'm not sure this is a solution; I was just curious.

I'm still testing, but I think the merge process is going to be the largest issue, so much so that it might actually be faster NOT to use deltas and instead use full backups, just less frequently.
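The retention behavior described above can be sketched as a simplified Python model that only counts merges per run: the chain starts with a full backup, each run appends one backup, and whenever the chain holds more than `retention` backups the oldest delta is merged into the full. The function `merges_per_run` is hypothetical; it ignores data sizes, periodic full backups and XO's actual merge implementation.

```python
def merges_per_run(retention: int, runs: int) -> list[int]:
    """Simplified model: number of delta merges triggered by each backup run."""
    chain_length = 0  # backups currently in the chain (one full + deltas)
    merges = []
    for _ in range(runs):
        chain_length += 1  # the new backup (a full on the first run, then deltas)
        merged = 0
        while chain_length > retention:
            chain_length -= 1  # oldest delta folded into the base full
            merged += 1
        merges.append(merged)
    return merges


print(merges_per_run(1, 10))  # retention 1: a merge on every run after the first
print(merges_per_run(7, 10))  # retention 7: no merges until the chain is full
```

Under this model, a retention of 7 only delays the merges: from the eighth run on, every run still pays for one merge.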

-

@florent Any idea how to make the delta merges faster? I know there was some work in the pipeline for that a while back, but I don't recall what came of it. Right now the actual uploads of the deltas are plenty fast, but the merge process is super slow (for some VMs the upload takes about 10 minutes but the merge takes over an hour).

Would the `writeBlockConcurrency` setting help with merges at all?

-

@planedrop TBH I didn't monitor for the full 24 hours, but the transfer speeds were just too slow. The same happens with CR between hosts: way too slow to be usable.

Backup performance on XCP-ng/XO is a major problem. I'm currently battling with this at another site where we just installed a TrueNAS server with dual 25G direct connections between the XCP-ng host and the TrueNAS server. With NBD enabled, we see transfers under 100 MiB/s (to an NFS share on the latest TrueNAS SCALE), and this time the XCP-ng host is a dual EPYC 7543 with all-NVMe storage and tons of RAM.

This thread did help a bit: `top` on the host was showing stunnel using a lot of CPU. Switching the connection from XO to the server to HTTP removed stunnel from the equation, but it's still too slow, and I did read somewhere that in the next XCP-ng/Xen version HTTP is going away and everything will be HTTPS only.

I'm currently researching backups on Proxmox to see if they have the same sort of performance issues. I really like XCP-ng and XO, but when backups take so long that they overlap with the next scheduled run, that is not usable.

-

@vincentp I do agree that backups being as slow as they are, especially to S3, is a huge issue.

I don't personally find backups over SMB (10GbE in this case) too slow to be usable by any means, many TB of VMs can be backed up in a single night without issues, but remote stuff is very slow.

I'm curious to hear about your CR experience, though, as that is something I'm planning to deploy here soon. Were the hosts on the same subnet with fast connections, or was it a remote host with something like IPsec handling the connectivity?

-

@planedrop I haven't used SMB for backups but will test that. I'm currently testing with NFS, which I imagine would be faster than SMB.

I have 3 sites, each with a separate XO instance managing them:

LA DC

- 2 XCP-ng hosts (dual E5-2620v4)

- no shared storage

- 10G direct connection between hosts

I tried CR between the hosts, and it works well once you get past the full backup, but those take 24 hours (800 GB VMs).

S3 backup: same experience. Backups seem to work well once you get past the full backup, but that sometimes takes longer than 24 hours and runs into the next backup schedule. XO has access to the 10G interface, and I confirmed it's using it.

Sydney DC

- single XCP-ng host (dual EPYC)

- TrueNAS SCALE (installed yesterday), with dual 25G direct connections to the XCP-ng host

I'm testing full backups to NFS on TrueNAS over the 25G network (NBD enabled), with the option to use multiple files enabled. XO is also connecting via HTTP to avoid stunnel, which was using a huge amount of CPU when I first tried it. It has access to a 25G interface, and I confirmed via netdata that the correct interface is being used for the backups.

Small VM test:

- Duration: 9 minutes

- Size: 41.48 GiB

- Speed: 81.03 MiB/s

More testing is required, but it's frustrating that we cannot get better speeds than this. We could have saved some money and just used 1G cards in the machines!

HomeLab

- single XCP-ng host

- TrueNAS server to be commissioned this week

For now I'm just doing disaster recovery backups to a second local storage on the machine (and they're just as slow). I'll use the TrueNAS once I get it in the rack this week.

The bottleneck with the backups is definitely not the network: even on the homelab machine that backs up to local storage, I'm only seeing around 60 MiB/s.

-

@vincentp If you back up multiple VMs in parallel, does the total speed stay at 80 MB/s, or does it scale with the number of VMs?

NBD also uses encryption by default. You can use it unencrypted by removing the NBD purpose from the network and adding the insecure_nbd purpose instead: https://docs.citrix.com/en-us/citrix-hypervisor/developer/changed-block-tracking-guide/enabling-nbd.html#enabling-an-insecure-nbd-connection-for-a-network-notls-mode

-

80 MiB/s between LA and Sydney is already pretty impressive, given the latency between them. When you write a lot of small blocks, each block has to wait for a round trip before being acknowledged, which takes a lot of time.

The higher the latency, the longer the backup, unless we use bigger blocks, which isn't trivial.

When using NBD, it should be a lot better, however, since we can have more blocks in flight in parallel. I got a huge speed bump with more blocks at the same time.
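The latency argument can be made concrete. If every block must be acknowledged before its slot is reused, then with n blocks in flight, block size B and round-trip time RTT, throughput is at most n × B / RTT, regardless of link bandwidth. The Python sketch below assumes a 150 ms LA to Sydney round trip and 2 MiB blocks; both numbers are illustrative assumptions, not measurements from this thread, and the function name is hypothetical.

```python
def max_throughput_mib_s(block_mib: float, rtt_s: float, in_flight: int) -> float:
    """Upper bound when each block must be acknowledged before its slot is reused.

    With `in_flight` blocks outstanding, at most in_flight * block size can be
    delivered per round trip. Link bandwidth and disk speed are ignored.
    """
    return in_flight * block_mib / rtt_s


RTT = 0.15   # assumed LA <-> Sydney round trip, in seconds
BLOCK = 2.0  # assumed block size, in MiB
for n in (1, 4, 16):
    print(f"{n:>2} in flight: <= {max_throughput_mib_s(BLOCK, RTT, n):.1f} MiB/s")
```

Both levers in the post show up in the formula: bigger blocks raise B, and more blocks in parallel raise n.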

Also, you can try XO from the sources on a physical machine to check the difference (vs XO in a VM).

There are many, many, many ways to get faster. What's important is to measure the boost from each modification, because this might help to identify bottlenecks.