CBT: the thread to centralize your feedback

-

Hi,

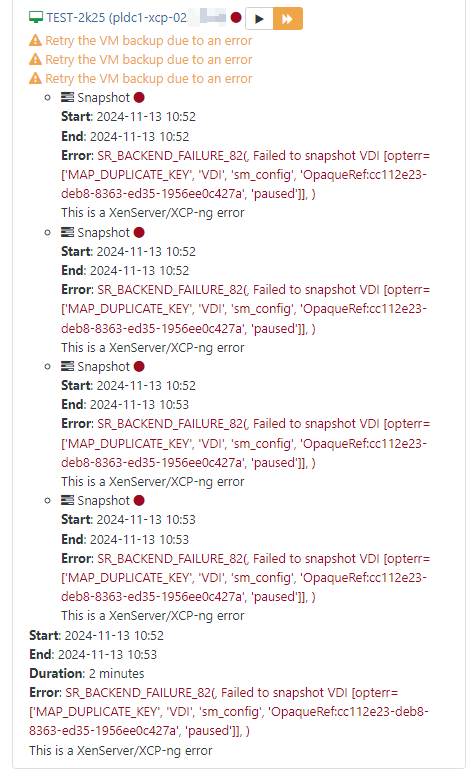

I observed an issue with the backup process when CBT is enabled. It occurs when the VM has two disks on different SRs. The backup fails, and the VM ends up in a state where no further changes or reconfiguration are possible: I cannot disable CBT, move a disk to a different SR, or create another backup. Sometimes, after a failed backup, the console and the VM itself freeze. Everything works fine when all of the VM's disks are on one SR.

The logs from the first backup:

-

Hi @barwys

Can you provide more details? We need to know which commit or XOA version you are on.

-

@olivierlambert Xen Orchestra, commit 804af

Both SRs are shared storage connected over Fibre Channel (multipathing enabled). I tested with both Windows and Linux guests, with XenTools 9.4.0/9.3.3 and 8.4.0-1 respectively.

-

This commit is already a week old. Please update to the latest one, rebuild, and try again.

-

Hello @olivierlambert,

After upgrading to commit 05c4e, I can no longer reproduce this issue. It seems to work much better.

Thanks

-

Great! Please remember to always be on the latest commit before reporting a problem. As you can see, the code base is evolving fast.

-

@olivierlambert Unfortunately, after updating to 5.100.2 (XOA and proxies), I am still running into XOA backups falling back to full after performing a VM migration between two hosts within a pool when snapshot deletion is enabled. This doesn't happen if the snapshot is retained.

My test setup:

Servers: 2 x HP DL325 Gen 10 AMD Epyc Servers

Storage: TrueNAS Mini R running TrueNAS Scale 24.10 serving an NFS SR shared between both servers.

All VMs are running on the same shared SR on the TrueNAS.

Scenario:

VM 1 Migrated from Host A to Host B

VM 2 Migrated from Host B to Host A

Running a CBT backup with snapshot deletion after migrating the VMs between hosts, while they remain on the same shared SR, results in the error:

"Can't do delta with this vdi, transfer will be a full"

"Can't do delta, will try to get a full stream"

Full log and screenshot of the error:

2024-11-14T19_20_40.383Z - backup NG.json.txt

-

Sadly, you are the only one we know of with this problem, and we cannot reproduce it.

I suppose we already checked that the VDI UUID doesn't change after the migration, right?

-

@olivierlambert Yeah, we did confirm the UUID does not change. These are VMs that were imported from VMware using the XOA wizard, if that makes any difference.

The only other pool I could try this on would be our production pool, which is using CBT but is not removing the snapshot.

However, if I migrate a VM between hosts on that pool and run a backup job, I see this in the pool master's SMLog:

    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] Size of bitmap: 491520
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] lock: acquired /var/lock/sm/c63f7214-c1ef-4fc3-b174-cb78dffcbaa6/cbtlog
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] ['/usr/sbin/cbt-util', 'get', '-n', '/var/run/sr-mount/16e4ecd2-583e-e2a0-5d3d-8e53ae9c1429/c63f7214-c1ef-4fc3-b174-cb78dffcbaa6.cbtlog', '-c']
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] pread SUCCESS
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] lock: released /var/lock/sm/c63f7214-c1ef-4fc3-b174-cb78dffcbaa6/cbtlog
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] Raising exception [460, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]]
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] ***** generic exception: vdi_list_changed_blocks: EXCEPTION <class 'xs_errors.SROSError'>, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] File "/opt/xensource/sm/SRCommand.py", line 111, in run
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] return self._run_locked(sr)
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] File "/opt/xensource/sm/SRCommand.py", line 161, in _run_locked
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] rv = self._run(sr, target)
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] File "/opt/xensource/sm/SRCommand.py", line 326, in _run
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] return target.list_changed_blocks()
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] File "/opt/xensource/sm/VDI.py", line 757, in list_changed_blocks
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] "Source and target VDI are unrelated")
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818]
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] ***** NFS VHD: EXCEPTION <class 'xs_errors.SROSError'>, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] File "/opt/xensource/sm/SRCommand.py", line 385, in run
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] ret = cmd.run(sr)
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] File "/opt/xensource/sm/SRCommand.py", line 111, in run
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] return self._run_locked(sr)
    Nov 14 21:06:50 xcpng-prd-03 SM: [3980818] File "/opt/xensource/sm/SRCommand.py", line 161, in _run_locked
    --
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] Size of bitmap: 25165824
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] lock: acquired /var/lock/sm/90202d5b-8016-49a3-92dd-4790a2edaa41/cbtlog
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] ['/usr/sbin/cbt-util', 'get', '-n', '/var/run/sr-mount/16e4ecd2-583e-e2a0-5d3d-8e53ae9c1429/90202d5b-8016-49a3-92dd-4790a2edaa41.cbtlog', '-c']
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] pread SUCCESS
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] lock: released /var/lock/sm/90202d5b-8016-49a3-92dd-4790a2edaa41/cbtlog
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] Raising exception [460, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]]
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] ***** generic exception: vdi_list_changed_blocks: EXCEPTION <class 'xs_errors.SROSError'>, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] File "/opt/xensource/sm/SRCommand.py", line 111, in run
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] return self._run_locked(sr)
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] File "/opt/xensource/sm/SRCommand.py", line 161, in _run_locked
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] rv = self._run(sr, target)
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] File "/opt/xensource/sm/SRCommand.py", line 326, in _run
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] return target.list_changed_blocks()
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] File "/opt/xensource/sm/VDI.py", line 757, in list_changed_blocks
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] "Source and target VDI are unrelated")
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883]
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] ***** NFS VHD: EXCEPTION <class 'xs_errors.SROSError'>, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] File "/opt/xensource/sm/SRCommand.py", line 385, in run
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] ret = cmd.run(sr)
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] File "/opt/xensource/sm/SRCommand.py", line 111, in run
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] return self._run_locked(sr)
    Nov 14 21:07:05 xcpng-prd-03 SM: [3981883] File "/opt/xensource/sm/SRCommand.py", line 161, in _run_locked

This goes away after the initial backup run following the VM migration, and all later runs log nothing in the SMLog. Because I am not removing the snapshot, the backup still runs as a delta. However, this leads me to believe that if I enabled snapshot deletion on that pool, I would run into the same issue and a full backup would run instead.
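For anyone comparing their own logs, here is a minimal Python sketch that lists which VDIs hit error 460 in an SMLog excerpt. It assumes the log has one entry per line (as in the file on the host, typically /var/log/SMlog) and that the cbt-util call is logged before the exception for the same SM process, as in the output quoted in this thread. The function name `unrelated_vdis` and the regular expressions are my own, based only on the lines shown here.

```python
import re

# Patterns derived only from the SMLog lines quoted in this thread (assumption).
CBTLOG_RE = re.compile(r"SM: \[(\d+)\] .*?/([0-9a-f-]{36})\.cbtlog")
ERROR_RE = re.compile(r"SM: \[(\d+)\] Raising exception \[460,")


def unrelated_vdis(smlog_text):
    """Return VDI UUIDs whose cbt-util lookup was followed by error 460."""
    last_vdi_by_pid = {}  # SM process id -> VDI UUID from its last .cbtlog path
    failed = []
    for line in smlog_text.splitlines():
        match = CBTLOG_RE.search(line)
        if match:
            last_vdi_by_pid[match.group(1)] = match.group(2)
            continue
        match = ERROR_RE.search(line)
        if match and match.group(1) in last_vdi_by_pid:
            vdi = last_vdi_by_pid[match.group(1)]
            if vdi not in failed:
                failed.append(vdi)
    return failed
```

Calling `unrelated_vdis(open("/var/log/SMlog").read())` on a host should give the list of VDIs to inspect with cbt-util, provided the log format matches the excerpt above.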

I'm not sure what I could be doing that is unique enough to cause this. The test lab uses NFSv4 on a TrueNAS and production uses NFSv3 on a Pure X20 array.

I will soon be able to test this on our DR site pool but we are currently waiting on hardware for that to arrive.

-

I have no idea why you are the only one to have this issue, which is why it's weird

-

@olivierlambert So today I installed the latest round of updates on the test pool, which moved all the VMs back and forth during a rolling pool update. I then let everything sit for a couple of hours and ran the backup job, and this time it did not throw any errors. So that's even more confusing.

Perhaps it's because I am kicking off a backup job immediately after migrating the VMs? As a test, I am going to move them around again now, wait an hour, and then attempt to run the job.

Edit: Waiting did not seem to help. Running the job manually again resulted in a full backup being run again, with the same messages:

Can't do delta with this vdi, transfer will be a full

Can't do delta, will try to get a full stream

-

I also double-checked that the VM UUID does not change after the migration.

SMLog output on the test pool looks the same as on the production pool after a manual VM migration:

    Nov 15 13:59:40 xcpng-test-01 SM: [277865] lock: opening lock file /var/lock/sm/8b0ee29e-7cbe-4e15-bd13-330a974fde2a/cbtlog
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] lock: acquired /var/lock/sm/8b0ee29e-7cbe-4e15-bd13-330a974fde2a/cbtlog
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] ['/usr/sbin/cbt-util', 'get', '-n', '/var/run/sr-mount/45e457aa-16f8-41e0-d03d-8201e69638be/8b0ee29e-7cbe-4e15-bd13-330a974fde2a.cbtlog', '-c']
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] pread SUCCESS
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] lock: released /var/lock/sm/8b0ee29e-7cbe-4e15-bd13-330a974fde2a/cbtlog
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] Raising exception [460, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]]
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] ***** generic exception: vdi_list_changed_blocks: EXCEPTION <class 'xs_errors.SROSError'>, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] File "/opt/xensource/sm/SRCommand.py", line 111, in run
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] return self._run_locked(sr)
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] File "/opt/xensource/sm/SRCommand.py", line 161, in _run_locked
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] rv = self._run(sr, target)
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] File "/opt/xensource/sm/SRCommand.py", line 326, in _run
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] return target.list_changed_blocks()
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] File "/opt/xensource/sm/VDI.py", line 757, in list_changed_blocks
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] "Source and target VDI are unrelated")
    Nov 15 13:59:40 xcpng-test-01 SM: [277865]
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] ***** NFS VHD: EXCEPTION <class 'xs_errors.SROSError'>, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] File "/opt/xensource/sm/SRCommand.py", line 385, in run
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] ret = cmd.run(sr)
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] File "/opt/xensource/sm/SRCommand.py", line 111, in run
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] return self._run_locked(sr)
    Nov 15 13:59:40 xcpng-test-01 SM: [277865] File "/opt/xensource/sm/SRCommand.py", line 161, in _run_locked
    --
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] lock: opening lock file /var/lock/sm/fa7929aa-a39c-437d-9787-5218e9bcbc1a/cbtlog
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] lock: acquired /var/lock/sm/fa7929aa-a39c-437d-9787-5218e9bcbc1a/cbtlog
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] ['/usr/sbin/cbt-util', 'get', '-n', '/var/run/sr-mount/45e457aa-16f8-41e0-d03d-8201e69638be/fa7929aa-a39c-437d-9787-5218e9bcbc1a.cbtlog', '-c']
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] pread SUCCESS
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] lock: released /var/lock/sm/fa7929aa-a39c-437d-9787-5218e9bcbc1a/cbtlog
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] Raising exception [460, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]]
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] ***** generic exception: vdi_list_changed_blocks: EXCEPTION <class 'xs_errors.SROSError'>, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] File "/opt/xensource/sm/SRCommand.py", line 111, in run
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] return self._run_locked(sr)
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] File "/opt/xensource/sm/SRCommand.py", line 161, in _run_locked
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] rv = self._run(sr, target)
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] File "/opt/xensource/sm/SRCommand.py", line 326, in _run
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] return target.list_changed_blocks()
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] File "/opt/xensource/sm/VDI.py", line 757, in list_changed_blocks
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] "Source and target VDI are unrelated")
    Nov 15 13:59:45 xcpng-test-01 SM: [278274]
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] ***** NFS VHD: EXCEPTION <class 'xs_errors.SROSError'>, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] File "/opt/xensource/sm/SRCommand.py", line 385, in run
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] ret = cmd.run(sr)
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] File "/opt/xensource/sm/SRCommand.py", line 111, in run
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] return self._run_locked(sr)
    Nov 15 13:59:45 xcpng-test-01 SM: [278274] File "/opt/xensource/sm/SRCommand.py", line 161, in _run_locked

-

@olivierlambert I'm making progress getting to the bottom of this, thanks to some documentation from XenServer about using cbt-util.

You can use the cbt-util utility, which helps establish chain relationship. If the VDI snapshots are not linked by changed block metadata, you get errors like “SR_BACKEND_FAILURE_460”, “Failed to calculate changed blocks for given VDIs”, and “Source and target VDI are unrelated”. Example usage of cbt-util:

    cbt-util get -c -n <name of cbt log file>

The -c option prints the child log file UUID.

I cleared all CBT snapshots from my test VMs and ran a full backup on each VM. I then checked that the CBT chain was consistent using cbt-util. The output was:

    [14:22 xcpng-test-01 45e457aa-16f8-41e0-d03d-8201e69638be]# cbt-util get -c -n 867063fc-4d86-420a-9ad2-dfe1749ecbc1.cbtlog
    1950d6a3-c6a9-4b0c-b79f-068dd44479cc

After the backup was complete, I migrated the VM to the second host in the pool and ran the same command from both hosts:

    [14:26 xcpng-test-01 45e457aa-16f8-41e0-d03d-8201e69638be]# cbt-util get -c -n 867063fc-4d86-420a-9ad2-dfe1749ecbc1.cbtlog
    00000000-0000-0000-0000-000000000000

And from the second host:

    [14:26 xcpng-test-02 45e457aa-16f8-41e0-d03d-8201e69638be]# cbt-util get -c -n 867063fc-4d86-420a-9ad2-dfe1749ecbc1.cbtlog
    00000000-0000-0000-0000-000000000000

That clearly is the problem right there. The question is, what is causing that to happen?
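To run the same check across several cbtlog files, a small Python wrapper could look like the sketch below. It uses only the `cbt-util get -c -n <file>` invocation shown in this thread and assumes that the command prints nothing but the child UUID on stdout, as in the output above. The function names `child_is_reset` and `read_child_uuid` are hypothetical.

```python
import subprocess
import uuid

# The all-zero child UUID seen after migration in this thread.
NIL_UUID = uuid.UUID(int=0)


def child_is_reset(cbt_util_output):
    """True if the child UUID printed by `cbt-util get -c` is all zeros."""
    return uuid.UUID(cbt_util_output.strip()) == NIL_UUID


def read_child_uuid(cbtlog_path):
    """Run `cbt-util get -c -n <file>` on a host and return its stdout.

    Assumes stdout contains only the child UUID (not verified beyond the
    output quoted in this thread).
    """
    result = subprocess.run(
        ["cbt-util", "get", "-c", "-n", cbtlog_path],
        capture_output=True, text=True, check=True,
    )
    return result.stdout
```

On a host, `child_is_reset(read_child_uuid("867063fc-4d86-420a-9ad2-dfe1749ecbc1.cbtlog"))` run from the SR mount directory before and after a migration should show whether the chain was lost.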

After running another full backup, the zeroed-out cbtlog file is removed and a new one is created, which works fine until the VM is migrated again:

    [14:39 xcpng-test-01 45e457aa-16f8-41e0-d03d-8201e69638be]# cbt-util get -c -n 1eefb7bf-9dc3-4830-8352-441a77412576.cbtlog
    1950d6a3-c6a9-4b0c-b79f-068dd44479cc

-

@flakpyro I can't reproduce this on our end. After migration within the pool on the same storage pool, the CBT is preserved. When I migrate to a different storage pool, the CBT is reset.

-

@rtjdamen Interesting. Is this with iSCSI (block) or with an NFS SR?

-

@flakpyro both scenarios

-

@rtjdamen Hmm, very strange.

The only thing I can think of is that this may be due to the fact that these VMs were imported from VMware.

Next week I can try creating a brand new NFSv3 SR (since NFSv4 has created issues in the past) as well as a new, cleanly installed VM that was not imported from VMware, and see if the issue persists.

-

This is a completely different 5-host pool backed by a Pure storage array with SRs mounted via NFSv3. Migrating a VM between hosts results in the same issue.

Before migration:

    [01:41 xcpng-prd-03 b04d9910-8671-750f-050e-8b55c64fbede]# cbt-util get -c -n 83035854-b5a9-4f7e-869f-abe43ddc658d.cbtlog
    e28065ff-342f-4eae-a910-b91842dd39ca

After migration:

    [01:41 xcpng-prd-03 b04d9910-8671-750f-050e-8b55c64fbede]# cbt-util get -c -n 83035854-b5a9-4f7e-869f-abe43ddc658d.cbtlog
    00000000-0000-0000-0000-000000000000

I don't think I have anything "custom" running that would be causing this, so I have no idea why this is happening, but it's happening on multiple pools for us.

-

@flakpyro Is there any difference between migrating with the VM powered on and powered off?

-

@flakpyro I have just tested live and offline migration on our end, and both kept the CBT alive. Tested on both iSCSI and NFS.