Best posts made by Delgado

-

RE: XOSTOR on 8.3?

@TheNorthernLight, based on this recent blog post: https://xcp-ng.org/blog/2025/03/14/the-future-of-xcp-ng-lts/, I'm assuming it will go GA on or around the time 8.3 gets moved into LTS. But that's just a guess.

-

RE: Continuous Replication Job Causing XO to Crash

@olivierlambert Thanks, I will do that. I did bump the memory up to 12 GiB and the backup ran successfully, but I will also open a ticket with the third-party script maker. Thank you for your time!

-

RE: Continuous Replication fails with XO update (June-20)

I can confirm I upgraded to this branch and replication works again.

-

RE: Health Checks Failing

@florent can confirm that health checks are working again! Thanks for the prompt reply!

-

RE: Backup Mirror Health Check Error

@florent It looks like things are working as expected now. If there are changes that need to be backed up, the VMs now run a health check. If there are no changes, no health check is run. Thank you!

Latest posts made by Delgado

-

RE: Our future backup code: test it!

@olivierlambert I just tried to build the install with that branch and got the following error.

• Running build in 22 packages
• Remote caching disabled
@xen-orchestra/disk-transform:build: cache miss, executing c1d61a12721a1a1b
@xen-orchestra/disk-transform:build: yarn run v1.22.22
@xen-orchestra/disk-transform:build: $ tsc
@xen-orchestra/disk-transform:build: src/SynchronizedDisk.mts(2,30): error TS 2307: Cannot find module '@vates/generator-toolbox' or its corresponding type declarations.
@xen-orchestra/disk-transform:build: error Command failed with exit code 2.

OS: Rocky 9

Yarn: 1.22.22

Node: v22.14.0

Let me know if you need any more information.

-

RE: XOSTOR on 8.3?

@TheNorthernLight, based on this recent blog post: https://xcp-ng.org/blog/2025/03/14/the-future-of-xcp-ng-lts/, I'm assuming it will go GA on or around the time 8.3 gets moved into LTS. But that's just a guess.

-

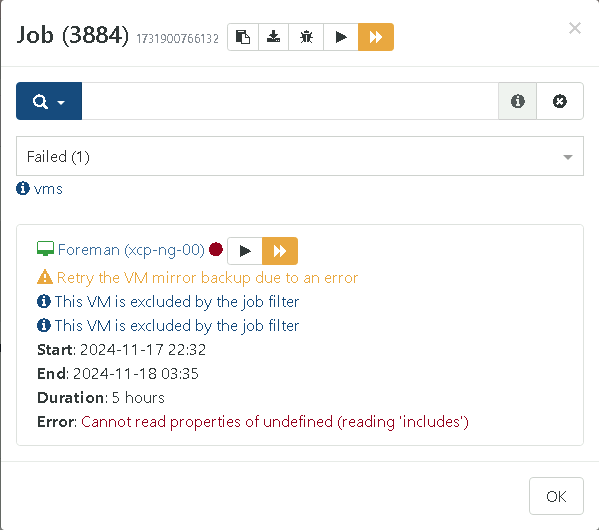

Mirror Backup Failing On Excluded VM

Hello,

I have a mirror incremental backup that is mirroring some vms to BackBlaze buckets. I am only selecting my more critical vms as I don't need this larger VM backed up offsite. I seem to be getting an error every backup on the one excluded VM stating "Cannot read properties of undefined (reading 'includes')" I have tried recreating the mirror job etc but it seems to still crop up.

Commit: 99c56

Log: 2024-11-18T03_32_46.132Z - backup NG.json.txt

Screenshot below:

Let me know if you need anymore informaton.

-

Question About Backup Sequences

Hello,

I saw that backup sequences were added with the new release (and it looks like an awesome feature, by the way!). I have one quick question: does the backup sequence schedule take precedence over the normal schedule for a job? My thought was to disable the schedule on the normal job, but keep the job itself so that things such as health checks still work, and let the backup sequence start the job.

-

RE: CBT: the thread to centralize your feedback

@rtjdamen I haven't had any hosts crash recently, or any storage issues from what I can tell. The "type" in the log says delta, but the sizes of the backups definitely look like full backups. They're also labelled as key when I look at the restore points for delta backups.

-

RE: CBT: the thread to centralize your feedback

It looks like all of my backups have started erroring with "can't create a stream from a metadata VDI, fall back to a base". I am using 1 NBD connection and I am on commit 530c3. I have attached the logs of a delta backup and a replication.

2024-09-19T16_00_00.002Z - backup NG.json.txt

2024-09-19T04_00_00.001Z - backup NG.json.txt

I am seeing this in the journal logs.

Sep 19 12:01:39 hostname xo-server[11597]: error: XapiError: SR_BACKEND_FAILURE_460(, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated], )
Sep 19 12:01:39 hostname xo-server[11597]: at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202409180806/packages/xen-api/_XapiError.mjs:16:12)
Sep 19 12:01:39 hostname xo-server[11597]: at file:///opt/xo/xo-builds/xen-orchestra-202409180806/packages/xen-api/transports/json-rpc.mjs:38:21
Sep 19 12:01:39 hostname xo-server[11597]: at process.processTicksAndRejections (node:internal/process/task_queues:95:5) {
Sep 19 12:01:39 hostname xo-server[11597]: code: 'SR_BACKEND_FAILURE_460',
Sep 19 12:01:39 hostname xo-server[11597]: params: [
Sep 19 12:01:39 hostname xo-server[11597]: '',
Sep 19 12:01:39 hostname xo-server[11597]: 'Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]',
Sep 19 12:01:39 hostname xo-server[11597]: ''
Sep 19 12:01:39 hostname xo-server[11597]: ],
Sep 19 12:01:39 hostname xo-server[11597]: call: { method: 'VDI.list_changed_blocks', params: [Array] },
Sep 19 12:01:39 hostname xo-server[11597]: url: undefined,
Sep 19 12:01:39 hostname xo-server[11597]: task: undefined
Sep 19 12:01:39 hostname xo-server[11597]: },
Sep 19 12:01:39 hostname xo-server[11597]: ref: 'OpaqueRef:0438087b-5cbc-a458-a8a0-4eaa6ce74d19',
Sep 19 12:01:39 hostname xo-server[11597]: baseRef: 'OpaqueRef:ae1330a2-0f95-6c16-6878-f6c05373a2f2'
Sep 19 12:01:39 hostname xo-server[11597]: }
Sep 19 12:01:43 hostname xo-server[11597]: 2024-09-19T16:01:43.015Z xo:xapi:vdi INFO OpaqueRef:b6f65ae4-bee8-b179-a06c-2bb4956214ba has been disconnected from dom0 {
Sep 19 12:01:43 hostname xo-server[11597]: vdiRef: 'OpaqueRef:0438087b-5cbc-a458-a8a0-4eaa6ce74d19',
Sep 19 12:01:43 hostname xo-server[11597]: vbdRef: 'OpaqueRef:b6f65ae4-bee8-b179-a06c-2bb4956214ba'
Sep 19 12:01:43 hostname xo-server[11597]: }
Sep 19 12:02:29 hostname xo-server[11597]: 2024-09-19T16:02:29.855Z xo:xapi:vdi INFO can't get changed block {
Sep 19 12:02:29 hostname xo-server[11597]: error: XapiError: SR_BACKEND_FAILURE_460(, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated], )
Sep 19 12:02:29 hostname xo-server[11597]: at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202409180806/packages/xen-api/_XapiError.mjs:16:12)
Sep 19 12:02:29 hostname xo-server[11597]: at file:///opt/xo/xo-builds/xen-orchestra-202409180806/packages/xen-api/transports/json-rpc.mjs:38:21
Sep 19 12:02:29 hostname xo-server[11597]: at process.processTicksAndRejections (node:internal/process/task_queues:95:5) {
Sep 19 12:02:29 hostname xo-server[11597]: code: 'SR_BACKEND_FAILURE_460',
Sep 19 12:02:29 hostname xo-server[11597]: params: [
Sep 19 12:02:29 hostname xo-server[11597]: '',
Sep 19 12:02:29 hostname xo-server[11597]: 'Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]',

-

RE: CBT: the thread to centralize your feedback

I saw these errors in my log today after starting a replication job on commit 530c3. I have not migrated these VMs to a new host or an SR.

Sep 18 08:17:12 xo-server[6199]: 2024-09-18T12:17:12.861Z xo:xapi:vdi INFO can't get changed block {
Sep 18 08:17:12 xo-server[6199]: error: XapiError: SR_BACKEND_FAILURE_460(, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated], )
Sep 18 08:17:12 xo-server[6199]: at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202409180806/packages/xen-api/_XapiError.mjs:16:12)
Sep 18 08:17:12 xo-server[6199]: at file:///opt/xo/xo-builds/xen-orchestra-202409180806/packages/xen-api/transports/json-rpc.mjs:38:21
Sep 18 08:17:12 xo-server[6199]: at process.processTicksAndRejections (node:internal/process/task_queues:95:5) {
Sep 18 08:17:12 xo-server[6199]: code: 'SR_BACKEND_FAILURE_460',
Sep 18 08:17:12 xo-server[6199]: params: [
Sep 18 08:17:12 xo-server[6199]: '',
Sep 18 08:17:12 xo-server[6199]: 'Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]',
Sep 18 08:17:12 xo-server[6199]: ''
Sep 18 08:17:12 xo-server[6199]: ],
Sep 18 08:17:12 xo-server[6199]: call: { method: 'VDI.list_changed_blocks', params: [Array] },
Sep 18 08:17:12 xo-server[6199]: url: undefined,
Sep 18 08:17:12 xo-server[6199]: task: undefined
Sep 18 08:17:12 xo-server[6199]: },
Sep 18 08:17:12 xo-server[6199]: ref: 'OpaqueRef:a7c534ef-d1d5-0578-a564-05b2c36de7be',
Sep 18 08:17:12 xo-server[6199]: baseRef: 'OpaqueRef:5d4109f0-5278-64d8-233d-6cd73c8c6d6a'
Sep 18 08:17:12 xo-server[6199]: }
Sep 18 08:17:14 xo-server[6199]: 2024-09-18T12:17:14.459Z xo:xapi:vdi INFO can't get changed block {
Sep 18 08:17:14 xo-server[6199]: error: XapiError: SR_BACKEND_FAILURE_460(, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated], )
Sep 18 08:17:14 xo-server[6199]: at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202409180806/packages/xen-api/_XapiError.mjs:16:12)
Sep 18 08:17:14 xo-server[6199]: at file:///opt/xo/xo-builds/xen-orchestra-202409180806/packages/xen-api/transports/json-rpc.mjs:38:21
Sep 18 08:17:14 xo-server[6199]: at process.processTicksAndRejections (node:internal/process/task_queues:95:5) {
Sep 18 08:17:14 xo-server[6199]: code: 'SR_BACKEND_FAILURE_460',
Sep 18 08:17:14 xo-server[6199]: params: [
Sep 18 08:17:14 xo-server[6199]: '',
Sep 18 08:17:14 xo-server[6199]: 'Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]',
Sep 18 08:17:14 xo-server[6199]: ''
Sep 18 08:17:14 xo-server[6199]: ],
Sep 18 08:17:14 xo-server[6199]: call: { method: 'VDI.list_changed_blocks', params: [Array] },
Sep 18 08:17:14 xo-server[6199]: url: undefined,
Sep 18 08:17:14 xo-server[6199]: task: undefined
Sep 18 08:17:14 xo-server[6199]: },

-

RE: Backup Fail: Trying to add data in unsupported state

Hello,

My VMs are about 150G each. I was using compression when I backed up the VM to the remote before mirroring it to the S3 bucket. I did end up changing to delta backups and the error went away, but I can create another normal backup and mirror it to the bucket again to see if I get the same results.