@olivierlambert Not an urgent thing. First test on this.

Posts

-

RE: Import from vmware esxi 6.0 or 6.5

-

Import from vmware esxi 6.0 or 6.5

Hi,

I just tried to import a simple VM from ESXi 6.0 or 6.5 (I have both hosts). I just updated everything to the latest version (XCP-ng, XO and XOA). When I try to import the VM, I get an instant error message:

vm.importMultipleFromEsxi
{
  "concurrency": 2,
  "host": "10.0.1.9",
  "network": "0029d5bd-a537-0d88-862c-edb0ff89948e",
  "password": "* obfuscated *",
  "sr": "a4c92ff0-5388-12f6-7b30-021b76f6bbb9",
  "sslVerify": false,
  "stopOnError": true,
  "stopSource": true,
  "thin": true,
  "user": "root",
  "vms": [
    "5"
  ]
}
{
  "succeeded": {},
  "message": "Property description must be an object: undefined",
  "name": "TypeError",
  "stack": "TypeError: Property description must be an object: undefined
    at Function.defineProperty (<anonymous>)
    at Task.onProgress (/etc/xen-orchestra/@vates/task/combineEvents.js:51:16)
    at Task.#emit (/etc/xen-orchestra/@vates/task/index.js:126:21)
    at Task.#maybeStart (/etc/xen-orchestra/@vates/task/index.js:133:17)
    at Task.runInside (/etc/xen-orchestra/@vates/task/index.js:152:21)
    at Task.run (/etc/xen-orchestra/@vates/task/index.js:138:31)
    at asyncEach.concurrency.concurrency (file:///etc/xen-orchestra/packages/xo-server/src/api/vm.mjs:1372:58)
    at next (/etc/xen-orchestra/@vates/async-each/index.js:90:37)"
}

I tried through the web interface of both XO and XOA.

Is there anything I can do?

Regards

-

RE: XOSTOR hyperconvergence preview

@ronan-a Hi,

I tested your branch, and hosts newly added to the pool are now attached to the XOSTOR. This is nice!

I have looked at the code, but I'm not sure whether, in the current state of your branch, we can add a disk on the new host and update the replication. I think not, but I want to be sure.

-

RE: XOSTOR hyperconvergence preview

Imagine if I have 30 VMs... a lot of resources to be created...

I'm not sure to understand the link with VMs? ^^"

From what I understand, I have to recreate all the resources manually, since each VM disk creates a resource. Maybe I'm wrong again.

I'm very interested in testing this new add_host functionality. The fun part is that I now have a better understanding of what is going on under the hood!

Regards,

-

RE: XOSTOR hyperconvergence preview

Hi,

OK, I figured out how to do it and got it working on 2 or more nodes. Here is the process:

[xcp-ng-01 ~]# wget https://gist.githubusercontent.com/Wescoeur/7bb568c0e09e796710b0ea966882fcac/raw/26d1db55fafa4622af2d9ee29a48f6756b8b11a3/gistfile1.txt -O install && chmod +x install
[xcp-ng-01 ~]# ./install --disks /dev/sdb --thin
[xcp-ng-01 ~]# vgchange -a y linstor_group
[xcp-ng-01 ~]# xe sr-create type=linstor name-label=XOSTOR host-uuid=71324aae-aff1-4323-bb0b-2c5f858b223e device-config:hosts=xcp-ng-01 device-config:group-name=linstor_group/thin_device device-config:redundancy=1 shared=true device-config:provisioning=thin

Now the SR is available to create VMs. For simplicity, I won't create a VM now.

[xcp-ng-02 ~]# wget https://gist.githubusercontent.com/Wescoeur/7bb568c0e09e796710b0ea966882fcac/raw/26d1db55fafa4622af2d9ee29a48f6756b8b11a3/gistfile1.txt -O install && chmod +x install
[xcp-ng-02 ~]# ./install --disks /dev/sdb --thin
[xcp-ng-02 ~]# vgchange -a y linstor_group

On both hosts, I modify /etc/hosts to add both hosts with their IPs, to work around the driver bug.
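The /etc/hosts workaround could be scripted roughly like this. It is only a sketch: the function name `add_host_entry` and the `HOSTS_FILE` override are my own, and the IPs in the usage note are placeholders, not the addresses of this lab.

```shell
# Sketch: idempotently add an "IP hostname" entry to a hosts file.
# HOSTS_FILE defaults to /etc/hosts; override it to try the function safely.
# add_host_entry and HOSTS_FILE are illustrative names, not part of XCP-ng.

add_host_entry() {
    local ip="$1" name="$2" file="${HOSTS_FILE:-/etc/hosts}"
    # Do nothing if a non-comment line already lists this hostname.
    if awk -v n="$name" '
        /^[[:space:]]*#/ { next }
        { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit found ? 0 : 1 }' "$file"; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}
```

On each host it would be called once per node, for example `add_host_entry 192.0.2.11 xcp-ng-01` and `add_host_entry 192.0.2.12 xcp-ng-02`. Running it twice does not duplicate an entry.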

Start the services on node 2:

systemctl enable minidrbdcluster.service
systemctl enable linstor-satellite.service
systemctl start linstor-satellite.service
systemctl start minidrbdcluster.service

Open the iptables ports on node 2:

/etc/xapi.d/plugins/firewall-port open 3366
/etc/xapi.d/plugins/firewall-port open 3370
/etc/xapi.d/plugins/firewall-port open 3376
/etc/xapi.d/plugins/firewall-port open 3377
/etc/xapi.d/plugins/firewall-port open 8076
/etc/xapi.d/plugins/firewall-port open 8077
/etc/xapi.d/plugins/firewall-port open 7000:8000

[xcp-ng-02 ~]# linstor --controllers=10.33.33.40 node create --node-type combined $HOSTNAME
[xcp-ng-02 ~]# linstor --controllers=10.33.33.40 storage-pool create lvmthin $HOSTNAME xcp-sr-linstor_group_thin_device linstor_group/thin_device
[xcp-ng-02 ~]# linstor --controllers=10.33.33.40 resource create $HOSTNAME xcp-persistent-database --storage-pool xcp-sr-linstor_group_thin_device

After that, you should be in split-brain. I have no idea why; my knowledge is not good enough to figure it out right now. But I know how to fix it.

On node 2, run these commands:

drbdadm secondary all
drbdadm disconnect all
drbdadm -- --discard-my-data connect all

On node 1, run these commands:

drbdadm primary all
drbdadm disconnect all
drbdadm connect all

Now LINSTOR/DRBD are in good shape and should have all the resources.
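To avoid mixing up the two command sets, they could be wrapped in a small helper. This is a sketch only: the role names `victim` (node 2 here, whose changes are discarded) and `survivor` (node 1 here) and the `DRY_RUN` switch are my own labels. It prints the commands by default, because discarding data on the wrong node loses it.

```shell
# Sketch: print (or run) the DRBD split-brain recovery steps from this post.
# "victim"   = node whose changes are discarded (node 2 above)
# "survivor" = node whose data is kept (node 1 above)
# DRY_RUN defaults to 1, so nothing is executed unless DRY_RUN=0 is set.

splitbrain_steps() {
    case "$1" in
        victim)
            printf '%s\n' \
                'drbdadm secondary all' \
                'drbdadm disconnect all' \
                'drbdadm -- --discard-my-data connect all'
            ;;
        survivor)
            printf '%s\n' \
                'drbdadm primary all' \
                'drbdadm disconnect all' \
                'drbdadm connect all'
            ;;
        *)
            echo "usage: splitbrain_steps victim|survivor" >&2
            return 2
            ;;
    esac
}

recover_splitbrain() {
    local role="$1" steps cmd
    steps=$(splitbrain_steps "$role") || return
    while IFS= read -r cmd; do
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "+ $cmd"
        else
            # Word splitting is intentional: each step is a plain command line.
            $cmd || return
        fi
    done <<EOF
$steps
EOF
}
```

`recover_splitbrain victim` on node 2 followed by `recover_splitbrain survivor` on node 1 shows what would run. Setting `DRY_RUN=0` executes the steps and stops at the first failure.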

For the sake of fun, I change the place count from 1 to 2 on the LINSTOR controller:

[xcp-ng-01 ~]# linstor rg modify --place-count 2 xcp-sr-linstor_group_thin_device

Now the replication is working.

Now on node 1, I unplug the PBD of xcp-ng-02, destroy it and create a new one with 2 hosts:

[xcp-ng-01 ~]# xe pbd-unplug uuid=6295519d-1071-2127-4313-f14c9615f244
[xcp-ng-01 ~]# xe pbd-destroy uuid=6295519d-1071-2127-4313-f14c9615f244
[xcp-ng-01 ~]# xe pbd-create host-uuid=eb48f91d-9916-4542-9cf4-4a718abdc451 sr-uuid=505c1928-d39d-421c-1556-143f82770ff5 device-config:provisioning=thin device-config:redundancy=2 device-config:group-name=linstor_group/thin_device device-config:hosts=xcp-ng-01,xcp-ng-02
[xcp-ng-01 ~]# xe pbd-plug uuid=774971b4-dd03-18c8-92e5-32cac9bdc1e3

Do the same thing with the second PBD and everything is connected together.
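Since the `xe pbd-create` line has to be repeated for every host with the same device-config, a helper that only builds the command line can reduce typos. This is a sketch under my own naming: `pbd_create_cmd` and the `PROVISIONING`, `REDUNDANCY` and `GROUP_NAME` variables are illustrative, and their defaults simply mirror the values used above.

```shell
# Sketch: build the `xe pbd-create` command line for one host of a LINSTOR SR.
# It only prints the command; run it yourself once it looks right.
# Usage: pbd_create_cmd <host-uuid> <sr-uuid> <hostname> [<hostname>...]

pbd_create_cmd() {
    if [ "$#" -lt 3 ]; then
        echo "usage: pbd_create_cmd <host-uuid> <sr-uuid> <hostname>..." >&2
        return 2
    fi
    local host_uuid="$1" sr_uuid="$2" hosts
    shift 2
    hosts=$(IFS=,; echo "$*")   # join the remaining arguments with commas
    printf 'xe pbd-create host-uuid=%s sr-uuid=%s' "$host_uuid" "$sr_uuid"
    printf ' device-config:provisioning=%s' "${PROVISIONING:-thin}"
    printf ' device-config:redundancy=%s' "${REDUNDANCY:-2}"
    printf ' device-config:group-name=%s' "${GROUP_NAME:-linstor_group/thin_device}"
    printf ' device-config:hosts=%s\n' "$hosts"
}
```

Calling it with the host UUID, the SR UUID and the host names `xcp-ng-01 xcp-ng-02` prints the same `xe pbd-create` line as above.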

Not an easy task!

Imagine if I have 30 VMs... a lot of resources to be created...

-

RE: XOSTOR hyperconvergence preview

To be able to recreate and reconnect all the PBDs, I manually modified the /etc/hosts file to add each host with its IP. I know that @ronan-a is working on fixing the hostname addressing in the driver. But at least I can continue to test the scalability.

Looks promising!

-

RE: XOSTOR hyperconvergence preview

Hi,

@ronan-a said in XOSTOR hyperconvergence preview:

@dumarjo Could you open a ticket with a tunnel please? I can take a look. Also: I started a script this week to simplify the management of LINSTOR with add/remove commands.

Ticket opened with Vates.

-

RE: XOSTOR hyperconvergence preview

@ronan-a

Hi, I did some experiments and cannot find the missing piece of the puzzle to add my xcp-ng-04 host.

Since my last status update, my new xcp-ng-04 host is part of the pool and I have installed all the LINSTOR tools on it.

I checked the satellite and controller services on xcp-ng-04 and they are not running. I have no idea whether I need to start something manually or not.

Here is my SMlog on a freshly booted xcp-ng-04:

Mar 22 11:54:14 xcp-ng-04 SM: [2685] sr_attach {'sr_uuid': '38c2baa3-bc8f-fbc5-ef5a-42461db92c51', 'subtask_of': 'DummyRef:|b4ea936f-80bb-4a6b-98b9-66a95f86b006|SR.attach', 'args': [],$
Mar 22 11:54:14 xcp-ng-04 SMGC: [2685] === SR 38c2baa3-bc8f-fbc5-ef5a-42461db92c51: abort ===
Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: opening lock file /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/running
Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: opening lock file /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/gc_active
Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: tried lock /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/gc_active, acquired: True (exists: True)
Mar 22 11:54:14 xcp-ng-04 SMGC: [2685] abort: releasing the process lock
Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: released /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/gc_active
Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: opening lock file /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/sr
Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: acquired /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/running
Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: acquired /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/sr
Mar 22 11:54:14 xcp-ng-04 SM: [2685] RESET for SR 38c2baa3-bc8f-fbc5-ef5a-42461db92c51 (master: True)
Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: released /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/sr
Mar 22 11:54:14 xcp-ng-04 SM: [2685] lock: released /var/lock/sm/38c2baa3-bc8f-fbc5-ef5a-42461db92c51/running
Mar 22 11:54:14 xcp-ng-04 SM: [2685] set_dirty 'OpaqueRef:ea31ae92-4207-4c8b-8db6-da901c6a00a8' succeeded
Mar 22 11:54:14 xcp-ng-04 SM: [2709] sr_update {'sr_uuid': '38c2baa3-bc8f-fbc5-ef5a-42461db92c51', 'subtask_of': 'DummyRef:|ae4505d5-b9fe-4f39-92dc-67ab9d0b579b|SR.stat', 'args': [], '$
Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/sr
Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: acquired /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/sr
Mar 22 11:54:15 xcp-ng-04 SM: [2725] sr_attach {'sr_uuid': 'bef191f3-e976-94ec-6bb7-d87529a72dbb', 'subtask_of': 'DummyRef:|ae624d96-e91a-4a12-afd6-35593be4ce51|SR.attach', 'args': [],$
Mar 22 11:54:15 xcp-ng-04 SMGC: [2725] === SR bef191f3-e976-94ec-6bb7-d87529a72dbb: abort ===
Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active
Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: tried lock /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active, acquired: True (exists: True)
Mar 22 11:54:15 xcp-ng-04 SMGC: [2725] abort: releasing the process lock
Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: released /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active
Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: acquired /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
Mar 22 11:54:15 xcp-ng-04 SM: [2725] RESET for SR bef191f3-e976-94ec-6bb7-d87529a72dbb (master: False)
Mar 22 11:54:15 xcp-ng-04 SM: [2725] lock: released /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
Mar 22 11:54:16 xcp-ng-04 SM: [2725] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 0
Mar 22 11:54:19 xcp-ng-04 SM: [2725] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 1

On xcp-ng-02 (LINSTOR controller):

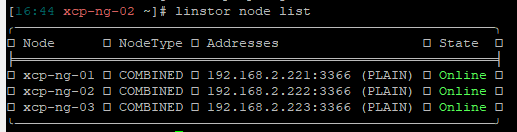

[11:42 xcp-ng-02 ~]# linstor node list
╭────────────────────────────────────────────────────────────╮
┊ Node      ┊ NodeType ┊ Addresses                  ┊ State  ┊
╞════════════════════════════════════════════════════════════╡
┊ xcp-ng-01 ┊ COMBINED ┊ 192.168.2.221:3366 (PLAIN) ┊ Online ┊
┊ xcp-ng-02 ┊ COMBINED ┊ 192.168.2.222:3366 (PLAIN) ┊ Online ┊
┊ xcp-ng-03 ┊ COMBINED ┊ 192.168.2.223:3366 (PLAIN) ┊ Online ┊
╰────────────────────────────────────────────────────────────╯

I list all the LINSTOR PBDs on the hosts:

[11:49 xcp-ng-01 ~]# xe pbd-list | grep linstor -3
uuid ( RO)                  : e75fdc51-29a4-aa57-bc44-459f80a0d230
             host-uuid ( RO): ad95c6ca-612d-42af-8909-d4e9dc7645bb
               sr-uuid ( RO): bef191f3-e976-94ec-6bb7-d87529a72dbb
         device-config (MRO): provisioning: thin; redundancy: 2; group-name: linstor_group/thin_device; hosts: xcp-ng-01,xcp-ng-02,xcp-ng-03
    currently-attached ( RO): true
--
uuid ( RO)                  : 063db650-55b1-a3e0-9d9a-e94ce938988d
             host-uuid ( RO): 5747f145-0dc2-4987-a6b9-b6c5a7ed0505
               sr-uuid ( RO): bef191f3-e976-94ec-6bb7-d87529a72dbb
         device-config (MRO): hosts: xcp-ng-01,xcp-ng-02,xcp-ng-03; group-name: linstor_group/thin_device; redundancy: 2; provisioning: thin
    currently-attached ( RO): false
--
uuid ( RO)                  : a1f876b1-0568-71ac-9ffb-720e626cb4ab
             host-uuid ( RO): e286a04a-69bf-4d59-a0c8-e7338e8c1831
               sr-uuid ( RO): bef191f3-e976-94ec-6bb7-d87529a72dbb
         device-config (MRO): provisioning: thin; redundancy: 2; group-name: linstor_group/thin_device; hosts: xcp-ng-01,xcp-ng-02,xcp-ng-03
    currently-attached ( RO): true

uuid ( RO)                  : 08564ab5-a518-f709-8527-f592c2592d14
             host-uuid ( RO): eb48f91d-9916-4542-9cf4-4a718abdc451
               sr-uuid ( RO): bef191f3-e976-94ec-6bb7-d87529a72dbb
         device-config (MRO): provisioning: thin; redundancy: 2; group-name: linstor_group/thin_device; hosts: xcp-ng-01,xcp-ng-02,xcp-ng-03
    currently-attached ( RO): true

After that, I remove the PBDs from all hosts so that I can recreate them on every host with the new xcp-ng-04 included:

xe pbd-create host-uuid=e286a04a-69bf-4d59-a0c8-e7338e8c1831 sr-uuid=bef191f3-e976-94ec-6bb7-d87529a72dbb device-config:provisioning=thin device-config:redundancy=2 device-config:group-name=linstor_group/thin_device device-config:hosts=xcp-ng-01,xcp-ng-02,xcp-ng-03,xcp-ng-04
xe pbd-create host-uuid=ad95c6ca-612d-42af-8909-d4e9dc7645bb sr-uuid=bef191f3-e976-94ec-6bb7-d87529a72dbb device-config:provisioning=thin device-config:redundancy=2 device-config:group-name=linstor_group/thin_device device-config:hosts=xcp-ng-01,xcp-ng-02,xcp-ng-03,xcp-ng-04
xe pbd-create host-uuid=eb48f91d-9916-4542-9cf4-4a718abdc451 sr-uuid=bef191f3-e976-94ec-6bb7-d87529a72dbb device-config:provisioning=thin device-config:redundancy=2 device-config:group-name=linstor_group/thin_device device-config:hosts=xcp-ng-01,xcp-ng-02,xcp-ng-03,xcp-ng-04
xe pbd-create host-uuid=5747f145-0dc2-4987-a6b9-b6c5a7ed0505 sr-uuid=bef191f3-e976-94ec-6bb7-d87529a72dbb device-config:provisioning=thin device-config:redundancy=2 device-config:group-name=linstor_group/thin_device device-config:hosts=xcp-ng-01,xcp-ng-02,xcp-ng-03,xcp-ng-04

All succeeded, with no error.

After that, I try to plug all the PBDs into the SR:

[11:13 xcp-ng-01 ~]# xe pbd-plug uuid=7d588c37-a152-9666-175e-91b2d48c150f
[11:13 xcp-ng-01 ~]# xe pbd-plug uuid=99f76235-1b1a-e5fa-bb19-3883737fcc6d
[11:13 xcp-ng-01 ~]# xe pbd-plug uuid=df727345-f475-b929-ecc1-b506f0053361
[11:13 xcp-ng-01 ~]# xe pbd-plug uuid=8b4ddc6f-e25a-1942-a435-345ccc93551a
Error code: SR_BACKEND_FAILURE_47
Error parameters: , The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']],

From there, I'm a bit lost...

1- Do I need to add xcp-ng-04 as a LINSTOR satellite before recreating all the PBDs?

2- Should I start any of the services on xcp-ng-04 before doing all this?

Regards
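To spot quickly which PBD is still unplugged, the record-style output of `xe pbd-list` shown above can be filtered with a few lines of awk. This is a minimal sketch: `unattached_pbds` is a name I made up, and it assumes the field layout matches the listing in this post.

```shell
# Sketch: read `xe pbd-list` output on stdin and print "<pbd-uuid> <host-uuid>"
# for every PBD whose currently-attached field is false.
# Assumes the record layout shown in this post (uuid, host-uuid, ...,
# currently-attached), with the value as the last word of each line.

unattached_pbds() {
    awk '
        $1 == "uuid"               { uuid = $NF }
        $1 == "host-uuid"          { host = $NF }
        $1 == "currently-attached" { if ($NF == "false") print uuid, host }
    '
}
```

For example, `xe pbd-list sr-uuid=bef191f3-e976-94ec-6bb7-d87529a72dbb | unattached_pbds` would print one line per unplugged PBD of that SR.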

-

RE: XOSTOR hyperconvergence preview

Is my understanding correct? An XCP-ng host cannot use a shared SR (based on LINSTOR) if it is not one of the LINSTOR nodes?

How can this new host become one of the nodes if I don't want to add an HDD/SSD to it? Can it be done?

Again, I want to know the limitations before considering this promising new technology!

-

RE: XOSTOR hyperconvergence preview

@ronan-a

Here is some more info:

Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/sr
Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: acquired /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/sr
Mar 10 17:05:22 xcp-ng-04 SM: [28002] sr_attach {'sr_uuid': 'bef191f3-e976-94ec-6bb7-d87529a72dbb', 'subtask_of': 'DummyRef:|79eb31a6-806c-4883-8e8d-de59cde66469|SR.at$
Mar 10 17:05:22 xcp-ng-04 SMGC: [28002] === SR bef191f3-e976-94ec-6bb7-d87529a72dbb: abort ===
Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: opening lock file /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active
Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: tried lock /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active, acquired: True (exists: True)
Mar 10 17:05:22 xcp-ng-04 SMGC: [28002] abort: releasing the process lock
Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: released /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/gc_active
Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: acquired /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
Mar 10 17:05:22 xcp-ng-04 SM: [28002] RESET for SR bef191f3-e976-94ec-6bb7-d87529a72dbb (master: False)
Mar 10 17:05:22 xcp-ng-04 SM: [28002] lock: released /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/running
Mar 10 17:05:23 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 0
Mar 10 17:05:27 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 1
Mar 10 17:05:30 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 2
Mar 10 17:05:33 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 3
Mar 10 17:05:37 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 4
Mar 10 17:05:40 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 5
Mar 10 17:05:43 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 6
Mar 10 17:05:47 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 7
Mar 10 17:05:50 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 8
Mar 10 17:05:53 xcp-ng-04 SM: [28002] Got exception: Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-02']. Retry number: 9
Mar 10 17:05:54 xcp-ng-04 SM: [28002] Raising exception [47, The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor:/$
Mar 10 17:05:54 xcp-ng-04 SM: [28002] lock: released /var/lock/sm/bef191f3-e976-94ec-6bb7-d87529a72dbb/sr
Mar 10 17:05:54 xcp-ng-04 SM: [28002] ***** generic exception: sr_attach: EXCEPTION <class 'SR.SROSError'>, The SR is not available [opterr=Error: Unable to connect to$
Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 110, in run
Mar 10 17:05:54 xcp-ng-04 SM: [28002] return self._run_locked(sr)
Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 159, in _run_locked
Mar 10 17:05:54 xcp-ng-04 SM: [28002] rv = self._run(sr, target)
Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 352, in _run
Mar 10 17:05:54 xcp-ng-04 SM: [28002] return sr.attach(sr_uuid)
Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/LinstorSR", line 489, in wrap
Mar 10 17:05:54 xcp-ng-04 SM: [28002] return load(self, *args, **kwargs)
Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/LinstorSR", line 415, in load
Mar 10 17:05:54 xcp-ng-04 SM: [28002] raise xs_errors.XenError('SRUnavailable', opterr=str(e))
Mar 10 17:05:54 xcp-ng-04 SM: [28002]
Mar 10 17:05:54 xcp-ng-04 SM: [28002] ***** LINSTOR resources on XCP-ng: EXCEPTION <class 'SR.SROSError'>, The SR is not available [opterr=Error: Unable to connect to $
Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 378, in run
Mar 10 17:05:54 xcp-ng-04 SM: [28002] ret = cmd.run(sr)
Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 110, in run
Mar 10 17:05:54 xcp-ng-04 SM: [28002] return self._run_locked(sr)
Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 159, in _run_locked
Mar 10 17:05:54 xcp-ng-04 SM: [28002] rv = self._run(sr, target)
Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/SRCommand.py", line 352, in _run
Mar 10 17:05:54 xcp-ng-04 SM: [28002] return sr.attach(sr_uuid)
Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/LinstorSR", line 489, in wrap
Mar 10 17:05:54 xcp-ng-04 SM: [28002] return load(self, *args, **kwargs)
Mar 10 17:05:54 xcp-ng-04 SM: [28002] File "/opt/xensource/sm/LinstorSR", line 415, in load
Mar 10 17:05:54 xcp-ng-04 SM: [28002] raise xs_errors.XenError('SRUnavailable', opterr=str(e))
Mar 10 17:05:54 xcp-ng-04 SM: [28002]

This is what I have in the log file. If you need more info, let me know.
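Most of this log is the same retry repeated, so a short filter that counts the failures per controller list makes it easier to read. A minimal sketch, assuming one log entry per line and the exact "Got exception" wording shown above; `controller_failures` is my own name for it.

```shell
# Sketch: read SMlog lines on stdin and print "<count> <controller list>"
# for the "Unable to connect to any of the given controller hosts" retries.
# Assumes one log entry per line, worded as in the excerpt above.

controller_failures() {
    awk '
        /Got exception: .*Unable to connect to any of the given controller hosts:/ {
            line = $0
            sub(/.*controller hosts: \[/, "", line)   # drop everything up to the list
            sub(/\].*/, "", line)                     # drop everything after the list
            count[line]++
        }
        END { for (c in count) print count[c], c }
    '
}
```

On the host, `controller_failures < /var/log/SMlog` gives one line per controller list with the number of failed attempts.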

-

RE: XOSTOR hyperconvergence preview

Hi,

Is VM HA supposed to work if the VM is hosted on an XOSTOR-based SR? I am trying to get it to work, but the VM never restarts when I shut down the host where it is running.

Regards,

-

RE: XOSTOR hyperconvergence preview

@ronan-a

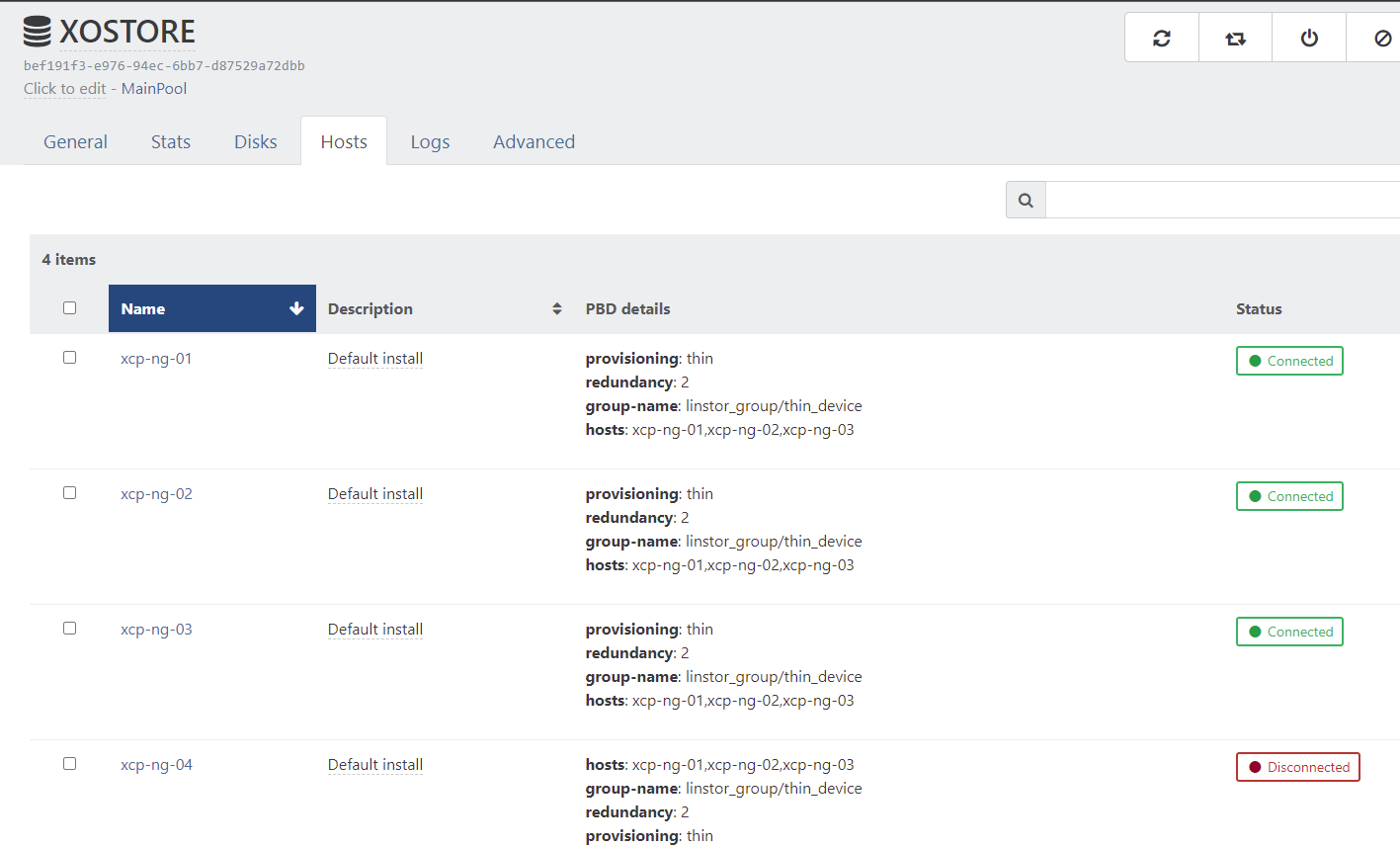

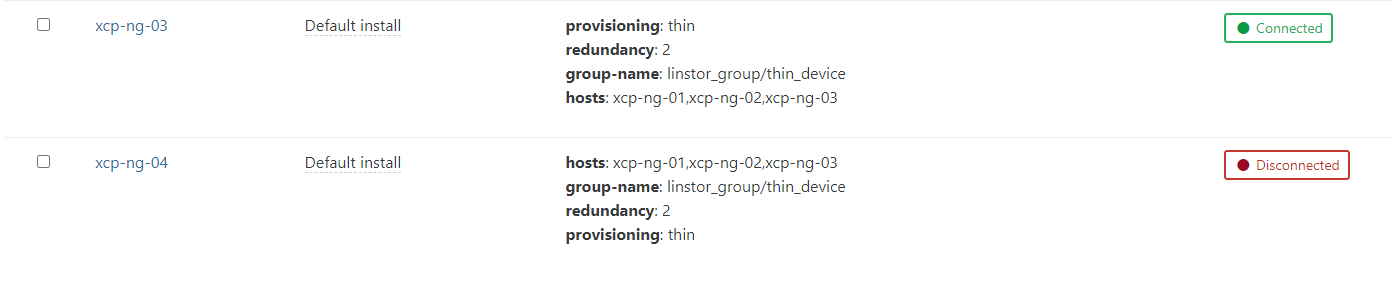

Another situation is that I cannot use the SR on any host that is not part of the group (linstor_group/thin_device). For now, I have my new xcp-ng-04 host joined to the pool, and I would like to connect the SR to it.

The first error I get is that the driver is not installed. So I installed all the packages used in the install script (without the disk initialization section) and tried to connect the SR to xcp-ng-04. Now I get these errors in the log file:

[12:33 xcp-ng-01 ~]# tail -f /var/log/xensource.log | grep linstor
Mar 2 12:34:10 xcp-ng-01 xapi: [debug||2306 HTTPS 192.168.2.94->:::80|host.call_plugin R:98517b08066b|audit] Host.call_plugin host = '8ac2930f-f826-4a18-8330-06153e3e4054 (xcp-ng-02)'; plugin = 'linstor-manager'; fn = 'hasControllerRunning' args = [ 'hidden' ]
Mar 2 12:34:10 xcp-ng-01 xapi: [debug||2033 HTTPS 192.168.2.94->:::80|host.call_plugin R:e39e097fe8cb|audit] Host.call_plugin host = '81b06c7f-df55-4628-b27f-4e1e7850f900 (xcp-ng-03)'; plugin = 'linstor-manager'; fn = 'hasControllerRunning' args = [ 'hidden' ]
Mar 2 12:34:11 xcp-ng-01 xapi: [debug||2306 HTTPS 192.168.2.94->:::80|host.call_plugin R:16602b9fb9d1|audit] Host.call_plugin host = '8ac2930f-f826-4a18-8330-06153e3e4054 (xcp-ng-02)'; plugin = 'linstor-manager'; fn = 'hasControllerRunning' args = [ 'hidden' ]
Mar 2 12:34:11 xcp-ng-01 xapi: [debug||2033 HTTPS 192.168.2.94->:::80|host.call_plugin R:20c5d5ce11dc|audit] Host.call_plugin host = '81b06c7f-df55-4628-b27f-4e1e7850f900 (xcp-ng-03)'; plugin = 'linstor-manager'; fn = 'hasControllerRunning' args = [ 'hidden' ]
.....
.....
Mar 2 12:34:43 xcp-ng-01 xapi: [error||6940 ||backtrace] Async.PBD.plug R:4bbaefcdb2cb failed with exception Server_error(SR_BACKEND_FAILURE_47, [ ; The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-03']]; ])
Mar 2 12:34:43 xcp-ng-01 xapi: [error||6940 ||backtrace] Raised Server_error(SR_BACKEND_FAILURE_47, [ ; The SR is not available [opterr=Error: Unable to connect to any of the given controller hosts: ['linstor://xcp-ng-03']]; ])

It looks like a controller needs to be started on all the hosts to be able to "connect" the new host?

Like I said, this is a new test to find out whether an XCP-ng host that is not part of the VG can use this SR.

I hope my intention is clear. I just clicked the disconnected button in the UI.

Regards,

-

RE: XOSTOR hyperconvergence preview

@ronan-a said in XOSTOR hyperconvergence preview:

- The PBDs of the current SR must be modified to use the new host, and a PBD must be created on the new host.

I'm relatively new to XCP-ng, and I'm a bit lost on this part. I'll give you more info on my current setup.

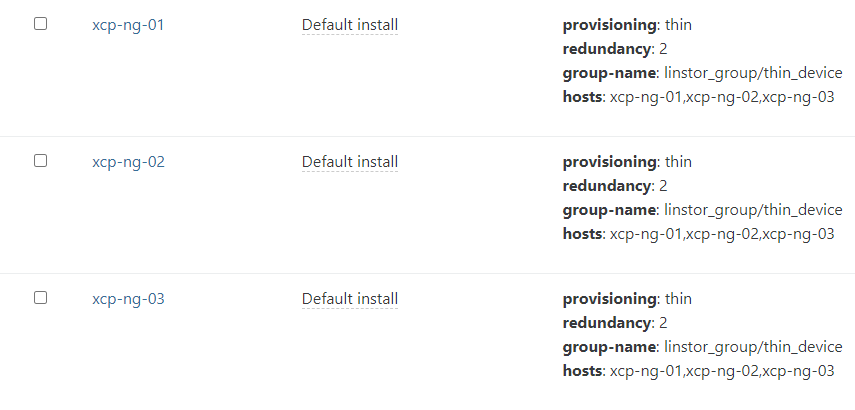

For now I have 3 hosts that are connected and working. I can install new VMs and start them on this SR.

The new host is already added to the pool (xcp-ng-04).

It's a little bit complex. So I think I will add a basic script to configure a new host or to remove an existing one. I do not recommend the usage of these commands, except in the case of tests.

Agreed. This would help a bit.

-

RE: XOSTOR hyperconvergence preview

@ronan-a I did this on all the servers and now the SR is up.

For the sake of scalability, is it possible to easily add a new host, or a new disk in a host? Do you have any tips about it?

Regards,

-

RE: XOSTOR hyperconvergence preview

I tried to do the setup with 3 hosts and followed the commands. Now I get this error when I try to create the SR:

xe sr-create type=linstor name-label=XOSTOR host-uuid=43b39fc0-002f-4347-a3e8-16e1284cfcb3 device-config:hosts=xcp-ng-01,xcp-ng-02,xcp-ng-03 device-config:group-name=linstor_group/thin_device device-config:redundancy=3 shared=true device-config:provisionning=thin
Error code: SR_BACKEND_FAILURE_5006
Error parameters: , LINSTOR SR creation error [opterr=Could not create SP `xcp-sr-linstor_group_thin_device` on node `xcp-ng-01`: (Node: 'xcp-ng-01') Expected 3 columns, but got 2],

I have no idea what to do with this error. It looks like an error when inserting into the DB.

-

RE: XOSTOR hyperconvergence preview

Hi,

I have been able to create a small lab with 2 XCP-ng hosts and would like to use XOSTOR. First, on the first XCP-ng box (xcp-ng-01), I created a new XOSTOR SR with all the commands above. Everything is working: I have a new SR with 1 host only.

Now, if I want to add a new host to the SR, how can I do this? I would like to simulate adding a new host/disk to the SR. Is it possible?