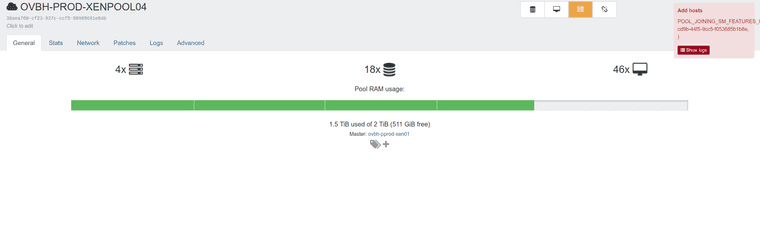

I don't have any asks at the moment, but I thought I would share the Terraform plan we have been using for a while now to create k8s clusters.

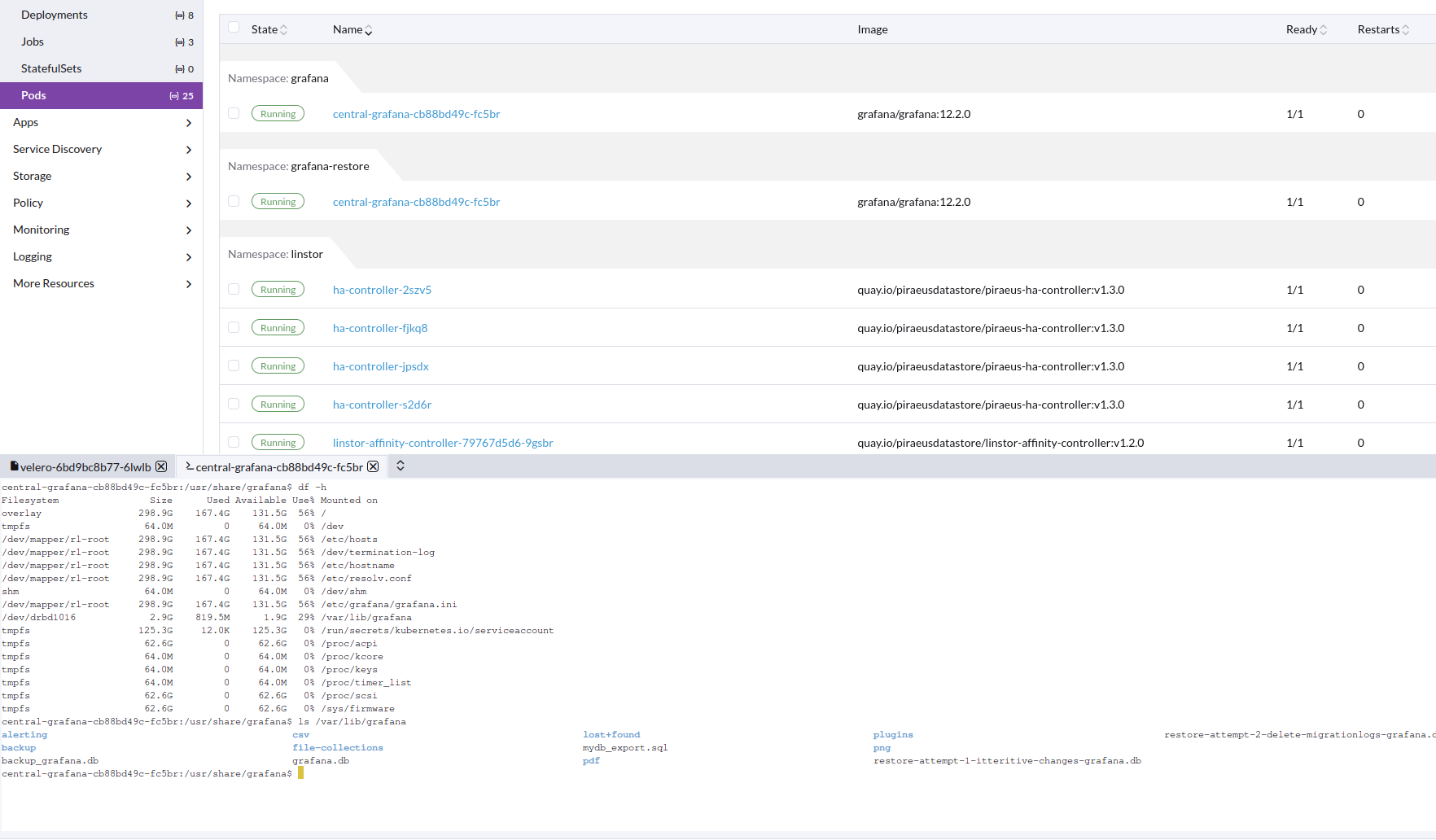

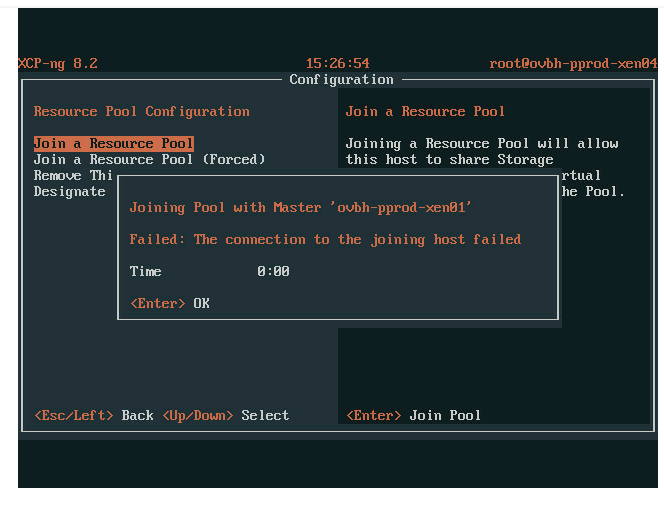

It has grown over time and may be a bit messy, but I figured it was better than nothing. We use this for RKE2 Rancher k8s clusters deployed onto our XCP-ng cluster. We use XOSTOR for drives, and the VLAN5 network is for the Piraeus operator to use for PVs. We also use IPVS. The VMs are built from a Rocky Linux 9 VM template.

If these are useful to anyone and they have questions I will do my best to answer.

```hcl
variable "pool" {
  default = "OVBH-PROD-XENPOOL04"
}

variable "network0" {
  default = "Native vRack"
}

variable "network1" {
  default = "VLAN80"
}

variable "network2" {
  default = "VLAN5"
}

variable "cluster_name" {
  default = "Production K8s Cluster"
}

variable "enrollment_command" {
  default = "curl -fL https://rancher.<redacted>.net/system-agent-install.sh | sudo sh -s - --server https://rancher.<redacted>.net --label 'cattle.io/os=linux' --token <redacted>"
}

variable "node_type" {
  description = "Node type flag"
  default = {
    "1" = "--etcd --controlplane",
    "2" = "--etcd --controlplane",
    "3" = "--etcd --controlplane",
    "4" = "--worker",
    "5" = "--worker",
    "6" = "--worker",
    "7" = "--worker --taints smtp=true:NoSchedule",
    "8" = "--worker --taints smtp=true:NoSchedule",
    "9" = "--worker --taints smtp=true:NoSchedule"
  }
}

variable "node_networks" {
  description = "Node network flag"
  default = {
    "1" = "--internal-address 10.1.8.100 --address <redacted>",
    "2" = "--internal-address 10.1.8.101 --address <redacted>",
    "3" = "--internal-address 10.1.8.102 --address <redacted>",
    "4" = "--internal-address 10.1.8.103 --address <redacted>",
    "5" = "--internal-address 10.1.8.104 --address <redacted>",
    "6" = "--internal-address 10.1.8.105 --address <redacted>",
    "7" = "--internal-address 10.1.8.106 --address <redacted>",
    "8" = "--internal-address 10.1.8.107 --address <redacted>",
    "9" = "--internal-address 10.1.8.108 --address <redacted>"
  }
}

variable "vm_name" {
  description = "VM name per node"
  default = {
    "1" = "OVBH-VPROD-K8S01-MASTER01",
    "2" = "OVBH-VPROD-K8S01-MASTER02",
    "3" = "OVBH-VPROD-K8S01-MASTER03",
    "4" = "OVBH-VPROD-K8S01-WORKER01",
    "5" = "OVBH-VPROD-K8S01-WORKER02",
    "6" = "OVBH-VPROD-K8S01-WORKER03",
    "7" = "OVBH-VPROD-K8S01-WORKER04",
    "8" = "OVBH-VPROD-K8S01-WORKER05",
    "9" = "OVBH-VPROD-K8S01-WORKER06"
  }
}
```

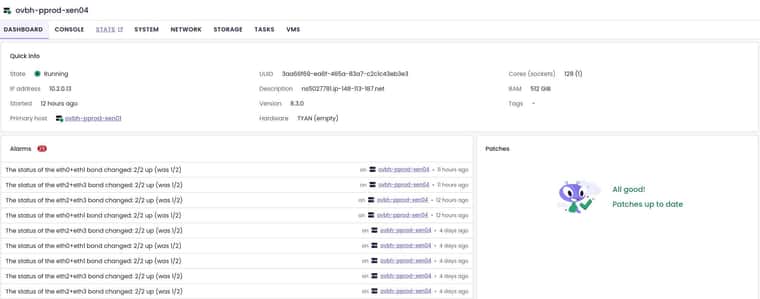

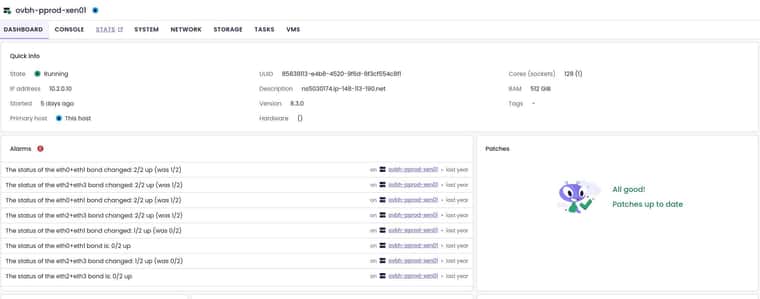

```hcl
variable "preferred_host" {
  default = {
    "1" = "85838113-e4b8-4520-9f6d-8f3cf554c8f1",
    "2" = "783c27ac-2dcb-4798-9ca8-27f5f30791f6",
    "3" = "c03e1a45-4c4c-46f5-a2a1-d8de2e22a866",
    "4" = "85838113-e4b8-4520-9f6d-8f3cf554c8f1",
    "5" = "783c27ac-2dcb-4798-9ca8-27f5f30791f6",
    "6" = "c03e1a45-4c4c-46f5-a2a1-d8de2e22a866",
    "7" = "85838113-e4b8-4520-9f6d-8f3cf554c8f1",
    "8" = "783c27ac-2dcb-4798-9ca8-27f5f30791f6",
    "9" = "c03e1a45-4c4c-46f5-a2a1-d8de2e22a866"
  }
}

variable "xoa_admin_password" {
}

variable "host_count" {
  description = "SR ID per node; all drives go to xostor"
  default = {
    "1" = "479ca676-20a1-4051-7189-a4a9ca47e00d",
    "2" = "479ca676-20a1-4051-7189-a4a9ca47e00d",
    "3" = "479ca676-20a1-4051-7189-a4a9ca47e00d",
    "4" = "479ca676-20a1-4051-7189-a4a9ca47e00d",
    "5" = "479ca676-20a1-4051-7189-a4a9ca47e00d",
    "6" = "479ca676-20a1-4051-7189-a4a9ca47e00d",
    "7" = "479ca676-20a1-4051-7189-a4a9ca47e00d",
    "8" = "479ca676-20a1-4051-7189-a4a9ca47e00d",
    "9" = "479ca676-20a1-4051-7189-a4a9ca47e00d"
  }
}

variable "network1_ip_mapping" {
  description = "Mapping for network1 ips, vlan80"
  default = {
    "1" = "10.1.8.100",
    "2" = "10.1.8.101",
    "3" = "10.1.8.102",
    "4" = "10.1.8.103",
    "5" = "10.1.8.104",
    "6" = "10.1.8.105",
    "7" = "10.1.8.106",
    "8" = "10.1.8.107",
    "9" = "10.1.8.108"
  }
}

variable "network1_gateway" {
  description = "Gateway for network1, vlan80"
  default     = "10.1.8.1"
}

variable "network1_prefix" {
  description = "Prefix for the network used"
  default     = "22"
}

variable "network2_ip_mapping" {
  description = "Mapping for network2 ips, VLAN5"
  default = {
    "1" = "10.2.5.30",
    "2" = "10.2.5.31",
    "3" = "10.2.5.32",
    "4" = "10.2.5.33",
    "5" = "10.2.5.34",
    "6" = "10.2.5.35",
    "7" = "10.2.5.36",
    "8" = "10.2.5.37",
    "9" = "10.2.5.38"
  }
}

variable "network2_prefix" {
  description = "Prefix for the network used"
  default     = "22"
}

variable "network0_ip_mapping" {
  description = "Mapping for network0 ips, public"
  default = {
    <redacted>
  }
}

variable "network0_gateway" {
  description = "Mapping for public ip gateways, from hosts"
  default = {
    <redacted>
  }
}

variable "network0_prefix" {
  description = "Prefix for the network used"
  default = {
    <redacted>
  }
}
```
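A side note on the variables: `xoa_admin_password` and the token embedded in `enrollment_command` are secrets. Below is a minimal sketch of how those two could be declared so their values are redacted in plan output. It assumes Terraform 0.14 or later, where `sensitive` is available on variables. The `type` constraints and the removal of the default are my additions, not part of the plan as posted, and these blocks would replace the existing declarations rather than sit next to them.

```hcl
# Sketch only: same variable names as the plan, with type and sensitive added.
variable "xoa_admin_password" {
  type      = string
  sensitive = true # value is redacted in plan/apply output
}

variable "enrollment_command" {
  type      = string
  sensitive = true # the command embeds the Rancher registration token
}
```

With no default, the values have to be supplied at run time, for example through the `TF_VAR_xoa_admin_password` and `TF_VAR_enrollment_command` environment variables. Note that `sensitive` only affects what Terraform prints; the values still end up in the state file.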

```hcl
# Instruct terraform to download the provider on `terraform init`
terraform {
  required_providers {
    xenorchestra = {
      source  = "vatesfr/xenorchestra"
      version = "~> 0.29.0"
    }
  }
}

# Configure the XenServer Provider
provider "xenorchestra" {
  # Must be ws or wss
  url      = "ws://10.2.0.5"        # Or set XOA_URL environment variable
  username = "admin@admin.net"      # Or set XOA_USER environment variable
  password = var.xoa_admin_password # Or set XOA_PASSWORD environment variable
}

data "xenorchestra_pool" "pool" {
  name_label = var.pool
}

data "xenorchestra_template" "template" {
  name_label = "Rocky Linux 9 Template"
  pool_id    = data.xenorchestra_pool.pool.id
}

data "xenorchestra_network" "net1" {
  name_label = var.network1
  pool_id    = data.xenorchestra_pool.pool.id
}

data "xenorchestra_network" "net2" {
  name_label = var.network2
  pool_id    = data.xenorchestra_pool.pool.id
}

data "xenorchestra_network" "net0" {
  name_label = var.network0
  pool_id    = data.xenorchestra_pool.pool.id
}

resource "xenorchestra_cloud_config" "node" {
  count    = 9
  name     = "${lower(lookup(var.vm_name, count.index + 1))}_cloud_config"
  template = <<EOF
#cloud-config
ssh_authorized_keys:
  - ssh-rsa <redacted>
write_files:
  - path: /etc/NetworkManager/conf.d/rke2-canal.conf
    permissions: '0755'
    owner: root
    content: |
      [keyfile]
      unmanaged-devices=interface-name:cali*;interface-name:flannel*
  - path: /tmp/selinux_kmod_drbd.log
    permissions: '0640'
    owner: root
    content: |
      type=AVC msg=audit(1661803314.183:778): avc: denied { module_load } for pid=148256 comm="insmod" path="/tmp/ko/drbd.ko" dev="overlay" ino=101839829 scontext=system_u:system_r:unconfined_service_t:s0 tcontext=system_u:object_r:var_lib_t:s0 tclass=system permissive=0
      type=AVC msg=audit(1661803314.185:779): avc: denied { module_load } for pid=148257 comm="insmod" path="/tmp/ko/drbd_transport_tcp.ko" dev="overlay" ino=101839831 scontext=system_u:system_r:unconfined_service_t:s0 tcontext=system_u:object_r:var_lib_t:s0 tclass=system permissive=0
  - path: /etc/sysconfig/modules/ipvs.modules
    permissions: 0755
    owner: root
    content: |
      #!/bin/bash
      modprobe -- ip_vs
      modprobe -- ip_vs_rr
      modprobe -- ip_vs_wrr
      modprobe -- ip_vs_sh
      modprobe -- nf_conntrack
  - path: /etc/modules-load.d/ipvs.conf
    permissions: 0755
    owner: root
    content: |
      ip_vs
      ip_vs_rr
      ip_vs_wrr
      ip_vs_sh
      nf_conntrack
#cloud-init
runcmd:
  - sudo hostnamectl set-hostname --static ${lower(lookup(var.vm_name, count.index + 1))}.<redacted>.com
  - sudo hostnamectl set-hostname ${lower(lookup(var.vm_name, count.index + 1))}.<redacted>.com
  - nmcli -t -f NAME con show | xargs -d '\n' -I {} nmcli con delete "{}"
  - nmcli con add type ethernet con-name public ifname enX0
  - nmcli con mod public ipv4.address '${lookup(var.network0_ip_mapping, count.index + 1)}/${lookup(var.network0_prefix, count.index + 1)}'
  - nmcli con mod public ipv4.method manual
  - nmcli con mod public ipv4.ignore-auto-dns yes
  - nmcli con mod public ipv4.gateway '${lookup(var.network0_gateway, count.index + 1)}'
  - nmcli con mod public ipv4.dns "8.8.8.8 8.8.4.4"
  - nmcli con mod public connection.autoconnect true
  - nmcli con up public
  - nmcli con add type ethernet con-name vlan80 ifname enX1
  - nmcli con mod vlan80 ipv4.address '${lookup(var.network1_ip_mapping, count.index + 1)}/${var.network1_prefix}'
  - nmcli con mod vlan80 ipv4.method manual
  - nmcli con mod vlan80 ipv4.ignore-auto-dns yes
  - nmcli con mod vlan80 ipv4.ignore-auto-routes yes
  - nmcli con mod vlan80 ipv4.gateway '${var.network1_gateway}'
  - nmcli con mod vlan80 ipv4.dns "${var.network1_gateway}"
  - nmcli con mod vlan80 connection.autoconnect true
  - nmcli con mod vlan80 ipv4.never-default true
  - nmcli con mod vlan80 ipv6.never-default true
  - nmcli con mod vlan80 ipv4.routes "10.0.0.0/8 ${var.network1_gateway}"
  - nmcli con up vlan80
  - nmcli con add type ethernet con-name vlan5 ifname enX2
  - nmcli con mod vlan5 ipv4.address '${lookup(var.network2_ip_mapping, count.index + 1)}/${var.network2_prefix}'
  - nmcli con mod vlan5 ipv4.method manual
  - nmcli con mod vlan5 ipv4.ignore-auto-dns yes
  - nmcli con mod vlan5 ipv4.ignore-auto-routes yes
  - nmcli con mod vlan5 connection.autoconnect true
  - nmcli con mod vlan5 ipv4.never-default true
  - nmcli con mod vlan5 ipv6.never-default true
  - nmcli con up vlan5
  - systemctl restart NetworkManager
  - dnf upgrade -y
  - dnf install ipset ipvsadm -y
  - bash /etc/sysconfig/modules/ipvs.modules
  - dnf install chrony -y
  - sudo systemctl enable --now chronyd
  - yum install kernel-devel kernel-headers -y
  - yum install elfutils-libelf-devel -y
  - swapoff -a
  - modprobe -- ip_tables
  - systemctl disable --now firewalld.service
  - systemctl disable --now rngd
  - dnf config-manager --add-repo=https://download.docker.com/linux/centos/docker-ce.repo
  - dnf install containerd.io tar -y
  - dnf install policycoreutils-python-utils -y
  - cat /tmp/selinux_kmod_drbd.log | sudo audit2allow -M insmoddrbd
  - sudo semodule -i insmoddrbd.pp
  - ${var.enrollment_command} ${lookup(var.node_type, count.index + 1)} ${lookup(var.node_networks, count.index + 1)}
bootcmd:
  - swapoff -a
  - modprobe -- ip_tables
EOF
}
```
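The cloud-config resource hard-codes `count = 9` and looks everything up with `count.index + 1`. As a small sketch, the count could instead be derived from the `vm_name` map, so that adding a tenth entry to the maps would not require touching this resource. `node_count` and `node_names` are hypothetical local names that do not exist in the plan as posted.

```hcl
locals {
  # Number of nodes follows the size of the vm_name map.
  node_count = length(var.vm_name)

  # Lower-cased VM names in node order ("1", "2", ...), matching the
  # lookup(var.vm_name, count.index + 1) pattern used in the plan.
  node_names = [
    for i in range(local.node_count) : lower(var.vm_name[tostring(i + 1)])
  ]
}
```

With this in place, `count = local.node_count` and `name = "${local.node_names[count.index]}_cloud_config"` should be equivalent to the current expressions. The three VM resources would still carry their own fixed counts and offsets.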

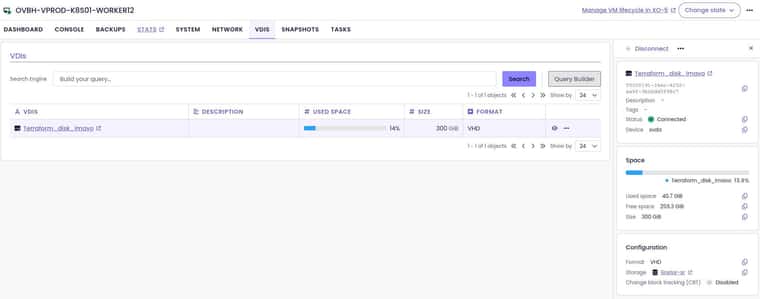

```hcl
# Control plane / etcd nodes (entries 1-3 in the variable maps)
resource "xenorchestra_vm" "master" {
  count            = 3
  cpus             = 4
  memory_max       = 8589934592 # 8 GiB
  cloud_config     = xenorchestra_cloud_config.node[count.index].template
  name_label       = lookup(var.vm_name, count.index + 1)
  name_description = "${var.cluster_name} master"
  template         = data.xenorchestra_template.template.id
  auto_poweron     = true
  affinity_host    = lookup(var.preferred_host, count.index + 1)

  network {
    network_id = data.xenorchestra_network.net0.id
  }

  network {
    network_id = data.xenorchestra_network.net1.id
  }

  network {
    network_id = data.xenorchestra_network.net2.id
  }

  disk {
    sr_id      = lookup(var.host_count, count.index + 1)
    name_label = "Terraform_disk_imavo"
    size       = 107374182400 # 100 GiB
  }
}

# General workers (entries 4-6 in the variable maps)
resource "xenorchestra_vm" "worker" {
  count            = 3
  cpus             = 32
  memory_max       = 68719476736 # 64 GiB
  cloud_config     = xenorchestra_cloud_config.node[count.index + 3].template
  name_label       = lookup(var.vm_name, count.index + 3 + 1)
  name_description = "${var.cluster_name} worker"
  template         = data.xenorchestra_template.template.id
  auto_poweron     = true
  affinity_host    = lookup(var.preferred_host, count.index + 3 + 1)

  network {
    network_id = data.xenorchestra_network.net0.id
  }

  network {
    network_id = data.xenorchestra_network.net1.id
  }

  network {
    network_id = data.xenorchestra_network.net2.id
  }

  disk {
    sr_id      = lookup(var.host_count, count.index + 3 + 1)
    name_label = "Terraform_disk_imavo"
    size       = 322122547200 # 300 GiB
  }
}

# SMTP-tainted workers (entries 7-9 in the variable maps)
resource "xenorchestra_vm" "smtp" {
  count            = 3
  cpus             = 4
  memory_max       = 8589934592 # 8 GiB
  cloud_config     = xenorchestra_cloud_config.node[count.index + 6].template
  name_label       = lookup(var.vm_name, count.index + 6 + 1)
  name_description = "${var.cluster_name} smtp worker"
  template         = data.xenorchestra_template.template.id
  auto_poweron     = true
  affinity_host    = lookup(var.preferred_host, count.index + 6 + 1)

  network {
    network_id = data.xenorchestra_network.net0.id
  }

  network {
    network_id = data.xenorchestra_network.net1.id
  }

  network {
    network_id = data.xenorchestra_network.net2.id
  }

  disk {
    sr_id      = lookup(var.host_count, count.index + 6 + 1)
    name_label = "Terraform_disk_imavo"
    size       = 53687091200 # 50 GiB
  }
}
```
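The `memory_max` and disk `size` values in the VM resources are raw byte counts, which are hard to read at a glance. A rough sketch of how they could be written as GiB multiples instead is below. The local names (`gib`, `master_memory`, and so on) are hypothetical and not part of the plan as posted; the products match the literal values used in the resources.

```hcl
locals {
  gib = 1024 * 1024 * 1024 # bytes in one GiB

  master_memory = 8 * local.gib   # 8589934592, also used by the smtp workers
  worker_memory = 64 * local.gib  # 68719476736
  master_disk   = 100 * local.gib # 107374182400
  worker_disk   = 300 * local.gib # 322122547200
  smtp_disk     = 50 * local.gib  # 53687091200
}
```

A resource would then use, for example, `memory_max = local.worker_memory` and `size = local.worker_disk`, which makes resizing a node class a one-line change.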
