@dthenot said:

you will also likely need to give the host-uuid of the host the disk is on

Thanks. I'm assuming this is either the UUID or the Machine ID from:

[14:45 xcp-kbbn NAS]# dmidecode --type SYSTEM

# dmidecode 3.0

Getting SMBIOS data from sysfs.

SMBIOS 3.3.0 present.

# SMBIOS implementations newer than version 3.0 are not

# fully supported by this version of dmidecode.

Handle 0x0001, DMI type 1, 27 bytes

System Information

Manufacturer: ASUS

Product Name: System Product Name

Version: System Version

Serial Number: System Serial Number

UUID: E7AE9723-CB08-A278-5C45-7C10C9473BD0

Wake-up Type: Power Switch

SKU Number: SKU

Family: To be filled by O.E.M.

OR

[12:46 xcp-kbbn NAS]# hostnamectl

Static hostname: xcp-kbbn

Icon name: computer-desktop

Chassis: desktop

Machine ID: c668f529e66c42b9815fe12f3401df82

Boot ID: dd70b200b25341c688aee9fbc58d20a2

Virtualization: xen

Operating System: XCP-ng 8.3

Kernel: Linux 4.19.0+1

Architecture: x86-64

So the command would look like this, correct?

xe sr-create type=udev device-config:location=/srv/NAS name-label="NAS Disks" host-uuid=E7AE9723-CB08-A278-5C45-7C10C9473BD0 or c668f529e66c42b9815fe12f3401df82

UPDATE: I tried the new command but I got these error messages:

[14:45 xcp-kbbn NAS]# xe sr-create type=udev device-config:location=/srv/NAS name-label="NAS Disks" host-uuid=E7AE9723-CB08-A278-5C45-7C10C9473BD0

The uuid you supplied was invalid.

type: host

uuid: E7AE9723-CB08-A278-5C45-7C10C9473BD0

[15:14 xcp-kbbn NAS]# ls

[15:19 xcp-kbbn NAS]# xe sr-create type=udev device-config:location=/srv/NAS name-label="NAS Disks" host-uuid=c668f529e66c42b9815fe12f3401df82

The uuid you supplied was invalid.

type: host

uuid: c668f529e66c42b9815fe12f3401df82

Then it succeeded (I believe, pending your confirmation) when I left out the host-uuid param:

[15:19 xcp-kbbn NAS]# xe sr-create type=udev device-config:location=/srv/NAS name-label="NAS Disks"

b802722e-67ac-5aba-8d6c-e565d2d7fa0d

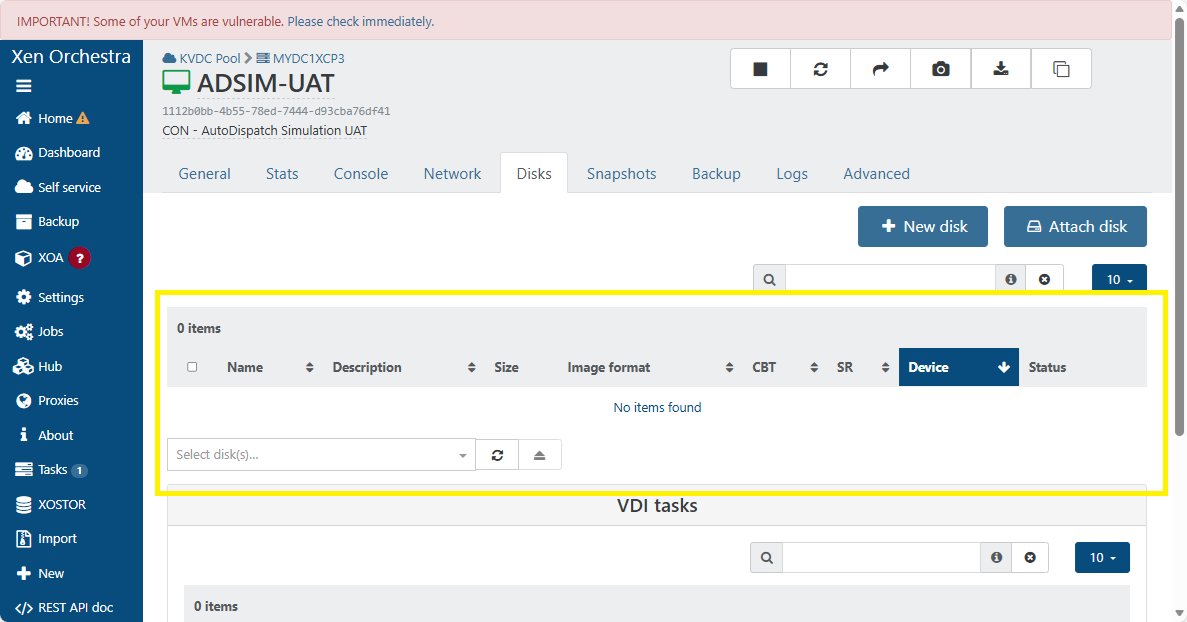

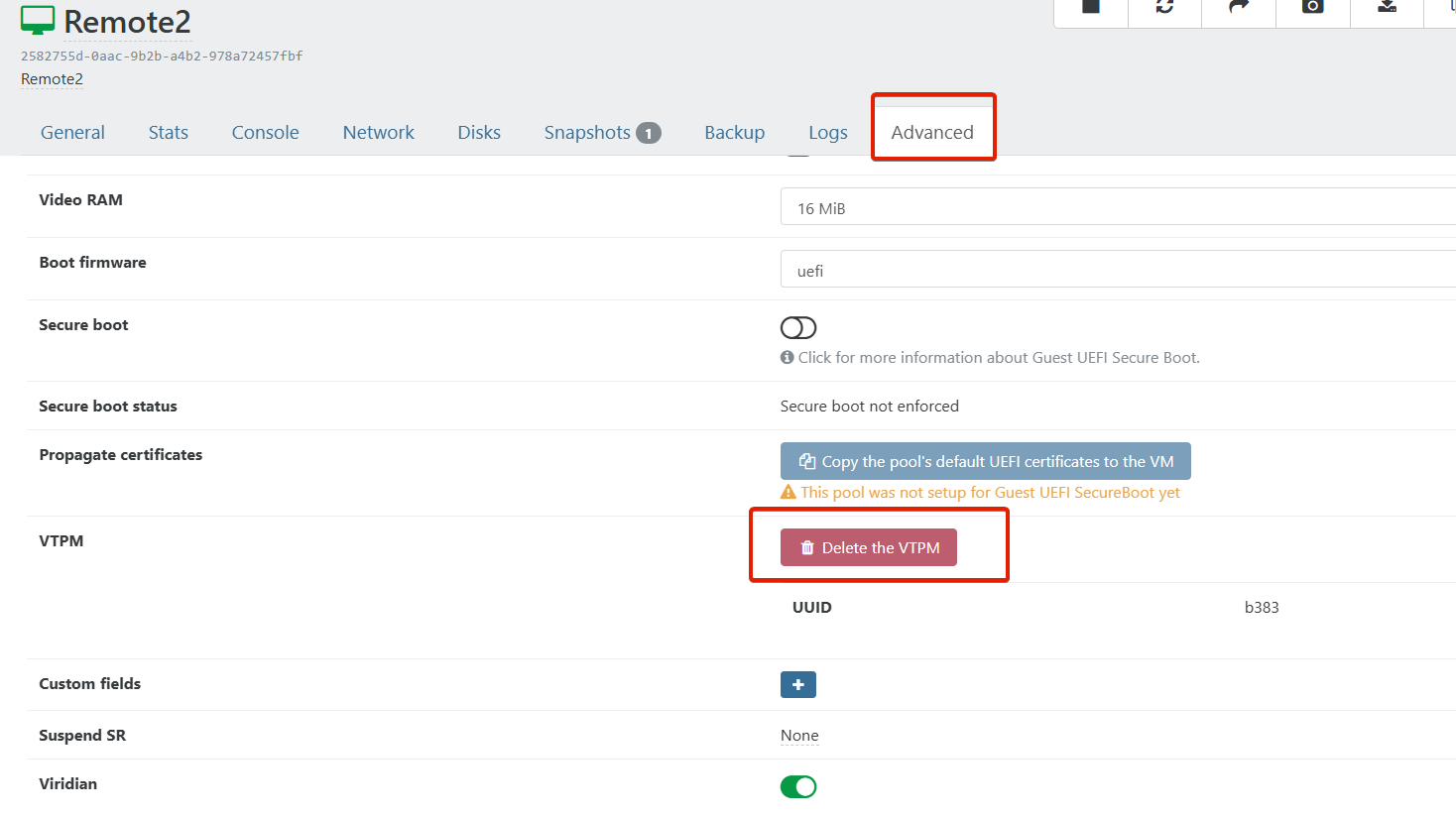

and then it was listed as an SR:

[image: 1779139583485-screenshot-from-2026-05-18-15-24-03.png]

Now on to the next command:

xe sr-scan uuid=b802722e-67ac-5aba-8d6c-e565d2d7fa0d

It was successful, but I have another question: the size of the device is not showing. Is this normal, given that the SR was created this way?

UPDATE 2.0: I just discovered the xe command:

[15:27 xcp-kbbn NAS]# xe host-list

uuid ( RO) : 9a5e5bb3-bdb9-41ea-9505-6f6fe630f369

name-label ( RW): xcp-kbbn

This uuid does not match either of the ones I tried above. Do I need to remove the SR and create it again using this uuid? I will give it a try using another folder...
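For reference, the value that `host-uuid` expects is the one XAPI reports in `xe host-list`, not the SMBIOS UUID from dmidecode or the systemd Machine ID. A minimal sketch of fetching it in one step, assuming the host's name-label is known; the function name `host_uuid_by_label` is purely illustrative:

```shell
# Hypothetical helper: print the XAPI UUID of the host with the given name-label.
# --minimal makes xe print only the value(s), without the field names.
host_uuid_by_label() {
    xe host-list name-label="$1" --minimal
}

# Usage on the host itself (not executed here):
#   HOST_UUID=$(host_uuid_by_label xcp-kbbn)
#   xe sr-create type=udev device-config:location=/srv/NAS \
#       name-label="NAS Disks" host-uuid="$HOST_UUID"
```

On a single-host pool this should return exactly one UUID; with several hosts sharing a name-label it would return a comma-separated list, so the label needs to be unique.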

UPDATE 2.1: I executed the command using the above uuid:

[15:35 xcp-kbbn srv]# xe sr-create type=udev device-config:location=/srv/NAS name-label="NAS Disks" host-uuid=9a5e5bb3-bdb9-41ea-9505-6f6fe630f369

6fc531a0-19a0-b9c1-c78e-c687fce5ff84

It was successful, but my question about the device size remains: is it normal for the size to be missing when the SR is created this way?

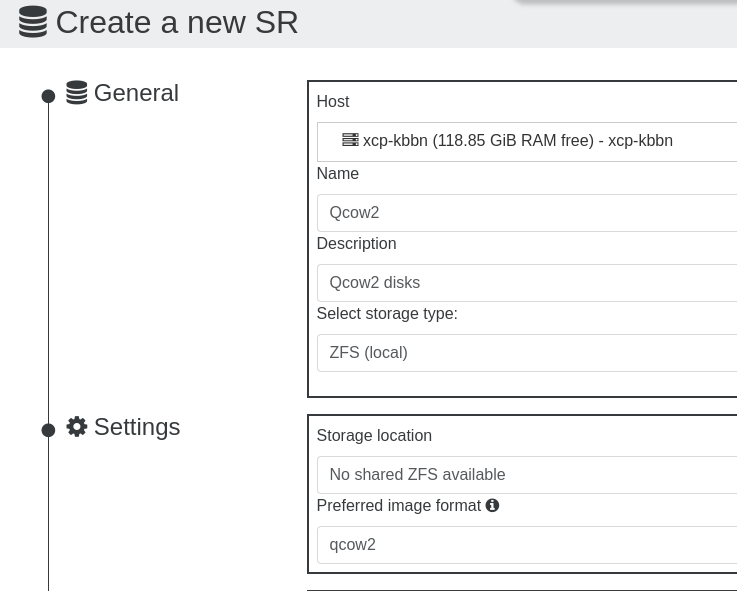
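One way to check from the CLI whether the SR contains any disks yet, and what size XAPI sees for them, is to list its VDIs. This is only a sketch; `sr_vdi_sizes` is an illustrative name, and the SR UUID is the one printed by `xe sr-create`:

```shell
# Hypothetical helper: list the VDIs XAPI knows about in a given SR,
# showing only the uuid, name-label and virtual-size fields.
sr_vdi_sizes() {
    xe vdi-list sr-uuid="$1" params=uuid,name-label,virtual-size
}

# Usage on the host (not executed here):
#   sr_vdi_sizes 6fc531a0-19a0-b9c1-c78e-c687fce5ff84
```

An empty result would suggest that nothing has been linked into the SR location and scanned yet, which would also explain a missing size.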

UPDATE 2.2: I forgot to do:

ln -s /dev/sda /srv/NAS/sda #although it might be better to use a stable identifier if you have multiple disks

xe sr-scan uuid=<UUID of the udev SR>
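As a sketch of the "stable identifier" idea from the comment above: instead of linking `/dev/sda` directly, the link can point at the disk's entry under `/dev/disk/by-id`, which should keep the same name across reboots. The helper name `link_disk` and the example disk name are hypothetical, and I am assuming the udev SR follows a link that itself points at a by-id symlink:

```shell
# Hypothetical helper: create (or refresh) a symlink for one disk inside
# the udev SR location, named after the device path's last component.
link_disk() {
    dest_dir="$1"
    dev="$2"
    if [ ! -e "$dev" ]; then
        echo "no such device: $dev" >&2
        return 1
    fi
    mkdir -p "$dest_dir"
    ln -sfn "$dev" "$dest_dir/$(basename "$dev")"
}

# Usage on the host (not executed here; the by-id name is a placeholder):
#   link_disk /srv/NAS /dev/disk/by-id/ata-EXAMPLE_MODEL_SERIAL
#   xe sr-scan uuid=<UUID of the udev SR>
```

Linking disks one at a time, rather than everything under `/dev/disk/by-id`, avoids accidentally exposing the system disk or partition entries to the SR.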

be right back.....

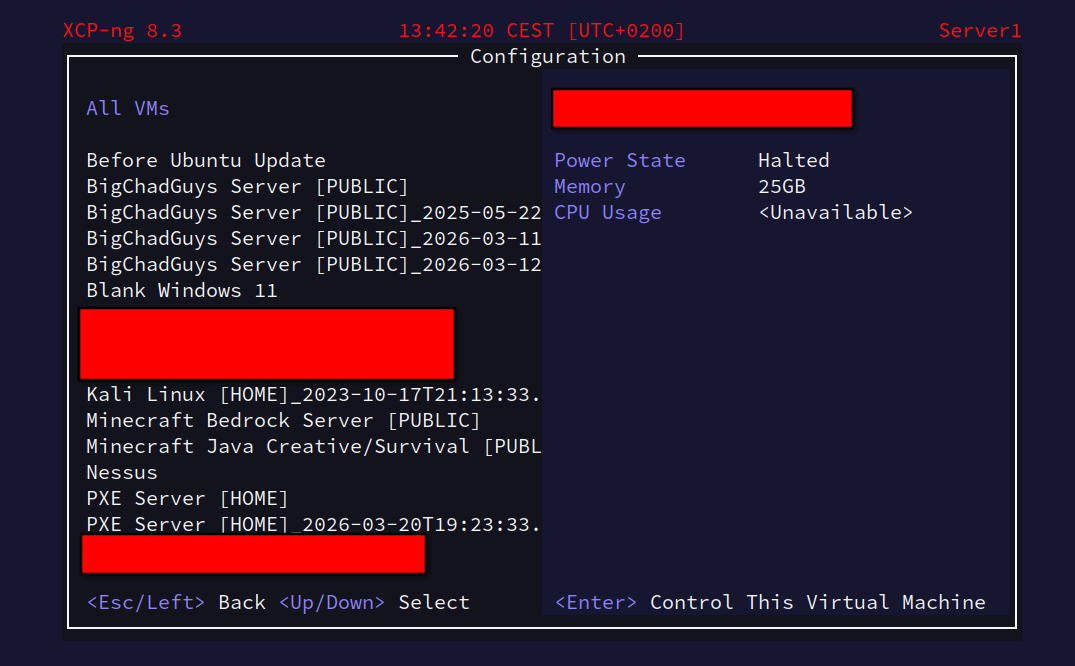

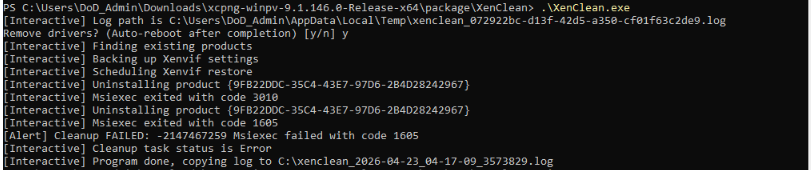

UPDATE 3.0: Success! I have all HDs attached to my VM, I can access all the files, and the sizes are reported:

[image: 1779147782991-screenshot-from-2026-05-18-17-25-38.png]

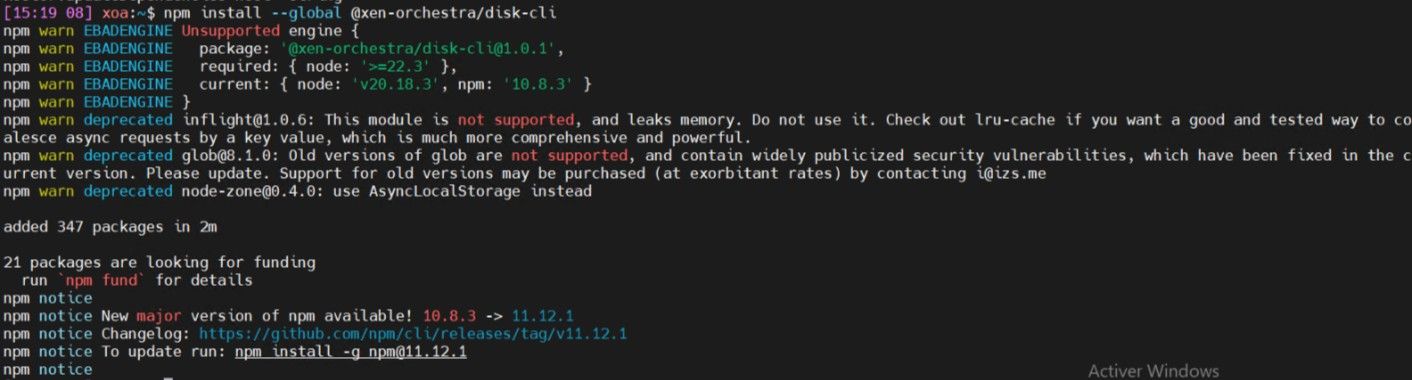

List of attached disks:

[image: 1779147797114-screenshot-from-2026-05-18-17-18-07.png]

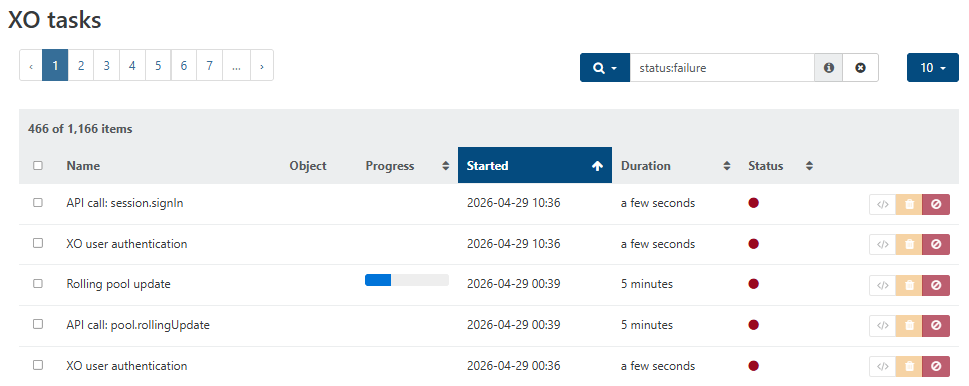

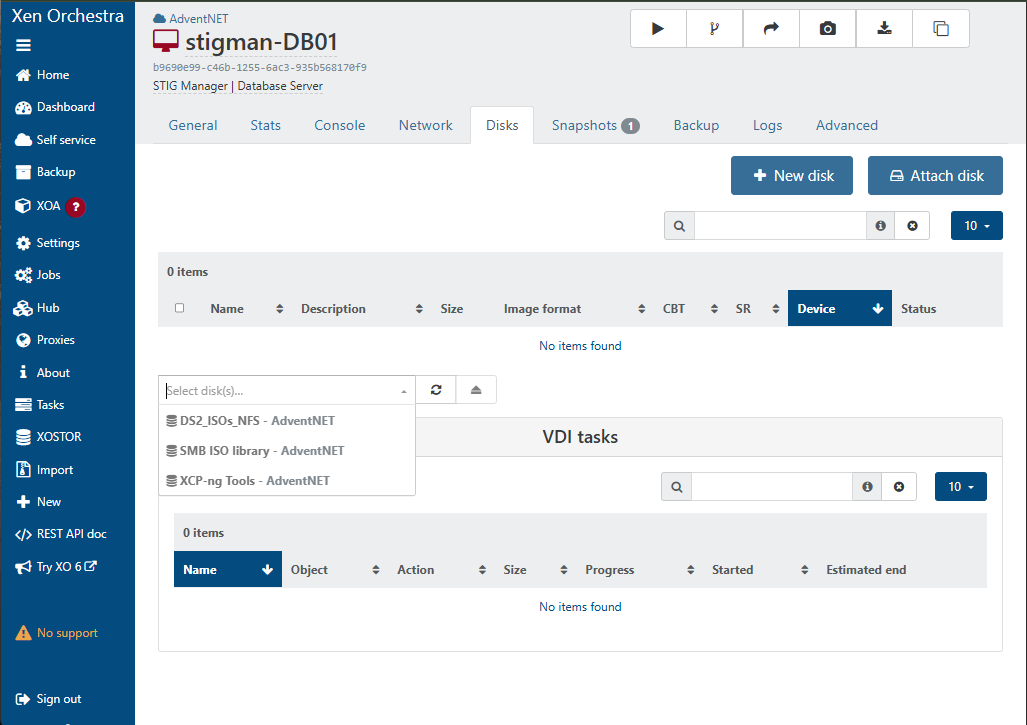

The VM:

[image: 1779148373193-screenshot-from-2026-05-18-17-51-19.png]

Thanks for your help!...