Good news, it worked!

Nothing beats starting with a good old cleaning and a battery replacement!

I will now keep playing with XCP-ng and XOA and learn more about them!

Thank you, guys, for the help!

With kind regards.

@Danp, @DustinB,

I took the whole machine apart, did a full cleanup, and repasted the CPU.

I also replaced the BIOS battery. After that, the VM now starts correctly.

I will give XOA Deploy another try and see how it goes. I would like to double-check whether I can still reproduce the original issue.

With kind regards.

@poddingue, thank you, that was it!

I had a feeling the issue was related to the path with the four slashes, but I couldn't figure out why, what, or where.

So essentially, after setting the working directory to /tmp for my docker run (`-w /tmp`), it worked.

Here is an extract of the working build step for install.img:

```yaml
- name: Build install.img
  run: |
    XCPNG_VER="${{ github.event.inputs.xcpng_version }}"
    docker run --rm \
      --user root -w /tmp \
      -v "$(pwd)/create-install-image:/create-install-image:ro" \
      -v "/tmp/RPM-GPG-KEY-xcp-ng-ce:/etc/pki/rpm-gpg/RPM-GPG-KEY-xcp-ng-ce" \
      -v "$(pwd):/output" \
      xcp-ng-build-ready \
      bash -ce "
        rpm --import /etc/pki/rpm-gpg/RPM-GPG-KEY-xcpng
        rpm --import /etc/pki/rpm-gpg/RPM-GPG-KEY-xcp-ng-ce
        /create-install-image/scripts/create-installimg.sh \
          --output /output/install-${XCPNG_VER}.img \
          --define-repo base!https://updates.xcp-ng.org/8/${XCPNG_VER}/base \
          --define-repo updates!https://updates.xcp-ng.org/8/${XCPNG_VER}/updates \
          ${XCPNG_VER}
        echo 'install.img built'
      "
```
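To reproduce the same build outside GitHub Actions, the step above can be sketched as a plain bash script. This is a sketch under assumptions: the image tag `xcp-ng-build-ready`, the key location under /tmp and the default version `8.3` are taken from this thread, and `DRY_RUN` is a hypothetical switch added here so that the command is only printed unless `DRY_RUN=0` is set.

```bash
#!/usr/bin/env bash
# Sketch: local equivalent of the "Build install.img" workflow step.
# Assumptions: image tag "xcp-ng-build-ready" and the CE key at
# /tmp/RPM-GPG-KEY-xcp-ng-ce, as in the workflow above.
# DRY_RUN is a hypothetical switch: 1 (default) only prints the command.
set -euo pipefail

XCPNG_VER="${XCPNG_VER:-8.3}"
DRY_RUN="${DRY_RUN:-1}"

# Script executed inside the container (same commands as the workflow step).
inner="rpm --import /etc/pki/rpm-gpg/RPM-GPG-KEY-xcpng
rpm --import /etc/pki/rpm-gpg/RPM-GPG-KEY-xcp-ng-ce
/create-install-image/scripts/create-installimg.sh \
  --output /output/install-${XCPNG_VER}.img \
  --define-repo base!https://updates.xcp-ng.org/8/${XCPNG_VER}/base \
  --define-repo updates!https://updates.xcp-ng.org/8/${XCPNG_VER}/updates \
  ${XCPNG_VER}"

# -w /tmp is the fix from this thread: the container must not start in "/".
cmd=(docker run --rm
  --user root -w /tmp
  -v "$(pwd)/create-install-image:/create-install-image:ro"
  -v "/tmp/RPM-GPG-KEY-xcp-ng-ce:/etc/pki/rpm-gpg/RPM-GPG-KEY-xcp-ng-ce"
  -v "$(pwd):/output"
  xcp-ng-build-ready
  bash -ce "$inner")

if [ "$DRY_RUN" = 1 ]; then
  printf '%q ' "${cmd[@]}"
  echo
else
  "${cmd[@]}"
fi
```

Keeping the arguments in an array avoids a second layer of quoting around the `-v` paths, and the dry run makes it easy to check that `-w /tmp` is really present before launching a long build.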

Regarding the output you wanted to see, here it is when it fails. First, for context, here is how I start the container:

```
sudo docker run --rm -it -v "$(pwd)/create-install-image:/create-install-image:ro" -v "$(pwd):/output" b292e8a21068 /bin/bash
./create-install-image/scripts/create-installimg.sh --output /output/instal.img 8.3
-----Set REPOS-----
--- PWD var and TMPDIR content----
/
total 20
drwx------ 4 root root 4096 Apr 16 00:54 .
drwxr-xr-x 1 root root 4096 Apr 16 00:54 ..
drwx------ 2 root root 4096 Apr 16 00:54 rootfs-FJWbFM
-rw------- 1 root root  295 Apr 16 00:54 yum-HRyIb1.conf
drwx------ 2 root root 4096 Apr 16 00:54 yum-repos-1FbWwV.d
--- ISSUE happens here *setup_yum_repos* ----
CRITICAL:yum.cli:Config error: Error accessing file for config file:////tmpdir-sApL80/yum-HRyIb1.conf
```

As soon as I move to any directory other than the root /, the issue goes away.
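The traces in this thread show temporary paths starting with `//` whenever the current directory is `/`. A minimal sketch of how that can happen, assuming (this is an assumption, not a reading of misc.sh) that the temporary directory is built by appending a name to `$PWD`: joining `/` with a name gives a doubled slash, and prefixing `file://` then yields the four-slash form seen in the yum error. On Linux, a plain path such as `//tmpdir-x/yum.conf` still resolves like `/tmpdir-x/yum.conf` (POSIX leaves two leading slashes implementation-defined), which would be consistent with `cat` reading the file while the `file:////` URL failed. `join_path` and `tmpdir-example` are hypothetical names.

```bash
#!/usr/bin/env bash
# Sketch: why running from "/" produces paths like "//tmpdir-XXXXXX".
# Assumption (hypothetical): misc.sh builds TMPDIR by appending a name to $PWD.
set -euo pipefail

# Naive join, mirroring the assumed construction.
join_path() { printf '%s/%s\n' "$1" "$2"; }

cd /
naive=$(join_path "$PWD" "tmpdir-example")
echo "from /:      $naive"                 # //tmpdir-example
echo "as file URL: file://$naive"          # file:////tmpdir-example

# Stripping a trailing slash from the base avoids the doubled slash.
base=${PWD%/}
echo "stripped:    $base/tmpdir-example"   # /tmpdir-example

# What fixed it in this thread: start from another directory (docker run -w /tmp).
cd /tmp
fixed=$(join_path "$PWD" "tmpdir-example")
echo "from /tmp:   file://$fixed"          # file:///tmp/tmpdir-example
```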

Now moving on to the ISO build.

With kind regards.

Hi everyone,

I'm experimenting with building the XCP-ng ISO to add a custom RPM to it.

However, I'm facing an issue I can't find my way out of.

It happens in the create-installimg.sh step, where setup_yum_repos (from misc.sh) fails to access the yum config.

What is weird is that just before this function is called, I can access the file with a simple cat command.

And as far as I understand from reading the script, TMPDIR is set by misc.sh, so I don't have control over it.

Here is the current output of my tests:

```
sudo docker run --rm -it -v "$(pwd)/create-install-image:/create-install-image:ro" -v "$(pwd):/output" b292e8a21068 /bin/bash
[root@85b20fccb0e7 /]# /create-install-image/scripts/create-installimg.sh --output /output/install-8.3.img 8.3
-----Set REPOS-----
YUMCONF: //tmpdir-VcYHGW/yum-171swX.conf
YUMREPOSD: //tmpdir-VcYHGW/yum-repos-eg1dEe.d
YUMCONF_TPML: /create-install-image/configs/8.3/yum.conf.tmpl
ROOTFS: //tmpdir-VcYHGW/rootfs-1RKWIe
YUMFLAGS: --config=//tmpdir-VcYHGW/yum-171swX.conf --installroot=//tmpdir-VcYHGW/rootfs-1RKWIe -q
ls REPOSD
cat YUMCONF
[main]
keepcache=1
debuglevel=2
reposdir=//tmpdir-VcYHGW/yum-repos-eg1dEe.d
logfile=/var/log/yum.log
retries=20
obsoletes=1
gpgcheck=1
assumeyes=1
syslog_ident=mock
syslog_device=
metadata_expire=0
mdpolicy=group:primary
best=1
protected_packages=
skip_missing_names_on_install=0
tsflags=nodocs
--- ISSUE happens here *setup_yum_repos* ----
CRITICAL:yum.cli:Config error: Error accessing file for config file:////tmpdir-VcYHGW/yum-171swX.conf
```

This is the Docker image I'm using:

```
sudo docker images
i Info → U In Use
IMAGE                                 ID             DISK USAGE   CONTENT SIZE   EXTRA
ghcr.io/xcp-ng/xcp-ng-build-env:8.3   b292e8a21068   543MB        0B
```

I have been building this from the XCP-ng build env repo:

https://github.com/xcp-ng/create-install-image/tree/master

I'm puzzled and can't find what I'm doing wrong here.

Any hint would be very welcome!

OK, I just read the code and found my answer.

It was a typo: the attribute is repo-gpgcheck, not repo_gpgcheck.

```python
repo_gpgcheck = (None if getStrAttribute(i, ['repo-gpgcheck'], default=None) is None
                 else getBoolAttribute(i, ['repo-gpgcheck']))
gpgcheck = (None if getStrAttribute(i, ['gpgcheck'], default=None) is None
            else getBoolAttribute(i, ['gpgcheck']))
```
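Since the installer code above only looks up the hyphenated attribute name `repo-gpgcheck` and the plain `gpgcheck`, an underscore variant such as `repo_gpgcheck` is simply never read. Below is a small sketch that flags such spellings before booting. The function name `check_gpg_attrs` and the sample answerfile lines (including the `<source>` element and the placeholder URL) are hypothetical, so check the installer documentation for the element that actually carries these attributes.

```bash
#!/usr/bin/env bash
# Sketch: flag gpgcheck attribute spellings that the installer code does not read.
# The code looks up exactly "repo-gpgcheck" and "gpgcheck"; names such as
# "repo_gpgcheck", "gpg-check" or "gpg_check" would not be picked up.
check_gpg_attrs() {
  local file=$1
  # Print offending lines with their line numbers, if any.
  if grep -nE '(repo_gpgcheck|gpg[-_]check)[[:space:]]*=' "$file"; then
    echo "hint: use repo-gpgcheck= and gpgcheck= (hyphen, no underscore) in $file" >&2
    return 1
  fi
  return 0
}

# Demo with two hypothetical answerfile fragments.
bad=$(mktemp)
good=$(mktemp)
trap 'rm -f "$bad" "$good"' EXIT
echo '<source type="url" repo_gpgcheck="false" gpgcheck="false">http://repo.example/</source>' > "$bad"
echo '<source type="url" repo-gpgcheck="false" gpgcheck="false">http://repo.example/</source>' > "$good"

check_gpg_attrs "$bad" || echo "-> fix the attribute names in $bad"
check_gpg_attrs "$good" && echo "OK: $good"
```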

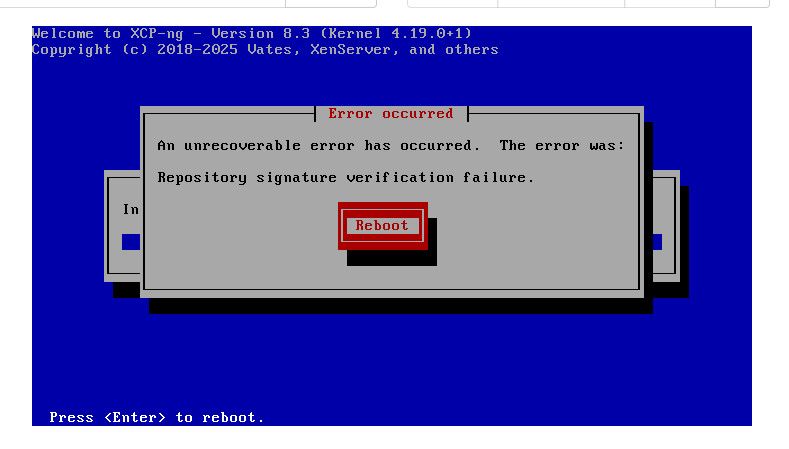

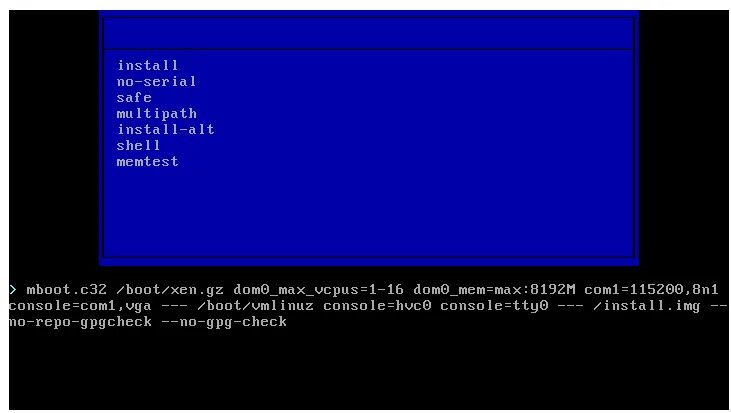

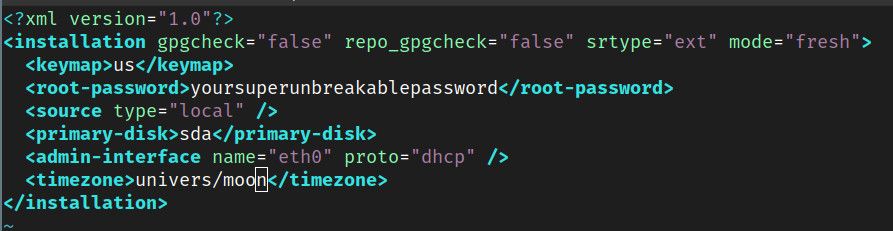

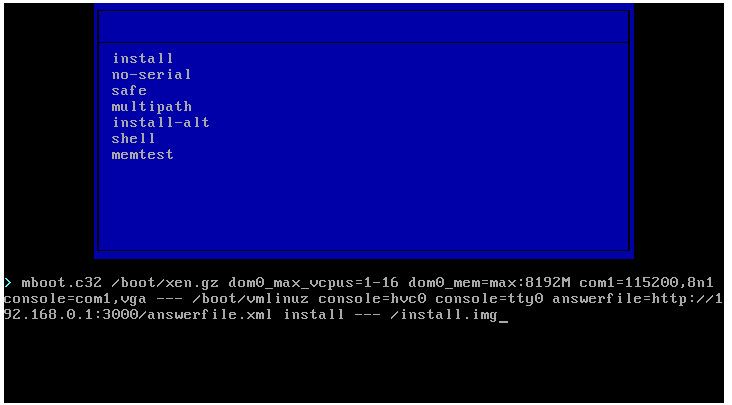

Hi Everyone,

I'm currently testing modifications to the XCP-ng ISO, and I'm constantly hitting a GPG check failure.

I understand why this happens, since I'm modifying one of the RPMs and the ISO itself.

What puzzles me is why the options I pass to the installer to skip the GPG check are ignored.

First, I tried passing --no-gpg-check and --no-repo-gpgcheck on the boot command line.

But it doesn't seem to have any effect.

I have also tried putting this in an answerfile:

And loading it at boot.

Unfortunately, in both cases the GPG check is still triggered and, as expected, fails.

Does anyone have a hint about where my issue could be? How can I disable the GPG check?

I'm guessing this is a syntax or config issue, but I can't find what it is.

Thanks in advance for any help !

Re: XO SocketError: other side closed

Hello

I'm also experiencing the same issue on my XCP-ng / XOA setup.

From time to time, my XCP-ng host disappears from XOA.

Here is the error I have in journalctl (newest entries first):


```
Feb 26 21:29:34 xoa xo-server[1628]: '00000000000008673337,00000000000008098348',
Feb 26 21:29:34 xoa xo-server[1628]: [Array],
Feb 26 21:29:34 xoa xo-server[1628]: '* session id *',
Feb 26 21:29:34 xoa xo-server[1628]: params: [
Feb 26 21:29:34 xoa xo-server[1628]: method: 'event.from',
Feb 26 21:29:34 xoa xo-server[1628]: duration: 4,
Feb 26 21:29:34 xoa xo-server[1628]: call: {
Feb 26 21:29:34 xoa xo-server[1628]: },
Feb 26 21:29:34 xoa xo-server[1628]: bytesRead: 976370
Feb 26 21:29:34 xoa xo-server[1628]: bytesWritten: 40192,
Feb 26 21:29:34 xoa xo-server[1628]: timeout: undefined,
Feb 26 21:29:34 xoa xo-server[1628]: remoteFamily: 'IPv4',
Feb 26 21:29:34 xoa xo-server[1628]: remotePort: 443,
Feb 26 21:29:34 xoa xo-server[1628]: remoteAddress: '192.168.0.2',
Feb 26 21:29:34 xoa xo-server[1628]: localPort: 44468,
Feb 26 21:29:34 xoa xo-server[1628]: localAddress: '192.168.0.7',
Feb 26 21:29:34 xoa xo-server[1628]: socket: {
Feb 26 21:29:34 xoa xo-server[1628]: code: 'UND_ERR_SOCKET',
Feb 26 21:29:34 xoa xo-server[1628]: at processTicksAndRejections (node:internal/process/task_queues:82:21) {
Feb 26 21:29:34 xoa xo-server[1628]: at endReadableNT (node:internal/streams/readable:1698:12)
Feb 26 21:29:34 xoa xo-server[1628]: at TLSSocket.patchedEmit [as emit] (/usr/local/lib/node_modules/xo-server/node_modules/@xen-orchestra/log/configure.js:52:17)
Feb 26 21:29:34 xoa xo-server[1628]: at TLSSocket.emit (node:events:530:35)
Feb 26 21:29:34 xoa xo-server[1628]: at TLSSocket.<anonymous> (/usr/local/lib/node_modules/xo-server/node_modules/undici/lib/dispatcher/client-h1.js:701:24)
Feb 26 21:29:34 xoa xo-server[1628]: _watchEvents SocketError: other side closed
Feb 26 21:28:46 xoa xo-server[1628]: }
Feb 26 21:28:46 xoa xo-server[1628]: }
Feb 26 21:28:46 xoa xo-server[1628]: ]
```
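To correlate these errors with the moments the host disappears from XOA, here is a sketch that counts `SocketError: other side closed` entries per hour. It assumes the journal identifier is `xo-server`, as in the lines above, so that `journalctl -t xo-server` selects them. `count_socket_errors` is a hypothetical helper, and the demo feeds it two lines copied from the log above.

```bash
#!/usr/bin/env bash
# Sketch: count "other side closed" errors per hour from journal lines on stdin.
# On the XOA VM (assuming the identifier shown in the log above):
#   journalctl -t xo-server --since "2 days ago" | count_socket_errors
count_socket_errors() {
  grep -F 'SocketError: other side closed' \
    | awk '{ print $1, $2, substr($3, 1, 2) ":00" }' \
    | sort | uniq -c \
    | awk '{ print $2, $3, $4, $1 }'   # "Mon DD HH:00 count"
}

# Demo on two lines copied from the log above (only the first one matches).
sample='Feb 26 21:29:34 xoa xo-server[1628]: _watchEvents SocketError: other side closed
Feb 26 21:28:46 xoa xo-server[1628]: }'
result=$(printf '%s\n' "$sample" | count_socket_errors)
echo "$result"   # Feb 26 21:00 1
```

If the per-hour counts line up with the times the host vanished from XOA, that points at the XAPI connection being dropped rather than at the UI.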

Here is the node version:

```
node -v
v20.18.3
```

When I looked for the XOA version, I found it hard to locate.

In the end, I had to go back to XO Lite to find out that I'm on 2025.11.

As for XCP-ng, I'm on 8.3.0.

My XOA is hosted on top of XCP-ng on the same machine.

Any advice for understanding the cause of this issue is welcome.

Best.

Hello,

I have hardware issues, especially with the disks.

Unfortunately, I don't have full access to the machine, so I can't confirm whether it is actually the disks (more likely) or the backplane.

Interestingly, some of those VMs do work: I can boot them and work with them, but I cannot copy their disks.

Since those are Windows Server VMs, I also tried the Windows backup tool, and it also failed to complete the backup.

So I'm closing this investigation as a hardware issue.

Not sure if this is correct, but the vhd-tool check seems quite basic.

It looks like it only does a kind of "wrapper" check of the file structure (footer, header, BAT, locator blocks), not a full integrity check:

```
$ vhd-tool check 4156d2ea-d39d-436d-b89f-06ad64949c53.vhd
0 START of section 'footer_at_top'
1ff END of section 'footer_at_top'
200 START of section 'header'
5ff END of section 'header'
600 START of section 'BAT'
195ff END of section 'BAT'
67a00 START of section 'locator block 0'
67bff END of section 'locator block 0'
67c00 START of section 'locator block 1'
67dff END of section 'locator block 1'
67e00 START of section 'locator block 2'
67fff END of section 'locator block 2'
```

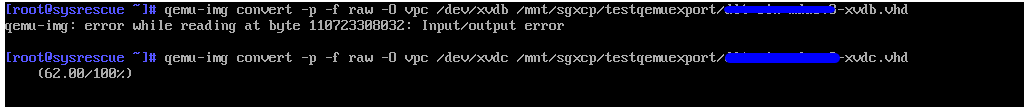
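Judging by the output above, `vhd-tool check` seems to validate only the VHD structure (footer, header, BAT, locators) rather than read the data blocks. A complementary sketch reads every byte of each file, so that an I/O error from the underlying disk surfaces as a failure. It does not validate the VHD format itself, and `full_read_check` is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Sketch: read every byte of each file so storage-level I/O errors surface.
# This does NOT validate the VHD format; it complements "vhd-tool check".
full_read_check() {
  local failed=0 f
  for f in "$@"; do
    [ -e "$f" ] || continue          # skip an unmatched glob such as "*.vhd"
    if cat -- "$f" > /dev/null 2>&1; then
      echo "READ OK:   $f"
    else
      echo "READ FAIL: $f"
      failed=$((failed + 1))
    fi
  done
  [ "$failed" -eq 0 ]                # exit status 0 only if every read succeeded
}

# Demo on a throwaway 1 MiB file; on the SR, run it in the VHD directory instead:
#   full_read_check *.vhd
demo=$(mktemp)
head -c 1048576 /dev/zero > "$demo"
full_read_check "$demo"
status=$?
rm -f -- "$demo"
```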

Hi all,

Continuing the investigation:

Since the VM boots, I'm imaging all of its disks with qemu-img.

What I'm doing is booting from the SystemRescue ISO, then imaging each disk to VHD. We'll see if it works.
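The imaging step can be sketched as a loop over the VM's disks from the rescue environment. In `qemu-img`, the VHD format is called `vpc`. The device names, the `/mnt/backup` target and the `DRY_RUN`/`OUT_DIR` switches are assumptions for illustration. With the default `DRY_RUN=1`, the commands are only printed.

```bash
#!/usr/bin/env bash
# Sketch: image each disk to a VHD file with qemu-img from a rescue system.
# Assumptions (hypothetical): disks /dev/sda, /dev/sdb and an already mounted
# target directory /mnt/backup. DRY_RUN=1 (default) only prints the commands.
DRY_RUN="${DRY_RUN:-1}"
OUT_DIR="${OUT_DIR:-/mnt/backup}"

image_disks() {
  local dev out
  local -a cmd
  for dev in "$@"; do
    out="$OUT_DIR/$(basename -- "$dev").vhd"
    # -p: show progress; -f raw: read the block device as raw data; -O vpc: write VHD.
    cmd=(qemu-img convert -p -f raw -O vpc "$dev" "$out")
    if [ "$DRY_RUN" = 1 ]; then
      printf '%s\n' "${cmd[*]}"
    else
      # Keep going with the other disks if one fails (e.g. on an I/O error).
      "${cmd[@]}" || echo "FAILED: $dev (check dmesg for I/O errors)" >&2
    fi
  done
}

image_disks /dev/sda /dev/sdb
```

Running the disks one by one makes it obvious which device produces the I/O error, instead of one failure aborting the whole run.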

One interesting thing is that I get a consistent I/O error on one of the devices.

I'm guessing that could be the cause of my issue when I try to do a VM export.

I'm also wondering why no issue was found in the VHDs; I'll have to investigate that part as well.

Best.

Hi

I tried again, and it failed with the same error message:

```
xe vm-export vm=c57a4225-3adb-b422-34ec-d2a23c6223c9 filename=/run/sr-mount/a7f6df31-412f-d5e9-4163-8af75b1df8ad/dlt-sin-mducv8.xva
The server failed to handle your request, due to an internal error. The given message may give details useful for debugging the problem.
message: Unix.Unix_error(Unix.EIO, "read", "")
```

Hmm, in this last reproduction, the issue happened 3 hours after I triggered the export.

I have a feeling this is a hardware issue.

Does anyone have any other ideas, or advice on where to look?

[EDIT] I managed to export another VM without issue.
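To see where the `EIO` first shows up, here is a small sketch that prints the first matching line (with its line number) from a log file, so it can be compared with the time the export was started. `/var/log/xensource.log` is the XAPI log on the XCP-ng host. `first_match` is a hypothetical helper, and the demo uses the `xe` message above as stand-in content.

```bash
#!/usr/bin/env bash
# Sketch: print the first line (with its number) containing a literal string.
first_match() {
  grep -n -m 1 -F -- "$2" "$1"
}

# On the host, for example:
#   first_match /var/log/xensource.log 'Unix_error(Unix.EIO'
#   dmesg | grep -i 'i/o error'      # kernel-side read errors, if any

# Demo on a throwaway file holding the xe error text from above.
log=$(mktemp)
trap 'rm -f "$log"' EXIT
printf '%s\n' \
  'The server failed to handle your request, due to an internal error.' \
  'message: Unix.Unix_error(Unix.EIO, "read", "")' > "$log"
first_match "$log" 'Unix_error(Unix.EIO'   # 2:message: Unix.Unix_error(Unix.EIO, "read", "")
```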

Best.

Matth.

@Danp, thank you for the help.

I have checked all the VHDs on my disk, and they all pass vhd-tool check.

Regarding xensource.log, the first error shows up a few minutes after I trigger the export.

```bash
#!/bin/bash
# Script to check all VHD files in the current directory using vhd-tool
set +e  # Don't exit on error

total=0
failed=0

echo "Checking VHD files..."
echo ""

# Loop through all .vhd files in current directory
for vhd_file in *.vhd; do
    # Skip if no .vhd files found
    [ -e "$vhd_file" ] || continue
    ((total++))

    # Run vhd-tool check
    if vhd-tool check "$vhd_file" > /dev/null 2>&1; then
        echo "PASS: $vhd_file"
    else
        echo "FAIL: $vhd_file"
        ((failed++))
    fi
done

# Report
echo ""
echo "===================="
echo "Total: $total"
echo "Failed: $failed"
echo "===================="

[ $failed -eq 0 ] && exit 0 || exit 1
```

```
/checkvhd.sh
Checking VHD files...

PASS: 014e122e-7908-4566-be52-31fc40ea6042.vhd
PASS: 054f3177-e40b-4c87-86c6-88ac9db3edfe.vhd
PASS: 154c4dd3-651d-404b-8df0-e85a07aa5bf6.vhd
PASS: 16f68c2a-74d7-4698-8276-56bdc686e161.vhd
PASS: 18b4a57f-3c77-42da-973c-d136375878da.vhd
PASS: 1a129b44-a562-4555-8785-2bfaa1f98455.vhd
PASS: 1e62a336-bb27-40f0-8da5-1f53cbeeee83.vhd
PASS: 24b6ede5-b698-4a9d-acb1-a4898d78cf8c.vhd
PASS: 24b82a29-9fe2-47af-a68b-f36a73b34624.vhd
PASS: 2d8b1375-4fc8-46eb-b1f6-2d887e37602c.vhd
PASS: 2e3897fb-7d45-4098-9956-d985636d9a12.vhd
PASS: 3165bf7b-ca6f-4a30-b33d-bda31e2c67ab.vhd
PASS: 31832728-0001-407d-85f6-0de340e2dbda.vhd
PASS: 32f501b3-47df-4730-824b-f9e297e9d12c.vhd
PASS: 346c5bf0-6635-4f1b-af32-fc9f8fac5088.vhd
PASS: 3a7f68ce-b342-4d03-878f-956e74074b6a.vhd
PASS: 406f2750-fea7-4389-920f-0b080335284f.vhd
PASS: 4156d2ea-d39d-436d-b89f-06ad64949c53.vhd
PASS: 475b16f8-f1c0-40b6-b181-99f83f14dd73.vhd
PASS: 52656fa5-d418-45e0-9e65-07a450af2476.vhd
PASS: 69042c99-b0a3-47ab-8c2b-48303c4cc6bc.vhd
PASS: 6a79914e-67e0-4ac9-b93e-2e393aaa7b97.vhd
PASS: 6b0919bb-7d0a-4036-a833-0313476a11a3.vhd
PASS: 7e2fc00c-7208-4132-aa4a-7db818c7a94b.vhd
PASS: 824af8d0-112b-479b-b474-6e5a26e0d8b2.vhd
PASS: 8f6d6a7c-5390-43bd-b0b5-55f8e681453a.vhd
PASS: 94453b92-3a69-416b-9c08-9f0b91b2aee1.vhd
PASS: 947ad175-06a7-4881-8725-aba36d2cda66.vhd
PASS: 97e577dc-b0be-4990-8e06-c0c4333aef82.vhd
PASS: 9889a738-cfd4-447d-962a-8fd88222d8ab.vhd
PASS: 9c93fa78-4b17-41b1-b794-8bfa165ed4b4.vhd
PASS: a3408bda-9c26-4333-b535-3a008109b98c.vhd
PASS: a3c69ec6-f56e-4d25-ba71-023a38eef8e3.vhd
PASS: a8c45c4c-8925-41a3-a78d-c30d6e20c261.vhd
PASS: b119ad26-f0dc-4965-b726-9dc3fc815130.vhd
PASS: b12002da-56b6-451c-ad4d-a6c16a79fc12.vhd
PASS: b24f9236-6b68-4214-812d-9e98994467aa.vhd
PASS: b34f20b8-6681-42e9-b234-116989286699.vhd
PASS: b5ff8f04-0ace-4b23-9f0b-7a6c5328bef5.vhd
PASS: b932bc63-a47d-49cb-9d28-a57ad61e7ad9.vhd
PASS: be06df0b-cbb7-4dfe-8eaf-697de4d8850b.vhd
PASS: cb88a496-8c80-41e2-b3de-055916a3a32c.vhd
PASS: ce66b785-5b1b-43c8-9ece-dc456508014c.vhd
PASS: ddb84192-3e35-4541-86da-d4326a6bbea7.vhd
PASS: de27f27e-65b8-42d3-929f-f34f0544149a.vhd
PASS: e054e9da-08e7-40b1-8c55-0fd2ae97ee5f.vhd
PASS: e449742e-5007-4be6-89c0-2529d00dbf28.vhd
PASS: f0ee695e-dcb4-49e6-ac17-4a64f65998a6.vhd
PASS: f20e6bb0-4cba-4030-87fc-ddbaca262169.vhd
PASS: f28a0554-6530-4055-a67e-d970ab1c4cc1.vhd
PASS: f5dd3ee4-7006-43fc-8254-98eb45505639.vhd

====================
Total: 51
Failed: 0
====================
```

I'll try another export to see if it works this time.

Best.