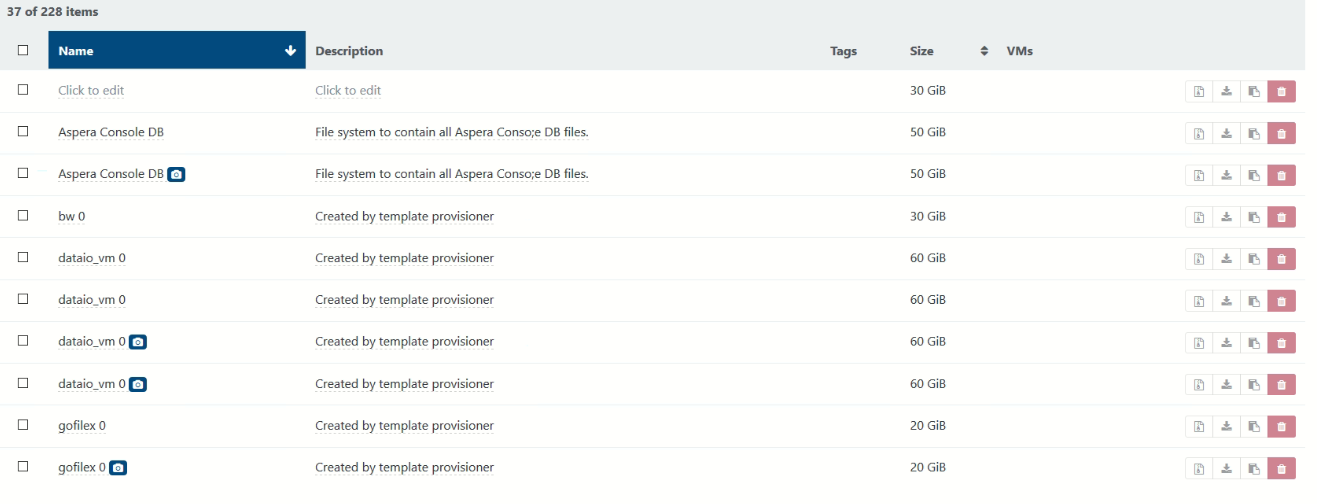

too many VDI/VHD per VM, how to get rid of unused ones

-

Yes, as long as you haven't intentionally left a VDI disconnected from every VM, you can remove all of them.

-

I'm looking and I see no VMs attached to the orphaned disks.

-

That's by definition why they are called "orphaned".

The question is: did you disconnect some VDIs from a VM yourself, or are they just there as leftovers from failed operations?

I think that in your case, you can remove them all.

-

Thanks Olivier. When I change the magnifying glass filter to "type:!VDI-unmanaged", I see the actual live VM name and the backup VM under the VMs, so I think it's safe to delete all the orphaned ones.

-

Yes, VDIs connected to a VM are by definition not orphaned. You don't want to remove those.

-

Just out of interest, when I change the magnifying glass filter to "type:VDI-unmanaged"

it lists "base copy".

What are they?

-

They are the read-only parent of a disk. When you take a snapshot, it actually creates three disks: the read-only base copy, the new active disk, and the snapshot.

If you remove the snapshot, a coalesce is triggered to merge the active disk into the base copy, and you get back to the initial state: one disk only.
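The parent/child relationship described above can be inspected from the host. What follows is a minimal sketch, assuming a VHD-based SR where the storage manager records each disk's parent under the `vhd-parent` key of the VDI's `sm-config` map; the function name `vdi_chain` is hypothetical and not part of `xe`.

```shell
# Walk from a VDI up through its parents, printing "uuid name-label" per level.
# Assumption: the parent UUID is stored in sm-config:vhd-parent (VHD-based SRs).
vdi_chain() {
    vdi="$1"
    while [ -n "$vdi" ]; do
        label=$(xe vdi-param-get uuid="$vdi" param-name=name-label 2>/dev/null || true)
        echo "$vdi $label"
        # An empty result (key missing) means we reached the top of the chain.
        vdi=$(xe vdi-param-get uuid="$vdi" param-name=sm-config param-key=vhd-parent 2>/dev/null || true)
    done
}

# Usage (read-only, run on the host):
#   vdi_chain <uuid of the active disk>
```

Right after a snapshot, the active disk and the snapshot should both report the same base copy as their parent. This only reads parameters and never modifies anything.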

-

So can I delete the "type:VDI-snapshot" ones?

-


- Please edit your post and use Markdown syntax for code/error blocks

- Rescan your SR and try again. At worst, remove everything you can, leave it a while to coalesce, then try to remove it again.
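From the host CLI, the "rescan, then retry" advice could look like the sketch below. It uses the standard `xe sr-scan` and `xe vdi-destroy` commands; the wrapper name `rescan_then_destroy` and its two arguments are hypothetical.

```shell
# Rescan an SR, then retry deleting one VDI only if the scan succeeded.
# $1 = SR UUID, $2 = VDI UUID (both supplied by you; nothing is guessed).
rescan_then_destroy() {
    sr_uuid="$1"
    vdi_uuid="$2"
    xe sr-scan uuid="$sr_uuid" && xe vdi-destroy uuid="$vdi_uuid"
}

# Usage:
#   rescan_then_destroy <sr uuid> <vdi uuid>
```

If the destroy still fails, the host-side error is the thing to investigate, since retrying in a loop will not help while a coalesce is pending or the volume is in use.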

-

    vdi.delete
    {
      "id": "1778c579-65a5-48b3-82df-9558a4f6ff7f"
    }
    {
      "code": "SR_BACKEND_FAILURE_1200",
      "params": [ "", "", "" ],
      "task": {
        "uuid": "3c72bd9a-36ec-01da-b1b5-0b19468f4532",
        "name_label": "Async.VDI.destroy",
        "name_description": "",
        "allowed_operations": [],
        "current_operations": {},
        "created": "20200120T19:23:41Z",
        "finished": "20200120T19:23:50Z",
        "status": "failure",
        "resident_on": "OpaqueRef:bb0f833a-b400-4862-9d3f-07f15f34e0f8",
        "progress": 1,
        "type": "<none/>",
        "result": "",
        "error_info": [ "SR_BACKEND_FAILURE_1200", "", "", "" ],
        "other_config": {},
        "subtask_of": "OpaqueRef:NULL",
        "subtasks": [],
        "backtrace": "(((process"xapi @ lon-p-xenserver01")(filename lib/backtrace.ml)(line 210))((process"xapi @ lon-p-xenserver01")(filename ocaml/xapi/storage_access.ml)(line 31))((process"xapi @ lon-p-xenserver01")(filename ocaml/xapi/xapi_vdi.ml)(line 683))((process"xapi @ lon-p-xenserver01")(filename ocaml/xapi/message_forwarding.ml)(line 100))((process"xapi @ lon-p-xenserver01")(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process"xapi @ lon-p-xenserver01")(filename ocaml/xapi/rbac.ml)(line 236))((process"xapi @ lon-p-xenserver01")(filename ocaml/xapi/server_helpers.ml)(line 83)))"
      },
      "message": "SR_BACKEND_FAILURE_1200(, , )",
      "name": "XapiError",
      "stack": "XapiError: SR_BACKEND_FAILURE_1200(, , )
        at Function.wrap (/xen-orchestra/packages/xen-api/src/_XapiError.js:16:11)
        at _default (/xen-orchestra/packages/xen-api/src/_getTaskResult.js:11:28)
        at Xapi._addRecordToCache (/xen-orchestra/packages/xen-api/src/index.js:812:37)
        at events.forEach.event (/xen-orchestra/packages/xen-api/src/index.js:833:13)
        at Array.forEach (<anonymous>)
        at Xapi._processEvents (/xen-orchestra/packages/xen-api/src/index.js:823:11)
        at /xen-orchestra/packages/xen-api/src/index.js:984:13
        at Generator.next (<anonymous>)
        at asyncGeneratorStep (/xen-orchestra/packages/xen-api/dist/index.js:58:103)
        at _next (/xen-orchestra/packages/xen-api/dist/index.js:60:194)
        at tryCatcher (/xen-orchestra/node_modules/bluebird/js/release/util.js:16:23)
        at Promise._settlePromiseFromHandler (/xen-orchestra/node_modules/bluebird/js/release/promise.js:547:31)
        at Promise._settlePromise (/xen-orchestra/node_modules/bluebird/js/release/promise.js:604:18)
        at Promise._settlePromise0 (/xen-orchestra/node_modules/bluebird/js/release/promise.js:649:10)
        at Promise._settlePromises (/xen-orchestra/node_modules/bluebird/js/release/promise.js:729:18)
        at _drainQueueStep (/xen-orchestra/node_modules/bluebird/js/release/async.js:93:12)
        at _drainQueue (/xen-orchestra/node_modules/bluebird/js/release/async.js:86:9)
        at Async._drainQueues (/xen-orchestra/node_modules/bluebird/js/release/async.js:102:5)
        at Immediate.Async.drainQueues (/xen-orchestra/node_modules/bluebird/js/release/async.js:15:14)
        at runCallback (timers.js:810:20)
        at tryOnImmediate (timers.js:768:5)
        at processImmediate [as _immediateCallback] (timers.js:745:5)"
    }

-

Got the command:

xe vm-disk-list vm=(name or UUID)

I'm going to cross-reference it with this:

xe vdi-list sr-uuid=$sr_uuid params=uuid managed=true

and any VDIs that don't match I'm going to delete with:

xe vdi-destroy uuid=(UUID of the VDI)

Is this correct?

Mmm... when I run

xe vdi-list

I see I have a lot of base copies.

What are they all about?
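The cross-referencing plan in this post (managed VDIs on the SR versus disks actually attached to VMs) boils down to a set difference. Below is a minimal sketch that works on the comma-separated lists `xe ... --minimal` prints. The function name `unattached_vdis` is hypothetical, and using `xe vbd-list params=vdi-uuid` to collect attached disks is an assumption made here as a shortcut for running `xe vm-disk-list` per VM.

```shell
# Print UUIDs present in the first comma-separated list but not in the second.
# $1 = all managed VDIs on the SR, $2 = VDIs referenced by any VBD.
unattached_vdis() {
    all=$(mktemp) || return 1
    attached=$(mktemp) || { rm -f "$all"; return 1; }
    # One UUID per line, blank lines dropped, sorted so comm can compare them.
    printf '%s\n' "$1" | tr ',' '\n' | sed '/^$/d' | sort -u > "$all"
    printf '%s\n' "$2" | tr ',' '\n' | sed '/^$/d' | sort -u > "$attached"
    comm -23 "$all" "$attached"
    rm -f "$all" "$attached"
}

# Usage on the host (review the output by hand before any xe vdi-destroy):
#   all=$(xe vdi-list sr-uuid="$sr_uuid" managed=true params=uuid --minimal)
#   attached=$(xe vbd-list params=vdi-uuid --minimal)
#   unattached_vdis "$all" "$attached"
```

The sketch only lists candidates and deliberately deletes nothing. Because the first list is restricted to `managed=true`, base copies never show up in the output.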

-

OK Olivier.

As XOA isn't working, can I do it from the Xen CLI instead? So:

xe vdi-destroy uuid=(UUID of orphaned VDI)

-

It's not XOA that's not working. XO is the client, sending commands to the host. You had a pretty clear error coming from the host, so the issue is there.

Also, please edit your post and use Markdown syntax, otherwise it's hard to read.

-

Hi Olivier, do i need to do this -

-

Why? How is it related? Have you removed as many VDIs as you can?

-

No... how do I do that, Olivier?

Do you mean delete the orphaned ones?

-

Yes, removing all possible orphaned VDIs

-

mmm... not looking good, got a problem with my backend SR...

    Jan 21 08:57:16 lon-p-xenserver01 SMGC: [6577] Found 2 orphaned vdis
    Jan 21 08:57:16 lon-p-xenserver01 SMGC: [6577] Found 2 VDIs for deletion:
    Jan 21 08:57:16 lon-p-xenserver01 SMGC: [6577] *4c5de6b9[VHD](20.000G//192.000M|ao)
    Jan 21 08:57:16 lon-p-xenserver01 SMGC: [6577] *98b10e9d[VHD](50.000G//9.445G|n)
    Jan 21 08:57:16 lon-p-xenserver01 SMGC: [6577] Deleting unlinked VDI *4c5de6b9[VHD](20.000G//192.000M|ao)
    Jan 21 08:57:16 lon-p-xenserver01 SM: [6577] lock: tried lock /var/lock/sm/0f956522-42d7-5328-a5ec-a7fd406ca0f3/sr, acquired: True (exists: True)
    Jan 21 08:57:16 lon-p-xenserver01 SM: [6577] ['/sbin/lvremove', '-f', '/dev/VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653']
    Jan 21 08:57:21 lon-p-xenserver01 SM: [6577] FAILED in util.pread: (rc 5) stdout: '', stderr: ' /run/lvm/lvmetad.socket: connect failed: No such file or directory
    Jan 21 08:57:21 lon-p-xenserver01 SM: [6577] WARNING: Failed to connect to lvmetad. Falling back to internal scanning.
    Jan 21 08:57:21 lon-p-xenserver01 SM: [6577] Logical volume VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653 in use.
    Jan 21 08:57:21 lon-p-xenserver01 SM: [6577] '
    Jan 21 08:57:21 lon-p-xenserver01 SM: [6577] *** lvremove failed on attempt #0
    Jan 21 08:57:21 lon-p-xenserver01 SM: [6577] ['/sbin/lvremove', '-f', '/dev/VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653']
    Jan 21 08:57:24 lon-p-xenserver01 SM: [9438] sr_scan {'sr_uuid': 'e4497404-baa7-c26f-49b1-266fd1f89e5f', 'subtask_of': 'DummyRef:|26c1154f-1c31-4ff7-8455-7899be8018d9|SR.scan', 'args': [], 'host_ref': 'OpaqueRef:bb0f833a-b400-4862-9d3f-07f15f34e0f8', 'session_ref': 'OpaqueRef:a5cdddcc-1b39-4fe7-8de0-457e40783795', 'device_config': {'username': 'robert.wild.admin', 'vers': '3.0', 'cifspassword_secret': '8a5270fc-72ca-59aa-a71e-c8cd9dc91750', 'iso_path': '/engineering/xen/iso', 'location': '//10.110.130.101/mmfs1', 'type': 'cifs', 'SRmaster': 'true'}, 'command': 'sr_scan', 'sr_ref': 'OpaqueRef:88f40308-1653-4d3e-906b-c85de994844d'}
    Jan 21 08:57:25 lon-p-xenserver01 SM: [9464] sr_update {'sr_uuid': 'e4497404-baa7-c26f-49b1-266fd1f89e5f', 'subtask_of': 'DummyRef:|d6f3febc-c434-446f-b152-8b226d935e8c|SR.stat', 'args': [], 'host_ref': 'OpaqueRef:bb0f833a-b400-4862-9d3f-07f15f34e0f8', 'session_ref': 'OpaqueRef:6355efbb-370e-4192-b69b-d7e529058db9', 'device_config': {'username': 'robert.wild.admin', 'vers': '3.0', 'cifspassword_secret': '8a5270fc-72ca-59aa-a71e-c8cd9dc91750', 'iso_path': '/engineering/xen/iso', 'location': '//10.110.130.101/mmfs1', 'type': 'cifs', 'SRmaster': 'true'}, 'command': 'sr_update', 'sr_ref': 'OpaqueRef:88f40308-1653-4d3e-906b-c85de994844d'}
    Jan 21 08:57:26 lon-p-xenserver01 SM: [6577] FAILED in util.pread: (rc 5) stdout: '', stderr: ' /run/lvm/lvmetad.socket: connect failed: No such file or directory
    Jan 21 08:57:26 lon-p-xenserver01 SM: [6577] WARNING: Failed to connect to lvmetad. Falling back to internal scanning.
    Jan 21 08:57:26 lon-p-xenserver01 SM: [6577] Logical volume VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653 in use.
    Jan 21 08:57:26 lon-p-xenserver01 SM: [6577] '
    Jan 21 08:57:26 lon-p-xenserver01 SM: [6577] *** lvremove failed on attempt #1
    Jan 21 08:57:26 lon-p-xenserver01 SM: [6577] ['/sbin/lvremove', '-f', '/dev/VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653']
    Jan 21 08:57:31 lon-p-xenserver01 SM: [6577] FAILED in util.pread: (rc 5) stdout: '', stderr: ' /run/lvm/lvmetad.socket: connect failed: No such file or directory
    Jan 21 08:57:31 lon-p-xenserver01 SM: [6577] WARNING: Failed to connect to lvmetad. Falling back to internal scanning.
    Jan 21 08:57:31 lon-p-xenserver01 SM: [6577] Logical volume VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653 in use.
    Jan 21 08:57:31 lon-p-xenserver01 SM: [6577] '
    Jan 21 08:57:31 lon-p-xenserver01 SM: [6577] *** lvremove failed on attempt #2
    Jan 21 08:57:31 lon-p-xenserver01 SM: [6577] ['/sbin/lvremove', '-f', '/dev/VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653']
    Jan 21 08:57:36 lon-p-xenserver01 SM: [6577] FAILED in util.pread: (rc 5) stdout: '', stderr: ' /run/lvm/lvmetad.socket: connect failed: No such file or directory
    Jan 21 08:57:36 lon-p-xenserver01 SM: [6577] WARNING: Failed to connect to lvmetad. Falling back to internal scanning.
    Jan 21 08:57:36 lon-p-xenserver01 SM: [6577] Logical volume VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653 in use.
    Jan 21 08:57:36 lon-p-xenserver01 SM: [6577] '
    Jan 21 08:57:36 lon-p-xenserver01 SM: [6577] *** lvremove failed on attempt #3
    Jan 21 08:57:36 lon-p-xenserver01 SM: [6577] ['/sbin/lvremove', '-f', '/dev/VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653']
    Jan 21 08:57:41 lon-p-xenserver01 SM: [6577] FAILED in util.pread: (rc 5) stdout: '', stderr: ' /run/lvm/lvmetad.socket: connect failed: No such file or directory
    Jan 21 08:57:41 lon-p-xenserver01 SM: [6577] WARNING: Failed to connect to lvmetad. Falling back to internal scanning.
    Jan 21 08:57:41 lon-p-xenserver01 SM: [6577] Logical volume VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653 in use.
    Jan 21 08:57:41 lon-p-xenserver01 SM: [6577] '
    Jan 21 08:57:41 lon-p-xenserver01 SM: [6577] *** lvremove failed on attempt #4
    Jan 21 08:57:41 lon-p-xenserver01 SM: [6577] ['/sbin/lvremove', '-f', '/dev/VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653']
    Jan 21 08:57:45 lon-p-xenserver01 SM: [6577] FAILED in util.pread: (rc 5) stdout: '', stderr: ' /run/lvm/lvmetad.socket: connect failed: No such file or directory
    Jan 21 08:57:45 lon-p-xenserver01 SM: [6577] WARNING: Failed to connect to lvmetad. Falling back to internal scanning.
    Jan 21 08:57:45 lon-p-xenserver01 SM: [6577] Logical volume VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653 in use.
    Jan 21 08:57:45 lon-p-xenserver01 SM: [6577] '
    Jan 21 08:57:45 lon-p-xenserver01 SM: [6577] *** lvremove failed on attempt #5
    Jan 21 08:57:45 lon-p-xenserver01 SM: [6577] ['/sbin/lvremove', '-f', '/dev/VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653']
    Jan 21 08:57:50 lon-p-xenserver01 SM: [6577] FAILED in util.pread: (rc 5) stdout: '', stderr: ' /run/lvm/lvmetad.socket: connect failed: No such file or directory
    Jan 21 08:57:50 lon-p-xenserver01 SM: [6577] WARNING: Failed to connect to lvmetad. Falling back to internal scanning.
    Jan 21 08:57:50 lon-p-xenserver01 SM: [6577] Logical volume VG_XenStorage-0f956522-42d7-5328-a5ec-a7fd406ca0f3/VHD-4c5de6b9-ae97-4a15-ad76-84a49c397653 in use.

-

Great, seems to be a bug in XS 7.6, and I'm running it LOL
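The storage manager log above repeats the same `lvremove` failure many times, so it helps to condense it before drawing conclusions. Here is a small sketch that reads log text on standard input and reports which logical volumes were refused as "in use" and how many attempts failed. The function names are hypothetical, and the sketch assumes one log entry per line, as in `/var/log/SMlog` on the host.

```shell
# Print each distinct logical volume that lvremove reported as "in use".
lv_in_use() {
    sed -n 's/.*Logical volume \([^ ]*\) in use\..*/\1/p' | sort -u
}

# Count how many lvremove attempts failed.
lvremove_attempts() {
    grep -c 'lvremove failed on attempt'
}

# Usage on the host:
#   lv_in_use < /var/log/SMlog
#   lvremove_attempts < /var/log/SMlog
```

A single volume showing up with many failed attempts, as in the excerpt above, points at something still holding that one volume open rather than a general SR problem.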
