What should i expect from VM migration performance from Xen-ng ?

-

Hi everyone,

When live-migrating VMs between Xen-ng nodes, I saw that I couldn't push more than 1 Gbit/s of traffic.

We have 3 nodes, all connected to the switch at 10 Gbit (a bond is configured, but only one physical port is up for now; the bond is configured as switch-independent).

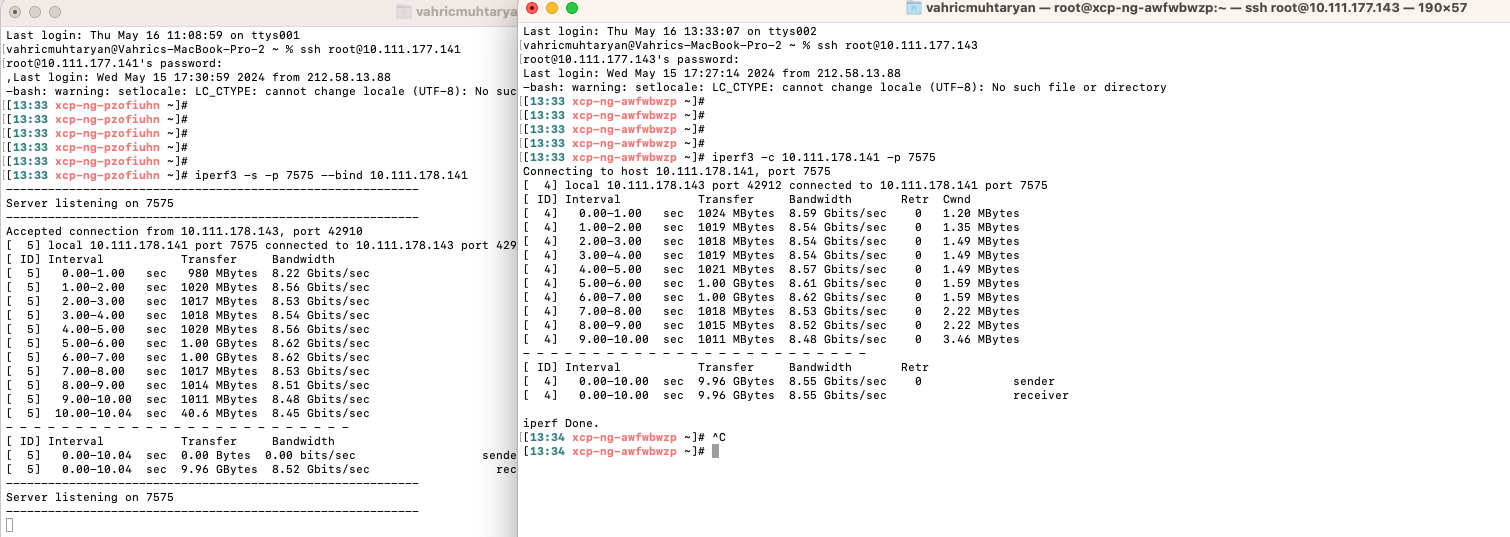

Then we went back to the Xen-ng hosts, installed iperf3, and ran a test between nodes. We got almost 8 Gbit/s of traffic; I'm not worried about what happened to the other 2 Gbit/s

(maybe because DPDK is not enabled by default -->

[00:04 xcp-ng-zjiihqws ~]# ovs-vsctl get Open_vSwitch . iface_types

[geneve, gre, internal, ipsec_gre, lisp, patch, stt, system, tap, vxlan])

We are using MTU 9000 on both the switch and the Xen-ng side (an MTU of 1500 should actually be enough, but we try to apply best practice): the bond is at 9000 and the migration sub-interface is at 9000 too.

Then we tried migrating 2-3 VMs together to see how things behave in parallel, and we managed to reach up to 1.24 Gbit/s of traffic.

I don't actually want to push 10G or anything like that; I just want to understand whether I'm doing something wrong, or whether live migration traffic really can't saturate my NIC bandwidth. Is there some config I should check to allow higher traffic for migration?

Thanks, Regards

VM -

Are you doing storage/vdi migration?

The speed of that is "limited" somehow. I don't know why, but migrating VDIs is always slow. If we migrate 3-4 VMs at the same time we can see it spike to a total of 166 MB/s, but that's about it.

I've even created a report on the Citrix Xenserver bugtracker but they never bothered to do anything about it. -

@nikade Hi, no storage migration; I only move one VM from one node to another.

This is exactly what I saw: I almost always see 1.24 or 1.3 Gbit/s, which equals roughly 166 MB/s. -
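For reference, a quick sketch of the unit conversion behind these figures. It assumes decimal units (1 Gbit = 1000 Mbit, 1 MB = 10^6 bytes); the function names are only illustrative.

```python
def gbit_to_mbyte_per_s(gbit_per_s: float) -> float:
    """Convert Gbit/s to MB/s (decimal units, 8 bits per byte)."""
    return gbit_per_s * 1000 / 8


def mbyte_to_gbit_per_s(mbyte_per_s: float) -> float:
    """Convert MB/s to Gbit/s (decimal units, 8 bits per byte)."""
    return mbyte_per_s * 8 / 1000


if __name__ == "__main__":
    # Figures quoted in the thread
    for rate in (1.24, 1.3):
        print(f"{rate} Gbit/s = {gbit_to_mbyte_per_s(rate):.1f} MB/s")
    print(f"166 MB/s = {mbyte_to_gbit_per_s(166):.3f} Gbit/s")
```

So 166 MB/s corresponds to a bit over 1.3 Gbit/s on the wire, which is in line with both observations.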

Live memory migration (without storage) can reach far higher speeds. I remember we had some numbers close to 8 Gbit/s.

-

@olivierlambert hi,

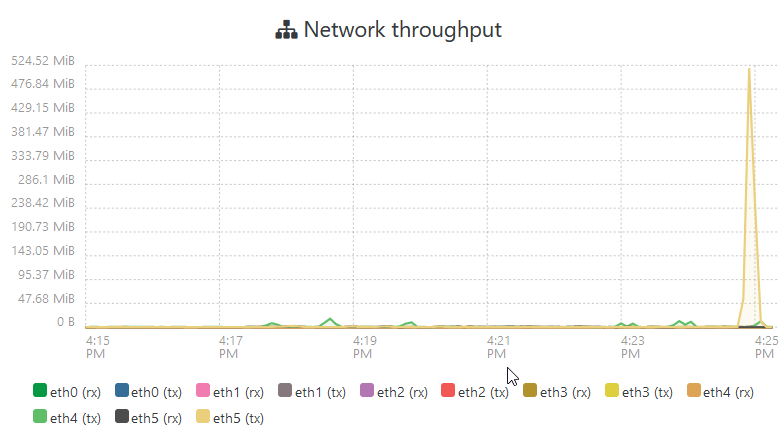

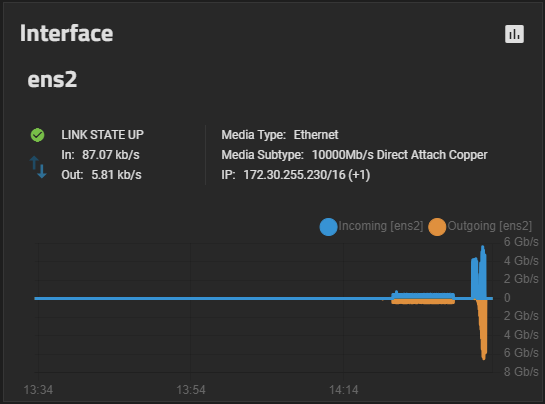

This is the iperf3 performance between two nodes, 141 and 143, over the migration network, which is the 10.111.178 network.

I'm sure the MTU is 9000 on the switch, the NICs, and the related sub-interface for the migration network ...
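To make the iperf3 baseline easy to repeat and compare against migration throughput, here is a minimal sketch that runs an iperf3 client with JSON output (`-J`) and reads the receiver-side rate. It assumes a TCP test and an iperf3 server already running on the target host; the helper names and the address passed on the command line are placeholders, not something from the screenshots.

```python
import json
import subprocess
import sys


def received_gbit_per_s(report: dict) -> float:
    """Receiver-side throughput in Gbit/s from an iperf3 JSON report (TCP test)."""
    return report["end"]["sum_received"]["bits_per_second"] / 1e9


def run_iperf3(server: str, seconds: int = 10, streams: int = 1) -> dict:
    """Run an iperf3 client against `server` and return the parsed JSON report."""
    cmd = ["iperf3", "-c", server, "-t", str(seconds), "-P", str(streams), "-J"]
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return json.loads(result.stdout)


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python iperf_check.py <server-ip-on-migration-network>
    report = run_iperf3(sys.argv[1])
    print(f"{received_gbit_per_s(report):.2f} Gbit/s received")
```

Running it with one stream and then with several (`streams=4`, for example) shows whether a single TCP flow is the limit or the link itself.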

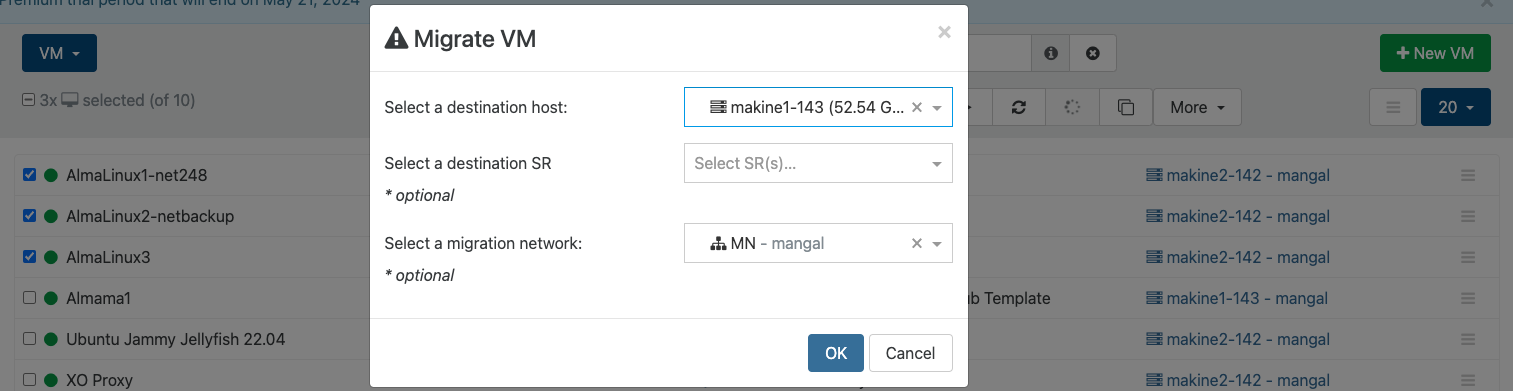

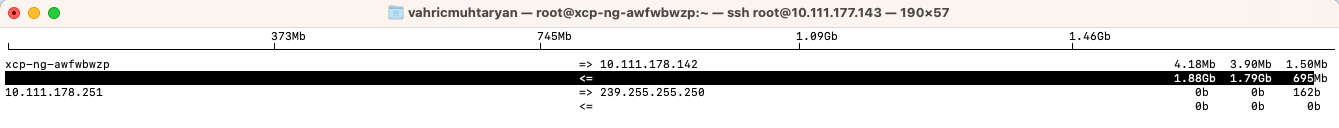

3 VM migrations are kicked off

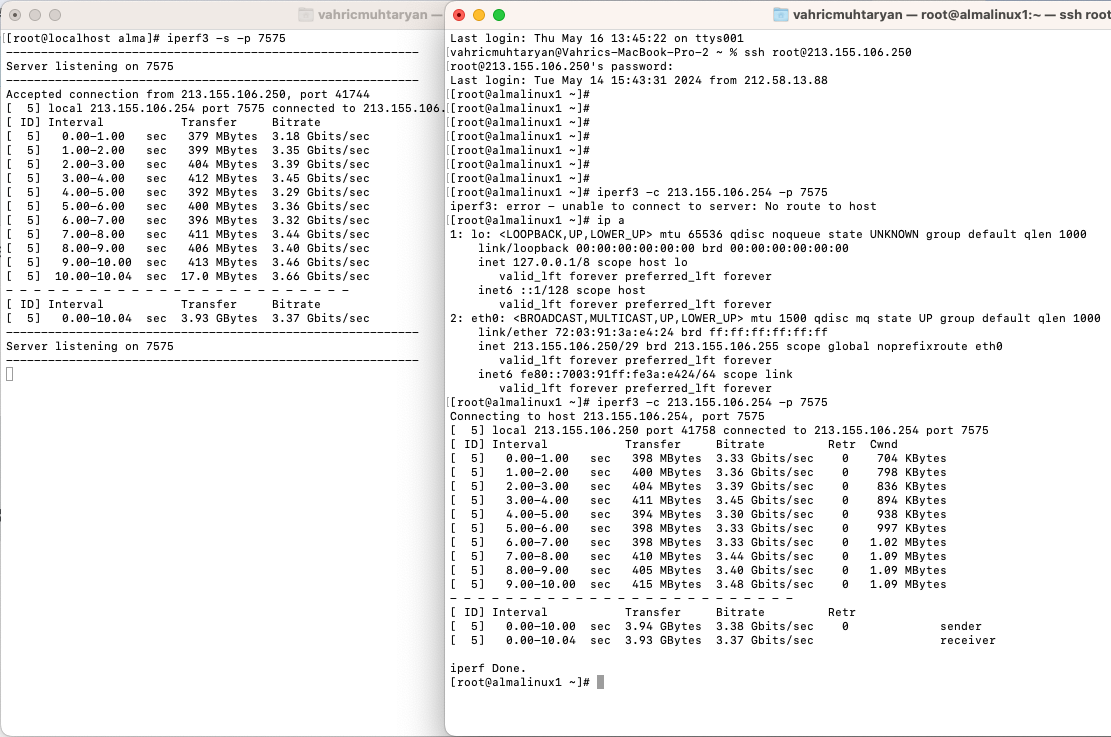

No luck reaching high throughput.

Between two VMs at MTU 1500, I got this

So I can't figure out what the issue is here. Maybe Dom0 limits traffic for VMs, but nothing should limit the migration process, should it?

Thanks

VM -

When I live migrate a VM without disk (VDI on shared SR) we're seeing ~4Gbit/s with 2x10G in a bond0 with MTU 9000, within the same broadcast domain/subnet:

We've seen around 9 Gbit/s max, but that's when we migrate a large VM with a lot of memory.

-

Increasing the memory of Dom0 had no effect ...

Increasing the vCPUs of Dom0 had no effect (not all of its vCPUs were in use anyway, but I just wanted to try).

I ran stress-ng to load the VMs' memory, but it had no effect.

No pinning or NUMA config is needed, because there is a single CPU and the L3 cache is shared by all cores.

The MTU size has no effect either: it works the same with MTU 1500 and 9000.

I found and changed tcp_limit_output_bytes, but it did not help me.

The only thing that has an effect is changing the hardware:

My Intel servers are Intel(R) Xeon(R) CPU E5-2620 v3 @ 2.40GHz --> 0.9 Gbit/s per migration

My AMD servers are AMD EPYC 7502P 32-Core Processor --> 1.76 Gbit/s per migration

Do you have any advice?
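To put those per-migration rates in perspective, here is a rough sketch of how long a single pass over a VM's memory would take at each rate. The 16 GiB VM size is a made-up example, and the result is only a lower bound: as far as I know, live migration re-sends pages that the guest dirties during the copy, so real migrations take longer.

```python
def transfer_seconds(ram_gib: float, gbit_per_s: float) -> float:
    """Lower-bound time in seconds to copy `ram_gib` GiB once at `gbit_per_s`."""
    bits = ram_gib * 1024**3 * 8
    return bits / (gbit_per_s * 1e9)


if __name__ == "__main__":
    vm_ram_gib = 16  # hypothetical VM size, not from the thread
    for label, rate in (("Xeon E5-2620 v3", 0.9), ("EPYC 7502P", 1.76)):
        secs = transfer_seconds(vm_ram_gib, rate)
        print(f"{label}: at least {secs:.0f} s for {vm_ram_gib} GiB at {rate} Gbit/s")
```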

-

Hello,

So, does anyone know why I can't go over 145 MB/s, and how to increase the VDI transfer speed?

(I have 5 Gb/s between nodes.)

Thanks !

-

Hi,

I think it's related to how it's transferred, I wouldn't expect much more.

-

@henri9813 said in What should i expect from VM migration performance from Xen-ng ?:

> Hello,
>
> So, does anyone know why I can't go over 145 MB/s, and how to increase the VDI transfer speed?
>
> (I have 5 Gb/s between nodes.)
>
> Thanks !

Are you migrating storage or just the VM?

How can you have 5Gb/s between the nodes? What kind of networking setup are you using? -

Hello,

Thanks for your answer @nikade

I migrate both storage and vm.

I have 25Gb/s nic, but I have a 5Gb/s limitation at switch level.

But I found an explanation for this in another topic: it could be related to SMAPIv1.

Best regards

-

@henri9813 said in What should i expect from VM migration performance from Xen-ng ?:

> Hello,
>
> Thanks for your answer @nikade
>
> I migrate both storage and vm.
>
> I have 25Gb/s nic, but I have a 5Gb/s limitation at switch level.
>
> But I found an explanation for this in another topic: it could be related to SMAPIv1.
>
> Best regards

Yeah, when migrating storage the speeds are pretty much the same no matter what NIC you have...

This is a known limitation and I hope it will be resolved soon. -

I've spent a bunch of time trying to find some dark magic to make the VDI migration faster; so far nothing. My VM (memory) migration is fast enough that I'm not concerned right now, and I don't have any testing to show for it.

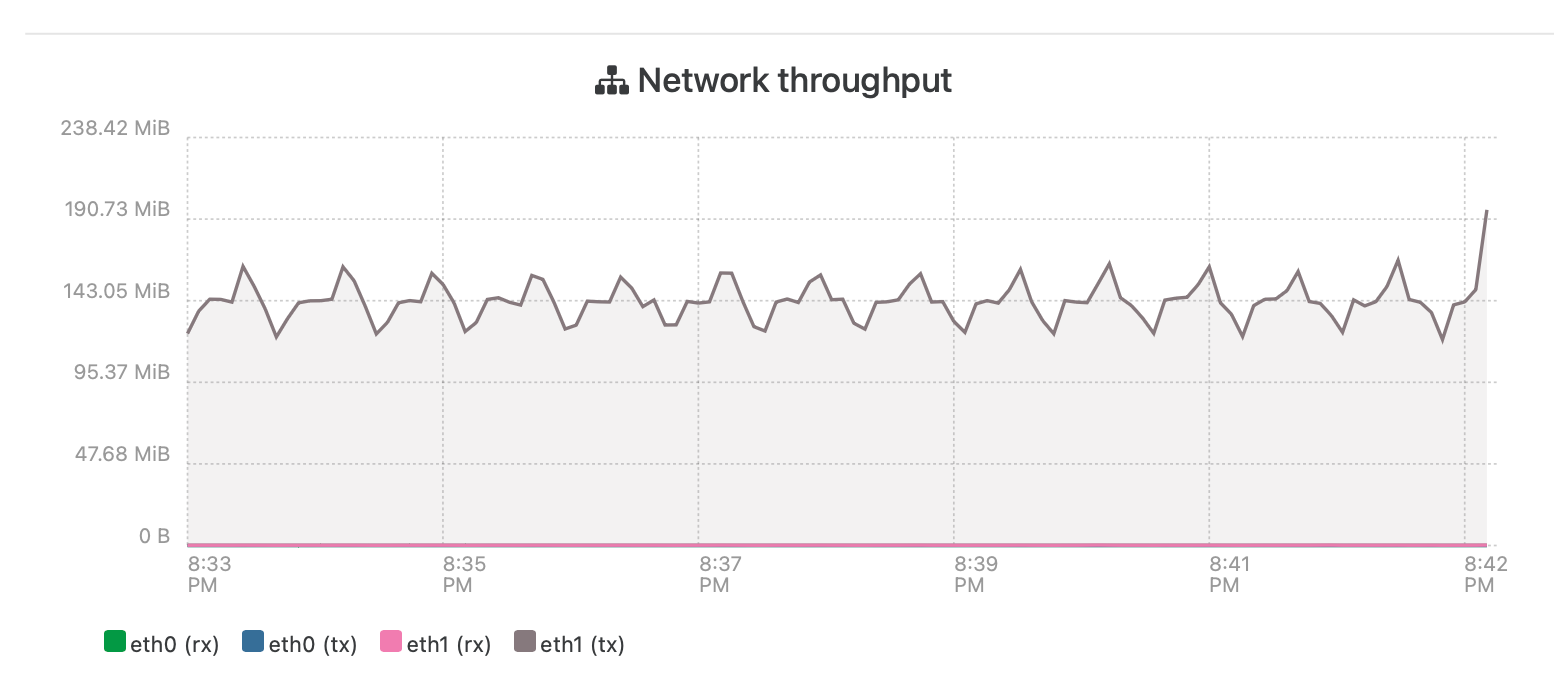

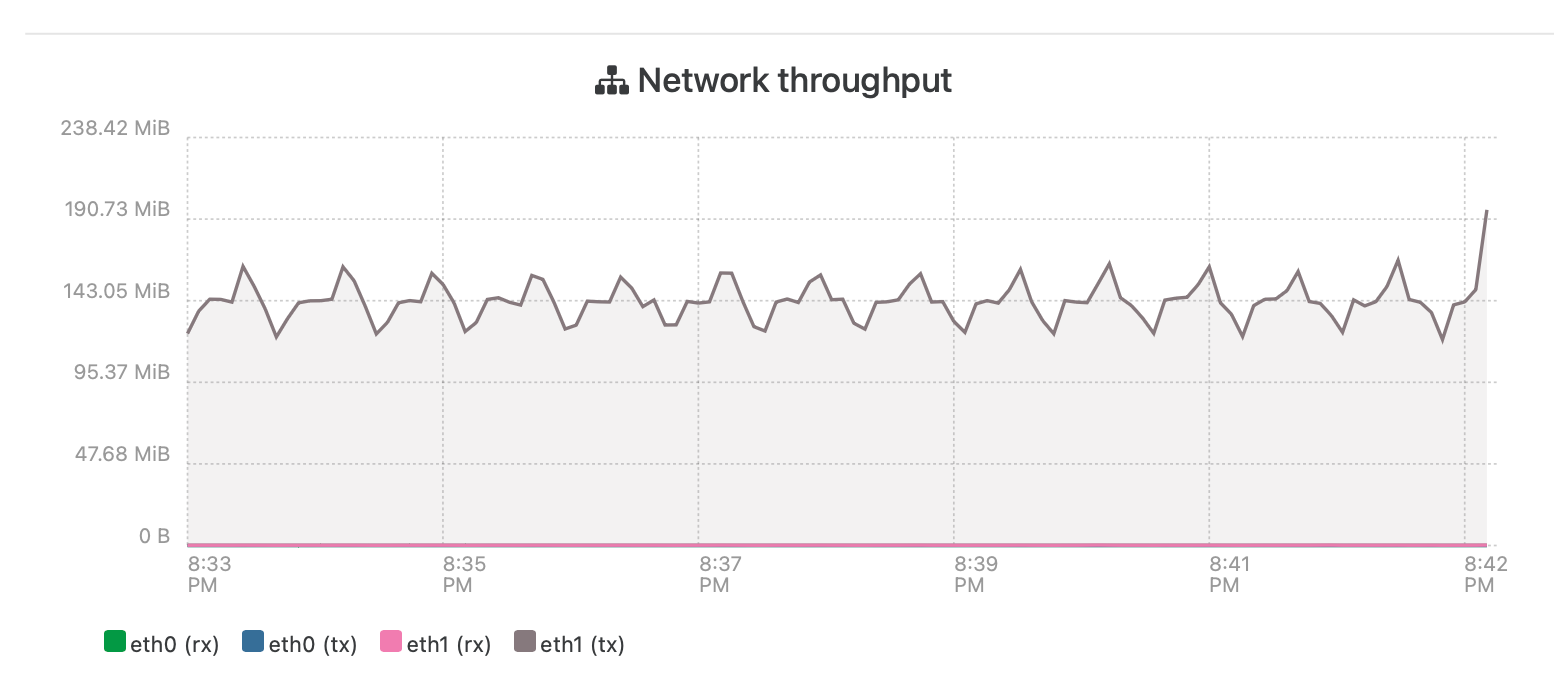

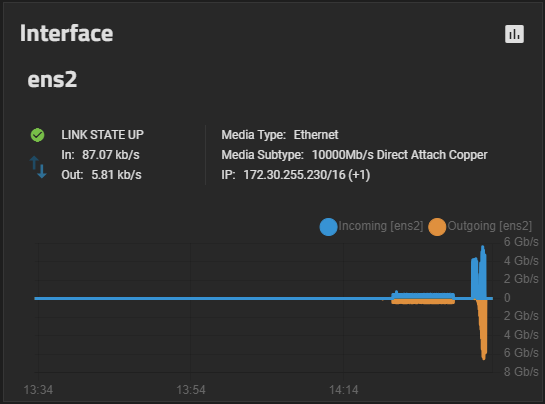

Currently migrating the test VDI from storage1 to storage2 (again) and getting an average of 400/400mbps (lower case m and b). If I do three VDI at once, I can get over a gigabit and sometimes close to 2 gigabit.

It's either SMAPIv1 or it is a file "block" size issue, bigger blocks can get me benchmarks up to 600MBps to almost 700MBps (capital M and B) on my slow storage over a 10gbps network. Testing this with XCP-NG 8.3 release to see if anything changed from the Beta, so far all is the same. Also all testing done with thin provisioned file shares (SMB and NFS). If I could get half my maximum tests for the VDI migration, I'd be happy. In fact I'm extremely pleased that my storage can go as fast as it is showing, it's all old stuff on SATA.

I have a whole thread on this testing if you want to read more.

You can see the migrate which was 400/400 and then the benchmark across the ethernet interface of my Truenas, this example was migrate from SMB to NFS, and benchmark on the NFS. Settings for that NFS are in the thread mentioned and certainly my fastest non-real world performance to date.

-

@Greg_E said in What should i expect from VM migration performance from Xen-ng ?:

> I've spent a bunch of time trying to find some dark magic to make the VDI migration faster; so far nothing. My VM (memory) migration is fast enough that I'm not concerned right now, and I don't have any testing to show for it.
>
> Currently migrating the test VDI from storage1 to storage2 (again) and getting an average of 400/400mbps (lower case m and b). If I do three VDI at once, I can get over a gigabit and sometimes close to 2 gigabit.
>
> It's either SMAPIv1 or it is a file "block" size issue, bigger blocks can get me benchmarks up to 600MBps to almost 700MBps (capital M and B) on my slow storage over a 10gbps network. Testing this with XCP-NG 8.3 release to see if anything changed from the Beta, so far all is the same. Also all testing done with thin provisioned file shares (SMB and NFS). If I could get half my maximum tests for the VDI migration, I'd be happy. In fact I'm extremely pleased that my storage can go as fast as it is showing, it's all old stuff on SATA.
>
> I have a whole thread on this testing if you want to read more.
>
> You can see the migrate which was 400/400 and then the benchmark across the ethernet interface of my Truenas, this example was migrate from SMB to NFS, and benchmark on the NFS. Settings for that NFS are in the thread mentioned and certainly my fastest non-real world performance to date.

That's impressive!

We're not seeing speeds as high as you are. We have 3 different storages, mostly doing NFS though. We're still running 8.2.0, but I don't really think it matters, as the issue is most likely tied to SMAPIv1.

We also noted that it goes a bit faster when doing 3-4 VDIs in parallel, but the individual speed per migration is about the same.

-

I'm doing upgrades on both XCP-NG and Truenas, when they are done I have 4 small VMs that I'm going to fire up at the same time and see what I can see. I might be able to hit 2gbps reads while all 4 are booting.

I have one last thing to try in my speed testing, going to see if increasing the RAM for each host makes a difference. Probably not since each already has a decent amount (defaults go up when you pass a certain amount of available). I read somewhere that going up to 16GB for each host might help, with my lab being so lightly used, I might try going up to 32GB for fun. I have lots of slots in my production system where I can add RAM if this helps on lab, the lab machines are full.

-

@Greg_E said in What should i expect from VM migration performance from Xen-ng ?:

> I'm doing upgrades on both XCP-NG and Truenas, when they are done I have 4 small VMs that I'm going to fire up at the same time and see what I can see. I might be able to hit 2gbps reads while all 4 are booting.
>
> I have one last thing to try in my speed testing, going to see if increasing the RAM for each host makes a difference. Probably not since each already has a decent amount (defaults go up when you pass a certain amount of available). I read somewhere that going up to 16GB for each host might help, with my lab being so lightly used, I might try going up to 32GB for fun. I have lots of slots in my production system where I can add RAM if this helps on lab, the lab machines are full.

We usually set our busy hosts to 16 GB for the dom0. It does make a stability difference in our case (we can have 30-40 running VMs per host), especially when there is a bit of load inside the VMs.

Normal hosts get 4-8 GB of RAM depending on the total amount of RAM in the host: 4 GB on the ones with 32 GB, 6 GB for 64 GB, and 8 GB and upwards for the ones with 128 GB or more. -
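That rule of thumb can be written down as a small helper. This is only a sketch of the sizing described above, not an official XCP-ng recommendation: the function name is made up, and the cut-offs between the quoted host sizes (what to do at 48 GB or 96 GB, say) are my assumption.

```python
def suggested_dom0_gb(host_ram_gb: int, busy: bool = False) -> int:
    """Suggested dom0 memory in GB, following the rule of thumb quoted above."""
    if busy:
        return 16  # busy hosts (30-40 running VMs) get 16 GB
    if host_ram_gb >= 128:
        return 8   # "8 GB and upwards"; 8 is used here as the floor
    if host_ram_gb >= 64:
        return 6
    return 4       # hosts around 32 GB


if __name__ == "__main__":
    for ram in (32, 64, 128, 256):
        print(f"{ram} GB host -> {suggested_dom0_gb(ram)} GB dom0")
```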

BTW, the ram increase made zero difference in the VDI migration times.

I may still change the RAM for each host in production, but I only have like 12 VMs right now and probably not many more to create in my little system. Might be useful if I ever get down to a single host because of a catastrophe. Currently running what it chose for default at 7.41 GiB, roughly doubled that in my lab to see what might happen.

Just got my VMware lab started, so all this other stuff is going on the back burner for a while. I will say I like the basic ESXi interface that they give you; I can see why smaller systems may not bother buying vCenter. I hope XO Lite gives us the same amount of function when it gets done (or more).

-

@Greg_E Yeah, I can imagine. I don't think the RAM does much except give you some "margin" under heavier load. With 12 VMs I wouldn't bother increasing it from the default values.

Yeah, the management interface in ESXi is nice; I think the standalone interface is almost better than vCenter. I'm pretty sure XO Lite will be able to do more when it is done; for example, you'll be able to manage a small pool with it.
