Timestamp lost in Continuous Replication

-

Ping @florent

-

And they are full.

-

@ph7 Do you know which commit you were on previously? Also, it may help if you can share the full backup log.

-

I was on 5bdd7, installed at 202603091803.

{ "data": { "mode": "delta", "reportWhen": "failure" }, "id": "1773474108084", "jobId": "3dcd13a8-de8b-47b6-945b-12dbad9c6234", "jobName": "ContRep", "message": "backup", "scheduleId": "522d611e-7cd9-4fe0-a9e1-b409927cd8c8", "start": 1773474108084, "status": "success", "infos": [ { "data": { "vms": [ "b1940325-7c09-7342-5a90-be2185c6d5b9", "86ab334a-92dc-324c-0c42-43aad3ae3bc2", "0f5c4931-a468-e75d-fa54-e1f9da0227a1" ] }, "message": "vms" } ], "tasks": [ { "data": { "type": "VM", "id": "b1940325-7c09-7342-5a90-be2185c6d5b9", "name_label": "PiHole wifi" }, "id": "1773474110343", "message": "backup VM", "start": 1773474110343, "status": "success", "tasks": [ { "id": "1773474111649", "message": "snapshot", "start": 1773474111649, "status": "success", "end": 1773474113141, "result": "d4b0607f-0837-c7ae-5c2d-6426995470bd" }, { "data": { "id": "4f2f7ae2-024a-9ac7-add4-ffe7d569cae7", "isFull": true, "name_label": "Q1-ContRep", "type": "SR" }, "id": "1773474113142", "message": "export", "start": 1773474113142, "status": "success", "tasks": [ { "id": "1773474114002", "message": "transfer", "start": 1773474114002, "status": "success", "tasks": [ { "id": "1773474189159", "message": "target snapshot", "start": 1773474189159, "status": "success", "end": 1773474190075, "result": "OpaqueRef:fa356b5f-4b25-d3e6-5507-6ed81c32b1d8" } ], "end": 1773474190075, "result": { "size": 4299161600 } } ], "end": 1773474190682 } ], "end": 1773474191523 }, { "data": { "type": "VM", "id": "86ab334a-92dc-324c-0c42-43aad3ae3bc2", "name_label": "Home Assistant" }, "id": "1773474191534", "message": "backup VM", "start": 1773474191534, "status": "success", "tasks": [ { "id": "1773474191707", "message": "snapshot", "start": 1773474191707, "status": "success", "end": 1773474193196, "result": "c3f038c3-7ca9-cbbb-9f84-61e1fd30c9d5" }, { "data": { "id": "4f2f7ae2-024a-9ac7-add4-ffe7d569cae7", "isFull": true, "name_label": "Q1-ContRep", "type": "SR" }, "id": "1773474193196:0", "message": "export", "start": 1773474193196, "status": "success", "tasks": [ { "id": "1773474194123", "message": "transfer", "start": 1773474194123, "status": "success", "tasks": [ { "id": "1773474462529", "message": "target snapshot", "start": 1773474462529, "status": "success", "end": 1773474463434, "result": "OpaqueRef:c13f3cab-29c8-4ef0-253f-de5998580cd9" } ], "end": 1773474463434, "result": { "size": 15548284928 } } ], "end": 1773474464311 } ], "end": 1773474466186 }, { "data": { "type": "VM", "id": "0f5c4931-a468-e75d-fa54-e1f9da0227a1", "name_label": "Sync Mate" }, "id": "1773474466193", "message": "backup VM", "start": 1773474466193, "status": "success", "tasks": [ { "id": "1773474466371", "message": "snapshot", "start": 1773474466371, "status": "success", "end": 1773474470399, "result": "36a17271-1c2f-4b26-0d86-dc0faf27fa17" }, { "data": { "id": "4f2f7ae2-024a-9ac7-add4-ffe7d569cae7", "isFull": true, "name_label": "Q1-ContRep", "type": "SR" }, "id": "1773474470399:0", "message": "export", "start": 1773474470399, "status": "success", "tasks": [ { "id": "1773474471561", "message": "transfer", "start": 1773474471561, "status": "success", "tasks": [ { "id": "1773476263789", "message": "target snapshot", "start": 1773476263789, "status": "success", "end": 1773476264925, "result": "OpaqueRef:88bd55f4-64ea-fb16-f389-4b9f42ac459f" } ], "end": 1773476264925, "result": { "size": 105526591488 } } ], "end": 1773476267354 } ], "end": 1773476268187 } ], "end": 1773476268187 }

The latest XCP-ng update included a change regarding NTP:

"XAPI, XCP-ng's control plane, was updated to version 26.1.3. Added API for controlling NTP."

This might be a long shot, and I don't know if it has anything to do with my "problem".

The timestamp on ContRep VMs has always been in UTC.

I'm using Stockholm (SE) as my timezone. Other bugs regarding the presentation of time have been fixed, but not this one.
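For anyone comparing times across the UI, the job logs and the replica names: the `start`/`end` fields in the ContRep log above appear to be milliseconds since the Unix epoch, which is UTC by definition. A minimal Python sketch (standard library only, assuming the IANA time zone database is available to `zoneinfo`) that shows the same instant in UTC and in Stockholm time; `log_time` is a hypothetical helper name:

```python
from datetime import datetime, timedelta, timezone
from zoneinfo import ZoneInfo

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
STOCKHOLM = ZoneInfo("Europe/Stockholm")


def log_time(ms: int) -> tuple[datetime, datetime]:
    """Convert a log timestamp in epoch milliseconds to the same instant
    in UTC and in Europe/Stockholm (CET, UTC+1; CEST, UTC+2 in summer)."""
    utc = EPOCH + timedelta(milliseconds=ms)  # exact integer math, no float rounding
    return utc, utc.astimezone(STOCKHOLM)


# "start" of the ContRep job log posted above
utc, local = log_time(1773474108084)
print(utc.isoformat())    # 2026-03-14T07:41:48.084000+00:00
print(local.isoformat())  # 2026-03-14T08:41:48.084000+01:00
```

Both values are the same instant; only the presentation differs, which is why a UTC stamp in a name looks one hour off from Stockholm time in winter and two hours off in summer.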

I did a rollback to 5bdd7 and got the date back, and deltas as well.

I did get a snapshot on one of the CR VMs with the 449e7 version.

I don't get one with 5bdd7.


-

@ph7 Yes, this is expected, even if we haven't had time to communicate about it yet: https://github.com/vatesfr/xen-orchestra/pull/9524

The goal is to have more symmetry between the source (one VM with snapshots) and the replica (one VM with snapshots). The end goal is to use this to be able to reverse a PRD, and to allow advanced scenarios like VM on edge site => replica on central site => backup on central servers.

But it should not do full backups; it should respect the delta/full chain.

-

@florent Florent, I can see the benefits of a unified VM name, but could you at least put the timestamp in a note on the VM?

It is important to know which point in time a replica VM represents, in order to choose the failover option wisely.

-

@Pilow The timestamp is on the snapshot, but you're right: we can add a note on the VM with the latest replication information.

Note that the older replicated VMs will be purged once we are sure they don't hold any useful data, so you will have only one replicated VM, with multiple snapshots.
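With a single replica VM holding several snapshots, choosing a failover point (Pilow's concern above) comes down to picking the newest snapshot taken before the incident. A hypothetical Python sketch: the snapshot names and times are made up, `pick_failover_point` is an illustrative helper, and nothing here calls the Xen Orchestra or XAPI APIs:

```python
from datetime import datetime, timezone
from typing import Optional


def pick_failover_point(
    snapshots: dict[str, datetime], incident: datetime
) -> Optional[str]:
    """Return the name of the newest snapshot taken strictly before
    `incident`, or None if every snapshot is newer than the incident."""
    before = [(taken, name) for name, taken in snapshots.items() if taken < incident]
    if not before:
        return None
    return max(before)[1]  # tuples compare by timestamp first


# Hypothetical snapshots of one replica VM (timezone-aware, UTC)
snaps = {
    "snap-a": datetime(2026, 3, 16, 8, 0, tzinfo=timezone.utc),
    "snap-b": datetime(2026, 3, 17, 8, 0, tzinfo=timezone.utc),
    "snap-c": datetime(2026, 3, 18, 8, 0, tzinfo=timezone.utc),
}
incident = datetime(2026, 3, 17, 22, 30, tzinfo=timezone.utc)
print(pick_failover_point(snaps, incident))  # snap-b
```

Using timezone-aware datetimes throughout keeps the comparison correct regardless of whether the stamps were recorded in UTC or local time.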

-

@florent Oh, nice.

We had as many "VMs with a timestamp in the name" as the number of replicas, and multiple snapshots on the source VM.

Now we have "one replica VM with multiple snapshots"? Veeam-replica-style...

Do multiple snapshots persist on the source VM too? If so, that's nice in concept.

But when your replica is on lvmoiscsi, not so nice.

PS: I didn't upgrade to the latest XOA/XCP patches yet.

-

We had as many "VMs with a timestamp in the name" as the number of replicas, and multiple snapshots on the source VM.

Now we have "one replica VM with multiple snapshots"? Veeam-replica-style...

We didn't look at Veeam, but it's reassuring to see that we are converging toward the solutions used elsewhere.

It shouldn't change anything on the source.

I am currently doing more tests to see if we missed something.

Edit: as an additional benefit, it should use less space on the target if you have a retention > 1, since we will only have one active disk.

-

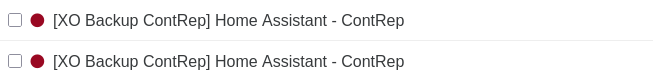

Hello everyone,

I’m not sure if my information is useful, but I’m experiencing the same problem. I am using continuous replication between two servers with a retention of 4.

Previously, four VM replicas were created, each with a timestamp in the name. With the current version, four VMs are created with identical names.

My environment:

XO: from source commit 598ab

XCP-ng: 8.3.0 with the latest patches

My backup job:

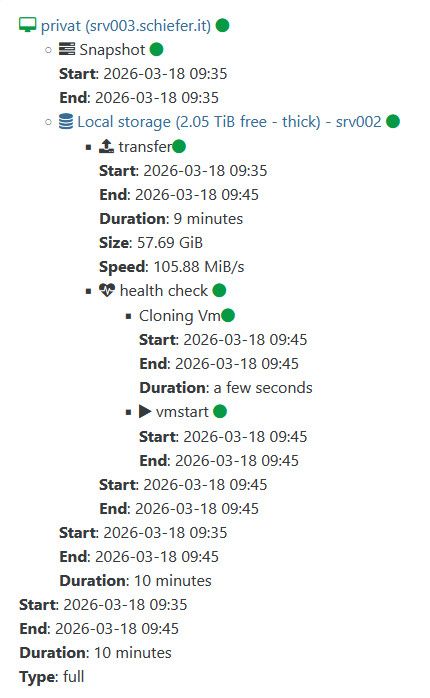

Name of backup job: replicate_to_srv002

Source server: srv003

Target server: srv002

VM name: privat

Retention: 4

Result on the target server:

4 VMs with the name "[XO Backup replicate_to_srv002] privat - replicate_to_srv002", where only the newest one contains a snapshot named "privat - replicate_to_srv002 - (20260318T083542Z)".

Additionally, full backups are created on every run of the backup job, and no deltas are being used.
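The snapshot name quoted above ends with a compact UTC stamp (`YYYYMMDDTHHMMSSZ`, where the trailing `Z` means UTC), which is where the replica's point in time can still be read. A small Python sketch that extracts it; the regex assumes the stamp is the last thing in the name, inside parentheses, as in this one example, and `snapshot_time` is a hypothetical helper name:

```python
import re
from datetime import datetime, timezone
from typing import Optional

# Trailing "(YYYYMMDDTHHMMSSZ)" stamp, as in the snapshot name quoted above.
STAMP_RE = re.compile(r"\((\d{8}T\d{6}Z)\)\s*$")


def snapshot_time(name: str) -> Optional[datetime]:
    """Return the UTC time encoded at the end of a snapshot name, or None."""
    match = STAMP_RE.search(name)
    if match is None:
        return None
    # strptime matches the "Z" as a literal character, so attach UTC explicitly.
    naive = datetime.strptime(match.group(1), "%Y%m%dT%H%M%SZ")
    return naive.replace(tzinfo=timezone.utc)


print(snapshot_time("privat - replicate_to_srv002 - (20260318T083542Z)"))
# 2026-03-18 08:35:42+00:00
```

The replica VM names themselves no longer carry a stamp, so the same call on "[XO Backup replicate_to_srv002] privat - replicate_to_srv002" returns `None`.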

If I can help with any additional information, I’d be happy to do so.

Best regards,

Simon

-

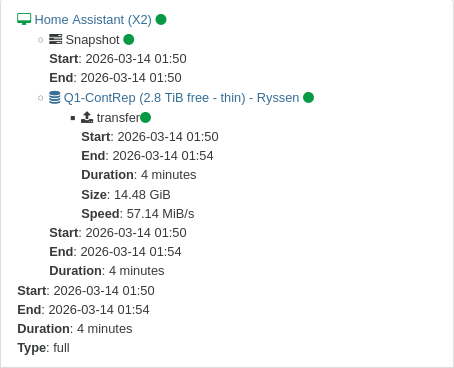

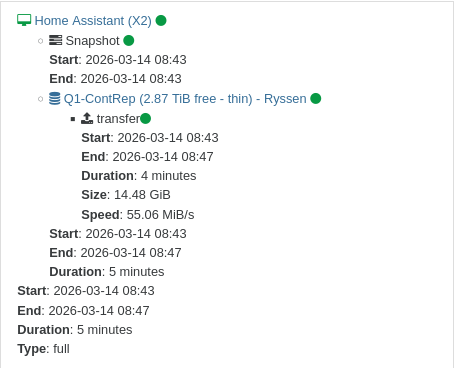

@Pilow

Actually, it looks like this:

Edit: log of the last run:

{ "data": { "mode": "delta", "reportWhen": "failure" }, "id": "1773822934377", "jobId": "a95ac100-0e20-49c5-9270-c0306ee2852f", "jobName": "replicate_to_srv002", "message": "backup", "scheduleId": "1014584a-228c-4049-8912-51ab1b24925a", "start": 1773822934377, "status": "success", "infos": [ { "data": { "vms": [ "224a73db-9bc6-13d6-cc8e-0bf22dbede73" ] }, "message": "vms" } ], "tasks": [ { "data": { "type": "VM", "id": "224a73db-9bc6-13d6-cc8e-0bf22dbede73", "name_label": "privat" }, "id": "1773822936247", "message": "backup VM", "start": 1773822936247, "status": "success", "tasks": [ { "id": "1773822937378", "message": "snapshot", "start": 1773822937378, "status": "success", "end": 1773822940361, "result": "d0ba1483-f5ae-ce72-fb4f-dbd9eafbf272" }, { "data": { "id": "8205e6c4-4d8f-69d9-6315-9ee89af8e307", "isFull": true, "name_label": "Local storage", "type": "SR" }, "id": "1773822940361:0", "message": "export", "start": 1773822940361, "status": "success", "tasks": [ { "id": "1773822942354", "message": "transfer", "start": 1773822942354, "status": "success", "tasks": [ { "id": "1773823497635", "message": "target snapshot", "start": 1773823497635, "status": "success", "end": 1773823500290, "result": "OpaqueRef:53bceb07-a69c-504d-e824-28f5384cb763" } ], "end": 1773823500290, "result": { "size": 61941481472 } }, { "id": "1773823501512", "message": "health check", "start": 1773823501512, "status": "success", "tasks": [ { "id": "1773823501515", "message": "cloning-vm", "start": 1773823501515, "status": "success", "end": 1773823504720, "result": "OpaqueRef:43e9644f-fc99-963a-4a54-da3b845e823b" }, { "id": "1773823504722", "message": "vmstart", "start": 1773823504722, "status": "success", "end": 1773823545662 } ], "end": 1773823549312 } ], "end": 1773823549312 } ], "end": 1773823549319 } ], "end": 1773823549319 }
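Both logs posted in this thread carry an `isFull` flag on each `export` task and a byte count on each `transfer` task, which is a quick way to confirm whether a run sent full exports or deltas. A minimal Python sketch that walks that structure; it relies only on the keys visible in these two logs (not on a documented schema), assumes one export per VM as here, and the function names are hypothetical:

```python
import json
from typing import Any


def summarize_backup_log(log: dict[str, Any]) -> list[dict[str, Any]]:
    """One summary per "backup VM" task: VM name, full/delta flag, bytes sent."""
    summaries = []
    for vm_task in log.get("tasks", []):
        if vm_task.get("message") != "backup VM":
            continue
        summary = {"vm": vm_task["data"]["name_label"], "is_full": None, "size": None}
        for sub in vm_task.get("tasks", []):
            if sub.get("message") != "export":
                continue  # skip "snapshot" and other sibling tasks
            summary["is_full"] = sub.get("data", {}).get("isFull")
            for step in sub.get("tasks", []):
                if step.get("message") == "transfer":  # ignore e.g. "health check"
                    summary["size"] = step.get("result", {}).get("size")
        summaries.append(summary)
    return summaries


def load_log(path: str) -> dict[str, Any]:
    """Read a backup log previously saved as a JSON file."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


# Trimmed-down copy of the log above (only the keys the function reads)
sample = {
    "tasks": [{
        "message": "backup VM",
        "data": {"type": "VM", "name_label": "privat"},
        "tasks": [
            {"message": "snapshot"},
            {"message": "export", "data": {"isFull": True}, "tasks": [
                {"message": "transfer", "result": {"size": 61941481472}},
                {"message": "health check"},
            ]},
        ],
    }]
}
for s in summarize_backup_log(sample):
    kind = {True: "full", False: "delta"}.get(s["is_full"], "unknown")
    print(f'{s["vm"]}: {kind}, {s["size"]} bytes')
# privat: full, 61941481472 bytes
```

Run against the ContRep log posted earlier, the same function would report all three VMs with `is_full` set to `True`, matching the behaviour both posters describe.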

-

@florent

Yes, that is absolutely correct. I have a pool with two members without shared storage. Some VMs run on the master, and some on the second pool member. I replicate between the pool members so that, if necessary, I can start the VMs on the other member. This may not be best practice.

-

@kratos You probably heard the sound of my head hitting my desk when I found the cause.

The fix is in review; you will be able to use it in a few hours.

-

@florent

I’m a developer myself, so I can totally relate: just when you think everything is working perfectly, someone like me comes along.

I’m really glad I could help contribute to finding a solution, and I’ll report back once I’ve tested the new commit. Thanks a lot for your work.

However, this does raise the question for me: is my use case for continuous replication really that unusual?
