-

LINSTOR can be reached over NFS or iSCSI, if I remember correctly. Your question is more for LINSTOR than for XCP-ng.

-

@olivierlambert NFS and iSCSI have a single point of failure. Yes, it is possible to deploy multipath iSCSI, but it is too complicated. I like CEPH RBD because it does not have a single point of failure.

So I'm looking for something similar. From my point of view XOSTOR is a good idea, but in some cases there is no need to use all nodes as XCP-ng hosts. For example, storage cluster nodes do not need a large amount of RAM or a fast modern CPU.

I think the best solution in my case would be to deploy the XOSTOR controller in the XCP-ng cluster and connect it to a separate storage cluster.

At first glance, I assume it should be possible to connect the storage cluster to XCP-ng with this command:

    linstor resource create node1 test1 --diskless

So the basic idea is to use the XCP-ng nodes for linstor-controller/linstor-satellite and the "storage" nodes as linstor-satellite only.
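The split described above could be sketched as a dry run that only prints `linstor resource create` commands: storage-only nodes get a diskful replica, XCP-ng hosts attach disklessly. The node names, resource name, and pool name are hypothetical, and `--diskless` is simply taken from the command quoted above (newer LINSTOR clients may prefer `--drbd-diskless`), so treat this as an untested assumption rather than a supported XOSTOR layout.

```shell
#!/bin/sh
# Dry run: print (do not execute) LINSTOR placement commands.
# All node, resource, and pool names are hypothetical placeholders.

# plan_placement RESOURCE POOL "STORAGE_NODES" "HYPERVISOR_NODES"
plan_placement() (
    resource=$1
    pool=$2
    for node in $3; do
        # Diskful replica on a storage-only satellite.
        echo "linstor resource create $node $resource --storage-pool $pool"
    done
    for node in $4; do
        # Diskless attachment on an XCP-ng host.
        echo "linstor resource create $node $resource --diskless"
    done
)

plan_placement test1 pool1 "stor1 stor2" "xcp1"
```

Printing the commands first makes it easy to review the intended placement before anything touches the cluster.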

-

@splastunov / all.

Just on multipath iSCSI. I had this running for years, with separate switches carrying the 'A side' and 'B side' multipath networks to the iSCSI DotHill's resilient and redundant controllers.

Once you have it configured, it is incredibly solid for what is a very budget solution. It just takes a bit of careful planning up front to make sure you wire up in a way that is properly resilient. Plus reliable monitoring to make sure that you notice when one of the resilient elements fails.

We had a number of switch power supply failures over the 10-year period that were completely transparent to the services running on the connected XCP-ng and XenServer hosts.

Similarly we were able to do a full DotHill controller replacement without any downtime after one of the two controllers failed.

We also replaced the hypervisor hardware a number of times over the lifetime of the platform.

-

@Mark-C Thank you!

Could you please tell me how you scale your storage with iSCSI? What hardware/software are you using for storage? Is it possible to add storage nodes on the fly, or do you have to deploy a new storage cluster every time you grow?

-

@splastunov The DotHill certainly wasn't great for scaling out, because of the age of the firmware and the old-school treatment of the disk arrays.

We did deploy several disk expansion trays that were part populated at purchase as some level of expansion, and operated most of the array as a single underlying pool, which was then split up into iSCSI presented volumes.

So the DotHill controllers and firmware we were using didn't address the storage pool scaling issue that CEPH / GPFS / et al. are designed to solve.

However, some of the newer filer heads that treat their entire available disk array as a consolidated pool and support iSCSI would have that scalability, whilst keeping the hypervisor side relatively straight-forward.

CEPH et al. are also in a much more mature place than they were back in the early 2010s, so if I were doing it again, maybe we would go for a solution architecture based around that. Or XOSTOR, of course!

My intent was to present an iSCSI setup instance as something we found relatively bomb-proof, and post-initial configuration, relatively easy to scale. We provisioned 24 port A and B side network switches with jumbo frame support to give us the scalability to add further pools of hypervisors or storage, as required.

-

The typical hypervisor node was provisioned with at least 4 network ports, but most had 8 to allow trunking/resilience for every connection:

Management + live migration (Isolated)

World (Trunk)

iSCSI-A

iSCSI-B

The DotHills were 4 ports per controller, and the two controllers were configured as:

Management

iSCSI-A

iSCSI-B

Spare

The iSCSI-A and iSCSI-B switches were simple web-managed ProCurves with 24 ports each.

We had this scaled to 12 hypervisor nodes in 3 pools, all running off the same underlying DotHill (dual redundant controller) hardware. We had provisioned some local SSD storage in each hypervisor for any nodes that were struggling for IOPS, but ultimately never needed it.

-

OK we have debugged and improved this process, so including it here if it helps anyone else.

How to migrate resources between XOSTOR (linstor) clusters. This also works with piraeus-operator, which we use for k8s.

Manually moving a LINSTOR resource with thin_send_recv

Migration of data

Commands

    # PV: pvc-6408a214-6def-44c4-8d9a-bebb67be5510
    # S: pgdata-snapshot
    # s: 10741612544B

    #get size
    lvs --noheadings --units B -o lv_size linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000

    #prep
    lvcreate -V 10741612544B --thinpool linstor_group/thin_device -n pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000 linstor_group

    #create snapshot
    linstor --controller original-xostor-server s create pvc-6408a214-6def-44c4-8d9a-bebb67be5510 pgdata-snapshot

    #send
    thin_send linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000_pgdata-snapshot 2>/dev/null | ssh root@new-xostor-server-01 thin_recv linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000 2>/dev/null

Walk-through

Prep migration

    [13:29 original-xostor-server ~]# lvs --noheadings --units B -o lv_size linstor_group/pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000
      26851934208B
    [13:53 new-xostor-server-01 ~]# lvcreate -V 26851934208B --thinpool linstor_group/thin_device -n pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000 linstor_group
      Logical volume "pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000" created.

Create snapshot

    15:35:03] jonathon@jonathon-framework:~$ linstor --controller original-xostor-server s create pvc-12aca72c-d94a-4c09-8102-0a6646906f8d s_test
    SUCCESS:
    Description:
        New snapshot 's_test' of resource 'pvc-12aca72c-d94a-4c09-8102-0a6646906f8d' registered.
    Details:
        Snapshot 's_test' of resource 'pvc-12aca72c-d94a-4c09-8102-0a6646906f8d' UUID is: 3a07d2fd-6dc3-4994-b13f-8c3a2bb206b8
    SUCCESS:
        Suspended IO of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'ovbh-vprod-k8s04-worker02' for snapshot
    SUCCESS:
        Suspended IO of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'original-xostor-server' for snapshot
    SUCCESS:
        Took snapshot of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'ovbh-vprod-k8s04-worker02'
    SUCCESS:
        Took snapshot of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'original-xostor-server'
    SUCCESS:
        Resumed IO of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'ovbh-vprod-k8s04-worker02' after snapshot
    SUCCESS:
        Resumed IO of '[pvc-12aca72c-d94a-4c09-8102-0a6646906f8d]' on 'original-xostor-server' after snapshot

Migration

    [13:53 original-xostor-server ~]# thin_send /dev/linstor_group/pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000_s_test 2>/dev/null | ssh root@new-xostor-server-01 thin_recv linstor_group/pvc-12aca72c-d94a-4c09-8102-0a6646906f8d_00000 2>/dev/null

Need to yeet errors (`2>/dev/null`) on both ends of the command or it will fail.

This is the same setup process for replica-1 or replica-3. For replica-3 you can target new-xostor-server-01 each time; for replica-1 be sure to spread the volumes out across the nodes.
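Since the per-volume steps above (empty thin LV, snapshot, send) follow the same pattern for every PV, they could be templated. A minimal sketch that only prints the commands for review; the function name `migration_plan` is made up, and the VG/thin-pool names are the ones used in this post, which may differ on other clusters.

```shell
#!/bin/sh
# Dry run: print the per-volume migration commands from this post.
# Nothing is executed; review the output before running any of it.

# migration_plan PV SNAPSHOT SIZE_BYTES SRC_CONTROLLER DST_HOST
migration_plan() (
    pv=$1 snap=$2 size=$3 src=$4 dst=$5
    lv="linstor_group/${pv}_00000"
    echo "# on $dst: create the empty thin LV"
    echo "lvcreate -V ${size}B --thinpool linstor_group/thin_device -n ${pv}_00000 linstor_group"
    echo "# snapshot on the source cluster"
    echo "linstor --controller $src s create $pv $snap"
    echo "# on the source node: stream the snapshot"
    echo "thin_send ${lv}_${snap} 2>/dev/null | ssh root@$dst thin_recv $lv 2>/dev/null"
)

migration_plan pvc-6408a214-6def-44c4-8d9a-bebb67be5510 pgdata-snapshot \
    10741612544 original-xostor-server new-xostor-server-01
```

The size argument is the byte count reported by `lvs --units B` on the source, without the trailing `B`.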

Replica-3 Setup

Explanation

thin_send to new-xostor-server-01, then run commands to force a sync of the data to the replicas.

Commands

    # PV: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    # snapshot: snipeit-snapshot
    # size: 21483225088B

    #get size
    lvs --noheadings --units B -o lv_size linstor_group/pvc-96cbebbe-f827-4a47-ae95-38b078e0d584_00000

    #prep
    lvcreate -V 21483225088B --thinpool linstor_group/thin_device -n pvc-96cbebbe-f827-4a47-ae95-38b078e0d584_00000 linstor_group

    #create snapshot
    linstor --controller original-xostor-server s create pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 snipeit-snapshot
    linstor --controller original-xostor-server s l | grep -e 'snipeit-snapshot'

    #send
    thin_send linstor_group/pvc-96cbebbe-f827-4a47-ae95-38b078e0d584_00000_snipeit-snapshot 2>/dev/null | ssh root@new-xostor-server-01 thin_recv linstor_group/pvc-96cbebbe-f827-4a47-ae95-38b078e0d584_00000 2>/dev/null

    #linstor setup
    linstor --controller new-xostor-server-01 resource-definition create pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 --resource-group sc-74e1434b-b435-587e-9dea-fa067deec898
    linstor --controller new-xostor-server-01 volume-definition create pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 21483225088B --storage-pool xcp-sr-linstor_group_thin_device
    linstor --controller new-xostor-server-01 resource create --storage-pool xcp-sr-linstor_group_thin_device --providers LVM_THIN new-xostor-server-01 pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    linstor --controller new-xostor-server-01 resource create --auto-place +1 pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

    #Run the following on the node with the data. This is the preferred command
    drbdadm invalidate-remote pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

    #Run the following on the node without the data. This is just for reference
    drbdadm invalidate pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

    linstor --controller new-xostor-server-01 r l | grep -e 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584'

    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
      annotations:
        pv.kubernetes.io/provisioned-by: linstor.csi.linbit.com
      finalizers:
        - external-provisioner.volume.kubernetes.io/finalizer
        - kubernetes.io/pv-protection
        - external-attacher/linstor-csi-linbit-com
    spec:
      accessModes:
        - ReadWriteOnce
      capacity:
        storage: 20Gi # Ensure this matches the actual size of the LINSTOR volume
      persistentVolumeReclaimPolicy: Retain
      storageClassName: linstor-replica-three # Adjust to the storage class you want to use
      volumeMode: Filesystem
      csi:
        driver: linstor.csi.linbit.com
        fsType: ext4
        volumeHandle: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
        volumeAttributes:
          linstor.csi.linbit.com/mount-options: ''
          linstor.csi.linbit.com/post-mount-xfs-opts: ''
          linstor.csi.linbit.com/uses-volume-context: 'true'
          linstor.csi.linbit.com/remote-access-policy: 'true'
    ---
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      annotations:
        pv.kubernetes.io/bind-completed: 'yes'
        pv.kubernetes.io/bound-by-controller: 'yes'
        volume.beta.kubernetes.io/storage-provisioner: linstor.csi.linbit.com
        volume.kubernetes.io/storage-provisioner: linstor.csi.linbit.com
      finalizers:
        - kubernetes.io/pvc-protection
      name: pp-snipeit-pvc
      namespace: snipe-it
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 20Gi
      storageClassName: linstor-replica-three
      volumeMode: Filesystem
      volumeName: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

Walk-through

    jonathon@jonathon-framework:~$ linstor --controller new-xostor-server-01 resource-definition create pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 --resource-group sc-74e1434b-b435-587e-9dea-fa067deec898
    SUCCESS:
    Description:
        New resource definition 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' created.
    Details:
        Resource definition 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' UUID is: 772692e2-3fca-4069-92e9-2bef22c68a6f

    jonathon@jonathon-framework:~$ linstor --controller new-xostor-server-01 volume-definition create pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 21483225088B --storage-pool xcp-sr-linstor_group_thin_device
    SUCCESS:
        Successfully set property key(s): StorPoolName
    SUCCESS:
        New volume definition with number '0' of resource definition 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' created.

    jonathon@jonathon-framework:~$ linstor --controller new-xostor-server-01 resource create --storage-pool xcp-sr-linstor_group_thin_device --providers LVM_THIN new-xostor-server-01 pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    SUCCESS:
        Successfully set property key(s): StorPoolName
    INFO:
        Updated pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 DRBD auto verify algorithm to 'crct10dif-pclmul'
    SUCCESS:
    Description:
        New resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on node 'new-xostor-server-01' registered.
    Details:
        Resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on node 'new-xostor-server-01' UUID is: 3072aaae-4a34-453e-bdc6-facb47809b3d
    SUCCESS:
    Description:
        Volume with number '0' on resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on node 'new-xostor-server-01' successfully registered
    Details:
        Volume UUID is: 52b11ef6-ec50-42fb-8710-1d3f8c15c657
    SUCCESS:
        Created resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-01'

    jonathon@jonathon-framework:~$ linstor --controller new-xostor-server-01 resource create --auto-place +1 pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    SUCCESS:
        Successfully set property key(s): StorPoolName
    SUCCESS:
        Successfully set property key(s): StorPoolName
    SUCCESS:
    Description:
        Resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' successfully autoplaced on 2 nodes
    Details:
        Used nodes (storage pool name): 'new-xostor-server-02 (xcp-sr-linstor_group_thin_device)', 'new-xostor-server-03 (xcp-sr-linstor_group_thin_device)'
    INFO:
        Resource-definition property 'DrbdOptions/Resource/quorum' updated from 'off' to 'majority' by auto-quorum
    INFO:
        Resource-definition property 'DrbdOptions/Resource/on-no-quorum' updated from 'off' to 'suspend-io' by auto-quorum
    SUCCESS:
        Added peer(s) 'new-xostor-server-02', 'new-xostor-server-03' to resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-01'
    SUCCESS:
        Created resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-02'
    SUCCESS:
        Created resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-03'
    SUCCESS:
    Description:
        Resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-03' ready
    Details:
        Auto-placing resource: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    SUCCESS:
    Description:
        Resource 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584' on 'new-xostor-server-02' ready
    Details:
        Auto-placing resource: pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

At this point

    jonathon@jonathon-framework:~$ linstor --controller new-xostor-server-01 v l | grep -e 'pvc-96cbebbe-f827-4a47-ae95-38b078e0d584'
    | new-xostor-server-01 | pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 | xcp-sr-linstor_group_thin_device | 0 | 1032 | /dev/drbd1032 | 9.20 GiB | Unused | UpToDate |
    | new-xostor-server-02 | pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 | xcp-sr-linstor_group_thin_device | 0 | 1032 | /dev/drbd1032 | 112.73 MiB | Unused | UpToDate |
    | new-xostor-server-03 | pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 | xcp-sr-linstor_group_thin_device | 0 | 1032 | /dev/drbd1032 | 112.73 MiB | Unused | UpToDate |

To force the sync, run the following command on the node with the data:

    drbdadm invalidate-remote pvc-96cbebbe-f827-4a47-ae95-38b078e0d584

This will kick it to get the data re-synced.

    [14:51 new-xostor-server-01 ~]# drbdadm invalidate-remote pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    [14:51 new-xostor-server-01 ~]# drbdadm status pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 role:Secondary
      disk:UpToDate
      new-xostor-server-02 role:Secondary
        replication:SyncSource peer-disk:Inconsistent done:1.14
      new-xostor-server-03 role:Secondary
        replication:SyncSource peer-disk:Inconsistent done:1.18

    [14:51 new-xostor-server-01 ~]# drbdadm status pvc-96cbebbe-f827-4a47-ae95-38b078e0d584
    pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 role:Secondary
      disk:UpToDate
      new-xostor-server-02 role:Secondary
        peer-disk:UpToDate
      new-xostor-server-03 role:Secondary
        peer-disk:UpToDate

See: https://github.com/LINBIT/linstor-server/issues/389
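If you want to wait for the resync rather than eyeball the status, the `drbdadm status` text can be checked for any disk state other than `UpToDate`. A rough sketch, assuming the status layout shown above; the function name `all_uptodate` is made up for illustration.

```shell
#!/bin/sh
# all_uptodate: reads `drbdadm status <resource>` output on stdin.
# Exit status 0 only if at least one disk/peer-disk state is present
# and every one of them is UpToDate; 1 otherwise.
all_uptodate() (
    states=$(grep -Eo '(peer-)?disk:[A-Za-z]+')
    [ -n "$states" ] || exit 1          # no disk lines at all
    if printf '%s\n' "$states" | grep -qv ':UpToDate$'; then
        exit 1                          # something is still syncing
    fi
    exit 0
)

# Sample taken from the first status output in this post.
sample='pvc-96cbebbe-f827-4a47-ae95-38b078e0d584 role:Secondary
  disk:UpToDate
  new-xostor-server-02 role:Secondary
    replication:SyncSource peer-disk:Inconsistent done:1.14'

if printf '%s\n' "$sample" | all_uptodate; then
    echo "in sync"
else
    echo "still syncing"
fi
```

On a node this could be used as `drbdadm status <resource> | all_uptodate` inside a polling loop before moving on to the next volume.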

Replica-1 Setup

    # PV: pvc-6408a214-6def-44c4-8d9a-bebb67be5510
    # S: pgdata-snapshot
    # s: 10741612544B

    #get size
    lvs --noheadings --units B -o lv_size linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000

    #prep
    lvcreate -V 10741612544B --thinpool linstor_group/thin_device -n pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000 linstor_group

    #create snapshot
    linstor --controller original-xostor-server s create pvc-6408a214-6def-44c4-8d9a-bebb67be5510 pgdata-snapshot

    #send
    thin_send linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000_pgdata-snapshot 2>/dev/null | ssh root@new-xostor-server-01 thin_recv linstor_group/pvc-6408a214-6def-44c4-8d9a-bebb67be5510_00000 2>/dev/null

    # 1
    linstor --controller new-xostor-server-01 resource-definition create pvc-6408a214-6def-44c4-8d9a-bebb67be5510 --resource-group sc-b066e430-6206-5588-a490-cc91ecef53d6
    linstor --controller new-xostor-server-01 volume-definition create pvc-6408a214-6def-44c4-8d9a-bebb67be5510 10741612544B --storage-pool xcp-sr-linstor_group_thin_device
    linstor --controller new-xostor-server-01 resource create new-xostor-server-01 pvc-6408a214-6def-44c4-8d9a-bebb67be5510

    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pvc-6408a214-6def-44c4-8d9a-bebb67be5510
      annotations:
        pv.kubernetes.io/provisioned-by: linstor.csi.linbit.com
      finalizers:
        - external-provisioner.volume.kubernetes.io/finalizer
        - kubernetes.io/pv-protection
        - external-attacher/linstor-csi-linbit-com
    spec:
      accessModes:
        - ReadWriteOnce
      capacity:
        storage: 10Gi # Ensure this matches the actual size of the LINSTOR volume
      persistentVolumeReclaimPolicy: Retain
      storageClassName: linstor-replica-one-local # Adjust to the storage class you want to use
      volumeMode: Filesystem
      csi:
        driver: linstor.csi.linbit.com
        fsType: ext4
        volumeHandle: pvc-6408a214-6def-44c4-8d9a-bebb67be5510
        volumeAttributes:
          linstor.csi.linbit.com/mount-options: ''
          linstor.csi.linbit.com/post-mount-xfs-opts: ''
          linstor.csi.linbit.com/uses-volume-context: 'true'
          linstor.csi.linbit.com/remote-access-policy: |
            - fromSame:
              - xcp-ng/node
      nodeAffinity:
        required:
          nodeSelectorTerms:
            - matchExpressions:
                - key: xcp-ng/node
                  operator: In
                  values:
                    - new-xostor-server-01
    ---
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      annotations:
        pv.kubernetes.io/bind-completed: 'yes'
        pv.kubernetes.io/bound-by-controller: 'yes'
        volume.beta.kubernetes.io/storage-provisioner: linstor.csi.linbit.com
        volume.kubernetes.io/selected-node: ovbh-vtest-k8s01-worker01
        volume.kubernetes.io/storage-provisioner: linstor.csi.linbit.com
      finalizers:
        - kubernetes.io/pvc-protection
      name: acid-merch-2
      namespace: default
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 10Gi
      storageClassName: linstor-replica-one-local
      volumeMode: Filesystem
      volumeName: pvc-6408a214-6def-44c4-8d9a-bebb67be5510

-

Last week I stood up two XCP-ng hosts with LINSTOR without issue.

This week I am standing up a third XCP-ng host and hit a show-stopping error when trying to install LINSTOR:

Error: Package: xcp-ng-linstor-1.1-3.xcpng8.2.noarch (xcp-ng-linstor)

Requires: sm-linstor

...

Failed to install LINSTOR package: xcp-ng-linstor.

This third host is 100% identical in hardware to the first two hosts, with a fresh from-scratch install of XCP-ng. Here is the output of the commands on the third host:

    [21:35 bol-xcp3 ~]# wget https://gist.githubusercontent.com/Wescoeur/7bb568c0e09e796710b0ea966882fcac/raw/052b3dfff9c06b1765e51d8de72c90f2f90f475b/gistfile1.txt -O install && chmod +x install
    --2024-11-16 21:35:08-- https://gist.githubusercontent.com/Wescoeur/7bb568c0e09e796710b0ea966882fcac/raw/052b3dfff9c06b1765e51d8de72c90f2f90f475b/gistfile1.txt
    Resolving gist.githubusercontent.com (gist.githubusercontent.com)... 185.199.111.133, 185.199.110.133, 185.199.109.133, ...
    Connecting to gist.githubusercontent.com (gist.githubusercontent.com)|185.199.111.133|:443... connected.
    HTTP request sent, awaiting response... 200 OK
    Length: 3596 (3.5K) [text/plain]
    Saving to: ‘install’

    100%[=============================================================================>] 3,596 --.-K/s in 0s

    2024-11-16 21:35:08 (22.4 MB/s) - ‘install’ saved [3596/3596]

    [21:35 bol-xcp3 ~]# ./install --disks /dev/nvme0n1 --thin
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-base: mirrors.xcp-ng.org
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-updates: mirrors.xcp-ng.org
    Resolving Dependencies
    --> Running transaction check
    ---> Package xcp-ng-release-linstor.noarch 0:1.3-1.xcpng8.2 will be installed
    --> Finished Dependency Resolution

    Dependencies Resolved

    =======================================================================================================================
     Package                  Arch    Version          Repository       Size
    =======================================================================================================================
    Installing:
     xcp-ng-release-linstor   noarch  1.3-1.xcpng8.2   xcp-ng-updates   4.0 k

    Transaction Summary
    =======================================================================================================================
    Install 1 Package

    Total download size: 4.0 k
    Installed size: 477
    Downloading packages:
    xcp-ng-release-linstor-1.3-1.xcpng8.2.noarch.rpm | 4.0 kB 00:00:01
    Running transaction check
    Running transaction test
    Transaction test succeeded
    Running transaction
      Installing : xcp-ng-release-linstor-1.3-1.xcpng8.2.noarch 1/1
      Verifying : xcp-ng-release-linstor-1.3-1.xcpng8.2.noarch 1/1

    Installed:
      xcp-ng-release-linstor.noarch 0:1.3-1.xcpng8.2

    Complete!
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-base: mirrors.xcp-ng.org
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-updates: mirrors.xcp-ng.org
    Resolving Dependencies
    --> Running transaction check
    ---> Package xcp-ng-linstor.noarch 0:1.1-3.xcpng8.2 will be installed
    --> Processing Dependency: sm-linstor for package: xcp-ng-linstor-1.1-3.xcpng8.2.noarch
    --> Finished Dependency Resolution
    Error: Package: xcp-ng-linstor-1.1-3.xcpng8.2.noarch (xcp-ng-linstor)
      Requires: sm-linstor
      Available: sm-2.30.8-2.1.0.linstor.4.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Available: sm-2.30.8-2.1.0.linstor.5.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Available: sm-2.30.8-2.1.0.linstor.6.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Available: sm-2.30.8-2.3.0.linstor.1.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Available: sm-2.30.8-2.3.0.linstor.2.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Available: sm-2.30.8-7.1.0.linstor.2.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Available: sm-2.30.8-10.1.0.linstor.1.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Available: sm-2.30.8-10.1.0.linstor.2.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Available: sm-2.30.8-10.1.0.linstor.3.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Available: sm-2.30.8-12.1.0.linstor.2.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Available: sm-2.30.8-12.1.0.linstor.3.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Available: sm-2.30.8-12.1.0.linstor.4.xcpng8.2.x86_64 (xcp-ng-linstor)
        sm-linstor
      Installed: sm-2.30.8-13.1.xcpng8.2.x86_64 (@xcp-ng-updates)
        Not found
      Available: sm-2.29.1-1.2.xcpng8.2.x86_64 (xcp-ng-base)
        Not found
      Available: sm-2.30.4-1.1.xcpng8.2.x86_64 (xcp-ng-updates)
        Not found
      Available: sm-2.30.4-1.1.0.linstor.8.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.6-1.1.xcpng8.2.x86_64 (xcp-ng-updates)
        Not found
      Available: sm-2.30.6-1.1.0.linstor.1.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.6-1.2.0.linstor.1.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.7-1.1.0.linstor.1.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.7-1.2.0.linstor.1.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.7-1.3.xcpng8.2.x86_64 (xcp-ng-updates)
        Not found
      Available: sm-2.30.7-1.3.0.linstor.1.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.7-1.3.0.linstor.2.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.7-1.3.0.linstor.3.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.7-1.3.0.linstor.7.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.7-1.3.0.linstor.8.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.8-2.1.xcpng8.2.x86_64 (xcp-ng-updates)
        Not found
      Available: sm-2.30.8-2.1.0.linstor.1.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.8-2.1.0.linstor.2.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.8-2.1.0.linstor.3.xcpng8.2.x86_64 (xcp-ng-linstor)
        Not found
      Available: sm-2.30.8-2.3.xcpng8.2.x86_64 (xcp-ng-updates)
        Not found
      Available: sm-2.30.8-7.1.xcpng8.2.x86_64 (xcp-ng-updates)
        Not found
      Available: sm-2.30.8-10.1.xcpng8.2.x86_64 (xcp-ng-updates)
        Not found
      Available: sm-2.30.8-12.1.xcpng8.2.x86_64 (xcp-ng-updates)
        Not found
     You could try using --skip-broken to work around the problem
     You could try running: rpm -Va --nofiles --nodigest
    Failed to install LINSTOR package: xcp-ng-linstor.

It seems the sm* package(s) are missing? I don't know what to do.
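To see at a glance which `sm` builds yum considers as providing `sm-linstor`, the error text can be filtered. A rough sketch, assuming yum's usual two-line layout (a package line followed by either the matched capability or `Not found`); the function name `providers` is made up for illustration.

```shell
#!/bin/sh
# providers: reads yum dependency-error text on stdin and prints the
# packages that yum lists as satisfying the requirement.
# Assumed layout: an "Available:"/"Installed:" line naming the package,
# followed by a line that is either the capability or "Not found".
providers() {
    awk '
        $1 == "Available:" || $1 == "Installed:" { pkg = $2; next }
        pkg != "" { if ($0 !~ /Not found/) print pkg; pkg = "" }
    '
}

# Three entries copied from the log in this post.
providers <<'EOF'
Available: sm-2.30.8-12.1.0.linstor.4.xcpng8.2.x86_64 (xcp-ng-linstor)
    sm-linstor
Installed: sm-2.30.8-13.1.xcpng8.2.x86_64 (@xcp-ng-updates)
    Not found
Available: sm-2.30.8-12.1.xcpng8.2.x86_64 (xcp-ng-updates)
    Not found
EOF
```

In the log above, the installed build is `sm-2.30.8-13.1` and is marked `Not found`, while every build listed as providing `sm-linstor` is older (the newest being `2.30.8-12.1.0.linstor.4`), which may be why yum could not resolve the dependency at that time.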

Edit 2024-11-17 1702 CST:

`yum install xcp-ng-release-linstor` indicates `xcp-ng-release-linstor` is already installed and latest version.

    [16:14 bol-xcp3 ~]# yum install xcp-ng-release-linstor
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-base: mirrors.xcp-ng.org
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-updates: mirrors.xcp-ng.org
    Package xcp-ng-release-linstor-1.3-1.xcpng8.2.noarch already installed and latest version
    Nothing to do

-

@BlueToast This should be fixed now. Please retry the XOSTOR installation.

-

@Danp Success with this, thanks for the assist.

Executed with great success:

    yum install xcp-ng-linstor
    yum install xcp-ng-release-linstor
    ./install --disks /dev/nvme0n1 --thin

-

Since XOSTOR is now supported on XCP-ng 8.3 LTS, should we use the same script, or is some other method required?

Can you remove the heading which states the script is only compatible with 8.2?

-

Ping @Team-Storage

-

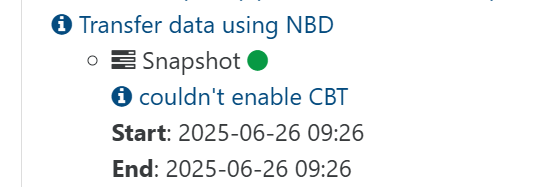

Is CBT meant to be supported on XOSTOR?

I've been experimenting with XOSTOR recently, but upon testing a delta-backup, noticed this warning...

The error message behind this is `SR_OPERATION_NOT_SUPPORTED` when calling `Async.VDI.enable_cbt`.

Running `xe sr-param-list uuid={uuid}` shows the following:

    [~]# xe sr-param-list uuid={...}
    uuid ( RO) : {...}
    name-label ( RW): CD6
    name-description ( RW): Array of Kioxia CD6 U.2 drives, one in each Host.
    host ( RO): <shared>
    allowed-operations (SRO): unplug; plug; PBD.create; update; PBD.destroy; VDI.resize; VDI.clone; scan; VDI.snapshot; VDI.mirror; VDI.create; VDI.destroy
    {...etc}
    type ( RO): linstor
    content-type ( RO): user
    shared ( RW): true
    introduced-by ( RO): <not in database>
    is-tools-sr ( RO): false
    other-config (MRW): auto-scan: true
    sm-config (MRO): {...etc}

Compared to another SR, the following allowed-operations are missing:

    VDI.enable_cbt; VDI.list_changed_blocks; VDI.disable_cbt; VDI.data_destroy; VDI.set_on_boot

Is this the expected behaviour? Note that this is using XCP-ng 8.2 (I've yet to test out 8.3).
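The comparison above can be scripted: given the `; `-separated `allowed-operations` value of an SR, check whether a particular operation is present. A minimal sketch; the function name `sr_supports_op` is made up, and the sample string is the list quoted in this post.

```shell
#!/bin/sh
# sr_supports_op "OPS_STRING" OPERATION
# OPS_STRING is the "; "-separated allowed-operations value of an SR.
# Returns 0 if OPERATION appears as a whole entry, 1 otherwise.
sr_supports_op() {
    case "; $1; " in
        *"; $2; "*) return 0 ;;
        *) return 1 ;;
    esac
}

ops='unplug; plug; PBD.create; update; PBD.destroy; VDI.resize; VDI.clone; scan; VDI.snapshot; VDI.mirror; VDI.create; VDI.destroy'

if sr_supports_op "$ops" VDI.enable_cbt; then
    echo "VDI.enable_cbt is allowed on this SR"
else
    echo "VDI.enable_cbt is not allowed on this SR"
fi

# On a host, the string could come from:
#   xe sr-param-get uuid=<sr-uuid> param-name=allowed-operations
```

Padding both sides with the separator avoids false positives from partial names such as `VDI.create` matching inside a longer operation name.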

-

-

@peter_webbird We've already had feedback on CBT with LINSTOR/DRBD, and we don't necessarily recommend enabling it. We have a blocking dev card regarding a bug where the LVM lvchange command may fail on CBT volumes used by a XOSTOR SR. We also have other issues related to migration with CBT.

-

@ronan-a @dthenot @Team-Storage

Guys, can you please clarify which method to use for installing XOSTOR in XCP-ng 8.3?

Simple:

    yum install xcp-ng-linstor
    yum install xcp-ng-release-linstor
    ./install --disks /dev/nvme0n1 --thin

Or the script in the first post?

Or some other script?

-

@gb.123 Hello,

The instructions in the first post are still the way to go.

-

@dthenot said in XOSTOR hyperconvergence preview:

@gb.123 Hello,

The instructions in the first post are still the way to go.

I'm curious about that as well but the first post says that the installation script is only compatible with 8.2 and doesn't mention 8.3. Is that still the case or is the installation script now compatible with 8.3 as well? If not, is there an installation script that is compatible with 8.3?

I know that using XO is the recommended method for installation but I'm interested in an installation script as I would like to try to integrate XOSTOR installation into an XCP-ng installation script I already have which runs via PXE boot.

-

@JeffBerntsen That's what I meant: the installation method described in the first post still works in 8.3, and the script still works as expected. It basically only creates the VG/LV needed on the hosts before you create the SR.
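For anyone scripting this from a PXE install, the kind of VG/LV preparation described here might look like the dry run below. This is not the script from the first post: the LVM commands are an assumption based on the `linstor_group` and `thin_device` names used elsewhere in this thread, and the function name `prep_plan` is made up.

```shell
#!/bin/sh
# Dry run only: prints LVM commands, executes nothing.
# Hypothetical illustration, NOT the actual install script.

# prep_plan THIN(yes|no) DISK...
prep_plan() (
    thin=$1
    shift
    # One volume group spanning the given disks.
    echo "vgcreate linstor_group $*"
    if [ "$thin" = yes ]; then
        # Thin pool using all free space in the volume group.
        echo "lvcreate -l 100%FREE -T linstor_group/thin_device"
    fi
)

prep_plan yes /dev/nvme0n1
```

Check the real script before relying on this, since it may pass extra options that this sketch does not.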

-

@dthenot said in XOSTOR hyperconvergence preview:

@JeffBerntsen That's what I meant: the installation method described in the first post still works in 8.3, and the script still works as expected. It basically only creates the VG/LV needed on the hosts before you create the SR.

Got it. Thanks!
