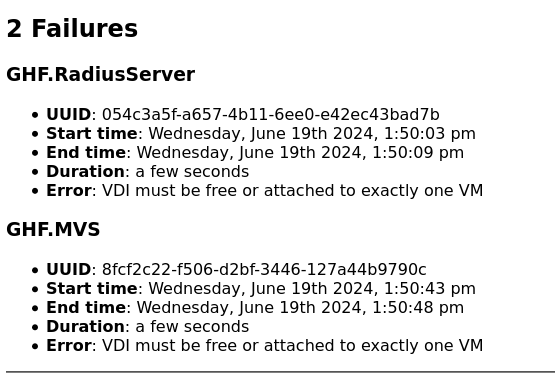

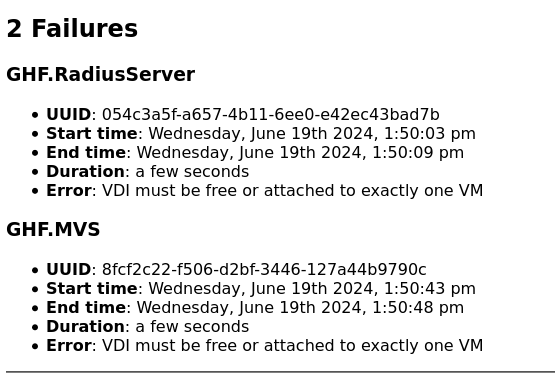

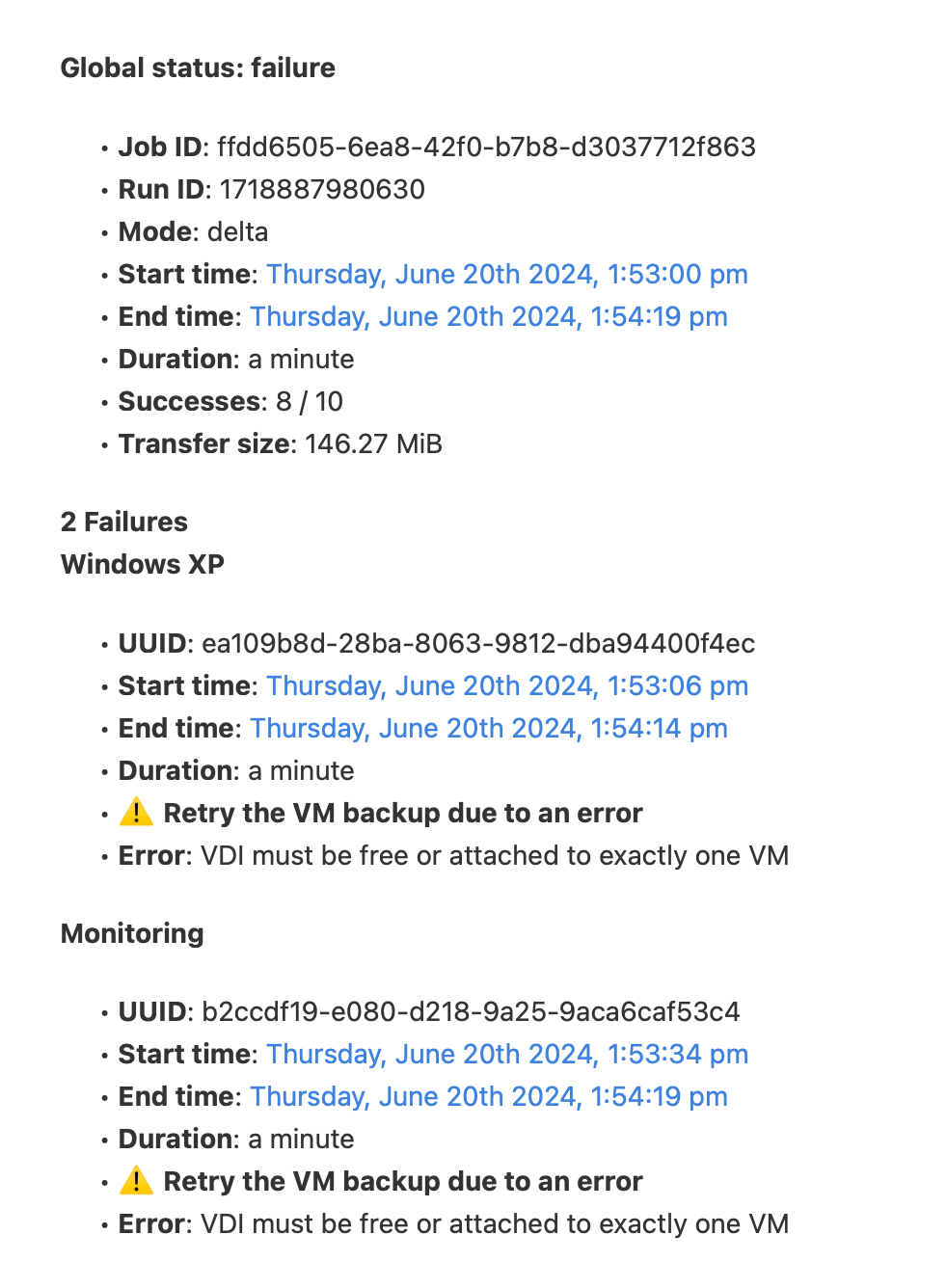

Backups started failing: Error: VDI must be free or attached to exactly one VM

-

@manilx Restored Xen Orchestra, commit 31461

Master, commit c134b

Backups fine.

-

@manilx Hi, manilx

After a working backup, do you have any disk attached to dom0? (Dashboard => Health)

-

@florent Hi,

Reverted to previous VM and deleted the new one..... Can't check.

BUT I checked the dashboard health before and there was nothing wrong.

-

Hi guys, just chiming in that I am seeing the same problem.

-

Oh.. Just a side note here: I went into the backup history and "restarted" the backups on the failed VMs and both VMs backed up successfully. Huh...

-

@Anonabhar Because this is now an immediate error for something that was previously under the radar and was (mostly) recovering by itself.

But when it fails, it's not great (no VDI coalesce for moth to work with).

We'll try to find a good equilibrium point between ensuring backups are running correctly and detecting problems early, by the end of the month.

-

@Anonabhar I did restart the failed backups and they always errored out again....

-

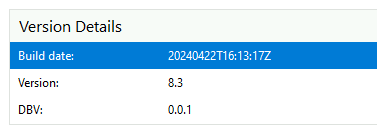

@florent Just installed latest build. Same errors.

Dashboard/Health: no errors. Reverting.....

-

@florent I just upgraded to latest build as a test again. As no new news here I'm hoping that one update will fix this.

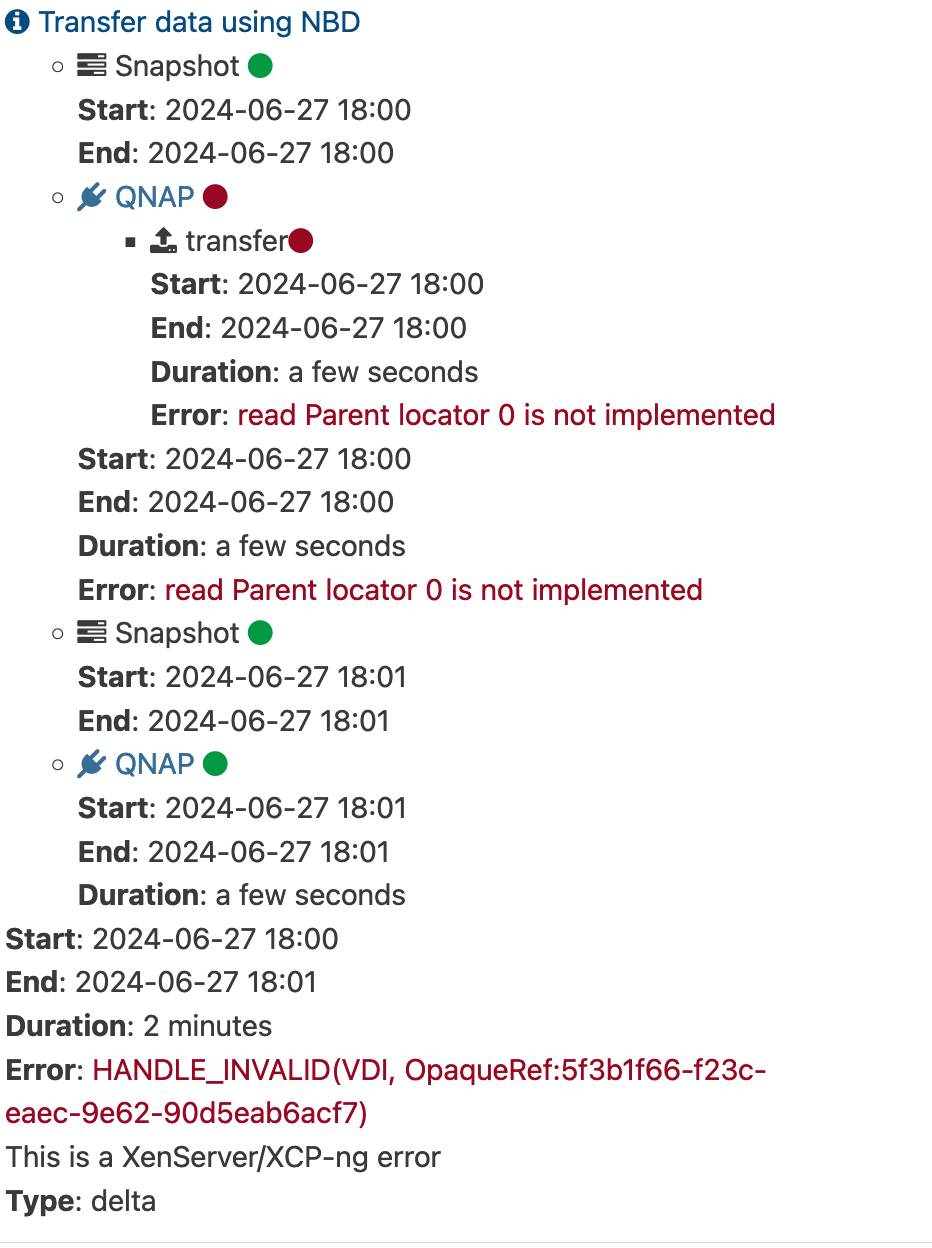

Anyway tested a backup and got the error again:

2 out of 10 VMs

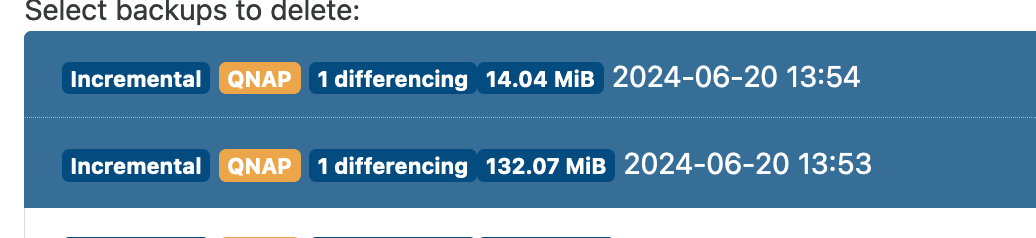

But I found another clue: when trying to delete the backups, I see that on those failed VMs I get TWO backups in the list, instead of just the one I started!!!

Again: Xen Orchestra, commit 31461 / Master, commit 16ae1 is the last working build.

-

@florent @manilx @olivierlambert I have these failures too. I find the VDI attached to the control domain as a leftover from Continuous Replication. If I remove the dead VDI then backups work again. The problem is ongoing, as I do CR every hour and sometimes it leaves one or more (random) dead VDIs attached to the control domain.

I'm waiting for florent to push an XO CR fix before I update to the latest XO. But I don't expect the problem to be resolved in the latest XO update.

-

@Andrew Only doing Delta backups here and no dead VDI left attached...

Something seriously broke.....

-

@manilx They get stuck for me on Delta Backups too (not just CR).

Sometimes I have to do a Restart Toolstack to free it.

Check for running tasks that are stuck. Or try a Restart Toolstack on the master (it normally does not hurt anything to do that just to try it).

-

Hi,

We added 2 fixes in the latest commit on master. Please restart your XAPI (to clear all tasks) and try again with your updated XO.

-

@olivierlambert Updated and still backups are broken:

Rolling back

-

Can you try without any previous snapshot?

-

@olivierlambert I reverted to previous version and backups OK.

Then I updated again, deleted all snapshots (had to wait a while for garbage collection) and ran fresh backups:

1st full: backup progress bars not updating, staying at 0% (I can only check progress by seeing data written to the NAS). Backup finished OK using NBD.

Next delta: also ran OK, without progress, and all VMs showed 'cleanVm: incorrect backup size in metadata'.

So, some progress.

Progress bars are really missing now.

Speed still saturating the 2.5G management interface, so good.

-

@olivierlambert P.S. After the delta backup I have all VMs with 'Unhealthy VDIs' waiting to coalesce but no Garbage Collection job running.....

Running

cat /var/log/SMlog | grep -i coalesce

on the host gives:

Jun 27 18:48:03 vp6670 SM: [1043898] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/c892688d-10b6-4ddd-938c-fb4f2bc3eb77.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/6f6389a1-9387-4fdd-8d4c-97cb1cf459bc.cbtlog']
Jun 27 18:48:08 vp6670 SM: [1044409] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/af7a9b25-b1a4-40c0-9d48-21e1f0a9e4b4.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/35b88709-1e17-4bde-8799-0c8b4c879868.cbtlog']
Jun 27 18:48:22 vp6670 SM: [1045338] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/0fea5975-e83a-4c30-89ea-c38b8c8ccb23.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/8893a898-2c21-4325-a7ee-882b3e5b9223.cbtlog']
Jun 27 18:48:25 vp6670 SM: [1045614] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/99ff967e-fbd3-4b86-a76a-37879a08ef56.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/fcbee773-7147-4f80-9eca-48383c73fd81.cbtlog']
Jun 27 18:48:29 vp6670 SM: [1046130] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/3b268ed5-7856-4948-bd27-bcc71b33ce66.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/19d60fac-c805-4c6c-8f02-819dd68a4407.cbtlog']
Jun 27 18:48:34 vp6670 SM: [1046641] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/b83b7e8f-aede-4a37-92f1-4c867af6e39b.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/fdfa17b2-c47d-4b0b-93c3-df78653fbb8f.cbtlog']
Jun 27 18:48:39 vp6670 SM: [1047270] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/b49458fc-3047-417e-b9db-8d188e74105e.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/77a7f093-11a1-4e6c-a502-e8aa07f1fa88.cbtlog']
Jun 27 18:48:41 vp6670 SM: [1047416] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/49911f5d-5da5-48d7-a099-2ad83d2004d5.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/95395cf6-f234-43a6-a9ad-87a09fb2fa3e.cbtlog']

-

@olivierlambert After 15min still nothing. No coalesce. Even after rescanning storage.

So still something broken.

Will revert as I need backups working reliably.......

-

@olivierlambert I had to reboot hosts to remove the Unhealthy VDIs.

-

@olivierlambert Each time I tried the new version(s) and coalesce stopped working, I had to reboot the hosts to get things going.

And I find the following in the log:

Jun 27 21:02:32 vp6670 SMGC: [10573] Coalesced size = 17.851G
Jun 27 21:02:32 vp6670 SMGC: [10573] Coalesce candidate: *0ebaa4f7(20.000G/64.168M?) (tree height 3)
Jun 27 21:02:50 vp6670 SM: [11873] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/0cc54304-4961-482f-a1b7-a8222dd143a1.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/fe11c7f1-7331-427e-8fa9-76412a2cbb75.cbtlog']
Jun 27 21:03:04 vp6670 SM: [12713] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/72c283d5-0be8-47e5-8af0-27b9590a1477.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/d6cf5333-99e7-46c4-b4cd-9776a2dada07.cbtlog']
Jun 27 21:03:05 vp6670 SM: [12817] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/68087dfa-d1fb-4d15-a05d-0a7aa59d2407.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/17f1ded2-f87e-40c9-97ea-ae89c77cc874.cbtlog']
Jun 27 21:03:14 vp6670 SM: [13471] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/8b373c2b-3e22-4c8f-ab17-26a1987237bd.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/6fa052a8-3fe9-471b-887d-c40bd0436c8c.cbtlog']
Jun 27 21:03:43 vp6670 SM: [14859] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/77c79ae4-884d-40fb-9117-c04bc81d5a84.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/8b9823c4-92cb-4f5f-b073-79adb1277fc1.cbtlog']
Jun 27 21:03:47 vp6670 SM: [15197] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/1a7f411a-6022-4974-8779-92d923d07239.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/9c884a73-b733-479a-bb6d-d6a9e6a7677f.cbtlog']
Jun 27 21:03:55 vp6670 SM: [16078] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/d35a1c18-3547-4b51-b6ef-eb777e233abb.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/15c18cad-fec8-4cd1-83fe-309eff8c200c.cbtlog']
Jun 27 21:04:10 vp6670 SM: [16485] ['/usr/sbin/cbt-util', 'coalesce', '-p', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/81766141-b82a-4f0a-ad47-cd41e0c93104.cbtlog', '-c', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/0e8bea1f-27d6-4f21-88d4-1f6eaf64b545.cbtlog']

When there are entries with .cbtlog, coalesce stops. These are created by the new NBD/CBT backups and somehow are left over....

After a reboot all works when these disappear.

Jun 27 21:07:32 vp6670 SMGC: [10573] Coalesced size = 17.851G
Jun 27 21:07:32 vp6670 SMGC: [10573] Coalesce candidate: *0ebaa4f7(20.000G/64.168M?) (tree height 3)
Jun 27 21:07:32 vp6670 SMGC: [10573] Coalesced size = 17.851G
Jun 27 21:07:32 vp6670 SMGC: [10573] Coalesce candidate: *0ebaa4f7(20.000G/64.168M?) (tree height 3)
Jun 27 21:07:32 vp6670 SMGC: [10573] Running VHD coalesce on *0ebaa4f7(20.000G/64.168M?)
Jun 27 21:07:32 vp6670 SM: [18989] ['/usr/bin/vhd-util', 'coalesce', '--debug', '-n', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/0ebaa4f7-6920-47e3-9104-00e6486b38ef.vhd']
Jun 27 21:07:35 vp6670 SMGC: [10573] Coalesced size = 49.855G
Jun 27 21:07:35 vp6670 SMGC: [10573] Coalesce candidate: *2df0321f(60.000G/342.790M?) (tree height 3)
Jun 27 21:07:35 vp6670 SMGC: [10573] Coalesced size = 49.855G
Jun 27 21:07:35 vp6670 SMGC: [10573] Coalesce candidate: *2df0321f(60.000G/342.790M?) (tree height 3)
Jun 27 21:07:35 vp6670 SMGC: [10573] Running VHD coalesce on *2df0321f(60.000G/342.790M?)
Jun 27 21:07:35 vp6670 SM: [19134] ['/usr/bin/vhd-util', 'coalesce', '--debug', '-n', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/2df0321f-8f62-4860-8a44-5c62469be1aa.vhd']
Jun 27 21:07:37 vp6670 SMGC: [10573] Coalesced size = 42.258G
Jun 27 21:07:37 vp6670 SMGC: [10573] Coalesce candidate: *8f1395d8(64.002G/1.783G?) (tree height 3)
Jun 27 21:07:37 vp6670 SMGC: [10573] Coalesced size = 42.258G
Jun 27 21:07:37 vp6670 SMGC: [10573] Coalesce candidate: *8f1395d8(64.002G/1.783G?) (tree height 3)
Jun 27 21:07:37 vp6670 SMGC: [10573] Running VHD coalesce on *8f1395d8(64.002G/1.783G?)
Jun 27 21:07:37 vp6670 SM: [19266] ['/usr/bin/vhd-util', 'coalesce', '--debug', '-n', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/8f1395d8-462f-47f0-8a89-57e5ff0ce204.vhd']
Jun 27 21:07:42 vp6670 SMGC: [10573] Coalesced size = 6.096G
Jun 27 21:07:42 vp6670 SMGC: [10573] Coalesce candidate: *17aeb054(16.002G/96.223M?) (tree height 3)
Jun 27 21:07:42 vp6670 SMGC: [10573] Coalesced size = 6.096G
Jun 27 21:07:42 vp6670 SMGC: [10573] Coalesce candidate: *17aeb054(16.002G/96.223M?) (tree height 3)
Jun 27 21:07:42 vp6670 SMGC: [10573] Running VHD coalesce on *17aeb054(16.002G/96.223M?)
Jun 27 21:07:42 vp6670 SM: [19415] ['/usr/bin/vhd-util', 'coalesce', '--debug', '-n', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/17aeb054-f057-4085-af7a-36f80df95106.vhd']
Jun 27 21:07:44 vp6670 SMGC: [10573] Coalesced size = 15.470G
Jun 27 21:07:44 vp6670 SMGC: [10573] Coalesce candidate: *6f447181(24.002G/306.649M?) (tree height 3)
Jun 27 21:07:44 vp6670 SMGC: [10573] Coalesced size = 15.470G
Jun 27 21:07:44 vp6670 SMGC: [10573] Coalesce candidate: *6f447181(24.002G/306.649M?) (tree height 3)
Jun 27 21:07:44 vp6670 SMGC: [10573] Running VHD coalesce on *6f447181(24.002G/306.649M?)
Jun 27 21:07:44 vp6670 SM: [19557] ['/usr/bin/vhd-util', 'coalesce', '--debug', '-n', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/6f447181-5a1f-4f71-83f3-dd50eb991110.vhd']
Jun 27 21:07:47 vp6670 SMGC: [10573] Coalesced size = 16.307G
Jun 27 21:07:47 vp6670 SMGC: [10573] Coalesce candidate: *9f9907bc(20.000G/867.735M?) (tree height 3)
Jun 27 21:07:47 vp6670 SMGC: [10573] Coalesced size = 16.307G
Jun 27 21:07:47 vp6670 SMGC: [10573] Coalesce candidate: *9f9907bc(20.000G/867.735M?) (tree height 3)
Jun 27 21:07:47 vp6670 SMGC: [10573] Running VHD coalesce on *9f9907bc(20.000G/867.735M?)
Jun 27 21:07:47 vp6670 SM: [19690] ['/usr/bin/vhd-util', 'coalesce', '--debug', '-n', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/9f9907bc-665b-4f5d-a191-17420bc7762a.vhd']
Jun 27 21:07:49 vp6670 SMGC: [10573] Coalesced size = 28.939G
Jun 27 21:07:49 vp6670 SMGC: [10573] Coalesce candidate: *34823537(48.002G/661.387M?) (tree height 3)
Jun 27 21:07:49 vp6670 SMGC: [10573] Coalesced size = 28.939G
Jun 27 21:07:49 vp6670 SMGC: [10573] Coalesce candidate: *34823537(48.002G/661.387M?) (tree height 3)
Jun 27 21:07:49 vp6670 SMGC: [10573] Running VHD coalesce on *34823537(48.002G/661.387M?)
Jun 27 21:07:49 vp6670 SM: [19830] ['/usr/bin/vhd-util', 'coalesce', '--debug', '-n', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/34823537-857f-440a-b700-00d6381e43cd.vhd']
Jun 27 21:07:52 vp6670 SMGC: [10573] Coalesced size = 37.160G
Jun 27 21:07:52 vp6670 SMGC: [10573] Coalesce candidate: *9383cd6b(64.002G/1.401G?) (tree height 3)
Jun 27 21:07:52 vp6670 SMGC: [10573] Coalesced size = 37.160G
Jun 27 21:07:52 vp6670 SMGC: [10573] Coalesce candidate: *9383cd6b(64.002G/1.401G?) (tree height 3)
Jun 27 21:07:52 vp6670 SMGC: [10573] Running VHD coalesce on *9383cd6b(64.002G/1.401G?)
Jun 27 21:07:52 vp6670 SM: [19959] ['/usr/bin/vhd-util', 'coalesce', '--debug', '-n', '/var/run/sr-mount/ea3c92b7-0e82-5726-502b-482b40a8b097/9383cd6b-b631-46ff-bd75-f80ac92d143c.vhd']
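
For anyone wanting to check quickly whether their SMlog shows only CBT log merges or real VHD coalesces, the excerpts above can be tallied with a small script. This is only a sketch: the names `split_entries` and `tally_coalesce` are made up for illustration, and the classification relies purely on the `cbt-util` and `vhd-util` strings seen in the log lines quoted in this thread. It is not an official tool. It accepts log text whether it is pasted one entry per line or run together.

```python
import re
from collections import Counter

# Zero-width match at the start of a syslog-style timestamp such as
# "Jun 27 18:48:03 ". Assumption: entries look like the SMlog lines
# quoted in this thread.
ENTRY_START = re.compile(r"(?=[A-Z][a-z]{2} [ \d]\d \d{2}:\d{2}:\d{2} )")


def split_entries(text):
    """Split pasted log text into one entry per timestamp."""
    return [e.strip() for e in ENTRY_START.split(text) if e.strip()]


def tally_coalesce(text):
    """Count cbt-util vs vhd-util 'coalesce' invocations in SMlog text."""
    counts = Counter()
    for entry in split_entries(text):
        # Only command invocations carry the quoted 'coalesce' argument;
        # SMGC status lines ("Running VHD coalesce on ...") are skipped.
        if "'coalesce'" not in entry:
            continue
        if "cbt-util" in entry:
            counts["cbt"] += 1
        elif "vhd-util" in entry:
            counts["vhd"] += 1
    return counts


# Shortened sample (file paths abbreviated for illustration only).
sample = (
    "Jun 27 18:48:03 vp6670 SM: [1043898] ['/usr/sbin/cbt-util', 'coalesce', "
    "'-p', 'a.cbtlog', '-c', 'b.cbtlog'] "
    "Jun 27 21:07:32 vp6670 SMGC: [10573] Running VHD coalesce on *0ebaa4f7 "
    "Jun 27 21:07:32 vp6670 SM: [18989] ['/usr/bin/vhd-util', 'coalesce', "
    "'--debug', '-n', 'x.vhd']"
)
print(dict(tally_coalesce(sample)))
```

If only `cbt` counts show up and `vhd` stays at zero, that would match the stuck state described above, where no VHD coalesce was running until the hosts were rebooted.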
