CBT: the thread to centralize your feedback

-

@rtjdamen I haven't had any hosts crash recently or any storage issues from what I can tell. The "type" in the log says delta, but the size of the backups definitely looks like full backups. They're also labelled as key when I look at the restore points for delta backups.

-

@Delgado I believe this error message is incorrect; it should be something like "CBT invalid fall back to base". I have seen it at random once in a while on a VM, and also when there were issues with a host or a specific storage pool.

-

Not sure whether this is CBT related; I've never seen it before. The VM backup failed within 1 minute, as always, but the task still shows as active.

-

@olivierlambert Any progress on the attached disks and multiple NBD connections issue?

Related, should we see any performance difference related to the number of NBD connections? I went from 4 to 1 and my backups are still taking the same amount of time.

-

I'm not the right person to ask, I'm not tracking this in detail. In our own prod, we use more concurrency with 1x NBD connection and that's the best combo I've found so far.

-

@olivierlambert Is there a specific person we should ping or link to watch to get updates on the status?

-

@florent is the main backup guy, but he's ultra busy. No guarantee, so the best is not to ping anyone in particular and see if you get some feedback. If it's a priority, go directly through pro support. We'll do our best to answer here, but it can't take priority over support tickets.

-

As for today's commit https://github.com/vatesfr/xen-orchestra/commit/ad8cd3791b9459b06d754defa657c97b66261eb3: migration is still failing.

-

@Tristis-Oris Can you be more specific? What output do you exactly have?

-

vdi.migrate { "id": "1d536c76-1ee7-41aa-93ff-7c7a297e2e80", "sr_id": "9a80cc74-a807-0475-1cc9-b0e42ffc7bf9" } { "code": "SR_BACKEND_FAILURE_46", "params": [ "", "The VDI is not available [opterr=Error scanning VDI b3e09a17-9b08-48e5-8b47-93f16979b045]", "" ], "task": { "uuid": "a6db64c5-b9d2-946c-3cfd-59cd8c4c4586", "name_label": "Async.VDI.pool_migrate", "name_description": "", "allowed_operations": [], "current_operations": {}, "created": "20240930T07:54:12Z", "finished": "20240930T07:54:30Z", "status": "failure", "resident_on": "OpaqueRef:223881b6-1309-40e6-9e42-5ad74a274d2d", "progress": 1, "type": "<none/>", "result": "", "error_info": [ "SR_BACKEND_FAILURE_46", "", "The VDI is not available [opterr=Error scanning VDI b3e09a17-9b08-48e5-8b47-93f16979b045]", "" ], "other_config": {}, "subtask_of": "OpaqueRef:NULL", "subtasks": [], "backtrace": "(((process xapi)(filename ocaml/xapi-client/client.ml)(line 7))((process xapi)(filename ocaml/xapi-client/client.ml)(line 19))((process xapi)(filename ocaml/xapi-client/client.ml)(line 12359))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 35))((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 134))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 35))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/xapi/rbac.ml)(line 205))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 95)))" }, "message": "SR_BACKEND_FAILURE_46(, The VDI is not available [opterr=Error scanning VDI b3e09a17-9b08-48e5-8b47-93f16979b045], )", "name": "XapiError", "stack": "XapiError: SR_BACKEND_FAILURE_46(, The VDI is not available [opterr=Error scanning VDI b3e09a17-9b08-48e5-8b47-93f16979b045], ) at Function.wrap 
(file:///opt/xo/xo-builds/xen-orchestra-202409301043/packages/xen-api/_XapiError.mjs:16:12) at default (file:///opt/xo/xo-builds/xen-orchestra-202409301043/packages/xen-api/_getTaskResult.mjs:13:29) at Xapi._addRecordToCache (file:///opt/xo/xo-builds/xen-orchestra-202409301043/packages/xen-api/index.mjs:1041:24) at file:///opt/xo/xo-builds/xen-orchestra-202409301043/packages/xen-api/index.mjs:1075:14 at Array.forEach (<anonymous>) at Xapi._processEvents (file:///opt/xo/xo-builds/xen-orchestra-202409301043/packages/xen-api/index.mjs:1065:12) at Xapi._watchEvents (file:///opt/xo/xo-builds/xen-orchestra-202409301043/packages/xen-api/index.mjs:1238:14)" }

SMlog

Sep 30 10:54:11 srv SM: [20535] lock: opening lock file /var/lock/sm/1d536c76-1ee7-41aa-93ff-7c7a297e2e80/vdi Sep 30 10:54:11 srv SM: [20535] lock: acquired /var/lock/sm/1d536c76-1ee7-41aa-93ff-7c7a297e2e80/vdi Sep 30 10:54:11 srv SM: [20535] Pause for 1d536c76-1ee7-41aa-93ff-7c7a297e2e80 Sep 30 10:54:11 srv SM: [20535] Calling tap pause with minor 2 Sep 30 10:54:11 srv SM: [20535] ['/usr/sbin/tap-ctl', 'pause', '-p', '12281', '-m', '2'] Sep 30 10:54:11 srv SM: [20535] = 0 Sep 30 10:54:11 srv SM: [20535] lock: released /var/lock/sm/1d536c76-1ee7-41aa-93ff-7c7a297e2e80/vdi Sep 30 10:54:12 srv SM: [20545] lock: opening lock file /var/lock/sm/1d536c76-1ee7-41aa-93ff-7c7a297e2e80/vdi Sep 30 10:54:12 srv SM: [20545] lock: acquired /var/lock/sm/1d536c76-1ee7-41aa-93ff-7c7a297e2e80/vdi Sep 30 10:54:12 srv SM: [20545] Unpause for 1d536c76-1ee7-41aa-93ff-7c7a297e2e80 Sep 30 10:54:12 srv SM: [20545] Realpath: /dev/VG_XenStorage-d8c3a5f0-6446-6bc0-79d0-749a3a138662/VHD-1d536c76-1ee7-41aa-93ff-7c7a297e2e80 Sep 30 10:54:12 srv SM: [20545] Setting LVM_DEVICE to /dev/disk/by-scsid/3600c0ff000524e513777c56301000000 Sep 30 10:54:12 srv SM: [20545] lock: opening lock file /var/lock/sm/d8c3a5f0-6446-6bc0-79d0-749a3a138662/sr Sep 30 10:54:12 srv SM: [20545] LVMCache created for VG_XenStorage-d8c3a5f0-6446-6bc0-79d0-749a3a138662 Sep 30 10:54:12 srv SM: [20545] lock: opening lock file /var/lock/sm/.nil/lvm Sep 30 10:54:12 srv SM: [20545] ['/sbin/vgs', '--readonly', 'VG_XenStorage-d8c3a5f0-6446-6bc0-79d0-749a3a138662'] Sep 30 10:54:12 srv SM: [20545] pread SUCCESS Sep 30 10:54:12 srv SM: [20545] Entering _checkMetadataVolume Sep 30 10:54:12 srv SM: [20545] LVMCache: will initialize now Sep 30 10:54:12 srv SM: [20545] LVMCache: refreshing Sep 30 10:54:12 srv SM: [20545] lock: acquired /var/lock/sm/.nil/lvm Sep 30 10:54:12 srv SM: [20545] ['/sbin/lvs', '--noheadings', '--units', 'b', '-o', '+lv_tags', '/dev/VG_XenStorage-d8c3a5f0-6446-6bc0-79d0-749a3a138662'] Sep 30 10:54:12 srv SM: 
[20545] pread SUCCESS Sep 30 10:54:12 srv SM: [20545] lock: released /var/lock/sm/.nil/lvm Sep 30 10:54:12 srv SM: [20545] lock: acquired /var/lock/sm/.nil/lvm Sep 30 10:54:12 srv SM: [20545] lock: released /var/lock/sm/.nil/lvm Sep 30 10:54:12 srv SM: [20545] Calling tap unpause with minor 2 Sep 30 10:54:12 srv SM: [20545] ['/usr/sbin/tap-ctl', 'unpause', '-p', '12281', '-m', '2', '-a', 'vhd:/dev/VG_XenStorage-d8c3a5f0-6446-6bc0-79d0-749a3a138662/VHD-1d536c76-1ee7-41aa-93ff-7c7a297e2e80'] Sep 30 10:54:12 srv SM: [20545] = 0 Sep 30 10:54:12 srv SM: [20545] lock: released /var/lock/sm/1d536c76-1ee7-41aa-93ff-7c7a297e2e80/vdi

-

Your issue seems to be related to a storage problem regarding the VDI b3e09a17-9b08-48e5-8b47-93f16979b045. If your SR cannot be scanned because of some issue in it, you won't be able to do any operation, snapshot or migrate. I have the impression this problem isn't related to CBT at all.

-

@olivierlambert You're probably right. I got that error with both of the pool's physical SRs, but on other pools disk migration works fine. So iSCSI problems again?

-

@olivierlambert There's a current workaround with NBD connections set to 1 so it's not a priority. I was just looking for a way to keep an eye on the status of any work on it so I can help test, etc.

-

Hi!

Great work with the CBT feature. I noticed it is now included in the "stable" branch which is good news. Is there a summary of the different settings, how they relate and the considerations to take when choosing these options? The XOA documentation, I think, isn't updated yet (searched for CBT with no results).

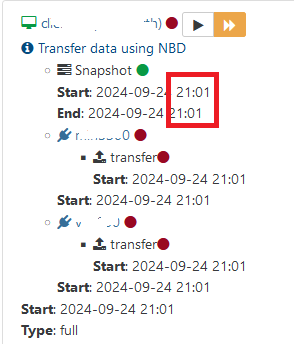

Backup view

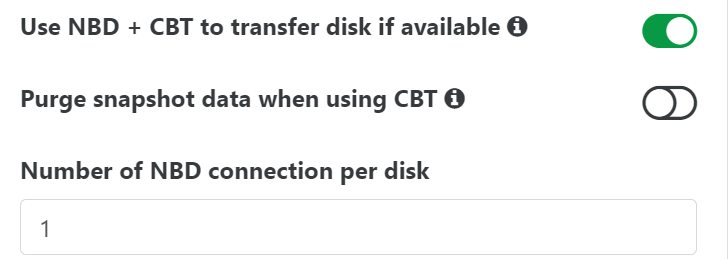

VM disk view

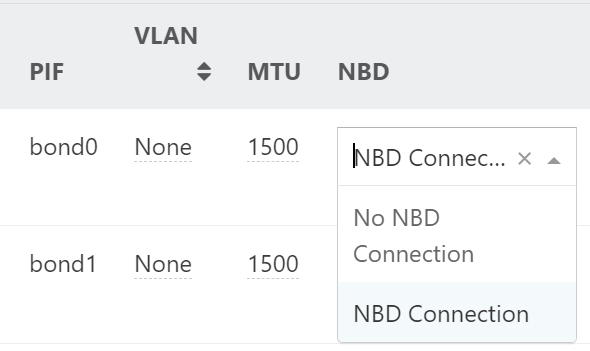

Pool network view

-

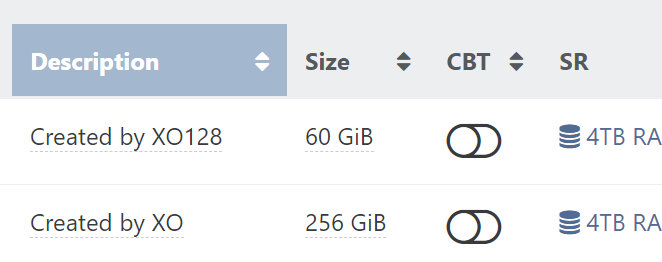

- If you tick the box, CBT will be used for any NBD-enabled network (otherwise it falls back to regular VHD export). If the box is not ticked, it will never use NBD.

- If you tick the "purge snapshot" box, the snapshot will be removed and the backup relies on CBT metadata to do the delta. Otherwise, the snapshot will be kept.

- NBD connections per disk: we can try to download multiple NBD blocks in parallel to speed things up, but in the end it seems to cause more issues than it really improves transfer speed.
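
To make the second and third options a bit more concrete, here is a minimal sketch in Python. It is not Xen Orchestra's actual code. It assumes the CBT metadata is a bitmap in which each bit stands for one 64 KiB block of the disk, most significant bit first, which is how the XAPI changed-block list is documented as far as I know. The function names and the round-robin split across connections are hypothetical and only illustrate the idea.

```python
from typing import Iterator, List

BLOCK_SIZE = 64 * 1024  # assumed CBT granularity: one bit per 64 KiB block


def changed_blocks(bitmap: bytes) -> Iterator[int]:
    """Yield the index of every block whose bit is set (MSB first, assumed)."""
    for byte_index, byte in enumerate(bitmap):
        for bit in range(8):
            if byte & (0x80 >> bit):
                yield byte_index * 8 + bit


def split_across_connections(blocks: List[int], connections: int) -> List[List[int]]:
    """Hypothetical round-robin split of changed blocks over N NBD connections."""
    if connections < 1:
        raise ValueError("connections must be >= 1")
    return [blocks[i::connections] for i in range(connections)]


if __name__ == "__main__":
    # Toy bitmap: blocks 0, 8 and 15 changed since the reference point.
    bitmap = bytes([0x80, 0x81])
    blocks = list(changed_blocks(bitmap))
    print(blocks)
    print(len(blocks) * BLOCK_SIZE, "bytes to transfer")
    print(split_across_connections(blocks, 2))
```

With a single connection the changed blocks are simply read one after another; with more connections each one takes a share of the list, which is where the extra complexity (and apparently the extra issues) comes from.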

-

To follow up on the backups that break, I have been able to create a reproducible scenario for causing backups to fail with the error message... "can't create a stream from a metadata VDI, fall back to a base"

Hardware:

- 4 XCP-NG hosts (Error is reproducible regardless of which host is hosting the VM.)

- 1 TrueNAS file server providing the NFS share the XCP-NG hosts use for running the VMs.

- 1 TrueNAS file server providing the NFS share for backing up VMs in Continuous Replication mode.

- 1 TrueNAS file server providing the NFS share for backing up VMs in Delta Backups.

Backup Order:

- Full backup in Continuous Replication mode

- Full backup in Delta Backup mode

- Multiple incremental backups in Continuous Replication mode (Not sure of the exact minimum but my servers ran the backup 7 times yesterday.)

- At this point, running an incremental backup of a Delta backup will fail with "can't create a stream from a metadata VDI, fall back to a base"

After this backup has failed, attempting to run any incremental backup will fail. Even the Continuous Replication backups that were working correctly prior to the Delta Backup attempt.

- NBD connections can be any number.

- With NBD + CBT = True

- Purge snapshots when using CBT = True

Not purging snapshots generates the same error but also causes problems with the VDI chain when it happens.

All retention policies are set to 1.

This was tested on the current latest version of XCP-NG on all hosts and with commit 0a28a and multiple commits prior to it of the XO Community Edition.
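
For readers trying to parse the error text itself, here is a hedged sketch of what the wording suggests; it is not taken from the XO source. Once snapshot data is purged, XAPI keeps the snapshot only as a VDI of type `cbt_metadata`, which holds the changed-block information but no disk content, so there is nothing to stream from it. The `Vdi` class, `open_stream` and `plan_export` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Vdi:
    uuid: str
    type: str  # XAPI VDI.type value, e.g. "user" or "cbt_metadata"


class MetadataVdiError(Exception):
    """Raised when asked to stream a VDI that only holds CBT metadata."""


def open_stream(vdi: Vdi) -> str:
    # A cbt_metadata VDI has no block data left, so it cannot be exported.
    if vdi.type == "cbt_metadata":
        raise MetadataVdiError("can't create a stream from a metadata VDI")
    return f"stream:{vdi.uuid}"


def plan_export(vdi: Vdi) -> str:
    """Return "delta" when a stream can be opened, otherwise "full"."""
    try:
        open_stream(vdi)
        return "delta"
    except MetadataVdiError:
        # Corresponds to "fall back to a base" in the error message.
        return "full"


if __name__ == "__main__":
    print(plan_export(Vdi("disk-with-data", "user")))
    print(plan_export(Vdi("purged-snapshot", "cbt_metadata")))
```

This only models the check the message describes. It does not explain why alternating Continuous Replication and Delta Backup jobs ends up handing a metadata-only VDI to the export step in the first place.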

-

@florent feedback for testing this

-

Hi,

I have a problem with a single VM running AlmaLinux 9. The VM has 2 disks: a 50 GB disk and a 250 GB disk.

Every time I do a delta backup, the backup does succeed, but the larger disk is ALWAYS stuck on the Control Domain and I have to manually "forget" it, or the next backup will fail, which is annoying. This does not occur with full backups, only delta.
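
As an aside, a disk "stuck on Control Domain" shows up in XAPI as a VBD that is still attached to a VM flagged `is_control_domain`. The sketch below is an assumption-laden illustration, not an XO tool: it expects two dictionaries shaped like the output of the XenAPI calls `VBD.get_all_records()` and `VM.get_all_records()`, and the helper name `vdis_attached_to_dom0` is hypothetical. The sample data is made up.

```python
from typing import Dict, List


def vdis_attached_to_dom0(vbds: Dict[str, dict], vms: Dict[str, dict]) -> List[str]:
    """Return the VDI refs that are still plugged into a control domain."""
    stuck = []
    for vbd in vbds.values():
        vm = vms.get(vbd["VM"], {})
        if vm.get("is_control_domain") and vbd.get("currently_attached"):
            stuck.append(vbd["VDI"])
    return stuck


if __name__ == "__main__":
    # Made-up records, keyed by opaque reference like real XAPI records.
    vms = {
        "vm-dom0": {"is_control_domain": True},
        "vm-guest": {"is_control_domain": False},
    }
    vbds = {
        "vbd-1": {"VM": "vm-dom0", "VDI": "vdi-big", "currently_attached": True},
        "vbd-2": {"VM": "vm-guest", "VDI": "vdi-small", "currently_attached": True},
        "vbd-3": {"VM": "vm-dom0", "VDI": "vdi-old", "currently_attached": False},
    }
    print(vdis_attached_to_dom0(vbds, vms))
```

Listing these before a backup run makes it easier to see whether a leftover attachment from the previous run is still around.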

I removed all previous snapshots and backups and retried, and the same thing happens.

Using XO with latest patches: commit a5967.

This issue started occurring straight after the CBT feature was added.

Using NBD = 1

Here is XO LOG for the backup:

Omitted attempts 2-7, since they don't fit here.

Oct 7 11:33:43 xo-ce xo-server[11768]: 2024-10-07T11:33:43.770Z xo:backups:worker INFO starting backup Oct 7 11:33:49 xo-ce xo-server[11768]: 2024-10-07T11:33:49.717Z xo:xapi:vdi INFO found changed blocks { Oct 7 11:33:49 xo-ce xo-server[11768]: changedBlocks: <Buffer 80 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 ... 511950 more bytes> Oct 7 11:33:49 xo-ce xo-server[11768]: } Oct 7 11:34:09 xo-ce xo-server[11768]: 2024-10-07T11:34:09.098Z xo:xapi:vdi INFO found changed blocks { Oct 7 11:34:09 xo-ce xo-server[11768]: changedBlocks: <Buffer 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 ... 102353 more bytes> Oct 7 11:34:09 xo-ce xo-server[11768]: } Oct 7 11:34:11 xo-ce xo-server[11768]: 2024-10-07T11:34:11.070Z xo:xapi:vdi INFO OpaqueRef:c1f4b3cf-996d-45ba-80ae-59ef7c9cb8ef has been disconnected from dom0 { Oct 7 11:34:11 xo-ce xo-server[11768]: vdiRef: 'OpaqueRef:2fd1d459-46b5-473a-9549-a3e0b401209f', Oct 7 11:34:11 xo-ce xo-server[11768]: vbdRef: 'OpaqueRef:c1f4b3cf-996d-45ba-80ae-59ef7c9cb8ef' Oct 7 11:34:11 xo-ce xo-server[11768]: } Oct 7 11:40:47 xo-ce xo-server[11768]: 2024-10-07T11:40:47.534Z xo:xapi:vdi INFO OpaqueRef:7e94c0da-be46-45f8-9a6b-7e9eb04928b1 has been disconnected from dom0 { Oct 7 11:40:47 xo-ce xo-server[11768]: vdiRef: 'OpaqueRef:f8be3361-0c5c-48b4-bf51-baee4d02cb20', Oct 7 11:40:47 xo-ce xo-server[11768]: vbdRef: 'OpaqueRef:7e94c0da-be46-45f8-9a6b-7e9eb04928b1' Oct 7 11:40:47 xo-ce xo-server[11768]: } Oct 7 11:41:59 xo-ce xo-server[11768]: 2024-10-07T11:41:59.303Z xo:xapi:vdi INFO OpaqueRef:2e1231be-f65b-4fa4-bb8d-3871ddabfa87 has been disconnected from dom0 { Oct 7 11:41:59 xo-ce xo-server[11768]: vdiRef: 'OpaqueRef:cb2af589-ae7e-4b5c-b657-0c2636b3677f', Oct 7 11:41:59 xo-ce
xo-server[11768]: vbdRef: 'OpaqueRef:2e1231be-f65b-4fa4-bb8d-3871ddabfa87' Oct 7 11:41:59 xo-ce xo-server[11768]: } Oct 7 11:42:11 xo-ce xo-server[11768]: 2024-10-07T11:42:11.742Z xo:xapi WARN retry { Oct 7 11:42:11 xo-ce xo-server[11768]: attemptNumber: 0, Oct 7 11:42:11 xo-ce xo-server[11768]: delay: 5000, Oct 7 11:42:11 xo-ce xo-server[11768]: error: XapiError: VDI_IN_USE(OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92, destroy) Oct 7 11:42:11 xo-ce xo-server[11768]: at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/_XapiError.mjs:16:12) Oct 7 11:42:11 xo-ce xo-server[11768]: at default (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/_getTaskResult.mjs:13:29) Oct 7 11:42:11 xo-ce xo-server[11768]: at Xapi._addRecordToCache (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1041:24) Oct 7 11:42:11 xo-ce xo-server[11768]: at file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1075:14 Oct 7 11:42:11 xo-ce xo-server[11768]: at Array.forEach (<anonymous>) Oct 7 11:42:11 xo-ce xo-server[11768]: at Xapi._processEvents (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1065:12) Oct 7 11:42:11 xo-ce xo-server[11768]: at Xapi._watchEvents (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1238:14) Oct 7 11:42:11 xo-ce xo-server[11768]: at process.processTicksAndRejections (node:internal/process/task_queues:95:5) { Oct 7 11:42:11 xo-ce xo-server[11768]: code: 'VDI_IN_USE', Oct 7 11:42:11 xo-ce xo-server[11768]: params: [ 'OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92', 'destroy' ], Oct 7 11:42:11 xo-ce xo-server[11768]: call: undefined, Oct 7 11:42:11 xo-ce xo-server[11768]: url: undefined, Oct 7 11:42:11 xo-ce xo-server[11768]: task: task { Oct 7 11:42:11 xo-ce xo-server[11768]: uuid: '7bb5adaa-5b49-2227-3ede-759420836f59', Oct 7 11:42:11 xo-ce xo-server[11768]: name_label: 'Async.VDI.destroy', Oct 7 
11:42:11 xo-ce xo-server[11768]: name_description: '', Oct 7 11:42:11 xo-ce xo-server[11768]: allowed_operations: [], Oct 7 11:42:11 xo-ce xo-server[11768]: current_operations: {}, Oct 7 11:42:11 xo-ce xo-server[11768]: created: '20241007T11:41:59Z', Oct 7 11:42:11 xo-ce xo-server[11768]: finished: '20241007T11:42:11Z', Oct 7 11:42:11 xo-ce xo-server[11768]: status: 'failure', Oct 7 11:42:11 xo-ce xo-server[11768]: resident_on: 'OpaqueRef:010eebba-be27-489f-9f87-d06c8b675f19', Oct 7 11:42:11 xo-ce xo-server[11768]: progress: 1, Oct 7 11:42:11 xo-ce xo-server[11768]: type: '<none/>', Oct 7 11:42:11 xo-ce xo-server[11768]: result: '', Oct 7 11:42:11 xo-ce xo-server[11768]: error_info: [Array], Oct 7 11:42:11 xo-ce xo-server[11768]: other_config: {}, Oct 7 11:42:11 xo-ce xo-server[11768]: subtask_of: 'OpaqueRef:NULL', Oct 7 11:42:11 xo-ce xo-server[11768]: subtasks: [], Oct 7 11:42:11 xo-ce xo-server[11768]: backtrace: '(((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 4711))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/xapi/rbac.ml)(line 205))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 95)))' Oct 7 11:42:11 xo-ce xo-server[11768]: } Oct 7 11:42:11 xo-ce xo-server[11768]: }, Oct 7 11:42:11 xo-ce xo-server[11768]: fn: 'destroy', Oct 7 11:42:11 xo-ce xo-server[11768]: arguments: [ 'OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92' ], Oct 7 11:42:11 xo-ce xo-server[11768]: pool: { Oct 7 11:42:11 xo-ce xo-server[11768]: uuid: 'fe688bb2-b9ac-db7b-737a-cc457195f095', Oct 7 11:42:11 xo-ce xo-server[11768]: name_label: 'SGO Pool' Oct 7 11:42:11 xo-ce xo-server[11768]: } Oct 7 11:42:11 xo-ce xo-server[11768]: } Oct 7 11:42:42 xo-ce xo-server[11768]: 2024-10-07T11:42:42.061Z xo:xapi:vdi WARN Couldn't disconnect OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92 from dom0 { Oct 7 11:42:42 xo-ce xo-server[11768]: vdiRef: 'OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92', Oct 7 11:42:42 xo-ce 
xo-server[11768]: vbdRef: 'OpaqueRef:2b0c22e7-2794-471a-bd85-145cf302f8e4', Oct 7 11:42:42 xo-ce xo-server[11768]: err: XapiError: OPERATION_NOT_ALLOWED(VBD '0da0be96-45ef-684e-b956-7b5463cd9b09' still attached to 'f3fd6bd3-a622-4838-a7b6-e6e5e2cc6d04') Oct 7 11:42:42 xo-ce xo-server[11768]: at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/_XapiError.mjs:16:12) Oct 7 11:42:42 xo-ce xo-server[11768]: at file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/transports/json-rpc.mjs:38:21 Oct 7 11:42:42 xo-ce xo-server[11768]: at process.processTicksAndRejections (node:internal/process/task_queues:95:5) { Oct 7 11:42:42 xo-ce xo-server[11768]: code: 'OPERATION_NOT_ALLOWED', Oct 7 11:42:42 xo-ce xo-server[11768]: params: [ Oct 7 11:42:42 xo-ce xo-server[11768]: "VBD '0da0be96-45ef-684e-b956-7b5463cd9b09' still attached to 'f3fd6bd3-a622-4838-a7b6-e6e5e2cc6d04'" Oct 7 11:42:42 xo-ce xo-server[11768]: ], Oct 7 11:42:42 xo-ce xo-server[11768]: call: { method: 'VBD.destroy', params: [Array] }, Oct 7 11:42:42 xo-ce xo-server[11768]: url: undefined, Oct 7 11:42:42 xo-ce xo-server[11768]: task: undefined Oct 7 11:42:42 xo-ce xo-server[11768]: } Oct 7 11:42:42 xo-ce xo-server[11768]: } Oct 7 11:46:54 xo-ce xo-server[11768]: 2024-10-07T11:46:54.842Z xo:xapi WARN retry { Oct 7 11:46:54 xo-ce xo-server[11768]: attemptNumber: 8, Oct 7 11:46:54 xo-ce xo-server[11768]: delay: 5000, Oct 7 11:46:54 xo-ce xo-server[11768]: error: XapiError: VDI_IN_USE(OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92, destroy) Oct 7 11:46:54 xo-ce xo-server[11768]: at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/_XapiError.mjs:16:12) Oct 7 11:46:54 xo-ce xo-server[11768]: at default (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/_getTaskResult.mjs:13:29) Oct 7 11:46:54 xo-ce xo-server[11768]: at Xapi._addRecordToCache 
(file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1041:24) Oct 7 11:46:54 xo-ce xo-server[11768]: at file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1075:14 Oct 7 11:46:54 xo-ce xo-server[11768]: at Array.forEach (<anonymous>) Oct 7 11:46:54 xo-ce xo-server[11768]: at Xapi._processEvents (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1065:12) Oct 7 11:46:54 xo-ce xo-server[11768]: at Xapi._watchEvents (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1238:14) Oct 7 11:46:54 xo-ce xo-server[11768]: at process.processTicksAndRejections (node:internal/process/task_queues:95:5) { Oct 7 11:46:54 xo-ce xo-server[11768]: code: 'VDI_IN_USE', Oct 7 11:46:54 xo-ce xo-server[11768]: params: [ 'OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92', 'destroy' ], Oct 7 11:46:54 xo-ce xo-server[11768]: call: undefined, Oct 7 11:46:54 xo-ce xo-server[11768]: url: undefined, Oct 7 11:46:54 xo-ce xo-server[11768]: task: task { Oct 7 11:46:54 xo-ce xo-server[11768]: uuid: '70afe347-815d-806b-0eed-dd6239adcf0e', Oct 7 11:46:54 xo-ce xo-server[11768]: name_label: 'Async.VDI.destroy', Oct 7 11:46:54 xo-ce xo-server[11768]: name_description: '', Oct 7 11:46:54 xo-ce xo-server[11768]: allowed_operations: [], Oct 7 11:46:54 xo-ce xo-server[11768]: current_operations: {}, Oct 7 11:46:54 xo-ce xo-server[11768]: created: '20241007T11:46:54Z', Oct 7 11:46:54 xo-ce xo-server[11768]: finished: '20241007T11:46:54Z', Oct 7 11:46:54 xo-ce xo-server[11768]: status: 'failure', Oct 7 11:46:54 xo-ce xo-server[11768]: resident_on: 'OpaqueRef:010eebba-be27-489f-9f87-d06c8b675f19', Oct 7 11:46:54 xo-ce xo-server[11768]: progress: 1, Oct 7 11:46:54 xo-ce xo-server[11768]: type: '<none/>', Oct 7 11:46:54 xo-ce xo-server[11768]: result: '', Oct 7 11:46:54 xo-ce xo-server[11768]: error_info: [Array], Oct 7 11:46:54 xo-ce xo-server[11768]: other_config: {}, Oct 7 11:46:54 xo-ce 
xo-server[11768]: subtask_of: 'OpaqueRef:NULL', Oct 7 11:46:54 xo-ce xo-server[11768]: subtasks: [], Oct 7 11:46:54 xo-ce xo-server[11768]: backtrace: '(((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 4711))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/xapi/rbac.ml)(line 205))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 95)))' Oct 7 11:46:54 xo-ce xo-server[11768]: } Oct 7 11:46:54 xo-ce xo-server[11768]: }, Oct 7 11:46:54 xo-ce xo-server[11768]: fn: 'destroy', Oct 7 11:46:54 xo-ce xo-server[11768]: arguments: [ 'OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92' ], Oct 7 11:46:54 xo-ce xo-server[11768]: pool: { Oct 7 11:46:54 xo-ce xo-server[11768]: uuid: 'fe688bb2-b9ac-db7b-737a-cc457195f095', Oct 7 11:46:54 xo-ce xo-server[11768]: name_label: 'SGO Pool' Oct 7 11:46:54 xo-ce xo-server[11768]: } Oct 7 11:46:54 xo-ce xo-server[11768]: } Oct 7 11:47:25 xo-ce xo-server[11768]: 2024-10-07T11:47:25.194Z xo:xapi:vdi WARN Couldn't disconnect OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92 from dom0 { Oct 7 11:47:25 xo-ce xo-server[11768]: vdiRef: 'OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92', Oct 7 11:47:25 xo-ce xo-server[11768]: vbdRef: 'OpaqueRef:2b0c22e7-2794-471a-bd85-145cf302f8e4', Oct 7 11:47:25 xo-ce xo-server[11768]: err: XapiError: OPERATION_NOT_ALLOWED(VBD '0da0be96-45ef-684e-b956-7b5463cd9b09' still attached to 'f3fd6bd3-a622-4838-a7b6-e6e5e2cc6d04') Oct 7 11:47:25 xo-ce xo-server[11768]: at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/_XapiError.mjs:16:12) Oct 7 11:47:25 xo-ce xo-server[11768]: at file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/transports/json-rpc.mjs:38:21 Oct 7 11:47:25 xo-ce xo-server[11768]: at process.processTicksAndRejections (node:internal/process/task_queues:95:5) { Oct 7 11:47:25 xo-ce xo-server[11768]: code: 'OPERATION_NOT_ALLOWED', Oct 7 11:47:25 xo-ce xo-server[11768]: params: [ 
Oct 7 11:47:25 xo-ce xo-server[11768]: "VBD '0da0be96-45ef-684e-b956-7b5463cd9b09' still attached to 'f3fd6bd3-a622-4838-a7b6-e6e5e2cc6d04'" Oct 7 11:47:25 xo-ce xo-server[11768]: ], Oct 7 11:47:25 xo-ce xo-server[11768]: call: { method: 'VBD.destroy', params: [Array] }, Oct 7 11:47:25 xo-ce xo-server[11768]: url: undefined, Oct 7 11:47:25 xo-ce xo-server[11768]: task: undefined Oct 7 11:47:25 xo-ce xo-server[11768]: } Oct 7 11:47:25 xo-ce xo-server[11768]: } Oct 7 11:47:30 xo-ce xo-server[11768]: 2024-10-07T11:47:30.323Z xo:xapi:vm WARN VM_destroy: failed to destroy VDI { Oct 7 11:47:30 xo-ce xo-server[11768]: error: XapiError: VDI_IN_USE(OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92, destroy) Oct 7 11:47:30 xo-ce xo-server[11768]: at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/_XapiError.mjs:16:12) Oct 7 11:47:30 xo-ce xo-server[11768]: at default (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/_getTaskResult.mjs:13:29) Oct 7 11:47:30 xo-ce xo-server[11768]: at Xapi._addRecordToCache (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1041:24) Oct 7 11:47:30 xo-ce xo-server[11768]: at file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1075:14 Oct 7 11:47:30 xo-ce xo-server[11768]: at Array.forEach (<anonymous>) Oct 7 11:47:30 xo-ce xo-server[11768]: at Xapi._processEvents (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1065:12) Oct 7 11:47:30 xo-ce xo-server[11768]: at Xapi._watchEvents (file:///opt/xo/xo-builds/xen-orchestra-202410070635/packages/xen-api/index.mjs:1238:14) Oct 7 11:47:30 xo-ce xo-server[11768]: at process.processTicksAndRejections (node:internal/process/task_queues:95:5) { Oct 7 11:47:30 xo-ce xo-server[11768]: code: 'VDI_IN_USE', Oct 7 11:47:30 xo-ce xo-server[11768]: params: [ 'OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92', 'destroy' ], Oct 7 11:47:30 xo-ce xo-server[11768]: call: undefined, 
Oct 7 11:47:30 xo-ce xo-server[11768]: url: undefined, Oct 7 11:47:30 xo-ce xo-server[11768]: task: task { Oct 7 11:47:30 xo-ce xo-server[11768]: uuid: '5c688ec4-5523-c1f1-89dc-a881abdbaee1', Oct 7 11:47:30 xo-ce xo-server[11768]: name_label: 'Async.VDI.destroy', Oct 7 11:47:30 xo-ce xo-server[11768]: name_description: '', Oct 7 11:47:30 xo-ce xo-server[11768]: allowed_operations: [], Oct 7 11:47:30 xo-ce xo-server[11768]: current_operations: {}, Oct 7 11:47:30 xo-ce xo-server[11768]: created: '20241007T11:47:30Z', Oct 7 11:47:30 xo-ce xo-server[11768]: finished: '20241007T11:47:30Z', Oct 7 11:47:30 xo-ce xo-server[11768]: status: 'failure', Oct 7 11:47:30 xo-ce xo-server[11768]: resident_on: 'OpaqueRef:010eebba-be27-489f-9f87-d06c8b675f19', Oct 7 11:47:30 xo-ce xo-server[11768]: progress: 1, Oct 7 11:47:30 xo-ce xo-server[11768]: type: '<none/>', Oct 7 11:47:30 xo-ce xo-server[11768]: result: '', Oct 7 11:47:30 xo-ce xo-server[11768]: error_info: [Array], Oct 7 11:47:30 xo-ce xo-server[11768]: other_config: {}, Oct 7 11:47:30 xo-ce xo-server[11768]: subtask_of: 'OpaqueRef:NULL', Oct 7 11:47:30 xo-ce xo-server[11768]: subtasks: [], Oct 7 11:47:30 xo-ce xo-server[11768]: backtrace: '(((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 4711))((process xapi)(filename lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/xapi/rbac.ml)(line 205))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 95)))' Oct 7 11:47:30 xo-ce xo-server[11768]: } Oct 7 11:47:30 xo-ce xo-server[11768]: }, Oct 7 11:47:30 xo-ce xo-server[11768]: vdiRef: 'OpaqueRef:8673586d-a9fc-4651-883a-ad333c13dc92', Oct 7 11:47:30 xo-ce xo-server[11768]: vmRef: 'OpaqueRef:239e4aa1-b3ce-4fa0-abe4-3fb6803a990e' Oct 7 11:47:30 xo-ce xo-server[11768]: } Oct 7 11:47:30 xo-ce xo-server[11768]: 2024-10-07T11:47:30.474Z xo:backups:MixinBackupWriter INFO deleting unused VHD { Oct 7 11:47:30 xo-ce xo-server[11768]: path: 
'/xo-vm-backups/d5d0334c-a7e3-b29f-51ca-1be9c211d2c1/vdis/5a7a5647-d2ca-4014-b87b-06c621c09a20/9f8da2fd-a08d-43c2-a5b1-ce6125cf52f5/20241007T111850Z.vhd' Oct 7 11:47:30 xo-ce xo-server[11768]: } Oct 7 11:47:30 xo-ce xo-server[11768]: 2024-10-07T11:47:30.475Z xo:backups:MixinBackupWriter INFO deleting unused VHD { Oct 7 11:47:30 xo-ce xo-server[11768]: path: '/xo-vm-backups/d5d0334c-a7e3-b29f-51ca-1be9c211d2c1/vdis/5a7a5647-d2ca-4014-b87b-06c621c09a20/c6853f48-4b06-4c34-9707-b68f9054e6fc/20241007T111850Z.vhd' Oct 7 11:47:30 xo-ce xo-server[11768]: } Oct 7 11:47:30 xo-ce xo-server[11768]: 2024-10-07T11:47:30.568Z xo:backups:worker INFO backup has ended Oct 7 11:48:00 xo-ce xo-server[11768]: 2024-10-07T11:48:00.630Z xo:backups:worker WARN worker process did not exit automatically, forcing... Oct 7 11:48:00 xo-ce xo-server[11768]: 2024-10-07T11:48:00.631Z xo:backups:worker INFO process will exit { Oct 7 11:48:00 xo-ce xo-server[11768]: duration: 856860228, Oct 7 11:48:00 xo-ce xo-server[11768]: exitCode: 0, Oct 7 11:48:00 xo-ce xo-server[11768]: resourceUsage: { Oct 7 11:48:00 xo-ce xo-server[11768]: userCPUTime: 771667771, Oct 7 11:48:00 xo-ce xo-server[11768]: systemCPUTime: 176351529, Oct 7 11:48:00 xo-ce xo-server[11768]: maxRSS: 0, Oct 7 11:48:00 xo-ce xo-server[11768]: sharedMemorySize: 0, Oct 7 11:48:00 xo-ce xo-server[11768]: unsharedDataSize: 0, Oct 7 11:48:00 xo-ce xo-server[11768]: unsharedStackSize: 0, Oct 7 11:48:00 xo-ce xo-server[11768]: minorPageFault: 3322393, Oct 7 11:48:00 xo-ce xo-server[11768]: majorPageFault: 0, Oct 7 11:48:00 xo-ce xo-server[11768]: swappedOut: 0, Oct 7 11:48:00 xo-ce xo-server[11768]: fsRead: 2416, Oct 7 11:48:00 xo-ce xo-server[11768]: fsWrite: 209789336, Oct 7 11:48:00 xo-ce xo-server[11768]: ipcSent: 0, Oct 7 11:48:00 xo-ce xo-server[11768]: ipcReceived: 0, Oct 7 11:48:00 xo-ce xo-server[11768]: signalsCount: 0, Oct 7 11:48:00 xo-ce xo-server[11768]: voluntaryContextSwitches: 527248, Oct 7 11:48:00 xo-ce xo-server[11768]: 
involuntaryContextSwitches: 342752 Oct 7 11:48:00 xo-ce xo-server[11768]: }, Oct 7 11:48:00 xo-ce xo-server[11768]: summary: { duration: '14m', cpuUsage: '111%', memoryUsage: '0 B' } Oct 7 11:48:00 xo-ce xo-server[11768]: }

-

Can you try with XOA on latest channel and see if you have the same behavior?

-

@olivierlambert We have the same issue. I am just updating to latest; however, I did not see any changes on GitHub regarding backups. Is there a change inside this build?