@Biggen At the moment, XCP-ng Center provides some views and overviews that are not yet available in XO. Hoping the next major version fixes this.

Best posts made by Forza

-

RE: [WARNING] XCP-ng Center shows wrong CITRIX updates for XCP-ng Servers - DO NOT APPLY - Fix released

-

RE: Long backup times via NFS to Data Domain from Xen Orchestra

@florent said in Long backup times via NFS to Data Domain from Xen Orchestra:

@MajorP93 this setting exists (not in the UI)

you can create a configuration file named /etc/xo-server/config.diskConcurrency.toml if you use a XOA, containing

[backups]
diskPerVmConcurrency = 2

That is great. Can we get it as a UI option too?
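For anyone who wants to script this, here is a minimal sketch that renders the fragment from the quote. The path and the key name come from the quoted post; the helper names are hypothetical, and whether xo-server has to be restarted to pick up the file is an assumption I have not verified.

```python
from pathlib import Path

# Path quoted in the post; it applies to an XOA appliance.
CONFIG_PATH = Path("/etc/xo-server/config.diskConcurrency.toml")


def render_backup_config(disk_per_vm_concurrency: int) -> str:
    """Return the TOML fragment from the quoted post as text."""
    if disk_per_vm_concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    return f"[backups]\ndiskPerVmConcurrency = {disk_per_vm_concurrency}\n"


def write_backup_config(path: Path, disk_per_vm_concurrency: int) -> None:
    """Write the fragment to `path` (hypothetical helper, not part of XO)."""
    path.write_text(render_backup_config(disk_per_vm_concurrency))


if __name__ == "__main__":
    # Only print the fragment; writing to CONFIG_PATH is left to the operator.
    print(render_backup_config(2), end="")
```

Printing first and writing the file by hand keeps the sketch harmless on machines that are not an XOA.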

-

RE: XCP-ng Guest Agent - Reported Windows Version for Servers

@olivierlambert said in XCP-ng Guest Agent - Reported Windows Version for Servers:

It's funny to see Microsoft having a version 10 for an edition named 11. I suppose it's not a surprise for an organization that huge.

They did say that Windows 10 would be the last version of Windows...

-

RE: Citrix or XCP-ng drivers for Windows Server 2022

@dinhngtu Thank you. I think it is clear for me now.

The docs at https://xcp-ng.org/docs/guests.html#windows could be improved to cover all three options but also to be a little more concise to make it easier to read.

-

RE: Citrix or XCP-ng drivers for Windows Server 2022

@iams3le we have switched to the signed xcp-ng drivers. We also replaced our older 2022 servers.

-

RE: Epyc VM to VM networking slow

Tested the new updates on my prod EPYC 7402P pool with iperf3. Seems like quite a good uplift.

Ubuntu 24.04 VM (6 cores) -> bare metal server (6 cores) over a 2x25Gbit LACP link.

Pre Patch

- iperf3 -P1 : 9.72 Gbit/s

- iperf3 -P6 : 14.6 Gbit/s

Post Patch

- iperf3 -P1 : 11.3 Gbit/s

- iperf3 -P6 : 24.2 Gbit/s

Ubuntu 24.04 VM (6 cores) -> Ubuntu 24.04 VM (6 cores) on the same host

Pre Patch

Forgot to test this...

Post Patch

- iperf3 -P1 : 13.7 Gbit/s

- iperf3 -P6 : 30.8 Gbit/s

- iperf3 -P24 : 40.4 Gbit/s
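To put a number on the uplift, here is a small sketch that computes the relative gain for the VM to bare metal case, the only one with both pre-patch and post-patch figures. The function name is just for illustration.

```python
def uplift_percent(before: float, after: float) -> float:
    """Relative throughput gain of `after` over `before`, in percent."""
    return (after - before) / before * 100.0


# VM -> bare metal results from this post, in Gbit/s: (pre-patch, post-patch).
RESULTS = {
    "iperf3 -P1": (9.72, 11.3),
    "iperf3 -P6": (14.6, 24.2),
}

if __name__ == "__main__":
    for label, (pre, post) in RESULTS.items():
        print(f"{label}: {uplift_percent(pre, post):.1f}% faster")
```

That works out to roughly 16% for a single stream and roughly 66% for six parallel streams.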

Our servers have Last-Level Cache (LLC) as NUMA Node enabled, as most of our VMs do not have a huge number of vCPUs assigned. This means that for the EPYC 7402P (24c/48t) we have 8 NUMA nodes. However, we do not use xl cpupool-numa-split.

-

RE: Best CPU performance settings for HP DL325/AMD EPYC servers?

Sorry for spamming the thread.

I have two identical servers (srv01 and srv02) with AMD EPYC 7402P 24 Core CPUs. On srv02 I enabled the

LLC as NUMA Node.I've done some quick benchmarks with

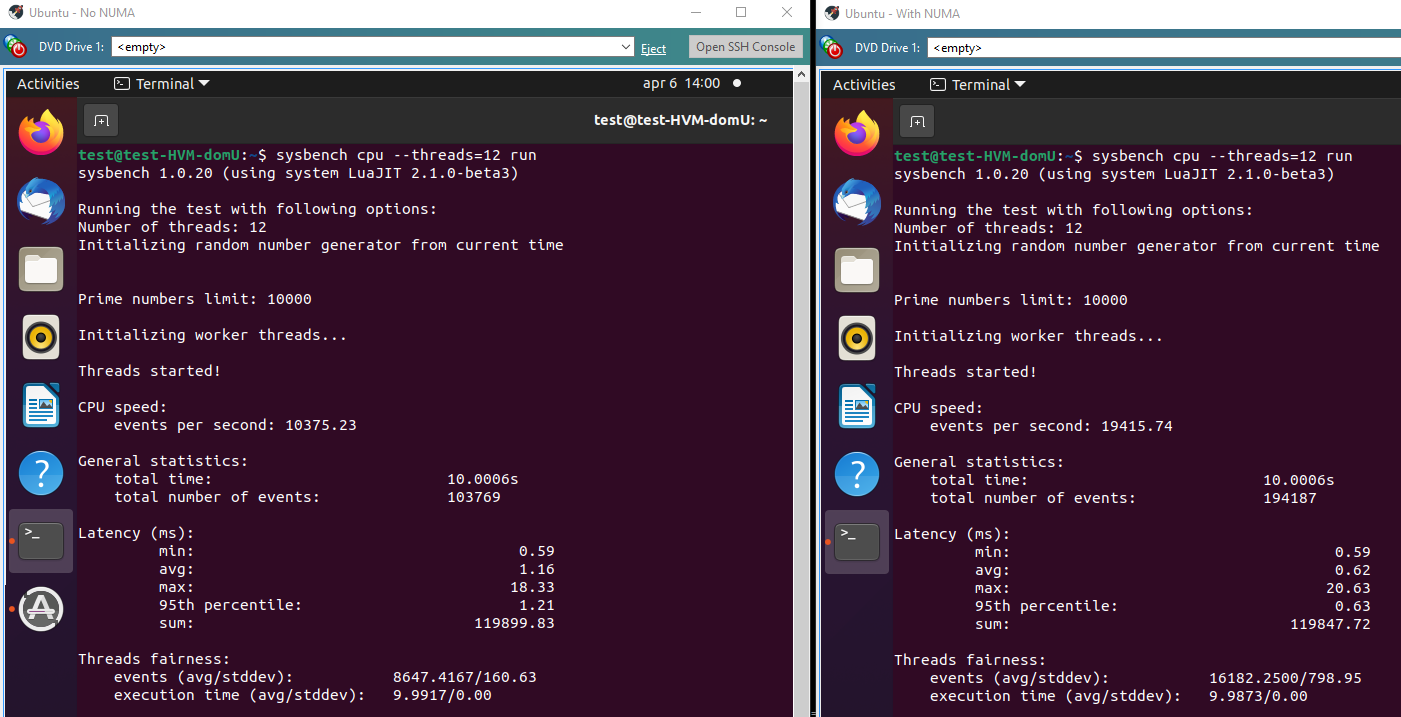

Sysbenchon Ubuntu 20.10 with 12 assigned cores. Command line:sysbench cpu run --threads=12It would seem that in this test the NUMA option is much faster, 194187 events vs 103769 events. Perhaps I am misunderstanding how sysbench works?
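As far as I know, sysbench cpu counts the events completed within a fixed run time, so more events means more throughput. Here is a rough sketch for comparing two runs. It assumes the report contains a "total number of events:" line, which is how I remember the sysbench 1.0 output; the function names are made up for this example.

```python
import re

# Assumed report line, e.g. "    total number of events:              103769"
EVENTS_RE = re.compile(r"total number of events:\s*(\d+)")


def parse_total_events(output: str) -> int:
    """Extract the event count from the text of a sysbench report."""
    match = EVENTS_RE.search(output)
    if match is None:
        raise ValueError("no 'total number of events' line found")
    return int(match.group(1))


def speedup(events_a: int, events_b: int) -> float:
    """How many times more events run A completed than run B."""
    return events_a / events_b


if __name__ == "__main__":
    # Event counts from this post: LLC as NUMA Node enabled vs disabled.
    print(f"{speedup(194187, 103769):.2f}x")
```

With the numbers from this post the ratio is about 1.87x.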

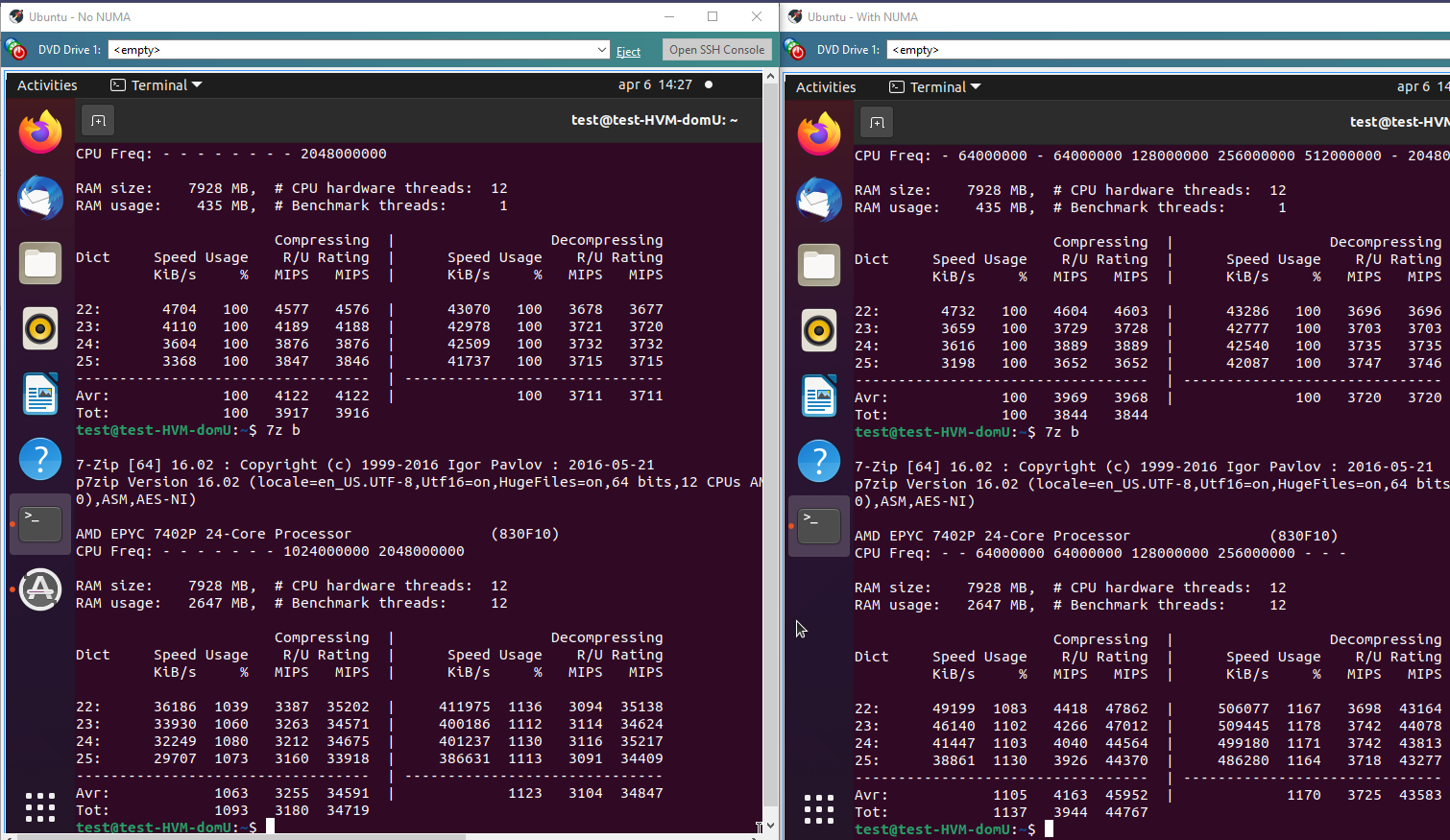

With 7-zip the gain is much less, but still meaningful. A little slower in single-threaded performance but quite a bit faster in multi-threaded mode.

-

RE: Host stuck in booting state.

Problem was a stale connection with the NFS server. A reboot of the NFS server fixed the issue.

-

RE: Restoring a downed host ISNT easy

@xcprocks said in Restoring a downed host ISNT easy:

So, we had a host go down (OS drive failure). No big deal right? According to instructions, just reinstall XCP on a new drive, jump over into XOA and do a metadata restore.

Well, not quite.

First during installation, you really really must not select any of the disks to create an SR as you could potentially wipe out an SR.

Second, you have to do the sr-probe and sr-introduce and pbd-create and pbd-plug to get the SRs back.

Third, you then have to use XOA to restore the metadata which according to the directions is pretty simple looking. According to: https://xen-orchestra.com/docs/metadata_backup.html#performing-a-restore

"To restore one, simply click the blue restore arrow, choose a backup date to restore, and click OK:"

But this isn't quite true. When we did it, the restore threw an error:

"message": "no such object d7b6f090-cd68-9dec-2e00-803fc90c3593",

"name": "XoError",

Panic mode sets in... It can't find the metadata? We try an earlier backup. Same error. We check the backup NFS share. No, it's there alright.

After a couple of hours scouring the internet and not finding anything, it dawns on us... The object XOA is looking for is the OLD server not a backup directory. It is looking for the server that died and no longer exists. The problem is, when you install the new server, it gets a new ID. But the restore program is looking for the ID of the dead server.

But how do you tell XOA, to copy the metadata over to the new server? It assumes that you want to restore it over an existing server. It does not provide a drop down list to pick where to deploy it.

In an act of desperation, we copied the backup directory to a new location and named it with the ID number of the newly recreated server. Now XOA could restore the metadata and we were able to recover the VMs in the SRs without issue.
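The workaround in the paragraph above amounts to copying one directory under a new name. Here is a minimal sketch, assuming the backups sit in one directory per host ID under a common root; that layout, the IDs and the function name are assumptions for illustration, not the documented XO layout.

```python
import shutil
import tempfile
from pathlib import Path


def clone_backup_for_new_host(backup_root: Path, old_id: str, new_id: str) -> Path:
    """Copy the backup directory of the dead host to one named after the new host."""
    src = backup_root / old_id
    dst = backup_root / new_id
    if not src.is_dir():
        raise FileNotFoundError(f"no backup directory for {old_id}")
    if dst.exists():
        raise FileExistsError(f"{dst} already exists, refusing to overwrite")
    shutil.copytree(src, dst)  # the original stays untouched
    return dst


if __name__ == "__main__":
    # Demo in a throwaway directory; the IDs are placeholders.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "old-host-id").mkdir()
        (root / "old-host-id" / "metadata.json").write_text("{}")
        print(clone_backup_for_new_host(root, "old-host-id", "new-host-id"))
```

Copying rather than renaming keeps the original backup intact in case the restore fails again.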

This long story is really just a way to highlight the need for better host backup in three ways:

A) The first idea would be to create better instructions. It ain't nowhere as easy as the documentation says it is and it's easy to mess up the first step so bad that you can wipe out the contents of an SR. The documentation should spell this out.

B) The second idea is to add to the metadata backup something that reads the states of SR to PBD mappings and provides/saves a script to restore them. This would ease a lot of the difficulty in the actual restoring of a failed OS after a new OS can be installed.

C) The third idea is provide a dropdown during the restoration of the metadata that allows the user to target a particular machine for the restore operation instead of blindly assuming you want to restore it over a machine that is dead and gone.

I hope this helps out the next person trying to bring a host back from the dead, and I hope it also helps make XOA a better product.
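The SR re-attachment step from the quoted post (sr-probe, sr-introduce, pbd-create, pbd-plug), and the script proposed in idea B, could be sketched roughly like this. The sketch only builds the xe command lines and does not run anything. The UUIDs, SR type and device path are placeholders, and the function name is hypothetical.

```python
import shlex
from typing import Dict, List


def sr_reattach_commands(
    sr_uuid: str,
    sr_type: str,
    name_label: str,
    host_uuid: str,
    device_config: Dict[str, str],
    shared: bool = False,
) -> List[List[str]]:
    """Build (but do not run) the xe calls for re-attaching an existing SR."""
    dc_args = [f"device-config:{key}={value}"
               for key, value in sorted(device_config.items())]
    return [
        # 1. Probe the storage to find the UUID of the existing SR.
        ["xe", "sr-probe", f"type={sr_type}", *dc_args],
        # 2. Re-introduce the SR under its existing UUID.
        ["xe", "sr-introduce", f"uuid={sr_uuid}", f"type={sr_type}",
         f"name-label={name_label}", "content-type=user",
         f"shared={'true' if shared else 'false'}"],
        # 3. Create the PBD that connects the SR to the host.
        ["xe", "pbd-create", f"sr-uuid={sr_uuid}",
         f"host-uuid={host_uuid}", *dc_args],
        # 4. Plug the PBD; replace the placeholder with the UUID
        #    that pbd-create prints.
        ["xe", "pbd-plug", "uuid=<pbd-uuid>"],
    ]


if __name__ == "__main__":
    # Placeholder values for a local LVM SR.
    for cmd in sr_reattach_commands("<sr-uuid>", "lvm", "Local storage",
                                    "<host-uuid>", {"device": "/dev/sdb"}):
        print(shlex.join(cmd))
```

Saving the printed commands next to the metadata backup would give you something to follow after reinstalling the host, but the values have to be checked against the real storage before running anything.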

Thanks for a good description of the restore process.

I was wary of the metadata-backup option. It sounds simple and good to have, but as you said it is in no way a comprehensive restore of a pool.

I'd like to add my own opinion here. I would like to see a full pool restore, including network, re-attaching SRs and everything else that is needed to quickly get back up and running. A pool restore option should also be available on the boot media. It could look for an NFS/CIFS mount or a USB disk containing the backup files. This would avoid issues such as bonded networks not working.

-

RE: Remove VUSB as part of job

Might a different solution be to use a USB network bridge instead of directly attached USB? Something like this https://www.seh-technology.com/products/usb-deviceserver/utnserver-pro.html (there are different options available). We use my-utn-50a with hardware USB keys and it has proven very reliable over the years.

Latest posts made by Forza

-

RE: Execute pre-freeze and post-thaw

@dinhngtu said in Execute pre-freeze and post-thaw:

There used to be quiescent snapshot capabilities in older versions (mainly for Windows VSS support), but it has since been removed. I'd say @Team-XAPI-Network knows more about the reason.

We have VMware with PPDM backups, and the VSS part is actually causing some annoying issues such as quite long I/O stalls. But I do understand the reason for VSS, especially for applications that aren't crash safe.

-

RE: Remote syslog broken after update/reboot? - Changing it away, then back fixes.

I've been considering remote syslog too. Does enabling remote syslog remove local logging?

-

RE: restore metadata for pool -> incompatible version

@Danp said in restore metadata for pool -> incompatible version:

My understanding is that you can only restore metadata to the exact same version of xapi. Does your old pool still exist?

This is quite the limitation. Is it documented?

-

RE: 🛰️ XO 6: dedicated thread for all your feedback!

@cmoriarty said in 🛰️ XO 6: dedicated thread for all your feedback!:

I use the "VM notes" feature in XO 5 for lots of things, and I appreciate having the markdown capability. It seems now these VM Notes are stuffed into a small "Description" field inside the "Quick Info" panel for a VM.

Currently in XO 6, it looks like my notes are getting truncated in this new description field, are there plans to preserve the "VM Notes" functionality? Or should I be backing them up now before I lose them?

Me too. They serve as important info, documentation, and other bits of info that need to be quickly on hand.

What I would like to add is a way to upload images and include them in the Markdown.

-

RE: SR.Scan performance withing XOSTOR

What is the purpose of the SR scans, and why do they have to run so frequently?

-

RE: 🛰️ XO 6: dedicated thread for all your feedback!

@acebmxer said in 🛰️ XO 6: dedicated thread for all your feedback!:

So question: what happens when it thinks the VM disk is full? When will it clear up? The only thing I can think of was copying a 13 GB Veeam ISO over a few times while testing things, but that has been a while now.

No, it will not. You can create a new VDI and move the files over manually.

-

RE: Mirror backup: No new data to upload for this vm?

@Bastien-Nollet Thanks. I am on Stable channel as I use XOA Premium.

-

RE: suggestions for upgrade path XCP-ng 8.2.1 -> XCP-ng 8.3.0

@ditzy-olive I think 'warm migration' should have worked?

But perhaps if your old pool lost its pool master it doesn't work?

-

RE: suggestions for upgrade path XCP-ng 8.2.1 -> XCP-ng 8.3.0

This is what I did.

- Migrated VMs off one host

- Disconnected that host from the pool

- Made a clean install of version 8.3 on that host and made it into a new pool

- Live-migrated VMs to the new pool

- Made clean installs on the remaining hosts

- Joined the remaining hosts to the new pool