XCP-ng 8.3 updates announcements and testing

-

@gduperrey Installed on my usual round of test hosts. No issues to report so far! With such a small change I wasn't expecting anything to go wrong!

-

@gduperrey

All good so far

-

@gduperrey Up and working on several Intel pools.

-

@gduperrey Seems to be working well on my test systems.

-

How many of these are critical? I haven't even had time to apply the last round of patches to either lab or production.

-

Thank you everyone for your tests and your feedback!

The updates are live now: https://xcp-ng.org/blog/2026/03/26/march-2026-security-updates-2-for-xcp-ng-8-3-lts/

-

@gduperrey

Installed on my home lab via rolling pool update. Both hosts updated with no issues, and VMs migrated back to the 2nd host as expected this time. Fingers crossed the work servers have the same luck. I do have an open support ticket from the last round of updates for the work servers, and I'm waiting for a response before installing patches there.

-


New feature, security and maintenance update candidates for you to test!

This release batch contains a major storage feature: read the RC2 announcement for QCOW2 image format support for 2TiB+ images.

The whole platform has been hardened with a major OpenSSH update.

The updated Windows Guest Tools bring support for the XSTDVGA driver, allowing display resizing.

We are also publishing other non-urgent updates which we had in the pipeline for the next update release.

What changed

Storage

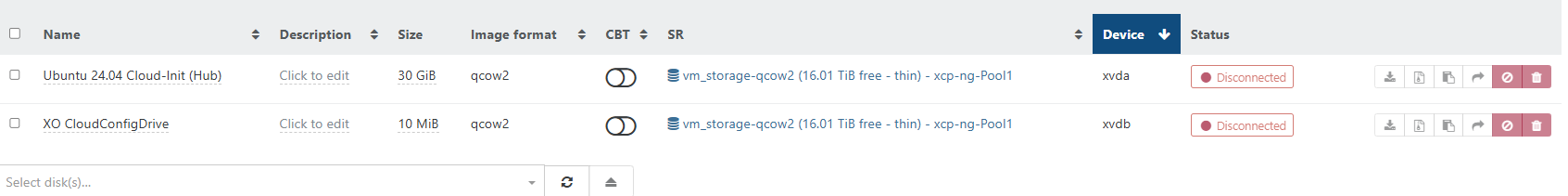

QCOW2 image format support is the major feature of this release batch; check the related announcement on the forum.

* sm: 3.2.12-17.2
  - Add support for the QCOW2 image format
* blktap: 3.55.5-6.4
  - Add support for the new QCOW2 disk type

Maintenance updates

Virtualization & System

* xen: 4.17.6-6.1
  - Sync with XenServer's xen-4.17.6-6.xs8
    * Fix boot failure on some UEFI systems. WIP
* kernel: 4.19.19-8.0.46.1
  - Fix regarding use of the correct MAC address in the rndis_host driver
  - Backport fix regarding a potential bug in the ext4 driver (CVE-2020-14314)
  - Backport fixes in SUNRPC (related to NFS). This prevents host crashes under some circumstances.

Control plane

* xapi: 26.1.3-1.6
  - Several fixes to QCOW2 enablement for importing and exporting, like reducing memory usage on disk import
* xcp-ng-xapi-plugins: 1.16.0-1
  - sdncontroller.py: add support for new optional cookie argument to add-rule and del-rule functions
* xcp-ng-pv-tools: 8.3-16
  - Update to XCP-ng Windows PV Tools 9.1.146.0
  - Include the XSTDVGA driver and improvements to the guest agent/installer

UI

* xo-lite: Update to 0.20.0-1
  - [VM/New] Added secureBoot support (PR #9423)
  - [Dashboard] Fix reactivity of dashboard (PR #9378)
  - [VM] Fixed duplicated IP addresses in the network tab Forum#101359 (PR #9547)
  - [Stats] Return null instead of 0 when no stats available (PR #9634)
  - [Treeview/Pool/Host] Add button to download bugtools (PR #9419)

Network

* gnutls: 3.3.29-10.2
  - Fix dane removal (no more replacing dane with devel package)
* openssh: Update to 9.8p1-1.2.2
  - Deprecate old OpenSSH clients (7.2 and lower) that use weak SHA1 with ssh-rsa:
    * For now, a warning will ask you to use an up-to-date client; on the next update, weak configurations will be rejected.
* net-snmp: 5.9.3-8.2
  - Fix SNMP regression (daemon configuration was lost in earlier version)
* xcp-ng-deps: 8.3-14
  - Install `traceroute` to troubleshoot connectivity problems

Additional packages

Best effort support is provided for additional packages provided by the XCP-ng project.

* lldpd: version 1.0.4-1.1 provided for convenience in our repositories, as the EPEL version is not compatible anymore with the latest XCP-ng 8.3 updates. However, please prefer the pre-installed lldapd whenever possible.
* nut: version 2.8.0-2.1 provided for convenience in our repositories, as the EPEL version is not compatible anymore with the latest XCP-ng updates.

Drivers updates

More information about drivers and current versions is maintained on the drivers wiki page.

* emulex-lpfc-alt: 14.4.393.31-1.1
  - This is an alternative driver which handles newer Emulex lpfc devices.
* sfc-module-alt: 5.3.18.1012-1
  - Initial alternate driver for Solarflare SFN5XXX|6XXX|7XXX|8XXX|X2, version 5.3.18.1012

Versions:

* blktap: 3.55.5-6.3.xcpng8.3 -> 3.55.5-6.4.xcpng8.3
* gnutls: 3.3.29-10.1.xcpng8.3 -> 3.3.29-10.2.xcpng8.3
* kernel: 4.19.19-8.0.44.1.xcpng8.3 -> 4.19.19-8.0.46.1.xcpng8.3
* net-snmp: 1:5.9.3-8.1.xcpng8.3 -> 1:5.9.3-8.2.xcpng8.3
* openssh: 7.4p1-23.3.3.xcpng8.3 -> 9.8p1-1.2.2.xcpng8.3
* sm: 3.2.12-17.1.xcpng8.3 -> 3.2.12-17.2.xcpng8.3
* traceroute: 3:2.1.5-2.xcpng8.3
* xapi: 26.1.3-1.3.xcpng8.3 -> 26.1.3-1.6.xcpng8.3
* xcp-ng-deps: 8.3-13 -> 8.3-14
* xcp-ng-pv-tools: 8.3-15.xcpng8.3 -> 8.3-16.xcpng8.3
* xcp-ng-xapi-plugins: 1.15.0-1.xcpng8.3 -> 1.16.0-1.xcpng8.3
* xen: 4.17.6-5.2.xcpng8.3 -> 4.17.6-6.1.xcpng8.3
* xo-lite: 0.19.0-1.xcpng8.3 -> 0.20.0-1.xcpng8.3

Optional packages:

* lldpd: 1.0.4-1.1
* nut: 2.8.0-2.1

Test on XCP-ng 8.3

If you are using XOSTOR, please refer to our documentation for the update method.

    yum clean metadata --enablerepo=xcp-ng-testing,xcp-ng-candidates
    yum update --enablerepo=xcp-ng-testing,xcp-ng-candidates
    reboot

The usual update rules apply: pool coordinator first, etc.
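After the reboot, one way to confirm that a host really picked up the candidate builds is to query the RPM database and compare the result with the "Versions" list. The helper below is only a sketch: `show_installed_versions` is a hypothetical name, and it relies on nothing more than the standard `rpm -q --queryformat` option. The names in the announcement may be source package names, so some of them might not match an installed binary package one-to-one.

```shell
# Hypothetical helper: print "name: version-release" for each package
# name passed as an argument, using the local RPM database.
show_installed_versions() {
    rpm -q --queryformat '%{NAME}: %{VERSION}-%{RELEASE}\n' "$@"
}

# Example (run in dom0 after the update):
#   show_installed_versions blktap openssh kernel
```

The epoch (the `1:` prefix shown for net-snmp, for instance) is not printed by this query format, so compare only the version-release part.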

Known issues

- On `blktap` update, a non-blocking error is reported; the fix is ongoing and will be delivered soon.

What to test

The most important change is related to storage: adding QCOW2 support also affects the codebase managing VHD disks. What matters here is, above all, to detect any regression on VHD support (we tested it deeply, but on this matter there's no such thing as too much testing). Of course, you are also welcome to test the QCOW2 image format support.

See the dedicated thread for more information.

Other significant changes requiring attention:

* SSH connectivity

* SNMP, if you use it

And, as usual, normal use and anything else you want to test.
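For the SSH connectivity item, it can help to know in advance which client machines fall into the deprecated range (OpenSSH 7.2 and lower, per the changelog). The function below is a rough sketch, not an official check: `openssh_client_is_old` is a hypothetical name, and it only parses the banner printed by `ssh -V`, assuming the usual `OpenSSH_<major>.<minor>` prefix.

```shell
# Hypothetical helper: succeeds (exit 0) when the given `ssh -V` banner
# reports OpenSSH 7.2 or lower, fails with 1 for newer versions, and
# fails with 2 when the banner does not start with "OpenSSH_<major>.<minor>".
openssh_client_is_old() {
    banner=$1
    # Turn e.g. "OpenSSH_7.2p2 Ubuntu-4ubuntu2.10, ..." into "7 2".
    version=$(printf '%s\n' "$banner" |
        sed -n 's/^OpenSSH_\([0-9][0-9]*\)\.\([0-9][0-9]*\).*/\1 \2/p')
    [ -n "$version" ] || return 2
    # Intentional word splitting: $1 becomes major, $2 becomes minor.
    set -- $version
    major=$1
    minor=$2
    [ "$major" -lt 7 ] || { [ "$major" -eq 7 ] && [ "$minor" -le 2 ]; }
}

# Example, on the client machine (ssh -V writes to stderr):
#   if openssh_client_is_old "$(ssh -V 2>&1)"; then
#       echo "This client is in the deprecated range"
#   fi
```

Banners with a different prefix (some non-Unix builds, for example) are reported as unrecognised rather than guessed at.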

Test window before official release of the updates

~2 weeks

-

@rzr Host updated

-

@rzr Installed on test machines with some warnings:

    Updating : blktap-3.55.5-6.4.xcpng8.3.x86_64 9/87
    cat: /usr/lib/udev/rules.d/65-md-incremental.rules: No such file or directory
    warning: %triggerin(blktap-3.55.5-6.4.xcpng8.3.x86_64) scriptlet failed, exit status 1
    Non-fatal <unknown> scriptlet failure in rpm package blktap-3.55.5-6.4.xcpng8.3.x86_64
    Updating : sm-fairlock-3.2.12-17.2.xcpng8.3.x86_64 32/87
    Warning: fairlock@devicemapper.service changed on disk. Run 'systemctl daemon-reload' to reload units.

-

Installed on a handful of test machines. Not as many as usual, as I'm being very cautious with this one for now. Everything rebooted and VMs started OK afterwards. Using VHD for everything currently.

-

@rzr Installed on test machines with some warnings:

    Updating : blktap-3.55.5-6.4.xcpng8.3.x86_64 9/87
    cat: /usr/lib/udev/rules.d/65-md-incremental.rules: No such file or directory
    warning: %triggerin(blktap-3.55.5-6.4.xcpng8.3.x86_64) scriptlet failed, exit status 1
    Non-fatal <unknown> scriptlet failure in rpm package blktap-3.55.5-6.4.xcpng8.3.x86_64

Yes, this was reported under "Known issues":

On blktap update a non blocking error is reported, the fix is ongoing and will be delivered soon

    Updating : sm-fairlock-3.2.12-17.2.xcpng8.3.x86_64 32/87
    Warning: fairlock@devicemapper.service changed on disk. Run 'systemctl daemon-reload' to reload units.

I observed this too. Maybe this should be documented as well; a reboot will work too.

-


@rzr Always a reboot after big updates, as instructed/required.

-

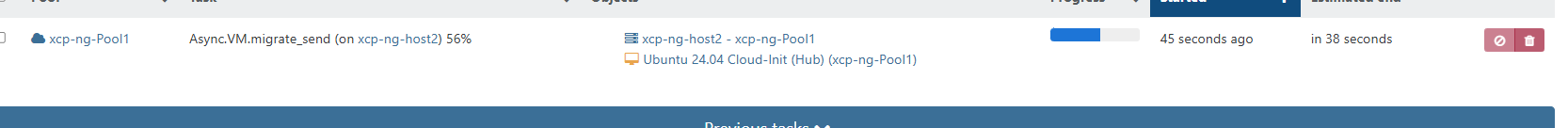

Upgraded my usual pool. VM migrations during reboot worked without issues. So far everything works

-

Now installed on my test systems and all seems to be working so far.

-

Tested but not much

Seems fine so far

-

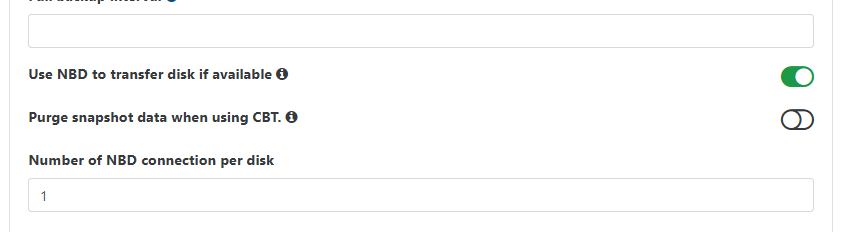

@rzr (edit) After upgrading two main pools, I'm having CR delta backup issues. Everything was working before the XCP update; now every VM has the same error: "Backup fell back to a full". Using XO master db9c4, but the same XO setup was working just fine before the XCP update.

(edit 2) XO logs

    Apr 15 22:55:40 xo1 xo-server[1409]: 2026-04-16T02:55:40.613Z xo:backups:worker INFO starting backup
    Apr 15 22:55:42 xo1 xo-server[1409]: 2026-04-16T02:55:42.006Z xo:xapi:xapi-disks INFO export through vhd
    Apr 15 22:55:44 xo1 xo-server[1409]: 2026-04-16T02:55:44.093Z xo:xapi:vdi INFO OpaqueRef:07ac67ab-05cf-a066-5924-f28e15642d4e was already destroyed {
    Apr 15 22:55:44 xo1 xo-server[1409]: vdiRef: 'OpaqueRef:49ff18d3-5c18-176c-4930-0163c6727c2b',
    Apr 15 22:55:44 xo1 xo-server[1409]: vbdRef: 'OpaqueRef:07ac67ab-05cf-a066-5924-f28e15642d4e'
    Apr 15 22:55:44 xo1 xo-server[1409]: }
    Apr 15 22:55:44 xo1 xo-server[1409]: 2026-04-16T02:55:44.839Z xo:xapi:vdi INFO OpaqueRef:e5fa3d00-f629-6983-6ff2-841e9edacf82 has been disconnected from dom0 {
    Apr 15 22:55:44 xo1 xo-server[1409]: vdiRef: 'OpaqueRef:02f9ba92-1ee2-88eb-f660-a2cf3eeb287d',
    Apr 15 22:55:44 xo1 xo-server[1409]: vbdRef: 'OpaqueRef:e5fa3d00-f629-6983-6ff2-841e9edacf82'
    Apr 15 22:55:44 xo1 xo-server[1409]: }
    Apr 15 22:55:44 xo1 xo-server[1409]: 2026-04-16T02:55:44.910Z xo:xapi:vm WARN _assertHealthyVdiChain, could not fetch VDI {
    Apr 15 22:55:44 xo1 xo-server[1409]: error: XapiError: UUID_INVALID(VDI, 8f233bfc-9deb-4a06-aa07-0510de7496a1)
    Apr 15 22:55:44 xo1 xo-server[1409]: at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202604151415/packages/xen-api/_XapiError.mjs:16:12)
    Apr 15 22:55:44 xo1 xo-server[1409]: at file:///opt/xo/xo-builds/xen-orchestra-202604151415/packages/xen-api/transports/json-rpc.mjs:38:21
    Apr 15 22:55:44 xo1 xo-server[1409]: at process.processTicksAndRejections (node:internal/process/task_queues:104:5) {
    Apr 15 22:55:44 xo1 xo-server[1409]: code: 'UUID_INVALID',
    Apr 15 22:55:44 xo1 xo-server[1409]: params: [ 'VDI', '8f233bfc-9deb-4a06-aa07-0510de7496a1' ],
    Apr 15 22:55:44 xo1 xo-server[1409]: call: { duration: 3, method: 'VDI.get_by_uuid', params: [Array] },
    Apr 15 22:55:44 xo1 xo-server[1409]: url: undefined,
    Apr 15 22:55:44 xo1 xo-server[1409]: task: undefined
    Apr 15 22:55:44 xo1 xo-server[1409]: }
    Apr 15 22:55:44 xo1 xo-server[1409]: }
    Apr 15 22:55:46 xo1 xo-server[1409]: 2026-04-16T02:55:46.732Z xo:xapi:xapi-disks INFO Error in openNbdCBT XapiError: SR_BACKEND_FAILURE_460(, Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated], )
    Apr 15 22:55:46 xo1 xo-server[1409]: at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202604151415/packages/xen-api/_XapiError.mjs:16:12)
    Apr 15 22:55:46 xo1 xo-server[1409]: at default (file:///opt/xo/xo-builds/xen-orchestra-202604151415/packages/xen-api/_getTaskResult.mjs:13:29)
    Apr 15 22:55:46 xo1 xo-server[1409]: at Xapi._addRecordToCache (file:///opt/xo/xo-builds/xen-orchestra-202604151415/packages/xen-api/index.mjs:1078:24)
    Apr 15 22:55:46 xo1 xo-server[1409]: at file:///opt/xo/xo-builds/xen-orchestra-202604151415/packages/xen-api/index.mjs:1112:14
    Apr 15 22:55:46 xo1 xo-server[1409]: at Array.forEach (<anonymous>)
    Apr 15 22:55:46 xo1 xo-server[1409]: at Xapi._processEvents (file:///opt/xo/xo-builds/xen-orchestra-202604151415/packages/xen-api/index.mjs:1102:12)
    Apr 15 22:55:46 xo1 xo-server[1409]: at Xapi._watchEvents (file:///opt/xo/xo-builds/xen-orchestra-202604151415/packages/xen-api/index.mjs:1275:14)
    Apr 15 22:55:46 xo1 xo-server[1409]: at process.processTicksAndRejections (node:internal/process/task_queues:104:5) {
    Apr 15 22:55:46 xo1 xo-server[1409]: code: 'SR_BACKEND_FAILURE_460',
    Apr 15 22:55:46 xo1 xo-server[1409]: params: [
    Apr 15 22:55:46 xo1 xo-server[1409]: '',
    Apr 15 22:55:46 xo1 xo-server[1409]: 'Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]',
    Apr 15 22:55:46 xo1 xo-server[1409]: ''
    Apr 15 22:55:46 xo1 xo-server[1409]: ],
    Apr 15 22:55:46 xo1 xo-server[1409]: call: undefined,
    Apr 15 22:55:46 xo1 xo-server[1409]: url: undefined,
    Apr 15 22:55:46 xo1 xo-server[1409]: task: task {
    Apr 15 22:55:46 xo1 xo-server[1409]: uuid: '8fae41b4-de82-789c-980a-5ff2d490d2d8',
    Apr 15 22:55:46 xo1 xo-server[1409]: name_label: 'Async.VDI.list_changed_blocks',
    Apr 15 22:55:46 xo1 xo-server[1409]: name_description: '',
    Apr 15 22:55:46 xo1 xo-server[1409]: allowed_operations: [],
    Apr 15 22:55:46 xo1 xo-server[1409]: current_operations: {},
    Apr 15 22:55:46 xo1 xo-server[1409]: created: '20260416T02:55:46Z',
    Apr 15 22:55:46 xo1 xo-server[1409]: finished: '20260416T02:55:46Z',
    Apr 15 22:55:46 xo1 xo-server[1409]: status: 'failure',
    Apr 15 22:55:46 xo1 xo-server[1409]: resident_on: 'OpaqueRef:7b987b11-ada0-99ce-d831-6e589bf34b50',
    Apr 15 22:55:46 xo1 xo-server[1409]: progress: 1,
    Apr 15 22:55:46 xo1 xo-server[1409]: type: '<none/>',
    Apr 15 22:55:46 xo1 xo-server[1409]: result: '',
    Apr 15 22:55:46 xo1 xo-server[1409]: error_info: [
    Apr 15 22:55:46 xo1 xo-server[1409]: 'SR_BACKEND_FAILURE_460',
    Apr 15 22:55:46 xo1 xo-server[1409]: '',
    Apr 15 22:55:46 xo1 xo-server[1409]: 'Failed to calculate changed blocks for given VDIs. [opterr=Source and target VDI are unrelated]',
    Apr 15 22:55:46 xo1 xo-server[1409]: ''
    Apr 15 22:55:46 xo1 xo-server[1409]: ],
    Apr 15 22:55:46 xo1 xo-server[1409]: other_config: {},
    Apr 15 22:55:46 xo1 xo-server[1409]: subtask_of: 'OpaqueRef:NULL',
    Apr 15 22:55:46 xo1 xo-server[1409]: subtasks: [],
    Apr 15 22:55:46 xo1 xo-server[1409]: backtrace: '(((process xapi)(filename lib/backtrace.ml)(line 210))((process xapi)(filename ocaml/xapi/storage_utils.ml)(line 150))((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 141))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 39))((process xapi)(filename ocaml/xapi/rbac.ml)(line 228))((process xapi)(filename ocaml/xapi/rbac.ml)(line 238))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 78)))'
    Apr 15 22:55:46 xo1 xo-server[1409]: }
    Apr 15 22:55:46 xo1 xo-server[1409]: }
    Apr 15 22:55:46 xo1 xo-server[1409]: 2026-04-16T02:55:46.735Z xo:xapi:xapi-disks INFO export through vhd
    Apr 15 22:55:48 xo1 xo-server[1409]: 2026-04-16T02:55:48.115Z xo:xapi:vdi WARN invalid HTTP header in response body {
    Apr 15 22:55:48 xo1 xo-server[1409]: body: 'HTTP/1.1 500 Internal Error\r\n' +
    Apr 15 22:55:48 xo1 xo-server[1409]: 'content-length: 318\r\n' +
    Apr 15 22:55:48 xo1 xo-server[1409]: 'content-type: text/html\r\n' +
    Apr 15 22:55:48 xo1 xo-server[1409]: 'connection: close\r\n' +
    Apr 15 22:55:48 xo1 xo-server[1409]: 'cache-control: no-cache, no-store\r\n' +
    Apr 15 22:55:48 xo1 xo-server[1409]: '\r\n' +
    Apr 15 22:55:48 xo1 xo-server[1409]: '<html><body><h1>HTTP 500 internal server error</h1>An unexpected error occurred; please wait a while and try again. If the problem persists, please contact your support representative.<h1> Additional information </h1>VDI_INCOMPATIBLE_TYPE: [ OpaqueRef:3b37047e-11dd-f836-ebed-acfaff2072ac; CBT metadata ]</body></html>'
    Apr 15 22:55:48 xo1 xo-server[1409]: }
    Apr 15 22:55:48 xo1 xo-server[1409]: 2026-04-16T02:55:48.124Z xo:xapi:xapi-disks WARN can't compute delta OpaqueRef:e7de1446-34fd-1ae8-4680-351b1e72b2dd from OpaqueRef:3b37047e-11dd-f836-ebed-acfaff2072ac, fallBack to a full {
    Apr 15 22:55:48 xo1 xo-server[1409]: error: Error: invalid HTTP header in response body
    Apr 15 22:55:48 xo1 xo-server[1409]: at checkVdiExport (file:///opt/xo/xo-builds/xen-orchestra-202604151415/@xen-orchestra/xapi/vdi.mjs:37:19)
    Apr 15 22:55:48 xo1 xo-server[1409]: at process.processTicksAndRejections (node:internal/process/task_queues:104:5)
    Apr 15 22:55:48 xo1 xo-server[1409]: at async Xapi.exportContent (file:///opt/xo/xo-builds/xen-orchestra-202604151415/@xen-orchestra/xapi/vdi.mjs:261:5)
    Apr 15 22:55:48 xo1 xo-server[1409]: at async #getExportStream (file:///opt/xo/xo-builds/xen-orchestra-202604151415/@xen-orchestra/xapi/disks/XapiVhdStreamSource.mjs:123:20)
    Apr 15 22:55:48 xo1 xo-server[1409]: at async XapiVhdStreamSource.init (file:///opt/xo/xo-builds/xen-orchestra-202604151415/@xen-orchestra/xapi/disks/XapiVhdStreamSource.mjs:135:23)
    Apr 15 22:55:48 xo1 xo-server[1409]: at async #openExportStream (file:///opt/xo/xo-builds/xen-orchestra-202604151415/@xen-orchestra/xapi/disks/Xapi.mjs:182:7)
    Apr 15 22:55:48 xo1 xo-server[1409]: at async #openNbdStream (file:///opt/xo/xo-builds/xen-orchestra-202604151415/@xen-orchestra/xapi/disks/Xapi.mjs:97:22)
    Apr 15 22:55:48 xo1 xo-server[1409]: at async XapiDiskSource.openSource (file:///opt/xo/xo-builds/xen-orchestra-202604151415/@xen-orchestra/xapi/disks/Xapi.mjs:258:18)
    Apr 15 22:55:48 xo1 xo-server[1409]: at async XapiDiskSource.init (file:///opt/xo/xo-builds/xen-orchestra-202604151415/@xen-orchestra/disk-transform/dist/DiskPassthrough.mjs:28:41)
    Apr 15 22:55:48 xo1 xo-server[1409]: at async file:///opt/xo/xo-builds/xen-orchestra-202604151415/@xen-orchestra/backups/_incrementalVm.mjs:66:5
    Apr 15 22:55:48 xo1 xo-server[1409]: }
    Apr 15 22:55:48 xo1 xo-server[1409]: 2026-04-16T02:55:48.126Z xo:xapi:xapi-disks INFO export through vhd
    Apr 15 22:56:24 xo1 xo-server[1409]: 2026-04-16T02:56:24.047Z xo:backups:worker INFO backup has ended
    Apr 15 22:56:24 xo1 xo-server[1409]: 2026-04-16T02:56:24.231Z xo:backups:worker INFO process will exit {
    Apr 15 22:56:24 xo1 xo-server[1409]: duration: 43618102,
    Apr 15 22:56:24 xo1 xo-server[1409]: exitCode: 0,
    Apr 15 22:56:24 xo1 xo-server[1409]: resourceUsage: {
    Apr 15 22:56:24 xo1 xo-server[1409]: userCPUTime: 45307253,
    Apr 15 22:56:24 xo1 xo-server[1409]: systemCPUTime: 6674413,
    Apr 15 22:56:24 xo1 xo-server[1409]: maxRSS: 30928,
    Apr 15 22:56:24 xo1 xo-server[1409]: sharedMemorySize: 0,
    Apr 15 22:56:24 xo1 xo-server[1409]: unsharedDataSize: 0,
    Apr 15 22:56:24 xo1 xo-server[1409]: unsharedStackSize: 0,
    Apr 15 22:56:24 xo1 xo-server[1409]: minorPageFault: 287968,
    Apr 15 22:56:24 xo1 xo-server[1409]: majorPageFault: 0,
    Apr 15 22:56:24 xo1 xo-server[1409]: swappedOut: 0,
    Apr 15 22:56:24 xo1 xo-server[1409]: fsRead: 0,
    Apr 15 22:56:24 xo1 xo-server[1409]: fsWrite: 0,
    Apr 15 22:56:24 xo1 xo-server[1409]: ipcSent: 0,
    Apr 15 22:56:24 xo1 xo-server[1409]: ipcReceived: 0,
    Apr 15 22:56:24 xo1 xo-server[1409]: signalsCount: 0,
    Apr 15 22:56:24 xo1 xo-server[1409]: voluntaryContextSwitches: 14665,
    Apr 15 22:56:24 xo1 xo-server[1409]: involuntaryContextSwitches: 962
    Apr 15 22:56:24 xo1 xo-server[1409]: },
    Apr 15 22:56:24 xo1 xo-server[1409]: summary: { duration: '44s', cpuUsage: '119%', memoryUsage: '30.2 MiB' }
    Apr 15 22:56:24 xo1 xo-server[1409]: }

-

Thanks @Andrew. They'll have a close look.

-

Edit - If @olivierlambert wants to make this post its own thread, I'm OK with that.

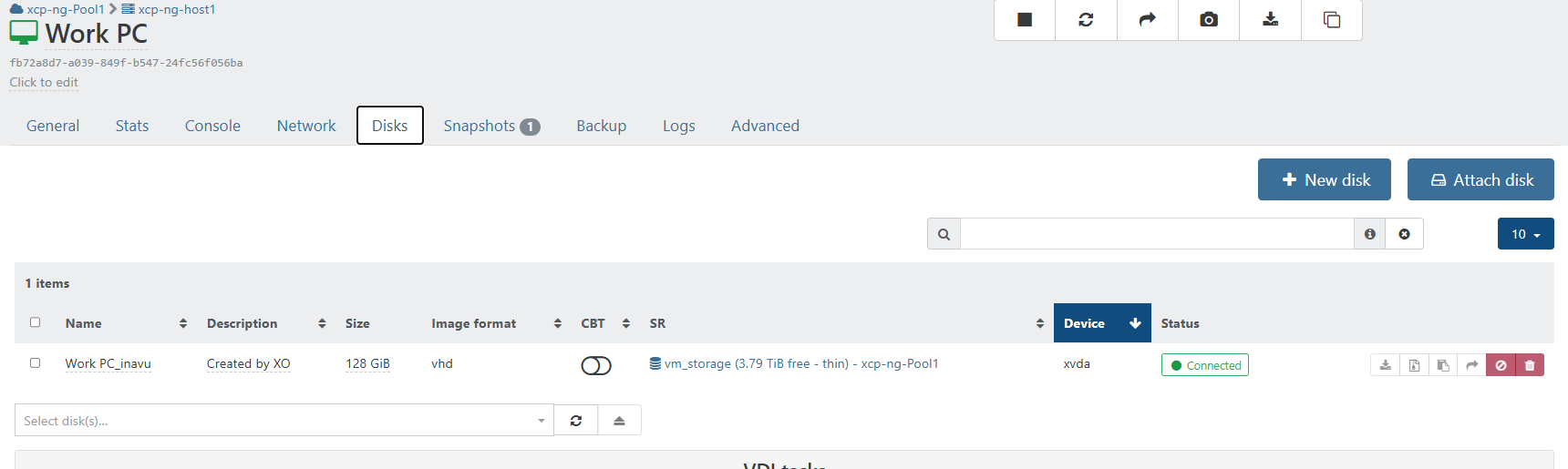

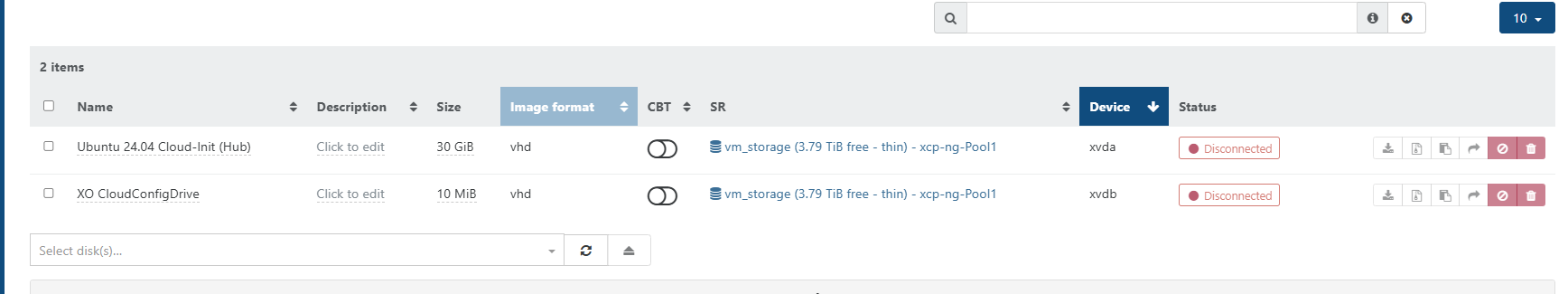

Updated my home lab, and live conversion to QCOW2 seems to work.

The process was a little long, 30ish minutes, but it worked and did not fail.

Kubuntu 26.04 LTS beta

Tried a Windows VM and got an error - 2026-04-16T13_04_47.720Z - XO.txt

Gives VDI has CBT enabled...

Windows 11 VM.

From backup job...

Just tried another VM that was powered off. Regular Ubuntu 24.04, successful...

-

@acebmxer Hello,

The error `VDI_CBT_ENABLED` means that XAPI refuses to move the VDI so as not to break the CBT chain.

You can disable CBT on the VDI before migrating it, but if you have snapshots with CBT enabled it can get complicated, and you may need to remove them before moving the VDI.

We have changes planned to improve the CBT handling in this kind of case.
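As a rough illustration of the "disable CBT before migrating" step, the sketch below wraps the `xe` CLI in two small functions. The function names are hypothetical, and the commands assume the `vdi-list` filter on `cbt-enabled` and the `vdi-disable-cbt` command behave on your host as described in the xe CLI reference; check on a test VDI first. It does not handle snapshots, which may still have to be removed by hand as noted above.

```shell
# Hypothetical helpers wrapping the xe CLI; run them in dom0 on a pool host.

# List VDIs that currently have CBT enabled (snapshots included),
# showing enough fields to tell disks and snapshots apart.
list_cbt_enabled_vdis() {
    xe vdi-list cbt-enabled=true params=uuid,name-label,is-a-snapshot
}

# Disable CBT on a single VDI, identified by its UUID.
disable_cbt_on_vdi() {
    xe vdi-disable-cbt uuid="$1"
}

# Example:
#   list_cbt_enabled_vdis
#   disable_cbt_on_vdi <vdi-uuid>
```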
