@olivierlambert Thanks.

--rename isn't mentioned in the KB.

If I don't see something in the next week or two, I might be able to test it in a lab and then propose an update via a GitHub fork.

@fohdeesha If this was the issue for @dsiminiuk, could you check the documentation?

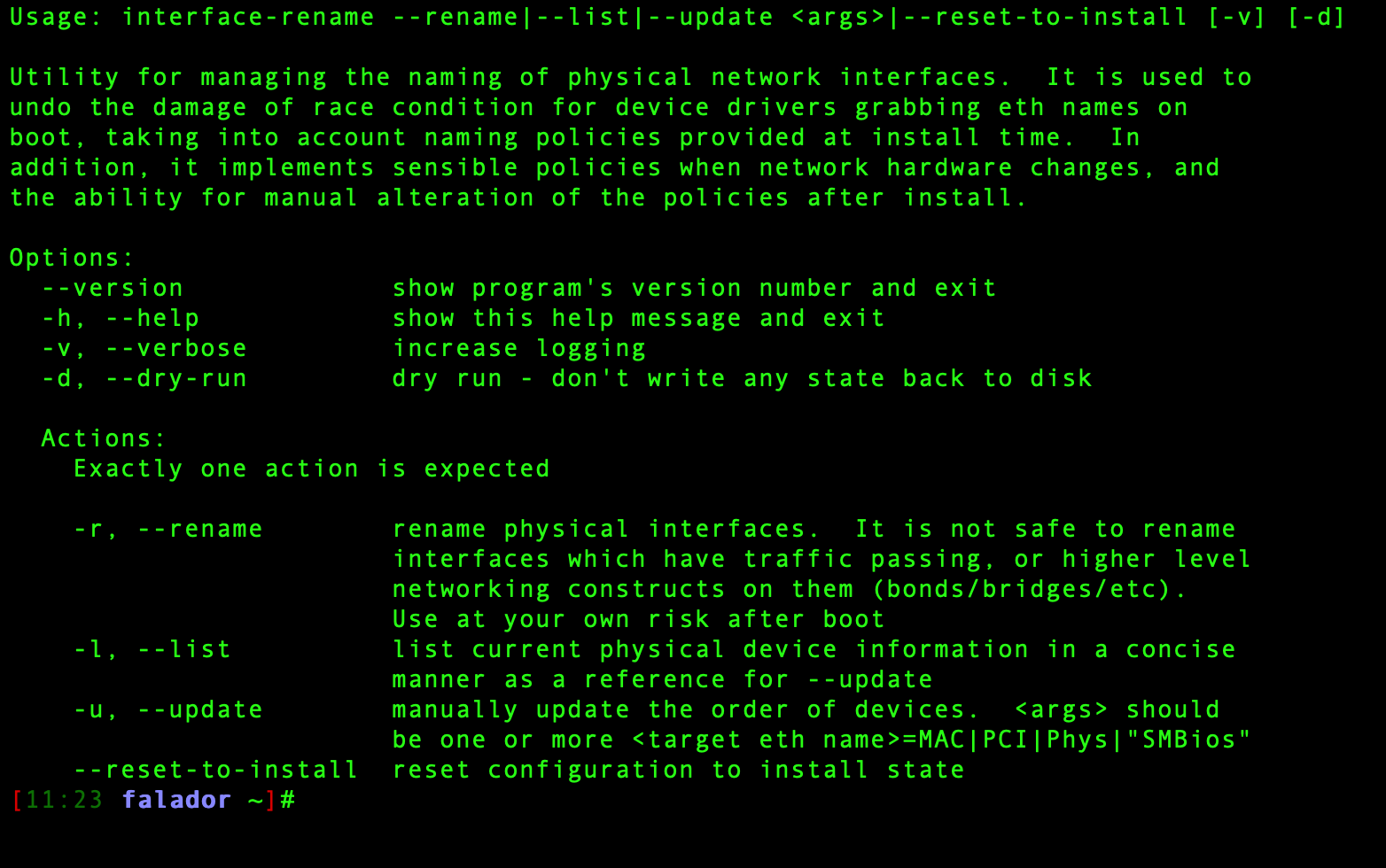

It states:

interface-rename --update eth4=00:24:81:80:19:63 eth8=00:24:81:7f:cf:8b

https://docs.xcp-ng.org/networking/#renaming-nics

The command's help output also shows --update as the correct option.
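For anyone who wants to double-check the mapping before touching a host, here is a minimal sketch that only builds and prints the command in the --update form quoted above. The function name `build_rename_cmd` and the `ethN=MAC` validation rule are my own assumptions for illustration, not part of interface-rename itself.

```shell
#!/bin/sh
# Hypothetical helper: build (but do not run) an interface-rename command.
# Only the --update form quoted from the docs is used; the function name
# and the validation pattern are assumptions for illustration.
build_rename_cmd() {
    # Require at least one NAME=MAC mapping.
    if [ "$#" -eq 0 ]; then
        echo "usage: build_rename_cmd ethN=MAC [ethN=MAC ...]" >&2
        return 1
    fi
    cmd="interface-rename --update"
    for pair in "$@"; do
        # Expect ethN=aa:bb:cc:dd:ee:ff, e.g. eth4=00:24:81:80:19:63
        if ! printf '%s\n' "$pair" | grep -Eq '^eth[0-9]+=([0-9A-Fa-f]{2}:){5}[0-9A-Fa-f]{2}$'; then
            echo "invalid mapping: $pair" >&2
            return 1
        fi
        cmd="$cmd $pair"
    done
    printf '%s\n' "$cmd"
}

# Print the command for review; nothing is executed on the host.
build_rename_cmd eth4=00:24:81:80:19:63 eth8=00:24:81:7f:cf:8b
```

It only prints the command, so a typo in a MAC address gets caught before anything changes on the host.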

@dsiminiuk Great! Glad you got it working!

@olivierlambert It ended up not being a resolution issue.

We started having the same issue with a Windows-based server with a GUI.

Our KVM is a Dell AV2108 (DAV2108) which is Dell-branded but manufactured by Vertiv (Avocent).

Our console is a KMMLED185 which again is Dell-branded but manufactured by Vertiv.

The KVM was on firmware version 02.02.00.00. After upgrading the firmware to the most current version, 02.08.05.00, and restarting the KVM, the issue was resolved.

Hopefully this helps anyone else who encounters the same issue.

@guiltykeyboard This was not an issue on 8.2.*, and it wasn't an issue when we originally upgraded to 8.3 either.

I think perhaps the latest round of patches might have introduced an issue.

@dinhngtu said in Server 2016 BSOD Loop:

yum localinstall xen-{dom0-libs,dom0-tools,hypervisor,libs,tools}-4.17.5-4.0.lbr.19.xcpng8.3.x86_64.rpm

Installing those RPMs, restarting the host, and then starting the 2016 VM on that host did not fix the issue. The result was the same.

One difference is that it showed a "preparing devices" message while starting, before it hit the BSOD.

@dinhngtu Is there any special process for installing these, or is it just installing all of the RPMs one at a time?

@dinhngtu I can run all of my VMs on one host while testing on the other.

Which of the two methods should I try first?

@dinhngtu Dell R660 with 2x Intel(R) Xeon(R) Gold 5416S

Additionally, I just did a fresh install of Server 2016 in a new VM and got the exact same stop code as soon as the VM finished the OS install and rebooted for the first time, with no drivers or anything else installed.

Seems to be an issue with Server 2016 VMs.

On 11/12, one of my Server 2016 VMs got stuck in a boot loop.

It starts, and then has a BSOD with the code KMODE Exception Not Handled.

Taking the VHD from our storage server and copying it to a Hyper-V server allows it to boot. The issue seems to be with XCP-NG.

My two hosts are XCP-NG 8.3.0 with the latest patches applied. I've had this VM for a few years, and it seemed fine after the migration to 8.3.0, until it wasn't. None of our other VMs have this issue.

This VM has secure boot disabled and does not have a vTPM. There are no passthrough PCI or USB devices.

@olivierlambert Correct.

When switching to the virtual console through iDRAC it works.

Is there a way to set the resolution of the OS when it starts and goes into xsconsole?

Same thing happens on the OS installer as well. Maybe the resolution is too low for the screen.

Something worth noting is that while the server itself is booting (BIOS menu, etc.) there is output on the rack KVM, but as soon as the OS itself boots, there is nothing.

For some reason, as soon as XCP-NG boots, our Dell-branded rack KVM & Console has no output at all.

This is on XCP-NG 8.2.1, as well as the 8.3 installer (doing upgrade for 8.3 currently).

The rack KVM and console report "no input detected". It only affects the XCP-NG hosts and the installer image (via USB).

The physical servers are Dell R660 hosts.

When using the iDRAC Enterprise virtual console, there is output. I'm able to use the virtual console to interact with both the installer, and XCP-NG once it boots.

When troubleshooting issues at the server rack, we are unable to use the physical KVM at all.

Does anyone have thoughts on why this is, and why it only affects XCP-NG systems?

@olivierlambert Interesting.

When you say SSH disabled by default, are you talking about XCP-NG not supporting SSH connections out of the box? Or XOA?

Interesting.

Does XO connect to each of the servers via SSH to be able to manage the stuff via XAPI etc?

Couldn't you integrate a bash script via the SSH connection to do this when someone tries to rename an interface via the XO UI?

Perhaps I can work on one that could just be run standalone for now.

@CodeMercenary Glad it worked out for you.